Continuum multi-physics modeling with scripting languages: the Nsim simulation compiler prototype for classical field theory

We demonstrate that for a broad class of physical systems that can be described using classical field theory, automated runtime translation of the physical equations to parallelized finite-element numerical simulation code is feasible. This allows the implementation of multiphysics extension modules to popular scripting languages (such as Python) that handle the complete specification of the physical system at script level. We discuss two example applications that utilize this framework: the micromagnetic simulation package “Nmag” as well as a short Python script to study morphogenesis in a reaction-diffusion model.

Research Summary

The paper introduces a prototype simulation compiler called Nsim that bridges high‑level scripting languages with high‑performance parallel finite‑element (FEM) solvers for a broad class of continuum, multi‑physics problems describable by classical field theory. Traditional scientific computing workflows require experts to manually translate governing partial differential equations (PDEs) into low‑level code, generate meshes, assemble matrices, select solvers, and implement parallelization. This process is time‑consuming, error‑prone, and hampers rapid prototyping of new physical models. Nsim addresses these issues by allowing users to declare fields, differential operators, material parameters, boundary and initial conditions directly in a scripting language such as Python. The compiler then automatically (i) parses the symbolic representation of the equations, (ii) converts them into a weak (variational) form, (iii) couples the form with mesh and degree‑of‑freedom information, (iv) generates C++ code fragments, (v) JIT‑compiles them, and (vi) hands the resulting linear or nonlinear systems to PETSc, which provides scalable MPI‑based solvers.
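The runtime-translation idea can be illustrated in miniature. The sketch below is a hypothetical stand-in, not Nsim's code. It turns equation strings declared at script level into a compiled callable using Python's built-in `compile` and `exec`, whereas the summary describes Nsim emitting C++ fragments and passing the assembled systems to PETSc. The helper name `compile_rhs` and the string-based equation format are assumptions made for illustration.

```python
import math


def compile_rhs(field_names, equations, params):
    """Translate symbolic right-hand sides into a compiled Python function.

    Hypothetical helper for illustration only.
    field_names: ordered field names, e.g. ["u", "v"]
    equations:   field name -> expression string, e.g. {"u": "a - u + u**2*v"}
    params:      constant name -> value, baked into the generated code
    """
    args = ", ".join(field_names)
    # Emit one local constant per parameter so the kernel is specialized at translation time.
    consts = "".join(f"    {name} = {float(value)!r}\n" for name, value in params.items())
    # One parenthesized expression per field; the trailing comma keeps a tuple even for one field.
    body = ", ".join(f"({equations[name]})" for name in field_names)
    source = f"def rhs({args}):\n{consts}    return ({body},)\n"
    namespace = {"math": math}  # lets expressions call math.exp, math.sin, ...
    exec(compile(source, "<generated>", "exec"), namespace)
    return namespace["rhs"], source


rhs, source = compile_rhs(
    ["u", "v"],
    {"u": "a - u + u**2 * v", "v": "b - u**2 * v"},
    {"a": 0.1, "b": 0.9},
)
print(source)
print(rhs(1.0, 0.9))
```

Constants are fixed in the generated source at translation time, which mirrors how a simulation compiler can specialize kernels for one set of material parameters. Because `exec` runs arbitrary code, this pattern is only appropriate for trusted, script-level input.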

Key technical components include:

- Symbolic PDE handling – the user’s Python expressions are captured in an intermediate representation (IR) that retains tensorial structure and index notation.

- Automatic weak‑form generation – the IR is transformed into integrals over elements, enabling standard FEM assembly.

- Automatic differentiation – for nonlinear problems, Nsim computes Jacobians on the fly using forward‑mode AD, avoiding hand‑coded derivatives.

- Parallel infrastructure – generated code links against PETSc, exploiting its preconditioners, Krylov solvers, and distributed matrix/vector data structures.

- Extensibility – custom functions written in Python can be compiled into C++ kernels, allowing user‑defined physics without sacrificing performance.
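To make the weak-form step concrete, here is a minimal sketch for the 1D Poisson problem -u'' = f on [0, 1] with u(0) = u(1) = 0, whose weak form is ∫ u′ v′ dx = ∫ f v dx. It assembles element integrals for linear (P1) elements into a global system and solves it with plain Gaussian elimination. The function names are hypothetical. A pipeline like the one described would generate such element kernels automatically and hand the global system to PETSc instead.

```python
def assemble_poisson_1d(n_elems, f):
    """Assemble K u = b for -u'' = f on [0, 1] using linear (P1) elements."""
    h = 1.0 / n_elems
    n_nodes = n_elems + 1
    K = [[0.0] * n_nodes for _ in range(n_nodes)]
    b = [0.0] * n_nodes
    # Element stiffness: integral of phi_a' * phi_c' over one element of length h.
    k_local = [[1.0 / h, -1.0 / h], [-1.0 / h, 1.0 / h]]
    for e in range(n_elems):
        nodes = (e, e + 1)
        # Element load: integral of f * phi_a via the midpoint rule (phi_a = 1/2 there).
        f_mid = f((e + 0.5) * h)
        for a in range(2):
            b[nodes[a]] += f_mid * h / 2.0
            for c in range(2):
                K[nodes[a]][nodes[c]] += k_local[a][c]
    return K, b


def apply_dirichlet(K, b, nodes, value=0.0):
    """Replace the rows of constrained nodes with the equation u_i = value."""
    for i in nodes:
        K[i] = [0.0] * len(b)
        K[i][i] = 1.0
        b[i] = value


def solve_dense(A, rhs):
    """Gaussian elimination without pivoting (adequate for this small, well-conditioned system)."""
    n = len(rhs)
    A = [row[:] for row in A]
    x = rhs[:]
    for k in range(n):
        for i in range(k + 1, n):
            m = A[i][k] / A[k][k]
            if m != 0.0:
                for j in range(k, n):
                    A[i][j] -= m * A[k][j]
                x[i] -= m * x[k]
    # Back substitution; entries x[j] for j > i already hold the solution.
    for i in reversed(range(n)):
        s = sum(A[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (x[i] - s) / A[i][i]
    return x


n = 8
K, b = assemble_poisson_1d(n, lambda x: 1.0)
apply_dirichlet(K, b, [0, n])
u = solve_dense(K, b)
print(u)
```

For constant f, 1D linear elements reproduce the exact solution x(1 - x)/2 at the nodes, which makes this a convenient sanity check for the assembly.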

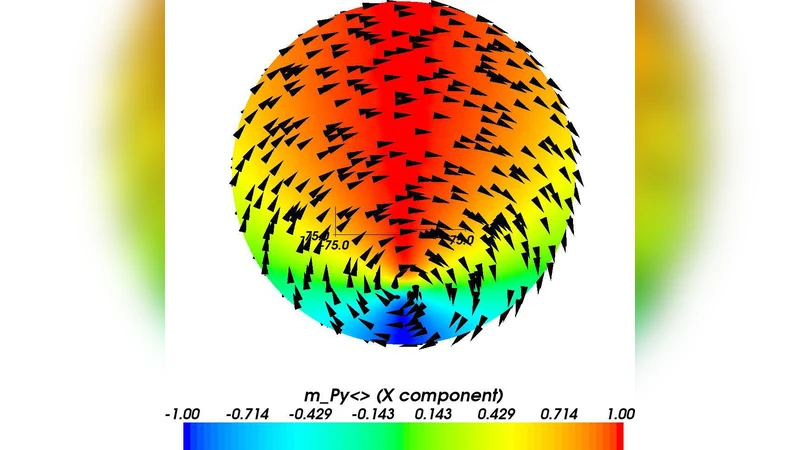
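Forward-mode automatic differentiation, which the summary says Nsim uses to obtain Jacobians, can be sketched with dual numbers. The example below is a generic illustration, not Nsim's implementation. It differentiates Schnakenberg reaction terms (an assumed choice of kinetics with hypothetical parameters a = 0.1, b = 0.9) by seeding one input at a time, so an n-variable Jacobian costs n forward evaluations.

```python
class Dual:
    """Dual number val + der*eps with eps**2 = 0 (forward-mode AD)."""

    def __init__(self, val, der=0.0):
        self.val = val
        self.der = der

    @staticmethod
    def lift(x):
        return x if isinstance(x, Dual) else Dual(x)

    def __add__(self, other):
        o = Dual.lift(other)
        return Dual(self.val + o.val, self.der + o.der)

    __radd__ = __add__

    def __sub__(self, other):
        o = Dual.lift(other)
        return Dual(self.val - o.val, self.der - o.der)

    def __rsub__(self, other):
        return Dual.lift(other) - self

    def __mul__(self, other):
        o = Dual.lift(other)
        # Product rule carried in the eps coefficient.
        return Dual(self.val * o.val, self.der * o.val + self.val * o.der)

    __rmul__ = __mul__

    def __neg__(self):
        return Dual(-self.val, -self.der)

    def __pow__(self, n):
        # Constant exponent only: d(x**n) = n * x**(n-1) * dx.
        return Dual(self.val ** n, n * self.val ** (n - 1) * self.der)


def jacobian(f, x):
    """Return J[i][j] = d f_i / d x_j, using one forward pass per input variable."""
    columns = []
    for j in range(len(x)):
        seeded = [Dual(xi, 1.0 if i == j else 0.0) for i, xi in enumerate(x)]
        columns.append([Dual.lift(out).der for out in f(*seeded)])
    return [[col[i] for col in columns] for i in range(len(columns[0]))]


def schnakenberg(u, v, a=0.1, b=0.9):
    """Reaction terms (assumed kinetics, hypothetical parameters)."""
    return (a - u + u**2 * v, b - u**2 * v)


J = jacobian(schnakenberg, [2.0, 0.5])
print(J)
```

The result matches the hand-derived Jacobian [[-1 + 2uv, u²], [-2uv, -u²]] at (u, v) = (2, 0.5), without any derivative being written by hand.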

Two case studies demonstrate the framework’s versatility. The first is the micromagnetic simulation package Nmag, which solves the Landau‑Lifshitz‑Gilbert (LLG) equation for the magnetization at the nanoscale, coupled to the magnetostatic (demagnetizing) field given by Maxwell’s equations in the magnetostatic limit. In the Nsim version, the magnetization field, exchange stiffness, anisotropy tensors, and external fields are declared in a short Python script. The compiler produces the necessary FEM operators, assembles the equations of motion for the magnetization, and integrates them in time, with PETSc supplying the parallel solvers. Development time is reported to shrink from days of C++/Tcl coding to minutes of scripting, and benchmarks show near‑linear parallel scaling up to 128 cores, matching the performance of the hand‑optimized legacy code.
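For intuition about the dynamics Nmag integrates, the sketch below advances the LLG equation, written in its equivalent Landau-Lifshitz form dm/dt = -γ/(1 + α²) [m × H + α m × (m × H)], for a single macrospin in a fixed applied field. It is a toy under stated assumptions, not Nmag's solver. There is no mesh, exchange, anisotropy, or demagnetizing field, units are reduced (γ = 1, |H| = 1), and the RK4 stepper with renormalization is an illustrative choice.

```python
import math


def cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])


def llg_rhs(m, H, gamma, alpha):
    """Landau-Lifshitz form of the LLG equation for a unit vector m."""
    pref = -gamma / (1.0 + alpha**2)
    mxH = cross(m, H)          # precession term
    mxmxH = cross(m, mxH)      # damping term, pulls m toward H
    return tuple(pref * (mxH[i] + alpha * mxmxH[i]) for i in range(3))


def rk4_step(m, H, gamma, alpha, dt):
    def add(a, b, s):
        return tuple(a[i] + s * b[i] for i in range(3))

    k1 = llg_rhs(m, H, gamma, alpha)
    k2 = llg_rhs(add(m, k1, dt / 2), H, gamma, alpha)
    k3 = llg_rhs(add(m, k2, dt / 2), H, gamma, alpha)
    k4 = llg_rhs(add(m, k3, dt), H, gamma, alpha)
    m_new = tuple(m[i] + dt / 6 * (k1[i] + 2 * k2[i] + 2 * k3[i] + k4[i]) for i in range(3))
    norm = math.sqrt(sum(c * c for c in m_new))
    return tuple(c / norm for c in m_new)  # renormalize so that |m| = 1


def relax(m0, H, gamma=1.0, alpha=0.5, dt=0.01, steps=5000):
    """Integrate from m0 for steps * dt reduced time units."""
    m = m0
    for _ in range(steps):
        m = rk4_step(m, H, gamma, alpha, dt)
    return m


m_final = relax((1.0, 0.0, 0.0), (0.0, 0.0, 1.0))
print(m_final)
```

Starting from m = (1, 0, 0) in a field along +z, m precesses about z while the damping term pulls it toward the field direction, so m_z approaches 1.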

The second example tackles a reaction‑diffusion system that generates Turing patterns. Two scalar fields obey coupled equations that combine linear diffusion with nonlinear, user‑defined reaction terms. Again, the user writes a concise Python script specifying diffusion coefficients, reaction functions, Neumann boundary conditions, and an initial perturbation. Nsim automatically constructs the FEM discretization (second‑order Lagrange elements), selects an adaptive Runge‑Kutta time integrator, and solves the resulting nonlinear system. The simulation reproduces classic spot and stripe patterns, and the total source code size is reduced by more than 80% compared with a traditional Fortran implementation.
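A stripped-down version of such a script can be written with an explicit scheme on a 1D grid. This deliberately swaps in finite differences and forward Euler for the FEM discretization and adaptive integrator described above, and assumes Schnakenberg kinetics f(u, v) = a - u + u²v, g(u, v) = b - u²v as the reaction terms. The function names and parameter values are hypothetical.

```python
import math


def laplacian_neumann(u, dx):
    """Second difference with zero-flux boundaries (ghost value = edge value)."""
    n = len(u)
    out = []
    for i in range(n):
        left = u[i - 1] if i > 0 else u[i]
        right = u[i + 1] if i < n - 1 else u[i]
        out.append((left - 2.0 * u[i] + right) / dx**2)
    return out


def schnakenberg_step(u, v, a, b, Du, Dv, dx, dt):
    """One forward-Euler step of u_t = Du u_xx + a - u + u^2 v, v_t = Dv v_xx + b - u^2 v."""
    lu = laplacian_neumann(u, dx)
    lv = laplacian_neumann(v, dx)
    u_new = [ui + dt * (Du * lui + a - ui + ui**2 * vi) for ui, vi, lui in zip(u, v, lu)]
    v_new = [vi + dt * (Dv * lvi + b - ui**2 * vi) for ui, vi, lvi in zip(u, v, lv)]
    return u_new, v_new


def simulate(n=100, dx=0.5, dt=0.001, steps=1000, a=0.1, b=0.9, Du=1.0, Dv=40.0, eps=0.01):
    """Start from the uniform steady state plus a small cosine perturbation in u."""
    u_star, v_star = a + b, b / (a + b) ** 2
    u = [u_star + eps * math.cos(0.3 * i) for i in range(n)]
    v = [v_star] * n
    for _ in range(steps):
        u, v = schnakenberg_step(u, v, a, b, Du, Dv, dx, dt)
    return u, v


u, v = simulate()
print(min(u), max(u))
```

The edge-value ghost cells make the discrete Laplacian sum to zero, the discrete analogue of zero-flux Neumann boundaries. Forward Euler is only stable for dt below roughly dx²/(2 max(Du, Dv)), one reason production codes use implicit or adaptive integrators. The uniform state u = a + b, v = b/(a + b)² is a fixed point of the kinetics; patterns grow from small perturbations of it only when Dv/Du is large enough for a Turing instability.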

The authors acknowledge current limitations: the automatic pipeline is optimized for variational formulations, making it less straightforward to handle non‑conservative or hyperbolic PDEs that lack a clear weak form. Automatic differentiation presently supports only first‑order derivatives, which can become a bottleneck for highly nonlinear constitutive laws. Outlined future work includes extending the AD engine to higher‑order derivatives, adding GPU acceleration (for example via CUDA), supporting adaptive refinement of unstructured meshes, and providing stronger coupling mechanisms for tightly coupled multi‑physics systems.

In conclusion, Nsim demonstrates that a runtime translation from symbolic physics specifications to parallel FEM code is not only feasible but also highly productive. By embedding the entire modeling workflow inside a familiar scripting environment, the framework dramatically lowers the barrier to entry for researchers in physics, materials science, biology, and engineering who wish to explore new continuum models. The prototype validates the concept with two substantially different applications, showing that both rapid development and high‑performance execution can be achieved simultaneously. This approach promises to accelerate scientific discovery by enabling fast prototyping, easy reproducibility, and seamless scaling from a laptop to large‑scale HPC clusters.
