Incorporating Integrity Constraints in Uncertain Databases

We develop an approach to incorporate additional knowledge, in the form of general-purpose integrity constraints (ICs), to reduce uncertainty in probabilistic databases. While incorporating ICs improves data quality (and hence the quality of query answers), it significantly complicates query processing. To overcome this additional complexity, we develop an approach to map an uncertain relation U with ICs to another uncertain relation U′ that approximates the set of consistent worlds represented by U. Queries over U can instead be evaluated over U′, achieving higher quality (due to the reduced uncertainty in U′) without the additional query-processing complexity that ICs would otherwise introduce. We demonstrate the effectiveness and scalability of our approach on large datasets with complex constraints. We also present experimental results demonstrating the utility of incorporating integrity constraints in uncertain relations in the context of an information extraction application.
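
Because U′ keeps a tuple-independent form, a query over it can be answered with the same closed-form rules that apply to any tuple-independent relation. The following is a minimal sketch, assuming a toy relation stored as a list of (row, probability) pairs. The probability that a Boolean selection query ("does any row match the predicate?") is true is one minus the probability that every matching row is absent. The relation layout, the function name `prob_exists`, and the numbers are hypothetical and are not taken from the paper.

```python
from typing import Callable, List, Tuple

# Hypothetical tuple-independent relation: each row exists with its own
# probability, independently of every other row.
Row = Tuple[str, str]  # (name, city)
Relation = List[Tuple[Row, float]]


def prob_exists(rel: Relation, pred: Callable[[Row], bool]) -> float:
    """P(at least one row satisfying pred exists), assuming independence."""
    p_none = 1.0
    for row, p in rel:
        if pred(row):
            p_none *= 1.0 - p  # probability that this matching row is absent
    return 1.0 - p_none


u_prime: Relation = [
    (("Alice", "Paris"), 0.6),
    (("Alice", "Lyon"), 0.25),
    (("Bob", "Rome"), 0.5),
]

# "Is there any tuple about Alice?"
print(prob_exists(u_prime, lambda r: r[0] == "Alice"))
```

No constraint checking appears at query time. That is the point of moving the IC reasoning into the construction of U′.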

💡 Research Summary

The paper tackles the problem of integrating general-purpose integrity constraints (ICs) into probabilistic (uncertain) databases to improve data quality without incurring additional query-processing overhead. Many probabilistic databases treat tuples as independent random events. This makes query evaluation efficient, but it ignores domain knowledge that often takes the form of constraints such as keys, foreign keys, or domain restrictions. Enforcing these constraints exactly requires conditioning on the possible worlds that satisfy them. The number of worlds is exponential in the number of tuples, and computing such conditional probabilities is #P-hard in general, so exact enforcement quickly becomes infeasible for realistic data sizes.
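
To make the three constraint types concrete, the sketch below checks one deterministic world (a set of tuples) against a key, a foreign-key, and a domain constraint. It assumes two hypothetical tables: `person(name, city)`, keyed on `name`, and a city table that `person.city` references. The schema and all helper names are illustrative only.

```python
from typing import Iterable, Set, Tuple

Person = Tuple[str, str]  # (name, city); hypothetical schema


def satisfies_key(people: Iterable[Person]) -> bool:
    """Key constraint: no two tuples share the same name."""
    seen: Set[str] = set()
    for name, _city in people:
        if name in seen:
            return False
        seen.add(name)
    return True


def satisfies_fk(people: Iterable[Person], cities: Set[str]) -> bool:
    """Foreign key: every person.city must appear in the city table."""
    return all(city in cities for _name, city in people)


def satisfies_domain(people: Iterable[Person], allowed: Set[str]) -> bool:
    """Domain restriction: names must come from an allowed vocabulary."""
    return all(name in allowed for name, _city in people)


def consistent(people: Set[Person], cities: Set[str], allowed: Set[str]) -> bool:
    """A world is consistent only if it satisfies every constraint."""
    return (satisfies_key(people)
            and satisfies_fk(people, cities)
            and satisfies_domain(people, allowed))


cities = {"Paris", "Lyon", "Rome"}
allowed = {"Alice", "Bob"}
world_ok = {("Alice", "Paris"), ("Bob", "Rome")}
world_bad = {("Alice", "Paris"), ("Alice", "Lyon")}  # two cities for one key
print(consistent(world_ok, cities, allowed), consistent(world_bad, cities, allowed))
```

Each check is cheap on a single world. The difficulty lies in the number of worlds: a relation with n independent tuples has 2^n of them.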

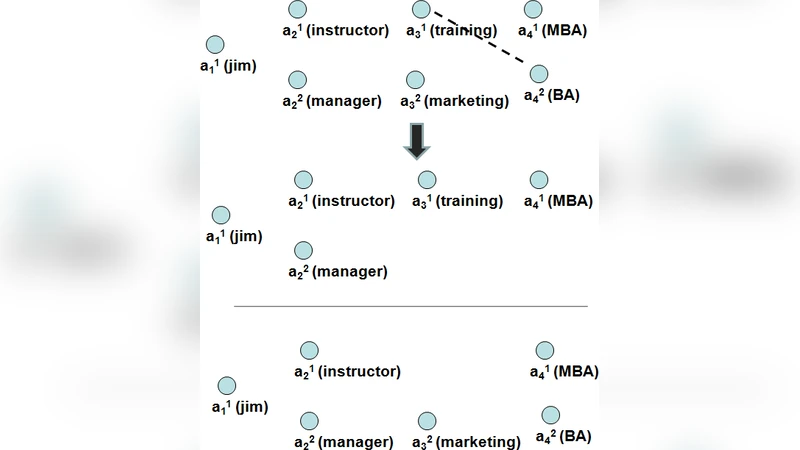

To overcome this, the authors propose a two-step framework. First, they formalize the original uncertain relation U and the set of worlds it represents, W(U). Then, given a collection of ICs, they identify the subset of consistent worlds W_c ⊆ W(U). Computing the probability distribution over W_c exactly is intractable. The authors therefore construct an approximating uncertain relation U′ that preserves the original schema but adjusts tuple existence probabilities so that probability mass is concentrated on worlds that satisfy the constraints. The adjustment is performed via a linear-programming (LP) optimization. Each IC contributes linear inequality constraints, and the objective balances entropy reduction (to keep the distribution informative) against the total probability of constraint violations. The LP solution yields new marginal probabilities for each tuple, which define U′. Importantly, U′ remains a tuple-independent model, so existing probabilistic query operators (selection, projection, join, aggregation) can be reused without modification.
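
The summary describes the paper's adjustment as an LP. The sketch below swaps in a simpler construction that is exact but exponential, to show what "new marginals that concentrate mass on consistent worlds" means on a toy input. It enumerates every world of a hypothetical three-tuple relation and conditions on a key constraint. It then takes each tuple's conditional marginal as that tuple's probability in U′. For a tuple-independent target Q, matching marginals this way minimizes KL(P_c ‖ Q), where P_c is the conditioned distribution. The sketch also checks the linear inequality that a key constraint implies: the marginals of tuples sharing a key must sum to at most 1. The tuple values and probabilities are invented, and this is not the authors' LP.

```python
from itertools import product
from typing import Dict, Iterator, Set, Tuple

Row = Tuple[str, str]  # (name, city), key = name; hypothetical schema

# Hypothetical tuple-independent relation U: row -> existence probability.
U: Dict[Row, float] = {
    ("Alice", "Paris"): 0.8,
    ("Alice", "Lyon"): 0.6,
    ("Bob", "Rome"): 0.5,
}


def worlds(rel: Dict[Row, float]) -> Iterator[Tuple[Set[Row], float]]:
    """Yield (world, probability) for all 2^n worlds under independence."""
    rows = list(rel)
    for bits in product([False, True], repeat=len(rows)):
        world = {r for r, b in zip(rows, bits) if b}
        prob = 1.0
        for r, b in zip(rows, bits):
            prob *= rel[r] if b else 1.0 - rel[r]
        yield world, prob


def key_ok(world: Set[Row]) -> bool:
    """Key constraint: each name appears at most once in the world."""
    names = [name for name, _ in world]
    return len(names) == len(set(names))


def consistent_mass(rel: Dict[Row, float]) -> float:
    """Total probability of worlds that satisfy the key constraint."""
    return sum(p for w, p in worlds(rel) if key_ok(w))


def condition_and_project(rel: Dict[Row, float]) -> Dict[Row, float]:
    """Moment matching: U' marginal = P(row exists | world satisfies key)."""
    z = consistent_mass(rel)
    return {
        r: sum(p for w, p in worlds(rel) if key_ok(w) and r in w) / z
        for r in rel
    }


def key_group_sums(rel: Dict[Row, float]) -> Dict[str, float]:
    """Left-hand sides of the linear constraints sum_{t in key group} p_t <= 1."""
    sums: Dict[str, float] = {}
    for (name, _), p in rel.items():
        sums[name] = sums.get(name, 0.0) + p
    return sums


U_prime = condition_and_project(U)
print(U_prime)
print(key_group_sums(U), key_group_sums(U_prime))
print(consistent_mass(U), consistent_mass(U_prime))
```

In this toy case, U puts 48% of its mass on worlds that violate the key. U′ cuts that share to roughly 14%. It cannot reach zero because a tuple-independent model cannot represent the mutual exclusion exactly. The brute-force enumeration only illustrates the target of the approximation. A scalable method such as the LP described above is needed for realistic data.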

The authors provide theoretical arguments that U′ assigns a larger share of its probability mass to consistent worlds than U does, and that the approximation error is bounded. The empirical evaluation uses two real-world datasets: an information-extraction corpus of news articles (with key and domain constraints) and a medical-records collection (with foreign-key and range constraints). For each dataset, queries are executed over both U and U′, and F1-score, precision, recall, and query latency are measured. Results show that incorporating ICs via U′ improves answer quality by roughly 12–18% on average, while query execution time increases by less than 5% due to the modest overhead of solving the LP. Scalability tests show near-linear growth in LP solving time as the data size increases tenfold.
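
Precision, recall, and F1 are standard. One hypothetical way to apply them to probabilistic answers is to keep every answer whose probability reaches a threshold and compare that set against a ground-truth set. The 0.5 threshold, the answer probabilities, and the ground truth below are invented for illustration. The summary does not say how the paper turns answer probabilities into answer sets.

```python
from typing import Dict, Set, Tuple


def prf1(predicted: Set[str], truth: Set[str]) -> Tuple[float, float, float]:
    """Precision, recall and F1 of a predicted answer set."""
    tp = len(predicted & truth)
    precision = tp / len(predicted) if predicted else 0.0
    recall = tp / len(truth) if truth else 0.0
    if precision + recall == 0:
        return precision, recall, 0.0
    return precision, recall, 2 * precision * recall / (precision + recall)


def answers(probs: Dict[str, float], threshold: float = 0.5) -> Set[str]:
    """Keep answers whose probability meets the (hypothetical) threshold."""
    return {a for a, p in probs.items() if p >= threshold}


truth = {"Alice-Paris", "Bob-Rome"}
# Invented answer probabilities: the constraint-aware relation lowers the
# probability of the conflicting "Alice-Lyon" extraction.
over_u = {"Alice-Paris": 0.8, "Alice-Lyon": 0.6, "Bob-Rome": 0.5}
over_u_prime = {"Alice-Paris": 0.62, "Alice-Lyon": 0.23, "Bob-Rome": 0.5}

print(prf1(answers(over_u), truth))
print(prf1(answers(over_u_prime), truth))
```

A threshold is only one choice. Ranking answers by probability and measuring precision at k is a common alternative.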

The paper concludes that integrity‑constraint‑aware uncertain databases can be built with minimal disruption to existing query processing pipelines, delivering higher‑quality results at acceptable computational cost. Future work is outlined in three directions: learning constraint‑aware probability distributions from data, supporting dynamic updates to constraints in an online setting, and extending the approach to richer probabilistic models such as Markov Logic Networks.