Is scientific literature subject to a sell-by-date? A general methodology to analyze the durability of scientific documents

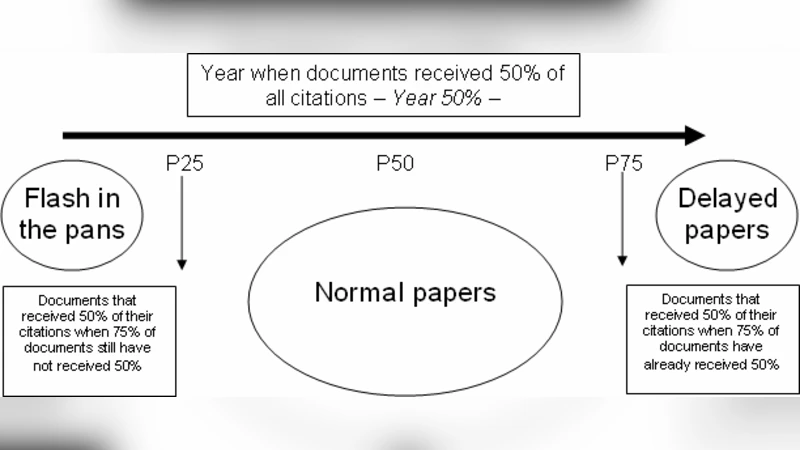

The study of citation histories and the ageing of documents has been addressed from several perspectives, especially in the analysis of documents with delayed recognition, or sleeping beauties. However, there is no general methodology that can be applied broadly across different time periods and/or research fields. This paper presents a new methodology for the general analysis of the ageing and durability of scientific papers. The methodology classifies documents into three general types: Delayed documents, which receive most of their citations later than normal documents do; Flash in the pans, which are cited immediately after publication but not in the long term; and Normal documents, which show a typical distribution of citations over time. The three types of durability have been analyzed across the whole population of Web of Science documents with at least 5 external citations (i.e., excluding self-citations). Several patterns related to the three types of durability are identified, and the potential of the methodology for further research is discussed.

💡 Research Summary

The paper addresses a long‑standing gap in bibliometrics: while many studies have examined specific citation phenomena such as delayed recognition or “sleeping beauties,” there has been no universal framework that can be applied across disciplines and time windows to assess how long a scientific document remains influential. To fill this void, the authors propose a general methodology for analyzing the “durability” of scientific papers. Durability is operationalized by classifying each document into one of three archetypal citation trajectories: (1) Delayed documents, which receive the bulk of their citations well after publication; (2) Flash‑in‑the‑pan documents, which attract a rapid burst of citations immediately after release but quickly fade; and (3) Normal documents, which follow the classic rise‑and‑fall pattern typical of most scholarly works.

Data were drawn from the entire Web of Science (WoS) corpus, limited to papers that have accumulated at least five external citations (self‑citations excluded). For every paper, the authors extracted yearly citation counts, identified the citation‑peak year, calculated the proportion of citations before and after the peak, and estimated a citation half‑life. The time series for each paper were normalized, and a combination of k‑means clustering and regression modeling was used to derive threshold values that delineate the three durability types. Specifically, a paper is labeled “Flash‑in‑the‑pan” if its citation peak occurs within two years of publication and the subsequent five‑year decline exceeds 50 % of the peak value. A paper is labeled “Delayed” if it shows below‑average citations in the first two years but reaches its peak after at least five years. All remaining papers are classified as “Normal.”
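The three-way rule described above can be made concrete with a short Python sketch. It is not the authors' code: the clustering and regression step that produced the thresholds is not reproduced, the thresholds are hard-coded as stated in the summary, and several readings are assumptions. "Within two years" is taken as a peak at year offset 0 to 2; the five-year decline is measured from the peak to the count five years later; "below-average" compares citations in years 0 and 1 against a caller-supplied benchmark (`early_reference`); and half-life is taken as the first year by which half of all observed citations have accrued. All names (`durability_features`, `classify`, `early_reference`) are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass
class DurabilityFeatures:
    peak_year: int            # offset of the citation peak (0 = publication year)
    share_before_peak: float  # fraction of all citations received before the peak year
    share_after_peak: float   # fraction of all citations received after the peak year
    half_life: int            # first offset by which at least half the citations have accrued


def durability_features(yearly: Sequence[int]) -> DurabilityFeatures:
    """Summarize a yearly series of external (non-self) citation counts."""
    total = sum(yearly)
    if total == 0:
        raise ValueError("series has no citations")
    # max() returns the first maximal index, so ties resolve to the earliest peak.
    peak = max(range(len(yearly)), key=lambda i: yearly[i])
    running, half_life = 0, len(yearly) - 1
    for year, count in enumerate(yearly):
        running += count
        if 2 * running >= total:
            half_life = year
            break
    return DurabilityFeatures(
        peak_year=peak,
        share_before_peak=sum(yearly[:peak]) / total,
        share_after_peak=sum(yearly[peak + 1:]) / total,
        half_life=half_life,
    )


def classify(yearly: Sequence[int], early_reference: float,
             min_citations: int = 5) -> Optional[str]:
    """Label a paper Flash-in-the-pan, Delayed, or Normal; None if under the floor.

    early_reference is an assumed benchmark: the mean citations that comparable
    papers receive in years 0-1. The summary does not define 'below-average'.
    """
    if sum(yearly) < min_citations:
        return None  # outside the analyzed population (< 5 external citations)
    f = durability_features(yearly)
    peak_value = yearly[f.peak_year]
    check_year = f.peak_year + 5
    # Flash-in-the-pan: early peak, then a drop of more than 50% of the peak
    # value five years later (only testable if the series is long enough).
    if f.peak_year <= 2 and check_year < len(yearly):
        if peak_value - yearly[check_year] > 0.5 * peak_value:
            return "Flash-in-the-pan"
    # Delayed: weak start (years 0-1 below the benchmark) and a peak at year 5 or later.
    if sum(yearly[:2]) < early_reference and f.peak_year >= 5:
        return "Delayed"
    return "Normal"


# Hypothetical toy series (index 0 = publication year), not data from the paper.
flash = [6, 9, 4, 2, 1, 1, 0, 0, 0, 0]
delayed = [0, 1, 1, 2, 3, 6, 8, 7, 6, 5]
steady = [1, 4, 6, 8, 7, 6, 5, 5, 4, 4]
for name, series in (("flash", flash), ("delayed", delayed), ("steady", steady)):
    print(name, classify(series, early_reference=4.0), durability_features(series).half_life)
```

With these toy series the sketch labels `flash` as Flash-in-the-pan, `delayed` as Delayed and `steady` as Normal. Because the Flash rule requires a peak by year 2 and the Delayed rule a peak at year 5 or later, the two tests can never both fire, so their order does not affect the result.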

Applying this scheme to the WoS dataset yields the following distribution: roughly 12 % of papers are Delayed, 18 % are Flash‑in‑the‑pan, and the remaining 70 % are Normal. Discipline‑specific patterns emerge: engineering and physical sciences contain a higher proportion of Flash‑in‑the‑pan papers, reflecting rapid, technology‑driven citation spikes; social sciences and humanities show a relatively larger share of Delayed papers, consistent with slower diffusion of theoretical or methodological contributions. In terms of impact, Delayed papers achieve the highest average total citations and maintain citation rates longer than the other categories, whereas Flash‑in‑the‑pan papers enjoy a high short‑term citation count but a low long‑term average. Normal papers exhibit the classic citation curve with a moderate peak and a gradual decline.
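Saying that a discipline "contains a higher proportion" of a type implicitly compares its mix of durability types with the overall mix. One hedged way to quantify that is an over-representation ratio: a type's share within a field divided by its share in the whole set, so values above 1.0 mean the type is more common in that field than overall. The ratio, the function name `over_representation` and the toy labels below are illustrative assumptions, not the paper's reported procedure or data.

```python
from collections import Counter, defaultdict
from typing import Dict, Iterable, Tuple


def over_representation(labels: Iterable[Tuple[str, str]]) -> Dict[str, Dict[str, float]]:
    """Share of each durability type within a field divided by its overall share.

    labels: (field, durability_type) pairs, one per paper.
    """
    pairs = list(labels)
    overall = Counter(dtype for _, dtype in pairs)
    total = len(pairs)
    by_field: Dict[str, Counter] = defaultdict(Counter)
    for field, dtype in pairs:
        by_field[field][dtype] += 1
    ratios: Dict[str, Dict[str, float]] = {}
    for field, counts in by_field.items():
        n = sum(counts.values())
        # counts[dtype] is 0 for types absent from the field, giving a ratio of 0.
        ratios[field] = {dtype: (counts[dtype] / n) / (overall[dtype] / total)
                         for dtype in overall}
    return ratios


# Hypothetical labels for illustration only.
sample = [
    ("field A", "Flash-in-the-pan"), ("field A", "Normal"),
    ("field A", "Flash-in-the-pan"), ("field A", "Normal"),
    ("field B", "Delayed"), ("field B", "Normal"),
    ("field B", "Normal"), ("field B", "Normal"),
]
for field, ratio in over_representation(sample).items():
    print(field, {k: round(v, 2) for k, v in ratio.items()})
```

In this toy sample, field A gets a Flash-in-the-pan ratio of 2.0 and field B a Delayed ratio of 2.0, the kind of contrast the discipline-level findings describe.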

The authors argue that these durability categories have direct implications for research evaluation and science policy. Current evaluation metrics—most notably the Journal Impact Factor and short‑window citation counts—tend to reward Flash‑in‑the‑pan papers and penalize Delayed works, potentially distorting funding decisions and career assessments. By incorporating durability into assessment frameworks, evaluators can adopt a multi‑temporal perspective that recognizes both immediate breakthroughs and contributions whose significance unfolds over longer periods.
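The point about short citation windows can be checked with a small worked example. The two trajectories below are hypothetical, and the two-year window is only loosely analogous to the Journal Impact Factor, which counts citations received in one year to items published in the two preceding years rather than a paper's own first two years. The helper `window_citations` is an illustrative name.

```python
from typing import Sequence


def window_citations(yearly: Sequence[int], window: int) -> int:
    """Citations received in the first `window` years (index 0 = publication year)."""
    return sum(yearly[:window])


# Hypothetical trajectories, not data from the paper.
flash = [6, 9, 4, 2, 1, 1, 0, 0, 0, 0]
delayed = [0, 1, 1, 2, 3, 6, 8, 7, 6, 5]

for window in (2, 5, 10):
    f, d = window_citations(flash, window), window_citations(delayed, window)
    leader = "flash" if f > d else "delayed"
    print(f"{window:>2}-year window: flash={f}, delayed={d}, higher={leader}")
```

With these series the flash-in-the-pan trajectory leads in the 2- and 5-year windows (15 vs 1, 22 vs 7), while the delayed one overtakes it only over 10 years (39 vs 23): the ranking inversion that short-window metrics would miss.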

Finally, the paper outlines avenues for extending the methodology. Future work could integrate citation network analysis to examine how durability types interact within scholarly communities, refine normalization procedures to handle self‑citations (excluded outright in the present analysis) and field‑specific citation cultures, or combine traditional citation data with alternative metrics (altmetrics, social‑media mentions) to capture broader dimensions of impact. Such extensions would deepen our understanding of the temporal dynamics of scientific influence and provide a more nuanced basis for policy‑making, resource allocation, and the strategic planning of research programs.
