Source and Channel Coding for Correlated Sources Over Multiuser Channels

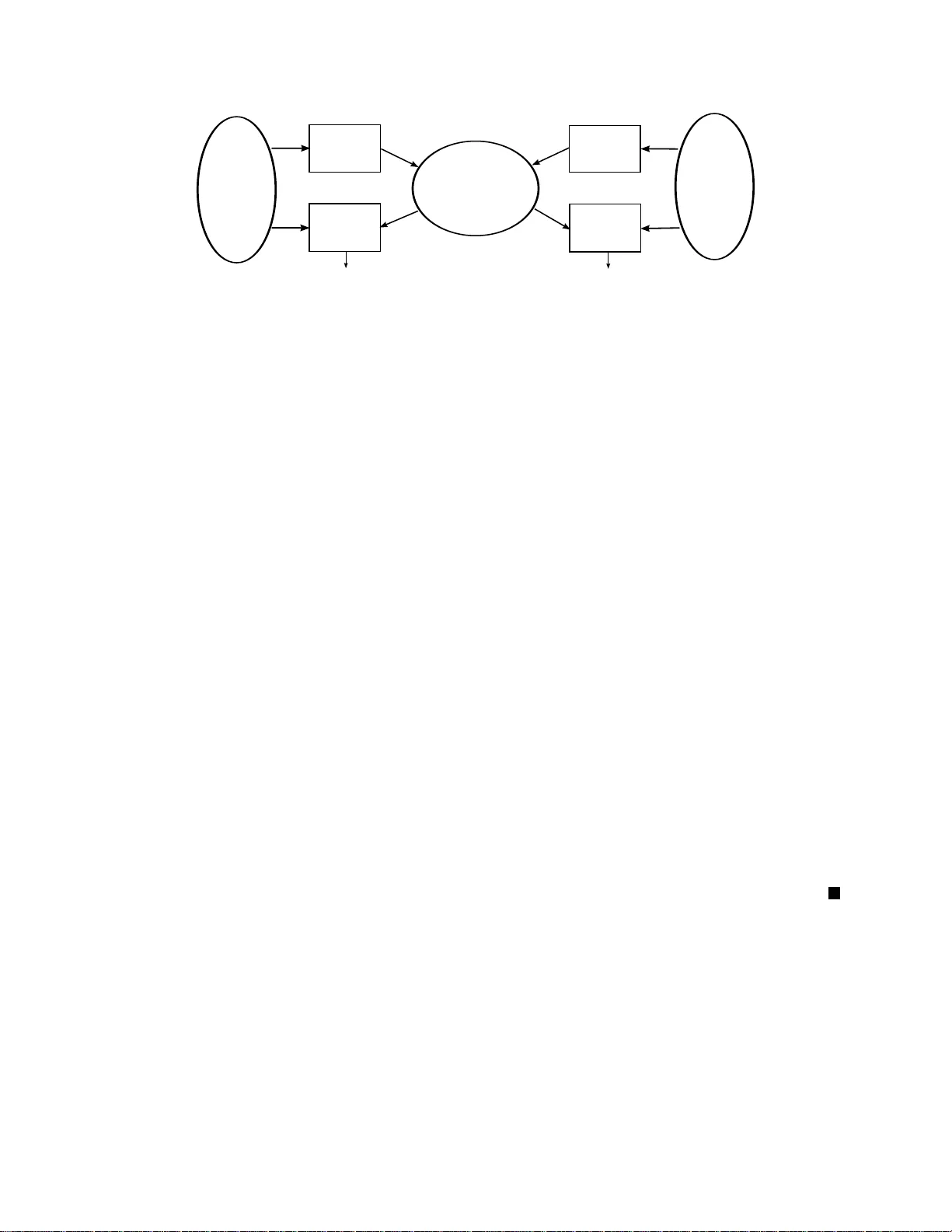

Source and channel coding over multiuser channels in which receivers have access to correlated source side information is considered. For several multiuser channel models, necessary and sufficient conditions for optimal separation of the source and ch…

Authors:

- Deniz Gündüz (Princeton University / Stanford University)
- Elza Erkip (Polytechnic Institute of New York University)
- Andrea Goldsmith (Stanford University)
- H. Vincent Poor (Princeton University)