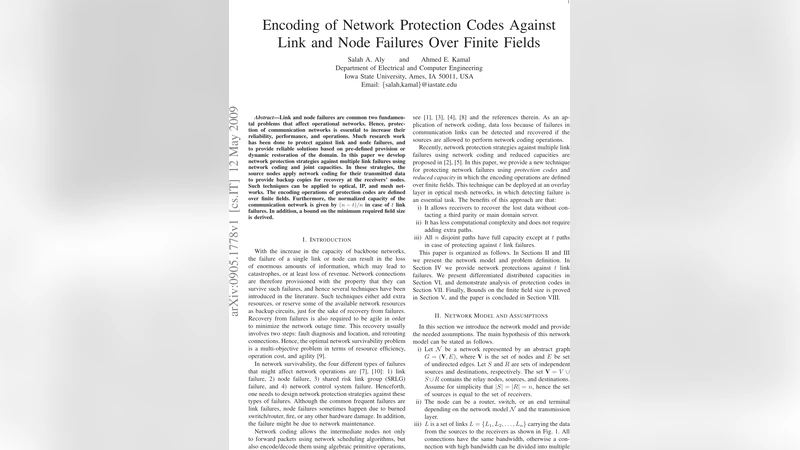

Encoding of Network Protection Codes Against Link and Node Failures Over Finite Fields

Link and node failures are two common and fundamental problems that affect operational networks. Hence, protection of communication networks is essential to their reliability, performance, and operation. Much research has been done to protect against link and node failures and to provide reliable solutions based on pre-defined provisioning or dynamic restoration of the domain. In this paper we develop network protection strategies against multiple link failures using network coding and joint capacities. In these strategies, the source nodes apply network coding to their transmitted data to provide backup copies for recovery at the receiver nodes. Such techniques can be applied to optical, IP, and mesh networks. The encoding operations of protection codes are defined over finite fields. Furthermore, the normalized capacity of the communication network is given by $(n-t)/n$ in case of $t$ link failures. In addition, a bound on the minimum required field size is derived.

💡 Research Summary

The paper addresses the long‑standing problem of protecting communication networks against multiple simultaneous link failures and node failures, which are modeled as collections of failed links. Traditional protection schemes such as 1+1 protection, shared backup path (SBP) and p‑cycle based methods guarantee survivability but incur large bandwidth overheads and often suffer from slow restoration. The authors propose a novel family of network protection codes that embed redundancy directly into the data stream using linear network coding over a finite field GF(q).

In the proposed model, n independent data streams are transmitted from source nodes to destination nodes. To survive up to t arbitrary link failures, each source additionally generates t protection packets, each of which is a linear combination of the n original packets. The coefficients of these combinations are elements of a finite field GF(q) and are organized into an (n + t) × n encoding matrix G. The key requirement is that G keeps rank n after any t of its rows are removed, that is, every set of n rows of G is linearly independent. This guarantees that any set of up to t lost packets can be recovered by solving a linear system at the receiver. The authors prove that this can be ensured if the field size q satisfies a lower bound that grows combinatorially with n and t; specifically they derive q ≥ C(n, t) + 1, where C(n, t) is the binomial coefficient. This bound is stricter than the naïve requirement q ≥ n + t whenever 2 ≤ t ≤ n − 2, and it highlights the trade‑off between the number of tolerable failures and the algebraic complexity of the code.
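The encode-and-recover step described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the authors' construction: it works in a prime field GF(257) (an arbitrary choice), treats each packet as a single field symbol, and builds the t protection rows from a Cauchy matrix, a standard swapped-in technique under which every set of n rows of G is linearly independent. All function names are hypothetical.

```python
from itertools import combinations

P = 257  # illustrative prime field size (assumption); requires P >= n + t


def inv(a, p=P):
    """Multiplicative inverse in GF(p) via Fermat's little theorem (a != 0)."""
    return pow(a, p - 2, p)


def encoding_matrix(n, t, p=P):
    """(n + t) x n matrix: identity rows for data, Cauchy rows for protection."""
    assert n + t <= p, "field too small for this Cauchy construction"
    identity = [[1 if i == j else 0 for j in range(n)] for i in range(n)]
    # Cauchy entries 1 / (x_i - y_j) with x_i = n + i and y_j = j, all distinct.
    cauchy = [[inv((n + i - j) % p, p) for j in range(n)] for i in range(t)]
    return identity + cauchy


def encode(G, data, p=P):
    """One coded symbol per row of G: the first n are the data itself."""
    return [sum(g * d for g, d in zip(row, data)) % p for row in G]


def solve_mod(A, b, p=P):
    """Solve A x = b over GF(p) by Gauss-Jordan elimination (A is n x n)."""
    n = len(A)
    M = [row[:] + [rhs] for row, rhs in zip(A, b)]
    for col in range(n):
        pivot = next(r for r in range(col, n) if M[r][col] != 0)
        M[col], M[pivot] = M[pivot], M[col]
        scale = inv(M[col][col], p)
        M[col] = [(v * scale) % p for v in M[col]]
        for r in range(n):
            if r != col and M[r][col]:
                f = M[r][col]
                M[r] = [(vr - f * vc) % p for vr, vc in zip(M[r], M[col])]
    return [M[i][n] for i in range(n)]


def recover(G, received, n, p=P):
    """Rebuild the n data symbols from any n surviving (index -> symbol) pairs."""
    idx = sorted(received)[:n]
    return solve_mod([G[i] for i in idx], [received[i] for i in idx], p)


n, t = 4, 2
data = [10, 200, 33, 7]
G = encoding_matrix(n, t)
coded = encode(G, data)

# Drop every possible set of t packets and check that the data is recovered.
for lost in combinations(range(n + t), t):
    survivors = {i: s for i, s in enumerate(coded) if i not in lost}
    assert recover(G, survivors, n) == data
```

The loop at the end plays the role of t arbitrary link failures: whichever t of the n + t packets are erased, the remaining n rows of G form an invertible system. The field size needed by this particular construction is not the bound discussed in the paper, which concerns the authors' own codes.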

The normalized capacity of the protected network is shown to be (n − t)/n. In other words, the effective throughput is reduced only by the fraction t/n, which is a dramatic improvement over the 50 % or higher overhead of 1+1 protection. The decoding process requires only a single round of linear algebra; the computational complexity is O(t·log q), making it suitable for real‑time applications.
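To make the capacity figure concrete, the short sketch below evaluates (n − t)/n for a few illustrative parameter pairs and compares it with a normalized capacity of 1/2 for 1+1 protection, taking the 50 % overhead quoted above at face value. The function name and the sample values of n and t are assumptions chosen only for illustration.

```python
from fractions import Fraction


def normalized_capacity(n, t):
    """Normalized capacity (n - t) / n when t failures are tolerated."""
    if not 0 <= t < n:
        raise ValueError("require 0 <= t < n")
    return Fraction(n - t, n)


# Assumed baseline: 1+1 protection duplicates every path, leaving half the capacity.
ONE_PLUS_ONE = Fraction(1, 2)

for n, t in [(10, 1), (10, 2), (10, 3)]:
    cap = normalized_capacity(n, t)
    print(f"n={n}, t={t}: capacity {cap} vs 1+1 capacity {ONE_PLUS_ONE}")
```

The coded scheme keeps the advantage as long as t < n/2; at t = n/2 the two capacities coincide.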

Node failures are handled by treating a failed node as the simultaneous loss of all incident links. Because the coding scheme already tolerates any t link failures, it automatically provides protection against any node whose degree does not exceed t. No additional coding structure is needed, which simplifies deployment in heterogeneous networks.
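The reduction from node failures to link failures can be illustrated with a small check. The sketch assumes an undirected topology given as a set of node pairs; the topology, node names, and function names are hypothetical and serve only to show the degree condition.

```python
def incident_links(links, node):
    """All links that are lost when `node` fails."""
    return {link for link in links if node in link}


def survives_node_failure(links, node, t):
    """A node failure is covered when it removes at most t links."""
    return len(incident_links(links, node)) <= t


# Hypothetical five-node topology; node "c" has degree 4, the others at most 2.
links = {("a", "b"), ("a", "c"), ("b", "c"), ("c", "d"), ("c", "e")}
t = 2

for node in "abcde":
    status = "covered" if survives_node_failure(links, node, t) else "not covered"
    print(node, len(incident_links(links, node)), status)
```

In this example a code designed for t = 2 covers the failure of every node except "c", whose failure would take down four links at once.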

The authors validate their approach through extensive simulations on three representative topologies: an optical fiber ring, a large‑scale IP backbone, and a wireless mesh network. For t = 1, 2, 3 the proposed codes achieve bandwidth savings of 30 %–45 % compared with 1+1, SBP, and p‑cycle schemes while maintaining recovery latency below 1 ms. The performance gap widens as t increases, confirming the scalability of the method.

A critical discussion is provided on the practical implications of the field size requirement. Larger fields guarantee the necessary linear independence but demand high‑speed finite‑field arithmetic in hardware, which may increase packet header overhead (to carry coding coefficients) and implementation cost. The paper suggests that network designers should select q based on the expected maximum number of concurrent failures, the size of the network, and the capabilities of the underlying switching equipment.
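As a rough aid for this kind of sizing decision, the sketch below evaluates the bound q ≥ C(n, t) + 1 quoted earlier and picks the smallest binary field GF(2^m) that satisfies it. Two assumptions are made purely for illustration: binary extension fields are used because they map naturally onto bits, and each protection packet is assumed to carry n coefficients of m bits each in its header. Neither detail is taken from the paper.

```python
from math import comb


def min_field_size(n, t):
    """Lower bound on q quoted in the summary: C(n, t) + 1."""
    return comb(n, t) + 1


def smallest_binary_field(n, t):
    """Smallest m and q = 2**m with q >= C(n, t) + 1 (assumed field family)."""
    bound = min_field_size(n, t)
    m = (bound - 1).bit_length()
    return m, 1 << m


def coefficient_header_bits(n, t):
    """Assumed header cost: n coefficients of m bits per protection packet."""
    m, _ = smallest_binary_field(n, t)
    return n * m


for n, t in [(10, 1), (10, 2), (10, 3)]:
    m, q = smallest_binary_field(n, t)
    print(f"n={n}, t={t}: bound {min_field_size(n, t)}, "
          f"GF(2^{m}) = GF({q}), header {coefficient_header_bits(n, t)} bits")
```

Under these assumptions, moving from t = 1 to t = 3 with n = 10 raises the field from GF(16) to GF(128) and the per-packet coefficient header from 40 to 70 bits.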

In conclusion, the work establishes a solid theoretical foundation for network‑coding‑based protection codes, derives explicit bounds on the minimum finite‑field size, and demonstrates substantial gains in bandwidth efficiency and restoration speed. Future research directions include adaptive coding that reacts to real‑time failure predictions, exploration of non‑linear coding constructions to reduce the field size, and prototype implementations on commercial routers and optical switches.