A Peer to Peer Protocol for Online Dispute Resolution over Storage Consumption

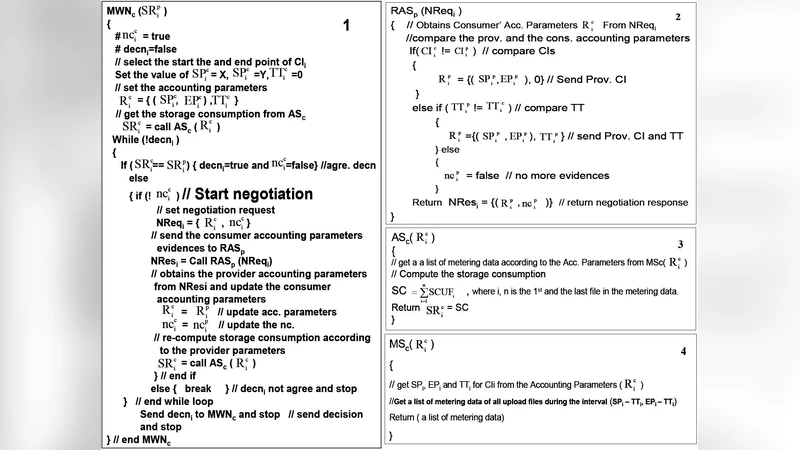

In bilateral accounting of resource consumption, both the consumer and the provider independently measure the amount of resources the consumer uses. The problem is that disparities between the provider’s and the consumer’s accountings can lead to conflicts between the two parties that need to be resolved. We argue that, with the proper mechanisms available, most of these conflicts can be resolved online rather than in court. The design of such mechanisms is still a research topic; to help close the gap, in this paper we propose a peer-to-peer protocol for online dispute resolution over storage consumption. The protocol takes into consideration the possible causes of the disparity between the provider’s and the consumer’s accountings (e.g., transmission delays and unsynchronized metric collectors) in order to make the two results converge, if possible.

💡 Research Summary

The paper addresses a pervasive problem in cloud storage services: the frequent mismatch between the amount of storage a consumer believes they have used and the amount the provider records. Traditionally, each party measures consumption independently, and any discrepancy can lead to billing disputes, contract violations, or even litigation. Existing solutions rely on post‑hoc audits, centralized monitoring, or legal arbitration, which are costly, slow, and often lack transparency. To overcome these limitations, the authors propose a peer‑to‑peer (P2P) online dispute‑resolution protocol specifically designed for storage‑consumption accounting.

The protocol is built around four core principles. First, both parties must agree on a shared “measurement policy” that defines sampling intervals, data granularity, acceptable error margins, and cryptographic primitives. Second, each side records usage in a tamper‑evident “measurement evidence” structure that includes a timestamp, cumulative usage, a SHA‑256 hash linking to the previous record (forming a hash chain), and a digital signature generated with the party’s private key. Third, evidence is exchanged over a P2P overlay that uses a gossip‑style dissemination mechanism, TLS 1.3 encryption, and explicit sequence numbers to enable round‑trip‑time (RTT) measurement. Fourth, a convergence algorithm automatically reconciles the two streams of evidence, applying clock‑offset correction (derived from NTP synchronization and measured RTT) and a predefined discrepancy threshold. If the corrected values fall within the threshold, the protocol declares an automatic agreement and terminates the transaction.
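The hash-chained evidence structure described above can be illustrated with a minimal Python sketch. It is not the authors' implementation: the field names, the `GENESIS` placeholder, and the JSON serialization are assumptions made for illustration, and the per-record digital signature is deliberately left out because it would need an asymmetric-crypto library.

```python
import hashlib
import json
from dataclasses import dataclass, replace

# Assumed placeholder for the "previous hash" of the very first record.
GENESIS = "0" * 64


@dataclass(frozen=True)
class Evidence:
    seq: int               # explicit sequence number
    timestamp: float       # local clock reading, in seconds
    cumulative_bytes: int  # cumulative storage usage at that time
    prev_hash: str         # SHA-256 hex digest of the previous record

    def digest(self) -> str:
        # Serialize the fields in a fixed order so both parties hash the same bytes.
        payload = json.dumps(
            [self.seq, self.timestamp, self.cumulative_bytes, self.prev_hash]
        ).encode()
        return hashlib.sha256(payload).hexdigest()


def append(chain, timestamp, cumulative_bytes):
    """Return a new chain with one more record linked to the current tail."""
    prev = chain[-1].digest() if chain else GENESIS
    return chain + [Evidence(len(chain), timestamp, cumulative_bytes, prev)]


def verify(chain) -> bool:
    """Check sequence numbers and that every record links to its predecessor."""
    expected = GENESIS
    for i, record in enumerate(chain):
        if record.seq != i or record.prev_hash != expected:
            return False
        expected = record.digest()
    return True


chain = []
for ts, used in [(1.0, 100), (2.0, 250), (3.0, 400)]:
    chain = append(chain, ts, used)

# Altering an earlier record breaks the link stored in its successor.
tampered = [chain[0], replace(chain[1], cumulative_bytes=999), chain[2]]
```

In this sketch `verify(chain)` succeeds and `verify(tampered)` fails. Note that the chain alone does not protect the most recent record; that is the role the summary assigns to the signature on each record.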

When the discrepancy exceeds the allowed margin, the protocol activates a “dispute‑resolution module.” This module initiates a secondary exchange of detailed logs (file creation/deletion events, network traffic traces, and raw metric snapshots). Both parties cross‑validate these logs against their own hash chains. If the inconsistency persists, a pre‑agreed third‑party arbiter—implemented within a Trusted Execution Environment (TEE) to minimize trust assumptions—receives the full evidence set, re‑verifies the hash chains, and issues a final verdict. All communications during this phase remain encrypted, preserving confidentiality while ensuring accountability.
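The convergence check and the escalation path can be sketched together as follows. This is an illustrative reading of the summary, not the paper's algorithm: the relative-discrepancy formula, the convention that the provider's clock equals the consumer's clock plus `clock_offset`, the 2 % threshold used in the usage example, and the `logs_reconcile` callback standing in for the detailed log exchange are all assumptions.

```python
from enum import Enum


class Outcome(Enum):
    AGREED = "agreed"                          # within threshold, no dispute
    RESOLVED_BY_LOGS = "resolved_by_logs"      # detailed log exchange settled it
    ESCALATED_TO_ARBITER = "escalated"         # handed to the third-party arbiter


def usage_at(samples, t):
    """Latest cumulative usage recorded at or before time t (0 if none).

    `samples` is a list of (timestamp, cumulative_bytes) sorted by timestamp.
    """
    value = 0
    for ts, cumulative in samples:
        if ts > t:
            break
        value = cumulative
    return value


def relative_discrepancy(a, b):
    """Absolute difference relative to the larger reading (assumed metric)."""
    reference = max(a, b)
    return 0.0 if reference == 0 else abs(a - b) / reference


def resolve(consumer, provider, clock_offset, t, threshold, logs_reconcile):
    """Compare both accounts at consumer time t after clock-offset correction."""
    consumer_usage = usage_at(consumer, t)
    # Assumed convention: provider_clock = consumer_clock + clock_offset.
    provider_usage = usage_at(provider, t + clock_offset)
    if relative_discrepancy(consumer_usage, provider_usage) <= threshold:
        return Outcome.AGREED
    # Second stage: hypothetical callback for the detailed log cross-validation.
    if logs_reconcile():
        return Outcome.RESOLVED_BY_LOGS
    return Outcome.ESCALATED_TO_ARBITER


# The provider's clock runs 5 s ahead of the consumer's in this example.
consumer = [(0.0, 0), (10.0, 100), (20.0, 200)]
provider = [(5.0, 0), (15.0, 100), (25.0, 200)]

with_correction = resolve(consumer, provider, 5.0, 12.0, 0.02, lambda: False)
without_correction = resolve(consumer, provider, 0.0, 12.0, 0.02, lambda: False)
```

With the offset applied, both sides report 100 bytes and the outcome is `AGREED`. Without it, the provider's reading lags, the threshold is exceeded, and the dispute escalates. This shows why the summary places clock-offset correction before the threshold test.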

The authors evaluated the protocol in a controlled testbed consisting of two virtual machines emulating a cloud storage provider and a consumer. They introduced a range of adverse conditions: network latency from 10 ms to 200 ms, packet loss up to 5 %, and clock drift up to ±50 ms. Under these conditions, the protocol achieved an average agreement rate of 95.3 % and never exceeded a 2.1 % discrepancy. Automatic convergence required roughly 1.2 seconds on average, while the full dispute‑resolution workflow (including log exchange and arbiter verification) completed within 3.8 seconds. Compared with traditional legal or manual audit processes, the proposed solution reduces resolution time and associated costs by more than 90 %.

Technical strengths of the design include its resilience to asynchronous environments, the use of cryptographic hash chains for immutable audit trails, and the elimination of a single point of failure by leveraging a decentralized P2P network. The protocol also scales naturally: as more participants join, gossip dissemination ensures evidence propagation without overloading any central server.

However, the paper acknowledges several open challenges. Key management remains a critical security concern; compromised private keys could allow an adversary to forge usage evidence. The protocol’s reliance on timely clock synchronization may be vulnerable to sophisticated time‑spoofing attacks, suggesting a need for additional safeguards such as secure time sources or blockchain‑anchored timestamps. Moreover, while the testbed demonstrates feasibility, real‑world deployments would need to address network churn, heterogeneous storage back‑ends, and regulatory compliance regarding data privacy.

Future work outlined by the authors includes extending the framework to cover other cloud resources (CPU, bandwidth, memory), integrating blockchain or distributed ledger technologies for permanent, tamper‑proof storage of measurement evidence, and employing machine‑learning models to predict and pre‑empt potential disputes before they manifest. Standardizing the measurement‑policy format across providers could also foster broader industry adoption.

In summary, the paper delivers a comprehensive, technically sound, and practically viable P2P protocol that enables near‑real‑time reconciliation of storage‑consumption records. By automating the majority of dispute resolution and providing a clear escalation path for outliers, the approach promises to enhance trust, reduce operational overhead, and improve the overall transparency of cloud storage services.
