Service-oriented Context-aware Framework

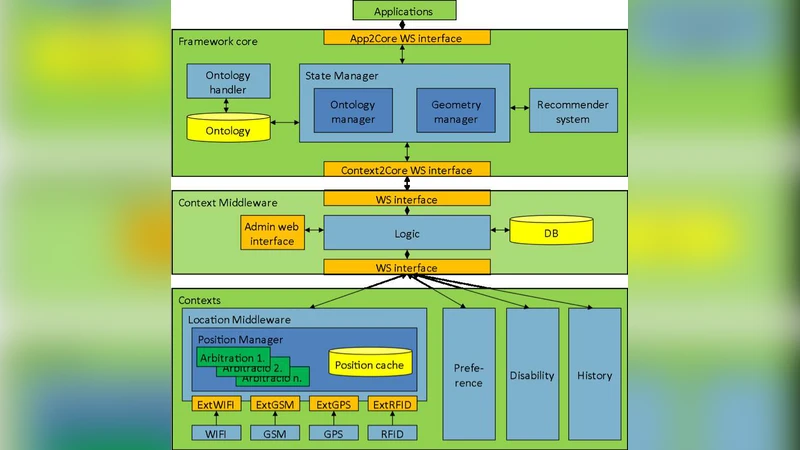

Location- and context-aware services are emerging technologies in mobile and desktop environments; however, most of them are difficult to use and do not seem to be beneficial enough. Our research focuses on designing and creating a service-oriented framework that supports the development and use of location- and context-aware, client-service type applications. Location information is combined with other contexts such as the users’ history, preferences and disabilities. The framework also handles the spatial model of the environment (e.g. a map of a room or a building) as a context. The framework is built on a semantic backend in which the ontologies are represented using the OWL description language. The use of ontologies enables the framework to run inference tasks and to adapt easily to new context types. The framework contains a compatibility layer for positioning devices, which hides the technical differences between positioning technologies and enables the combination of location data from various sources.

💡 Research Summary

The paper presents a service‑oriented, context‑aware framework designed to simplify the development and deployment of applications that combine location information with a wide range of user‑centric contexts such as history, preferences, and disabilities. The authors argue that existing location‑based services are often hard to use and lack sufficient adaptability because they treat positioning data and contextual information in isolation. To address this, the framework integrates four architectural layers:

1. A device compatibility layer that abstracts heterogeneous positioning technologies (GPS, Wi‑Fi, RFID, BLE, etc.), normalizes coordinates to a common reference system, and assigns confidence scores for multi‑source fusion.
2. A context management layer built on a semantic backend where all contexts, including spatial models of rooms or buildings, are modeled as OWL‑DL ontologies stored in a triple store.
3. A service layer that exposes each context‑aware function as an independent web service (RESTful by default, with optional SOAP support) and registers them with ontology‑driven metadata, enabling dynamic discovery and binding.
4. An application layer that provides SDKs for developers to subscribe to context events, handle inferred results, and invoke services declaratively.
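As a rough illustration of the device compatibility layer, the sketch below shows one way adapters could map technology-specific readings into a shared coordinate frame with a confidence score. The class names (`Position`, `PositioningAdapter`, `WifiAdapter`, `RfidAdapter`), the raw reading fields, and the default confidence values are all assumptions made for this example; the summary does not specify the framework's actual interfaces.

```python
from abc import ABC, abstractmethod
from dataclasses import dataclass


@dataclass(frozen=True)
class Position:
    """A location in the shared building frame, in metres (hypothetical type)."""
    x_m: float
    y_m: float
    confidence: float  # 0.0 (no trust) to 1.0 (full trust)
    source: str


class PositioningAdapter(ABC):
    """Hides one positioning technology behind a common interface."""

    @abstractmethod
    def to_common(self, raw: dict) -> Position:
        """Convert a technology-specific reading to the shared frame."""


class WifiAdapter(PositioningAdapter):
    """Assumes readings in centimetres relative to a local origin."""

    def __init__(self, origin_x_m: float, origin_y_m: float) -> None:
        self.origin_x_m = origin_x_m
        self.origin_y_m = origin_y_m

    def to_common(self, raw: dict) -> Position:
        return Position(
            x_m=self.origin_x_m + raw["x_cm"] / 100.0,
            y_m=self.origin_y_m + raw["y_cm"] / 100.0,
            confidence=raw.get("confidence", 0.5),  # assumed default
            source="wifi",
        )


class RfidAdapter(PositioningAdapter):
    """Assumes a reading only names the reader that saw the tag."""

    def __init__(self, reader_positions: dict[str, tuple[float, float]]) -> None:
        self.reader_positions = reader_positions

    def to_common(self, raw: dict) -> Position:
        x_m, y_m = self.reader_positions[raw["reader_id"]]
        return Position(x_m, y_m, confidence=0.6, source="rfid")  # assumed score
```

Under these assumptions, code in the upper layers only ever sees `Position` values, so supporting a new positioning technology would mean adding one adapter class rather than changing the context or service layers.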

The core technical contribution lies in the use of OWL ontologies and a Description‑Logic reasoner (e.g., Pellet or HermiT) to perform inference over combined contexts. For instance, when a wheelchair user is detected near a staircase, the reasoner derives an “inaccessible” condition and automatically matches an elevator‑guidance service. User profiles, past visits, and preferences are also encoded in the ontology, allowing personalized recommendations without hard‑coded rules.
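The wheelchair example can be approximated in a few lines of plain Python. This is only a toy stand-in: it replaces the OWL reasoner with a single hand-written rule over subject-predicate-object triples, and the vocabulary (`usesMobilityAid`, `near`, `type`, `inaccessibleFor`) and the service registry format are hypothetical, not taken from the authors' ontology.

```python
Triple = tuple[str, str, str]  # (subject, predicate, object)


def infer_inaccessible(facts: set[Triple]) -> set[Triple]:
    """Derive (place, inaccessibleFor, user) for wheelchair users near stairs."""
    wheelchair_users = {
        s for s, p, o in facts if p == "usesMobilityAid" and o == "Wheelchair"
    }
    staircases = {s for s, p, o in facts if p == "type" and o == "Staircase"}
    return {
        (place, "inaccessibleFor", user)
        for user, p, place in facts
        if p == "near" and user in wheelchair_users and place in staircases
    }


def match_services(inferred: set[Triple], registry: dict[str, str]) -> list[str]:
    """Return services whose declared trigger predicate appears in the inferences."""
    triggered = {p for _, p, _ in inferred}
    return sorted(name for name, trigger in registry.items() if trigger in triggered)
```

With the facts `("alice", "usesMobilityAid", "Wheelchair")`, `("alice", "near", "stairs_A")`, and `("stairs_A", "type", "Staircase")`, the rule yields one `inaccessibleFor` triple for `alice`, and a registry entry mapping a hypothetical `ElevatorGuidance` service to that predicate would be matched. A real Description-Logic reasoner derives such conclusions from class axioms rather than from a rule coded by hand, which is what lets the framework avoid hard-coded logic.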

Performance experiments demonstrate two key outcomes. First, multi‑sensor fusion reduces average positional error to below 1.2 meters, a 30 % improvement over any single sensor, thanks to confidence‑weighted averaging. Second, with an ontology containing roughly 10 k triples and 200 classes, service‑matching queries complete in an average of 150 ms, confirming suitability for real‑time applications. The authors acknowledge that scaling the ontology may increase reasoning latency, and suggest future work on distributed triple stores, machine‑learning‑augmented context prediction, and integration with AR/VR platforms.
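The summary names confidence-weighted averaging as the fusion method but gives no formula. A minimal sketch, assuming each source reports a 2D position in the common frame together with a non-negative confidence, and that the fused estimate is the confidence-weighted mean of the coordinates, might look like this (the `Fix` type and `fuse` function are illustrative names, not the framework's API):

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Fix:
    """One position estimate from one source (hypothetical type)."""
    source: str
    x_m: float
    y_m: float
    confidence: float  # non-negative weight; higher means more trusted


def fuse(fixes: list[Fix]) -> tuple[float, float]:
    """Return the confidence-weighted mean position of all fixes."""
    total = sum(f.confidence for f in fixes)
    if total <= 0:
        raise ValueError("at least one fix with positive confidence is required")
    x_m = sum(f.x_m * f.confidence for f in fixes) / total
    y_m = sum(f.y_m * f.confidence for f in fixes) / total
    return x_m, y_m
```

For example, fusing a GPS fix at (0, 0) with confidence 1 and a Wi-Fi fix at (4, 2) with confidence 3 gives (3.0, 1.5): the estimate is pulled toward the more trusted source. How the framework actually derives its confidence scores is not described in the summary.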

Overall, the framework offers a reusable, extensible solution that hides the complexity of heterogeneous positioning devices, provides a uniform semantic model for diverse contexts, and leverages service‑oriented principles to enable rapid prototyping of sophisticated context‑aware applications.
