We consider the problem of deriving the no-signaling condition from the assumption that, as seen from a complexity theoretic perspective, the universe is not an exponential place. A fact that disallows such a derivation is the existence of {\em polynomial superluminal} gates, hypothetical primitive operations that enable superluminal signaling but not the efficient solution of intractable problems. It therefore follows, if this assumption is a basic principle of physics, either that it must be supplemented with additional assumptions to prohibit such gates, or, improbably, that no-signaling is not a universal condition. Yet a gate of this kind is possibly implicit, though not recognized as such, in a decade-old quantum optical experiment involving position-momentum entangled photons. Here we describe a feasible modified version of this experiment that appears to explicitly demonstrate the action of this gate. Some obvious counter-claims are shown to be invalid. We believe that the unexpected possibility of polynomial superluminal operations arises because some practically measured quantum optical quantities are not describable as standard quantum mechanical observables.

Deep Dive into No-signaling, intractability and entanglement.

In a multipartite quantum system, any completely positive, trace-preserving map applied locally to one part does not affect the reduced density operator of the remaining part. This fundamental no-go result, called the "no-signaling theorem", implies that quantum entanglement [1] does not enable nonlocal ("superluminal") signaling [2] under standard operations, and is thus consistent with relativity, in spite of the counterintuitive, stronger-than-classical correlations [3] that entanglement enables. For simple systems, no-signaling follows from non-contextuality, the property that the probability assigned to a projector $\Pi_x$, given by the Born rule as $\mathrm{Tr}(\rho\Pi_x)$, where $\rho$ is the density operator, does not depend on how the orthonormal basis set is completed [4,5]. No-signaling has also been treated as a basic postulate from which to derive quantum theory [6].
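The statement of the theorem can be checked numerically for a small case. The following is a minimal sketch, not taken from the paper: it assumes a two-qubit Bell state, uses an amplitude-damping channel on part A as an arbitrary example of a local trace-preserving completely positive map, and compares the reduced density operator of part B before and after. The function names and the damping parameter `gamma = 0.3` are illustrative choices.

```python
import numpy as np

def reduced_state_B(rho, dA, dB):
    """Trace out subsystem A from a density matrix on A (x) B."""
    r = rho.reshape(dA, dB, dA, dB)      # indices: a, b, a', b'
    return np.einsum('abac->bc', r)      # sum over a = a'

def apply_local_channel_A(rho, kraus_ops, dB):
    """Apply sum_i (K_i (x) I) rho (K_i (x) I)^dagger, acting only on A."""
    identity_B = np.eye(dB)
    out = np.zeros_like(rho, dtype=complex)
    for K in kraus_ops:
        K_full = np.kron(K, identity_B)
        out += K_full @ rho @ K_full.conj().T
    return out

def amplitude_damping(gamma):
    """Kraus operators of a qubit amplitude-damping channel (example map)."""
    K0 = np.array([[1, 0], [0, np.sqrt(1 - gamma)]], dtype=complex)
    K1 = np.array([[0, np.sqrt(gamma)], [0, 0]], dtype=complex)
    return [K0, K1]

# Bell state (|00> + |11>)/sqrt(2), chosen here as an assumed test state.
phi_plus = np.array([1, 0, 0, 1], dtype=complex) / np.sqrt(2)
rho = np.outer(phi_plus, phi_plus.conj())

rho_after = apply_local_channel_A(rho, amplitude_damping(0.3), dB=2)

rho_B_before = reduced_state_B(rho, 2, 2)
rho_B_after = reduced_state_B(rho_after, 2, 2)

# The joint state changes, but B's local statistics do not.
print("joint state changed:", not np.allclose(rho, rho_after))
print("B's reduced state unchanged:", np.allclose(rho_B_before, rho_B_after))
```

The check relies on the Kraus operators satisfying the completeness relation (the sum of K_i†K_i is the identity); a map on A that violated it, such as a post-selected measurement outcome, would not be covered by this sketch.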

It is of interest to consider the question of whether/how computation theory, in particular intractability and uncomputability, matter to the foundations of (quantum) physics. Such a study, if successful, could potentially allow us to reduce the laws of physics to mathematical theorems about algorithms and thus shed new light on certain conceptual issues. For example, it could explain why stronger-than-quantum correlations that are compatible with no-signaling [7] are disallowed in quantum mechanics. One strand of thought leading to the present work, earlier considered by us in Ref. [8], was the proposition that the measurement problem is a consequence of basic algorithmic limitations imposed on the computational power that can be supported by physical laws. In the present work, we would like to see whether no-signaling can also be explained in a similar way, starting from computation theoretic assumptions.

The Turing machine (TM) represents an abstraction of the principles of mechanical computation. The machine consists of a head and a tape. The head can be in one of a finite number of “internal states”; it reads and overwrites a symbol drawn from a finite set, and then shifts one block left or right along the tape. It contains a finite internal program that directs its operations. A central open problem in computer science is the conjecture that two computational complexity classes, P and NP, are distinct in the standard Turing model of computation. P is the class of decision problems solvable in polynomial time by a (deterministic) TM. NP is the class of decision problems whose solution(s) can be verified in polynomial time by a deterministic TM. #P is the class of counting problems associated with the decision problems in NP. The word “complete” following a class denotes a problem X within the class that is maximally hard, in the sense that any other problem in the class can be solved in poly-time using an oracle giving the solutions of X in a single clock cycle. For example, determining whether a Boolean formula is satisfiable is NP-complete, and counting the number of its satisfying assignments is #P-complete. The word “hard” following a class denotes a problem not necessarily in the class, but to which all problems in the class reduce in poly-time.
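The distinction between verifying a solution and counting solutions can be illustrated with Boolean satisfiability. The sketch below is an illustration only and is not from the paper: it assumes formulas in conjunctive normal form with a DIMACS-style encoding (the integer k stands for variable k, and -k for its negation), and the example formula is an arbitrary choice. Checking a proposed assignment takes time linear in the formula size, whereas the counting routine shown here simply enumerates all 2^n assignments.

```python
from itertools import product

def satisfies(cnf, assignment):
    """Verify an assignment in time linear in the size of the formula.

    cnf: list of clauses, each a list of nonzero ints (k or -k).
    assignment: dict mapping variable index -> bool.
    """
    return all(
        any(assignment[abs(lit)] == (lit > 0) for lit in clause)
        for clause in cnf
    )

def count_satisfying(cnf, n_vars):
    """Count satisfying assignments by brute force over 2**n_vars cases."""
    count = 0
    for bits in product([False, True], repeat=n_vars):
        assignment = {i + 1: b for i, b in enumerate(bits)}
        if satisfies(cnf, assignment):
            count += 1
    return count

# Example formula (an assumed test case):
# (x1 or x2) and (not x1 or x3) and (not x2 or not x3)
cnf = [[1, 2], [-1, 3], [-2, -3]]

candidate = {1: True, 2: False, 3: True}
print("candidate verifies:", satisfies(cnf, candidate))
print("number of satisfying assignments:", count_satisfying(cnf, 3))
```

The brute-force counter is exponential in the number of variables; no polynomial-time counting algorithm is known, which is what the #P-completeness of the counting problem reflects.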

P is often taken to be the class of computational problems which are “efficiently solvable” (i.e., solvable in polynomial time) or “tractable”, although there are potentially larger classes that are considered tractable, such as RP [9] and BQP, the latter being the class of decision problems efficiently solvable by a quantum computer [9]. NP-complete and potentially harder problems, which are not known to be efficiently solvable, are considered intractable in the Turing model. If P ≠ NP and the universe is a polynomial, rather than an exponential, place, then physical laws cannot be harnessed to efficiently solve intractable problems, and NP-complete problems will be intractable in the physical world.

That classical physics supports various implementations of the Turing machine is well known. More generally, we expect that computational models supported by a physical theory will be limited by that theory. Witten identified expectation values in a topological quantum field theory with values of the Jones polynomial that are #P-hard [11]. There is evidence that a physical system with a non-Abelian topological term in its Lagrangian may have observables that are NP-hard, or even #P-hard [12].

Other recent related works that have studied the computational power of variants of standard physical theories from a complexity or computability perspective are, respectively, Refs. [8,13,14,15,16] and Refs. [8,13]. Ref. [15] noted that NP-complete problems do not seem to be tractable using resources of the physical universe, and suggested that this might embody a fundamental principle, christened the NP-hardness assumption (also cf. [17]). Ref. [18] studies how insights from quantum information theory could be used to constrain physical laws. We will informally refer to the proposition that the universe is a polynomial place in the computational sense (t

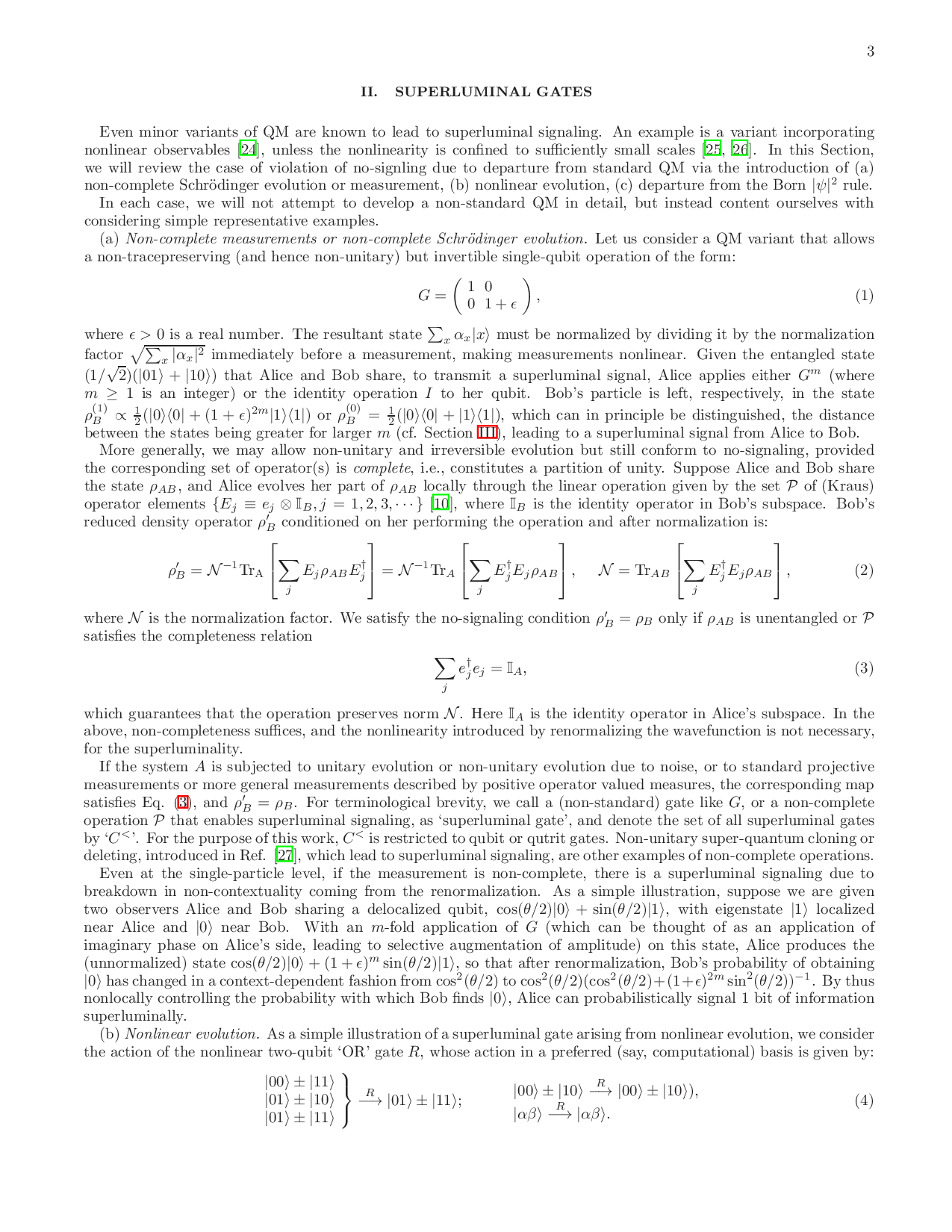

…(Full text truncated)…
