Dependable Distributed Computing for the International Telecommunication Union Regional Radio Conference RRC06

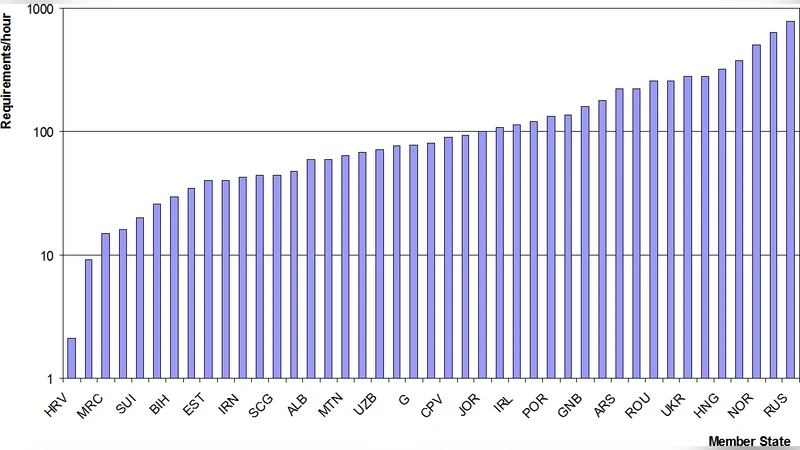

The International Telecommunication Union (ITU) Regional Radio Conference (RRC06) established a new frequency plan in 2006 for the introduction of digital broadcasting in European, African, Arab and CIS countries and in Iran. Preparing the plan involved complex calculations under a short deadline and required a dependable and efficient computing capability. The ITU designed and deployed a dedicated PC farm on site, while, in parallel, the European Organization for Nuclear Research (CERN) provided and supported a system based on the EGEE Grid. Each iteration of the planning cycle at RRC06 required the execution of on the order of 200,000 short jobs, totalling several hundred CPU hours, in less than 12 hours. The nature of the problem called for dynamic workload balancing and low-latency access to the computing resources. We present the strategy and the key technical choices that delivered a reliable service to RRC06.
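The abstract's workload figures imply a very small time budget per job. The following back-of-envelope calculation makes that explicit. It assumes 500 CPU hours as an illustrative stand-in for "several hundred CPU hours"; the constant names and the `sizing` helper are hypothetical and not taken from the paper.

```python
# Back-of-envelope sizing for one RRC06 planning cycle.
# JOBS and WINDOW_HOURS come from the abstract; CPU_HOURS is an ASSUMED
# illustrative value standing in for "several hundred CPU hours".

JOBS = 200_000          # short jobs per planning cycle
WINDOW_HOURS = 12.0     # the cycle had to finish in under 12 hours
CPU_HOURS = 500.0       # ASSUMPTION: not a figure reported by the paper


def sizing(jobs: int, cpu_hours: float, window_hours: float) -> dict:
    """Return mean CPU time per job, required throughput and minimum busy CPUs."""
    seconds_per_job = cpu_hours * 3600.0 / jobs
    jobs_per_second = jobs / (window_hours * 3600.0)
    # Lower bound: assumes perfect load balance and zero scheduling overhead.
    min_cpus = cpu_hours / window_hours
    return {
        "seconds_per_job": seconds_per_job,
        "jobs_per_second": jobs_per_second,
        "min_cpus": min_cpus,
    }


if __name__ == "__main__":
    s = sizing(JOBS, CPU_HOURS, WINDOW_HOURS)
    print(f"mean CPU time per job : {s['seconds_per_job']:.1f} s")
    print(f"required throughput   : {s['jobs_per_second']:.2f} jobs/s")
    print(f"minimum busy CPUs     : {s['min_cpus']:.1f}")
```

Under that assumption each job averages about 9 s of CPU time, the system must sustain roughly 4.6 jobs per second, and at least 42 CPUs must stay busy for the whole window. A per-job dispatch overhead of even a few seconds would be comparable to the job itself, which is one way to read the abstract's emphasis on low-latency access.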

💡 Research Summary

The paper describes the design, implementation, and operation of a dependable distributed-computing system that supported the International Telecommunication Union's Regional Radio Conference 2006 (RRC06). RRC06 was tasked with establishing a new frequency plan for digital broadcasting across Europe, Africa, the Arab world, the CIS, and Iran. Each planning cycle required the execution of roughly 200,000 short, independent jobs, totalling several hundred CPU hours (well under a minute of CPU time per job on average), within a strict 12-hour window. Traditional high-performance-computing resources could not guarantee the required reliability and latency, so the system combined an on-site dedicated PC farm built by the ITU with the EGEE Grid infrastructure provided and supported by the European Organization for Nuclear Research (CERN).

The on‑site PC farm consisted of about 120 Linux servers connected via a private Ethernet network, providing low‑latency access to data and deterministic scheduling. The EGEE Grid, accessed through the gLite middleware, offered a worldwide pool of thousands of cluster nodes that could be harnessed on demand. The authors classified jobs into “high‑priority” (critical national or band‑allocation calculations) and “regular” categories; high‑priority jobs were preferentially dispatched to the PC farm, while regular jobs were sent to the Grid. A custom Python‑based balancer continuously monitored resource utilization, estimated remaining execution times, and dynamically re‑routed jobs between the two resources to avoid bottlenecks.
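The routing policy described above can be sketched as follows. This is a minimal illustration, not the authors' balancer: the `Job`, `Pool` and `HybridBalancer` names, the per-job runtime estimates, the fixed Grid dispatch latency, and the greedy rule that moves queued jobs off whichever pool is the bottleneck while doing so lowers the estimated completion time are all assumptions.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class Job:
    job_id: int
    high_priority: bool
    est_seconds: float  # HYPOTHETICAL per-job runtime estimate


@dataclass
class Pool:
    name: str
    slots: int                 # concurrent job slots in this pool
    latency_s: float = 0.0     # ASSUMED fixed dispatch latency (0 for the local farm)
    queue: deque = field(default_factory=deque)

    def finish_estimate(self, extra_seconds: float = 0.0) -> float:
        """Estimated time to drain the queue, assuming all slots stay busy."""
        work = sum(j.est_seconds for j in self.queue) + extra_seconds
        if work <= 0:
            return 0.0
        return self.latency_s + work / self.slots


class HybridBalancer:
    """Priority-based routing plus greedy rebalancing between two pools."""

    def __init__(self, farm: Pool, grid: Pool):
        self.farm = farm
        self.grid = grid

    def submit(self, job: Job) -> str:
        # Critical jobs go to the deterministic local farm, the rest to the Grid.
        target = self.farm if job.high_priority else self.grid
        target.queue.append(job)
        return target.name

    def rebalance(self) -> int:
        """Move jobs off the bottleneck pool while that lowers the makespan."""
        moved = 0
        while True:
            a, b = self.farm, self.grid
            if a.finish_estimate() < b.finish_estimate():
                a, b = b, a  # make `a` the bottleneck pool
            if not a.queue:
                break
            job = a.queue[-1]  # the job at the back of the queue would start last
            before = a.finish_estimate()
            after = max(a.finish_estimate(-job.est_seconds),
                        b.finish_estimate(job.est_seconds))
            if after >= before:
                break  # moving would not shorten the overall completion time
            b.queue.append(a.queue.pop())
            moved += 1
        return moved
```

With four farm slots, 40 Grid slots and an assumed 60 s Grid dispatch latency, twenty 10-second high-priority jobs stay on the farm (moving any of them would only add latency), whereas with 200 such jobs the balancer offloads 160 to the Grid, bringing both pools to an estimated 100 s drain time. The latency term is what keeps small workloads local and lets large backlogs spill over to the Grid.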

Key technical choices included: (1) a lightweight, stateless job model that minimized scheduler overhead; (2) pre-staging of input data on Grid sites to reduce I/O latency; (3) automatic retry and fail-over mechanisms that moved failed jobs to alternative nodes; (4) real-time monitoring that enabled operators to detect and resolve issues within minutes; and (5) a hybrid scheduling policy that combined the deterministic performance of the PC farm with the elasticity of the Grid.
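Choices (1) and (3) work together: because a job carries no state, a failed attempt can simply be rerun from scratch elsewhere. A minimal sketch of such a retry-then-fail-over loop follows. `run_with_failover`, `JobFailed`, the `retries_per_node` parameter and the caller-supplied `run` function are hypothetical; the paper does not say how many retries were used or whether any backoff was applied.

```python
from typing import Callable, Sequence, TypeVar

R = TypeVar("R")


class JobFailed(Exception):
    """Raised when a job fails on every candidate node."""


def run_with_failover(
    job_id: str,
    nodes: Sequence[str],
    run: Callable[[str, str], R],
    retries_per_node: int = 2,
) -> tuple[str, R]:
    """Run a stateless job, retrying on the same node and then failing over.

    Because the job keeps no state between attempts, re-running it from
    scratch on another node is always safe.
    """
    errors: list[str] = []
    for node in nodes:
        for attempt in range(1, retries_per_node + 1):
            try:
                return node, run(job_id, node)
            except Exception as exc:  # any failure triggers a retry
                errors.append(f"{node}#{attempt}: {exc}")
    raise JobFailed(f"{job_id} failed on all nodes: " + "; ".join(errors))
```

For example, if `run` raises for node `wn01`, a call with `nodes=["wn01", "wn02"]` tries `wn01` twice and then returns the result from `wn02`. A production system would also distinguish transient from permanent errors and wait between attempts; both are omitted here for brevity.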

During the actual RRC06 planning cycles, the combined system completed all 200,000 jobs in an average of 8 hours 45 minutes and achieved 96 % overall system uptime. Offloading work to the Grid smoothed peak loads on the PC farm, with overall resource utilization reported at about 78 % of capacity. Three major incidents (a network switch failure, a Grid certificate renewal error, and a brief power outage) were resolved in 2 minutes 30 seconds on average, demonstrating the robustness of the architecture.

The authors conclude that the hybrid PC‑farm/EGEE‑Grid solution provides a viable template for any domain where large numbers of short, independent tasks must be processed under tight deadlines with high reliability. Future work is suggested in the areas of predictive workload modeling, container‑based lightweight execution environments, and seamless integration with commercial cloud resources to further enhance elasticity and fault tolerance.
