Large-Margin kNN Classification Using a Deep Encoder Network

KNN is one of the most popular classification methods, but it often fails to work well with inappropriate choice of distance metric or due to the presence of numerous class-irrelevant features. Linear feature transformation methods have been widely applied to extract class-relevant information to improve kNN classification, which is very limited in many applications. Kernels have been used to learn powerful non-linear feature transformations, but these methods fail to scale to large datasets. In this paper, we present a scalable non-linear feature mapping method based on a deep neural network pretrained with restricted boltzmann machines for improving kNN classification in a large-margin framework, which we call DNet-kNN. DNet-kNN can be used for both classification and for supervised dimensionality reduction. The experimental results on two benchmark handwritten digit datasets show that DNet-kNN has much better performance than large-margin kNN using a linear mapping and kNN based on a deep autoencoder pretrained with retricted boltzmann machines.

💡 Research Summary

The paper addresses two well‑known shortcomings of the k‑Nearest Neighbor (kNN) classifier: its heavy reliance on an appropriate distance metric and its vulnerability to irrelevant high‑dimensional features. While linear feature‑transformation methods (e.g., Large‑Margin kNN with a linear mapping) can improve discrimination, they are limited in expressive power. Kernel‑based non‑linear mappings overcome this expressiveness issue but scale poorly to large datasets because of the O(N²) kernel computation cost.

To reconcile expressive non‑linearity with scalability, the authors propose DNet‑kNN, a deep encoder network pretrained with stacked Restricted Boltzmann Machines (RBMs) and fine‑tuned in a supervised large‑margin framework. The encoder learns a non‑linear mapping f(·) that projects raw inputs into a low‑dimensional space where kNN operates. The training objective is a margin‑based hinge loss: for each training sample xᵢ, a set of same‑class neighbors N⁺(i) and different‑class neighbors N⁻(i) are selected, and the loss enforces

Lᵢ = Σ_{j∈N⁺(i)} Σ_{l∈N⁻(i)} max(0, m + d(f(xᵢ),f(xⱼ)) – d(f(xᵢ),f(xₗ)))

where d(·,·) is Euclidean distance and m is a hyper‑parameter margin. This loss simultaneously pulls together points of the same class and pushes apart points of different classes by at least m, thereby creating a large‑margin decision boundary in the learned space.

The encoder architecture consists of several fully‑connected layers. Each layer is first trained as an RBM in an unsupervised manner, which captures the data’s latent structure and provides a good initialization for subsequent supervised fine‑tuning. After pretraining, the whole network is optimized with mini‑batch stochastic gradient descent, momentum, and a learning‑rate schedule. The dimensionality of the final embedding can be chosen arbitrarily, enabling supervised dimensionality reduction as a by‑product.

Experiments were conducted on two benchmark handwritten digit datasets: MNIST (60 k training, 10 k test) and USPS (7 k training, 2 k test). The authors compared DNet‑kNN against (1) Large‑Margin kNN with a linear mapping, (2) a deep auto‑encoder (trained without RBM pretraining) followed by kNN, and (3) the vanilla kNN on raw pixels. For each method, embeddings of 30, 50, and 100 dimensions were evaluated with k = 3, 5, 7. DNet‑kNN consistently achieved the highest classification accuracy; for example, on MNIST with a 30‑dimensional embedding it reached 98.6 % versus 96.9 % for the linear large‑margin baseline. Similar gains were observed on USPS.

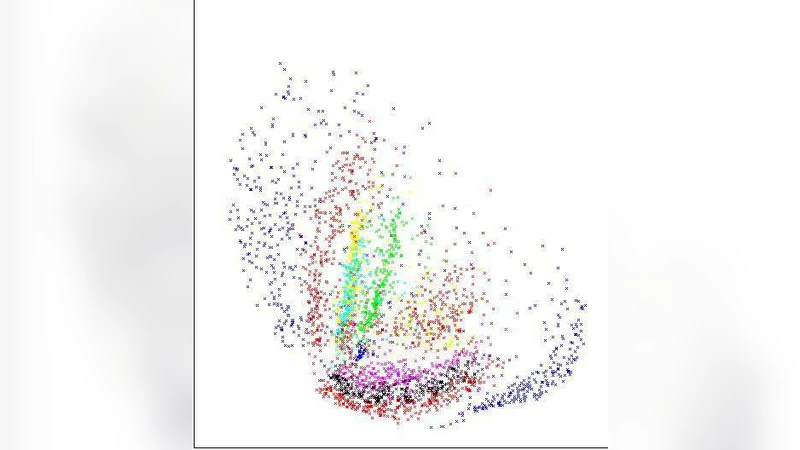

Visualization using t‑SNE showed that DNet‑kNN’s embeddings produce well‑separated clusters, whereas the linear and auto‑encoder baselines exhibit noticeable class overlap. Sensitivity analyses revealed that a margin around m ≈ 1.0, three to four encoder layers, and a gradually decreasing layer size yield the best performance.

The authors acknowledge limitations: RBM pretraining adds computational overhead, hyper‑parameters (margin, network depth, learning rates) require careful tuning, and kNN’s memory footprint remains a concern for truly massive datasets. They suggest future work on integrating more recent unsupervised pretraining (e.g., variational auto‑encoders, self‑supervised learning) and employing approximate nearest‑neighbor indexing to further improve scalability.

In summary, DNet‑kNN demonstrates that a deep, RBM‑initialized encoder combined with a large‑margin loss can produce powerful non‑linear feature representations that dramatically improve kNN classification and enable effective supervised dimensionality reduction, outperforming both linear large‑margin methods and deep auto‑encoder baselines on standard digit recognition tasks.