Calibration and imaging challenges at low radio frequencies: An overview of the state of the art

Many scientific deliverables of the next-generation low-frequency radio telescopes require high dynamic range imaging. Next-generation telescopes under construction promise at least a ten-fold increase in sensitivity compared with existing telescopes. The projected achievable RMS noise in the images from these telescopes is in the range of 1–10 $\mu$Jy/beam, corresponding to typical imaging dynamic ranges of $10^{6-7}$. High imaging dynamic range requires removal of systematic errors to high accuracy and over long integration intervals. In general, many sources of error are direction dependent and, unless corrected for, will limit the imaging dynamic range of these next-generation telescopes. This requires the development of new algorithms and software for calibration and imaging that can correct for such direction- and time-dependent errors. In this paper, I discuss the resulting algorithmic and computing challenges and the recent progress made towards addressing them.

💡 Research Summary

The paper provides a comprehensive overview of the calibration and imaging challenges that confront the next‑generation low‑frequency radio telescopes such as LOFAR, MWA, and the upcoming SKA‑Low. These instruments aim to achieve unprecedented sensitivities (RMS noise of 1–10 µJy beam⁻¹) and image dynamic ranges of 10⁶–10⁷, which are essential for a broad suite of scientific goals ranging from studies of the epoch of re‑ionization to low‑frequency spectral mapping of galaxy clusters. Achieving such performance, however, requires the removal of systematic errors to an accuracy far beyond what traditional calibration pipelines can deliver.

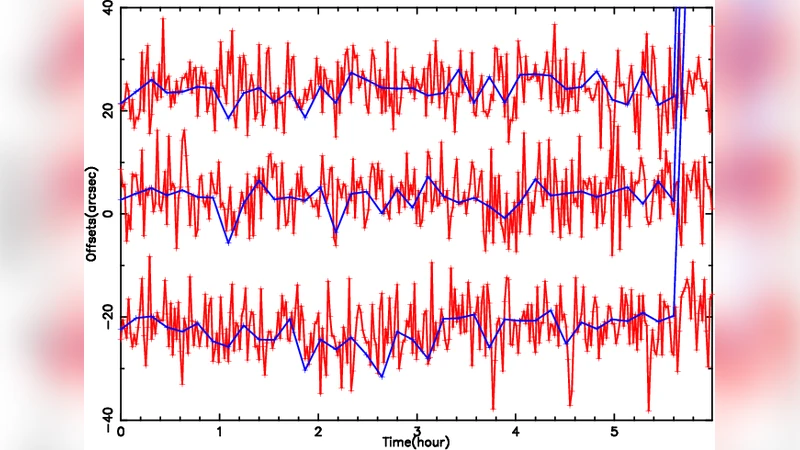

The author first identifies the principal sources of direction‑dependent errors (DDEs) that dominate low‑frequency observations: ionospheric phase screens that vary on timescales of seconds to minutes, antenna primary‑beam patterns that are both frequency‑ and direction‑dependent, wide‑band receiver gain variations, and non‑coplanar baseline effects (the w‑term). Because these errors evolve with time, frequency, and sky position, a single complex gain solution for the whole field, as is typical of conventional self‑calibration, leaves substantial residual artefacts after long integrations.
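
As a rough illustration of why a single field‑wide gain falls short (the notation here is assumed for this sketch, not taken from the paper), conventional self‑calibration models the visibility on the baseline between antennas $p$ and $q$ with one complex gain per antenna:

$$V_{pq}(t,\nu) = g_p(t,\nu)\, g_q^{*}(t,\nu) \sum_s I_s(\nu)\, e^{-2\pi i\,[u_{pq} l_s + v_{pq} m_s + w_{pq}(n_s - 1)]}$$

A direction‑dependent model instead lets each antenna gain vary with the source direction $s$:

$$V_{pq}(t,\nu) = \sum_s g_{p,s}(t,\nu)\, g_{q,s}^{*}(t,\nu)\, I_s(\nu)\, e^{-2\pi i\,[u_{pq} l_s + v_{pq} m_s + w_{pq}(n_s - 1)]}$$

Here $I_s$ is the flux density of source $s$, $(l_s, m_s)$ are its direction cosines, $n_s = \sqrt{1 - l_s^2 - m_s^2}$, and $(u_{pq}, v_{pq}, w_{pq})$ are the baseline coordinates in wavelengths. In the second form the gains sit inside the sum over sources, so no single pair $g_p g_q^{*}$ can be factored out and divided away, which is the origin of the residual artefacts described above.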

To address these issues, the paper surveys the state‑of‑the‑art DDE correction techniques. A‑projection and AW‑projection incorporate antenna beam models directly into the imaging kernel, allowing simultaneous correction of beam and w‑term effects. Facet‑based calibration divides the field of view into many smaller regions and solves for independent gain and ionospheric phase solutions in each facet. “Peeling” iteratively solves for and subtracts the brightest sources, each with its own gain solution, while newer “differential gains” and hybrid facet‑based solvers jointly estimate ionospheric screens and beam variations across the entire sky. The author reports, using simulated and real data, that these methods can reduce residual sidelobes by orders of magnitude, bringing the achievable dynamic range into the 10⁶–10⁷ regime.
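
The peeling step can be sketched in a few lines of NumPy. This is a toy example under stated assumptions, not the paper's implementation: the antenna layout, source fluxes, and phase errors are invented, the w‑term is ignored, and the gain solver is a generic alternating least‑squares iteration (in the style of StefCal) chosen only for brevity. It solves for gains toward the brightest source alone and subtracts that source with those gains, then compares the residual against a subtraction that ignores the direction‑dependent gains.

```python
import numpy as np

rng = np.random.default_rng(0)
n_ant = 20
# Hypothetical antenna positions (east, north) in wavelengths.
xy = rng.uniform(-500.0, 500.0, size=(n_ant, 2))
off_diag = ~np.eye(n_ant, dtype=bool)  # exclude autocorrelations


def point_source(flux, l, m):
    """Uncorrupted visibility matrix of a point source at direction cosines (l, m)."""
    a = np.exp(-2j * np.pi * (xy[:, 0] * l + xy[:, 1] * m))
    return flux * np.outer(a, a.conj())


def corrupt(model, gains):
    """Apply per-antenna gains: V_pq = g_p * M_pq * conj(g_q)."""
    return np.outer(gains, gains.conj()) * model


def solve_gains(vis, model, n_iter=100):
    """Alternating least squares for g in vis ~ g_p * model_pq * conj(g_q)."""
    g = np.ones(n_ant, dtype=complex)
    for _ in range(n_iter):
        z = model * g.conj()[None, :]              # z_pq = M_pq * conj(g_q)
        num = np.sum(vis * z.conj() * off_diag, axis=1)
        den = np.sum(np.abs(z) ** 2 * off_diag, axis=1)
        g = 0.5 * (g + num / den)                  # average with previous iterate
    return g


def rms(v):
    """RMS amplitude over cross-correlations only."""
    return np.sqrt(np.mean(np.abs(v[off_diag]) ** 2))


# Two sources, each seen through its own (direction-dependent) phase errors.
bright = point_source(100.0, 0.010, 0.020)
faint = point_source(1.0, -0.015, 0.005)
g_bright = np.exp(1j * rng.normal(0.0, 0.5, n_ant))
g_faint = np.exp(1j * rng.normal(0.0, 0.5, n_ant))
vis = corrupt(bright, g_bright) + corrupt(faint, g_faint)

# Subtracting the bright source without its gains leaves large residuals.
rms_naive = rms(vis - bright)

# Peeling: solve gains toward the bright source, then subtract it with them.
g_est = solve_gains(vis, bright)
rms_peeled = rms(vis - corrupt(bright, g_est))

print(f"residual rms, naive subtraction: {rms_naive:.2f}")
print(f"residual rms, after peeling:     {rms_peeled:.2f}")
```

In a real pipeline the same step would be repeated for the next‑brightest source on the peeled residual, and the solutions would be obtained per time and frequency interval.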

A second major focus is the computational burden imposed by the petabyte‑scale data rates of next‑generation arrays. Traditional CPU‑centric pipelines suffer from memory bandwidth and I/O bottlenecks. The paper details how GPU acceleration of w‑stacking and w‑projection, combined with MPI‑based distributed memory frameworks, yields speed‑ups of tenfold or more. Real‑time flagging, online quality assessment, and streaming calibration are presented as essential components of a scalable processing chain. The author also discusses the importance of data compression and hierarchical storage management to keep the workflow tractable.
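
For reference, the w‑stacking idea mentioned above can be written down compactly. This is a sketch in assumed notation that follows the usual formulation of the technique rather than anything quoted from the paper. With $n = \sqrt{1 - l^2 - m^2}$, the measured visibilities are

$$V(u,v,w) = \iint \frac{I(l,m)}{n}\, e^{-2\pi i\,[ul + vm + w(n-1)]}\, dl\, dm$$

For fixed $w$, a two‑dimensional inverse Fourier transform over $(u,v)$ recovers $I(l,m)/n$ multiplied by the phase screen $e^{-2\pi i\, w(n-1)}$. Multiplying by the conjugate screen and integrating over $w$ therefore gives

$$\frac{I(l,m)}{n}\,(w_{\max} - w_{\min}) = \int_{w_{\min}}^{w_{\max}} e^{2\pi i\, w(n-1)} \left[ \iint V(u,v,w)\, e^{2\pi i\,(ul + vm)}\, du\, dv \right] dw$$

In practice the integral over $w$ becomes a sum over a finite set of $w$‑layers: visibilities are gridded onto the layer nearest in $w$, each layer is inverse‑FFTed, multiplied pixel by pixel by its phase screen, and accumulated. The layers are independent until the final sum, which is one reason the method lends itself to GPU and distributed‑memory implementations.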

The imaging stage itself is examined in depth. Multi‑scale and multi‑frequency CLEAN (MS‑MFS) algorithms capture both spatial and spectral complexity, while regularized maximum‑likelihood (MLE) and compressed‑sensing approaches provide a mathematically rigorous route to high‑dynamic‑range reconstruction. When these deconvolution techniques are coupled with direction‑dependent calibration, simulated experiments achieve dynamic ranges exceeding 10⁶, a dramatic improvement over classic single‑scale CLEAN, which typically stalls near 10⁴.
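
To make the deconvolution step concrete, the following is a minimal sketch of classic single‑scale (Högbom) CLEAN, the baseline the multi‑scale and compressed‑sensing methods above are compared against. Everything specific in it is an assumption for illustration: the random uv‑coverage mask, the 64×64 grid, the three point sources, and the loop gain and threshold. It is not the MS‑MFS algorithm and includes no direction‑dependent corrections.

```python
import numpy as np


def hogbom_clean(dirty, psf, gain=0.1, threshold=1e-2, max_iter=5000):
    """Hogbom CLEAN; psf has its peak (value 1) at pixel (0, 0) and wraps around."""
    residual = dirty.copy()
    model = np.zeros_like(dirty)
    for _ in range(max_iter):
        # Locate the strongest remaining peak (positive or negative).
        y, x = np.unravel_index(np.argmax(np.abs(residual)), residual.shape)
        peak = residual[y, x]
        if abs(peak) < threshold:
            break
        # Move a fraction of the peak into the model and remove its PSF response.
        model[y, x] += gain * peak
        residual -= gain * peak * np.roll(psf, (y, x), axis=(0, 1))
    return model, residual


rng = np.random.default_rng(1)
n = 64

# Hypothetical uv coverage: a random mask made symmetric under k -> -k,
# so that the PSF and the dirty image are real-valued.
r = rng.random((n, n))
r_reflected = np.roll(r[::-1, ::-1], 1, axis=(0, 1))  # r evaluated at -k (mod n)
weights = ((r + r_reflected) > 1.0).astype(float)
weights[0, 0] = 1.0
norm = weights.mean()

# PSF normalised to unit peak at pixel (0, 0).
psf = np.fft.ifft2(weights).real / norm

# Toy sky with three point sources (arbitrary flux units).
sky = np.zeros((n, n))
sky[20, 30] = 10.0
sky[40, 12] = 5.0
sky[50, 50] = 2.0

# Dirty image = sky circularly convolved with the PSF.
dirty = np.fft.ifft2(np.fft.fft2(sky) * weights).real / norm

model, residual = hogbom_clean(dirty, psf)

# One common definition of dynamic range: peak brightness over residual RMS.
dynamic_range = model.max() / residual.std()
print(f"brightest CLEAN component: {model.max():.3f}")
print(f"dynamic range (peak / residual rms): {dynamic_range:.0f}")
```

The toy case is noise‑free and has a perfectly known PSF, so the residual can be driven down to the chosen threshold. In real data the floor is set by thermal noise and by calibration errors, which is why the paper pairs deconvolution with direction‑dependent calibration.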

Finally, the paper reviews the emerging software ecosystem: CASA‑next, DDFacet, WSClean, and MeqTrees each implement different aspects of the DDE pipeline, from beam modeling to GPU‑accelerated imaging. However, the lack of standardized interfaces and end‑to‑end automation remains a barrier to widespread adoption. The author advocates a modular, open‑source framework built on the Radio Interferometer Measurement Equation (RIME) to enable seamless integration of calibration, imaging, and quality‑control modules.

In conclusion, the author argues that the path to the ambitious imaging performance of future low‑frequency arrays lies at the intersection of algorithmic innovation (particularly direction‑dependent calibration and advanced deconvolution) and high‑performance computing architectures capable of handling massive data streams. Ongoing developments in both domains are already narrowing the gap, but further work is needed on real‑time DDE estimation, scalable data handling, and the establishment of community‑wide software standards. The paper thus serves both as a state‑of‑the‑art survey and a roadmap for the next decade of low‑frequency radio astronomy.
