Solar radiation forecasting using ad-hoc time series preprocessing and neural networks

In this paper, we present an application of neural networks in the renewable energy domain. We have developed a methodology for the daily prediction of global solar radiation on a horizontal surface. We use ad-hoc time series preprocessing and a Multi-Layer Perceptron (MLP) to predict solar radiation at a daily horizon. First results are promising, with nRMSE < 21% and RMSE < 998 Wh/m². Our optimized MLP produces predictions similar to, or even better than, those of conventional methods such as ARIMA techniques, Bayesian inference, Markov chains and k-Nearest-Neighbors approximators. Moreover, we found that our data preprocessing approach can significantly reduce forecasting errors.

💡 Research Summary

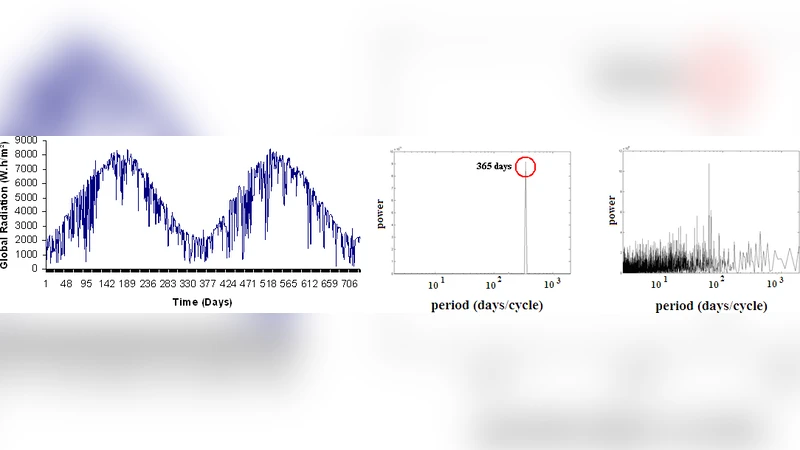

The paper addresses the problem of forecasting daily global solar radiation (GSR) on a horizontal surface, a key variable for the planning and operation of photovoltaic (PV) systems and for grid‑level renewable integration. The authors propose a two‑stage methodology that couples a purpose‑built time‑series preprocessing pipeline with a Multi‑Layer Perceptron (MLP) neural network.

In the preprocessing stage, missing values in the raw daily GSR measurements are filled by linear interpolation, and outliers are removed with inter‑quartile‑range (IQR) filtering. A logarithmic transformation is then applied to reduce skewness, followed by first‑order differencing and seasonal differencing at a 12‑month (annual) lag, i.e. roughly 365 days for a daily series, to render the series stationary. Finally, Z‑score normalization scales the data to zero mean and unit variance. This “ad‑hoc” preprocessing is designed to remove the deterministic components (trend, seasonality) and to present the neural network with a stationary, homoscedastic input, which makes the underlying nonlinear dynamics easier to learn.
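The pipeline above can be sketched in NumPy as follows. This is a minimal illustration under stated assumptions, not the authors' code: the 1.5 × IQR fence, re-interpolating outliers rather than dropping them, the small `eps` offset inside the log, and a seasonal lag of 365 days are all choices made here, and `fill_and_clip`, `preprocess` and `invert_one_step` are hypothetical helper names. The inverse step is included because a forecast produced in the transformed space has to be mapped back to Wh/m² before it can be scored.

```python
import numpy as np

def fill_and_clip(x, k=1.5):
    """Fill NaNs by linear interpolation, then replace IQR outliers the same way."""
    x = np.asarray(x, dtype=float).copy()
    idx = np.arange(len(x))
    missing = np.isnan(x)
    x[missing] = np.interp(idx[missing], idx[~missing], x[~missing])
    q1, q3 = np.percentile(x, [25, 75])
    lo, hi = q1 - k * (q3 - q1), q3 + k * (q3 - q1)
    bad = (x < lo) | (x > hi)
    # Assumption: outliers are re-interpolated (not dropped) to keep the daily grid intact.
    x[bad] = np.interp(idx[bad], idx[~bad], x[~bad])
    return x

def preprocess(x, season=365, eps=1e-6):
    """Clean -> log -> first difference -> seasonal difference -> z-score."""
    logx = np.log(fill_and_clip(x) + eps)   # eps guards against log(0)
    d = np.diff(logx)                       # first-order differencing
    s = d[season:] - d[:-season]            # seasonal differencing at lag `season`
    mu, sigma = s.mean(), s.std()
    return (s - mu) / sigma, (mu, sigma)

def invert_one_step(z_next, x_hist, stats, season=365, eps=1e-6):
    """Map one transformed-space forecast back to radiation units for the day after x_hist."""
    mu, sigma = stats
    logx = np.log(fill_and_clip(x_hist) + eps)
    d = np.diff(logx)
    d_next = z_next * sigma + mu + d[-season]   # undo z-score, then seasonal differencing
    return np.exp(logx[-1] + d_next) - eps      # undo first differencing, then the log
```

The z-score statistics returned by `preprocess` are meant to be estimated on the training period and reused unchanged at forecast time, so that the inversion stays consistent with what the network saw.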

The processed series is then fed into an MLP. The input layer receives a sliding window of the previous N days (the authors experiment with N = 7), which turns forecasting into a supervised regression task. The network has two to three hidden layers of 50–100 neurons each; the exact configuration is selected by grid‑search cross‑validation. ReLU and sigmoid activations are combined in the hidden layers to capture both near‑linear and strongly nonlinear relationships, while the output layer uses a linear activation to produce a continuous GSR estimate. Training uses the Adam optimizer with an initial learning rate of 0.001, adaptive learning‑rate decay, and early stopping on a held‑out validation set (10 % of the data). Early stopping in particular limits over‑fitting, which is a real risk given the relatively small size of the dataset.
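A compact NumPy sketch of the windowing and network described above follows. It is an illustration under assumptions, not the authors' implementation: it uses exactly two hidden layers (ReLU, then sigmoid), full-batch Adam with the stated learning rate of 0.001, and early stopping on the chronologically last 10% of samples. The layer widths, patience, epoch budget, He-style initialisation and all helper names (`make_windows`, `train_mlp`, etc.) are hypothetical, and the learning-rate decay and grid search are omitted for brevity.

```python
import numpy as np

def make_windows(series, n_lags=7):
    """Build (X, y): row i of X holds series[i:i+n_lags], y[i] is the next value."""
    s = np.asarray(series, dtype=float)
    X = np.array([s[i:i + n_lags] for i in range(len(s) - n_lags)])
    return X, s[n_lags:]

def init_params(sizes, rng):
    """He-style normal initialisation; one [W, b] pair per layer."""
    return [[rng.normal(0.0, np.sqrt(2.0 / n_in), size=(n_in, n_out)), np.zeros(n_out)]
            for n_in, n_out in zip(sizes[:-1], sizes[1:])]

def forward(params, X):
    """ReLU hidden layer -> sigmoid hidden layer -> linear output (exactly 3 layers)."""
    (W1, b1), (W2, b2), (W3, b3) = params
    z1 = X @ W1 + b1
    a1 = np.maximum(z1, 0.0)
    a2 = 1.0 / (1.0 + np.exp(-(a1 @ W2 + b2)))
    return (a2 @ W3 + b3).ravel(), (z1, a1, a2)

def gradients(params, X, y):
    """Backpropagate the mean-squared error through the three layers."""
    (_, _), (W2, _), (W3, _) = params
    pred, (z1, a1, a2) = forward(params, X)
    d_out = (2.0 / len(y)) * (pred - y)[:, None]   # dMSE/dOutput, shape (n, 1)
    d_z2 = (d_out @ W3.T) * a2 * (1.0 - a2)        # through the sigmoid
    d_z1 = (d_z2 @ W2.T) * (z1 > 0)                # through the ReLU
    return [[X.T @ d_z1, d_z1.sum(0)],
            [a1.T @ d_z2, d_z2.sum(0)],
            [a2.T @ d_out, d_out.sum(0)]]

def train_mlp(X, y, hidden=(64, 64), lr=1e-3, max_epochs=500, patience=20,
              val_frac=0.1, seed=0):
    """Full-batch Adam with early stopping on the last `val_frac` of the samples."""
    rng = np.random.default_rng(seed)
    n_val = max(1, int(len(y) * val_frac))
    X_tr, y_tr, X_val, y_val = X[:-n_val], y[:-n_val], X[-n_val:], y[-n_val:]
    params = init_params([X.shape[1], *hidden, 1], rng)
    m = [[np.zeros_like(p) for p in layer] for layer in params]
    v = [[np.zeros_like(p) for p in layer] for layer in params]
    beta1, beta2, eps = 0.9, 0.999, 1e-8
    best_loss, best_params, wait = np.inf, params, 0
    for t in range(1, max_epochs + 1):
        grads = gradients(params, X_tr, y_tr)
        for i in range(len(params)):
            for j in range(2):
                m[i][j] = beta1 * m[i][j] + (1 - beta1) * grads[i][j]
                v[i][j] = beta2 * v[i][j] + (1 - beta2) * grads[i][j] ** 2
                m_hat = m[i][j] / (1 - beta1 ** t)   # bias-corrected first moment
                v_hat = v[i][j] / (1 - beta2 ** t)   # bias-corrected second moment
                params[i][j] = params[i][j] - lr * m_hat / (np.sqrt(v_hat) + eps)
        val_loss = float(np.mean((forward(params, X_val)[0] - y_val) ** 2))
        if val_loss < best_loss:                     # keep the best weights seen so far
            best_loss, wait = val_loss, 0
            best_params = [[p.copy() for p in layer] for layer in params]
        else:
            wait += 1
            if wait >= patience:                     # early stopping
                break
    return best_params, best_loss
```

In practice, a library implementation such as scikit-learn's `MLPRegressor` (with `hidden_layer_sizes`, `solver="adam"`, `learning_rate_init`, `early_stopping` and `validation_fraction`) covers most of this, although it applies a single activation function to all hidden layers.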

Performance is evaluated against four benchmark models widely used in solar‑radiation forecasting: (1) seasonal ARIMA, (2) Bayesian inference time‑series models, (3) Markov‑chain transition models, and (4) k‑Nearest‑Neighbors (k‑NN) regression. Using the same train‑test split, the authors report a normalized root‑mean‑square error (nRMSE) of 20.8 % and an absolute RMSE of 998 Wh m⁻² for the MLP. All benchmark methods yield higher errors (ARIMA ≈ 24 %, Bayesian ≈ 23 %, Markov ≈ 25 %, k‑NN ≈ 22 %). The MLP’s advantage is most pronounced during periods of rapid seasonal change or abrupt changes in cloud cover, where linear or simple stochastic models tend to lag or overshoot.
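The two scores can be written as RMSE = sqrt(mean((y − ŷ)²)) and nRMSE = RMSE / mean(y). The summary does not state which normalisation the authors use. Dividing by the mean observed value is assumed here because it is a common convention in solar forecasting; other definitions (for example dividing by the range of the observations) give different percentages.

```python
import numpy as np

def rmse(obs, pred):
    """Root-mean-square error, in the units of the data (e.g. Wh/m² per day)."""
    obs, pred = np.asarray(obs, dtype=float), np.asarray(pred, dtype=float)
    return float(np.sqrt(np.mean((obs - pred) ** 2)))

def nrmse(obs, pred):
    """RMSE divided by the mean observation (assumed normalisation)."""
    return rmse(obs, pred) / float(np.mean(obs))
```

Scoring every model with the same two functions on the same test window is what makes the comparison across MLP, ARIMA, Bayesian, Markov and k-NN forecasts meaningful.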

A separate ablation study isolates the contribution of the preprocessing pipeline. When the raw, unprocessed series is fed directly to the MLP, nRMSE rises to roughly 28 %, confirming that the preprocessing step is responsible for a substantial portion of the accuracy gain. By contrast, applying the preprocessing but replacing the MLP with a simple linear regression model yields only modest improvements, indicating that the synergy between robust preprocessing and a nonlinear learner is essential.
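The linear baseline in the ablation can be pictured as ordinary least squares on the same lagged window that the MLP receives. The sketch below assumes that reading, since the summary does not specify the regression design; `fit_linear_ar` and `predict_next` are hypothetical names, and the intercept column is a choice made here.

```python
import numpy as np

def fit_linear_ar(series, n_lags=7):
    """Ordinary least squares on a sliding window of n_lags past values plus an intercept."""
    s = np.asarray(series, dtype=float)
    X = np.array([s[i:i + n_lags] for i in range(len(s) - n_lags)])
    A = np.column_stack([X, np.ones(len(X))])      # append intercept column
    coef, *_ = np.linalg.lstsq(A, s[n_lags:], rcond=None)
    return coef[:-1], coef[-1]                      # lag weights (oldest first), intercept

def predict_next(series, weights, intercept):
    """One-step forecast from the last len(weights) values."""
    s = np.asarray(series, dtype=float)
    return float(s[-len(weights):] @ weights + intercept)
```

Because this model is linear in the lagged inputs, it can only exploit whatever structure survives the preprocessing as a linear relationship, which is one way to read the modest gains reported for it.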

The authors conclude that their combined approach delivers state‑of‑the‑art daily solar‑radiation forecasts, outperforming traditional statistical and machine‑learning baselines while remaining computationally lightweight enough for real‑time deployment. They suggest several avenues for future work: incorporation of additional meteorological predictors (temperature, humidity, wind speed), integration of satellite‑derived irradiance products, extension to multi‑day or weekly horizons, and comparison with more advanced deep‑learning architectures such as Long Short‑Term Memory (LSTM) networks or Transformer‑based models. Such extensions could further enhance forecast reliability and support the broader transition toward high‑penetration solar energy in modern power systems.
