Derivation of UML Based Performance Models for Design Assessment in a Reuse Based Software Development Approach

Reuse-based software development provides an opportunity for better quality and increased productivity in software products. One of the most critical aspects of the quality of a software system is its performance. The systematic application of software performance engineering techniques throughout the development process can help to identify design alternatives that preserve desirable qualities such as extensibility and reusability while meeting performance objectives. At present, most performance failures are due to a lack of consideration of performance issues early in the development process, especially in the design phase. These performance failures result in damaged customer relations, lost productivity for users, cost overruns due to tuning or redesign, and missed market windows. In this paper, we propose UML-based performance models for design assessment in a reuse-based software development scenario.

💡 Research Summary

The paper addresses a critical gap in reuse‑based software development (RBSD): the frequent neglect of performance considerations during the design phase, which often leads to costly post‑implementation fixes, missed market windows, and damaged customer relationships. To bridge this gap, the authors propose a comprehensive methodology that integrates performance engineering directly into UML‑based design artifacts, thereby enabling early‑stage performance assessment without breaking the reuse paradigm.

Core Contributions

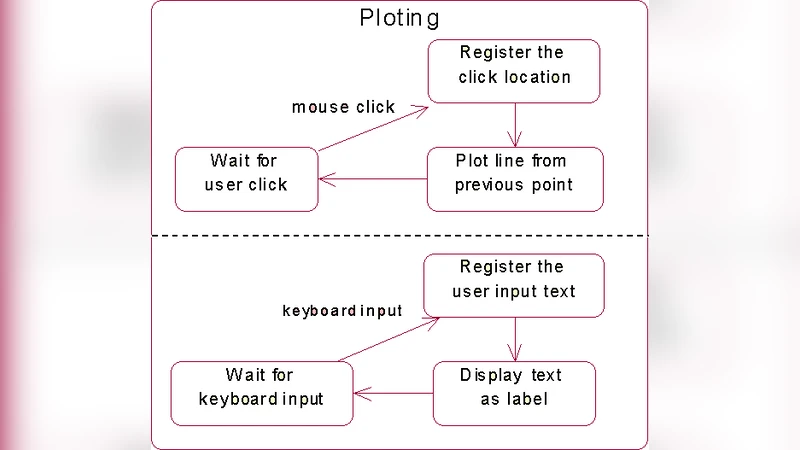

- UML‑Centric Performance Modeling – The approach extends standard UML diagrams (class, component, sequence, activity) with performance annotations using established profiles such as MARTE or a dedicated Software Performance Engineering (SPE) profile. These stereotypes capture quantitative attributes (service time, throughput, resource demand, SLA constraints) directly on the model elements that represent reusable components and their interfaces.

- Systematic Model Transformation – A set of formal mapping rules translates the annotated UML model into a mathematically analyzable performance model (Queueing Network, Layered Queueing Network, or Stochastic Petri Net). The mapping preserves structural relationships (e.g., message calls become service nodes, concurrency becomes multi‑server queues) and respects the encapsulation boundaries imposed by reusable components.

- Automated Analysis and Feedback Loop – The transformed model is fed to performance solvers (PDQ, LQNS, etc.) for analytical or simulation‑based evaluation. Results (response time, utilization, bottleneck identification) are automatically fed back into the original UML diagrams as visual cues (color‑coded nodes, annotated notes), allowing designers to iteratively refine the architecture while staying within the familiar UML environment.

- Design‑Space Exploration for Reuse – Because performance contracts are embedded in component interfaces, the methodology supports rapid substitution of alternative reusable assets. Designers can evaluate trade‑offs among performance, cost, and reuse impact using a multi‑criteria decision matrix, thus preserving the core benefits of RBSD while meeting performance objectives.
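The multi‑criteria decision matrix mentioned in the last item can be illustrated with a small weighted‑sum ranking. This is only a minimal sketch: the summary does not say how the authors score alternatives, so the `Candidate` fields, the min‑max normalization, and the weights are all assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Candidate:
    """A reusable component under consideration (all fields are hypothetical)."""
    name: str
    response_time_s: float  # predicted by the performance model; lower is better
    cost: float             # licence or integration cost; lower is better
    reuse_effort: float     # adaptation effort needed to reuse; lower is better


def normalize_lower_is_better(values):
    """Min-max scale so the smallest value scores 1.0 and the largest 0.0."""
    lo, hi = min(values), max(values)
    if hi == lo:
        return [1.0 for _ in values]
    return [(hi - v) / (hi - lo) for v in values]


def rank(candidates, weights):
    """Return (name, score) pairs sorted from best to worst weighted score."""
    perf = normalize_lower_is_better([c.response_time_s for c in candidates])
    cost = normalize_lower_is_better([c.cost for c in candidates])
    reuse = normalize_lower_is_better([c.reuse_effort for c in candidates])
    scores = [
        (c.name,
         weights["performance"] * perf[i]
         + weights["cost"] * cost[i]
         + weights["reuse"] * reuse[i])
        for i, c in enumerate(candidates)
    ]
    return sorted(scores, key=lambda pair: pair[1], reverse=True)


# Hypothetical alternatives for one component slot.
options = [
    Candidate("fast_but_costly", response_time_s=1.0, cost=10.0, reuse_effort=5.0),
    Candidate("slow_but_cheap", response_time_s=2.0, cost=5.0, reuse_effort=1.0),
]
ranking = rank(options, {"performance": 0.6, "cost": 0.2, "reuse": 0.2})
print(ranking)
```

Changing the weights shifts the ranking, which is the point of keeping the trade‑off explicit: a team that values low adaptation effort over raw speed would pick a different component from the same predicted metrics.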

Methodology Steps

- Component Profiling – Existing reusable libraries are profiled to obtain empirical performance data (average execution time, CPU/memory/I‑O usage).

- UML Annotation – The UML model of the target system is enriched with performance stereotypes, including SLA specifications for each interface.

- Model Conversion – An automated engine applies the predefined mapping rules, producing a quantitative performance model.

- Performance Evaluation – The quantitative model is solved; key metrics are extracted.

- Iterative Refinement – Designers modify the UML model (e.g., adjust thread pools, introduce caching, replace components) and repeat the cycle until performance targets are satisfied.
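The annotate, convert, evaluate, and refine cycle above can be sketched in a few lines. The sketch makes strong simplifying assumptions that are not taken from the paper: each annotated step becomes an independent M/M/c station in an open queueing network, every request visits each step once, and the only refinement is enlarging the thread pool of the busiest step. The class and function names are hypothetical, and the closed‑form Erlang C formula stands in for the solvers (PDQ, LQNS) named earlier.

```python
import math
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class AnnotatedStep:
    """One call in a sequence diagram with hypothetical performance annotations."""
    name: str
    service_time_s: float  # mean service demand per request
    servers: int = 1       # e.g. thread-pool size


def mmc_response_time(arrival_rate, service_time_s, servers):
    """Mean response time of an M/M/c station (Erlang C); inf if saturated."""
    offered = arrival_rate * service_time_s   # offered load in Erlangs
    rho = offered / servers                   # per-server utilization
    if rho >= 1.0:
        return math.inf
    tail = offered ** servers / math.factorial(servers)
    head = sum(offered ** k / math.factorial(k) for k in range(servers))
    p_wait = tail / ((1.0 - rho) * head + tail)  # probability a request queues
    wait = p_wait * service_time_s / (servers * (1.0 - rho))
    return wait + service_time_s


def evaluate(steps, arrival_rate):
    """Return (end-to-end response time, utilization per step name)."""
    total = sum(
        mmc_response_time(arrival_rate, s.service_time_s, s.servers) for s in steps
    )
    util = {s.name: arrival_rate * s.service_time_s / s.servers for s in steps}
    return total, util


def refine(steps, arrival_rate, sla_s, max_rounds=50):
    """Add one server to the busiest step per round until the SLA is met."""
    if sum(s.service_time_s for s in steps) >= sla_s:
        raise ValueError("SLA is not above total service time; more servers cannot help")
    steps = list(steps)
    for _ in range(max_rounds):
        total, util = evaluate(steps, arrival_rate)
        if total <= sla_s:
            return steps, total
        busiest = max(util, key=util.get)
        steps = [
            replace(s, servers=s.servers + 1) if s.name == busiest else s
            for s in steps
        ]
    raise RuntimeError("SLA not met within max_rounds")


# Hypothetical two-step design, 1.5 requests/s, 1-second SLA.
design = [AnnotatedStep("order", 0.2), AnnotatedStep("payment", 0.5)]
refined, total = refine(design, arrival_rate=1.5, sla_s=1.0)
print(refined, total)
```

A real transformation would also have to handle nested synchronous calls, which is why layered queueing networks are among the target formalisms; the flat network here only shows the shape of the loop.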

Case Studies

The authors validate the approach with two realistic scenarios:

- E‑commerce Order Processing – The original design violated a 2‑second response‑time SLA (average 2.8 s). Performance analysis identified the payment service as a CPU‑bound bottleneck. By increasing the payment service’s thread pool and adding a cache layer, the simulated response time dropped to 1.9 s, meeting the SLA.

- Real‑Time Data Streaming Pipeline – Initial throughput was limited to 10 k events/s due to a disk‑I/O bottleneck in the transformation stage. Replacing the storage backend with SSDs and re‑balancing the processing nodes raised throughput to 15 k events/s (a 50 % increase).

Both studies reported a reduction in average response time of roughly 30 % and a 20 % decrease in resource utilization, demonstrating that early performance modeling can substantially lower later tuning costs (estimated savings of 40 % in effort).
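Bottleneck findings like those in the case studies follow from a standard operational bound: when each resource is a single server, throughput cannot exceed the reciprocal of the largest per‑request service demand. The sketch below applies that bound to made‑up demands; the resource names and microsecond figures are hypothetical and are not measurements from the paper.

```python
def throughput_bound(demands_us):
    """Return (bottleneck resource, max events/s) from per-event demands in microseconds.

    Assumes one server per resource, so the resource with the largest
    demand saturates first and caps system throughput at 1 / demand.
    """
    bottleneck = max(demands_us, key=demands_us.get)
    return bottleneck, 1_000_000 / demands_us[bottleneck]


# Hypothetical per-event demands before and after a storage upgrade.
before = {"cpu": 60, "disk": 100, "network": 20}
after = {"cpu": 60, "disk": 40, "network": 20}

print(throughput_bound(before))
print(throughput_bound(after))
```

With these numbers the disk caps throughput first; once its demand drops, the CPU becomes the limiting resource, which illustrates why removing one bottleneck is usually followed by re‑evaluating the whole model.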

Implications and Limitations

The proposed UML‑based performance modeling framework offers a seamless bridge between design‑time reuse and runtime performance, enabling organizations to preserve the economic advantages of RBSD while delivering systems that meet stringent performance targets. However, the current mapping rules are optimized for traditional client‑server and batch processing patterns; extending the approach to micro‑services, serverless, or highly distributed cloud-native architectures will require additional research. Moreover, maintaining the accuracy of performance annotations demands continuous profiling and automated metadata management, which the authors identify as future work.

Conclusion

By embedding performance considerations directly into UML artifacts and providing an automated transformation‑analysis‑feedback loop, the paper delivers a practical, repeatable process for early performance assessment in reuse‑centric development. This integration not only mitigates the risk of late‑stage performance failures but also enhances overall software quality, productivity, and market competitiveness.
