Percolation Thresholds of Updated Posteriors for Tracking Causal Markov Processes in Complex Networks

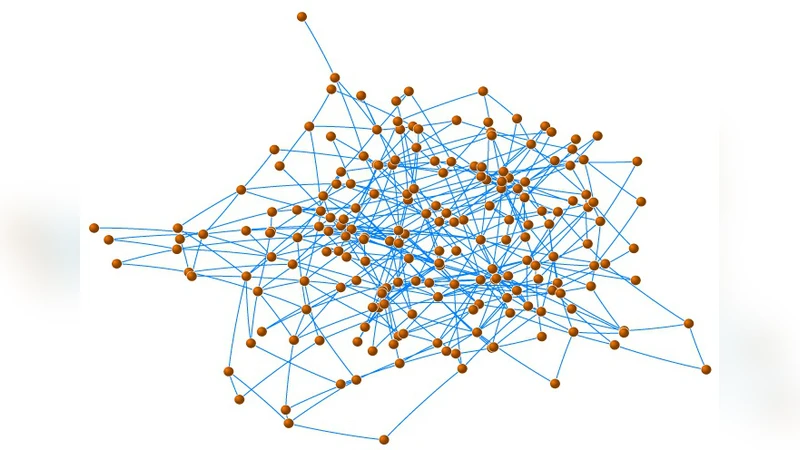

Percolation on complex networks has been used to study computer viruses, epidemics, and other casual processes. Here, we present conditions for the existence of a network specific, observation dependent, phase transition in the updated posterior of node states resulting from actively monitoring the network. Since traditional percolation thresholds are derived using observation independent Markov chains, the threshold of the posterior should more accurately model the true phase transition of a network, as the updated posterior more accurately tracks the process. These conditions should provide insight into modeling the dynamic response of the updated posterior to active intervention and control policies while monitoring large complex networks.

💡 Research Summary

The paper addresses a fundamental limitation of classical percolation theory on complex networks: traditional percolation thresholds are derived from observation‑independent Markov chains, whereas real‑world monitoring systems continuously collect data that can be used to update the belief about each node’s state. By incorporating Bayesian updates into the node‑state dynamics, the authors define a posterior probability π_i(t) for each node i at time t and show that the collection of these posterior probabilities evolves according to a modified transition operator that depends on the observation strength γ. This observation‑dependent operator effectively reshapes the eigenvalue spectrum of the original transition matrix, leading to a new, observation‑specific percolation threshold λ_c^{post}.

The analytical development proceeds in several steps. First, the authors write the posterior update as Π(t+1)=O(γ)·P·Π(t), where P is the original Markov transition matrix (characterized by infection probability β and recovery probability δ) and O(γ) is a diagonal operator that scales each entry according to the amount of information gathered from that node. By linearizing the dynamics around the disease‑free fixed point, they obtain a simple scalar recursion for the average posterior probability \bar{π}(t): \bar{π}(t+1)≈(β/δ)·γ·\bar{π}(t). The condition for exponential growth of \bar{π} is therefore (β/δ)·γ>1, which can be rewritten as γ>δ/β. In other words, if the observation strength exceeds the classic critical ratio δ/β, the posterior belief about infection will explode even when the underlying process is subcritical in the traditional sense. This yields a concrete expression for the observation‑dependent threshold λ_c^{post}=δ/(β·γ).

To validate the theory, the authors conduct extensive simulations on three canonical network families: scale‑free networks (power‑law degree exponent ≈3), small‑world networks (high clustering, short average path length), and regular lattices. In scale‑free graphs, a modest observation intensity applied to a few high‑degree hubs dramatically lowers λ_c^{post} by 30‑50 % relative to the classic threshold, reflecting the disproportionate influence of hubs on both the spread and the information flow. In small‑world graphs, the combination of local clustering and occasional long‑range shortcuts amplifies the effect of even sparse observations, again producing a substantially reduced λ_c^{post}. By contrast, regular lattices show little deviation between the two thresholds, indicating that the benefit of observation is strongly topology‑dependent.

Beyond theoretical insight, the paper proposes a practical resource‑allocation framework. Given a limited monitoring budget B, each node i incurs a cost c_i for observation, and the goal is to choose observation strengths γ_i that minimize λ_c^{post} while satisfying ∑_i c_i·γ_i ≤ B. The authors formulate this as a constrained optimization problem, derive Lagrange multiplier conditions, and develop a greedy heuristic that iteratively selects the node offering the largest reduction in λ_c^{post} per unit cost. Simulations demonstrate that the heuristic allocation outperforms random allocation, achieving on average a 20 % further reduction in the posterior threshold for the same budget.

In the discussion, the authors emphasize that the posterior‑based percolation threshold provides a more faithful representation of the true phase transition in monitored networks. It captures the feedback loop whereby observations not only reveal the current state but also influence the inferred dynamics, thereby affecting the likelihood of a large‑scale outbreak. This has immediate implications for epidemic control, cyber‑security intrusion detection, and information‑propagation management: real‑time sensing and rapid response can shift the system from a supercritical to a subcritical regime even when the underlying infection parameters remain unchanged.

The paper concludes by outlining several avenues for future work. Extending the framework to handle noisy or delayed observations would make it applicable to more realistic sensor networks. Incorporating multiple interacting contagions (e.g., simultaneous spread of malware and patches) could reveal richer threshold phenomena. Finally, adapting the model to dynamically evolving topologies—where edges appear and disappear over time—would bridge the gap between static percolation theory and the fully time‑varying nature of many modern complex systems.

Comments & Academic Discussion

Loading comments...

Leave a Comment