Efficient Implementation of the Generalized Tunstall Code Generation Algorithm

A method is presented for constructing a Tunstall code that is linear time in the number of output items. This is an improvement on the state of the art for non-Bernoulli sources, including Markov sources, which require a (suboptimal) generalization of Tunstall’s algorithm proposed by Savari and analytically examined by Tabus and Rissanen. In general, if n is the total number of output leaves across all Tunstall trees, s is the number of trees (states), and D is the number of leaves of each internal node, then this method takes O((1+(log s)/D) n) time and O(n) space.

💡 Research Summary

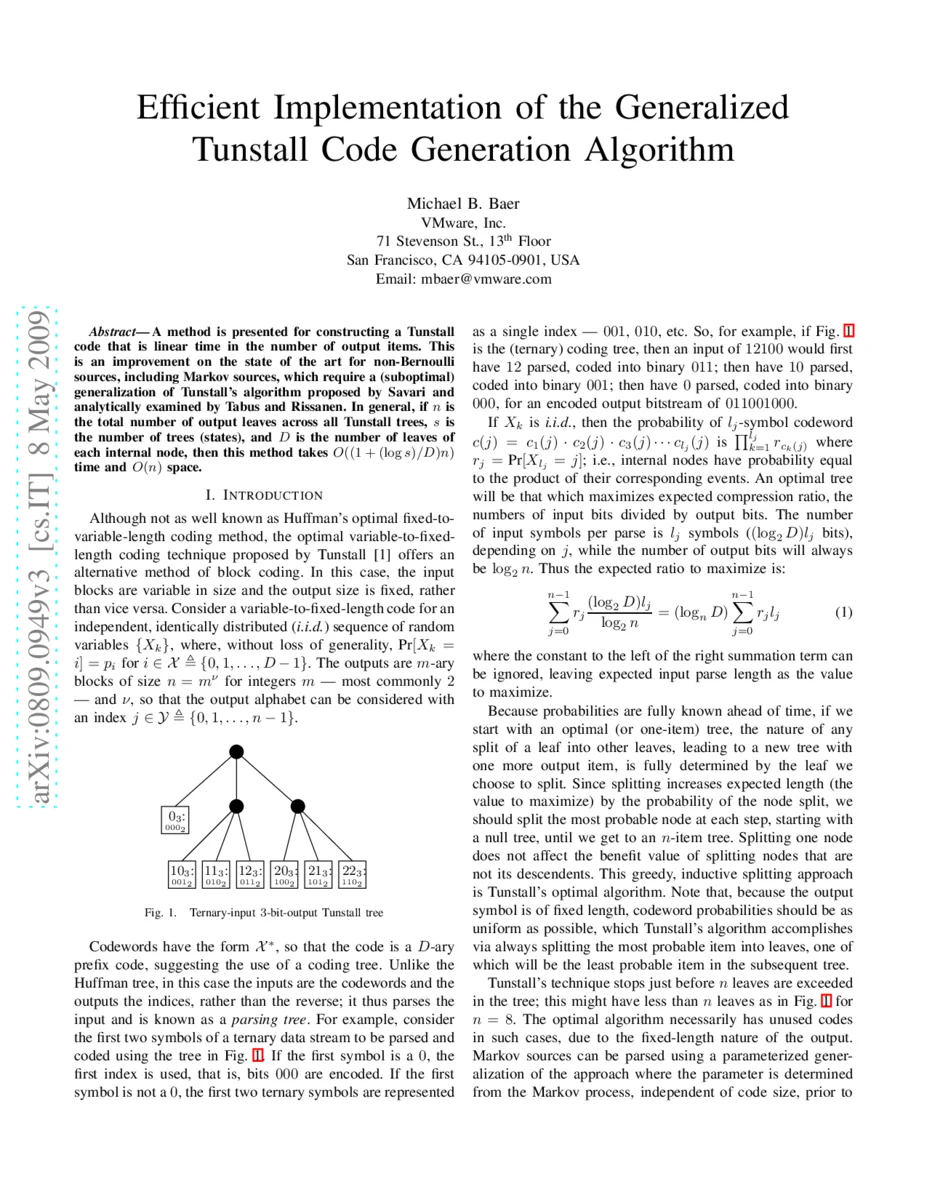

The paper addresses the computational inefficiency of constructing Tunstall codes, which are variable‑to‑fixed length coding schemes offering random‑access and synchronization benefits over Huffman codes. Traditional implementations of Tunstall’s greedy algorithm require either O(n²) time with a naïve approach or O(n log n) time when a single priority queue is used, where n denotes the total number of output leaves. This becomes a bottleneck for large‑scale or real‑time applications, especially for non‑binary alphabets and sources with memory (e.g., Markov processes).

The authors first revisit the binary (Bernoulli) case and introduce a two‑queue algorithm. When a leaf with probability q is split into two children with probabilities a·q and (1‑a)·q, each child’s probability is guaranteed not to exceed q. Consequently, left‑child nodes can be placed in a “left queue” and right‑child nodes in a “right queue” while preserving non‑increasing order within each queue. The most probable leaf at any iteration is simply the larger of the two queue heads, which can be retrieved in O(1) time. Starting from the root, the algorithm repeatedly extracts the highest‑probability leaf, splits it, and enqueues its children, stopping when the desired number of leaves n is reached. This yields a linear‑time O(n) construction for binary sources.

To handle general D‑ary alphabets and sources with s states (e.g., Markov chains), the paper extends the idea to a set of regular queues Qₜ indexed by every possible combination of conditional probability, input state, and node state. The number of such combinations is denoted g, bounded by g ≤ D·s². Each regular queue stores nodes of a particular type in non‑increasing order of probability, just as in the binary case. A separate priority queue P maintains pointers to the non‑empty regular queues, ordered by a monotone decreasing function fⱼ,ₖ(p) of the node’s probability. For i.i.d. sources the optimal choice is f = –ln p; for other models any decreasing f yields a sub‑optimal but still useful code.

The generalized algorithm proceeds as follows:

- Initialise all regular queues and the priority queue.

- Insert the root node (probability 1) into the appropriate regular queues and push the non‑empty queues into P.

- Repeatedly extract the regular queue with the smallest f‑value (the head of P), remove its head node, split it into D children, and distribute those children into their respective regular queues.

- Update P by re‑inserting any queues that became non‑empty and adjusting the priority of the queue whose head changed.

- Increment the leaf count by D‑1 and stop when the total number of leaves reaches n.

Complexity analysis shows that the number of splits is ⌈(n‑1)/(D‑1)⌉. Each split incurs O(D) time for inserting D children into regular queues and O(log g) amortised time for one deletion and one insertion in the priority queue. Hence the total running time is O((1 + (log g)/D)·n). Since g ≤ D·s², this can be expressed as O((1 + (log s)/D)·n). The algorithm uses O(n) space for the tree itself, O(n) for the regular queues (their total size never exceeds the number of nodes), and O(g) for the priority queue, which is also O(n) under the realistic assumption g log g ≤ O(n).

The paper provides illustrative examples: a binary Bernoulli source with probability 0.7 and a two‑state Markov source with three symbols. In the binary example, the two‑queue method reproduces the optimal 4‑leaf Tunstall tree in linear time. In the Markov example, g = 4 regular queues are required, and the algorithm correctly builds the optimal set of parsing trees while respecting state transitions.

Discussion points include:

- The method’s linear‑time guarantee holds for any D‑ary alphabet and any finite‑state source, making it broadly applicable.

- The choice of f influences optimality; –ln p yields optimal codes for i.i.d. sources, while other decreasing functions provide practical sub‑optimal solutions for more complex sources.

- When g becomes large (e.g., many states or highly non‑uniform probability sets), the log g factor may dominate, but using a Fibonacci heap or other constant‑time priority‑queue variants can mitigate this.

- Memory usage remains linear, which is advantageous for embedded or streaming environments.

In conclusion, the authors deliver a practical, theoretically sound algorithm that reduces Tunstall code construction from super‑linear to linear time while preserving optimality for i.i.d. sources and offering a systematic framework for sub‑optimal coding of Markov and other memory‑bearing sources. This advancement removes a major obstacle to deploying Tunstall codes in high‑throughput compression, real‑time communication, and storage systems.

Comments & Academic Discussion

Loading comments...

Leave a Comment