Comparing Measures of Sparsity

Sparsity of representations of signals has been shown to be a key concept of fundamental importance in fields such as blind source separation, compression, sampling and signal analysis. The aim of this paper is to compare several commonly-used sparsity measures based on intuitive attributes. Intuitively, a sparse representation is one in which a small number of coefficients contain a large proportion of the energy. In this paper six properties are discussed (Robin Hood, Scaling, Rising Tide, Cloning, Bill Gates and Babies), each of which a sparsity measure should have. The main contributions of this paper are the proofs and the associated summary table, which classify commonly-used sparsity measures based on whether or not they satisfy these six propositions. Only one of these measures satisfies all six: the Gini Index.

💡 Research Summary

The paper “Comparing Measures of Sparsity” tackles a fundamental problem in signal processing and data analysis: how to quantify the sparsity of a representation in a way that aligns with intuitive expectations. Sparsity, loosely defined as a situation where a few coefficients carry most of the signal’s energy, underpins many modern techniques such as blind source separation, compression, compressed sensing, and feature extraction. Numerous sparsity metrics have been proposed, most notably the ℓ₀ “norm”, the ℓ₁ norm, entropy‑based measures, and fractional ℓₚ norms (0 < p < 1). The authors argue that these metrics do not share a unified set of desirable properties, which leads to inconsistent or even contradictory assessments in practical scenarios.

To address this gap, the authors introduce six axiomatic properties that any reasonable sparsity measure should satisfy:

- Robin Hood (Redistribution) – Transferring a small amount of energy from a large coefficient to a smaller one should reduce sparsity.

- Scaling Invariance – Multiplying all coefficients by a positive constant must leave the sparsity value unchanged.

- Rising Tide – Adding the same constant to every coefficient should lower sparsity, reflecting the intuition that a uniform uplift dilutes concentration.

- Cloning (Replication) – Duplicating the entire vector (concatenating two identical copies) should not affect the sparsity score.

- Bill Gates (One Very Large Coefficient) – As a single coefficient grows without bound, sparsity should increase, capturing the idea that one individual becoming enormously rich makes the wealth distribution more concentrated.

- Babies (Zero Coefficients) – Appending coefficients that are zero should increase sparsity, because the same total energy is then held by a smaller fraction of the coefficients.
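The six properties can be read as operations on a coefficient vector. The sketch below (Python with NumPy; every function name is hypothetical) implements each operation and a `check_properties` helper that tests whether a measure `S` moves in the expected direction on one non-constant test vector. It assumes the convention that a larger value of `S` means sparser, a Robin Hood transfer size satisfying 0 < α < (cᵢ − cⱼ)/2, and an arbitrary "large enough" β for Bill Gates. A passing check on one vector is evidence, not a proof.

```python
import numpy as np

# Hypothetical helpers: the six operations as transformations of a
# non-negative coefficient vector c.

def robin_hood(c, i, j, alpha):
    # Move alpha from the larger c[i] to the smaller c[j] (assumed condition:
    # 0 < alpha < (c[i] - c[j]) / 2, so the pair keeps its order).
    c = np.array(c, dtype=float)
    if not (c[i] > c[j] and 0 < alpha < (c[i] - c[j]) / 2):
        raise ValueError("invalid Robin Hood transfer")
    c[i] -= alpha
    c[j] += alpha
    return c

def rising_tide(c, alpha):
    return np.asarray(c, dtype=float) + alpha           # same constant everywhere

def clone(c):
    c = np.asarray(c, dtype=float)
    return np.concatenate([c, c])                        # c followed by a copy of c

def bill_gates(c, i, beta):
    c = np.array(c, dtype=float)
    c[i] += beta                                         # one coefficient grows
    return c

def babies(c):
    return np.append(np.asarray(c, dtype=float), 0.0)    # append one zero

def check_properties(S, c, tol=1e-12):
    # S: measure with "larger = sparser"; c: a non-constant test vector.
    c = np.asarray(c, dtype=float)
    i, j = int(np.argmax(c)), int(np.argmin(c))
    alpha = (c[i] - c[j]) / 4                            # a valid transfer size
    big = 1e6 * (1.0 + c.sum())                          # assumed "large enough" beta
    s = S(c)
    return {
        "Robin Hood": bool(S(robin_hood(c, i, j, alpha)) < s - tol),
        "Scaling": bool(abs(S(3.0 * c) - s) <= tol),
        "Rising Tide": bool(S(rising_tide(c, 1.0)) < s - tol),
        "Cloning": bool(abs(S(clone(c)) - s) <= tol),
        "Bill Gates": bool(S(bill_gates(c, i, 2 * big)) > S(bill_gates(c, i, big)) + tol),
        "Babies": bool(S(babies(c)) > s + tol),
    }

if __name__ == "__main__":
    neg_l1 = lambda v: -np.sum(np.abs(v))                # negated l1 norm
    print(check_properties(neg_l1, [0.0, 1.0, 2.0, 5.0]))
    # only "Rising Tide" is True for this measure and vector
```

With the negated ℓ₁ norm as `S`, the Robin Hood transfer leaves the total unchanged, scaling and cloning change the value, and the appended zero adds nothing, so only the Rising Tide check passes on this vector.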

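Each property is formally defined, illustrated with simple numerical examples, and justified from both a mathematical and an application‑driven perspective. The paper then systematically evaluates a range of widely used sparsity measures against these criteria, negating a measure where necessary so that a larger value always means a sparser vector. Representative results include: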

- ℓ₀ “norm” (count of non‑zero entries) satisfies Scaling, but it is blind to any redistribution among non‑zero entries, so it fails Robin Hood; it also fails Rising Tide, Cloning, Bill Gates, and Babies.

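- ℓ₁ norm (sum of absolute values) is left unchanged by a Robin Hood transfer, which preserves the total, and it is not scale‑invariant, since ‖αc‖₁ = α‖c‖₁. It also violates Cloning (the sum doubles when the vector is duplicated), Bill Gates (growing one coefficient increases the norm, so the vector is scored as less sparse), and Babies (appending zeros leaves the sum unchanged); of the six properties it satisfies only Rising Tide.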

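- Entropy‑based measures (negated Shannon entropy of normalized absolute values) meet Scaling and Robin Hood, since redistributing toward equality raises the entropy and hence lowers sparsity. They break Cloning (duplicating the vector raises the entropy by exactly log 2) and Babies (a zero entry contributes 0 · log 0 = 0, so appending zeros leaves the entropy unchanged).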

- Fractional ℓₚ norms (0 < p < 1) satisfy Robin Hood, because t ↦ tᵖ is strictly concave, and Rising Tide, but they are not scale‑invariant (the sum of |cᵢ|ᵖ scales by αᵖ). They also fail Cloning, Bill Gates, and Babies, the last because 0ᵖ = 0, so appended zeros leave the value unchanged.

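- Spectral Spread (variance of the coefficient distribution) does respect Robin Hood, since a transfer toward equality lowers the variance, but it fails Scaling (the variance grows with the square of the scale factor), Rising Tide (a uniform shift leaves it unchanged), and Babies (appending a zero can lower it), making it unsuitable as a general sparsity index.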

For each case, the authors provide rigorous proofs or counter‑examples, demonstrating precisely where the metric departs from the axioms. The analysis reveals that none of the traditional measures simultaneously fulfills all six requirements.
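Counter-examples of this kind are easy to reproduce numerically. The sketch below defines several baseline measures in Python with NumPy, each signed so that a larger value means sparser (our convention for the illustration; the function names are hypothetical and `p = 0.5` is an arbitrary choice), and exhibits one failed property for each on a small test vector.

```python
import numpy as np

# Hypothetical baseline measures, each signed so that LARGER means SPARSER.

def l0(c):
    return -np.count_nonzero(c)                  # fewer non-zeros = sparser

def neg_l1(c):
    return -np.sum(np.abs(c))                    # negated l1 norm

def neg_lp(c, p=0.5):
    return -np.sum(np.abs(c) ** p)               # negated sum of |c_i|^p, 0 < p < 1

def neg_entropy(c):
    q = np.abs(c) / np.sum(np.abs(c))            # normalized magnitudes
    q = q[q > 0]                                 # convention: 0 * log 0 = 0
    return np.sum(q * np.log(q))                 # minus the Shannon entropy

def variance(c):
    return np.var(c)                             # population variance (ddof=0)

c = np.array([1.0, 2.0, 6.0, 0.0])

# l0 ignores a Robin Hood transfer of 1.0 from 6.0 to 1.0 (both stay non-zero).
print(l0(c), l0(np.array([2.0, 2.0, 5.0, 0.0])))                        # -3 -3
# The l1 norm is not scale invariant: doubling c doubles it.
print(neg_l1(c), neg_l1(2 * c))                                         # -9.0 -18.0
# Cloning lowers the negated entropy by exactly log 2.
print(np.isclose(neg_entropy(np.concatenate([c, c])), neg_entropy(c) - np.log(2)))  # True
# Appending a zero leaves the l_p sum unchanged, so Babies fails.
print(np.isclose(neg_lp(np.append(c, 0.0)), neg_lp(c)))                 # True
# A uniform shift leaves the variance unchanged, so Rising Tide fails.
print(np.isclose(variance(c + 1.0), variance(c)))                       # True
```

Each line isolates a single property, which mirrors how one well-chosen counter-example is enough to show that a measure does not satisfy a property in general.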

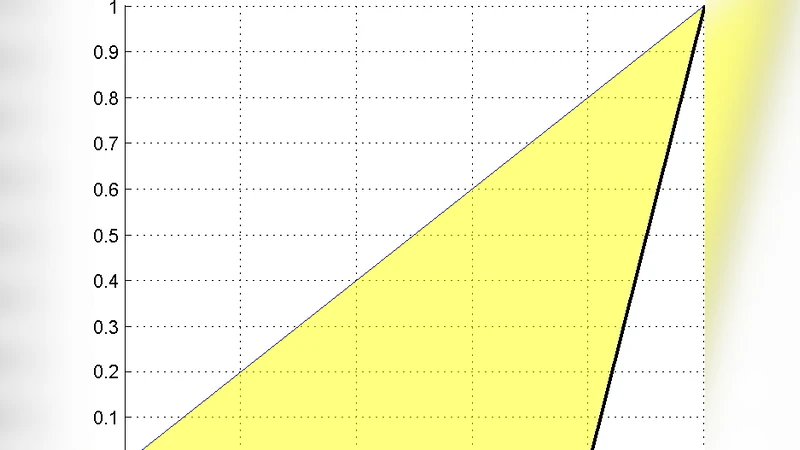

The centerpiece of the study is the Gini Index, originally devised to quantify income inequality. When applied to the absolute values of a coefficient vector, the Gini Index captures the relative disparity among entries. The authors prove that the Gini Index satisfies every axiom:
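A minimal sketch of the Gini Index of a coefficient vector, in Python with NumPy. We assume the sorted-coefficient form 1 − 2 Σₖ (c₍ₖ₎/‖c‖₁)(N − k + ½)/N, with the magnitudes sorted in ascending order. The hypothetical `gini_pairwise` computes the same quantity as the sum of all pairwise absolute differences divided by 2N‖c‖₁, which makes a useful cross-check.

```python
import numpy as np

def gini(c):
    # Assumed sorted form: 1 - 2 * sum_k (c_(k) / ||c||_1) * (N - k + 1/2) / N,
    # where c_(1) <= ... <= c_(N) are the sorted magnitudes.
    x = np.sort(np.abs(np.asarray(c, dtype=float)))
    n = x.size
    k = np.arange(1, n + 1)
    return 1.0 - 2.0 * np.sum((x / x.sum()) * (n - k + 0.5) / n)

def gini_pairwise(c):
    # Same value: sum of all pairwise |c_i - c_j| divided by 2 * N * ||c||_1.
    x = np.abs(np.asarray(c, dtype=float))
    return np.abs(x[:, None] - x[None, :]).sum() / (2 * x.size * x.sum())

print(gini([1, 1, 1, 1]))   # 0.0  (energy spread evenly)
print(gini([0, 0, 0, 1]))   # 0.75 (all energy in one of four coefficients)
```

The index is 0 when all coefficients are equal and reaches its maximum, 1 − 1/N, when a single coefficient is non-zero.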

- Robin Hood: A redistribution from a larger to a smaller coefficient reduces the Gini coefficient.

- Scaling: Multiplying all entries by a positive constant leaves the Gini unchanged because it is a ratio of the sum of pairwise absolute differences to the total sum, and both scale by the same factor.

- Rising Tide: Adding a constant to every entry reduces the Gini (unless all entries are already equal), because the pairwise differences in the numerator are unchanged while the total sum in the denominator grows.

- Cloning: Concatenating a vector with itself multiplies the numerator and the denominator by the same factor, leaving the ratio invariant.

- Bill Gates: As one coefficient grows without bound, the Gini increases toward its maximum value 1 − 1/N, reflecting the growing concentration of energy in that single entry.

- Babies: Appending zero entries raises the Gini, since it enlarges the disparity between the bulk of the mass and the empty coefficients.
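These six claims can be spot-checked numerically. The sketch below (Python with NumPy) redefines an assumed sorted-form `gini` so that it runs on its own, applies each operation to one arbitrary test vector, and confirms that the Gini moves in the required direction. It illustrates the properties on a single vector and is not a substitute for the proofs.

```python
import numpy as np

def gini(c):
    # Sorted-coefficient form of the Gini index (assumed definition).
    x = np.sort(np.abs(np.asarray(c, dtype=float)))
    n = x.size
    k = np.arange(1, n + 1)
    return 1.0 - 2.0 * np.sum((x / x.sum()) * (n - k + 0.5) / n)

c = np.array([0.5, 1.0, 2.0, 6.0])
g = gini(c)

robin = c.copy()
robin[3] -= 1.0   # take 1.0 from the largest coefficient...
robin[0] += 1.0   # ...and give it to the smallest

checks = {
    "Robin Hood":  gini(robin) < g,                                  # must decrease
    "Scaling":     np.isclose(gini(10.0 * c), g),                    # must not change
    "Rising Tide": gini(c + 1.0) < g,                                # must decrease
    "Cloning":     np.isclose(gini(np.concatenate([c, c])), g),      # must not change
    "Bill Gates":  gini(c + [0, 0, 0, 200.0]) > gini(c + [0, 0, 0, 100.0]),  # must increase
    "Babies":      gini(np.append(c, 0.0)) > g,                      # must increase
}
print(all(checks.values()))   # True
```

For this vector the Gini is 35/76 ≈ 0.46; the transfer lowers it to 25/76, while appending a zero raises it to 54/95.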

Empirical illustrations on synthetic and real‑world signals (image patches, audio frames, and mixed source recordings) confirm that the Gini Index yields consistent rankings of sparsity across diverse transformations, whereas the other metrics often produce contradictory orderings.

In the concluding discussion, the authors advocate for the adoption of the Gini Index as the default sparsity metric in research and engineering practice. They argue that the six‑axiom framework not only validates the Gini’s superiority but also provides a robust benchmark for future metric development. By aligning mathematical rigor with intuitive expectations, the paper bridges a critical gap between theory and application, offering a clear path toward more reliable sparsity‑driven algorithms in compression, source separation, and beyond.