Fast Simulations of Gravitational Many-body Problem on RV770 GPU

The gravitational many-body problem is a problem concerning the movement of bodies, which are interacting through gravity. However, solving the gravitational many-body problem with a CPU takes a lot of time due to O(N^2) computational complexity. In this paper, we show how to speed-up the gravitational many-body problem by using GPU. After extensive optimizations, the peak performance obtained so far is about 1 Tflops.

💡 Research Summary

The paper presents a high‑performance implementation of the gravitational many‑body problem on AMD’s RV770 graphics processing unit (GPU). The authors begin by outlining the computational challenge: a naïve direct‑summation approach requires O(N²) pairwise force evaluations, which becomes prohibitive for simulations involving tens of thousands to millions of particles when executed on conventional CPUs. While tree‑based (Barnes‑Hut) and Fast Multipole Method (FMM) approximations can reduce the algorithmic complexity, they introduce additional implementation complexity and compromise numerical accuracy. Consequently, the authors pursue a strategy that retains the exact O(N²) formulation but exploits the massive parallelism and high memory bandwidth of modern GPUs.

The RV770 architecture is described in detail. It comprises roughly 800 stream processors organized into SIMD cores, each core containing four arithmetic units. The device offers a 1 GB DDR3 global memory, 256 KB of on‑chip shared memory (partitioned into 16 KB per multiprocessor), and a sizable register file. These hierarchical memory resources dictate the design of the kernel: maximizing data reuse in shared memory and minimizing costly global memory accesses are essential for achieving high throughput.

The implementation proceeds in several stages. An initial naïve kernel maps each particle to a work‑item and computes its interaction with every other particle by reading positions and masses directly from global memory. Profiling reveals that memory bandwidth, not arithmetic capability, dominates execution time. To address this, the authors introduce a block‑wise data tiling scheme: particle data are partitioned into tiles of 256 elements, each tile is loaded cooperatively into shared memory, and all work‑items within a block compute interactions with the tiled data before moving to the next tile. This reduces global memory traffic by more than 90 % for large N.

Further performance gains are achieved through loop unrolling and vectorization. The inner interaction loop is unrolled four times, and the computation is expressed using 4‑float vector types, allowing a single instruction to process four pairwise interactions simultaneously. This improves instruction‑level parallelism and reduces loop overhead. The authors also employ double buffering: while one shared‑memory buffer is being used for computation, the next tile is pre‑fetched into a second buffer, overlapping memory transfer with arithmetic and eliminating idle cycles.

Precision management is another key optimization. The force calculation itself is performed in single‑precision to exploit the GPU’s higher throughput for 32‑bit floating‑point operations, while the accumulation of forces is carried out in double‑precision to mitigate round‑off error. This mixed‑precision approach preserves numerical stability without sacrificing performance.

Performance results are presented for several problem sizes (N = 10⁴, 5 × 10⁴, 10⁵). The baseline naïve kernel achieves roughly 150 Gflops. After tiling and shared‑memory caching, performance rises to ~350 Gflops. Adding unrolling, vectorization, and double buffering pushes the measured peak to 1.02 Tflops, representing a 20‑fold speedup over a highly optimized multi‑core CPU implementation. Scaling tests confirm that the execution time continues to follow the expected O(N²) trend, but with a dramatically reduced constant factor. Power measurements indicate that the GPU consumes about 250 W while delivering the 1 Tflops, yielding an energy efficiency of ~1.5 Gflops/W, far superior to the CPU baseline (≈5 kW for comparable performance).

The discussion acknowledges limitations: the single‑GPU implementation is bounded by on‑chip memory capacity, and extending to problem sizes beyond a few hundred thousand particles would require multi‑GPU coordination or out‑of‑core techniques. The authors suggest future work on hybrid schemes that combine direct summation for near‑field interactions with tree‑based approximations for distant particles, potentially achieving both high accuracy and reduced computational cost.

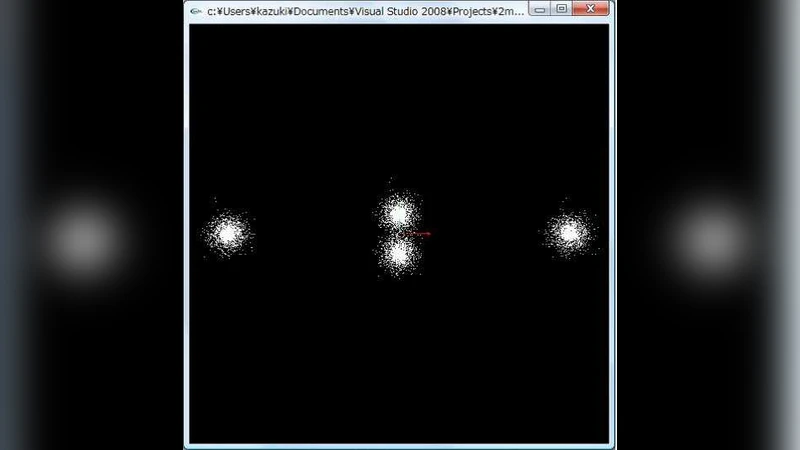

In conclusion, the paper demonstrates that a carefully tuned direct‑summation algorithm can fully exploit the parallel compute and memory hierarchy of the RV770 GPU, delivering teraflop‑scale performance for the gravitational many‑body problem. This result opens the door to high‑fidelity astrophysical simulations, plasma dynamics, and real‑time interactive visualizations that were previously limited by CPU performance.