📝 Original Info

- Title: Non-Negative Matrix Factorization, Convexity and Isometry

- ArXiv ID: 0810.2311

- Date: 2009-04-22

- Authors: Nikolaos Vasiloglou, Alexander G. Gray, David V. Anderson

📝 Abstract

In this paper we explore avenues for improving the reliability of dimensionality reduction methods such as Non-Negative Matrix Factorization (NMF) as interpretive exploratory data analysis tools. We first explore the difficulties of the optimization problem underlying NMF, showing for the first time that non-trivial NMF solutions always exist and that the optimization problem is actually convex, by using the theory of Completely Positive Factorization. We subsequently explore four novel approaches to finding globally-optimal NMF solutions using various ideas from convex optimization. We then develop a new method, isometric NMF (isoNMF), which preserves non-negativity while also providing an isometric embedding, simultaneously achieving two properties which are helpful for interpretation. Though it results in a more difficult optimization problem, we show experimentally that the resulting method is scalable and even achieves more compact spectra than standard NMF.


📄 Full Content

arXiv:0810.2311v2 [cs.AI] 22 Apr 2009

Non-Negative Matrix Factorization, Convexity and Isometry ∗

Nikolaos Vasiloglou

Alexander G. Gray

David V. Anderson†


1 Introduction.

In this paper we explore avenues for improving the reliability of dimensionality reduction methods such as Non-Negative Matrix Factorization (NMF) [21] as interpretive exploratory data analysis tools, to make them reliable enough for, say, drawing scientific conclusions from astronomical data.

NMF is a dimensionality reduction method of much recent interest which, for some common kinds of data, can yield results that are more meaningful than those returned by classical methods such as Principal Component Analysis (PCA), though it will not in general yield better dimensionality reduction than PCA, as we illustrate later. For data of significant interest such as images (pixel intensities), text (presence/absence of words) or astronomical spectra (magnitude in various frequencies), where the data values are non-negative, NMF can produce components that can themselves be interpreted as objects of the same type as the data, which are added together to produce the observed data. In other words, the components are more likely to be sensible images, documents or spectra. This makes NMF a potentially very useful interpretive data mining tool for such data.

∗Supported by Google Grants

†Georgia Institute of Technology
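To make the factorization concrete, here is a minimal sketch that fits W ≈ VH with V ≥ 0 and H ≥ 0 using the well-known Lee–Seung multiplicative updates for the squared Frobenius loss. This is an illustrative assumption on our part: it is a standard local method of the kind discussed below, not the paper's algorithm. We assume W is n×m, V is n×k and H is k×m, following the W = VH form used in Section 1.1; the helper names (`matmul`, `transpose`, `frob_error`, `nmf_multiplicative`) are hypothetical.

```python
import random


def matmul(A, B):
    """Plain-Python product of two matrices stored as lists of rows."""
    cols = list(zip(*B))
    return [[sum(a * b for a, b in zip(row, col)) for col in cols] for row in A]


def transpose(A):
    return [list(col) for col in zip(*A)]


def frob_error(W, V, H):
    """Squared Frobenius norm ||W - VH||_F^2."""
    P = matmul(V, H)
    return sum((w - p) ** 2 for rw, rp in zip(W, P) for w, p in zip(rw, rp))


def nmf_multiplicative(W, k, iters=200, seed=0, eps=1e-12):
    """Approximate non-negative W (n x m) by V (n x k) times H (k x m).

    Lee-Seung multiplicative updates keep V and H non-negative and do not
    increase the Frobenius error, but only reach a local optimum."""
    rng = random.Random(seed)
    n, m = len(W), len(W[0])
    V = [[rng.random() + 0.1 for _ in range(k)] for _ in range(n)]
    H = [[rng.random() + 0.1 for _ in range(m)] for _ in range(k)]
    history = [frob_error(W, V, H)]
    for _ in range(iters):
        Vt = transpose(V)
        num = matmul(Vt, W)                 # V^T W
        den = matmul(matmul(Vt, V), H)      # V^T V H
        H = [[h * a / (b + eps) for h, a, b in zip(rh, ra, rb)]
             for rh, ra, rb in zip(H, num, den)]
        Ht = transpose(H)
        num = matmul(W, Ht)                 # W H^T
        den = matmul(V, matmul(H, Ht))      # V H H^T
        V = [[v * a / (b + eps) for v, a, b in zip(rv, ra, rb)]
             for rv, ra, rb in zip(V, num, den)]
        history.append(frob_error(W, V, H))
    return V, H, history


# Toy non-negative data with two additive "parts" (rank 2).
W = [[1.0, 2.0, 0.0], [2.0, 4.0, 0.0], [0.0, 1.0, 3.0], [0.0, 2.0, 6.0]]
V, H, hist = nmf_multiplicative(W, k=2)
print(f"reconstruction error: {hist[0]:.4f} -> {hist[-1]:.4f}")
```

The updates are multiplicative, so entries that start positive can never become negative; the price is that nothing guarantees the point reached is a global optimum.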

A second important interpretive use of dimensionality reduction is plotting the data points in the resulting low-dimensional space (generally 2-D or 3-D). Multidimensional scaling methods and recent nonlinear manifold learning methods focus on this use, typically enforcing that the distances between points in the original high-dimensional space are preserved in the low-dimensional space (isometry constraints). As a result, apparent relationships in the low-dimensional plot (indicating, for example, cluster structure or outliers) correspond to actual relationships. A plot of the points using components found by standard NMF methods will in general be misleading in this regard, as existing methods do not enforce such a constraint.
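The isometry requirement can be checked directly by comparing pairwise distances before and after the embedding. The sketch below checks all pairs for simplicity; methods such as MVU only constrain distances between nearest neighbors, so this is a stricter, assumed criterion. The function names are hypothetical.

```python
import math
from itertools import combinations


def pairwise_distances(points):
    """Euclidean distance for every unordered pair (i, j) with i < j."""
    return {(i, j): math.dist(points[i], points[j])
            for i, j in combinations(range(len(points)), 2)}


def max_distance_distortion(X, Y):
    """Largest absolute change in pairwise distance between the original
    points X and their low-dimensional images Y (0.0 means isometric)."""
    dx, dy = pairwise_distances(X), pairwise_distances(Y)
    return max(abs(dx[p] - dy[p]) for p in dx)


# Four points in 3-D that actually lie in the plane z = 0.
X = [(0.0, 0.0, 0.0), (3.0, 0.0, 0.0), (0.0, 4.0, 0.0), (3.0, 4.0, 0.0)]
Y_iso = [(x, y) for x, y, _ in X]   # dropping z keeps every distance
Y_1d = [(x,) for x, _, _ in X]      # keeping only x collapses pairs
print(max_distance_distortion(X, Y_iso), max_distance_distortion(X, Y_1d))
```

In the 1-D embedding, points 0 and 2 land on top of each other although they are 4 units apart, exactly the kind of false relationship a low-dimensional plot can suggest.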

Another major reason that NMF might not yield reliable interpretive results is that current optimization methods [18, 22] might not find the actual optimum, leading to poor performance for both of the interpretive uses above. This is because the NMF objective function is not convex, and so unconstrained optimizers are used. Thus, obtaining a reliably interpretable NMF method requires understanding its optimization problem more deeply, especially if we are going to create an even more difficult optimization problem by adding isometry constraints.
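A two-by-two example shows why the objective is not convex in the factors (V, H). Both (I, I) and (P, P), with P the permutation swapping two coordinates, factor the identity exactly, yet their midpoint does not. Convexity would require the midpoint's error to be at most the average of the endpoint errors, which is zero. This concerns the standard parameterization only; the convex formulation mentioned in Section 1.1 is over a different variable. The helper names are hypothetical.

```python
def frob_error(W, V, H):
    """Squared Frobenius norm ||W - VH||_F^2 for small list-of-rows matrices."""
    k = len(H)
    P = [[sum(V[i][t] * H[t][j] for t in range(k)) for j in range(len(H[0]))]
         for i in range(len(V))]
    return sum((W[i][j] - P[i][j]) ** 2
               for i in range(len(W)) for j in range(len(W[0])))


def midpoint(A, B):
    """Entrywise average of two matrices."""
    return [[(a + b) / 2 for a, b in zip(ra, rb)] for ra, rb in zip(A, B)]


W = [[1.0, 0.0], [0.0, 1.0]]
I = [[1.0, 0.0], [0.0, 1.0]]
P = [[0.0, 1.0], [1.0, 0.0]]     # P @ P = I, so (P, P) is also exact

f_first = frob_error(W, I, I)    # global optimum, error 0
f_second = frob_error(W, P, P)   # another global optimum, error 0
M = midpoint(I, P)               # every entry 0.5
f_mid = frob_error(W, M, M)      # M @ M has every entry 0.5
print(f_first, f_second, f_mid)
```

Every permutation (and positive rescaling) of the components gives another optimum, so the solution set is never a single convex region.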

1.1 Paper Organization.

In Section 2 we first study the optimization problem of standard NMF at a fundamental level. We relate the NMF problem to the theory of Completely Positive Factorization for the first time and, using that theory, show that every non-negative matrix has a non-trivial exact non-negative matrix factorization of the form W=VH, a basic fact which had not been shown until now. Using this theory we also show that a convex formulation of the NMF optimization problem exists, though no practical solution method for this formulation exists yet.
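Recall that a symmetric matrix A is completely positive when A = BBᵀ for some entrywise non-negative B. The link to NMF can be seen by stacking B = [V; Hᵀ]. The top-right block of BBᵀ is then exactly VH. The sketch below only illustrates this direction of the connection under our own notation; it is not the paper's existence proof, and the helper names are hypothetical.

```python
def matmul(A, B):
    cols = list(zip(*B))
    return [[sum(a * b for a, b in zip(row, col)) for col in cols] for row in A]


def transpose(A):
    return [list(col) for col in zip(*A)]


def cp_embedding(V, H):
    """Stack B = [V; H^T], shape (n+m) x k, and return (B, A = B B^T).

    If V and H are entrywise non-negative, A is completely positive by
    construction, and its top-right n x m block equals V H."""
    B = V + transpose(H)          # list concatenation stacks the rows
    return B, matmul(B, transpose(B))


V = [[1.0, 0.0], [2.0, 1.0]]              # n = 2, k = 2
H = [[1.0, 0.0, 3.0], [0.0, 2.0, 1.0]]    # k = 2, m = 3
B, A = cp_embedding(V, H)
n = len(V)
top_right = [row[n:] for row in A[:n]]    # the n x m off-diagonal block
print(top_right)
```

Finding an NMF of W is therefore the same as finding a completely positive matrix whose off-diagonal block equals W, with a factor B of the desired inner dimension.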

We then explore four novel formulations of the NMF optimization problem aimed at reaching a global optimum: convex relaxation using the positive semidefinite cone, approximating the semidefinite cone with smaller ones, convex multi-objective optimization, and generalized geometric programming. We highlight the difficulties encountered by each approach.
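One way to read the first formulation, in our own notation and not necessarily matching Section 2 exactly, uses N = n + m, writes A₁₂ for the top-right n×m block of A, and uses the standard inclusions between the completely positive cone, the doubly non-negative cone and the positive semidefinite cone:

```latex
% Exact NMF with inner dimension k, lifted to a single matrix variable A
A = \begin{bmatrix} V \\ H^{\top} \end{bmatrix}
    \begin{bmatrix} V \\ H^{\top} \end{bmatrix}^{\top}
  = \begin{bmatrix} VV^{\top} & VH \\ H^{\top}V^{\top} & H^{\top}H \end{bmatrix},
\qquad A_{12} = VH = W

% Cone inclusions (CP = completely positive, S^N_+ = PSD cone)
\mathcal{CP}_{N} = \{ BB^{\top} : B \ge 0 \}
  \subseteq \{ A \in \mathbb{S}^{N} : A \succeq 0,\ A \ge 0 \}
  \subseteq \mathbb{S}^{N}_{+}

% Relaxed (convex) feasibility problem over the PSD cone
\text{find } A \quad \text{s.t.} \quad A \succeq 0,\ A \ge 0,\ A_{12} = W
```

The relaxed problem is a semidefinite program, but on its own it carries no information about the inner dimension k. Some additional rank-controlling constraint or penalty is needed before it says anything about a low-dimensional NMF.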

To address how to create a new isometric NMF, in Section 3 we give background on two recent, successful manifold learning methods: Maximum Variance Unfolding (MVU) [33] and a new variant of it, Maximum Furthest Neighbor Unfolding (MFNU) [25]. It has been shown experimen

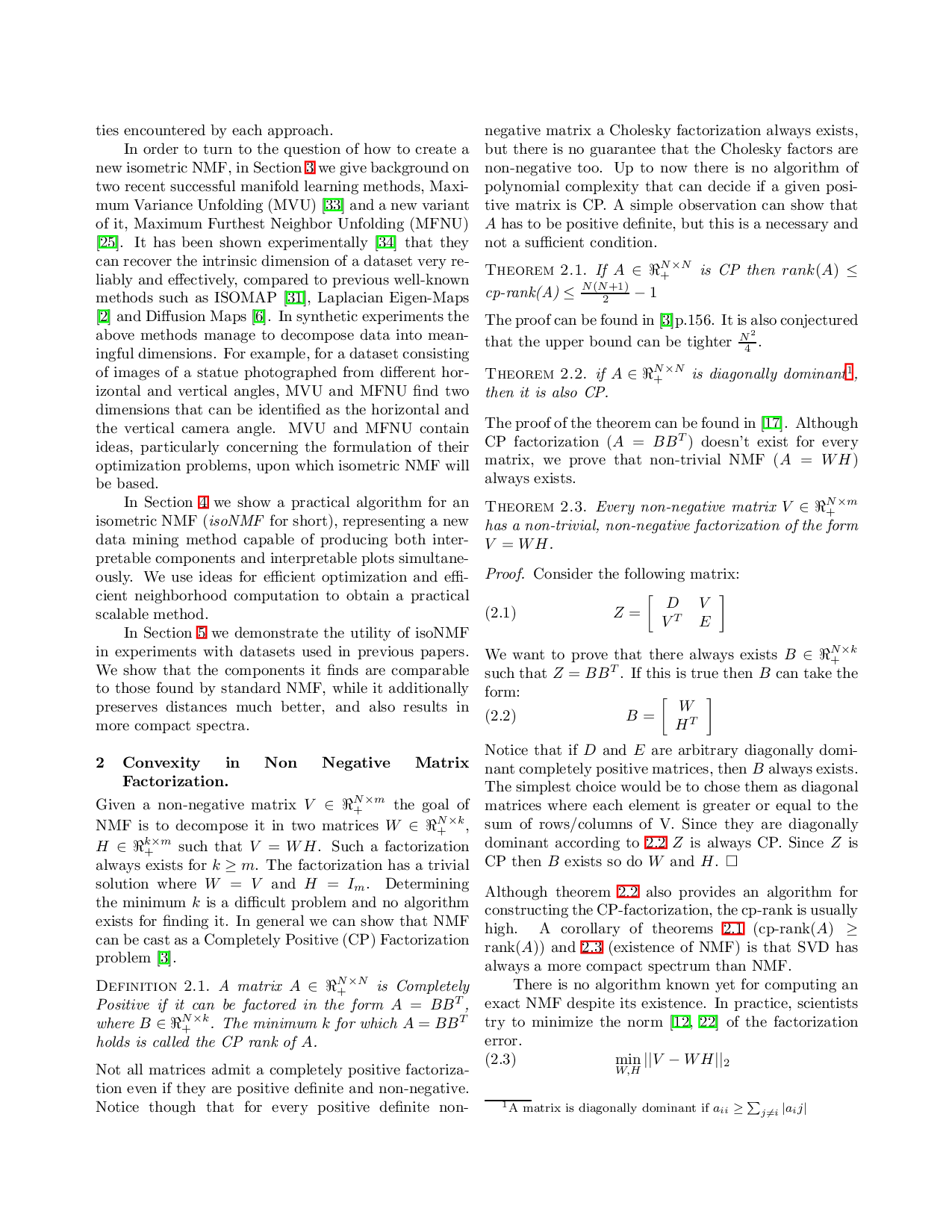

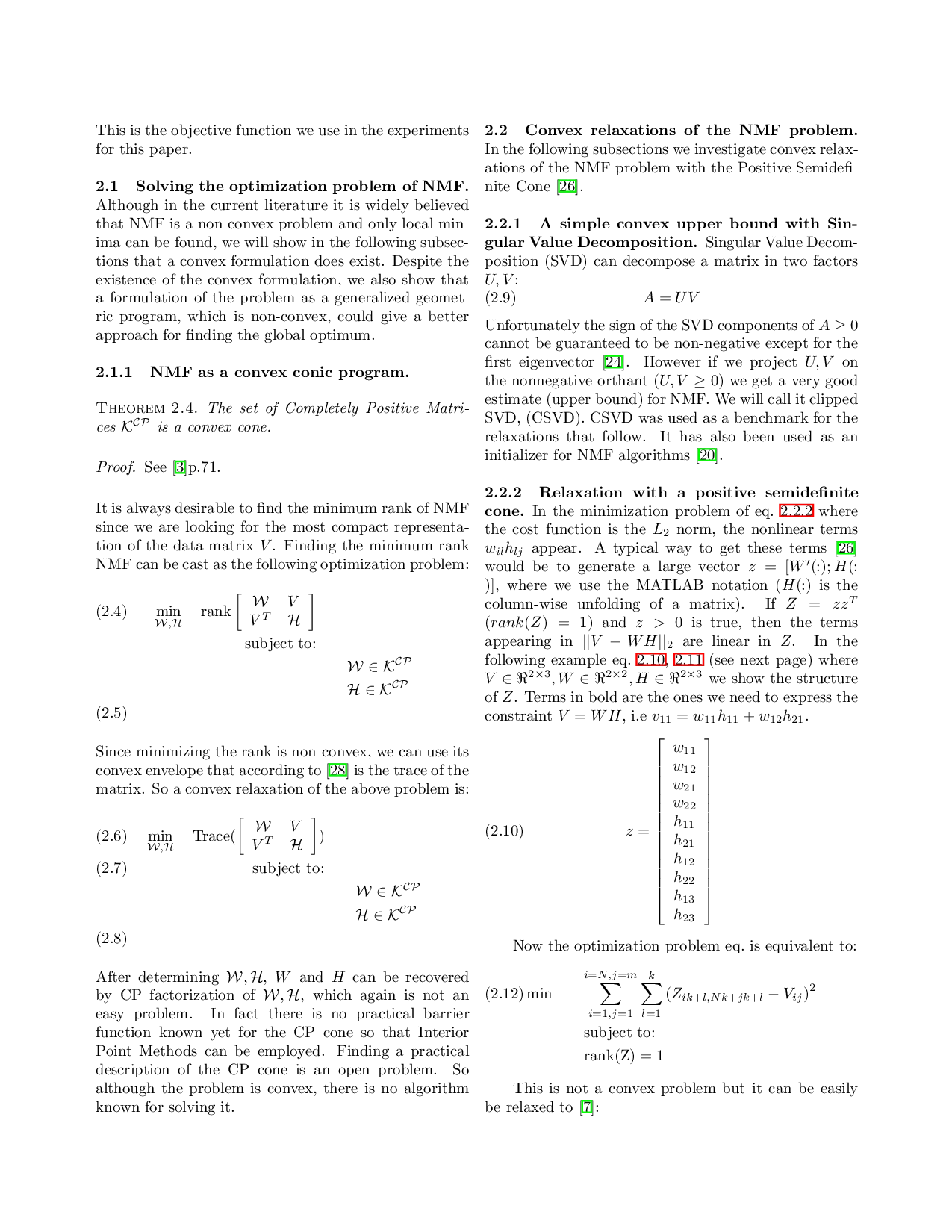

…(Full text truncated)…
