Joint Range of Renyi Entropies

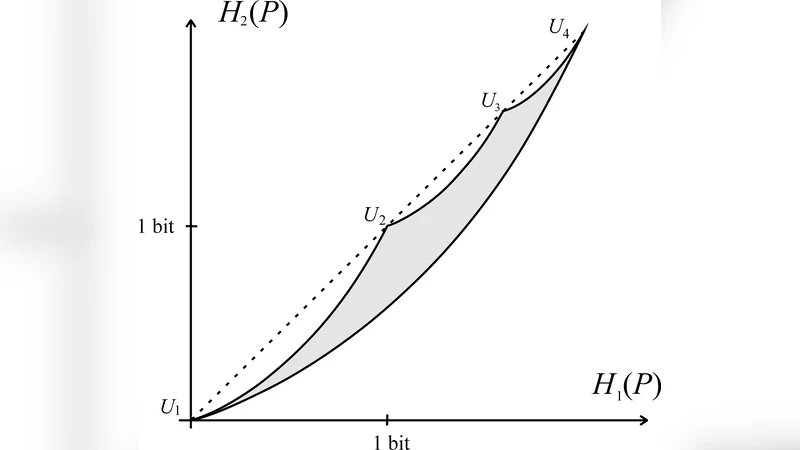

The exact range of the joint values of several Rényi entropies is determined. The method is based on topology, with special emphasis on the orientation of the objects studied. As in the case where only two orders of Rényi entropies are studied, one can parametrize the upper and lower bounds, but an explicit formula for a tight upper or lower bound cannot be given.

💡 Research Summary

The paper investigates the set of all possible simultaneous values of several Rényi entropies of a probability distribution. The Rényi entropy of order α (α > 0, α ≠ 1) is defined as H_α(P) = 1/(1−α) · log ∑_i p_i^α, with the Shannon entropy recovered in the limit α → 1. Different orders emphasize different aspects of the distribution: as α → 0 the entropy depends only on the size of the support, and as α → ∞ only on the probability of the most likely outcome. While earlier work had characterized the joint range for two orders, it could describe the upper and lower bounds only parametrically and could not provide a closed-form expression for the tight bound.
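A minimal Python sketch of the definition above, including the limiting orders α = 0, 1 and ∞ mentioned in the text. The function name `renyi_entropy` is hypothetical (not taken from the paper), and natural logarithms are assumed, so values are in nats.

```python
import math
from typing import Sequence


def renyi_entropy(p: Sequence[float], alpha: float) -> float:
    """Rényi entropy H_alpha(P) in nats.

    alpha = 0 gives the Hartley entropy, alpha = 1 the Shannon limit,
    and alpha = math.inf the min-entropy.
    """
    if alpha < 0:
        raise ValueError("alpha must be non-negative")
    support = [x for x in p if x > 0]  # zero-probability outcomes are dropped
    if alpha == 0:
        return math.log(len(support))  # depends only on the support size
    if alpha == 1:
        return -sum(x * math.log(x) for x in support)  # Shannon entropy
    if math.isinf(alpha):
        return -math.log(max(support))  # depends only on the most likely outcome
    return math.log(sum(x ** alpha for x in support)) / (1 - alpha)
```

For a uniform distribution every order gives the same value, log n; the orders only separate once the distribution is non-uniform.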

The authors adopt a topological viewpoint. They regard the probability simplex Δ_n as a compact, connected manifold and each entropy map f_α:Δ_n→ℝ as a continuous function. For a collection of orders {α₁,…,α_k} they consider the vector‑valued map F(P)=(H_{α₁}(P),…,H_{α_k}(P)). The central problem is to determine the image Im(F)⊂ℝ^k, i.e., the joint range.
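The map F can be explored numerically. The sketch below (hypothetical helper names, natural logarithms, finite orders α > 0 only) evaluates F(P) and samples points of Im(F) from uniformly random distributions on Δ_n. It is only a Monte Carlo illustration, not the authors' topological method: random samples fill the interior of the joint range but rarely land on its boundary.

```python
import math
import random
from typing import List, Sequence, Tuple


def renyi(p: Sequence[float], alpha: float) -> float:
    """Rényi entropy in nats for a finite order alpha > 0 (alpha == 1 gives Shannon)."""
    q = [x for x in p if x > 0]  # drop zero-probability outcomes
    if alpha == 1:
        return -sum(x * math.log(x) for x in q)
    return math.log(sum(x ** alpha for x in q)) / (1 - alpha)


def joint_map(p: Sequence[float], orders: Sequence[float]) -> Tuple[float, ...]:
    """F(P) = (H_{alpha_1}(P), ..., H_{alpha_k}(P))."""
    return tuple(renyi(p, a) for a in orders)


def random_simplex_point(n: int, rng: random.Random) -> List[float]:
    """Uniform sample from the simplex Delta_n (normalised i.i.d. Exp(1) draws)."""
    e = [rng.expovariate(1.0) for _ in range(n)]
    total = sum(e)
    return [x / total for x in e]


def sample_joint_range(n: int, orders: Sequence[float], samples: int,
                       seed: int = 0) -> List[Tuple[float, ...]]:
    """Monte Carlo cloud of points in Im(F); an inner sample, not the exact boundary."""
    rng = random.Random(seed)
    return [joint_map(random_simplex_point(n, rng), orders) for _ in range(samples)]
```

Normalising independent exponential draws yields the uniform (flat Dirichlet) distribution on the simplex, which keeps the sampler dependency-free.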

The analysis begins with the simplest case of a two-point distribution P_θ = (θ, 1−θ). In this one-parameter family each H_α is symmetric about θ = 1/2 and increases monotonically in θ on [0, 1/2], from 0 at the Dirac mass to log 2 at the uniform distribution, so the curve (H_{α₁}(θ), H_{α₂}(θ)), θ ∈ [0, 1/2], traces the exact boundary for the two-order problem. The authors then extend this construction to higher dimensions by embedding the two-point curves along the edges of higher-dimensional simplices (triangles, tetrahedra, etc.). Each edge corresponds to mixing an extreme point (a Dirac mass) with the uniform distribution, and moving inside the simplex corresponds to mixing several such extreme points.
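The two-point construction can be reproduced directly. The sketch below (hypothetical function names, natural logarithms, finite orders α > 0 with α ≠ 1) tabulates the curve θ ↦ (H_{α₁}(θ), H_{α₂}(θ)) for θ ∈ [0, 1/2]; because θ and 1 − θ give the same point, this half-interval suffices.

```python
import math
from typing import List, Tuple


def renyi_two_point(theta: float, alpha: float) -> float:
    """H_alpha of P_theta = (theta, 1 - theta) in nats; finite alpha > 0, alpha != 1."""
    s = sum(x ** alpha for x in (theta, 1.0 - theta) if x > 0)
    return math.log(s) / (1.0 - alpha)


def two_point_curve(a1: float, a2: float, steps: int = 100) -> List[Tuple[float, float]]:
    """Points (H_a1(theta), H_a2(theta)) for theta = 0, 1/(2*steps), ..., 1/2."""
    thetas = [0.5 * i / steps for i in range(steps + 1)]
    return [(renyi_two_point(t, a1), renyi_two_point(t, a2)) for t in thetas]
```

Both coordinates start at 0 (the Dirac mass) and rise to log 2 (the uniform distribution on two points), and the lower-order coordinate is never smaller than the higher-order one.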

A key conceptual tool is the notion of “orientation”. For a given order α the entropy either increases or decreases along a chosen mixing direction. When all selected orders share the same orientation the image of F lies in a single connected component, called the “upward class”. If some orders have opposite orientation, the image splits into a second component, the “downward class”. By analysing the orientation of each f_α along the simplex edges, the authors prove that the union of the two components exactly equals Im(F). Moreover, they construct an explicit surjective parametrisation Φ:Λ→Im(F) where Λ is a set of mixing coefficients (essentially the barycentric coordinates of points on the line segment between a Dirac mass and the uniform distribution). Φ is continuous but not given by a simple algebraic formula; it is defined implicitly through the mixture parameters. This parametrisation is tight: every admissible vector of Rényi entropies can be obtained from some mixture, and every mixture yields a point in the joint range.
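One way to make the mixing parametrisation and the notion of orientation concrete, under the assumption that "orientation" means the sign of the derivative of H_α along a straight segment in Δ_n: the sketch below evaluates entropy vectors along the segment from a Dirac mass to the uniform distribution, and estimates orientations by a central difference. All names are hypothetical illustrations rather than the paper's construction, and `orientation` expects t strictly inside (0, 1).

```python
import math
from typing import List, Sequence, Tuple


def renyi(p: Sequence[float], alpha: float) -> float:
    """Rényi entropy in nats for a finite order alpha > 0, alpha != 1."""
    return math.log(sum(x ** alpha for x in p if x > 0)) / (1 - alpha)


def mix(p: Sequence[float], q: Sequence[float], t: float) -> List[float]:
    """Convex combination (1 - t) * p + t * q."""
    return [(1 - t) * a + t * b for a, b in zip(p, q)]


def dirac_uniform_path(n: int, orders: Sequence[float],
                       steps: int = 10) -> List[Tuple[float, ...]]:
    """Entropy vectors at t = 0, 1/steps, ..., 1 on the segment from delta_1 to U_n."""
    dirac = [1.0] + [0.0] * (n - 1)
    uniform = [1.0 / n] * n
    return [tuple(renyi(mix(dirac, uniform, i / steps), a) for a in orders)
            for i in range(steps + 1)]


def orientation(p: Sequence[float], q: Sequence[float], t: float,
                alpha: float, h: float = 1e-6) -> int:
    """Sign (+1, 0, -1) of d/dt H_alpha((1 - t) p + t q), by central difference."""
    up = renyi(mix(p, q, t + h), alpha)
    down = renyi(mix(p, q, t - h), alpha)
    return (up > down) - (up < down)
```

Along the Dirac-to-uniform segment every order increases, so all orientations agree. By contrast, at the midpoint of the segment from (0.5, 0.5, 0) to (0.7, 0.15, 0.15) the order-1/2 entropy increases while the order-2 entropy decreases, so the orientations of these two orders disagree there.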

The paper also shows that the new description reduces to the known two-order result when k = 2, confirming consistency. For k ≥ 3 the boundary becomes a piecewise-smooth hypersurface in ℝ^k, but the orientation-based decomposition still holds.
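Two elementary facts give necessary conditions that every point of Im(F) satisfies: 0 ≤ H_α(P) ≤ log n on Δ_n, and H_α(P) is non-increasing in α. The hypothetical checker below tests them (natural logarithms, finite orders α > 0 with α ≠ 1). They are not sufficient: for instance, H_{1/2} = log 3 together with H_2 = 0 passes both checks on Δ_3 but is impossible, since H_2 = 0 forces a Dirac mass. The exact region is what the paper's analysis characterises.

```python
import math
from typing import Sequence


def renyi(p: Sequence[float], alpha: float) -> float:
    """Rényi entropy in nats for a finite order alpha > 0, alpha != 1."""
    return math.log(sum(x ** alpha for x in p if x > 0)) / (1 - alpha)


def necessary_conditions_hold(vec: Sequence[float], orders: Sequence[float],
                              n: int, tol: float = 1e-12) -> bool:
    """Check two necessary (but not sufficient) conditions for vec to lie in Im(F) on Delta_n."""
    pairs = sorted(zip(orders, vec))  # sort coordinates by increasing order alpha
    # 0 <= H_alpha(P) <= log n for every P on n outcomes
    in_bounds = all(-tol <= h <= math.log(n) + tol for _, h in pairs)
    # H_alpha(P) is non-increasing in alpha
    non_increasing = all(h2 <= h1 + tol for (_, h1), (_, h2) in zip(pairs, pairs[1:]))
    return in_bounds and non_increasing
```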

Finally, the authors discuss implications. In information theory, Rényi entropies of different orders appear simultaneously in source coding with side information, privacy‑preserving data release, and hypothesis testing. Knowing the exact joint feasible region allows designers to set realistic constraints on multiple entropy measures at once. In statistical physics and machine learning, multi‑scale complexity measures often involve a spectrum of Rényi entropies; the topological characterisation clarifies which spectra can arise from genuine probability distributions.

In summary, the paper provides a complete topological characterisation of the joint range of any finite collection of Rényi entropies. It introduces the orientation framework, constructs a continuous surjective parametrisation based on mixtures between extreme and uniform distributions, and demonstrates that while explicit closed‑form bounds are unattainable, the parametrisation yields tight, computable descriptions useful across several scientific domains.
