The canonical problem of solving a system of linear equations arises in numerous contexts in information theory, communication theory, and related fields. In this contribution, we develop a solution based upon Gaussian belief propagation (GaBP) that does not involve direct matrix inversion. The iterative nature of our approach allows for a distributed message-passing implementation of the solution algorithm. We also address some properties of the GaBP solver, including convergence, exactness, its max-product version and relation to classical solution methods. The application example of decorrelation in CDMA is used to demonstrate the faster convergence rate of the proposed solver in comparison to conventional linear-algebraic iterative solution methods.

Deep Dive into Gaussian Belief Propagation Solver for Systems of Linear Equations.

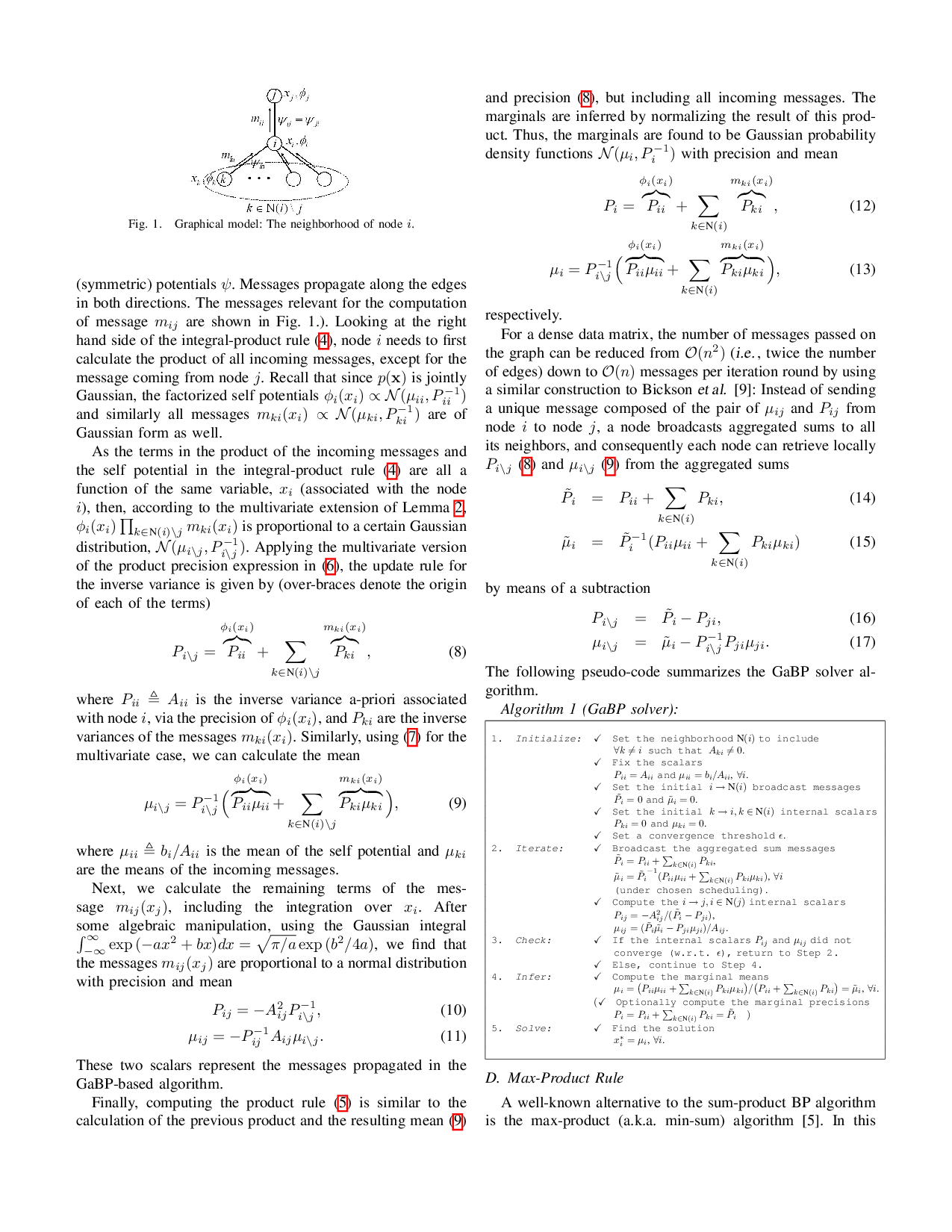

solution $x^*$ [2]. Thus, there is the risk that they may converge slowly, or not at all. In practice, however, it has been found that they often converge to the exact solution or a good approximation after a relatively small number of iterations.

A powerful and efficient iterative algorithm, belief propagation (BP) [4], also known as the sum-product algorithm, has been used very successfully to solve, either exactly or approximately, inference problems in probabilistic graphical models [5]. In this paper, we reformulate the general problem of solving a linear system of algebraic equations as a probabilistic inference problem on a suitably defined graph. We believe that this is the first time that an explicit connection between these two ubiquitous problems has been established. As an important consequence, we demonstrate that Gaussian BP (GaBP) provides an efficient, distributed approach to solving a linear system that circumvents the potentially complex operation of direct matrix inversion.

We shall use the following notation. The operator $\{\cdot\}^T$ denotes a vector or matrix transpose, the matrix $I_n$ is an $n \times n$ identity matrix, and the symbols $\{\cdot\}_i$ and $\{\cdot\}_{ij}$ denote entries of a vector and a matrix, respectively.

We begin our derivation by defining an undirected graphical model (i.e., a Markov random field), $G$, corresponding to the linear system of equations. Specifically, let $G = (X, E)$, where $X$ is a set of nodes that are in one-to-one correspondence with the linear system's variables $x = \{x_1, \ldots, x_n\}^T$, and where $E$ is a set of undirected edges determined by the non-zero entries of the (symmetric) matrix $A$. Using this graph, we can translate the problem of solving the linear system from the algebraic domain to the domain of probabilistic inference, as stated in the following proposition.

Proposition 1 (Solution and inference): The computation of the solution vector $x^*$ is identical to the inference of the vector of marginal means $\mu = \{\mu_1, \ldots, \mu_n\}$ over the graph $G$ with the associated joint Gaussian probability density function $p(x) \sim \mathcal{N}(\mu \triangleq A^{-1}b, A^{-1})$.

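Proof: Another way of solving the set of linear equations $Ax - b = 0$ is to represent it using the quadratic form $q(x) \triangleq x^T A x/2 - b^T x$. As the matrix $A$ is symmetric, the derivative of the quadratic form with respect to the vector $x$ is $\partial q/\partial x = Ax - b$. Thus, setting $\partial q/\partial x = 0$ gives the stationary point $x^*$, which is exactly the desired solution to $Ax = b$. Next, one can define the following joint Gaussian probability density function

$$p(x) \triangleq Z^{-1} \exp\big(-q(x)\big) = Z^{-1} \exp\big(-x^T A x/2 + b^T x\big), \tag{1}$$

where $Z$ is a distribution normalization factor. Denoting the vector $\mu \triangleq A^{-1} b$, so that $b^T x = \mu^T A x$, the Gaussian density function can be rewritten as

$$p(x) = Z^{-1} \exp\big(\mu^T A \mu/2\big) \exp\big(-x^T A x/2 + \mu^T A x - \mu^T A \mu/2\big) = \zeta^{-1} \exp\big(-(x - \mu)^T A (x - \mu)/2\big) = \mathcal{N}(\mu, A^{-1}), \tag{2}$$

where the new normalization factor is $\zeta \triangleq Z \exp(-\mu^T A \mu/2)$. It follows that the target solution $x^* = A^{-1} b$ is equal to $\mu \triangleq A^{-1} b$, the mean vector of the distribution $p(x)$ defined in (1). Hence, in order to solve the system of linear equations we need to infer the marginal densities, which must also be Gaussian,

$$p(x_i) \sim \mathcal{N}\big(\mu_i = \{A^{-1} b\}_i,\; P_i^{-1} = \{A^{-1}\}_{ii}\big),$$

where $\mu_i$ and $P_i$ are the marginal mean and inverse variance (sometimes called the precision), respectively.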
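As a small numerical illustration of Proposition 1, the following sketch checks on a toy system that the stationary point of the quadratic form, the solution of $Ax = b$, and the mean of the Gaussian $\mathcal{N}(A^{-1}b, A^{-1})$ coincide. The matrix `A`, the vector `b`, and all variable names are hypothetical choices made for this example only; the direct solve and explicit inverse appear purely as reference values, not as part of the proposed method.

```python
import numpy as np

# Hypothetical 3x3 symmetric, strictly diagonally dominant (hence positive
# definite) system, chosen only for illustration.
A = np.array([[4.0, 1.0, 0.0],
              [1.0, 3.0, 1.0],
              [0.0, 1.0, 2.0]])
b = np.array([1.0, 2.0, 3.0])


def q(x):
    """Quadratic form q(x) = x^T A x / 2 - b^T x."""
    return 0.5 * x @ A @ x - b @ x


# Reference solution x* of Ax = b (direct solve, used only as a check).
x_star = np.linalg.solve(A, b)

# The gradient Ax - b vanishes at x*, so x* is the stationary point of q.
grad_at_x_star = A @ x_star - b

# Moments of the Gaussian p(x) ~ N(A^{-1} b, A^{-1}).
cov = np.linalg.inv(A)
mu = cov @ b                              # mean vector, should equal x*
marginal_precision = 1.0 / np.diag(cov)   # P_i = 1 / {A^{-1}}_ii

# Because A is positive definite here, x* minimizes q: perturbing x* raises q.
rng = np.random.default_rng(0)
perturbed_values = [q(x_star + 0.1 * rng.standard_normal(3)) for _ in range(5)]
```

Note that the marginal precision $P_i$ is the reciprocal of the $i$-th diagonal entry of $A^{-1}$, which in general differs from $A_{ii}$.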

According to Proposition 1, solving a deterministic vector-matrix linear equation translates to solving an inference problem in the corresponding graph. The move to the probabilistic domain calls for the use of BP as an efficient inference engine.

Belief propagation (BP) is equivalent to applying Pearl’s local message-passing algorithm [4], originally derived for exact inference in trees, to a general graph even if it contains cycles (loops). BP has been found to have outstanding empirical success in many applications, e.g., in decoding Turbo codes and low-density parity-check (LDPC) codes. The excellent performance of BP in these applications may be attributed to the sparsity of the graphs, which ensures that cycles in the graph are long, and inference may be performed as if the graph were a tree.
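To make the idea of solving $Ax = b$ by local message passing concrete, here is a minimal sketch of a synchronous Gaussian BP iteration. The update rules used are the commonly cited GaBP rules for pairwise Gaussian models and are an assumption of this example, since they are not stated in the text above; the function name `gabp_solve`, its parameters, and the test matrices are likewise hypothetical. Each message from node $i$ to node $j$ is stored as a precision `P[i, j]` and a precision-times-mean term `H[i, j]`.

```python
import numpy as np


def gabp_solve(A, b, max_iter=100, tol=1e-10):
    """Sketch of a synchronous Gaussian BP solver for Ax = b (A symmetric)."""
    n = len(b)
    # Message i -> j: precision P[i, j] and precision-times-mean H[i, j].
    # Zero entries mean "no information yet" (and "no edge" for non-neighbors).
    P = np.zeros((n, n))
    H = np.zeros((n, n))
    neighbors = [[j for j in range(n) if j != i and A[i, j] != 0.0]
                 for i in range(n)]
    x = np.zeros(n)
    for _ in range(max_iter):
        P_new = np.zeros_like(P)
        H_new = np.zeros_like(H)
        for i in range(n):
            for j in neighbors[i]:
                # Local term plus all incoming messages except the one from j.
                p_excl = A[i, i] + P[:, i].sum() - P[j, i]
                h_excl = b[i] + H[:, i].sum() - H[j, i]
                P_new[i, j] = -A[i, j] ** 2 / p_excl
                H_new[i, j] = -A[i, j] * h_excl / p_excl
        P, H = P_new, H_new
        # Marginal mean estimate at each node from all incoming messages.
        x_new = (b + H.sum(axis=0)) / (np.diag(A) + P.sum(axis=0))
        if np.max(np.abs(x_new - x)) < tol:
            return x_new
        x = x_new
    return x


# Hypothetical tree-structured system (a chain 0 - 1 - 2).
A_tree = np.array([[4.0, 1.0, 0.0],
                   [1.0, 3.0, 1.0],
                   [0.0, 1.0, 2.0]])
b_tree = np.array([1.0, 2.0, 3.0])
x_tree = gabp_solve(A_tree, b_tree)

# Hypothetical fully connected (loopy), strictly diagonally dominant system.
A_loopy = np.array([[10.0, 1.0, 2.0, 1.0],
                    [1.0, 9.0, 1.0, 2.0],
                    [2.0, 1.0, 8.0, 1.0],
                    [1.0, 2.0, 1.0, 7.0]])
b_loopy = np.array([1.0, 2.0, 3.0, 4.0])
x_loopy = gabp_solve(A_loopy, b_loopy)
```

Both test matrices are deliberately strictly diagonally dominant, a condition under which this kind of iteration is known to converge; the sketch makes no claim about behavior on arbitrary matrices. No matrix inverse is formed inside `gabp_solve`: each update uses only quantities local to a node and its neighbors.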

Given the data matrix $A$ and the observation vector $b$, one can write explicitly the Gaussian density function $p(x)$ (2) and its corresponding graph $G$, consisting of edge potentials (‘compatibility functions’) $\psi_{ij}$ and self potentials (‘evidence’) $\phi_i$. These graph potentials are determined by the following pairwise factorization of the Gaussian function (1):

$$p(x) \propto \prod_{i=1}^{n} \phi_i(x_i) \prod_{\{i,j\}} \psi_{ij}(x_i, x_j), \qquad \psi_{ij}(x_i, x_j) \triangleq \exp(-x_i A_{ij} x_j), \quad \phi_i(x_i) \triangleq \exp\big(b_i x_i - A_{ii} x_i^2/2\big).$$

The graph topology is specified by the structure of the matrix $A$, i.e., the edge set $\{i, j\}$ includes all non-zero entries of $A$ for which $i > j$.
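The construction of the graph and its potentials can be sketched as follows. The example assumes self potentials of the form $\phi_i(x_i) = \exp(b_i x_i - A_{ii} x_i^2/2)$ and edge potentials $\psi_{ij}(x_i, x_j) = \exp(-x_i A_{ij} x_j)$, which is the form obtained by expanding the exponent $-x^T A x/2 + b^T x$ for a symmetric $A$. The toy matrix, the test point, and the helper names are hypothetical.

```python
import numpy as np

# Hypothetical symmetric system used only to illustrate the construction.
A = np.array([[4.0, 1.0, 0.0],
              [1.0, 3.0, 1.0],
              [0.0, 1.0, 2.0]])
b = np.array([1.0, 2.0, 3.0])
n = len(b)

# One undirected edge {i, j} per non-zero off-diagonal entry of A with i > j.
edges = [(i, j) for i in range(n) for j in range(i) if A[i, j] != 0.0]


def phi(i, xi):
    """Self potential exp(b_i x_i - A_ii x_i^2 / 2)."""
    return np.exp(b[i] * xi - 0.5 * A[i, i] * xi ** 2)


def psi(i, j, xi, xj):
    """Edge potential exp(-x_i A_ij x_j)."""
    return np.exp(-xi * A[i, j] * xj)


def product_of_potentials(x):
    """Product of all self and edge potentials evaluated at x."""
    value = 1.0
    for i in range(n):
        value *= phi(i, x[i])
    for i, j in edges:
        value *= psi(i, j, x[i], x[j])
    return value


def unnormalized_density(x):
    """exp(-x^T A x / 2 + b^T x), i.e. the Gaussian density without 1/Z."""
    return np.exp(-0.5 * x @ A @ x + b @ x)


# Arbitrary test point: both expressions should agree up to rounding.
x_test = np.array([0.3, -0.2, 0.5])
factorized = product_of_potentials(x_test)
direct = unnormalized_density(x_test)
```

Each off-diagonal pair appears once in the edge list because the two symmetric terms $A_{ij} x_i x_j/2$ and $A_{ji} x_j x_i/2$ of the quadratic form combine into a single $A_{ij} x_i x_j$.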

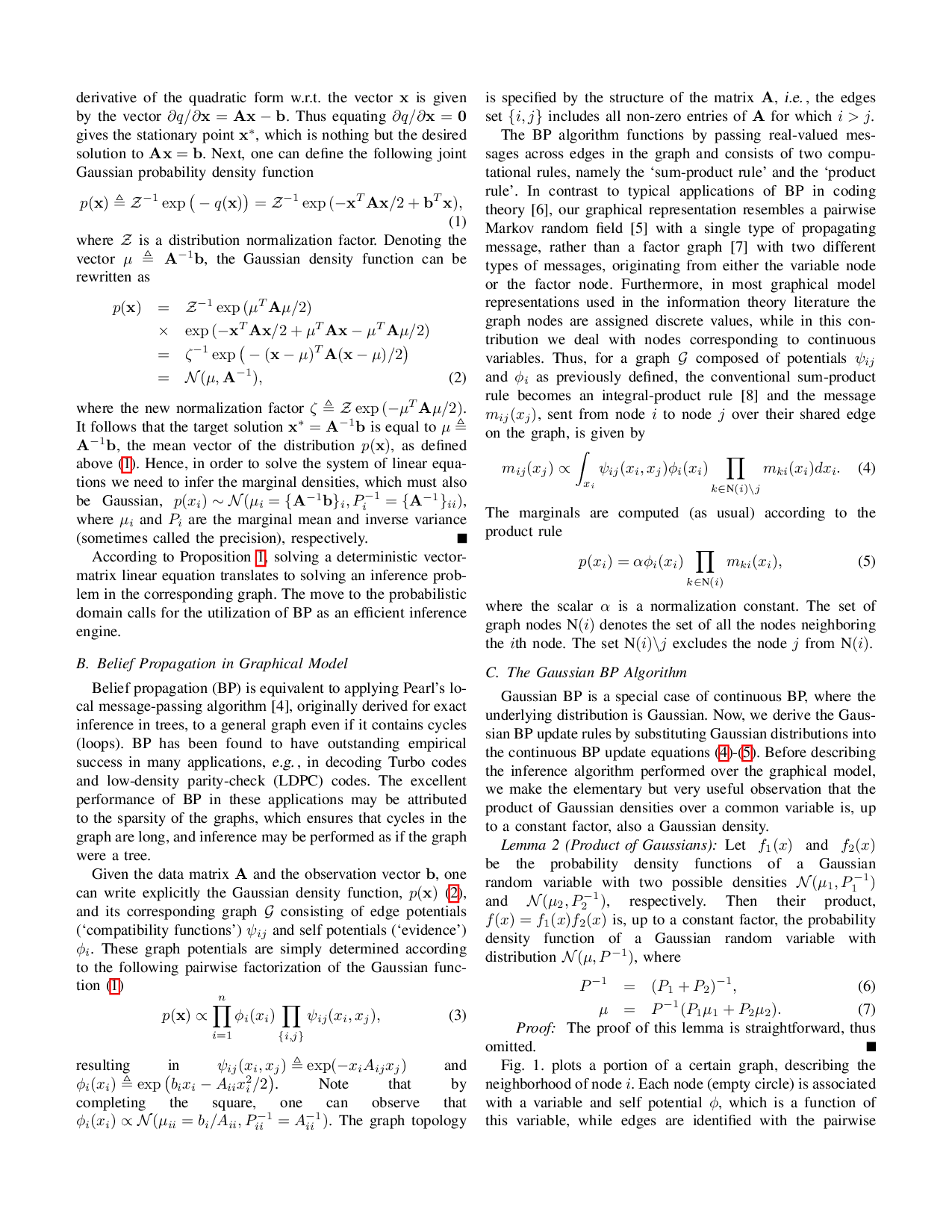

The BP algorithm functions by passing real-valued messages across edges in the graph and consists of two computational rules, namely the ‘sum-product rule’ and the ‘product rule’. In contrast to typical applications of BP in coding theory [6], our graphical representation resembles a pairwise Markov random field [5] with a single type of propagating message, rather than a factor graph [7] with two different types of messages, originating from either the variable node or the factor node. Furthermore, in most graphical model representat

…(Full text truncated)…
