Gaussian Belief Propagation Solver for Systems of Linear Equations

The canonical problem of solving a system of linear equations arises in numerous contexts in information theory, communication theory, and related fields. In this contribution, we develop a solution based upon Gaussian belief propagation (GaBP) that …

Authors: ** (원 논문 저자 정보가 본문에 명시되지 않아 제공할 수 없습니다.) **

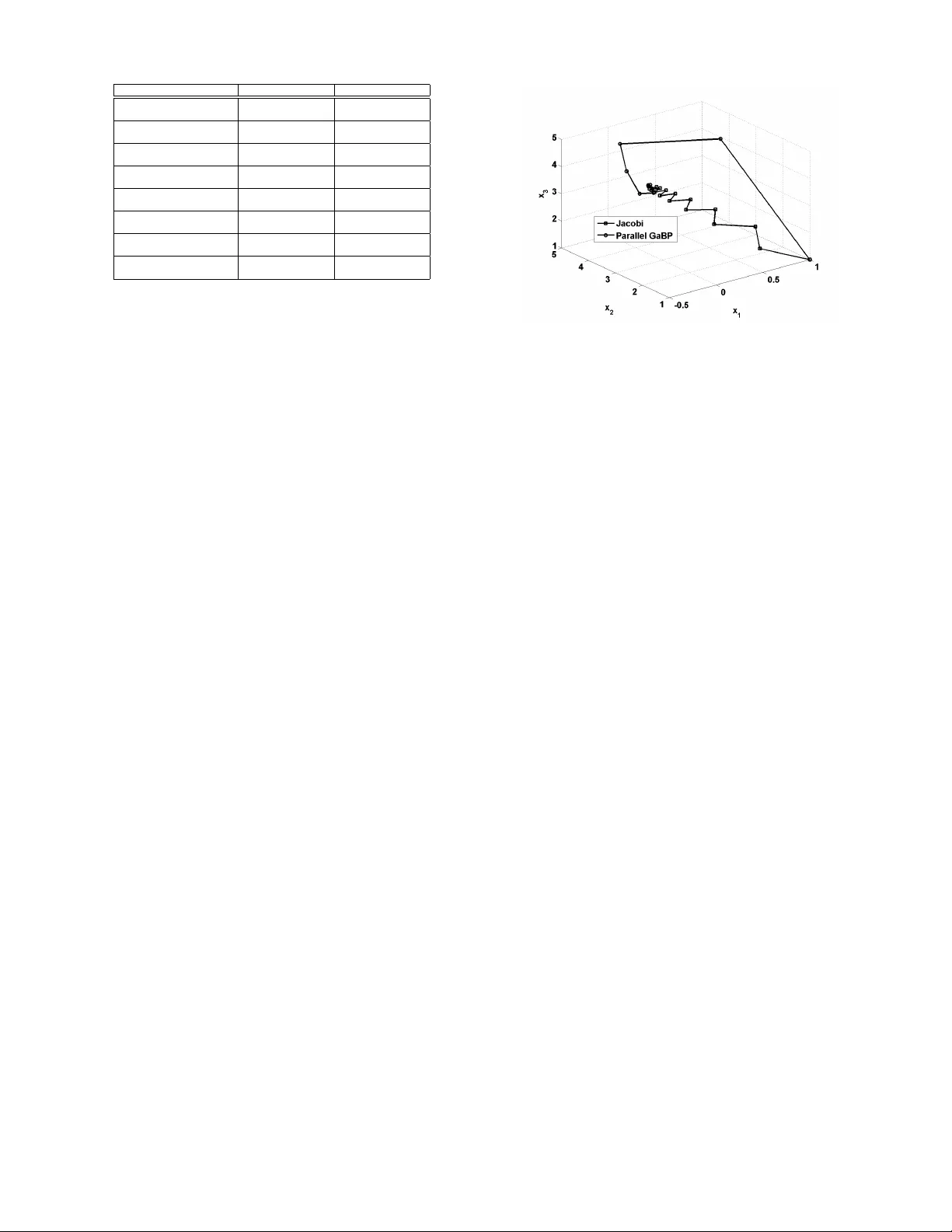

Gaussian Belief Propagation Solv er for Systems of Linear Equations Ori Shental 1 , Paul H. Sie gel and Jack K. W olf Center for Magnetic Recording Research Univ ersity of California - San Diego La Jolla, CA 92093, USA Email: { oshental,psiegel,jwolf } @ucsd.edu Danny Bickson 1 and Danny Dolev School of Computer Science and Engineering Hebrew Uni versity of Jerusalem Jerusalem 91904, Israel Email: { daniel51,dolev } @cs.huji.ac.il Abstract — The canonical problem of solving a system of linear equations arises in numer ous contexts in information theory , com- munication theory , and related fields. In this contribution, we de- velop a solution based upon Gaussian belief propagation (GaBP) that does not in volve direct matrix in version. The iterati ve nature of our approach allows for a distributed message-passing implementation of the solution algorithm. W e also addr ess some properties of the GaBP solver , including con vergence, exactness, its max-pr oduct version and r elation to classical solution methods. The application example of decorrelation in CDMA is used to demonstrate the faster con vergence rate of the proposed solver in comparison to conventional linear -algebraic iterative solution methods. I . P RO B L E M F O R M U L AT I O N A N D I N T R O D U C T I O N Solving a system of linear equations Ax = b is one of the most fundamental problems in algebra, with countless applications in the mathematical sciences and engineering. Giv en the observation vector b ∈ R n , n ∈ N ∗ , and the data matrix A ∈ R n × n , a unique solution, x = x ∗ ∈ R n , exists if and only if the data matrix A is full rank. In this contribution we concentrate on the popular case where the data matrices, A , are also symmetric ( e.g. , as in correlation matrices). Thus, assuming a nonsingular symmetric matrix A , the system of equations can be solv ed either directly or in an iterati ve manner . Direct matrix in version methods, such as Gaussian elimination (LU factorization, [1]-Ch. 3) or band Cholesky factorization ( [1]-Ch. 4), find the solution with a finite number of operations, typically , for a dense n × n matrix, on the order of n 3 . The former is particularly ef fective for systems with unstructured dense data matrices, while the latter is typically used for structured dense systems. Iterativ e methods [2] are inherently simpler , requiring only additions and multiplications, and hav e the further adv antage that they can exploit the sparsity of the matrix A to reduce the computational comple xity as well as the algorithmic storage requirements [3]. By comparison, for large, sparse and amor- phous data matrices, the direct methods are impractical due to the need for excessiv e row reordering operations. The main drawback of the iterative approaches is that, under certain conditions, the y con ver ge only asymptotically to the exact 1 Contributed equally to this work. Supported in part by NSF Grant No. CCR-0514859 and EVERGR OW , IP 1935 of the EU Sixth Framework. solution x ∗ [2]. Thus, there is the risk that they may con verge slowly , or not at all. In practice, howe ver , it has been found that they often conv erge to the exact solution or a good approximation after a relativ ely small number of iterations. A po werful and ef ficient iterativ e algorithm, belief propa- gation (BP) [4], also kno wn as the sum-product algorithm, has been very successfully used to solve, either exactly or approximately , inference problems in probabilistic graphical models [5]. In this paper , we reformulate the general problem of solving a linear system of algebraic equations as a prob- abilistic inference problem on a suitably-defined graph. W e believ e that this is the first time that an explicit connection between these two ubiquitous problems has been established. As an important consequence, we demonstrate that Gaussian BP (GaBP) provides an efficient, distributed approach to solv- ing a linear system that circumv ents the potentially comple x operation of direct matrix in version. W e shall use the following notations. The operator {·} T denotes a vector or matrix transpose, the matrix I n is a n × n identity matrix, while the symbols {·} i and {·} ij denote entries of a vector and matrix, respectiv ely . I I . T H E G A B P S O LV ER A. F rom Linear Algebra to Pr obabilistic Infer ence W e begin our deri vation by defining an undirected graphical model ( i.e. , a Markov random field), G , corresponding to the linear system of equations. Specifically , let G = ( X , E ) , where X is a set of nodes that are in one-to-one correspondence with the linear system’ s v ariables x = { x 1 , . . . , x n } T , and where E is a set of undirected edges determined by the non-zero entries of the (symmetric) matrix A . Using this graph, we can translate the problem of solving the linear system from the algebraic domain to the domain of probabilistic inference, as stated in the following theorem. Pr oposition 1 (Solution and infer ence): The computation of the solution vector x ∗ is identical to the inference of the vector of marginal means µ = { µ 1 , . . . , µ n } ov er the graph G with the associated joint Gaussian probability density function p ( x ) ∼ N ( µ , A − 1 b , A − 1 ) . Pr oof: Another way of solving the set of linear equations Ax − b = 0 is to represent it by using a quadratic form q ( x ) , x T Ax / 2 − b T x . As the matrix A is symmetric, the deriv ati ve of the quadratic form w .r .t. the vector x is gi ven by the vector ∂ q /∂ x = Ax − b . Thus equating ∂ q /∂ x = 0 giv es the stationary point x ∗ , which is nothing but the desired solution to Ax = b . Next, one can define the following joint Gaussian probability density function p ( x ) , Z − 1 exp − q ( x ) = Z − 1 exp ( − x T Ax / 2 + b T x ) , (1) where Z is a distrib ution normalization factor . Denoting the vector µ , A − 1 b , the Gaussian density function can be rewritten as p ( x ) = Z − 1 exp ( µ T A µ/ 2) × exp ( − x T Ax / 2 + µ T Ax − µ T A µ/ 2) = ζ − 1 exp − ( x − µ ) T A ( x − µ ) / 2 = N ( µ, A − 1 ) , (2) where the new normalization factor ζ , Z exp ( − µ T A µ/ 2) . It follows that the target solution x ∗ = A − 1 b is equal to µ , A − 1 b , the mean vector of the distribution p ( x ) , as defined abov e (1). Hence, in order to solve the system of linear equa- tions we need to infer the marginal densities, which must also be Gaussian, p ( x i ) ∼ N ( µ i = { A − 1 b } i , P − 1 i = { A − 1 } ii ) , where µ i and P i are the marginal mean and in verse variance (sometimes called the precision), respectiv ely . According to Proposition 1, solving a deterministic vector - matrix linear equation translates to solving an inference prob- lem in the corresponding graph. The mov e to the probabilistic domain calls for the utilization of BP as an efficient inference engine. B. Belief Propa gation in Graphical Model Belief propagation (BP) is equiv alent to applying Pearl’ s lo- cal message-passing algorithm [4], originally derived for exact inference in trees, to a general graph e ven if it contains cycles (loops). BP has been found to ha ve outstanding empirical success in many applications, e.g. , in decoding Turbo codes and low-density parity-check (LDPC) codes. The excellent performance of BP in these applications may be attributed to the sparsity of the graphs, which ensures that cycles in the graph are long, and inference may be performed as if the graph were a tree. Giv en the data matrix A and the observation vector b , one can write explicitly the Gaussian density function, p ( x ) (2), and its corresponding graph G consisting of edge potentials (‘compatibility functions’) ψ ij and self potentials (‘evidence’) φ i . These graph potentials are simply determined according to the follo wing pairwise factorization of the Gaussian func- tion (1) p ( x ) ∝ n Y i =1 φ i ( x i ) Y { i,j } ψ ij ( x i , x j ) , (3) resulting in ψ ij ( x i , x j ) , exp( − x i A ij x j ) and φ i ( x i ) , exp b i x i − A ii x 2 i / 2 . Note that by completing the square, one can observe that φ i ( x i ) ∝ N ( µ ii = b i / A ii , P − 1 ii = A − 1 ii ) . The graph topology is specified by the structure of the matrix A , i.e. , the edges set { i, j } includes all non-zero entries of A for which i > j . The BP algorithm functions by passing real-valued mes- sages across edges in the graph and consists of two compu- tational rules, namely the ‘sum-product rule’ and the ‘product rule’. In contrast to typical applications of BP in coding theory [6], our graphical representation resembles a pairwise Markov random field [5] with a single type of propagating message, rather than a factor graph [7] with two different types of messages, originating from either the variable node or the factor node. Furthermore, in most graphical model representations used in the information theory literature the graph nodes are assigned discrete values, while in this con- tribution we deal with nodes corresponding to continuous variables. Thus, for a graph G composed of potentials ψ ij and φ i as pre viously defined, the conv entional sum-product rule becomes an integral-product rule [8] and the message m ij ( x j ) , sent from node i to node j over their shared edge on the graph, is giv en by m ij ( x j ) ∝ Z x i ψ ij ( x i , x j ) φ i ( x i ) Y k ∈ N ( i ) \ j m ki ( x i ) dx i . (4) The mar ginals are computed (as usual) according to the product rule p ( x i ) = αφ i ( x i ) Y k ∈ N ( i ) m ki ( x i ) , (5) where the scalar α is a normalization constant. The set of graph nodes N ( i ) denotes the set of all the nodes neighboring the i th node. The set N ( i ) \ j excludes the node j from N ( i ) . C. The Gaussian BP Algorithm Gaussian BP is a special case of continuous BP , where the underlying distribution is Gaussian. Now , we deriv e the Gaus- sian BP update rules by substituting Gaussian distrib utions into the continuous BP update equations (4)-(5). Before describing the inference algorithm performed ov er the graphical model, we make the elementary but very useful observ ation that the product of Gaussian densities over a common variable is, up to a constant factor , also a Gaussian density . Lemma 2 (Pr oduct of Gaussians): Let f 1 ( x ) and f 2 ( x ) be the probability density functions of a Gaussian random v ariable with two possible densities N ( µ 1 , P − 1 1 ) and N ( µ 2 , P − 1 2 ) , respectiv ely . Then their product, f ( x ) = f 1 ( x ) f 2 ( x ) is, up to a constant factor , the probability density function of a Gaussian random variable with distribution N ( µ, P − 1 ) , where P − 1 = ( P 1 + P 2 ) − 1 , (6) µ = P − 1 ( P 1 µ 1 + P 2 µ 2 ) . (7) Pr oof: The proof of this lemma is straightforward, thus omitted. Fig. 1. plots a portion of a certain graph, describing the neighborhood of node i . Each node (empty circle) is associated with a variable and self potential φ , which is a function of this variable, while edges are identified with the pairwise Fig. 1. Graphical model: The neighborhood of node i . (symmetric) potentials ψ . Messages propagate along the edges in both directions. The messages relev ant for the computation of message m ij are sho wn in Fig. 1.). Looking at the right hand side of the integral-product rule (4), node i needs to first calculate the product of all incoming messages, except for the message coming from node j . Recall that since p ( x ) is jointly Gaussian, the factorized self potentials φ i ( x i ) ∝ N ( µ ii , P − 1 ii ) and similarly all messages m ki ( x i ) ∝ N ( µ ki , P − 1 ki ) are of Gaussian form as well. As the terms in the product of the incoming messages and the self potential in the integral-product rule (4) are all a function of the same variable, x i (associated with the node i ), then, according to the multiv ariate extension of Lemma 2, φ i ( x i ) Q k ∈ N ( i ) \ j m ki ( x i ) is proportional to a certain Gaussian distribution, N ( µ i \ j , P − 1 i \ j ) . Applying the multiv ariate version of the product precision expression in (6), the update rule for the in verse variance is given by (ov er-braces denote the origin of each of the terms) P i \ j = φ i ( x i ) z}|{ P ii + X k ∈ N ( i ) \ j m ki ( x i ) z}|{ P ki , (8) where P ii , A ii is the in verse variance a-priori associated with node i , via the precision of φ i ( x i ) , and P ki are the in verse variances of the messages m ki ( x i ) . Similarly , using (7) for the multiv ariate case, we can calculate the mean µ i \ j = P − 1 i \ j φ i ( x i ) z }| { P ii µ ii + X k ∈ N ( i ) \ j m ki ( x i ) z }| { P ki µ ki , (9) where µ ii , b i / A ii is the mean of the self potential and µ ki are the means of the incoming messages. Next, we calculate the remaining terms of the mes- sage m ij ( x j ) , including the integration ov er x i . After some algebraic manipulation, using the Gaussian integral R ∞ −∞ exp ( − ax 2 + bx ) dx = p π /a exp ( b 2 / 4 a ) , we find that the messages m ij ( x j ) are proportional to a normal distribution with precision and mean P ij = − A 2 ij P − 1 i \ j , (10) µ ij = − P − 1 ij A ij µ i \ j . (11) These two scalars represent the messages propagated in the GaBP-based algorithm. Finally , computing the product rule (5) is similar to the calculation of the previous product and the resulting mean (9) and precision (8), b ut including all incoming messages. The marginals are inferred by normalizing the result of this prod- uct. Thus, the marginals are found to be Gaussian probability density functions N ( µ i , P − 1 i ) with precision and mean P i = φ i ( x i ) z}|{ P ii + X k ∈ N ( i ) m ki ( x i ) z}|{ P ki , (12) µ i = P − 1 i \ j φ i ( x i ) z }| { P ii µ ii + X k ∈ N ( i ) m ki ( x i ) z }| { P ki µ ki , (13) respectiv ely . For a dense data matrix, the number of messages passed on the graph can be reduced from O ( n 2 ) ( i.e. , twice the number of edges) do wn to O ( n ) messages per iteration round by using a similar construction to Bickson et al. [9]: Instead of sending a unique message composed of the pair of µ ij and P ij from node i to node j , a node broadcasts aggregated sums to all its neighbors, and consequently each node can retriev e locally P i \ j (8) and µ i \ j (9) from the aggregated sums ˜ P i = P ii + X k ∈ N ( i ) P ki , (14) ˜ µ i = ˜ P − 1 i ( P ii µ ii + X k ∈ N ( i ) P ki µ ki ) (15) by means of a subtraction P i \ j = ˜ P i − P j i , (16) µ i \ j = ˜ µ i − P − 1 i \ j P j i µ j i . (17) The following pseudo-code summarizes the GaBP solver al- gorithm. Algorithm 1 (GaBP solver): 1. Initialize: X Set the neighborhood N ( i ) to include ∀ k 6 = i such that A ki 6 = 0 . X Fix the scalars P ii = A ii and µ ii = b i / A ii , ∀ i . X Set the initial i → N ( i ) broadcast messages ˜ P i = 0 and ˜ µ i = 0 . X Set the initial k → i, k ∈ N ( i ) internal scalars P ki = 0 and µ ki = 0 . X Set a convergence threshold . 2. Iterate: X Broadcast the aggregated sum messages ˜ P i = P ii + P k ∈ N ( i ) P ki , ˜ µ i = ˜ P i − 1 ( P ii µ ii + P k ∈ N ( i ) P ki µ ki ) , ∀ i (under chosen scheduling) . X Compute the i → j, i ∈ N ( j ) internal scalars P ij = − A 2 ij / ( ˜ P i − P j i ) , µ ij = ( ˜ P i ˜ µ i − P j i µ j i ) / A ij . 3. Check: X If the internal scalars P ij and µ ij did not converge (w.r.t. ), return to Step 2. X Else, continue to Step 4. 4. Infer: X Compute the marginal means µ i = P ii µ ii + P k ∈ N ( i ) P ki µ ki / P ii + P k ∈ N ( i ) P ki = ˜ µ i , ∀ i . ( X Optionally compute the marginal precisions P i = P ii + P k ∈ N ( i ) P ki = ˜ P i ) 5. Solve: X Find the solution x ∗ i = µ i , ∀ i . D. Max-Pr oduct Rule A well-known alternativ e to the sum-product BP algorithm is the max-product (a.k.a. min-sum) algorithm [5]. In this variant of BP , a maximization operation is performed rather than marginalization, i.e. , variables are eliminated by taking maxima instead of sums. F or trellis trees ( e.g. , graphical representation of con volutional codes or ISI channels), the con ventional sum-product BP algorithm boils down to per - forming the BCJR algorithm, resulting in the most probable symbol, while its max-product counterpart is equiv alent to the V iterbi algorithm, thus inferring the most probable sequence of symbols [7]. In order to deriv e the max-product version of the proposed GaBP solver , the integral(sum)-product rule (4) is replaced by a new rule m ij ( x j ) ∝ arg max x i ψ ij ( x i , x j ) φ i ( x i ) Y k ∈ N ( i ) \ j m ki ( x i ) . (18) Computing m ij ( x j ) according to this max-product rule, one gets (the exact deriv ation is omitted) m ij ( x j ) ∝ N ( µ ij = − P − 1 ij A ij µ i \ j , P − 1 ij = − A − 2 ij P i \ j ) , (19) which is identical to the messages deriv ed for the sum-product case (10)-(11). Thus interestingly , as opposed to ordinary (discrete) BP , the follo wing property of the GaBP solver emerges. Cor ollary 3 (Max-pr oduct): The max-product (18) and sum-product (4) versions of the GaBP solver are identical. I I I . C O N V E R G E N C E A N D E X AC T N E S S In ordinary BP , con vergence does not guarantee exactness of the inferred probabilities, unless the graph has no cycles. Luckily , this is not the case for the GaBP solv er . Its un- derlying Gaussian nature yields a direct connection between con vergence and exact inference. Moreov er, in contrast to BP , the conv ergence of GaBP is not limited to acyclic or sparse graphs and can occur even for dense (fully-connected) graphs, adhering to certain rules that we no w discuss. W e can use results from the literature on probabilistic inference in graph- ical models [8], [10], [11] to determine the conv ergence and exactness properties of the GaBP solver . The following two theorems establish sufficient conditions under which GaBP is guaranteed to con verge to the exact marginal means. Theor em 4: [8, Claim 4] If the matrix A is strictly di- agonally dominant ( i.e. , | A ii | > P j 6 = i | A ij | , ∀ i ), then GaBP con verges and the marginal means con ver ge to the true means. This sufficient condition was recently relaxed to include a wider group of matrices. Theor em 5: [10, Proposition 2] If the spectral radius ( i.e. , the maximum of the absolute v alues of the eigen v alues) ρ of the matrix | I n − A | satisfies ρ ( | I n − A | ) < 1 , then GaBP con verges and the marginal means con ver ge to the true means. There are many e xamples of linear systems that violate these conditions for which the GaBP solver nevertheless con verges to the exact solution. In particular, if the graph corresponding to the system is acyclic ( i.e. , a tree), GaBP yields the e xact marginal means (and e ven marginal v ariances), re gardless of the value of the spectral radius [8]. I V . R E L A T I O N T O C L A S S I C A L S O L U T I O N M E T H O D S It can be shown (see also Plarre and Kumar [12]) that the GaBP solv er (Algorithm 1) for a system of linear equations represented by a tree graph is identical to the renowned direct method of Gaussian elimination (a.k.a. LU factorization, [1]). The interesting relation to classical iterativ e solution meth- ods [2] is rev ealed via the following proposition. Pr oposition 6 (J acobi and GaBP solvers): The GaBP solver (Algorithm 1) 1) with in verse variance messages arbitrarily set to zero, i.e. , P ij = 0 , i ∈ N ( j ) , ∀ j ; 2) incorporating the message receiv ed from node j when computing the message to be sent from node i to node j , i.e. , replacing k ∈ N ( i ) \ j with k ∈ N ( i ) ; is identical to the Jacobi iterativ e method. Pr oof: Arbitrarily setting the precisions to zero, we get in correspondence to the abov e deriv ation, P i \ j = P ii = A ii , (20) P ij µ ij = − A ij µ i \ j , (21) µ i = A − 1 ii ( b i − X k ∈ N ( i ) A ki µ k \ i ) . (22) Note that the inv erse relation between P ij and P i \ j (10) is no longer valid in this case. No w , we rewrite the mean µ i \ j (9) without excluding the information from node j , µ i \ j = A − 1 ii ( b i − X k ∈ N ( i ) A ki µ k \ i ) . (23) Note that µ i \ j = µ i , hence the inferred marginal mean µ i (22) can be rewritten as µ i = A − 1 ii ( b i − X k 6 = i A ki µ k ) , (24) where the e xpression for all neighbors of node i is replaced by the redundant, yet identical, expression k 6 = i . This fixed- point iteration (24) is identical to the element-wise expression of the Jacobi method [2], concluding the proof. Now , the Gauss-Seidel (GS) method can be viewed as a ‘serial scheduling’ version of the Jacobi method; thus, based on Proposition 6, it can be deriv ed also as an instance of the serial (message-passing) GaBP solver . Next, since successiv e ov er-relaxation (SOR) is nothing but a GS method averaged ov er two consecutiv e iterations, SOR can be obtained as a serial GaBP solver with ‘damping’ operation [13]. V . A P P L I C A T I O N E X A M P L E : L I N E A R D E T E C T I O N W e examine the implementation of a decorrelator linear detector in a CDMA system with spreading codes based upon Gold sequences of length N = 7 . T wo system setups are simulated, corresponding to n = 3 and n = 4 users. The decorrelator detector , a member of the family of linear detectors, solves a system of linear equations, Ax = b , where the matrix A is equal to the n × n correlation matrix R , and the observation vector b is identical to the n -length CDMA Algorithm Iterations t ( R n =3 ) Iterations t ( R n =4 ) Jacobi 111 24 GS 26 26 Parallel GaBP 23 24 Optimal SOR 17 14 Serial GaBP 16 13 Jacobi+Steffensen 59 − Parallel GaBP+Steffensen 13 13 Serial GaBP+Steffensen 9 7 T ABLE I C O NV E R G EN C E R A T E . channel output vector y . Thus, the vector of decorrelator deci- sions is determined by taking the signum (for binary signaling) of the vector A − 1 b = R − 1 y . Note that R n =3 and R n =4 in this case are not strictly diagonally dominant, but their spectral radii are less than unity , since ρ ( | I 3 − R n =3 | ) = 0 . 9008 < 1 and ρ ( | I 4 − R n =4 | ) = 0 . 8747 < 1 , respecti vely . In all of the experiments, we assumed the (noisy) output sample was the all-ones vector . T able I compares the proposed GaBP solver with stan- dard iterative solution methods [2], pre viously employed for CDMA multiuser detection (MUD). Specifically , MUD algo- rithms based on the algorithms of Jacobi, GS and (optimally weighted) SOR were inv estigated [14]–[16]. T able I lists the con vergence rates for the two Gold code-based CDMA settings. Conv ergence is identified and declared when the differences in all the iterated values are less than 10 − 6 . W e see that, in comparison with the previously proposed detectors based upon the Jacobi and GS algorithms, the serial (asyn- chronous) message-passing GaBP detector conv erges more rapidly for both n = 3 and n = 4 and achieves the best ov erall con vergence rate, surpassing ev en the optimal SOR- based detector . Further speed-up of the GaBP solver can be achiev ed by adopting kno wn acceleration techniques from linear algebra. T able I demonstrates the speed-up of the GaBP solver obtained by using such an acceleration method, termed Steffensen’ s iterations [17], in comparison with the accelerated Jacobi algorithm (div erged for the 4 users setup). W e remark that this is the first time such an acceleration method is ex- amined within the frame work of message-passing algorithms and that the region of con vergence of the accelerated GaBP solver remains unchanged. The conv ergence contours for the Jacobi and parallel (syn- chronous) GaBP solvers for the case of 3 users are plotted in the space of { x 1 , x 2 , x 3 } in Fig. 2. As e xpected, the Jacobi algorithm con verges in zigzags directly to wards the fixed point. It is interesting to note that the GaBP solver’ s conv ergence is in a spiral shape, hinting that despite the overall con vergence improv ement, performance improvement is not guaranteed in successiv e iteration rounds. Further results and elaborate discussion on the application of GaBP specifically to linear MUD may be found in recent contributions [18], [19]. Fig. 2. Conv ergence visualization. R E F E R E N C E S [1] G. H. Golub and C. F . V . Loan, Matrix Computation , 3rd ed. Baltimore, MD: The Johns Hopkins Univ ersity Press, 1996. [2] O. Axelsson, Iterative Solution Methods . Cambridge, UK: Cambridge Univ ersity Press, 1994. [3] Y . Saad, Iterative Methods for Sparse Linear Systems . PWS Publishing company , 1996. [4] J. Pearl, Probabilistic Reasoning in Intelligent Systems: Networks of Plausible Inference . San Francisco: Morgan Kaufmann, 1988. [5] M. I. Jordan, Ed., Learning in Graphical Models . Cambridge, MA: The MIT Press, 1999. [6] T . Richardson and R. Urbanke, Modern Coding Theory . Cambridge Univ ersity Press, 2007. [7] F . Kschischang, B. Frey , and H. A. Loeliger, “Factor graphs and the sum-product algorithm, ” IEEE T rans. Inform. Theory , v ol. 47, pp. 498– 519, Feb. 2001. [8] Y . W eiss and W . T . Freeman, “Correctness of belief propagation in Gaussian graphical models of arbitrary topology , ” Neural Computation , vol. 13, no. 10, pp. 2173–2200, 2001. [9] D. Bickson, D. Dolev , and Y . W eiss, “Modified belief propagation for energy saving in wireless and sensor networks, ” in Leibniz Center TR-2005-85, School of Computer Science and Engineering, The Hebr ew University , 2005. [Online]. A vailable: http://leibniz.cs.huji.ac.il/tr/842. pdf [10] J. K. Johnson, D. M. Malioutov , and A. S. Willsk y , “W alk-sum inter- pretation and analysis of Gaussian belief propagation, ” in Advances in Neural Information Pr ocessing Systems 18 , Y . W eiss, B. Sch ¨ olkopf, and J. Platt, Eds. Cambridge, MA: MIT Press, 2006, pp. 579–586. [11] D. M. Malioutov , J. K. Johnson, and A. S. Willsk y , “W alk-sums and belief propagation in Gaussian graphical models, ” Journal of Machine Learning Researc h , vol. 7, Oct. 2006. [12] K. Plarre and P . Kumar , “Extended message passing algorithm for inference in loopy Gaussian graphical models, ” Ad Hoc Networks , 2004. [13] K. M. Murphy , Y . W eiss, and M. I. Jordan, “Loopy belief propagation for approximate inference: An empirical study , ” in Pr oc. of UAI , 1999. [14] A. Y ener , R. D. Y ates, and S. Ulukus, “CDMA multiuser detection: A nonlinear programming approach, ” IEEE T rans. Commun. , vol. 50, no. 6, pp. 1016–1024, June 2002. [15] A. Grant and C. Schlegel, “Iterativ e implementations for linear multiuser detectors, ” IEEE T rans. Commun. , vol. 49, no. 10, pp. 1824–1834, Oct. 2001. [16] P . H. T an and L. K. Rasmussen, “Linear interference cancellation in CDMA based on iterati ve techniques for linear equation systems, ” IEEE T rans. Commun. , vol. 48, no. 12, pp. 2099–2108, Dec. 2000. [17] P . Henrici, Elements of Numerical Analysis . New Y ork: John Wiley and Sons, 1964. [18] D. Bickson, O. Shental, P . H. Siegel, J. K. W olf, and D. Dolev , “Linear detection via belief propag ation, ” in Pr oc. 45th Allerton Conf. on Communications, Contr ol and Computing , Monticello, IL, USA, Sept. 2007. [19] ——, “Gaussian belief propagation based multiuser detection, ” in IEEE Int. Symp. on Inform. Theory (ISIT) , T oronto, Canada, July 2008.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment