Boosting has attracted much research attention in the past decade. The success of boosting algorithms may be interpreted in terms of the margin theory. Recently it has been shown that a bound on the generalization error of classifiers can be obtained by explicitly taking the margin distribution of the training data into account. Most boosting algorithms used in practice optimize a convex loss function and do not make use of the margin distribution. In this work we design a new boosting algorithm, termed margin-distribution boosting (MDBoost), which directly maximizes the average margin and simultaneously minimizes the margin variance. In this way the margin distribution is optimized. A totally-corrective optimization algorithm based on column generation is proposed to implement MDBoost. Experiments on UCI datasets show that MDBoost outperforms AdaBoost and LPBoost in most cases.

Boosting through Optimization of Margin Distributions


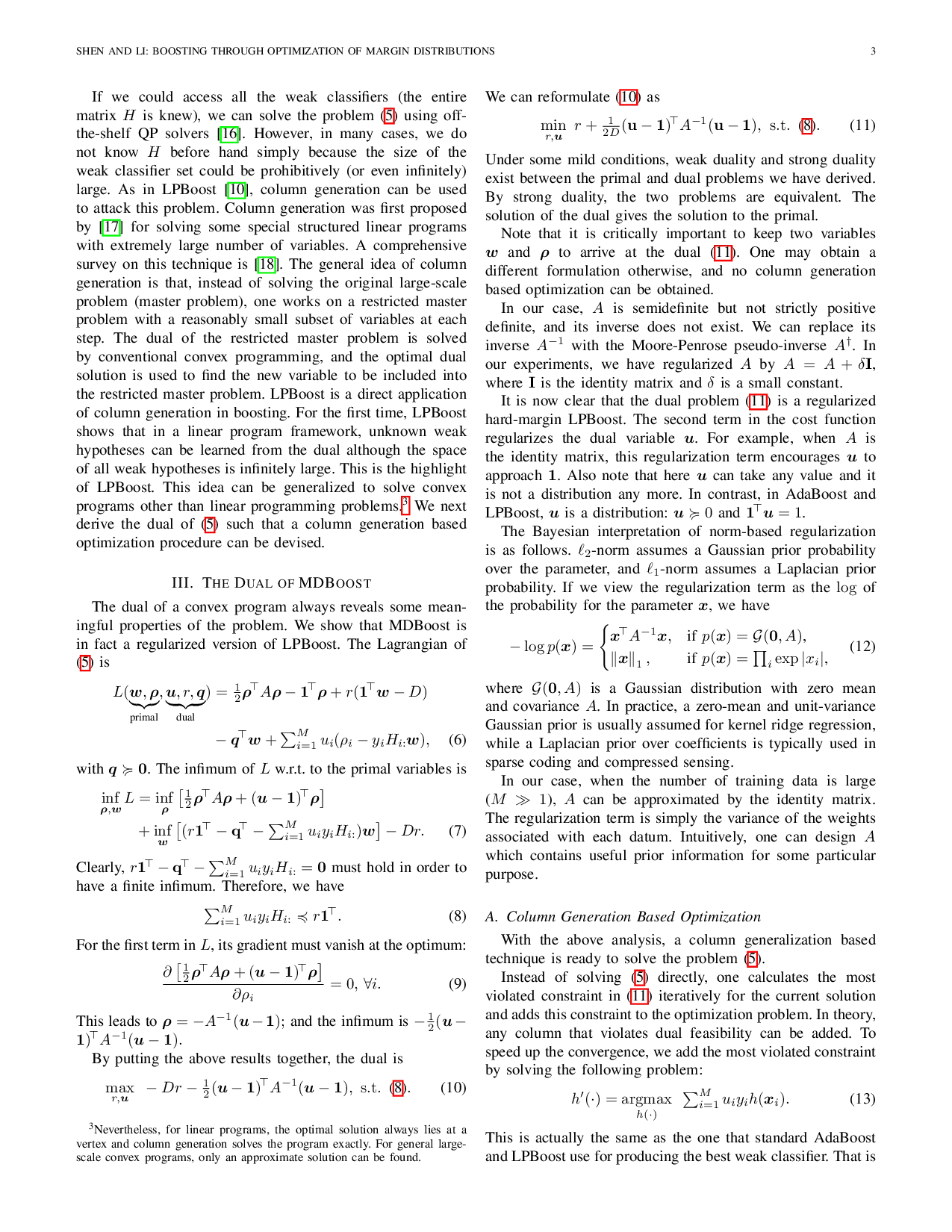

Boosting offers a method for improving existing classification algorithms. Given a training dataset, boosting builds a strong classifier using only a weak learning algorithm [1], [2]. Typically, a weak (or base) classifier generated by the weak learning algorithm has a misclassification error that is only slightly better than random guessing, whereas a strong classifier has a much lower test error. In this sense, boosting algorithms boost the weak learning algorithm to obtain a much stronger classifier. Boosting was originally proposed as an ensemble learning method, which depends on majority voting of multiple individual classifiers. Later, Breiman [3] and Friedman et al. [4] observed that many boosting algorithms can be viewed as gradient descent optimization in functional space. Mason et al. [5] developed AnyBoost for boosting arbitrary loss functions with a similar idea.

Despite the large practical success of these boosting algorithms, there are still open questions about why and how boosting works. Inspired by the large-margin theory in kernel methods, Schapire et al. [1] presented a margin-based bound for AdaBoost, which tries to interpret AdaBoost's success with the margin theory. Although the margin theory provides a qualitative explanation of the effectiveness of boosting, the bounds are quantitatively weak. A recent work [6] has proffered new, tighter margin bounds, which may be useful for quantitative predictions.

Arc-Gv [3], a variant of the AdaBoost algorithm, was designed by Breiman to empirically test AdaBoost's convergence properties. It is very similar to AdaBoost, differing only in how the coefficient associated with each weak classifier is calculated, and it increases margins even more aggressively than AdaBoost. Breiman's experiments on Arc-Gv show results contrary to the margin theory: Arc-Gv always attains a minimum margin that is provably larger than AdaBoost's, yet it performs worse in terms of test error [3]. Grove and Schuurmans [7] observed the same phenomenon.

In the literature, much work has focused on maximizing the minimum margin [8]-[10]. Recently, Reyzin and Schapire [11] re-ran Breiman's experiments while controlling the complexity of the weak classifiers. They found that a better margin distribution is more important than the minimum margin: a large minimum margin is important, but not at the expense of other factors. They thus conjectured that maximizing the average margin rather than the minimum margin may lead to improved boosting algorithms. We try to verify this conjecture in this work.
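The difference between the minimum margin and the margin distribution as a whole can be made concrete with a small numerical sketch. The function name `margin_stats`, the toy matrix `H`, and the two weight vectors below are illustrative assumptions, not taken from the paper; margins are normalized by the sum of the weights so that they lie in [-1, 1].

```python
import numpy as np

def margin_stats(H, y, w):
    """Summarize the margin distribution of a voted ensemble.

    H : (n_samples, n_weak) outputs of the weak classifiers, in {-1, +1}
    y : (n_samples,) labels in {-1, +1}
    w : (n_weak,) nonnegative weights of the weak classifiers
    """
    w = np.asarray(w, dtype=float)
    w = w / w.sum()                 # normalize so that margins lie in [-1, 1]
    margins = y * (H @ w)           # margin of each training example
    return {
        "min": float(margins.min()),
        "mean": float(margins.mean()),
        "var": float(margins.var(ddof=1)),  # unbiased sample variance
    }

# Toy data (hypothetical): 4 examples, 3 weak classifiers.
y = np.array([1, 1, -1, -1])
H = np.array([[ 1,  1, -1],
              [ 1, -1,  1],
              [-1, -1,  1],
              [-1,  1, -1]])

stats_a = margin_stats(H, y, [0.5, 0.25, 0.25])  # every margin equals 0.5
stats_b = margin_stats(H, y, [0.5, 0.5, 0.0])    # margins are 1, 0, 1, 0
print(stats_a)
print(stats_b)
```

Both weightings have the same average margin (0.5) on this toy data, but the second has a smaller minimum margin and a larger variance, so the three statistics capture genuinely different aspects of the distribution.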

Recently, Garg and Roth [12] introduced a margin-distribution-based complexity measure for learning classifiers and developed margin-distribution-based generalization bounds. Competitive classification results have been shown by optimizing this bound. Another relevant work is [13], which applies a boosting method to optimize the margin-distribution-based generalization bound obtained in [14]. Experiments show that the resulting boosting method achieves considerable improvements over AdaBoost. Its optimization is based on the AnyBoost framework [5]. In line with these attempts, we propose a new boosting algorithm through optimization of the margin distribution (termed MDBoost). Instead of minimizing a margin-distribution-based generalization bound, we directly optimize the margin distribution: we maximize the average margin and at the same time minimize the variance of the margin distribution.
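For reference, the two quantities can be written out using the standard definitions. The symbols $M$ (number of training examples), $h_j$ (weak classifiers), $w_j$ (their nonnegative weights), $\rho_i$, $\bar{\rho}$ and $\sigma^2$ are notation assumed here for illustration; the exact formulation used by MDBoost is given in the paper's later sections and may differ in normalization.

$$
\rho_i = y_i \sum_{j} w_j h_j(x_i), \qquad
\bar{\rho} = \frac{1}{M} \sum_{i=1}^{M} \rho_i, \qquad
\sigma^2 = \frac{1}{M-1} \sum_{i=1}^{M} \left( \rho_i - \bar{\rho} \right)^2 .
$$

Optimizing the margin distribution in the sense described above means making $\bar{\rho}$ large while keeping $\sigma^2$ small.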

The theoretical justification of the proposed MDBoost is that, approximately, AdaBoost actually maximizes the average margin and minimizes the margin variance.

The main contributions of our work are as follows.

We propose a new totally-corrective boosting algorithm, MDBoost, by optimizing the margin distribution directly. The optimization procedure of MDBoost is based on the idea of column generation that has been widely used in large-scale linear programming.

We empirically demonstrate that MDBoost outperforms AdaBoost and LPBoost on most UCI datasets used in our experiments. The success of MDBoost verifies the conjecture in [11]. Our results also show that MDBoost achieves similar (or better) classification performance compared with AdaBoost-CG [15]. AdaBoost-CG is also totally-corrective in the sense that all the linear coefficients of the weak classifiers are updated during training. An advantage of MDBoost is that, at each iteration, MDBoost solves a quadratic program while AdaBoost-CG needs to solve a general convex program.

Throughout the paper, a matrix is denoted by an upper-case letter ($X$); a column vector is denoted by a bold lower-case letter ($\mathbf{x}$). The $i$th row of $X$ is denoted by $X_{i:}$ and the $i$th column by $X_{:i}$. We use $I$ to denote the identity matrix. $\mathbf{1}$ and $\mathbf{0}$ are column vectors of 1's and 0's, respectively. Their sizes will be clear from the context. We use $\succeq$ and $\preceq$ to denote componentwise inequalities.
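The column-generation idea mentioned in the contributions can be illustrated by its inner step: given the current weights on the training examples, find the weak classifier with the largest weighted edge and add it as a new column. The sketch below shows this step in the generic form used by LPBoost-style methods, with decision stumps as the weak learners. It is an assumption-laden illustration, not MDBoost's exact subproblem; the function name `best_stump`, the weights `u`, and the toy data are hypothetical.

```python
import numpy as np

def best_stump(X, y, u):
    """Pick the decision stump with the largest weighted edge.

    The edge of a weak classifier h is sum_i u_i * y_i * h(x_i), where
    u holds the current example weights (dual variables in a
    column-generation scheme). Returns (edge, feature, threshold, polarity).
    """
    best = (-np.inf, None, None, None)
    for j in range(X.shape[1]):
        for thr in np.unique(X[:, j]):
            # Stump with polarity +1: predict -1 if x_j <= thr, else +1.
            pred = np.where(X[:, j] <= thr, -1.0, 1.0)
            edge = float(np.sum(u * y * pred))
            # Polarity -1 flips every prediction, which negates the edge.
            for polarity, e in ((1, edge), (-1, -edge)):
                if e > best[0]:
                    best = (e, j, float(thr), polarity)
    return best

# Toy one-dimensional data (hypothetical) with uniform example weights.
X = np.array([[0.1], [0.4], [0.6], [0.9]])
y = np.array([-1.0, -1.0, 1.0, 1.0])
u = np.full(4, 0.25)

edge, feature, threshold, polarity = best_stump(X, y, u)
print(edge, feature, threshold, polarity)
```

In a totally-corrective scheme, the selected classifier is appended to the set of columns and the weights of all classifiers chosen so far are re-optimized before the example weights are updated and the search is repeated.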

The rest of the paper is structured as follows. In Section II we pres

…(Full text truncated)…
