Nonparametric Partial Importance Sampling for Financial Derivative Pricing

Importance sampling is a promising variance reduction technique for Monte Carlo simulation-based derivative pricing. Existing importance sampling methods are based on a parametric choice of the proposal. This article proposes an algorithm that estimates the optimal proposal nonparametrically using a multivariate frequency polygon estimator. In contrast to parametric methods, nonparametric estimation allows for close approximation of the optimal proposal. Standard nonparametric importance sampling is inefficient for high-dimensional problems. We address this issue by applying the procedure to a low-dimensional subspace, which is identified through principal component analysis and the concept of the effective dimension. The mean square error properties of the algorithm are investigated and its asymptotic optimality is shown. Quasi-Monte Carlo is used for further improvement of the method. The algorithm is easy to implement (in particular, it does not require any analytical computation) and is computationally very efficient. We demonstrate through path-dependent and multi-asset option pricing problems that the algorithm leads to significant efficiency gains compared to other algorithms in the literature.

💡 Research Summary

This paper addresses the longstanding challenge of variance reduction in Monte‑Carlo (MC) pricing of high‑dimensional financial derivatives. Traditional importance sampling (IS) techniques rely on parametric families for the proposal distribution—typically Gaussian shifts or Gaussian mixtures—and adjust a few parameters (mean shift, covariance scaling, mixture weights) to approximate the optimal proposal q_opt(x) ∝ |ϕ(x)| p(x). While effective for simple pay‑offs, these parametric forms often fail to capture the complex shape of the optimal density for path‑dependent or multi‑asset options, limiting the achievable variance reduction.

The authors propose a non‑parametric partial importance sampling (NPIS) framework that directly estimates the marginal optimal proposal on a carefully selected low‑dimensional subspace. The optimal proposal is defined as q_opt(x) = |ϕ(x)| p(x) / ∫|ϕ(x)| p(x)dx, where ϕ is the discounted payoff and p is the standard normal density of the underlying Brownian increments. Since q_opt is unavailable analytically, the algorithm proceeds in two stages:

-

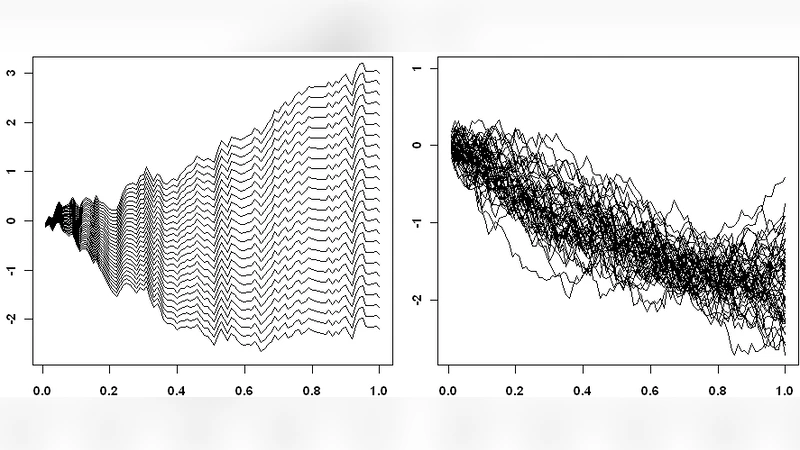

Non‑parametric estimation – A trial distribution q₀ (usually the original p) is used to draw M samples. For each sample 𝑥̃_j the weight ω_j = |ϕ(𝑥̃_j)| p(𝑥̃_j)/q₀(𝑥̃_j) is computed. The marginal optimal density on a subset of coordinates u (|u|≪d) is then estimated using a multivariate linear blend frequency polygon (LBFP), i.e., a histogram with linear interpolation between bin mid‑points. This estimator retains the O(N^{-4/(4+d)}) mean‑square‑error rate of kernel density estimators but with dramatically lower computational cost (O(N 2^{|u|−1}) versus O(N²) for kernels).

- Partial IS – Samples for the selected coordinates x_u are drawn from the LBFP estimate \hat q_opt, while the remaining coordinates x_{−u} are sampled from the original p. The IS estimator then uses the likelihood ratio p(x_u)/\hat q_opt(x_u) together with the payoff ϕ(x).
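The two stages can be sketched on a toy problem. This is a minimal illustration under stated assumptions, not the authors' implementation: the payoff, the grid, the sample sizes and the helper `sample_polygon` are hypothetical, a one‑dimensional frequency polygon stands in for the multivariate LBFP (|u| = 1), and the fitted density is mixed with p (weight `alpha`) so that the proposal stays positive everywhere. That defensive mixture is an addition of this sketch, not something the summary describes.

```python
import numpy as np

rng = np.random.default_rng(0)
d, M, N, alpha = 2, 20_000, 20_000, 0.1   # illustrative sizes (assumptions)

def payoff(x):
    # Hypothetical call-style payoff driven mostly by coordinate 0, so u = {0}.
    return np.maximum(np.exp(0.5 * x[:, 0] + 0.1 * x[:, 1]) - 1.5, 0.0)

def normal_pdf(z):
    return np.exp(-0.5 * z**2) / np.sqrt(2.0 * np.pi)

# Stage 1: pilot run from q0 = p, so the weights reduce to |payoff|.
x_pilot = rng.standard_normal((M, d))
w = np.abs(payoff(x_pilot))
edges = np.linspace(-6.0, 6.0, 49)        # 48 bins of equal width h
h = edges[1] - edges[0]
counts, _ = np.histogram(x_pilot[:, 0], bins=edges, weights=w)

# Frequency polygon: linear interpolation between bin midpoints,
# padded with one zero-height knot on each side.
mids = (edges[:-1] + edges[1:]) / 2.0
knots = np.concatenate(([mids[0] - h], mids, [mids[-1] + h]))
heights = np.concatenate(([0.0], counts, [0.0]))
heights /= h * heights.sum()              # polygon now integrates to 1

def sample_polygon(n):
    # Pick a segment by its mass, then sample the linear density inside it
    # as a mixture of a decreasing and an increasing triangle.
    seg_mass = h * (heights[:-1] + heights[1:]) / 2.0
    k = rng.choice(len(seg_mass), size=n, p=seg_mass / seg_mass.sum())
    a, b = heights[k], heights[k + 1]
    s = np.sqrt(rng.random(n))
    t = np.where(rng.random(n) * (a + b) < a, 1.0 - s, s)
    return knots[k] + h * t

# Stage 2: x_u from the fitted proposal, remaining coordinates from p.
x = rng.standard_normal((N, d))
use_poly = rng.random(N) >= alpha
x[use_poly, 0] = sample_polygon(int(use_poly.sum()))
q_u = ((1.0 - alpha) * np.interp(x[:, 0], knots, heights, left=0.0, right=0.0)
       + alpha * normal_pdf(x[:, 0]))
is_terms = payoff(x) * normal_pdf(x[:, 0]) / q_u   # likelihood ratio on x_u only

crude = payoff(rng.standard_normal((N, d)))
print("partial IS:", is_terms.mean(), " crude MC:", crude.mean())
print("variance ratio (crude / IS):", crude.var() / is_terms.var())
```

Only the ratio p(x_u)/q(x_u) enters the estimator because the densities of the untouched coordinates cancel. How much variance is removed depends on how much of the payoff's variability lives in x_u.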

The crucial innovation is the restriction to a low‑dimensional subspace. The authors invoke the concept of effective dimension (ED), defined via ANOVA decomposition of the integrand’s variance. The ED is the smallest number of coordinates whose combined ANOVA terms explain a pre‑specified proportion (e.g., 95 %) of the total variance. Empirically, many financial integrals have small ED even when the nominal dimension d is large. To identify the most influential coordinates, the paper applies principal component analysis (PCA) to the covariance matrix Σ of the underlying Gaussian driver. By ordering eigenvalues λ₁≥λ₂≥…≥λ_d, the first few principal components capture most of the variance; these components constitute the set u.
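As a rough illustration of the PCA step, the sketch below builds the covariance matrix of a discretized standard Brownian motion and reports how much variance the leading principal components carry. The number of time steps and the equally spaced grid are assumptions made for the example. The explained variance of Σ is only a proxy here: the effective dimension itself is defined through the ANOVA decomposition of the integrand, which this snippet does not compute.

```python
import numpy as np

d = 64                                    # number of time steps (illustrative)
t = np.arange(1, d + 1) / d               # equally spaced dates on (0, 1]
Sigma = np.minimum.outer(t, t)            # Cov(W_s, W_t) = min(s, t)

lam, V = np.linalg.eigh(Sigma)            # eigenvalues in ascending order
order = np.argsort(lam)[::-1]             # reorder so that lam[0] >= lam[1] >= ...
lam, V = lam[order], V[:, order]

A = V * np.sqrt(lam)                      # PCA construction: path = A @ z, z ~ N(0, I)
explained = np.cumsum(lam) / lam.sum()    # cumulative share of total variance

print("share explained by first 3 components:", np.round(explained[:3], 3))
```

With this construction the first columns of `A` multiply the coordinates of z that matter most, so those coordinates are natural candidates for the set u. For standard Brownian motion the leading component alone is known to account for roughly four fifths of the path variance.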

Theoretical analysis (Theorem 1) decomposes the mean‑square error of the NPIS estimator into two parts: (i) variance contributed by the non‑sampled dimensions x_{−u}, and (ii) the non‑parametric estimation error on x_u. By selecting the bin width h optimally (explicit formula involving |u|, M, and constants H₁, H₂), the second term decays as O(N^{-(8+|u|)/(4+|u|)}). The exponent (8+|u|)/(4+|u|) exceeds 1 for every |u|, so this term vanishes faster than the crude MC rate O(N^{-1}); the advantage is largest for small |u| (exponents 9/5, 5/3 and 11/7 for |u| = 1, 2, 3) and shrinks toward the MC rate as |u| grows. As N→∞ with M/N→λ∈(0,1), the overall MSE is dominated by the first term, implying that the algorithm asymptotically attains the variance of an IS estimator that uses the true marginal optimal proposal on u; that is, it is asymptotically optimal within the chosen subspace.

To further accelerate convergence, the authors combine NPIS with quasi‑Monte Carlo (QMC) low‑discrepancy sequences (Sobol, Halton) for sampling the low‑dimensional u. Since QMC provides deterministic error bounds of order O(N^{-1} (log N)^{|u|}), the hybrid NPIS‑QMC method often outperforms both pure MC and pure QMC in high‑dimensional settings where the effective dimension is low.
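A low‑discrepancy point set for the coordinates in u can be generated with very little code. The sketch below implements a plain two‑dimensional Halton sequence and pushes the points through an inverse CDF. The payoff is a hypothetical toy, and the standard normal inverse CDF is used as a stand‑in: in NPIS the points would instead be mapped through the fitted LBFP proposal, which is not reproduced here.

```python
import math
from statistics import NormalDist

def radical_inverse(i, base):
    # Reflect the base-`base` digits of i about the radix point.
    inv, f = 0.0, 1.0 / base
    while i > 0:
        inv += (i % base) * f
        i //= base
        f /= base
    return inv

def halton(n, bases=(2, 3)):
    # First n Halton points; starting at i = 1 avoids the origin.
    return [[radical_inverse(i, b) for b in bases] for i in range(1, n + 1)]

inv_cdf = NormalDist().inv_cdf            # stand-in for the proposal's inverse CDF
points = halton(4096)

# Toy payoff (assumption): max(exp(0.5*z1 + 0.1*z2) - 1.5, 0) with z ~ N(0, I).
est = sum(
    max(math.exp(0.5 * inv_cdf(u1) + 0.1 * inv_cdf(u2)) - 1.5, 0.0)
    for u1, u2 in points
) / len(points)
print("QMC estimate:", est)
```

Because the points are deterministic, repeated runs give the same estimate; a randomized variant (for example a random shift modulo 1) would be needed to attach a statistical error estimate.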

The paper validates the methodology on several benchmark problems:

- Asian options (path‑dependent, discretized over many time steps);

- Basket options with multiple underlying assets;

- Barrier and look‑back options where payoff depends on extrema of the path.

In each case, the NPIS (or NPIS‑QMC) estimator achieves variance reduction factors ranging from 5× to over 50× compared with crude MC, and outperforms leading parametric IS schemes such as mean‑shift, adaptive stochastic optimization, and Gaussian mixture IS. Moreover, computational times are comparable because the LBFP estimator is cheap to evaluate and the low‑dimensional sampling avoids the curse of dimensionality.

In summary, the contribution of the paper is threefold:

- Non‑parametric estimation of the optimal IS density using the computationally efficient LBFP estimator.

- Dimensionality reduction via effective dimension and PCA, enabling the non‑parametric approach to scale to problems with hundreds of nominal dimensions.

- Hybridization with QMC, delivering further speed‑up and robustness.

The proposed NPIS framework is easy to implement (the authors provide C++ code for the LBFP estimator), requires no analytical derivation of the optimal drift, and can be combined with other variance‑reduction techniques. It offers a powerful, flexible tool for practitioners dealing with complex, high‑dimensional derivative pricing problems where traditional parametric IS fails to deliver sufficient variance reduction.
