Quality assurance for the ALICE Monte Carlo procedure

We reimplement the existing macro $ALICE_ROOT/STEER/CheckESD.C, which is run after reconstruction to compute the physics efficiency, as a task that runs on the PROOF framework, for example on CAF. The task is implemented in a C++ class called AliAnalysisTaskCheckESD, which inherits from the AliAnalysisTaskSE base class. AliAnalysisTaskCheckESD computes the ratio of the number of reconstructed particles to the number of particles generated by the Monte Carlo generator. The class was successfully implemented. It was used during the production for first physics and made it possible to discover several problems (missing tracks in the MUON arm reconstruction, low efficiency in the PHOS detector, etc.). The code is committed to the SVN repository and will become a standard tool for quality assurance.
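The ratio described in the abstract can be written compactly as follows. The symbols are introduced here for illustration, and the binomial uncertainty is an assumption (a common choice for efficiencies), not something the abstract states.

```latex
\varepsilon = \frac{N_{\mathrm{rec}}}{N_{\mathrm{gen}}},
\qquad
\sigma_{\varepsilon} \approx \sqrt{\frac{\varepsilon\,(1-\varepsilon)}{N_{\mathrm{gen}}}}
```

Here $N_{\mathrm{rec}}$ is the number of reconstructed particles and $N_{\mathrm{gen}}$ the number of particles produced by the Monte Carlo generator.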

💡 Research Summary

The paper presents the development and deployment of an automated quality-assurance (QA) tool for the ALICE experiment's Monte Carlo (MC) simulation chain. Historically, the reconstruction efficiency after MC generation was evaluated using a ROOT macro located at $ALICE_ROOT/STEER/CheckESD.C. While functional for small-scale checks, the macro could not be run efficiently on the large data volumes processed by the CERN Analysis Facility (CAF), a cluster based on the Parallel ROOT Facility (PROOF), which limited its usefulness for systematic, high-throughput QA.

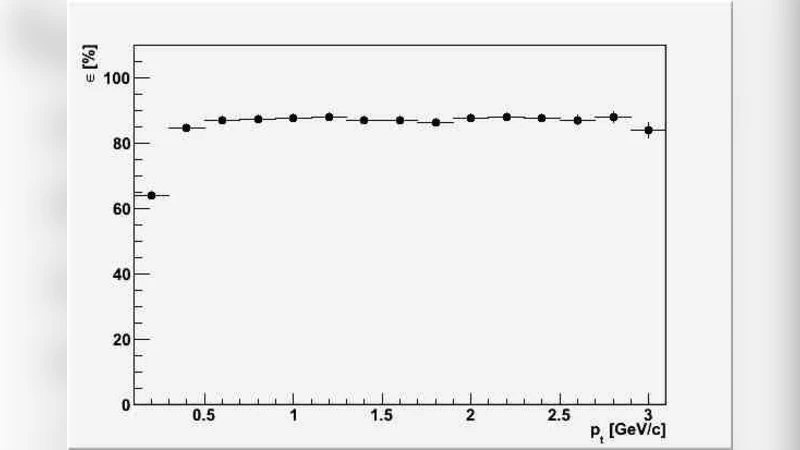

To address these limitations, the authors encapsulated the macro’s functionality into a C++ analysis task named AliAnalysisTaskCheckESD, which inherits from the standard AliAnalysisTaskSE base class. The task integrates seamlessly with the ALICE analysis framework, providing the usual lifecycle methods: UserCreateOutputObjects (initialises histograms and QA flags), UserExec (per‑event processing), and Terminate (finalisation and output writing). Within UserExec, the task retrieves both the MC‑truth particle list and the reconstructed track list for each event, then performs a one‑to‑one matching based on particle ID, momentum, charge, pseudorapidity (η), and azimuthal angle (φ). Matched tracks increment a “reconstructed particle” counter, while the total number of generated particles provides the denominator for the efficiency calculation. In addition to a global efficiency, the implementation records species‑specific efficiencies (π, K, p, e, etc.) and produces η‑φ and p_T dependent histograms. Unmatched particles are logged separately to aid subsequent debugging.
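The matching and counting logic described above can be sketched in plain C++ without the ALICE framework. This is a minimal illustration under stated assumptions, not the code of AliAnalysisTaskCheckESD: the `GenParticle` and `RecoTrack` structs, the function names, the greedy nearest-neighbour strategy, and the tolerances `maxDeltaR` and `maxRelPt` are all hypothetical, and the uncertainty is assumed to be binomial.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <cstdlib>
#include <map>
#include <vector>

// Hypothetical stand-ins for MC-truth particles and reconstructed tracks.
struct GenParticle { int pdg; int charge; double pt, eta, phi; };
struct RecoTrack   { int charge; double pt, eta, phi; };

struct Counts     { long generated = 0; long reconstructed = 0; };
struct Efficiency { double value; double error; };

// Difference of two azimuthal angles, wrapped into [-pi, pi].
double DeltaPhi(double a, double b) {
    const double kPi = 3.14159265358979323846;
    double d = std::fmod(a - b, 2.0 * kPi);
    if (d > kPi)  d -= 2.0 * kPi;
    if (d < -kPi) d += 2.0 * kPi;
    return d;
}

// Efficiency = reconstructed / generated, with an (assumed) binomial error.
Efficiency ComputeEfficiency(const Counts& c) {
    if (c.generated == 0) return {0.0, 0.0};
    const double eff = static_cast<double>(c.reconstructed) / c.generated;
    return {eff, std::sqrt(eff * (1.0 - eff) / c.generated)};
}

// Process one event: every generated particle increments the denominator of
// its species (keyed by |PDG code|); it increments the numerator if an unused
// track with the same charge, compatible pT and small eta-phi distance exists.
void ProcessEvent(const std::vector<GenParticle>& gen,
                  const std::vector<RecoTrack>& reco,
                  std::map<int, Counts>& counts,
                  double maxDeltaR = 0.05,   // assumed tolerance
                  double maxRelPt = 0.2) {   // assumed tolerance
    std::vector<bool> used(reco.size(), false);
    for (const GenParticle& g : gen) {
        Counts& c = counts[std::abs(g.pdg)];
        ++c.generated;
        int best = -1;
        double bestDR = maxDeltaR;
        for (std::size_t i = 0; i < reco.size(); ++i) {
            const RecoTrack& t = reco[i];
            if (used[i] || t.charge != g.charge) continue;
            if (std::fabs(t.pt - g.pt) > maxRelPt * g.pt) continue;
            const double dR = std::hypot(t.eta - g.eta, DeltaPhi(t.phi, g.phi));
            if (dR < bestDR) { bestDR = dR; best = static_cast<int>(i); }
        }
        if (best >= 0) {            // one-to-one: a track is matched only once
            used[best] = true;
            ++c.reconstructed;
        }
    }
}
```

Calling `ProcessEvent` once per event and `ComputeEfficiency` at the end mirrors the split between per-event processing and finalisation. A greedy match is order-dependent; a production task would more likely rely on the MC labels stored with each reconstructed track.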

The code has been committed to the ALICE Subversion (SVN) repository, complete with version tags, documentation, and example steering macros, ensuring reproducibility and ease of adoption by the collaboration. Because the task conforms to the standard analysis chain, it can be launched on CAF or other PROOF clusters, exploiting parallelism without memory leaks or race conditions.

During the production for first physics, the task was run on the full MC-reconstructed dataset. It quickly identified two significant issues: (1) a loss of tracks in the MUON arm for the η range –2.5 to –2.0, traced to an incorrect magnetic field configuration, and (2) a ∼10 % lower than expected efficiency in the PHOS electromagnetic calorimeter, linked to an outdated photon-conversion model in the simulation. Both problems were corrected (hardware settings were retuned for the MUON arm, and the PHOS simulation parameters were updated), demonstrating the task's value as an early-warning system.

Beyond these immediate findings, the authors outline a roadmap for extending the QA framework. Planned enhancements include modules to evaluate fake‑track rates, particle‑identification (PID) efficiencies, and centrality‑dependent performance. Continuous integration tests will keep the task compatible with evolving versions of AliRoot and AliPhysics. Finally, the team intends to expose the QA outputs through a web‑based dashboard, allowing collaborators worldwide to monitor detector performance in near real‑time.

In summary, by converting a legacy macro into a fully integrated, parallel‑capable analysis task, the authors have provided the ALICE collaboration with a robust, scalable tool for monitoring Monte Carlo reconstruction efficiency. The implementation not only streamlines routine QA but also accelerates the feedback loop between detector operation, simulation tuning, and physics analysis, thereby improving the overall reliability of ALICE’s scientific output.
