We consider the downlink of a cellular system and address the problem of multiuser scheduling with partial channel information. In our setting, the channel of each user is modeled by a three-state Markov chain. The scheduler indirectly estimates the channel via accumulated Automatic Repeat Request (ARQ) feedback from the scheduled users and uses this information in future scheduling decisions. Using a Partially Observable Markov Decision Process (POMDP), we formulate a throughput maximization problem that is an extension of our previous work where the channels were modeled using two states. We recall the greedy policy that was shown to be optimal and easy to implement in the two state case and study the implementation structure of the greedy policy in the considered downlink. We classify the system into two types based on the channel statistics and obtain round robin structures for the greedy policy for each system type. We obtain performance bounds for the downlink system using these structures and study the conditions under which the greedy policy is optimal.

Deep Dive into Opportunistic Multiuser Scheduling in a Three State Markov-modeled Downlink.

Opportunistic multiuser scheduling, introduced by Knopp and Humblet in [1] and defined as allocating the resources to the user experiencing the most favorable channel conditions, has gained immense popularity among network designers in the recent past. It essentially taps the multiuser diversity in the system and has motivated several researchers ([2, 3, 4, 5, 6]) to study the performance gains it offers under various scenarios. While the i.i.d. flat fading model is popular for modeling time-varying channels, it fails to capture the channel memory observed in realistic scenarios. This motivated researchers to consider the Gilbert-Elliott model [7], which represents the channel by a two-state Markov chain. Specifically, a user experiences error-free transmission when it observes a "good" channel and unsuccessful transmission when it observes a "bad" channel. Several works have studied opportunistic multiuser scheduling in this Markov-modeled channel [8, 9, 10, 11, 12]. The availability of channel state information at the scheduler is clearly crucial to the success of opportunistic scheduling schemes. Traditionally, when the scheduler has no channel information, pilot-based channel estimation is performed and the estimates are used for scheduling decisions ([2, 6, 13]). A new line of work [14, 15, 16, 17, 18] attempts to exploit Automatic Repeat reQuest (ARQ) feedback, traditionally used for error control at the data link layer, to estimate the state of two-state Markov-modeled channels.

In [16] and [18], for a two-state Markov-modeled downlink (one-to-many communication) system, a greedy policy has been shown to be optimal from a sum throughput point of view. This greedy policy admits an easy implementation through a simple round-robin algorithm that takes as input the ARQ feedback from the scheduled user. Although modeling the channel by a two-state Markov chain is a welcome change from the traditional memoryless models, the scheduler can do better by discriminating the channel conditions at a finer level, i.e., by modeling the channel with a Markov chain that has more states. As a first step in this direction, in this report we model the channels by three-state Markov chains and study the properties of the greedy policy and the conditions under which it is optimal.

The report is organized as follows. The problem setup is described in Section 2, followed by a study of the implementation structure of the greedy policy in Section 3. The original system is compared with the genie-aided system, introduced in [18], in Section 4. In Section 5, upper and lower bounds on the system performance are derived. We study the conditions under which the greedy policy is optimal in Section 6. Conclusions are provided in Section 7.

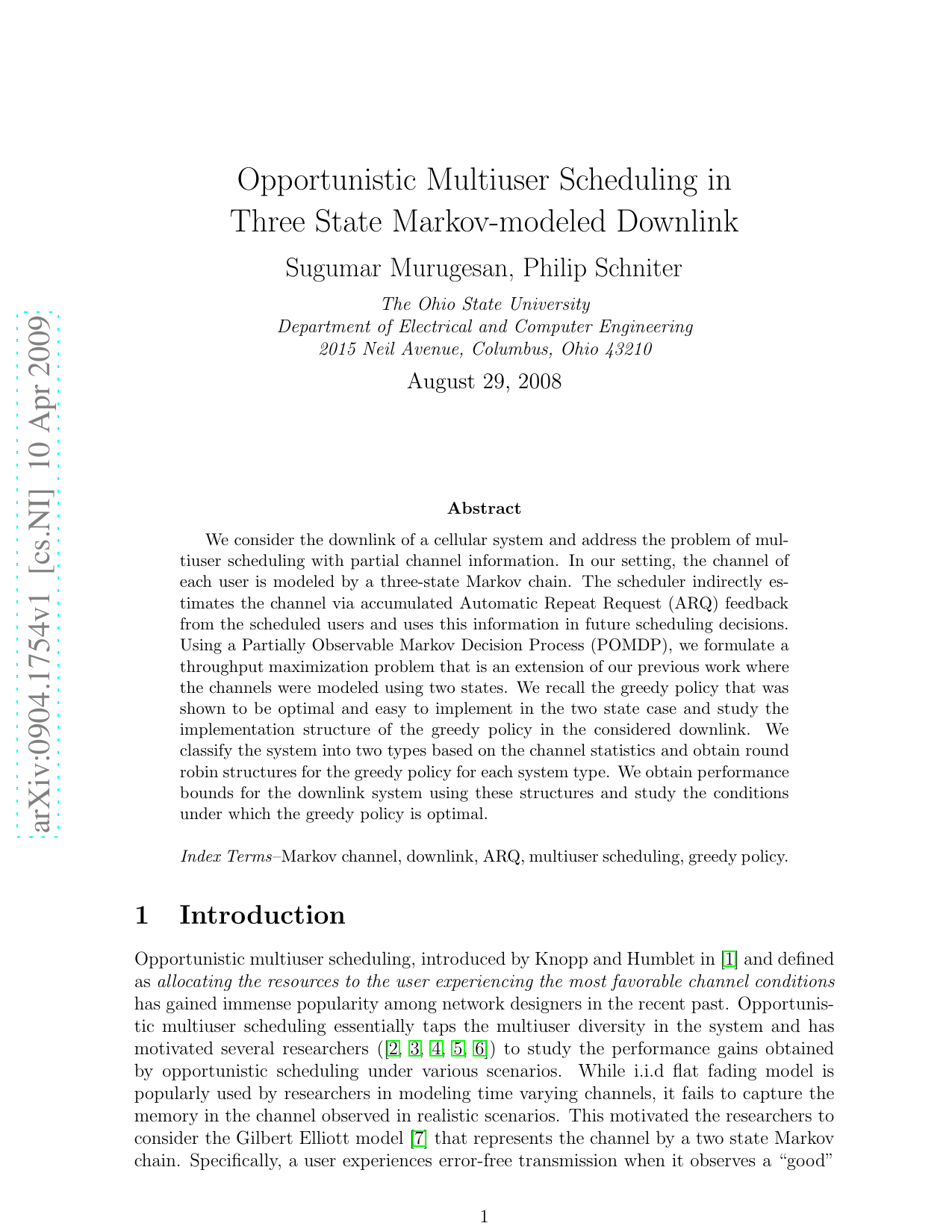

We consider downlink transmissions with 2 users. The channel between the base station and each user is modeled by a first-order, three-state Markov chain, i.i.d. across users. Time is slotted: the channel of each user remains fixed for a slot and evolves into another state in the next slot according to the Markov chain statistics. The time slots of all users are synchronized. The three-state Markov channel is characterized by a 3 × 3 probability transition matrix

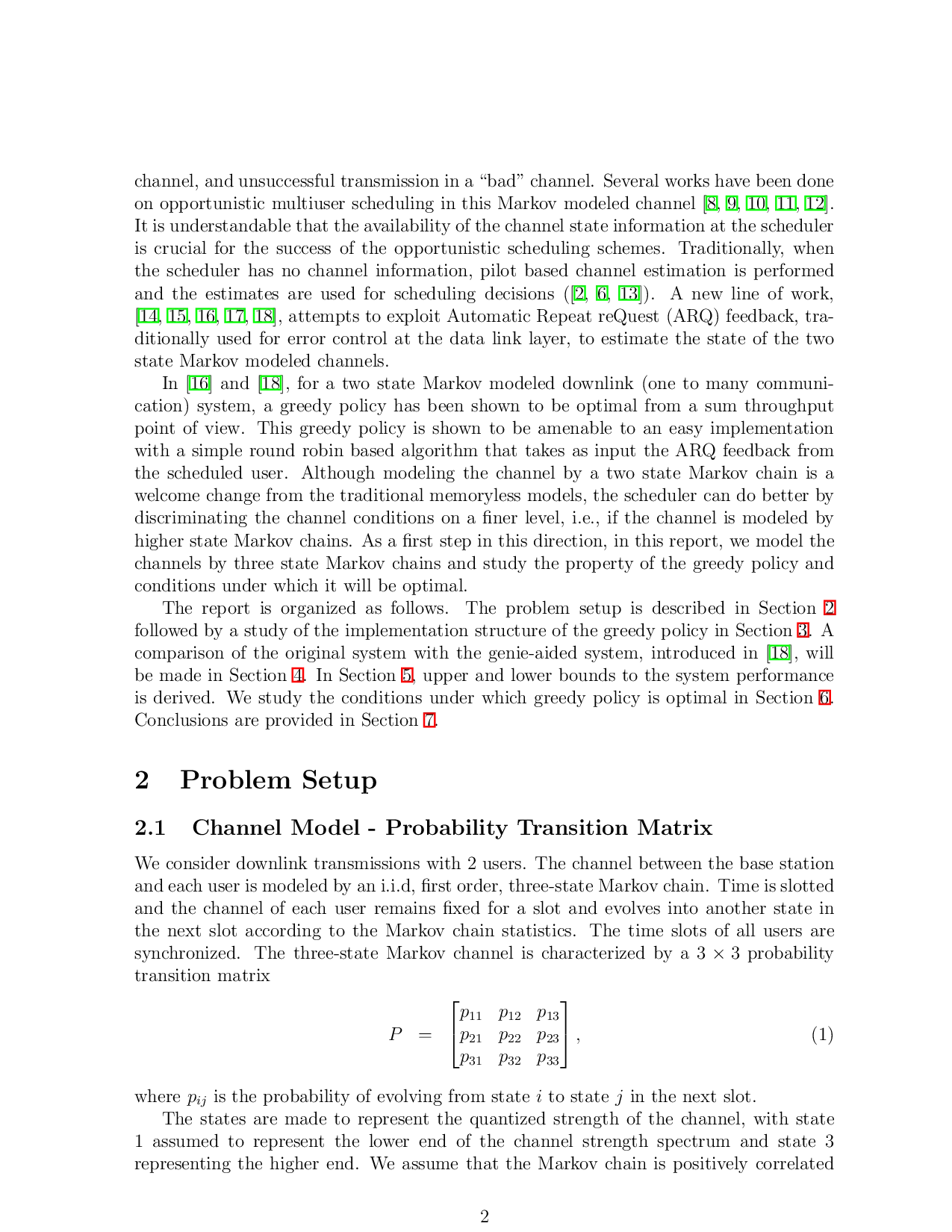

where $p_{ij}$ is the probability of evolving from state $i$ to state $j$ in the next slot. The states represent the quantized strength of the channel, with state 1 representing the lower end of the channel strength spectrum and state 3 the higher end. We assume that the Markov chain is positively correlated in time. Thus $p_{ii} \ge p_{ji}$ for $j \ne i$. Also, motivated by observation of realistic channels, we assume that the channel evolves smoothly across time. Thus $p_{21} \ge p_{31}$ and $p_{23} \ge p_{13}$. Finally, observing that state 3 represents a region of the channel strength spectrum that is not bounded from above, it is reasonable to assume $p_{32} \le p_{12}$. To summarize, the transition matrix elements are related as below:

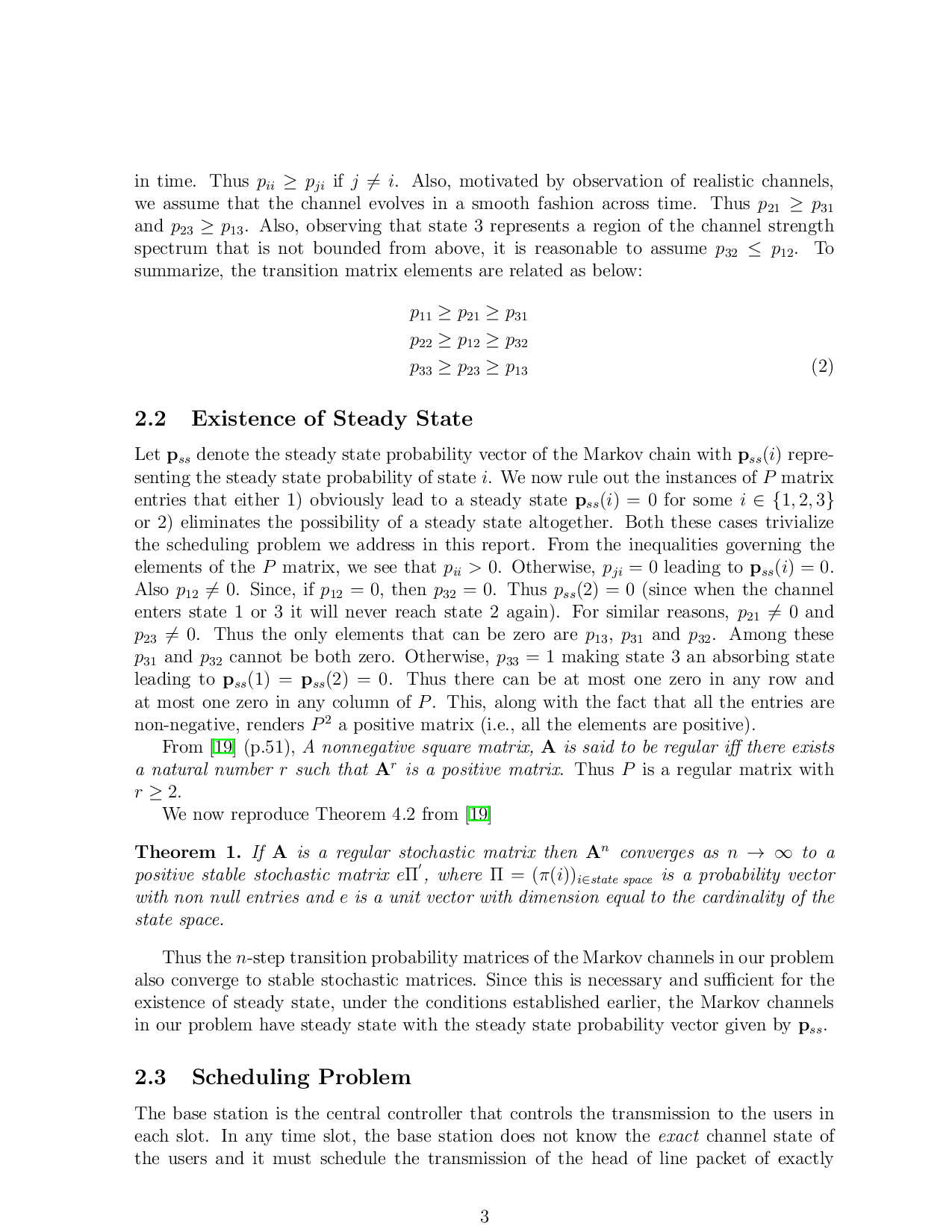
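The ordering assumptions stated in the text (positive correlation, smooth evolution, and $p_{32} \le p_{12}$) can be checked numerically for a candidate matrix. The following is a minimal sketch, not part of the original report: the function names `check_assumptions` and `stationary_distribution`, the tolerance values, and the numbers in `P_EXAMPLE` are illustrative assumptions. It also computes the long-run state probabilities by repeated multiplication, which is one simple way to spot degenerate matrices in which some state has zero long-run probability.

```python
# Hypothetical example matrix (values chosen only for illustration).
# P[i-1][j-1] is p_ij, the probability of moving from state i to state j.
P_EXAMPLE = [
    [0.60, 0.30, 0.10],
    [0.25, 0.50, 0.25],
    [0.05, 0.25, 0.70],
]


def check_assumptions(P, tol=1e-12):
    """Return a list of violated assumptions (empty if none are violated)."""
    violations = []

    # Each row must be a probability vector.
    for i, row in enumerate(P, start=1):
        if any(x < 0 for x in row) or abs(sum(row) - 1.0) > 1e-9:
            violations.append(f"row {i} is not a probability vector")

    def p(i, j):
        # 1-based access, matching the p_ij notation of the text.
        return P[i - 1][j - 1]

    # Positive correlation in time: p_ii >= p_ji for every j != i.
    for i in (1, 2, 3):
        for j in (1, 2, 3):
            if j != i and p(i, i) < p(j, i) - tol:
                violations.append(f"p{i}{i} < p{j}{i}")

    # Smooth evolution: p21 >= p31 and p23 >= p13.
    if p(2, 1) < p(3, 1) - tol:
        violations.append("p21 < p31")
    if p(2, 3) < p(1, 3) - tol:
        violations.append("p23 < p13")

    # State 3 is unbounded from above: p32 <= p12.
    if p(3, 2) > p(1, 2) + tol:
        violations.append("p32 > p12")

    return violations


def stationary_distribution(P, iters=10_000):
    """Approximate the long-run state probabilities by iterating pi <- pi P.

    Starts from the uniform distribution; a fixed iteration count is an
    assumption made for simplicity, not a convergence guarantee.
    """
    pi = [1.0 / 3.0] * 3
    for _ in range(iters):
        pi = [sum(pi[i] * P[i][j] for i in range(3)) for j in range(3)]
    return pi


if __name__ == "__main__":
    print(check_assumptions(P_EXAMPLE))
    print(stationary_distribution(P_EXAMPLE))
```

Note that passing the ordering checks does not by itself guarantee a useful chain: a matrix whose third row is `[0, 0, 1]` can satisfy every inequality and still concentrate all long-run probability on state 3.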

Let $p_{ss}$ denote the steady state probability vector of the Markov chain, with $p_{ss}(i)$ representing the steady state probability of state $i$. We now rule out the instances of $P$ matrix entries that either 1) obviously lead to a steady state with $p_{ss}(i) = 0$ for some $i \in \{1, 2, 3\}$ or 2) eliminate the possibility of a steady state altogether. Both these cases trivialize the scheduling problem we address in this report. From the inequalities governing the elements of the $P$ matrix, we see that $p_{ii} > 0$. Otherwise, $p_{ji} = 0$ for every $j \ne i$ (since $p_{ii} \ge p_{ji}$), leading to $p_{ss}(i) = 0$. Also $p_{12} \ne 0$, since if $p_{12} = 0$, then $p_{32} = 0$ (because $p_{32} \le p_{12}$). Thus $p_{ss}(2) = 0$ (since once the channel enters state 1 or 3 it will never reach state 2 again). For similar reasons, $p_{21} \ne 0$ and $p_{23} \ne 0$. Thus the only elements that can be zero are $p_{13}$, $p_{31}$ and $p_{32}$. Among these, $p_{31}$ and $p_{32}$ cannot both be zero. Otherwise, $p_{33} = 1$ making state 3 an absorbing

…(Full text truncated)…