Digital Instrumentation for the Radio Astronomy Community

Time-to-science is an important figure of merit for digital instrumentation serving the astronomical community. A digital signal processing (DSP) community is forming that uses shared hardware development, signal processing libraries, and instrument architectures to reduce development time of digital instrumentation and to improve time-to-science for a wide variety of projects. We suggest prioritizing technological development supporting the needs of this nascent DSP community. After outlining several instrument classes that are relying on digital instrumentation development to achieve new science objectives, we identify key areas where technologies pertaining to interoperability and processing flexibility will reduce the time, risk, and cost of developing the digital instrumentation for radio astronomy. These areas represent focus points where support of general-purpose, open-source development for a DSP community should be prioritized in the next decade. Contributors to such technological development may be centers of support for this DSP community, science groups that contribute general-purpose DSP solutions as part of their own instrumentation needs, or engineering groups engaging in research that may be applied to next-generation DSP instrumentation.

💡 Research Summary

The paper addresses a critical bottleneck in modern radio astronomy: the long “time‑to‑science” interval that separates the conception of an instrument from the delivery of scientific results. Historically, radio‑astronomy back‑ends have been built as bespoke solutions, each project commissioning its own analog front‑end, digital signal‑processing (DSP) hardware, and proprietary software stack. This approach leads to multi‑year development cycles, high risk, and duplicated effort across the community.

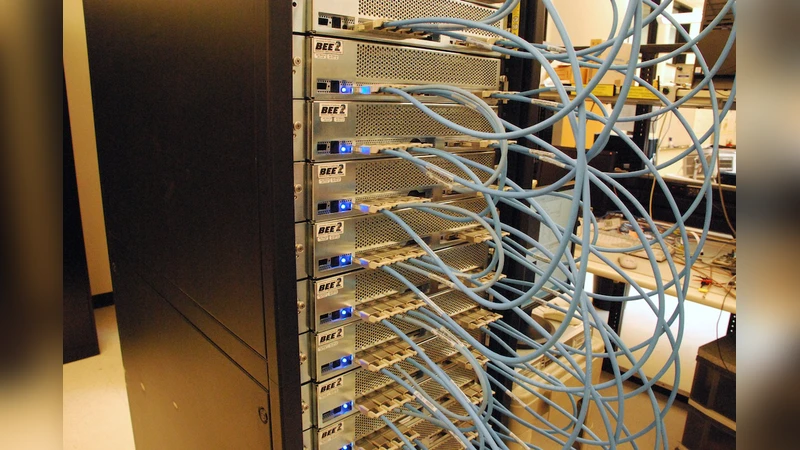

To overcome these challenges, the authors propose the formation of a dedicated DSP community that shares hardware platforms, signal‑processing libraries, and system architectures. By converging on common field‑programmable gate arrays (FPGAs), graphics processing units (GPUs), and application‑specific integrated circuits (ASICs), and by adopting open‑source DSP kernels (FFT, FIR/IIR filters, beamforming, real‑time radio‑frequency interference mitigation, machine‑learning classifiers, etc.), the community can dramatically reduce the engineering overhead for new projects.
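As a rough illustration of what a shared, general-purpose DSP kernel might look like, the sketch below implements two of the building blocks named above, an FIR filter and a Fourier-transform power spectrum, in plain Python. The function names `fir_filter` and `power_spectrum` are hypothetical and do not come from the paper or from any existing library; the transform is a naive O(N²) DFT chosen for readability, where a production back-end would use an optimized FFT on an FPGA or GPU.

```python
import cmath
import math


def fir_filter(samples, taps):
    """Direct-form FIR filter: y[n] = sum_k taps[k] * x[n - k], with x[n] = 0 for n < 0."""
    out = []
    for n in range(len(samples)):
        acc = 0.0
        for k, tap in enumerate(taps):
            if n - k >= 0:
                acc += tap * samples[n - k]
        out.append(acc)
    return out


def power_spectrum(samples):
    """Naive O(N^2) DFT power spectrum (illustrative stand-in for an FFT)."""
    n = len(samples)
    spectrum = []
    for k in range(n):
        bin_value = sum(
            x * cmath.exp(-2j * math.pi * k * i / n) for i, x in enumerate(samples)
        )
        spectrum.append(abs(bin_value) ** 2)
    return spectrum


# A test tone that falls exactly in bin 4 of a 32-point transform.
N = 32
tone = [math.cos(2 * math.pi * 4 * i / N) for i in range(N)]

# Smooth the tone with a short low-pass FIR, then locate the spectral peak
# of the unfiltered tone among the positive-frequency bins.
smoothed = fir_filter(tone, [0.25, 0.5, 0.25])
spectrum = power_spectrum(tone)
peak_bin = max(range(N // 2), key=lambda k: spectrum[k])
```

Keeping such kernels behind small, well-documented function signatures is one plausible way for several instruments to reuse the same tested code while swapping in faster implementations underneath.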

The paper identifies five key technology domains that must be prioritized to enable this collaborative model:

- Interoperability through hardware abstraction and virtualization – A hardware abstraction layer (HAL) and container‑based virtualization allow the same software stack to run on heterogeneous platforms, simplifying integration and maintenance.

- Processing flexibility via dynamic pipeline reconfiguration – Stream‑processing frameworks that support on‑the‑fly re‑routing of data enable rapid switching between observing modes, real‑time algorithm updates, and adaptive resource allocation.

- Accelerated development with automated code generation and CI/CD pipelines – High‑level synthesis (HLS), OpenCL, and Python‑to‑FPGA toolchains, coupled with continuous integration and testing, catch design errors early and shorten the deployment cycle.

- Metadata‑driven data management and sharing – Standardized data models (e.g., ASDM, HDF5) and a metadata‑centric data lake facilitate provenance tracking, multi‑institution collaboration, and reproducibility of results.

- Security and reliability through authentication and encryption – Embedding cryptographic primitives and secure boot mechanisms in the instrument firmware protects remote control channels and ensures data integrity.
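The first two domains in the list above, hardware abstraction and dynamic pipeline reconfiguration, can be sketched together in a few lines. This is a minimal illustration under stated assumptions, not the architecture the paper proposes: the classes `Backend`, `CpuBackend`, `SimulatedFpgaBackend`, and `Pipeline`, and the stage functions, are all hypothetical names invented here. The "FPGA" backend is only a software stand-in that mimics integer rounding.

```python
from abc import ABC, abstractmethod


class Backend(ABC):
    """Hypothetical hardware abstraction: every platform exposes the same calls."""

    name = "abstract"

    @abstractmethod
    def scale(self, block, gain):
        """Multiply every sample in a block by a gain."""


class CpuBackend(Backend):
    name = "cpu"

    def scale(self, block, gain):
        return [gain * x for x in block]


class SimulatedFpgaBackend(Backend):
    """Software stand-in for an accelerator; it only mimics integer rounding."""

    name = "fpga-sim"

    def scale(self, block, gain):
        return [float(round(gain * x)) for x in block]


class Pipeline:
    """Ordered list of named stages that can be swapped between data blocks."""

    def __init__(self, backend):
        self.backend = backend
        self.stages = []  # list of (name, callable) pairs

    def add_stage(self, name, func):
        self.stages.append((name, func))

    def replace_stage(self, name, func):
        """Swap one stage in place without rebuilding the pipeline."""
        for i, (stage_name, _) in enumerate(self.stages):
            if stage_name == name:
                self.stages[i] = (name, func)
                return
        raise KeyError(name)

    def process(self, block):
        for _, func in self.stages:
            block = func(self.backend, block)
        return block


def gain_stage(backend, block):
    return backend.scale(block, 2.0)


def clip_stage(backend, block):
    return [max(-1.0, min(1.0, x)) for x in block]


def passthrough_stage(backend, block):
    return block


pipeline = Pipeline(CpuBackend())
pipeline.add_stage("gain", gain_stage)
pipeline.add_stage("limit", clip_stage)
first = pipeline.process([0.25, 0.75])    # gain, then clip to [-1, 1]

# Reconfigure between blocks: drop the clipping behaviour.
pipeline.replace_stage("limit", passthrough_stage)
second = pipeline.process([0.25, 0.75])

# Retarget the same stages to a different (simulated) platform.
pipeline.backend = SimulatedFpgaBackend()
third = pipeline.process([0.3, 0.7])
```

Because the stages only talk to the `Backend` interface, the same pipeline definition runs unchanged on either platform, and replacing a stage touches a single list entry while the rest of the pipeline keeps its state.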

The authors illustrate the impact of these technologies with case studies from several flagship projects:

- SKA‑Low – Requires processing of terabits per second from thousands of antennas; benefits from a modular FPGA‑GPU hybrid back‑end and real‑time pipeline reconfiguration.

- ngVLA – Demands ultra‑wide bandwidth and high spectral resolution; gains from high‑performance DSP libraries and automated deployment pipelines.

- CHIME‑FRB and HIRAX – Focus on fast transient detection and multi‑beam formation; need machine‑learning‑based RFI excision and low‑latency beamforming algorithms.

Each case is examined in terms of bandwidth, latency, power budget, and algorithmic complexity, showing how a shared DSP ecosystem can reduce development time from years to months while lowering cost and risk.
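To give a feel for the bandwidth dimension, the raw digitized data rate of an array can be estimated as antennas × polarizations × sample rate × bits per sample. The sketch below works through that arithmetic. The function name `aggregate_rate_bps` is hypothetical, and the input values are placeholders chosen purely for illustration; they are not the specifications of SKA‑Low, ngVLA, CHIME, HIRAX, or any other instrument discussed in the paper.

```python
def aggregate_rate_bps(n_antennas, n_polarizations, sample_rate_hz, bits_per_sample):
    """Raw digitizer output in bits per second, before any data reduction."""
    return n_antennas * n_polarizations * sample_rate_hz * bits_per_sample


# Placeholder inputs (assumptions, not real instrument parameters):
# 1000 antennas, 2 polarizations, 800 MS/s sampling, 8-bit samples.
rate = aggregate_rate_bps(1000, 2, 800_000_000, 8)
rate_tbps = rate / 1e12  # convert to terabits per second
```

Even with these modest placeholder numbers the aggregate rate lands in the terabit-per-second range, which is why channelization, beamforming, and correlation are typically done in real time close to the digitizers.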

To sustain this ecosystem, the paper proposes three complementary organizational models:

- Dedicated support centers (e.g., NRAO, CSIRO, SKA regional offices) that provide shared test‑beds, documentation, and training.

- Science groups that contribute general‑purpose DSP solutions as part of their own instrument development, thereby enriching the communal code base.

- Engineering research labs (universities, industry partners) that push forward next‑generation ASICs, high‑speed interconnects, and novel signal‑processing algorithms.

When these models interact, they create a virtuous cycle of standardization, innovation, and knowledge transfer. The authors argue that prioritizing open‑source, modular, and standards‑based development will enable the radio‑astronomy community to respond rapidly to emerging scientific questions, such as probing the cosmic dawn, mapping neutral hydrogen across cosmic time, and detecting fast radio bursts with unprecedented sensitivity.

In conclusion, the paper calls for coordinated investment over the next decade in the identified technology pillars, emphasizing that a thriving DSP community will be the catalyst for a new era of data‑driven radio astronomy, where scientific insight is limited only by the sky, not by the time required to build the instruments that observe it.
