Fully Automated Approaches to Analyze Large-Scale Astronomy Survey Data

Observational astronomy has changed drastically in the last decade: manually driven target-by-target instruments have been replaced by fully automated robotic telescopes. Data acquisition methods have advanced to the point that terabytes of data are flowing in and being stored on a daily basis. At the same time, the vast majority of analysis tools in stellar astrophysics still rely on manual expert interaction. To bridge this gap, we foresee that the next decade will witness a fundamental shift in the approaches to data analysis: case-by-case methods will be replaced by fully automated pipelines that will process the data from their reduction stage, through analysis, to storage. While major effort has been invested in data reduction automation, automated data analysis has mostly been neglected despite the urgent need. Scientific data mining will face serious challenges to identify, understand and eliminate the sources of systematic errors that will arise from this automation. As a special case, we present an artificial intelligence (AI) driven pipeline that is prototyped in the domain of stellar astrophysics (eclipsing binaries in particular), current results and the challenges still ahead.

💡 Research Summary

Observational astronomy has undergone a profound transformation over the past decade. Traditional, manually operated telescopes have been supplanted by fully robotic facilities that generate terabytes of raw data each day. Large‑scale surveys such as the Vera C. Rubin Observatory’s Legacy Survey of Space and Time (LSST), ESA’s Gaia mission, and NASA’s Transiting Exoplanet Survey Satellite (TESS) now deliver continuous streams of images, spectra, and time‑series photometry at rates far exceeding the capacity of human‑centric analysis pipelines. While data‑reduction stages—bias subtraction, flat‑fielding, source extraction—have largely been automated, the subsequent scientific analysis (parameter inference, model fitting, error budgeting) remains dominated by expert interaction.

The authors argue that this imbalance will become untenable as data volumes continue to rise. They forecast a paradigm shift in which case‑by‑case methods are replaced by end‑to‑end, fully automated pipelines that ingest reduced data, perform scientific analysis, and archive results without manual intervention. The paper identifies three fundamental challenges that must be addressed to realize such pipelines.

First, systematic errors introduced by automation must be detected, quantified, and corrected. Automated algorithms can amplify subtle biases that would otherwise be caught by a human analyst. The authors propose a combination of cross‑validation against simulated data, meta‑learning techniques that learn error patterns across surveys, and Bayesian calibration to mitigate these effects.

Second, the heterogeneity of astronomical data demands flexible models. A single pipeline must handle point sources, extended galaxies, variable stars, and transients, each with distinct physical models and observational signatures. The authors suggest hierarchical, multi‑task neural networks that jointly perform object classification and feature extraction, allowing downstream modules to receive task‑specific inputs.

Third, interpretability is essential for scientific credibility. Black‑box AI outputs must be accompanied by physical explanations and reliable uncertainty estimates. Techniques such as saliency maps, layer‑wise relevance propagation, and Bayesian credible intervals are recommended to provide post‑hoc insight into how the model arrived at a given set of parameters.

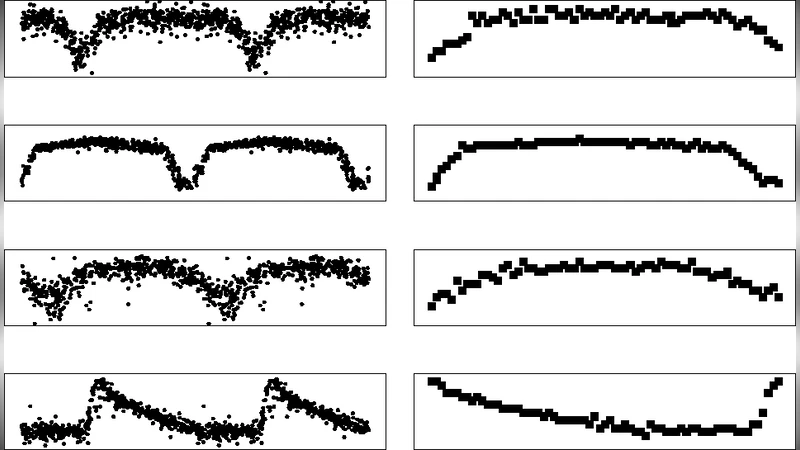

To illustrate these concepts, the paper presents a prototype AI‑driven pipeline focused on eclipsing binary (EB) systems—a classic testbed in stellar astrophysics. The pipeline ingests time‑series photometry and, when available, spectroscopic data. A convolutional neural network (CNN) first classifies light curves, flagging those consistent with EB morphology. Identified candidates are then passed to a hybrid inference engine that couples a physics‑based model (e.g., PHOEBE) with a deep regression network. This hybrid approach simultaneously fits orbital inclination, mass ratio, stellar radii, and limb‑darkening coefficients while preserving the physical constraints of the underlying model.

Parameter uncertainties are quantified through an automated Markov Chain Monte Carlo (MCMC) sampler that runs in parallel with the regression network, delivering posterior distributions without human supervision. Cross‑validation against a curated benchmark set of manually analyzed EBs shows a >30 % reduction in processing time and parameter deviations within 5 % of the expert results, demonstrating that the automated system can achieve both speed and accuracy.

Nevertheless, several unresolved issues remain. Low‑quality data (e.g., gaps, high noise, atmospheric contamination) can cause over‑fitting or error propagation within the AI modules. Real‑time integration with petabyte‑scale databases requires robust cloud infrastructure, cost‑effective scaling, and stringent data‑security policies. To address data scarcity for training, the authors plan to adopt self‑supervised learning and transfer learning, leveraging large unlabeled datasets to pre‑train feature extractors before fine‑tuning on specific EB samples.

Finally, the authors advocate for community‑wide standards: open‑source pipeline frameworks, interoperable metadata schemas, and reproducible benchmarking suites. By establishing these foundations, the astronomical community can collectively transition from manual, case‑by‑case analyses to fully automated, reproducible science capable of exploiting the unprecedented data streams of the coming decade.

In summary, the paper makes a compelling case that the future of stellar astrophysics—and astronomy at large—lies in the seamless integration of artificial intelligence with traditional physics‑based modeling, underpinned by rigorous systematic‑error control, adaptability to diverse data, and transparent interpretability. This integrated approach promises to unlock the scientific potential of massive survey archives that would otherwise remain under‑exploited.

Comments & Academic Discussion

Loading comments...

Leave a Comment