We show that it is possible to significantly improve the accuracy of a general class of histogram queries while satisfying differential privacy. Our approach carefully chooses a set of queries to evaluate, and then exploits consistency constraints that should hold over the noisy output. In a post-processing phase, we compute the consistent input most likely to have produced the noisy output. The final output is differentially-private and consistent, but in addition, it is often much more accurate. We show, both theoretically and experimentally, that these techniques can be used for estimating the degree sequence of a graph very precisely, and for computing a histogram that can support arbitrary range queries accurately.

Deep Dive into Boosting the Accuracy of Differentially-Private Histograms Through Consistency.

We show that it is possible to significantly improve the accuracy of a general class of histogram queries while satisfying differential privacy. Our approach carefully chooses a set of queries to evaluate, and then exploits consistency constraints that should hold over the noisy output. In a post-processing phase, we compute the consistent input most likely to have produced the noisy output. The final output is differentially-private and consistent, but in addition, it is often much more accurate. We show, both theoretically and experimentally, that these techniques can be used for estimating the degree sequence of a graph very precisely, and for computing a histogram that can support arbitrary range queries accurately.

Recent work in differential privacy [9] has shown that it is possible to analyze sensitive data while ensuring strong privacy guarantees. Differential privacy is typically achieved through random perturbation: the analyst issues a query and receives a noisy answer. To ensure privacy, the noise is carefully calibrated to the sensitivity of the query. Informally, query sensitivity measures how much a small change to the database-such as adding or removing a person's private record-can affect the query answer. Such query mechanisms are simple, efficient, and often quite accurate. In fact, one mechanism has recently been shown to be optimal for a single counting query [10]-i.e., there is no better noisy answer to return under the desired privacy objective.

However, analysts typically need to compute multiple statistics on a database. Differentially private algorithms extend nicely to a set of queries, but there can be difficult trade-offs among alternative strategies for answering a workload of queries. Consider the analyst of a private student database who requires answers to the following queries: the total number of students, xt, the number of students xA, xB, xC , xD, xF receiving grades A, B, C, D, and F respectively, and the number of passing students, xp (grade D or

Constrained Inference

Smooth Sensitivity 1 temp

Proving results from [1] and applying to degree sequence. Lemma 1. Let A be an algorithm that on input x outputs A(x) = f (x) + S(x) α Z. For any inputs x, y, we have:

where z x (s) = s-f (x) S(x)/α and Z x (S) = {z x (s) | s ∈ S}. And

where z y (s) = S(x) S(y) z x (s) + f (x)-f (y) S(x)/α = s-f (y) S(y)/α and Z y (S) = {z y (s) | s ∈ S}. In shorthand, Z x and Z y are related as:

Z y (S) = σ(Z x (S) + ∆)

where σ = S(x) S(y) and ∆ = f (x)-f (y) S(x)/α .

Proposition 1. Let Z be a Laplace random variable. Let c, δ > 0 be fixed. For any ∆ such that |∆| ≤ c, the following sliding property holds:

For any σ such that σ ≤ 1 + c/ln 1 δ , the following dilation property holds: XXXXX For dilation, need to prove it but I know that there is some set Z such that for the dilation property to hold, it must be that σ ≤ 1 + c/ ln 1 δ . But it may be the case that it is necessary for σ < 1 + c/ ln 1 δ to be true for all Z.

Step 1

Step 2

Step 3

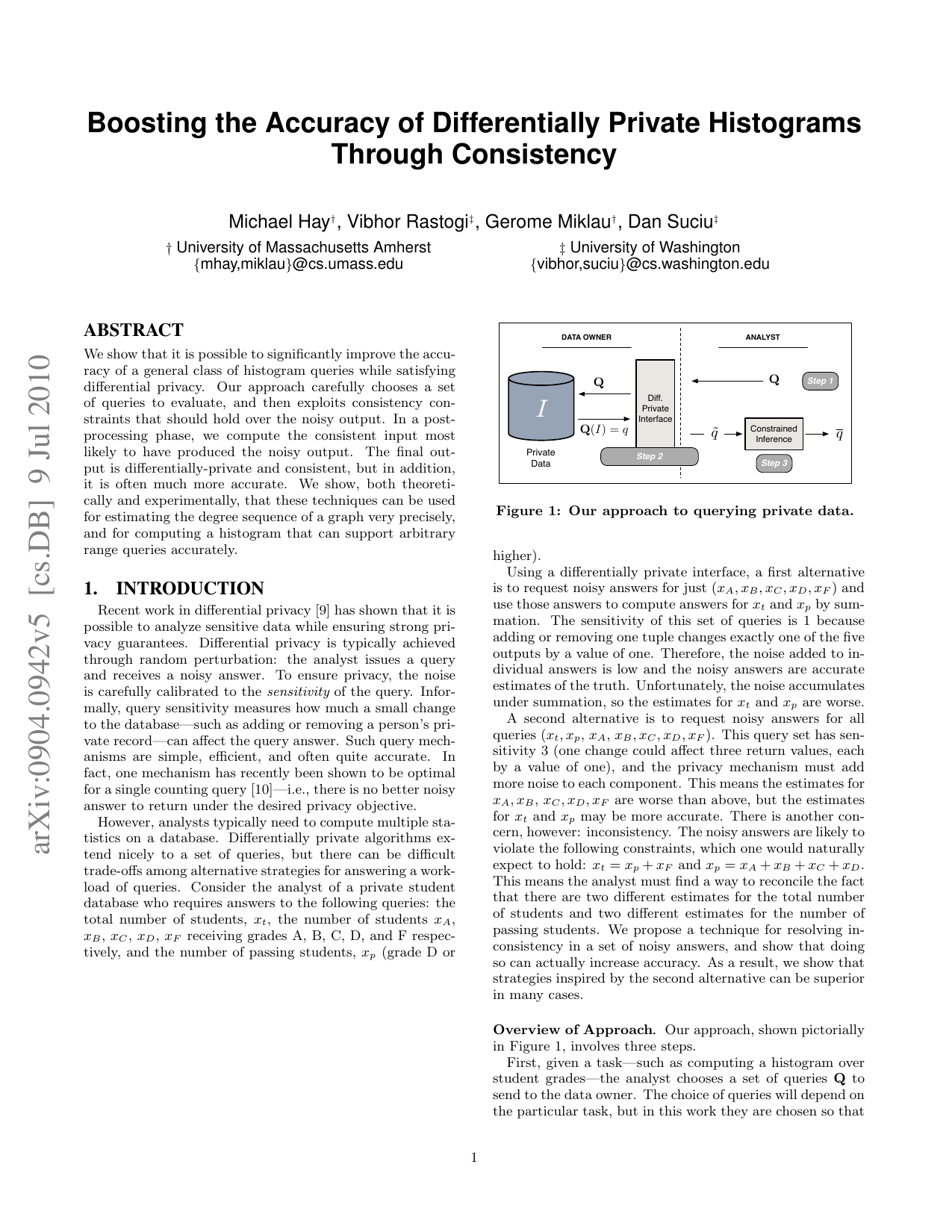

Figure 1: Our approach to querying private data.

Using a differentially private interface, a first alternative is to request noisy answers for just (xA, xB, xC , xD, xF ) and use those answers to compute answers for xt and xp by summation. The sensitivity of this set of queries is 1 because adding or removing one tuple changes exactly one of the five outputs by a value of one. Therefore, the noise added to individual answers is low and the noisy answers are accurate estimates of the truth. Unfortunately, the noise accumulates under summation, so the estimates for xt and xp are worse.

A second alternative is to request noisy answers for all queries (xt, xp, xA, xB, xC , xD, xF ). This query set has sensitivity 3 (one change could affect three return values, each by a value of one), and the privacy mechanism must add more noise to each component. This means the estimates for xA, xB, xC , xD, xF are worse than above, but the estimates for xt and xp may be more accurate. There is another concern, however: inconsistency. The noisy answers are likely to violate the following constraints, which one would naturally expect to hold: xt = xp + xF and xp = xA + xB + xC + xD. This means the analyst must find a way to reconcile the fact that there are two different estimates for the total number of students and two different estimates for the number of passing students. We propose a technique for resolving inconsistency in a set of noisy answers, and show that doing so can actually increase accuracy. As a result, we show that strategies inspired by the second alternative can be superior in many cases.

Overview of Approach. Our approach, shown pictorially in Figure 1, involves three steps.

First, given a task-such as computing a histogram over student grades-the analyst chooses a set of queries Q to send to the data owner. The choice of queries will depend on the particular task, but in this work they are chosen so that In the second step, the data owner answers the set of queries, using a standard differentially-private mechanism [9], as follows. The queries are evaluated on the private database and the true answer Q(I) is computed. Then random independent noise is added to each answer in the set, where the data owner scales the noise based on the sensitivity of the query set. The set of noisy answers q is sent to the analyst. Importantly, because this step is unchanged from [9], it offers the same differential privacy guarantee.

The above step ensures privacy, but the set of noisy answers returned may be inconsistent. In the third and final step, the analyst post-processes the set of noisy answers to resolve inconsistencies among them. We propose a novel approach for

…(Full text truncated)…

This content is AI-processed based on ArXiv data.