Mykyta the Fox and networks of language

The results of quantitative analysis of word distribution in two fables in Ukrainian by Ivan Franko: “Mykyta the Fox” and “Abu-Kasym’s slippers” are reported. Our study consists of two parts: the analysis of frequency-rank distributions and the application of complex networks theory. The analysis of frequency-rank distributions shows that the text sizes are enough to observe statistical properties. The power-law character of these distributions (Zipf’s law) holds in the region of rank variable r=20 - 3000 with an exponent $\alpha\simeq 1$. This substantiates the choice of the above texts to analyse typical properties of the language complex network on their basis. Besides, an applicability of the Simon model to describe non-asymptotic properties of word distributions is evaluated. In describing language as a complex network, usually the words are associated with nodes, whereas one may give different meanings to the network links. This results in different network representations. In the second part of the paper, we give different representations of the language network and perform comparative analysis of their characteristics. Our results demonstrate that the language network of Ukrainian is a strongly correlated scale-free small world. Empirical data obtained may be useful for theoretical description of language evolution.

💡 Research Summary

The paper presents a two‑part quantitative study of Ukrainian language using two classic fables by Ivan Franko – “Mykyta the Fox” and “Abu‑Kasym’s Slippers”. In the first part the authors examine the word frequency‑rank (Zipf) distribution. The texts contain 15 426 and 8 002 tokens respectively, with vocabularies of 3 563 and 2 392 distinct types, which are sufficiently large for statistical analysis. Plotting frequency f(r) versus rank r shows a clear power‑law region from r≈20 to r≈3000 with an exponent α≈1.00±0.03; the goodness‑of‑fit χ²/df≈0.002 confirms the Zipf law holds for these Ukrainian narratives. For low ranks (r<20) the distribution deviates from a pure power law, and the authors demonstrate that the classic Simon model (new‑word probability p≈0.85, reinforcement parameter β≈0.15) reproduces these non‑asymptotic features, as illustrated in Figures 2 and 3.

The second part treats language as a complex network. Words are nodes; links are defined in four distinct representations:

- L‑space – words co‑occurring within a sliding window of radius R inside the same sentence. R=1 corresponds to immediate neighbours; R=R_max connects all words of a sentence.

- B‑space – a bipartite graph linking words to the sentences in which they appear.

- P‑space – a projection of B‑space where all words belonging to the same sentence form a complete subgraph.

- C‑space – sentences are linked if they share at least one common word.

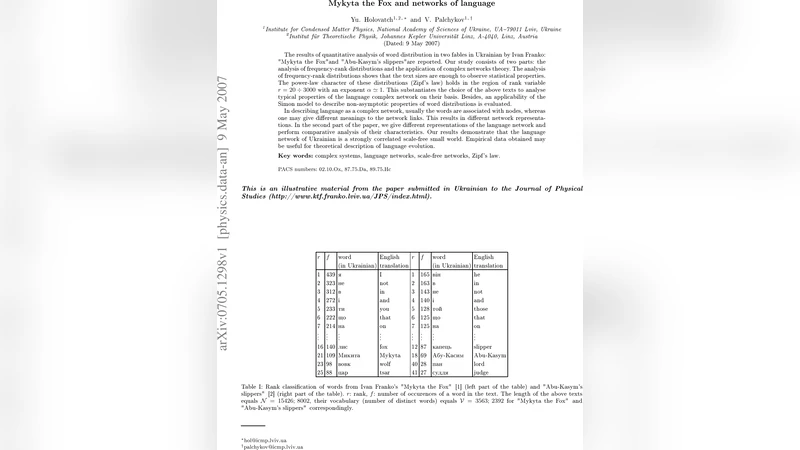

For each representation the authors compute standard network metrics: number of vertices V, number of edges M, average degree ⟨k⟩, maximum degree k_max, degree‑distribution exponent γ (P(k)∼k^‑γ), cumulative exponent γ_int, average clustering coefficient ⟨C⟩, clustering of an equivalent Erdős‑Rényi random graph C_rand, and average shortest‑path length ⟨ℓ⟩. Results for L‑space are summarized in Table I. With R=1, ⟨k⟩≈6, k_max≈228, γ≈1.9, ⟨C⟩≈0.17, and ⟨ℓ⟩≈5.2. When R is increased to R_max (the full sentence length), ⟨k⟩ rises to ≈48, k_max≈1 134, γ remains near 2.0, ⟨C⟩ jumps to ≈0.84, and ⟨ℓ⟩ drops to ≈1.9. The degree distribution follows a scale‑free power law across all R values, and the clustering coefficient is several times larger than that of a random graph of the same size, indicating strong local correlations. The short average path length together with high clustering confirms the small‑world nature of the Ukrainian language network. Similar scale‑free exponents and elevated clustering are observed in P‑space and C‑space, showing that the small‑world, scale‑free topology is robust to the choice of link definition.

When the two fables are merged, V and M increase proportionally, but the exponents γ, the clustering ratio ⟨C⟩/C_rand, and the normalized path length remain essentially unchanged, demonstrating that the observed properties are not artifacts of text length.

The authors argue that these empirical findings provide a solid basis for theoretical models of language evolution. The Simon model captures the early‑stage word‑introduction dynamics, while the emergence of a scale‑free, small‑world network reflects self‑organized growth mechanisms akin to those found in other complex systems (e.g., the Internet, biological networks). The paper suggests future work should extend the analysis to larger corpora, compare multiple languages, and test dynamic growth models (e.g., preferential attachment with rewiring) against the measured network statistics. Such extensions could illuminate universal principles governing lexical and syntactic organization across human languages.

Comments & Academic Discussion

Loading comments...

Leave a Comment