Dynamic Computation of Network Statistics via Updating Schema

In this paper we derive an updating scheme for calculating important network statistics such as degree and clustering coefficient. The aim is to reduce the amount of computation needed to track the evolving behavior of large networks and, more importantly, to provide efficient methods for potential use in modeling the evolution of networks. Using the updating scheme, the network statistics of large evolving networks can be computed and updated easily, and much faster than by recalculating them from scratch after every change. The update formulas can also be used to determine which edge or node will lead to the extremal change in a network statistic, providing a way of predicting or designing evolution rules for networks.

💡 Research Summary

The paper “Dynamic Computation of Network Statistics via Updating Schema” addresses a fundamental scalability problem in network science: how to keep key structural metrics up‑to‑date in large, rapidly evolving graphs without recomputing them from scratch at every time step. Traditional approaches repeatedly scan the entire adjacency structure to recalculate degree distributions, clustering coefficients, average shortest‑path lengths, connectivity, and related quantities. When the number of vertices and edges reaches millions, such full recomputation becomes prohibitively expensive for real‑time monitoring, online decision making, or simulation of network evolution.

To overcome this bottleneck, the authors propose an “updating schema”: a collection of closed‑form incremental formulas together with efficient data structures that allow each metric to be revised locally whenever a single edge or node is added, removed, or modified. The central insight is that most structural changes affect only a limited subset of the graph (the “impact region”), and that the contribution of that region to the global metric can be expressed analytically. By focusing computation on this region, the cost per update drops from O(|V| + |E|) (full scan) to O(1) for purely local measures and to O(min{deg(u), deg(v)}) or O(|Δ|) for slightly more global quantities, where Δ denotes the impact region and |Δ| its size.

The paper first derives the simplest case – degree updates. Adding an edge (u, v) increments the degree of u and v by one and raises the sum of all degrees by two; removing an edge does the opposite. These operations are constant‑time and require only a pair of integer updates.
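As a minimal sketch of the constant‑time degree update described above, the following Python class keeps a set‑based adjacency structure and a running degree sum. The class name `DegreeTracker` and its methods are illustrative assumptions, not names taken from the paper, and the graph is assumed to be simple and undirected.

```python
from collections import defaultdict


class DegreeTracker:
    """Hypothetical helper: maintains degrees and their sum under edge updates."""

    def __init__(self):
        self.adj = defaultdict(set)  # node -> set of neighbors
        self.degree_sum = 0          # sum of all degrees, always 2 * |E|

    def add_edge(self, u, v):
        # Ignore self-loops and duplicate edges (simple-graph assumption).
        if u == v or v in self.adj[u]:
            return False
        self.adj[u].add(v)
        self.adj[v].add(u)
        self.degree_sum += 2  # each endpoint gains one
        return True

    def remove_edge(self, u, v):
        if v not in self.adj.get(u, ()):
            return False
        self.adj[u].discard(v)
        self.adj[v].discard(u)
        self.degree_sum -= 2  # each endpoint loses one
        return True

    def degree(self, u):
        return len(self.adj.get(u, ()))

    def mean_degree(self):
        # Counts every node that has ever had an edge, including nodes
        # whose degree has since dropped back to zero.
        n = len(self.adj)
        return self.degree_sum / n if n else 0.0
```

Each update touches only the two endpoint sets and one integer, so the cost does not depend on the size of the graph (assuming average‑case constant‑time set operations).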

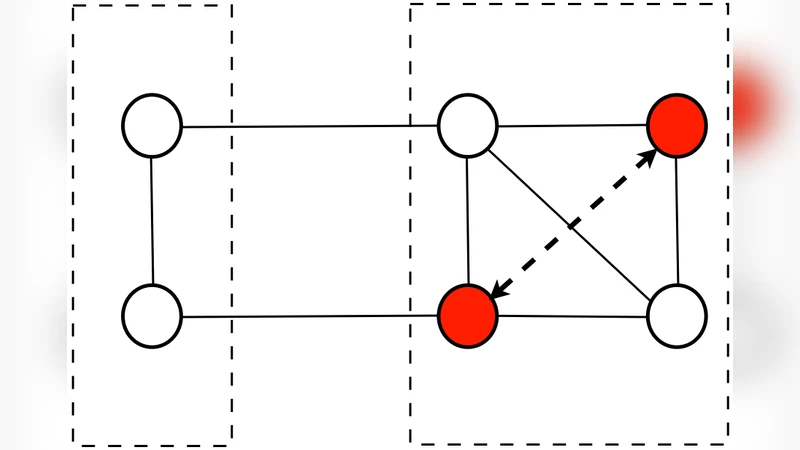

Next, the authors tackle the clustering coefficient, which depends on the number of triangles in the graph. They show that an edge insertion creates a triangle for each common neighbor of its endpoints, and an edge deletion destroys exactly those triangles. By maintaining adjacency lists together with hash‑based neighbor‑intersection queries, the number of newly formed or destroyed triangles can be counted in O(min{deg(u), deg(v)}) time. The global clustering coefficient, defined as three times the number of triangles divided by the number of connected triples, can then be updated with a simple arithmetic adjustment.
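The triangle bookkeeping above can be sketched in Python as follows. The sketch assumes a simple undirected graph and counts connected triples as the sum over nodes of C(deg, 2), so inserting an edge (u, v) adds deg(u) + deg(v) triples, with degrees taken before the insertion. The class `ClusteringTracker` and its method names are hypothetical and serve only to illustrate the idea.

```python
class ClusteringTracker:
    """Hypothetical helper: incremental global clustering coefficient."""

    def __init__(self):
        self.adj = {}        # node -> set of neighbors
        self.triangles = 0   # number of triangles in the graph
        self.triples = 0     # connected triples: sum of C(deg, 2) over nodes

    def _common(self, u, v):
        # Iterate over the smaller neighbor set: O(min(deg(u), deg(v))).
        a, b = self.adj[u], self.adj[v]
        if len(a) > len(b):
            a, b = b, a
        return sum(1 for w in a if w in b)

    def add_edge(self, u, v):
        nu = self.adj.setdefault(u, set())
        nv = self.adj.setdefault(v, set())
        if u == v or v in nu:
            return
        # One new triangle per common neighbor of the endpoints.
        self.triangles += self._common(u, v)
        # C(d + 1, 2) - C(d, 2) = d for each endpoint (old degrees).
        self.triples += len(nu) + len(nv)
        nu.add(v)
        nv.add(u)

    def remove_edge(self, u, v):
        if v not in self.adj.get(u, ()):
            return
        nu, nv = self.adj[u], self.adj[v]
        nu.remove(v)
        nv.remove(u)
        # Exactly the triangles through (u, v) are destroyed.
        self.triangles -= self._common(u, v)
        # C(d, 2) - C(d - 1, 2) = d - 1, i.e. the degree after removal.
        self.triples -= len(nu) + len(nv)

    def global_clustering(self):
        return 3 * self.triangles / self.triples if self.triples else 0.0
```

For example, building the triangle 1-2-3 yields a coefficient of 1.0, and attaching a pendant node 4 to node 3 lowers it to 3/5, which matches a direct count of one triangle and five connected triples.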

For more global metrics such as the average shortest‑path length, the authors introduce the concept of an “affected pair set”. When an edge is added, only vertex pairs for which a route through the new edge is shorter than their previous shortest path can see a reduction; similarly, an edge deletion can increase distances only for pairs whose shortest paths relied on the removed edge. By performing a bounded breadth‑first search from the endpoints and updating distance tables only for vertices reached within the affected radius, the algorithm limits work to O(|Δ|), where Δ is the affected pair set and |Δ| is typically far smaller than |V|². The paper also discusses dynamic connectivity using a Union‑Find structure augmented with Euler‑Tour trees, enabling O(log |V|) amortized time for edge insertions and deletions while preserving component information.
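To make the edge‑insertion case concrete, the sketch below uses the standard identity for unweighted undirected graphs: after adding (u, v), the new distance between x and y is the minimum of the old distance, d(x, u) + 1 + d(v, y), and d(x, v) + 1 + d(u, y). It operates on a stored all‑pairs table rather than the bounded breadth‑first search the summary mentions, so it is an illustration of the affected‑pair idea under that assumption and not the paper's procedure. The function name `insert_edge_update` and the dict‑of‑dicts layout are hypothetical.

```python
import math


def insert_edge_update(dist, u, v):
    """Update all-pairs hop distances in place after inserting edge (u, v).

    dist: symmetric dict-of-dicts of distances on the old graph, with
    math.inf for unreachable pairs. Returns the number of ordered pairs
    whose distance decreased (the size of the affected pair set).
    """
    nodes = list(dist)
    # Snapshot old distances to the endpoints before mutating the table.
    du = {x: dist[x][u] for x in nodes}
    dv = {x: dist[x][v] for x in nodes}
    changed = 0
    for x in nodes:
        # By the triangle inequality, x can gain only if the new edge
        # gives it a strictly shorter route to one of the endpoints.
        if du[x] + 1 >= dv[x] and dv[x] + 1 >= du[x]:
            continue
        for y in nodes:
            via_new_edge = min(du[x] + 1 + dv[y], dv[x] + 1 + du[y])
            if via_new_edge < dist[x][y]:
                dist[x][y] = via_new_edge
                changed += 1
    return changed
```

On the path 0-1-2-3-4, inserting the edge (0, 4) shortens only the pairs (0, 4), (0, 3), and (1, 4) and their reverses; node 2 is skipped entirely because it is equidistant from both endpoints.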

A particularly novel contribution is the “extremal change prediction” application. By inverting the incremental formulas, the authors can estimate which prospective edge addition or removal would produce the largest increase or decrease in a chosen metric. This capability is useful for network design (e.g., adding links to maximize robustness or information diffusion) and for adversarial scenarios (e.g., identifying the most damaging edge to cut in order to fragment a network). The paper presents a greedy search algorithm that evaluates candidate edges using the incremental formulas, achieving near‑optimal results with far less computational effort than exhaustive simulation.
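One way to picture the candidate evaluation is a single greedy step that scores each prospective edge by the change it would cause in the global clustering coefficient, using only the incremental quantities (common neighbors and endpoint degrees). The summary does not spell out the paper's search procedure, so the function below, its name `best_edge_to_add`, and its argument layout are assumptions made for illustration.

```python
def best_edge_to_add(adj, triangles, triples, candidates):
    """Return (edge, gain) for the candidate that most raises clustering.

    adj: dict mapping node -> set of neighbors (simple undirected graph).
    triangles, triples: current triangle and connected-triple counts.
    candidates: iterable of (u, v) pairs to evaluate; the graph itself
    is never modified.
    """
    current = 3 * triangles / triples if triples else 0.0
    best, best_gain = None, float("-inf")
    for u, v in candidates:
        nu, nv = adj.get(u, set()), adj.get(v, set())
        if u == v or v in nu:
            continue  # skip self-loops and edges that already exist
        new_triangles = triangles + len(nu & nv)    # one per common neighbor
        new_triples = triples + len(nu) + len(nv)   # old endpoint degrees
        new_value = 3 * new_triangles / new_triples if new_triples else 0.0
        gain = new_value - current
        if gain > best_gain:
            best, best_gain = (u, v), gain
    return best, best_gain
```

Because each candidate is scored from local information, no trial insertion or full recomputation is needed; ties are resolved in favor of the first candidate encountered.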

Experimental validation is performed on both synthetic benchmarks (Erdős–Rényi and Barabási–Albert graphs of up to 10⁶ nodes) and real‑world datasets (Twitter follower graph, Facebook friendship network, and a citation network). The authors measure runtime, memory overhead, and numerical error relative to full recomputation. Results show speedups ranging from 15× to over 80×, especially for clustering coefficient and average path length updates, while maintaining errors below 0.1 % – essentially negligible for most analytical purposes. Memory consumption remains comparable to baseline adjacency storage because the additional structures (neighbor hash sets, distance caches, component trees) are lightweight.

The paper acknowledges several limitations. The current schema assumes undirected, unweighted graphs; extending the formulas to weighted edges, directed arcs, or multilayer networks would require additional derivations. Moreover, the impact‑region approach may degrade in dense graphs where min{deg(u), deg(v)} approaches |V|, though the authors argue that many real‑world networks are sparse. Finally, the authors outline future work on distributed implementations (e.g., using Apache Flink or Spark GraphX) and on streaming scenarios where batches of updates arrive continuously.

In summary, this work provides a rigorous mathematical foundation and practical algorithms for dynamic network analytics. By converting global recomputation problems into a series of localized, incremental updates, it enables real‑time monitoring, rapid simulation of evolutionary processes, and informed intervention strategies in large‑scale networks. The updating schema thus represents a significant step forward for both theoretical research on evolving graphs and for applied domains such as social media analysis, epidemiology, infrastructure resilience, and cyber‑security.
