Visual Conceptualizations and Models of Science

This Journal of Informetrics special issue aims to improve our understanding of the structure and dynamics of science by reviewing and advancing existing conceptualizations and models of scholarly activity. Several of these conceptualizations and models have visual manifestations that support the combination and comparison of theories and approaches developed in different disciplines of science. We first discuss challenges facing a theoretically grounded and practically useful science of science and provide a brief chronological review of relevant work. We then present three example conceptualizations of science that attempt to provide frameworks for the comparison and combination of existing approaches, theories, laws, and measurements. Finally, we discuss the contributions of and interlinkages among the eight papers included in this issue. Each paper makes a unique contribution towards conceptualizations and models of science and roots this contribution in a review of and comparison with existing work.

💡 Research Summary

This special issue of the Journal of Informetrics sets out to deepen our understanding of the structure and dynamics of science by reviewing, visualizing, and advancing existing conceptualizations and models of scholarly activity. The authors begin by highlighting a central paradox in the field of “science of science”: a wealth of quantitative and qualitative models exists (ranging from citation-network analyses and agent-based simulations of knowledge diffusion to institutional and policy-driven frameworks), yet these efforts often remain siloed within disciplinary traditions. Consequently, the field lacks a unified theoretical foundation that can both explain observed regularities and guide practical interventions.

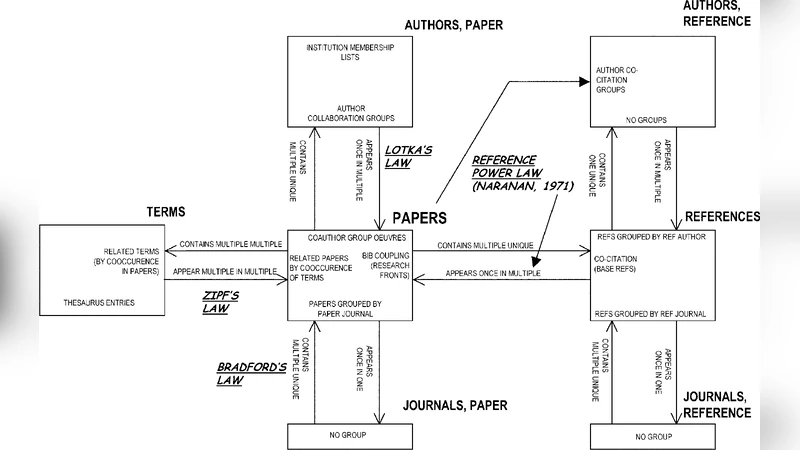

To address this gap, the paper first catalogues the major families of models that have shaped the study of science. Bibliometric and network‑science approaches focus on structural relationships among papers, authors, and institutions; sociological perspectives emphasize norms, social capital, and collective behavior; economic and innovation‑studies models stress funding flows, market mechanisms, and the emergence of breakthrough technologies. For each family, the authors extract core variables, underlying assumptions, and typical analytical techniques, and then translate these into a series of visual schematics. By doing so, they reveal that many seemingly disparate models share common building blocks—nodes, edges, temporal dynamics, and exogenous shocks—making them amenable to integration.

A central methodological contribution is the “Theory‑Data‑Visualization Triangle,” which the authors propose as a guiding framework for future research. In this triangle, theory supplies testable hypotheses, data provides the empirical substrate, and visualization acts as the connective tissue that renders complex relationships intelligible, facilitates hypothesis refinement, and supports communication with policymakers and broader audiences. The paper showcases three concrete visualization strategies: (1) dynamic, time‑layered network graphs that capture the evolution of scholarly communities; (2) multi‑layer maps that simultaneously display author, institution, and policy dimensions; and (3) interactive dashboards that allow users to explore simulation outcomes and sensitivity analyses in real time.

Building on this methodological foundation, the authors present a chronological review of the field from the 1960s to the present. They trace a trajectory that moves from early attempts to identify “laws of science” (e.g., Lotka’s law, Price’s model of exponential growth) through the network‑expansion era of the 1990s, to the current big‑data and machine‑learning driven phase where massive open‑access repositories, altmetrics, and policy documents are mined for signals of scientific change. Each epoch is illustrated with a timeline network that links seminal papers, data sources, and methodological breakthroughs, making clear how ideas have diffused across disciplinary borders.
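The two early regularities named above can be written down compactly. Lotka's law says the number of authors with n papers falls off roughly as 1/n^a, with a close to 2, and Price's growth model treats the size of the literature as N(t) = N0 * exp(r * t). The following is a minimal Python sketch of both, not code from the special issue; the function names and the synthetic counts in the usage note are illustrative assumptions.

```python
import math


def lotka_expected_authors(single_paper_authors, n_papers, exponent=2.0):
    """Expected number of authors with exactly n_papers under Lotka's law.

    Assumes authors(n) = authors(1) / n**exponent, with exponent near 2.
    """
    return single_paper_authors / (n_papers ** exponent)


def fit_lotka_exponent(author_counts):
    """Estimate the Lotka exponent from {papers: number_of_authors}.

    Uses an ordinary least-squares fit of log(authors) on log(papers);
    the exponent is the negated slope. All counts must be positive.
    """
    xs = [math.log(n) for n in author_counts]
    ys = [math.log(c) for c in author_counts.values()]
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    variance = sum((x - mean_x) ** 2 for x in xs)
    return -covariance / variance


def doubling_time(growth_rate):
    """Time for N(t) = N0 * exp(growth_rate * t) to double."""
    return math.log(2) / growth_rate
```

For example, `fit_lotka_exponent({n: 100 / n**2 for n in range(1, 6)})` recovers an exponent of about 2 from idealized synthetic counts. Real author-productivity data would be noisier, and a log-log least-squares fit is only a rough estimator for such data.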

The heart of the article consists of three integrative conceptual frameworks that aim to bridge the identified gaps.

- Multi-Layer Network Model: This framework treats researchers, publications, institutions, funding agencies, and policy instruments as distinct layers in a multiplex network. Inter-layer edges capture cross-cutting influences such as grant awards shaping collaboration patterns or policy mandates reshaping citation behavior. The model is designed to accommodate heterogeneous data types and to support cross-layer community detection, offering a richer picture of scientific ecosystems than single-layer analyses.

- Dynamic Flow-Accumulation Model: Inspired by ecological models of resource flow, this approach models knowledge production, diffusion, and decay as continuous flows over time. It incorporates mechanisms for “knowledge accumulation” (e.g., citation reinforcement) and “knowledge loss” (e.g., obsolescence, retraction). By simulating these flows, the model can predict tipping points such as rapid paradigm shifts or periods of stagnation, and it can be calibrated against longitudinal bibliometric datasets.

- Law-Exception Framework: Recognizing that many regularities in science (e.g., power-law distributions) coexist with rare, high-impact disruptions, this framework maps the boundary between generalized “laws” and context-specific “exceptions.” Using visual heatmaps and anomaly detection, it highlights when and where standard predictive models fail (such as during the emergence of a disruptive technology or a sudden policy shock), thereby guiding researchers to augment existing theories with contingency factors.
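To make the first of these frameworks more concrete, the following is a minimal Python sketch of a multi-layer network in which every node carries a layer label and an edge between nodes in different layers counts as an inter-layer edge. It is an illustrative assumption about how such a structure could be represented, not the authors' implementation; the class name, method names, and example node identifiers are hypothetical.

```python
from collections import defaultdict


class MultiLayerNetwork:
    """Undirected network whose nodes are (layer, node_id) tuples."""

    def __init__(self):
        self.adjacency = defaultdict(set)

    def add_edge(self, source, target):
        """Connect two (layer, node_id) nodes in both directions."""
        self.adjacency[source].add(target)
        self.adjacency[target].add(source)

    def neighbors(self, node, layer=None):
        """Neighbors of a node, optionally restricted to one layer."""
        linked = self.adjacency.get(node, set())
        return {n for n in linked if layer is None or n[0] == layer}

    def inter_layer_edges(self):
        """All edges whose endpoints lie in different layers."""
        return {
            frozenset((u, v))
            for u, linked in self.adjacency.items()
            for v in linked
            if u[0] != v[0]
        }

    def project(self, target_layer, via_layer):
        """Pair up target-layer nodes that share a neighbor in via_layer."""
        pairs = set()
        for node, linked in self.adjacency.items():
            if node[0] != via_layer:
                continue
            members = sorted(n for n in linked if n[0] == target_layer)
            for i, first in enumerate(members):
                for second in members[i + 1:]:
                    pairs.add((first, second))
        return pairs


# Hypothetical example: one funder supports two researchers who co-author a paper.
net = MultiLayerNetwork()
net.add_edge(("funder", "agency_x"), ("researcher", "alice"))
net.add_edge(("funder", "agency_x"), ("researcher", "bob"))
net.add_edge(("researcher", "alice"), ("publication", "paper_1"))
net.add_edge(("researcher", "bob"), ("publication", "paper_1"))
shared_funding = net.project("researcher", "funder")
```

Here `shared_funding` links alice and bob because both connect to the same funder node, which is one simple way to express a grant award shaping a collaboration pattern. Cross-layer community detection, as described above, would need a dedicated algorithm on top of this structure.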

Each of these frameworks is evaluated against a matrix of existing studies, with explicit discussion of strengths (e.g., integrative capacity, predictive power, visual clarity) and limitations (e.g., data intensity, computational complexity). The authors also provide prototype code snippets and workflow diagrams, demonstrating how scholars can operationalize the models on open datasets.
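As one hedged illustration of what operationalizing such a model might look like, the Dynamic Flow-Accumulation idea can be reduced to a discrete-time stock-and-flow simulation. The sketch below is not the authors' prototype code. It assumes a single knowledge stock with a constant inflow of new work, a gain proportional to the stock (standing in for citation reinforcement), and a loss proportional to the stock (standing in for obsolescence or retraction). All parameter names and values are hypothetical.

```python
def simulate_knowledge_stock(initial_stock, inflow, reinforcement_rate, decay_rate, steps):
    """Return the stock at each time step, starting with the initial value.

    Update rule (an assumption of this sketch):
        stock[t+1] = stock[t] + inflow
                     + reinforcement_rate * stock[t]
                     - decay_rate * stock[t]
    """
    stock = float(initial_stock)
    trajectory = [stock]
    for _ in range(steps):
        gain = inflow + reinforcement_rate * stock
        loss = decay_rate * stock
        stock = max(0.0, stock + gain - loss)  # a stock cannot go negative
        trajectory.append(stock)
    return trajectory


def equilibrium_stock(inflow, reinforcement_rate, decay_rate):
    """Steady-state stock, or None when reinforcement outpaces decay.

    Setting stock[t+1] == stock[t] in the update rule gives
    stock = inflow / (decay_rate - reinforcement_rate).
    """
    net_decay = decay_rate - reinforcement_rate
    if net_decay <= 0:
        return None  # the stock grows without bound, so no steady state exists
    return inflow / net_decay


saturating = simulate_knowledge_stock(0.0, 10.0, 0.05, 0.15, steps=200)
runaway = simulate_knowledge_stock(1.0, 0.0, 0.20, 0.05, steps=20)
```

In this toy setting the qualitative regimes are easy to see: when decay exceeds reinforcement the stock levels off at `equilibrium_stock(...)`, and when reinforcement exceeds decay it grows without bound. Calibrating against longitudinal bibliometric data, as the framework envisions, would require estimating these rates from observations.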

The final section synthesizes the contributions of the eight papers included in the special issue. By mapping each paper onto the Theory‑Data‑Visualization Triangle, the authors show that the collection collectively advances three overarching goals: (a) enriching theoretical grounding, (b) expanding the empirical data frontier (through novel sources such as policy documents, grant databases, and social‑media traces), and (c) enhancing visual communication of complex scientific dynamics. For instance, one paper introduces a new method for visualizing interdisciplinary citation flows, while another develops an agent‑based model that incorporates funding policy as an exogenous driver. The authors argue that these works are mutually reinforcing; the visual tools developed in one study can be used to validate the models proposed in another, creating a virtuous cycle of theory‑driven visualization and data‑driven theory refinement.

In conclusion, the article posits that a truly robust “science of science” must be built on a tripod of rigorous theory, high‑quality, interoperable data, and powerful visual representations that make complexity tractable. The authors call for community‑wide efforts to (i) standardize data formats and metadata, (ii) develop open‑source, interactive visualization platforms, and (iii) design experimental studies that can test the predictions of the three proposed frameworks. By doing so, the field can move beyond fragmented models toward a cohesive, actionable understanding of how knowledge is created, diffused, and transformed across time and institutional contexts.
