Perfect simulation for Bayesian wavelet thresholding with correlated coefficients

We introduce a new method of Bayesian wavelet shrinkage for reconstructing a signal when we observe a noisy version. Rather than making the common assumption that the wavelet coefficients of the signal are independent, we allow for the possibility that they are locally correlated in both location (time) and scale (frequency). This leads us to a prior structure which is analytically intractable, but it is possible to draw independent samples from a close approximation to the posterior distribution by an approach based on Coupling From The Past.

Research Summary

The paper proposes a novel Bayesian wavelet shrinkage framework that explicitly models local dependencies among wavelet coefficients in both the time (location) and scale (frequency) dimensions. Traditional Bayesian wavelet denoising methods typically assume that the coefficients are independent a priori, which simplifies the posterior but ignores the empirical observation that real‑world signals often exhibit correlated structures across neighboring positions and scales. To capture this, the authors place a Gaussian Markov Random Field (GMRF) on the dyadic wavelet tree. Each coefficient θ_{j,k} at scale j and location k is assigned a conditional normal distribution whose mean is a linear combination of its parent coefficient and adjacent sibling coefficients at the same scale, while the variance is governed by hyper‑parameters that control overall sparsity and smoothness. This hierarchical prior preserves sparsity yet allows smooth transitions, effectively encoding the intuition that neighboring coefficients tend to vary together.
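
Read literally, this description suggests a conditional specification along the following lines. This is only a sketch: the weights $\alpha$ and $\beta$ and the scale-dependent variance $\tau_j^2$ are illustrative symbols assumed here, not notation taken from the paper.

$$
\theta_{j,k} \mid \theta_{j-1,\lfloor k/2 \rfloor},\ \theta_{j,k-1},\ \theta_{j,k+1}
\;\sim\;
\mathcal{N}\!\left( \alpha\,\theta_{j-1,\lfloor k/2 \rfloor} + \beta\,\bigl(\theta_{j,k-1} + \theta_{j,k+1}\bigr),\ \tau_j^2 \right)
$$

Under this reading, setting $\alpha = \beta = 0$ would recover the usual independent Gaussian prior, so the two weights measure how far the model departs from the independence assumption.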

Because the resulting prior is analytically intractable, the posterior distribution does not admit a closed‑form expression, and standard Gibbs or variational Bayes schemes converge very slowly or become biased. To overcome this, the authors employ Coupling From The Past (CFTP), a perfect‑simulation technique that returns draws exactly from its target distribution without the need for burn‑in diagnostics. They design a Metropolis–Hastings proposal that respects the tree structure and construct a single‑step coupling that runs chains from all possible initial states, started progressively further back in the past, forward to time zero under shared randomness. By proving that all trajectories coalesce into a single state after a finite random time, they ensure that the state obtained at time zero is an exact draw from the CFTP target, which is a close approximation to the true posterior. Theoretical analysis bounds the Kullback‑Leibler divergence between the true posterior and this approximation, showing that the approximation error remains negligible for practical hyper‑parameter settings.
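
To make the CFTP mechanics concrete, here is a minimal sketch of monotone CFTP on a toy chain. It is not the paper's sampler: the chain (a biased random walk on `0..n_states-1` that holds at the boundaries) and the names `update` and `cftp_sample` are assumptions chosen only to show the three essential ingredients, namely starting further and further in the past, reusing the same random numbers, and stopping once the extreme chains have coalesced.

```python
import random

def update(state, u, n_states, p_up):
    """One step of a toy random walk, driven by a shared uniform draw u.

    The map is monotone in `state` for fixed u, so tracking only the
    lowest and highest starting states is enough to detect coalescence.
    """
    if u < p_up:
        return min(state + 1, n_states - 1)
    return max(state - 1, 0)

def cftp_sample(n_states=10, p_up=0.4, seed=0):
    """Return one exact draw from the walk's stationary distribution."""
    rng = random.Random(seed)
    us = []  # us[t] drives the step from time -(t+1) to time -t
    horizon = 1
    while True:
        # Extend the randomness further into the past; draws already
        # made for times closer to zero are reused, never regenerated.
        while len(us) < horizon:
            us.append(rng.random())
        lo, hi = 0, n_states - 1
        # Run both extreme chains from time -horizon forward to time 0.
        for t in range(horizon - 1, -1, -1):
            lo = update(lo, us[t], n_states, p_up)
            hi = update(hi, us[t], n_states, p_up)
        if lo == hi:
            return lo  # every starting state has coalesced by time 0
        horizon *= 2
```

Calling `cftp_sample(seed=s)` for different seeds gives independent draws. For this toy walk the stationary probabilities decay geometrically with ratio `p_up / (1 - p_up)`, which gives a simple way to check the output empirically.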

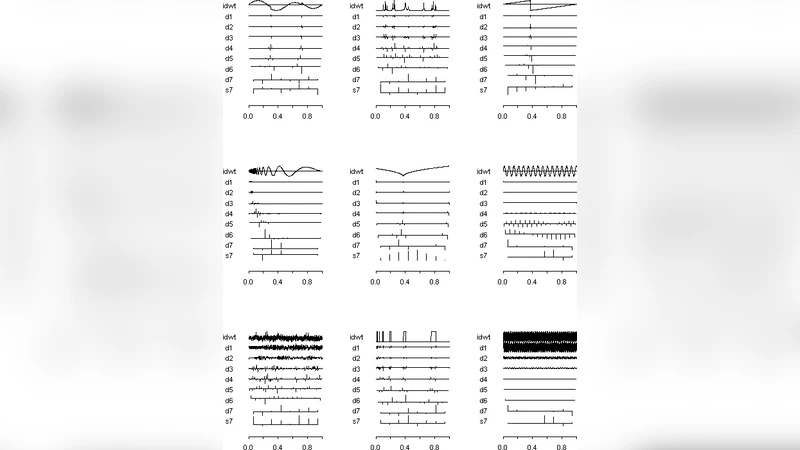

The algorithmic complexity is shown to be O(d·2^d) operations for a tree of depth d, i.e., O(n log n) in the number n = 2^d of coefficients, making it feasible for moderate‑size signals. Extensive simulations on synthetic data with varying signal‑to‑noise ratios demonstrate that the proposed method consistently yields lower mean‑squared error (MSE) and higher structural similarity index (SSIM) compared with independent‑prior Bayesian shrinkage (e.g., spike‑and‑slab) and classic non‑Bayesian soft‑thresholding. A real‑world ECG case study further illustrates the advantage in preserving sharp transitions (QRS complexes) while suppressing high‑frequency noise, highlighting the benefit of modeling local correlation.
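
For reference, the soft‑thresholding baseline mentioned above can be sketched in a few lines. The summary does not say which wavelet family or threshold the comparison used, so this sketch assumes an orthonormal Haar transform and the universal threshold σ·sqrt(2 ln n); the function names are hypothetical, and the input length must be a power of two.

```python
import math

def haar_forward(x):
    """Orthonormal Haar DWT of a list whose length is a power of two.

    Returns (approximation, details), where details[0] is the finest scale.
    """
    a, details = list(x), []
    while len(a) > 1:
        half = len(a) // 2
        s = [(a[2 * i] + a[2 * i + 1]) / math.sqrt(2) for i in range(half)]
        w = [(a[2 * i] - a[2 * i + 1]) / math.sqrt(2) for i in range(half)]
        details.append(w)
        a = s
    return a, details

def haar_inverse(a, details):
    """Invert haar_forward, rebuilding the signal from coarse to fine."""
    a = list(a)
    for w in reversed(details):
        nxt = []
        for s, d in zip(a, w):
            nxt.append((s + d) / math.sqrt(2))
            nxt.append((s - d) / math.sqrt(2))
        a = nxt
    return a

def soft(w, lam):
    """Soft-threshold: shrink w toward zero by lam, zeroing small values."""
    return math.copysign(max(abs(w) - lam, 0.0), w)

def denoise(y, sigma):
    """Shrink each detail coefficient independently, then reconstruct."""
    lam = sigma * math.sqrt(2 * math.log(len(y)))  # universal threshold (assumed)
    a, details = haar_forward(y)
    shrunk = [[soft(w, lam) for w in level] for level in details]
    return haar_inverse(a, shrunk)
```

Each coefficient is shrunk without reference to its parent or neighbors, which is exactly the independence assumption that the correlated prior is meant to relax.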

The discussion acknowledges limitations: the current implementation relies on Gaussian priors, whereas many applications may benefit from heavy‑tailed or Laplacian priors; extending the approach to multichannel or multidimensional data (e.g., color images, multi‑lead ECG) will require more sophisticated dependency structures. Moreover, while CFTP provides exact samples, the algorithm is sequential by nature; GPU‑based parallel coupling could accelerate it, opening the door to near‑real‑time denoising. In summary, the paper establishes that incorporating realistic correlation structures into Bayesian wavelet models is both statistically advantageous and computationally tractable when coupled with perfect‑simulation techniques, paving the way for more accurate and theoretically sound signal reconstruction methods.
