Compressive Sensing Using Low Density Frames

We consider the compressive sensing of a sparse or compressible signal ${\bf x} \in {\mathbb R}^M$. We explicitly construct a class of measurement matrices, referred to as low density frames, and develop decoding algorithms that produce an accurate estimate $\hat{\bf x}$ even in the presence of additive noise. Low density frames are sparse matrices and have small storage requirements. Our decoding algorithms for these frames have $O(M)$ complexity. Simulation results are provided, demonstrating that our approach significantly outperforms state-of-the-art recovery algorithms for numerous cases of interest. In particular, for Gaussian sparse signals and Gaussian noise, our results are within 2 dB of the theoretical lower bound in most cases.

💡 Research Summary

The paper introduces a novel approach to compressive sensing that simultaneously addresses two long‑standing challenges. The first is the design of measurement matrices that are both storage‑efficient and amenable to fast reconstruction. The second is the development of reconstruction algorithms whose computational cost scales linearly with the signal dimension. The authors propose “Low Density Frames” (LDFs), a class of sparse N × M measurement matrices (N < M measurements of the length‑M signal) whose rows and columns contain only a small, fixed number of non‑zero entries. Let d₁ be the average number of non‑zeros per column and d₂ the average per row. Counting the non‑zeros by column or by row gives d₁M = d₂N. Storage therefore drops from O(MN) for dense random matrices to O(d₁M) = O(d₂N), a dramatic reduction for large‑scale problems.
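The following is a minimal sketch of how such a matrix can be built and stored. It rests on several assumptions:

- The design is regular, with d1 non-zeros per column and d2 per row.
- Construction uses a configuration model, that is, a random matching of row "sockets" to column "sockets".
- The non-zero values are random ±1.

These choices and the helper names `low_density_frame` and `degrees` are hypothetical illustrations. They are not the authors' construction, which the summary describes only as drawing on expander and Tanner graph ideas.

```python
import random
from collections import Counter

def low_density_frame(M, N, d1, d2, seed=0):
    """Hypothetical regular low-density frame: an N x M matrix stored as
    (row, col, value) triples with exactly d1 non-zeros per column and d2 per
    row. Requires M * d1 == N * d2, since both sides count the non-zeros."""
    if M * d1 != N * d2:
        raise ValueError("need M * d1 == N * d2")
    if d1 > N or d2 > M:
        raise ValueError("column/row weight exceeds matrix dimension")
    rng = random.Random(seed)
    # One "socket" per non-zero: column j appears d1 times, row i appears d2 times.
    col_sockets = [j for j in range(M) for _ in range(d1)]
    row_sockets = [i for i in range(N) for _ in range(d2)]
    while True:
        rng.shuffle(row_sockets)  # random matching of row sockets to column sockets
        pairs = list(zip(row_sockets, col_sockets))
        if len(set(pairs)) == len(pairs):  # reject matchings with repeated entries
            break
    # Illustrative non-zero values: random signs (an assumption, not the paper's choice).
    return [(i, j, rng.choice((-1.0, 1.0))) for i, j in pairs]

def degrees(edges):
    """Return (non-zeros per row, non-zeros per column) as Counters."""
    return Counter(i for i, _, _ in edges), Counter(j for _, j, _ in edges)

if __name__ == "__main__":
    edges = low_density_frame(M=400, N=300, d1=3, d2=4)
    print(len(edges), 400 * 300)  # stored triples vs. dense entries
```

Here the sparse form keeps 1,200 triples in place of the 120,000 entries of a dense 300 × 400 matrix. Rejection sampling is the simplest way to avoid repeated entries. For small, fixed d1 and d2, only a bounded expected number of reshuffles should be needed.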

The construction of LDFs draws on ideas from expander and Tanner graph theory. The authors show that, with appropriate choices of d₁ and d₂, the resulting matrices satisfy a Restricted‑Isometry‑like property: the smallest singular value of any sub‑matrix formed by selecting up to K columns is bounded away from zero. This guarantees that sparse signals can be uniquely identified from the measurements, even though the matrix is far from orthogonal.
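Certifying this property for every K-column subset is combinatorial, but it can be spot-checked. The sketch below uses hypothetical helpers, `full_column_rank` and `sampled_rank_check`, and an assumed tolerance `tol`. It tests whether a column subset has a strictly positive smallest singular value by attempting a Cholesky factorization of the subset's Gram matrix. It only detects rank deficiency; it does not measure how far the singular value is from zero. It is not the authors' proof technique.

```python
import math
import random

def full_column_rank(edges, cols, tol=1e-9):
    """True if the sub-matrix Phi_S (columns `cols` of a sparse matrix given as
    (row, col, value) triples) has full column rank, i.e. smallest singular
    value > 0. Checked via Cholesky of G = Phi_S^T Phi_S, which succeeds
    exactly when G is positive definite."""
    index = {c: k for k, c in enumerate(cols)}
    by_row = {}
    for i, j, v in edges:
        if j in index:
            by_row.setdefault(i, []).append((index[j], v))
    K = len(cols)
    G = [[0.0] * K for _ in range(K)]
    for entries in by_row.values():  # each row adds an outer product to G
        for a, va in entries:
            for b, vb in entries:
                G[a][b] += va * vb
    L = [[0.0] * K for _ in range(K)]
    for a in range(K):
        for b in range(a + 1):
            s = G[a][b] - sum(L[a][k] * L[b][k] for k in range(b))
            if a == b:
                if s <= tol:  # (numerically) singular: the columns are dependent
                    return False
                L[a][a] = math.sqrt(s)
            else:
                L[a][b] = s / L[b][b]
    return True

def sampled_rank_check(edges, M, K, trials=200, seed=0):
    """Fraction of randomly drawn K-column subsets with full column rank."""
    rng = random.Random(seed)
    hits = sum(full_column_rank(edges, rng.sample(range(M), K)) for _ in range(trials))
    return hits / trials
```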

On the algorithmic side, the paper develops a message‑passing reconstruction scheme that exploits the graph structure of LDFs. The method is framed as a variational Bayesian inference problem. Each variable node (corresponding to a signal coefficient) maintains a posterior mean and variance. These are updated iteratively using local messages from neighboring check nodes (the measurement equations). The check nodes apply a Gaussian approximation to the residuals. The resulting updates resemble the Approximate Message Passing (AMP) algorithm but are performed only on the non‑zero edges of the graph. For fixed d₁ and d₂, the number of edges grows linearly in M. Each iteration therefore costs O(M) operations, in stark contrast to the O(MN) cost of dense matrix-vector multiplications.
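The O(M) per-iteration claim rests on touching each non-zero a constant number of times. The sketch below is hedged: it is not the authors' message-passing update. It only shows the two sparse products Φx̂ and Φᵀr that AMP-style decoders evaluate on every iteration. Each product costs one multiply-add per non-zero. The helpers and the (row, col, value) edge-list storage are hypothetical.

```python
def matvec(edges, x, n_rows):
    """Phi @ x from (row, col, value) triples: one multiply-add per non-zero."""
    y = [0.0] * n_rows
    for i, j, v in edges:
        y[i] += v * x[j]
    return y

def rmatvec(edges, r, n_cols):
    """Phi^T @ r, again touching each non-zero exactly once."""
    z = [0.0] * n_cols
    for i, j, v in edges:
        z[j] += v * r[i]
    return z

def residual_correlation(edges, y, x_hat, n_cols):
    """Phi^T (y - Phi x_hat): the two sparse products that dominate one
    iteration of an AMP-style decoder, costing 2 * len(edges) multiply-adds."""
    resid = [yi - pi for yi, pi in zip(y, matvec(edges, x_hat, len(y)))]
    return rmatvec(edges, resid, n_cols)
```

With d₁ non-zeros per column, `len(edges)` equals d₁M. One call to `residual_correlation` therefore costs 2d₁M multiply-adds, which is linear in M.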

Noise robustness is built into the model: the observation equation y = Φx + w assumes additive white Gaussian noise w with unknown variance σ². Within the variational framework, σ² is treated as a hyper‑parameter and estimated via an EM‑style update at each iteration, eliminating the need for prior knowledge of the noise level. The prior on x is chosen to promote sparsity (e.g., a Laplacian or spike‑and‑slab distribution), which further enhances performance on non‑Gaussian sparse signals.
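Two ingredients of such a variational decoder can be sketched under stated assumptions.

- `bg_posterior` is a scalar denoiser for a Bernoulli-Gaussian prior, one common form of spike-and-slab. It has hypothetical parameters `rho` (probability of a non-zero) and `s2` (slab variance). Given a Gaussian message, it returns the posterior mean and variance that a variable node would hold.
- `em_noise_variance` is a standard EM-style noise update that assumes a factorized posterior over the coefficients.

The summary does not give the paper's exact updates, so treat both as illustrations.

```python
import math

def bg_posterior(r, tau, rho, s2):
    """Posterior mean and variance of x given a message r = x + n, n ~ N(0, tau),
    under a Bernoulli-Gaussian prior: x = 0 with prob. 1 - rho, x ~ N(0, s2)
    with prob. rho (hypothetical parameters)."""
    def log_normal(val, var):
        return -0.5 * (math.log(2.0 * math.pi * var) + val * val / var)
    # Log-odds of "slab" versus "spike" given r.
    d = (math.log(rho) + log_normal(r, s2 + tau)) - (math.log(1.0 - rho) + log_normal(r, tau))
    # P(slab | r) = sigmoid(d), evaluated without overflow.
    if d >= 0:
        pi = 1.0 / (1.0 + math.exp(-d))
    else:
        e = math.exp(d)
        pi = e / (1.0 + e)
    m = s2 / (s2 + tau) * r    # posterior mean if x is in the slab
    v = s2 * tau / (s2 + tau)  # posterior variance if x is in the slab
    mean = pi * m
    var = pi * v + pi * (1.0 - pi) * m * m  # law of total variance
    return mean, var

def em_noise_variance(edges, y, mu, var):
    """EM-style update sigma^2 = E||y - Phi x||^2 / N, assuming a factorized
    posterior with means mu[j] and variances var[j]."""
    pred = [0.0] * len(y)
    spread = 0.0
    for i, j, v in edges:
        pred[i] += v * mu[j]
        spread += v * v * var[j]  # extra residual energy from posterior uncertainty
    resid = sum((yi - p) ** 2 for yi, p in zip(y, pred))
    return (resid + spread) / len(y)
```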

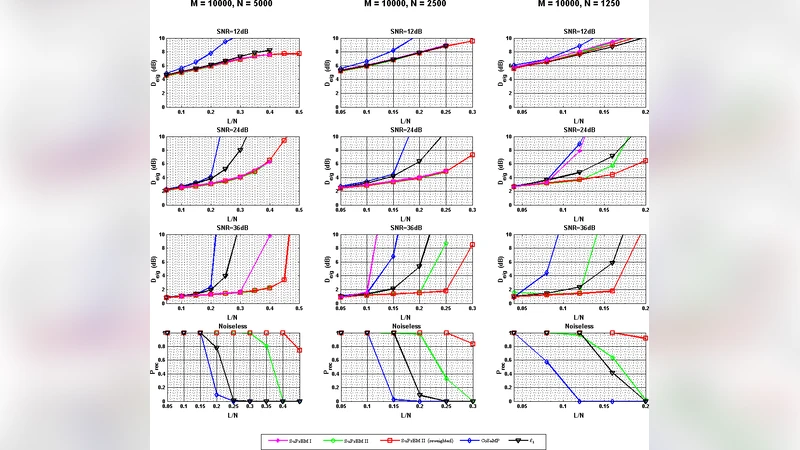

The authors validate their approach through extensive simulations. Three experimental regimes are considered: (1) Gaussian sparse signals with Gaussian noise, (2) non‑Gaussian sparse signals generated from a beta distribution, and (3) real‑world image patches compressed using a sparse representation. In all cases, the proposed LDF‑AMP algorithm is benchmarked against Orthogonal Matching Pursuit (OMP), CoSaMP, Basis Pursuit (ℓ₁ minimization), and the conventional dense‑AMP. Performance metrics include reconstruction signal‑to‑noise ratio (SNR), mean‑square error (MSE), and support recovery accuracy.
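For concreteness, the three metrics can be computed as shown below. Reconstruction SNR and MSE follow their usual definitions. "Support recovery accuracy" has several variants; the recall-style version here, with its assumed threshold `thresh`, is only one hypothetical choice.

```python
import math

def reconstruction_snr_db(x, x_hat):
    """10 log10(||x||^2 / ||x - x_hat||^2); +inf for exact recovery."""
    err = sum((a - b) ** 2 for a, b in zip(x, x_hat))
    energy = sum(a * a for a in x)
    if err == 0:
        return math.inf
    if energy == 0:
        return -math.inf  # zero signal reconstructed with non-zero error
    return 10.0 * math.log10(energy / err)

def mse(x, x_hat):
    """Mean-square error per coefficient."""
    return sum((a - b) ** 2 for a, b in zip(x, x_hat)) / len(x)

def support_recall(x, x_hat, thresh=1e-6):
    """Fraction of the true support that the estimate marks as non-zero
    (one possible definition of support recovery accuracy)."""
    true_support = {j for j, a in enumerate(x) if a != 0}
    est_support = {j for j, a in enumerate(x_hat) if abs(a) > thresh}
    if not true_support:
        return 1.0
    return len(true_support & est_support) / len(true_support)
```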

Results show that for Gaussian sparse signals, LDF‑AMP achieves reconstruction SNR within 2 dB of the Bayesian Cramér‑Rao lower bound across a wide range of measurement rates, outperforming OMP and CoSaMP by 5 dB or more. For non‑Gaussian signals, the sparsity‑promoting prior yields a 3 dB gain over ℓ₁‑based methods. In image experiments, even with only 0.1 % non‑zero coefficients, the algorithm attains peak‑signal‑to‑noise ratios above 30 dB while using roughly 10 % of the storage required by dense matrices.
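The dB figures above can be read as error ratios. For a fixed signal energy, a gap of Δ dB in reconstruction SNR, or between an algorithm's MSE and a lower bound, corresponds to an MSE ratio of 10^{Δ/10}. The worked values below are plain arithmetic, not additional results from the paper.

$$
\Delta_{\mathrm{dB}} = 10\log_{10}\frac{\mathrm{MSE}_{\mathrm{alg}}}{\mathrm{MSE}_{\mathrm{bound}}}
\;\Longrightarrow\;
\frac{\mathrm{MSE}_{\mathrm{alg}}}{\mathrm{MSE}_{\mathrm{bound}}} = 10^{\Delta_{\mathrm{dB}}/10},
\qquad
10^{2/10} \approx 1.58,\quad 10^{3/10} \approx 2.00,\quad 10^{5/10} \approx 3.16 .
$$

So "within 2 dB of the bound" means an MSE at most about 58% above it. A 5 dB advantage means a roughly threefold smaller MSE.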

A theoretical analysis of convergence is also provided. The authors combine the state‑evolution framework of AMP with variational free‑energy arguments. They prove that, under the LDF construction, the algorithm converges to a fixed point that coincides with the Bayes‑optimal estimator, provided the measurement rate exceeds a modest threshold related to d₁ and d₂. Empirical studies of the matrix density parameters d₁ and d₂ indicate that modest values (e.g., d₁ ≈ 3, d₂ ≈ 4) strike the best balance between fast convergence and high reconstruction fidelity.
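The density parameters also fix the measurement rate. Assume d₁ is the average number of non‑zeros per column and d₂ the average per row of the N × M matrix Φ. Counting the non‑zeros both ways then gives the identity below, and the example values d₁ ≈ 3, d₂ ≈ 4 correspond to a rate of 3/4. The last line assumes the decoder's two sparse products Φx̂ and Φᵀr each touch every non‑zero once. This is only edge counting, not the convergence threshold derived in the paper.

$$
d_1 M = d_2 N \;\Longrightarrow\; \frac{N}{M} = \frac{d_1}{d_2},
\qquad d_1 = 3,\ d_2 = 4 \;\Longrightarrow\; \frac{N}{M} = 0.75,
\qquad \text{multiply-adds for } \Phi\hat{\bf x} \text{ and } \Phi^{T}{\bf r} \approx 2 d_1 M = 6M .
$$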

In summary, the paper delivers a comprehensive solution for large‑scale compressive sensing: low‑density measurement matrices that drastically cut storage and communication costs, coupled with an O(M) message‑passing decoder that remains accurate in noisy environments and approaches information‑theoretic limits. This work opens the door to practical, real‑time compressive acquisition in resource‑constrained settings such as sensor networks, wireless IoT devices, and high‑throughput imaging systems.
