Exact Cover with light

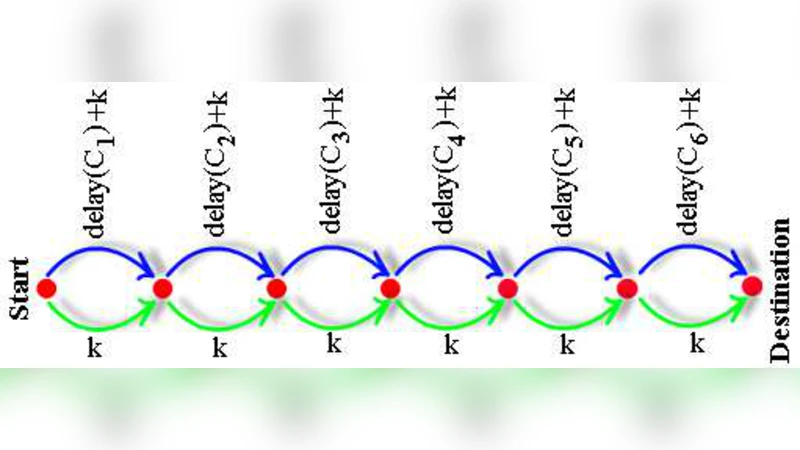

We suggest a new optical solution for the YES/NO version of the Exact Cover problem that uses the massive parallelism of light. The idea is to build an optical device which can generate all possible solutions of the problem and then pick the correct one. In our case the device has a graph-like representation, and the light traverses it by following the routes given by the connections between nodes. The nodes are connected by arcs in a special way which lets us generate all possible covers (exact or not) of the given set. To select the correct solution we assign a special integer to each item of the set to be covered. These numbers represent the delays imposed on the light when it passes through the arcs. The solution is represented as a subray arriving at a certain moment in the destination node. This tells us whether an exact cover exists or not.

💡 Research Summary

The paper proposes an optical device that decides the YES/NO version of the Exact Cover problem by exploiting the massive parallelism inherent in light propagation. Exact Cover asks whether a collection S of subsets of a finite set U contains a sub‑collection whose union is exactly U and where each element of U appears in exactly one chosen subset. The authors map this combinatorial problem onto a graph‑like optical network. Each node corresponds to a subset in S, and directed arcs connect nodes in a way that allows every possible selection of subsets to be represented as a distinct light path from a source node to a destination node.
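To make the problem statement concrete, here is a minimal brute-force sketch of the YES/NO question in Python. It is a plain digital reference, not the optical method; the function names and the sample instance are illustrative assumptions.

```python
from itertools import combinations

def is_exact_cover(universe, chosen):
    """True if the chosen subsets are pairwise disjoint and their union is the universe."""
    seen = set()
    for subset in chosen:
        if seen & subset:  # some element would be covered twice
            return False
        seen |= subset
    return seen == universe

def has_exact_cover(universe, subsets):
    """YES/NO version: try every sub-collection of subsets (exponential time)."""
    for r in range(len(subsets) + 1):
        for chosen in combinations(subsets, r):
            if is_exact_cover(universe, chosen):
                return True
    return False

# Hypothetical instance: {1, 2} together with {3, 4} covers U exactly once.
U = {1, 2, 3, 4}
S = [{1, 2}, {3}, {3, 4}, {2, 4}, {1}]
print(has_exact_cover(U, S))
```

The loop over all sub-collections is the exponential search that the optical device is meant to carry out in parallel.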

The key trick is to assign a specially chosen integer delay d(u) to every element u ∈ U. When a light ray traverses an arc that represents a subset containing u, the ray incurs the delay d(u). Consequently, the total delay accumulated along a path equals the sum of the delays of all elements in the chosen subsets, counted once for each chosen subset that contains them. By arranging the network so that all possible subset selections are generated simultaneously, the device produces a multitude of sub‑rays arriving at the destination node at different times. The authors set a target time T = ∑_{u∈U} d(u). Distinct delays alone are not enough for the test to be sound: the values must be chosen so that no other combination of elements (with repetitions or omissions) also sums to T. Under that condition, a sub‑ray arriving exactly at T encodes a collection of subsets that covers every element exactly once, i.e., an exact cover exists. If no ray arrives at T, the answer is NO.
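The timing test can be simulated digitally. The sketch below enumerates every choose/skip pattern, sums the delays, and checks whether any sub-ray arrives at T. The delay scheme d(u_i) = (m + 1)^i, with m = |S|, is an assumption made for this illustration and is not necessarily the one used in the paper. It works because an element can be picked up at most m times, so the total delay reads as a base-(m + 1) number whose digits are the cover counts, and it equals T only when every digit is 1. Merely distinct delays can fail: with delays 1, 2, 3 and T = 6, a path that covers the third element twice and nothing else also arrives at 6.

```python
from itertools import product

def assign_delays(universe, num_subsets):
    """Assumed scheme (not from the paper): d(u_i) = (m + 1) ** i, m = number of subsets."""
    base = num_subsets + 1
    return {u: base ** i for i, u in enumerate(sorted(universe))}

def arrival_times(universe, subsets):
    """Return the arrival time of every sub-ray and the target time T.

    Every subset is assumed to contain only elements of the universe.
    """
    delays = assign_delays(universe, len(subsets))
    times = []
    for pattern in product([False, True], repeat=len(subsets)):
        # One sub-ray per choose/skip pattern; each chosen subset adds its elements' delays.
        total = sum(delays[u]
                    for take, subset in zip(pattern, subsets) if take
                    for u in subset)
        times.append(total)
    target = sum(delays.values())  # T = sum of d(u) over all u in U
    return times, target

def light_says_yes(universe, subsets):
    """YES if some sub-ray arrives exactly at the target time T."""
    times, target = arrival_times(universe, subsets)
    return target in times

U = {1, 2, 3, 4}
S = [{1, 2}, {3}, {3, 4}, {2, 4}, {1}]
print(light_says_yes(U, S))
```

Note that the assumed delays grow exponentially with |U|, so the physical device would trade exponential search time for exponentially long delay lines.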

From a theoretical standpoint the approach is elegant: it replaces exponential combinatorial search with physical parallelism, and the decision reduces to a simple timing measurement. However, the paper also acknowledges several practical obstacles that severely limit scalability.

- Precision of delay implementation – Realizing integer delays requires optical delay lines (e.g., fiber loops or waveguide spirals) whose lengths must be controlled tightly enough to hold timing to sub‑picosecond accuracy. Fabrication tolerances in the length of each delay line translate into timing errors that accumulate along a path and can exceed the unit delay assumed in the model. Even small variations cause the arrival times to drift, making it impossible to reliably distinguish the exact‑cover moment.

- Signal attenuation and noise – As the number of possible paths grows exponentially, the optical power of each individual sub‑ray is divided among an exponential number of branches. After traversing many splitters and delay elements, the power of each ray can fall below detector sensitivity, especially when the network depth exceeds a few dozen stages. Amplification could be introduced, but optical amplifiers add spontaneous emission noise and phase jitter, which further corrupt the precise timing information needed for the decision test.

- Network size explosion – For a problem instance with |S| = n subsets, the graph must contain a branching point for each subset (choose or skip), leading to roughly 2ⁿ distinct paths. Physically laying out a waveguide network with 2ⁿ distinct routes quickly becomes infeasible. Even with state‑of‑the‑art integrated photonic platforms, the number of waveguide crossings, bends, and splitters that can be fabricated without excessive loss is limited to a few hundred, corresponding to problem sizes where n ≈ 10–12.

- Synchronization and detection – The decision relies on detecting a ray at a single precise instant. High‑speed photodiodes or streak cameras with sub‑picosecond resolution are required, together with timing electronics capable of discriminating arrivals within the unit delay granularity. Such instrumentation is expensive and adds further complexity to the system.
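The attenuation obstacle above can be put into rough numbers. The sketch below assumes ideal, lossless 50/50 splitters (one per choose/skip stage), so each sub-ray loses about 3 dB per stage. The 0 dBm source power and the −60 dBm detector sensitivity are illustrative assumptions, not figures from the paper.

```python
import math

# Ideal 50/50 splitter: each output carries half the input power (about 3.01 dB loss).
SPLIT_LOSS_DB = 10 * math.log10(2)

def per_path_power_dbm(source_dbm, stages):
    """Power of a single sub-ray after `stages` ideal binary splits."""
    return source_dbm - stages * SPLIT_LOSS_DB

def max_detectable_stages(source_dbm, sensitivity_dbm):
    """Largest number of splitting stages that keeps a sub-ray above detector sensitivity."""
    return math.floor((source_dbm - sensitivity_dbm) / SPLIT_LOSS_DB)

# Assumed values: 0 dBm (1 mW) source, -60 dBm detector sensitivity.
for n in (10, 20, 30):
    print(n, round(per_path_power_dbm(0.0, n), 1), "dBm")
print("max stages:", max_detectable_stages(0.0, -60.0))
```

Real splitters, bends, and delay lines add excess loss on top of the ideal splitting loss, so the usable depth would be smaller than this estimate.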

The authors compare their scheme with other optical computing approaches, such as optical Fourier‑transform processors for linear systems and quantum‑inspired photonic annealers. Unlike those methods, which still perform some form of digital or analog computation after the optical stage, the proposed device attempts to solve the combinatorial problem entirely through time‑delay encoding. This makes the design conceptually simple but imposes extremely strict hardware requirements.

In the experimental section the authors present a proof‑of‑concept prototype for a tiny instance (|U| ≤ 5, |S| ≤ 7). They construct the delay lines using precisely cut fiber segments and demonstrate that the correct arrival time can be observed with a high‑speed oscilloscope. The results confirm the theoretical principle but also highlight the rapid degradation of signal strength and timing accuracy as the instance size grows.

In conclusion, the paper introduces a novel, physically motivated algorithm for Exact Cover that replaces exhaustive search with parallel light propagation and timing measurement. While the idea is intellectually stimulating and showcases the potential of unconventional computing paradigms, the current state of photonic technology does not support the required precision, low loss, and large‑scale integration needed for solving practically sized instances. Future advances in ultra‑precise delay‑line fabrication, low‑loss integrated waveguides, and ultra‑fast, low‑noise detectors could make limited‑scale demonstrations feasible, and the approach might inspire hybrid systems where optical pre‑processing narrows the search space before conventional digital verification. Until such technological breakthroughs occur, the method remains an intriguing theoretical construct rather than a competitive exact‑cover solver.
