Bayesian projection approaches to variable selection and exploring model uncertainty

A Bayesian approach to variable selection which is based on the expected Kullback-Leibler divergence between the full model and its projection onto a submodel has recently been suggested in the literature. Here we extend this idea by considering proj…

Authors: David Nott, Chenlei Leng

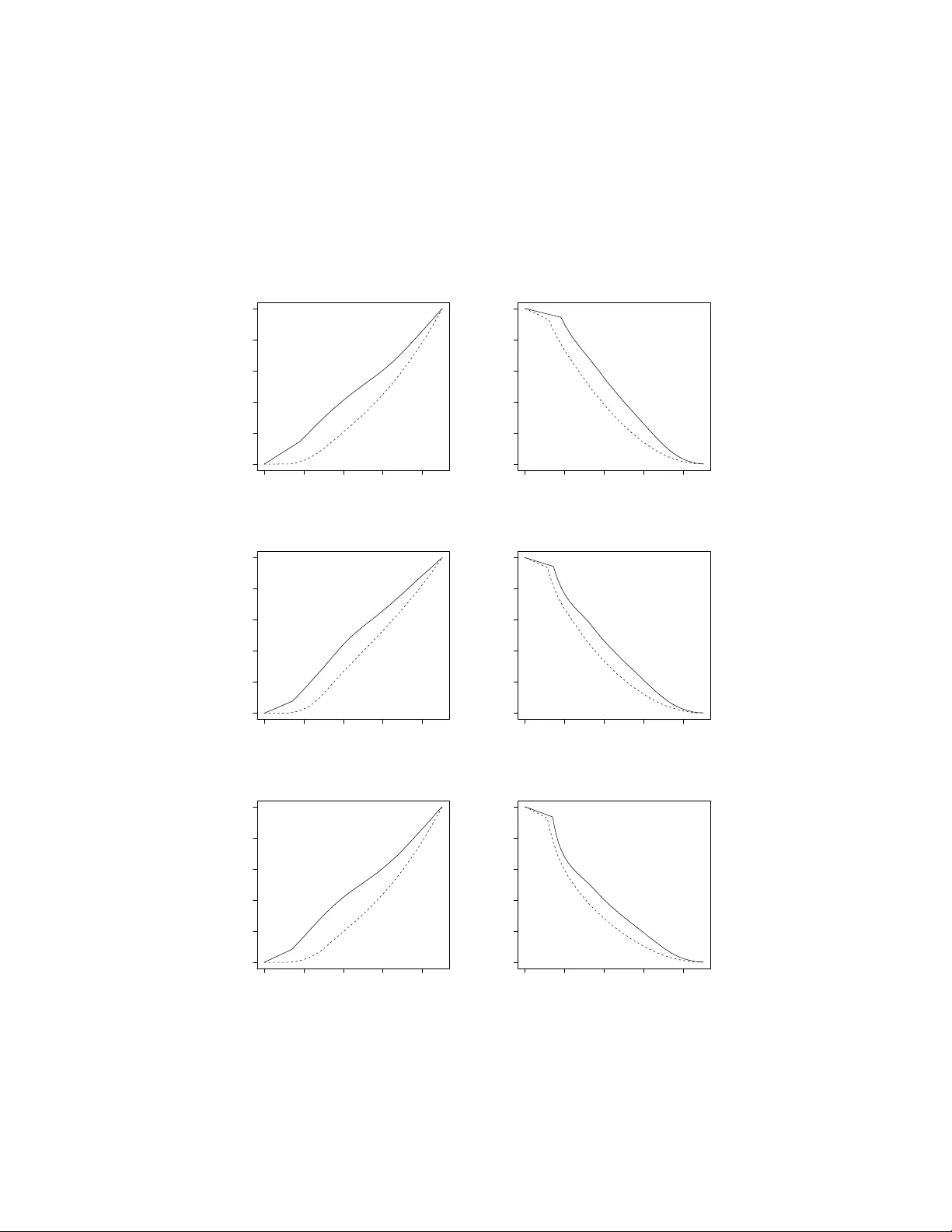

Ba y esian pro jection approac hes to v ariable selection and exploring mo del uncertain t y Da vid J. Nott and Chenlei Leng ∗ Abstract A Ba yesian approac h to v ariable selection whic h is based on the exp ected Kullbac k- Leibler divergence b etw een the full mo del and its pro jection onto a submo del has recen tly b een suggested in the literature. Here w e extend this idea by considering pro jections on to subspaces defined via some form of L 1 constrain t on the parameter in the full mo del. This leads to Bay esian mo del selection approaches related to the lasso. In the p osterior distribution of the pro jection there is p ositive probabilit y that some comp onen ts are exactly zero and the p osterior distribution on the mo del space induced b y the pro jection allo ws exploration of mo del uncertaint y . W e also consider use of the approac h in structured v ariable selection problems suc h as ANOV A mo dels where it is desired to incorp orate main effects in the presence of in teractions. Here w e make use of pro jections related to the non-negativ e garotte which are able to resp ect the hierarchical constrain ts. W e also prov e a consistency result concerning the p osterior distribution on the mo del induced by the pro jection, and show that for some pro jections related to the adaptive lasso and non-negativ e garotte the p osterior distribution concentrates on the true mo del asymptotically . ∗ Da vid J. Nott is Asso ciate Professor, Departmen t of Statistics and Applied Probabilit y , National Uni- v ersit y of Singap ore, Singap ore 117546. (email standj@nus.edu.sg). Chenlei Leng is Assistant Professor, Departmen t of Statistics and Applied Probability , National Univ ersity of Singap ore, Singapore 117546 (email stalc@n us.edu.sg). This w ork was partially supp orted by an Australian Research Council grant. 1 Keywor ds : Ba y esian v ariable selection, Kullbac k-Leibler pro jection, lasso, non-negative garotte, preconditioning. 1 In tro duction Ba yesian approac hes to mo del selection and describing mo del uncertain ty ha v e b ecome in- creasingly p opular in recen t years. In this pap er w e extend a metho d of v ariable selection considered by Dupuis and Rob ert (2003) and related to earlier suggestions b y Goutis and Rob ert (1998) and Mengersen and Rob ert (1996). Dupuis and Rob ert (2003) consider an approac h to v ariable selection where mo dels are selected according to a relative explanatory p o w er, where relativ e explanatory p ow er is defined using the exp ected Kullback-Leibler di- v ergence b et ween the full mo del and its pro jection onto a submo del. The goal is to find the most parsimonious mo del whic h achiev es an acceptable loss of explanatory pow er compared to the full mo del. In this pap er we consider an extension of the metho d of Dupuis and Rob ert (2003) where instead of considering a pro jection on to a subspace defined by a set of active co v ariates w e consider pro jection on to a subspace defined b y some other form of constraint on the param- eter in the full mo del. Certain choices of the constraint (an L 1 constrain t as used in the lasso of Tibshirani (1996), for example) lead to exact zeros for some of the co efficien ts. The kinds of pro jections w e consider also ha ve computational adv an tages, in that parsimony is con trolled b y a single contin uous parameter and we a void the searc h ov er a large and complex mo del space. Searc hing the mo del space in traditional Ba yesian mo del selection approaches with large n um b ers of co v ariates is a computationally daunting task. In contrast, with our metho d we handle mo del uncertain ty in a con tin uous wa y through an encompassing mo del, and can exploit existing fast lasso t yp e algorithms to calculate pro jections for samples from 2 the p osterior distribution in the encompassing mo del. This allo ws sparsity and exploration of mo del uncertain t y while preserving approximately p osterior predictive b ehaviour based on the full mo del. F urthermore, the metho d is easy to implement given a combination of existing Bay esian softw are and softw are implemen ting lasso type fitting metho ds. While the metho d is more computationally intensiv e than calculating a solution path for a classical shrink age approac h like the lasso, this is the price to b e paid for exploring mo del uncertaint y and the computational demands of the metho d are certainly less than Ba yesian approac hes whic h searc h the mo del space directly . Our idea can also b e applied to structured v ariable selection problems suc h as those arising in ANO V A mo dels where w e might wish to include in teraction terms only in the presence of the corresponding main effects. A plug-in version of our approach is also related to the preconditioning metho d of Paul et al. (2007) for feature selection in “large p , small n ” regression problems. A key adv an tage of our approach is sim- plicit y in prior sp ecification. One is only required to sp ecify a prior on the parameter in the full mo del, and not a prior on the mo del space or a prior on parameters for every submo del. Nev ertheless, the p osterior distribution on the mo del space induced b y the pro jection can b e used in a similar w ay to the p osterior distribution on the mo del space in a traditional Ba yesian analysis for exploring mo del uncertain ty and different in terpretations of the data. There are man y alternativ e Bay esian strategies for mo del selection and exploring mo del uncertain ty to the one considered here. Ba yes factors and Bay esian mo del av eraging (Kass and Raftery , 1995, Hoeting et al. , 1999, F ern´ andez et al. , 2001) are the traditional approac hes to addressing issues of mo del uncertaint y in a Bay esian framework. As already men tioned, prior specification can b e v ery demanding for these approaches, although general default prior sp ecifications hav e been suggested (Berger and P ericc hi, 1996; O’Hagan, 1995). In our later examples we fo cus on generalized linear mo dels, and Raftery (1996) suggests some reference priors for Bay esian mo del comparison in this con text. Structuring priors hierarc hically and estimating hyperparameters in a data driven w a y is another wa y to reduce the complexit y 3 of prior sp ecification, and this can work well (George and F oster, 2000). V arious Bay esian predictiv e criteria for s election ha ve also been suggested (Laud and Ibrahim, 1995, Gelfand and Ghosh, 1998, Spiegelhalter et al. , 2002). These approaches generally do not require sp ecification of a prior on the mo del – how ev er, prior sp ecification for parameters in all mo dels is still required and this can b e quite demanding if there are a large num b er of mo dels to b e compared. Decision theoretic strategies whic h attempt to take account of the costs of data collection for co v ariates hav e also b een considered (Lindley , 1968, Bro wn et al. , 1999, Drap er and F ousk akis, 2000). Ba y esian mo del a veraging can also b e combined with mo del selection as in Bro wn et al. (2002). The pro jection metho d of Dupuis and Rob ert (2003) that we extend here is related to the Ba yesian reference testing approach of Bernardo and Rueda (2002) and the predictive metho d of V eh tari and Lampinen (2004). There are also less formal approaches to Ba yesian mo del comparison including p osterior predictive c hecks (Gelman, Meng and Stern, 1996) which are targeted according to the uses that will be made of a mo del. W e see one application of the metho ds we describ e here as b eing to suggest a small set of candidate simplifications of the full model whic h can b e examined b y such means as to their adequacy for sp ecific purposes. The pro jection metho ds w e use here for model selection are related to the lasso of Tibshi- rani (1996) and its man y later extensions. There has b een some recen t w ork on incorporating the lasso into Bay esian approaches to mo del selection. Tibshirani (1996) p ointed out the Ba yesian interpretation of the lasso as a posterior mo de estimate in a model with indep en- den t double exp onential priors on regression co efficients. Park and Casella (2008) consider the Ba yesian lasso, where estimators other than the p osterior mo de are considered – their estimators do not provide automatic v ariable selection via the p osterior mo de but conv e- nien t computation and inference are possible within their framework. Y uan and Lin (2005) consider a hierarc hical prior form ulation in Ba yesian model comparison and a certain analyt- ical approximation to p osterior probabilities connecting the lasso with the Ba yes estimate. 4 Recen tly Griffin and Bro wn (2007) hav e also considered alternatives to double exp onential prior distributions on the co efficien ts to provide selection approaches related to the adaptive lasso of Zou (2006). The structure of the pap er is as follows. In the next section we briefly review the metho d of Dupuis and Rob ert (2003) and consider our extension of their approac h. Computational issues and predictive inference are considered in Section 3, and then a consistency result relating to the p osterior distribution on model space induced b y the pro jection is prov ed in Section 4. W e describ e applications to structured v ariable selection in Section 5 and Section 6 considers connections b etw een our method and the preconditioning approac h to selection of P aul et al. (2007) in “large p , small n ” regression problems. Section 7 considers some examples and sim ulation studies and Section 8 concludes. 2 Pro jection approac hes to mo del selection 2.1 Metho d of Dupuis and Rob e rt Dupuis and Rob ert (2003) consider a metho d of mo del selection based on the Kullbac k- Leibler divergence b etw een the true mo del and its pro jection onto a submo del. Suppose w e are considering a problem of v ariable selection in regression, where M F denotes the full mo del including all cov ariates and M S is a submo del with a reduced set of co v ariates. W rite f ( y | θ F , M F ) and f ( y | θ S , M S ) for the corresp onding likelihoo ds of the mo dels with parameters θ F and θ S . Let θ 0 S = θ 0 S ( θ F ) b e the pro jection of θ F on to the submo del M S . That is, θ 0 S is the v alue for θ S for whic h f ( y | θ S , M S ) is closest in Kullbac k-Leibler div ergence to f ( y | θ F , M F ), so that θ 0 S = arg min θ S Z log f ( x | θ F , M F ) f ( x | θ S , M S ) f ( x | θ F , M F ) d x . 5 Let δ ( M S , M F ) = Z Z log f ( x | θ F , M F ) f ( x | θ 0 S , M S ) f ( x | θ F , M F ) d x p ( θ F | y ) d θ F b e the p osterior exp ected Kullbac k-Leibler divergence b etw een the full mo del and its Kullback- Leibler pro jection onto the submo del M S . The relativ e loss of explanatory p ow er for M S is d ( M S , M F ) = δ ( M S , M F ) δ ( M 0 , M F ) where M 0 denotes the model with no co v ariates and Dupuis and Robert (2003) suggest mo del selection b y choosing the subset mo del most parsimonious for which d ( M S , M F ) < c where c is an appropriately small constant. If there is more than one mo del of the minimal size satisfying the bound, then the one with the smallest v alue of δ ( M S , M F ) is c hosen. Dupuis and Rob ert (2003) show that δ ( M 0 , M F ) can b e interpreted as measuring the explanatory p o w er of the full mo del, and using an additivit y prop erty of pro jections they sho w that d ( M S , M F ) < c guarantees that our c hosen submodel S has explanatory p o wer at least 100(1 − c )% of the explanatory p ow er of the full mo del. This in terpretation is helpful in c ho osing c . F or a predictive quan tit y ∆ we further suggest approximating the predictive densit y p (∆ | y ) for a chosen subset model S b y p S (∆ | y ) = Z p (∆ | θ 0 S , y ) p ( θ 0 S | y ) d θ 0 S (1) where p ( θ 0 S | y ) is the p osterior distribution of θ 0 S under the p osterior distribution of θ F for the full mo del. 2.2 Extension of the metho d T o b e concrete supp ose we are considering v ariable selection for generalized linear mo dels. W rite y 1 , ..., y n for the resp onses with E ( y i ) = µ i and supp ose that each y i has a distribution 6 from the exp onen tial family f y i ; θ i , φ A i = exp y i θ i − b ( θ i ) φ/ A i + c y i ; φ A i where θ i = θ i ( µ i ) is the natural parameter, φ is a scale parameter, the A i are known weigh ts and b ( · ) and c ( · ) are kno wn functions. F or a smo oth in vertible link function g ( · ) we hav e η i = g ( µ i ) = x T i β where x i is a p -vector of co v ariates and β is a p -dimensional parameter v ector. W riting X for the design matrix with i th row x T i and η = ( η 1 , ..., η n ) T (the v alues of η are called the linear predictor v alues) w e hav e η = X β . W e write f ( y ; β ) for the lik eliho o d. Now let β b e fixed and supp ose w e wish to find for some subspace S of the parameter space the Kullbac k-Leibler pro jection onto S . The subspace S migh t b e defined b y a subset of “active” co v ariates as in Dupuis and Rob ert (2003) but here w e consider subspaces such as S = S ( λ ) = ( β : p X j =1 | β j | ≤ λ ) (2) S = S ( β ∗ , λ ) = ( β : p X j =1 | β j | / | β ∗ j | ≤ λ ) (3) where β ∗ is a parameter v alue that supplies w eighting factors in the constrain t or S = S ( λ, η ) = ( β : p X j =1 | β j | + η p X j =1 β j 2 ≤ λ ) . (4) The c hoice (2) leads to pro cedures related to the lasso of Tibshirani (1996), (3) relates to the adaptiv e lasso of Zou (2006) and (4) is related to the elastic net of Zou and Hastie (2005). In (3) w e ha ve allow ed the space that we are pro jecting onto to depend on some parameter 7 β ∗ , and later w e will allo w β ∗ to b e the parameter in the full mo del that we are pro jecting, so that the subspace that w e are pro jecting on to is adapting with the parameter. There is no reason to forbid this in what follows. In a later section we also consider pro jections which are related to Breiman’s (1995) non-negative garotte and which allo w for structured v ariable selection in the presence of hierarchical relationships among predictors. The close connection b et w een the adaptive lasso and the non-negative garotte is discussed in Zou (2006). No w let β S b e a parameter in the subspace S . In the developmen t b elow we consider the scale parameter φ as known – we consider an unknown scale parameter later. The Kullbac k-Leibler div ergence betw een f ( y ; β ) and f ( y ; β S ) is E β log f ( Y ; β ) f ( Y ; β S ) (5) where E β denotes the exp ectation with resp ect to f ( y ; β ). W riting µ i ( β ) and θ i ( β ) for the mean and natural parameter for y i when the parameter is β (5) is giv en b y E β n X i =1 Y i θ i ( β ) − b ( θ i ( β )) φ/ A i − n X i =1 Y i θ i ( β S ) − b ( θ i ( β S )) φ/ A i ! = n X i =1 µ i ( β ) θ i ( β ) − b ( θ i ( β )) φ/ A i − n X i =1 µ i ( β ) θ i ( β S ) − b ( θ i ( β S )) φ/ A i = − log f ( µ ( β ); β S ) + C where f ( µ ( β ); β S ) is the lik eliho o d ev aluated at β S with data y replaced b y fitted means µ ( β ) when the parameter is β and C represents terms not dep ending on β S and hence irrel- ev ant when minimizing ov er β S . So minimization with resp ect to β S sub ject to a constrain t just corresp onds to minimization of the negative log-likelihoo d sub ject to a constraint but with data µ ( β ) instead of y . Dupuis and Rob ert (2003) observ ed that in the case where the subspace S is defined by a set of active cov ariates calculation of the Kullbac k-Leibler pro jection can b e done using standard soft w are for calculation of the maxim um lik eliho o d 8 estimator in generalized linear mo dels: one simply “fits to the fit” using the fitted v alues for the full mo del instead of the resp onses y in the fitting for a subset mo del. Clearly for some choices of the resp onse distribution the data y might b e in teger v alued, but commonly generalized linear mo delling softw are do es not chec k this condition so that replacement of the data y with the fitted means for the full mo del can usually b e done. In our case, supp ose we wish to calculate the pro jection onto the subspace (2). W e m ust minimize − log f ( µ ( β ); β S ) sub ject to p X j =1 | β S,j | ≤ λ where β S,j denotes the j th element of β S . This is equiv alent to minimization of − log f ( µ ( β ); β S ) + δ p X j =1 | β S,j | for some δ > 0. Here the calculation just in volv es the use of the lasso of Tibshirani (1996) where in the calculation the resp onses are replaced by the fitted v alues µ ( β ). In the case of a Gaussian linear mo del, the whole solution path o ver v alues of δ can be calculated with computational effort equiv alent to a single least squares fit (Osb orne et al. , 2000, Efron et al. , 2004). Efficien t algorithms are also av ailable for generalized linear mo dels (Park and Hastie, 2007). Note that b ecause the relationship betw een λ and δ dep ends on β , generally w e calculate the whole solution path ov er δ in order to calculate the pro jection onto the subscpace defined by the constraint. One can consider other constrain ts apart from an L 1 constrain t. F or instance, (3) leads to minimization of − log f ( µ ( β ); β S ) + δ p X j =1 | β S,j | / | β j | for δ > 0 if we choose β ∗ = β which gives a certain adaptive lasso estimator (Zou, 2006) 9 obtained by fitting with data y replaced by µ ( β ). Also, (4) leads to minimization of − log f ( µ ( β ); β S ) + δ p X j =1 | β S,,j | + γ p X j =1 β 2 S,j for p ositive constants δ and γ whic h is related to the elastic net of Zou and Hastie (2005). Later w e fo cus on the lasso and adaptiv e lasso t yp e pro jections for whic h the parameter λ needs to b e chosen. One wa y to do this is to follo w a similar strategy to the one employ ed in Dupuis and Rob ert (2003). W riting M S = M S ( λ ) for the mo del sub ject to the restriction (2) or (3), w e c ho ose λ as small as p ossible sub ject to d ( M S , M F ) < c . Note that c ho osing the single parameter λ is muc h easier than searc hing ov er subsets as in Dupuis and Rob ert (2003), and that d ( M S , M F ) increases monotonically as λ decreases. An alternative to choosing c based on relative explanatory p ow er would b e to directly choose the observ ed sparsity in the mo del: that is, to c ho ose λ so that the posterior mean of the num b er of active comp onents in the pro jection is equal to some sp ecified v alue. The relative loss of explanatory p ow er can also b e rep orted for this c hoice. Another p ossibilit y is to av oid choosing λ at all, but instead to simply rep ort the characteristics of the mo dels app earing on the solution path o ver differen t samples from the posterior distribution in the full mo del. 2.3 Gaussian resp onse with unkno wn v ariance So far w e ha ve considered the scale parameter φ to b e kno wn. F or binomial and Poisson resp onses φ = 1, but we also wish to consider Gaussian linear models with unkno wn v ariance φ = σ 2 . Calculation of pro jections is still straightforw ard in the Gaussian linear mo del with unkno wn v ariance parameter. No w supp ose we ha ve mean and v ariance parameter β and σ 2 , and write β S and σ 2 S for corresp onding parameter v alues in some subspace S . In this 10 case (5) is E β ,σ 2 − n 2 log 2 π σ 2 − n X i =1 ( Y i − µ i ( β )) 2 2 σ 2 + n 2 log(2 π σ 2 S ) + n X i =1 ( Y i − µ i ( β S )) 2 2 σ 2 S ! = − n 2 log 2 π σ 2 − n 2 + n 2 log 2 π σ 2 S + 1 2 σ 2 S n X i =1 ( σ 2 + ( µ i ( β ) − µ i ( β S )) 2 ) = n 2 log σ 2 S σ 2 − 1 + nσ 2 2 σ 2 S + 1 2 σ 2 S n X i =1 ( µ i ( β ) − µ i ( β S )) 2 . Minimization with resp ect to β S sub ject to an L 1 constrain t in volv es minimization of n X i =1 ( µ i ( β ) − µ i ( β S )) 2 + δ p X j =1 | β S,j | and the minimizer β 0 S o ver β S is indep enden t of the v alue of σ 2 S . Once the pro jection β 0 S is calculated, the pro jection σ 2 S 0 is easily sho wn from the expression ab o v e to b e σ 2 S 0 = σ 2 + ( µ ( β ) − µ ( β 0 S )) T ( µ ( β ) − µ ( β 0 S ) n . 3 Computation and predictiv e inference W e hav e already discussed computation of the Kullback-Leibler pro jection onto subspaces of certain forms in generalized linear models. Hence generating from the p osterior distribution of the pro jection is easily done – w e simply generate a sample from the p osterior distribution p ( β | y ) of the parameter, β (1) , ..., β ( s ) sa y , and then for eac h of these parameter v alues w e calculate the corresp onding pro jections β (1) 0 , ..., β ( s ) 0 . Note that the pattern of sparsity of the pro jection is differen t for differen t samples from the p osterior distribution, so that the p osterior distribution of the pro jection provides one w ay of exploring mo del uncertaint y . W e 11 appro ximate the predictive densit y (1) by 1 s s X i =1 p (∆ | β ( i ) 0 , y ) where p (∆ | β ( i ) 0 , y ) denotes the predictiv e distribution for ∆ giv en the parameter v alue β ( i ) 0 and data y . W e can write γ 0 j = I ( β 0 j 6 = 0) for the indicator of whether or not the j th comp onen t of the pro jection of β is nonzero, and γ 0 = ( γ 0 1 , ..., γ 0 p ) T . Then w e can write p S (∆ | y ) in a differen t form to (1), namely p S (∆ | y ) = X γ 0 p ( γ 0 | y ) p (∆ | γ 0 , y ) where p (∆ | γ 0 , y ) = Z p (∆ | γ 0 , β 0 , y ) p ( β 0 | γ 0 , y ) d β 0 . These expressions for predictiv e densities are formally similar to those arising in Ba y esian mo del a v eraging, where different v alues for the indicators γ 0 define differen t mo dels. Of course, the p osterior distribution on γ 0 cannot b e in terpreted in quite the same wa y as the p osterior distribution on the mo del space in a formal Bay esian approach to mo del com- parison, but we still b elieve that examining p ( γ 0 | y ) can b e helpful for exploring differen t in terpretations of the data in our approac h. W e illustrate this in the examples b elo w. 4 Consisten t mo del selection Let β 0 denote the true parameter, and for an y β write A ( β ) = { k : β k 6 = 0 } so that for instance A ( β 0 ) is the set of nonzero co efficien ts for the true parameter. Supp ose that β is 12 some fixed parameter v alue and consider β 0 S whic h minimizes − log p ( µ ( β ); β S ) sub ject to p X j =1 | β S,j | / | β j | ≤ λ. (6) with resp ect to β S . That is, we consider in this section Kullbac k-Leibler pro jections for subspaces of the form (3) related to the adaptiv e lasso of Zou (2006). A similar result to the one b elow can b e pro v ed for some pro jections related to the non-negativ e garotte in view of the close connection b etw een the adaptive lasso and the non-negativ e garotte (Zou, 2006). W e will examine pro jections related to the non-negativ e garotte when we lo ok at structured v ariable selection problems later. Considering only the case of a generalized linear mo del, the minimization problem ab o ve is equiv alen t to minimization of n X i =1 { µ i ( β ) θ i ( β S ) + b ( θ i ( β S )) } + γ p X j =1 | β S,j | / | β j | (7) where there is a natural one to one corresp ondence b etw een γ and λ in (6) and for simplicity w e are considering the case where the observ ation sp ecific weigh ts φ/ A i are all equal. Actu- ally , as mentioned earlier, the relationship b etw een γ and δ dep ends on β , but this can b e ignored in what follows: if we take λ = # A ( β 0 ) + O p (1 / √ n ), where # A ( β 0 ) is the num b er of the en tries in A ( β 0 ), the consistency result for (6) can be similarly established. W e also consider henceforth the natural link function θ i ( β ) = x T i β , although the argumen ts b elo w extend easily to other link functions and the case of unequal weigh ts for the observ ations. If β is a sample from the p osterior distribution then under general conditions it is a ro ot- n consistent estimator, and w e kno w that for any ∈ [0 , 1] there exists C not depending on n suc h that with N n = { α : k α − β 0 k ≤ C / √ n } , P r ( β ∈ N n ) ≥ 1 − where the probability 13 is calculated with resp ect to the distribution q ( β ) = Z p ( β | y ) p ( y | β 0 ) dy . W e will sho w that lim n →∞ P r ( A ( β 0 S ) = A ( β 0 ) for ev ery β ∈ N n ) = 1 for a suitable sequence of v alues γ n for γ and hence lim n →∞ P r ( A ( β 0 S ) = A ( β 0 )) = 1 . That is, the p osterior distribution of the pro jection indicates the correct mo del with prob- abilit y one as n → ∞ for a suitable choice of the sequence of parameters γ n defining the pro jection. W e assume the following regularit y conditions, whic h are the same as in Zou (2006). 1. The Fisher information I ( β 0 ) is p ositiv e definite; 2. There is a large enough open set O containing the true parameter β 0 suc h that ∀ β ∈ O , | b 000 ( x T β ) | ≤ M ( x ) < ∞ and E [ M ( x ) | x i x j x k | ] < ∞ for any i, j, k . Theorem 1. F or β ∈ N n , if γ n / √ n → 0 and γ n → ∞ as n → ∞ , β 0 S whic h minimizes (7) is consistent in v ariable selection and is √ n -consisten t. 14 The pro of, which is an easy adaptation of a similar result in Zou (2006), is given in the App endix. 5 Structured v ariable selection In the last section we considered v ariable selection in generalized linear mo dels: as b efore, write η = X β where η is the vector of linear predictor v alues, X is a design matrix and β is a parameter vector. In this section w e will com bine the structured v ariable selection approac h of Y uan, Joseph and Zou (2007) whic h uses the non-negative garotte of Breiman (1995) with our pro jection approac h to v ariable selection. Consider the mo del in which η = X ( β ∗ ◦ θ ) where β ∗ = ( β ∗ 1 , ..., β ∗ p ) T and θ = ( θ 1 , ..., θ p ) T are p -vectors of parameters with θ j ≥ 0, P p j =1 θ j ≤ p and where ◦ denotes element b y elemen t m ultiplication of tw o vectors. W e write β ∗ instead of β to emphasize that β ∗ is a differen t parameter in a differen t mo del to the original one, although our original mo del can b e recov ered b y setting β ∗ = β and θ a p -v ector of ones. W e can consider the pro jection of this parameter on to the subspace S = S ( β , λ ) = { ( β ∗ , θ ) : β ∗ = β , p X j =1 θ j ≤ λ, θ j ≥ 0 , j = 1 , ..., p } . T o calculate the pro jection we need to minimize − log p ( µ ( β ); β ◦ θ ) with respect to θ sub ject to θ j ≥ 0 and P p j =1 θ j ≤ λ . F or the Gaussian case, this is just Breiman’s non-negative garotte applied to the fitted v alues µ ( β ) rather than the data y . The minimization problem is easily solv ed. See Y uan, Joseph and Zou (2007) for computational details in the sligh tly more complicated situation of structured fitting of generalized linear mo dels. As p ointed out by Zou (2006), the non-negative garotte is v ery closely related to the adaptive lasso. In solving the minimization problem ab o v e w e ma y find that some of the θ j are zero. This 15 allo ws v ariable selection in the original mo del for which w e hav e replaced the parameter β with β ∗ ◦ θ . Y uan, Joseph and Zou (2007) also suggested a w ay in whic h hierarc hical structure can b e incorp orated in the non-negative garotte, and we make use of this idea here. F ollo wing their notation, for the i th predictor (corresp onding to the i th column of X ) we write D i for the set of predictors which are so-called parents of i . Under the strong heredit y principle (Chipman, 1996) all the predictors in D i m ust b e included in the mo del b efore the i th predictor is included. Under the weak heredity principle at least one of the predictors in D i m ust b e included b efore the i th predictor is included. Y uan, Joseph and Zou (2007) suggest the constraints θ i ≤ θ j for j ∈ D i to enforce the strong heredit y principle and θ i ≤ P j ∈D i θ j to enforce the weak heredity principle. The linear nature of the constrain ts ensures that computations are still tractable. 6 “Large p , small n ” problems and preconditioning P aul et al. (2007) suggest that in “large p , small n ” regression problems with more predictors than observ ations it is b eneficial to separate the problem of obtaining go o d predictions from that of v ariable selection. With this in mind, they suggest a t wo step pro cedure where first a go o d predictor ˆ y is found for the mean resp onse, and then in a second stage a mo del selection and fitting pro cedure such as the lasso is applied with the resp onses y replaced b y ˆ y . They sho w that such a procedure can perform b etter than application of the fitting and selection pro cedure to the ra w outcome y . W e note that their second stage of fitting to a set of fitted v alues (using the lasso in the case of a linear model for instance) commonly corresponds to calculation of a Kullbac k- Leibler pro jection if ˆ y is obtained by plugging in of a point estimate of the mo del parameters. Our approac h is related though slightly differen t – w e generate from the p osterior distribution of the parameters, and for each draw from the p osterior w e fit to the corresp onding set of 16 fitted v alues. 7 Examples and sim ulations 7.1 Lo w birth w eigh t data W e consider application of our approac h to the lo w birth w eight data of Hosmer and Lemesho w (1989). The data are concerned with 189 births at a US hospital. W e consider a logistic regression mo del for a resp onse whic h is a binary indicator for birthw eight b eing less than 2.5kg. The predictors in the model are sho wn in T able 1. These predictors had b een shown to b e asso ciated with low birthw eigh t in past studies and it w as desired to find out which of the predictors w ere imp ortant for the medical cen tre where the data w ere collected. In our analysis we leav e the binary predictors unc hanged, but cen tre and scale the other predictors to hav e mean zero and v ariance one. W e fit the full mo del with a prior on the co efficients that is normal, with mean vector 0 and co v ariance matrix 3 I where I denotes the identit y matrix. This is a fairly noninformativ e prior on the scale of the probabilities: note that making the prior v ariances of co efficients v ery large w ould correspond to a v ery informativ e prior on the probability scale where high prior probabilit y is placed on co efficient v alues corresp onding to most of the fitted v alues b eing close to zero or one. Co efficien t estimates (p osterior means) and p osterior standard deviations obtained b y fitting the full mo del are sho wn in T able 2. These results were obtained using the MCMCpac k pac k age in R (Martin and Quinn, 2007). T o obtain the results rep orted w e ran the MCMC scheme for 1000 “burn in” and 10000 sampling iterations. W e consider our pro jection approac h to selection with the lasso type constraint (2) as w ell as the adaptive lasso type constraint (3). F or eac h sample from the p osterior, the whole solution path w as calculated for the pro jection as the parameter λ w as v aried, and all the distict mo dels on the path w ere recorded. Th us for each sample from the posterior, 17 Figure 1: Plot of relativ e loss of explanatory p o wer versus p osterior exp ected mo del size for lo w birth weigh t example. The solid line is for the lasso pro jection and the dashed line is for the adaptive lasso. 02468 1 0 0 2 04 06 08 0 1 0 0 Average model size Explanatory loss w e should ha ve roughly 11 distinct mo dels (including the n ull and the full mo dels) because there are 10 co v ariates. W e say “roughly” 11 distinct mo dels b ecause it is p ossible for a v ariable to lea ve the mo del as the regularization parameter is increased in the solution path, but this is not v ery common in practice. T able 3 shows the tw o most frequen tly app earing mo dels of each size across solution paths for all samples from the p osterior, together with the relativ e frequency with whic h this mo del app ears amongst models with the same n umber of co v ariates. The table only rep orts results for the adaptive lasso. The reason wh y we only rep ort results for the adaptive lasso is sho wn in Figure 1, whic h giv es the relative loss of explanatory p o wer as a function of the p osterior exp ected n umber of v ariables selected in the pro jection. In the figure, the solid line is for the lasso pro jection and the dashed line for the adaptiv e lasso – it can b e seen that for the adaptiv e lasso there is a reduced loss of explanatory p ow er compared to the lasso for a given lev el of parsimon y . 18 Examining the mo dels in T able 3, the indicators for num b er of first trimester ph ysician visits (ftv), one of the indicators for race and age app ear to be the least imp ortant cov ariates. This is consistent with other published analyses of this data set suc h as in V enables and Ripley (2002). They consider step wise v ariable selection using AIC in a main effects model including all the cov ariates, whic h results in exclusion of the dummy v ariables co ding for ftv and age. They also consider inclusion of second order in teractions and note that there is some evidence for an in teraction b etw een ftv and age. Raftery and Zheng (2003) also consider some reference Bay esian mo del av eraging approac hes to the analysis of this dataset. Their conclusions concerning the imp ortant v ariables (based on marginal p osterior probabilities of inclusion) are similar to ours, although it should b e noted that their mo del is different with first trimester ph ysician visits treated as a contin uous co v ariate rather than b eing co ded through t wo indicator v ariables as in our analysis, whic h follows V enables and Ripley (2002). 7.2 Structured v ariable selection example Our next example concerns a v ariable selection problem with hierarchical structure. The data are simulated follo wing a similar example discussed in Y uan, Joseph and Zou (2007). The purpose of considering this example is to sho w that the metho d described in Section 5 whic h incorp orates hierarc hical constrain ts into v ariable selection is b eneficial. In particular, w e consider a mo del satisfying the strong heredit y principle, and then sho w that a pro jection approac h to v ariable selection which imp oses strong heredit y outperforms an approac h whic h do es not imp ose this constraint. By outp erforms here we mean that for a given lev el of parsimon y (a given v alue for the p osterior exp ected num b er of nonzero comp onents of the pro jection) w e hav e a greater p osterior probabilit y for the model c hosen via the pro jection to encompass the true mo del, with a relatively small loss of explanatory p o wer due to imp osing the constraint. In the example of Y uan, Joseph and Zou (2007) three predictors X 1 , X 2 and X 3 are 19 sim ulated follo wing a m ultiv ariate normal distribution with mean zero and Cov( X i , X j ) = ρ | i − j | for v alues ρ of − 0 . 5, 0 and 0 . 5. There are n = 50 observ ations simulated and 100 differen t datasets are considered for each v alue of ρ . W e consider fitting a mo del that includes X 1 , X 2 , X 3 and all second order interaction terms (nine p ossible terms in all - no in tercept is fitted). The true mo del used to generate Y is Y = 3 X 1 + 2 X 2 + 1 . 5 X 1 X 2 + where ∼ N (0 , 9). Note that this mo del resp ects the strong heredity principle – for the in teraction term, the corresp onding main effects are also included. W e use tw o v ariants of our non-negativ e garotte approac h to fitting the data. The first v arian t resp ects the strong heredit y principle, and the second v ariant do es not imp ose any constrain t. W e considered a grid of 100 equally spaced v alues for λ b etw een 0 and 9 in the constraint P p j =1 θ j ≤ λ as describ ed in Section 5. Using the usual noninformative prior in the Bay esian linear mo del on the regression co efficients and v ariance parameter of p ( β , σ 2 ) ∝ σ − 2 , we can sim ulate directly from the posterior distribution without the need for iterativ e metho ds (see, for instance, Gelman et al. , 2003). F or eac h sim ulated dataset we generated 1000 samples from the p osterior distribution. F or eac h v alue of λ in the grid and each draw from the p osterior distribution, we calculated pro jections (with and without the strong heredit y constraint) recording the n umber of active v ariables, whether or not the pro jection encompassed the true mo del and the Kullback-Leibler divergence b etw een the full mo del and the pro jections. Plotting the p osterior exp ected v alues of these quantities against one another for the grid of v alues of λ giv es a sense of the trade off b et ween parsimon y , predictive accuracy and iden tification of the imp ortant v ariables. Figure 2 shows plots of the probability of encompassing the true mo del and of the explanatory loss v ersus posterior expected n umber 20 of v ariables selected in the pro jected mo del for the tw o pro jection metho ds (imp osing strong heredit y , solid line, and no constraint, broken line). F or a given level of parsimony it can b e seen that the pro jection metho d which imp oses the hierarc hical constrain t has a higher p osterior probability of encompassing the true mo del so that enforcing the strong heredity principle when it is approp oriate is helpful for obtaining more parsimonious mo dels and for iden tifying the imp ortant v ariables. 7.3 “Large p , small n ” regression W e now consider some simulations for the “large p , small n ” case where there are more predictors than observ ations. W e consider generating 100 datasets with n = 20 and 40 predictors. The datasets follow a linear model y = X β + where ∼ N (0 , 5 2 I ). Belo w w e write x i. for the i th row of the design matrix X . 1. Example 1: set β j = 0, j = 1 , ..., 10, j = 21 , ..., 30, β j = 2, j = 11 , ..., 20, j = 31 , ..., 40. W e ha v e x i. ∼ N (0 , I ). 2. Example 2: set β j = 4, j = 1 , ..., 5, β j = 0, j = 6 , ..., 40. W e generate x i. ∼ N (0 , Σ ) with Σ j j = 1, j = 1 , ..., 40 and Σ ij = 0 . 5 i 6 = j . In the first example there is no multicollinearit y , but 20 active predictors. In the second example there is mo derate m ulticollinearity but only 5 active predictors. Since the n um b er of predictors is double the num b er of observ ations in b oth examples, here we are considering a “large p , small n ” situation. F or a Ba yesian analysis of the data with an encompassing mo del we consider the Bay esian lasso of Park and Casella (2008). They consider the follo wing priors on parameters. If an in tercept term β 0 is included, this is giv en 21 Figure 2: Plots of p osterior probabilit y of encompassing the true mo del v ersus a v erage mo del size (left column) and explanatory loss v ersus av erage mo del size (righ t column). The parameter ρ takes v alues of − 0 . 5 (top) 0 (middle) and 0 . 5 (bottom). Solid line is for strong heredit y and broken line no constrain t. 0 2 4 6 8 0.0 0.2 0.4 0.6 0.8 1.0 Average model size Encompassing probability 0 2 4 6 8 0 20 40 60 80 100 Average model size Explanatory loss 0 2 4 6 8 0.0 0.2 0.4 0.6 0.8 1.0 Average model size Encompassing probability 0 2 4 6 8 0 20 40 60 80 100 Average model size Explanatory loss 0 2 4 6 8 0.0 0.2 0.4 0.6 0.8 1.0 Average model size Encompassing probability 0 2 4 6 8 0 20 40 60 80 100 Average model size Explanatory loss 22 a flat prior p ( β 0 ) ∝ 1 and this parameter can b e integrated out of the mo del analytically . Conditional on the v ariance σ 2 , the β j are conditionally indep enden t in their prior with p ( β j | σ 2 ) = λ 2 σ 2 exp − λ | β j | √ σ 2 where λ > 0 is a shrink age parameter. Finally an inv erse gamma prior can b e used for σ 2 , where we use I G (0 . 01 , 0 . 01). A hyperprior can b e placed on λ , or it can b e estimated b y marginal maxim um likelihoo d as outlined in Park and Casella (2008) or b y cross-v alidation. F or illustrativ e purposes here w e will fix λ = 10 in the computations below. P ark and Casella (2008) outline an efficient MCMC scheme for computations. W e consider pro jections based on the adaptive lasso, and Figure 3 shows a plot of the false disco very rate (av erage n umber of v ariables incorrectly selected divided by a v erage num b er of v ariables selected) v ersus a v erage mo del size. The a verages are o ver 100 sim ulation repli- cates. Quite a large model w ould need to b e chosen to encompass all the active predictors. Note that if w e use the classical lasso to do selection then the n umber of predictors chosen b y the pro jection cannot b e more than the num b er of observ ations. Figure 3 also shows the explanatory loss as a function of the av erage mo del size. Mo del uncertain ty is considerable here, and we b elieve that the distribution on the mo del space defined by the pro jection is extremely v aluable for exploring mo del uncertaint y . Figure 4 sho ws for the first sim ulation replicate in each example the marginal p osterior probabilities of the v ariables b eing nonzero in the pro jection. The pro jections in the figure corresp ond to av erage mo del size of 13 (ex- ample 1) and 10 (example 2) corresponding to approximately 20% explanatory loss in b oth cases. The lines show the mean v alues for these probabilities within the activ e and inactive groups. It is clear that there is some useful information in the p osterior distribution of the pro jection for distinguishing active from inactive v ariables. It is imp ortant to realize that Figure 4 is examining posterior probabilities of selection in the pro jection for a single repli- 23 cate, not the frequen tist b eha viour of selection across replicates - such frequen tist b eha viour is summarized b y the false disco very rates of Figure 3. W e also stress that p osterior prob- abilities of selection in the pro jection dep end on the prior in the encompassing mo del and the tolerable explanatory loss. 8 Conclusion W e hav e discussed the use of Kullbac k-Leibler pro jections related to the lasso as a to ol for the exploration of model uncertaint y . There are man y p ossible extensions to our suggested framew ork. One interesting p ossibility which we are currently pursuing is the use of pro- jections related to v ersions of the lasso for selection on batches of parameters and random effects. App endix Pr o of of The or em 1 : W e write β = β 0 + v / √ n , where k v k ≤ C . Denoting u = √ n ( β S − β 0 ), w e define L ( u ) = n X i =1 {− µ i ( β ) x T i ( β 0 + u √ n ) + b ( x T i ( β 0 + u √ n )) } + γ p X j =1 | β 0 j + u j √ n | / | β j | and Z ( u ) = L ( u ) − L ( 0 ) . The minimizer u 0 of L ( u ) gives the minimizer β 0 S of (7) by β 0 S = β 0 + u 0 / √ n , and hence to study β 0 S it suffices to consider u 0 . F ollowing Zou (2006), w e decomp ose Z ( u ) as Z ( u ) = Z 1 ( u ) + Z 2 ( u ) + Z 3 ( u ) + Z 4 ( u ) 24 Figure 3: Plots of false discov ery rate versus a verage model size (top ro w) and explanatory loss v ersus a verage mo del size (b ottom row) for examples 1 (left) and 2 (righ t). Plotted p oin ts corresp ond to a grid of v alues for the constraint λ . 0 5 10 15 0.30 0.35 0.40 0.45 Average model size False Discovery Rate 0 5 10 15 0.60 0.65 0.70 0.75 0.80 Average model size False Discovery Rate 0 5 10 15 20 40 60 80 100 Average model size Explanatory loss 0 5 10 15 0 20 40 60 80 100 Average model size Explanatory loss 25 Figure 4: Plots of marginal p osterior probabilit y of inclusion versus v ariable for first sim- ulation replicate for example 1 (left) and 2 (righ t). Probabilities are for pro jections with a verage mo del size 13 (left) and 10 (righ t) corresp onding in b oth cases to approximately 20% explanatory loss. The lines sho w the mean p osterior probabilities of inclusion among the active and inactive groups. ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● 0 10 20 30 40 0.2 0.4 0.6 0.8 Variable Posterior Selection Probability ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● ● 0 10 20 30 40 0.0 0.2 0.4 0.6 Variable Posterior Selection Probability where Z 1 ( u ) = − n X i =1 [ µ i ( β ) − b 0 ( x T i β 0 )] x T i u √ n Z 2 ( u ) = n X i =1 1 2 b 00 ( x T i β 0 ) u T x i x T i n u Z 3 ( u ) = γ p X j =1 | β 0 j + u j √ n | − | β 0 j | | β j | Z 4 ( u ) = n − 3 / 2 n X i =1 1 6 b 000 ( x T i β ∗ )( x T i u ) 3 where β ∗ lies b et ween β 0 and β 0 + u / √ n . Since µ i ( β 0 ) = b 0 ( β 0 ), we can write the first term as Z 1 ( u ) = n X i =1 ( µ i ( β ) − µ i ( β 0 )) x T i u √ n = n X i =1 ( v T √ n + o p (1 / √ n )) µ 0 i ( β 0 ) x T i u √ n = a T n u + o p (1) , 26 where k a n k = O p (1) . F or the second term Z 2 ( u ), we ha ve n X i =1 b 00 ( x T i β 0 ) x i x T i n → I ( β 0 ) . Th us Z 2 ( u ) → 1 / 2 u T I ( β 0 ) u . F or the third term, following the arguments in Zou (2006), w e ha ve γ n | β 0 j + u j √ n | − | β 0 j | | β j | → p 0 β 0 j 6 = 0 0 β 0 j = 0 and u j = 0 ∞ β 0 j = 0 and u j 6 = 0 since γ n satisfies γ n / √ n → 0 and γ n → ∞ . The fourth term is of the order O p (1 / √ n ) as 6 √ nZ 4 ( u ) ≤ n X i =1 1 n M ( x i ) | x T i u | 3 → p E [ M ( x ) | x T u | 3 ] < ∞ . F rom the ab ov e arguments, w e m ust ha ve u A = O p (1) and u A C → d 0 , where u A is the subv ector of u corresp onding to the co efficients in A ( β 0 ). Thus β 0 S is √ n - consisten t, and ∀ j ∈ A ( β 0 ), with probabilit y tending to one, β 0 j is estimated by a nonzero co efficien t. It suffices then to show that ∀ k / ∈ A ( β 0 ), with probabilit y tending to one, β 0 k will b e estimated b y zero. Otherwise, by the Karush-Kuhn-T uc k er optimalit y conditions, w e m ust ha ve 1 √ n n X i =1 x ik ( µ i ( β ) − b 0 ( x T i β S )) = γ n √ n | β k | sgn( β S,k ) . (8) 27 It is easy to see that the left hand side is equiv alent to 1 √ n n X i =1 x ik [( µ i ( β ) − µ i ( β 0 )) − ( b 0 ( x T i β S ) − b 0 ( x T i β 0 ))] = 1 √ n n X i =1 x ik [( β − β 0 ) T µ 0 i ( β 0 ) − b 00 ( x T i β 0 ) x T i ( β S − β 0 ) + o p ( k β − β 0 k + k β S − β 0 k )] = O p (1) . Ho wev er, the righ t hand side satisfies γ n √ n | β k | → ∞ , since β k = β 0 k + O p (1 / √ n ) = O p (1 / √ n ) for β k ∈ N and β k / ∈ A . This con tradicts (8) and the pro of is completed. References Berger, J. and Pericc hi, L. (1996) The in trinsic Bay es factor for mo del selection and predic- tion. J. Amer. Statist. Asso c. , 91, 109-122. Bernardo, J. M. and Rueda, R. (2002). Bay esian h yp othesis testing: A reference approach. Int. Statist. R ev. , 70, 351-372. Breiman, L. (1995) Better subset regression using the non-negativ e garotte. T e chnometrics , 3, 373-384. Bro wn, P .J., F earn, T. and V ann ucci, M. (1999) The c hoice of v ariables in multiv ariate regression: A non-conjugate Bay esian decision theory approac h. Biometrika , 86, 635– 648. 28 Bro wn, P .J., V ann ucci, M. and F earn, T. (2002) Bay es Mo del a v eraging with selection of regressors. J. R oy. Statist. So c. B , 64, 519–536. Chipman, H. (1996). Ba yesian v ariable selection with related predictors. Canadian J. Statist. , 24, 17–36. Drap er, D. and F ousk akis, D. (2000) A case study of sto c hastic optimization in health p olicy: problem formulation and preliminary results. J. Glob al Optimizn. , 18, 399–416. Dupuis, J.A. and Rob ert, C.P . (2003) V ariable selection in qualitativ e mo dels via an entropic explanatory p ow er. J. Statist. Plan. Inf. , 111, 77–94. F ern´ andez, C., Ley , E., and Steel, M.F.J. (2001) Benchmark priors for Ba y esian mo del a veraging. J. Ec onomet. , 100, 381–427. Gelfand, A.E. and Ghosh, S.K. (1998) Mo del choice: a minim um p osterior predictiv e loss approac h. Biometrika , 85, 1–11. Gelman, A., Carlin, J. B., Stern, H. S., and Rubin, D. B. (2003). Ba yesian Data. Analysis (2nd edition). London: CR C Press. Gelman, A., Meng, X.-L. and Stern, H. (1996). P osterior predictive assessment of mo del fitness via realized discrepancies. Statistic a Sinic a , 6, 733–807. George, E.I. and F oster, D.P . (2000) Calibration and empirical Bay es v ariable selection. Biometrika , 87, 731–747. Griffin, J.E. and Bro wn, P .J. (2007). Bay esian adaptiv e lassos with non-conv ex p enalization. T echnical rep ort av ailable at http://www.kent.ac.uk/ims/personal/jeg28/BALasso.pdf 29 Goutis, C. and Rob ert, C.P . (1998). Mo del c hoice in generalised linear mo dels: A Ba yesian approac h via Kullback-Leibler pro jections. Biometrika , 85, 29-37 Ho eting, J.A., Madigan, D., Raftery , A.E. and V olinsky , C.T. (1999). Bay esian mo del av er- aging: A tutorial (with Discussion). Statistic al Scienc e , 14, 382–401. Correction: v ol. 15, pp. 193-195. Corrected v ersion av ailable at http://www.stat.washington.edu/www/research/online/hoeting1999.pdf Kohn, R., Smith, M. and Chan, D. (2001). Nonparametric regression using linear com bina- tions of basis functions. Statistics and Computing , 11, 313–322. Lindley , D.V. (1968) The choice of v ariables in multiple regression (with discussion). J. R oy. Statist. So c. B , 30, 31–66. Martin, A. and Quinn, M. (2007). The MCMCpack pac k age (v ersion 0.9-1). R pack age man ual a v ailable at http://cran.r-project.org/doc/packages/MCMCpack.pdf Mengersen, K. and Rob ert, C. (1996). T esting for mixtures: aBa y esian en tropy approach. In: Bay esian Statistics 5, Eds. J.O. Berger, J.M. Bernardo, A.P . Dawid, D.V. Lindley and A.F.M. Smith. pp. 255–276, Oxford Univ ersity Press. O’Hagan, A. (1995). F ractional Ba yes factors for mo del comparison (with discussion). J. R oy. Statist. So c. B , 56, 99–138. Osb orne, M.R., Presnell, B. and T urlach, B.A. (2000). A new approac h to v ariable selection in least squares problems. IMA Journal of Numeric al Analysis , 20, 389-403. P ark, T. and Casella, G. (2008). The Ba yesian lasso. J. Amer. Statist. Asso c. , 103, 681–686. 30 P ark, M.-Y. and Hastie, T. (2007). An L1 regularization-path algorithm for generalized linear mo dels. J. R oy. Statist. So c. B , 69, 659677. P aul, D., Bair, E., Hastie, T. and Tibshirani, R. (2007) Pre-conditioning for feature selection and regression in high-dimensional problems. A nnals of Statistics , to app ear. Raftery , A.E. (1996). Appro ximate Bay es factors and accoun ting for mo del uncertaint y in generalized linear mo dels. Biometrika , 83, 251-266. Raftery , A.E. and Zheng, Y. (2003). Discussion: P erformance of Bay esian Mo del Av eraging. J. A mer. Statist. Asso c. , 98, 931–938. Spiegelhalter, D.J., Best, N.G., Carlin, B.P . and v an der Linde, A. (2002) Bay esian measures of mo del complexity and fit (with discussion). J. R oy. Statist. So c. B , 64, 583–639. Tibshirani, R. (1996) Regression shrink age and selection via the lasso. J. R oy. Statist. So c. B , 58, 267–88. V ehtari, A. and Lampinen, J. (2004). Mo del Selection via Predictiv e Explanatory Po w er. Rep ort B38, Lab oratory of Computational Engineering, Helsinki Universit y of T echnology . Y uan, M., Joseph, V.R. and Zou, H. (2007). Structured v ariable selection and estimation. T echnical rep ort. Av ailable at http://www2.isye.gatech.edu/~myuan/YuanPub.html Y uan, M. and Lin, Y. (2005). Efficien t empirical Ba yes v ariable selection and estimation. J. A mer. Statist. Asso c. , 100, 1215–1225. Zou, H. and Hastie, T. (2005) Regularization and v ariable selection via the elastic net. J. R oy. Statist. So c. B , 67, 301–320. 31 Zou, H. (2006). The adaptive lasso and its oracle properties. J. A mer. Statist. Asso c. , 101, 1418–1429. 32 T able 1: Predictors for lo w birth weigh ts data set Predictor Description age age of mother in y ears lwt w eight of mother (lbs) at least menstrual perio d raceblac k indicator for race=black (0/1) raceother indicator for race other than white or blac k (0/1) smok e smoking status during pregnancy (0/1) ptd previous premature lab ors (0/1) h t history of hypertension (0/1) ui has uterine irritabilit y (0/1) ftv1 indicator for one physician visit in first trimester (0/1) ftv2+ indicator for t wo or more ph ysician visits in first trimester (0/1) T able 2: Posterior means and standard deviations of co efficients for full mo del fitted to low birth weigh t data. Predictor P osterior Posterior Mean Standard Deviation age -0.21 0.21 lwt -0.48 0.22 raceblac k 1.06 0.52 raceother 0.65 0.43 smok e 0.68 0.41 ptd 1.31 0.47 h t 1.69 0.67 ui 0.64 0.46 ftv1 -0.49 0.46 ftv2 0.11 0.44 33 T able 3: Tw o most frequently app earing mo dels of each size in solution path for the pro- jection together with relative frequency of each mo del within all app earances of mo del of the same size (Prob/Size). Zeros and ones in the columns lab elled b y the predictors show inclusion and exclusion for differen t mo dels (rows). Mo del Predictor Prob/ Size Size age lwt blac k other smoke ptd h t ui ftv1 ftv2 1 0 0 0 0 0 1 0 0 0 0 0.48 1 0 1 0 0 0 0 0 0 0 0 0.17 2 0 1 0 0 0 1 0 0 0 0 0.24 2 0 0 0 0 0 1 1 0 0 0 0.10 3 0 1 0 0 0 1 1 0 0 0 0.13 3 0 1 1 0 0 1 0 0 0 0 0.06 4 0 1 0 0 1 1 1 0 0 0 0.07 4 0 1 0 0 0 1 1 0 1 0 0.06 5 0 1 1 0 1 1 1 0 0 0 0.05 5 0 1 1 0 0 1 1 1 0 0 0.05 6 0 1 1 1 1 1 1 0 0 0 0.06 6 1 1 1 0 1 1 1 0 0 0 0.05 7 0 1 1 1 1 1 1 1 0 0 0.10 7 1 1 1 1 1 1 1 0 0 0 0.09 8 1 1 1 1 1 1 1 1 0 0 0.13 8 0 1 1 1 1 1 1 1 1 0 0.13 9 1 1 1 1 1 1 1 1 1 0 0.29 9 1 1 1 1 1 1 1 1 0 1 0.19 34

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment