Binary Causal-Adversary Channels

In this work we consider the communication of information in the presence of a causal adversarial jammer. In the setting under study, a sender wishes to communicate a message to a receiver by transmitting a codeword x=(x_1,...,x_n) bit-by-bit over a …

Authors: Michael Langberg, Sidharth Jaggi, Bikash Kumar Dey

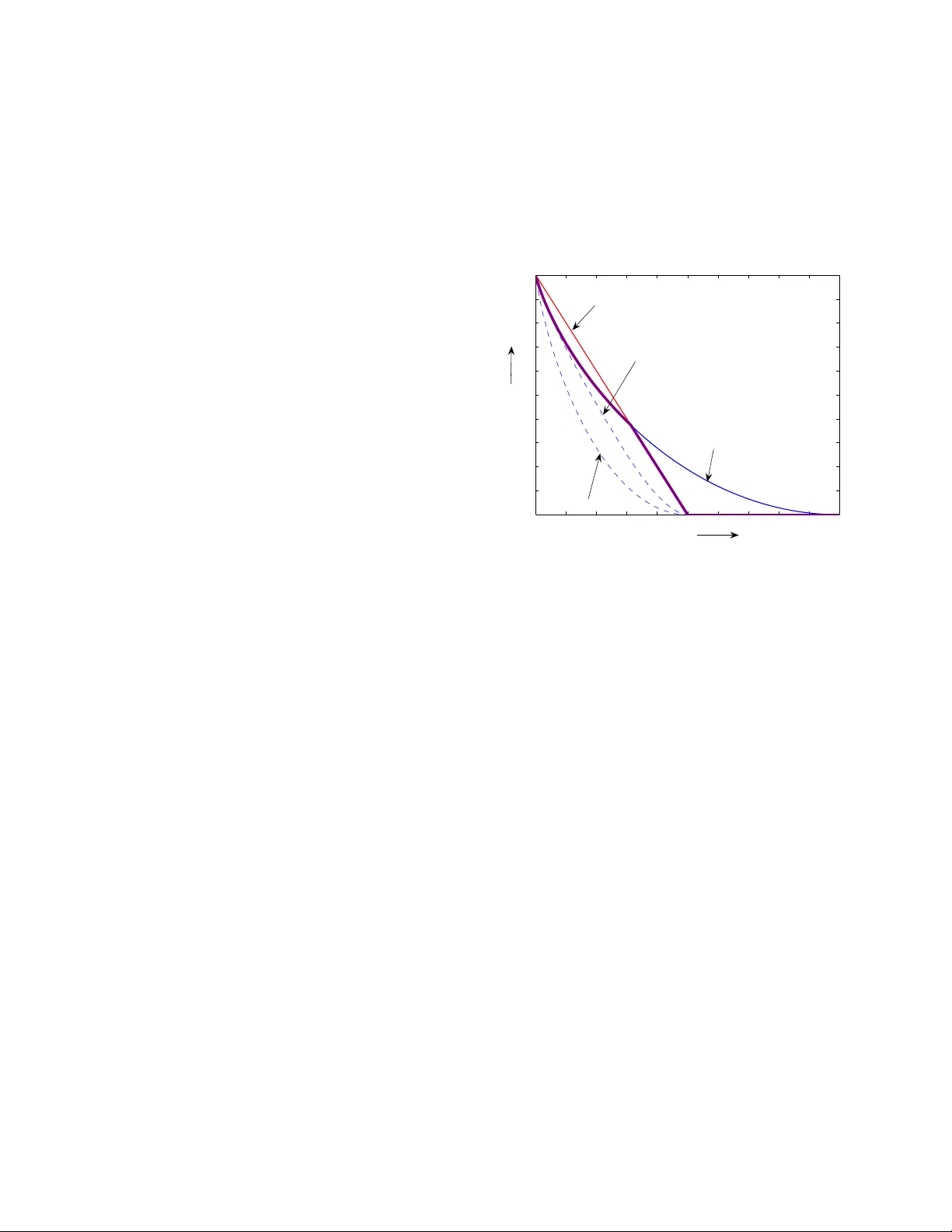

M. Langberg, Computer Science Division, Open University of Israel, Raanana 43107, Israel, mikel@openu.ac.il

S. Jaggi, Department of Information Engineering, Chinese University of Hong Kong, Shatin, N.T., Hong Kong, jaggi@ie.cuhk.edu.hk

B. K. Dey, Department of Electrical Engineering, Indian Institute of Technology Bombay, Mumbai, India, 400 076, bikash@ee.iitb.ac.in

Abstract: In this work we consider the communication of information in the presence of a causal adversarial jammer. In the setting under study, a sender wishes to communicate a message to a receiver by transmitting a codeword $x = (x_1, \dots, x_n)$ bit-by-bit over a communication channel. The adversarial jammer can view the transmitted bits $x_i$ one at a time, and can change up to a $p$-fraction of them. However, the decisions of the jammer must be made in an online or causal manner. Namely, for each bit $x_i$ the jammer's decision on whether to corrupt it or not (and on how to change it) must depend only on $x_j$ for $j \le i$. This is in contrast to the "classical" adversarial jammer which may base its decisions on its complete knowledge of $x$. We present a non-trivial upper bound on the amount of information that can be communicated. We show that the achievable rate can be asymptotically no greater than $\min\{1 - H(p), (1 - 4p)^+\}$. Here $H(\cdot)$ is the binary entropy function, and $(1 - 4p)^+$ equals $1 - 4p$ for $p \le 0.25$, and $0$ otherwise.

I. INTRODUCTION

Consider the following adversarial communication scenario. A sender Alice wishes to transmit a message $u$ to a receiver Bob. To do so, Alice encodes $u$ into a codeword $x$ and transmits it over a binary channel. The codeword $x = x_1, \dots, x_n$ is a binary vector of length $n$. However, Calvin, a malicious adversary, can observe $x$ and corrupt up to a $p$-fraction of the $n$ transmitted bits, i.e., $pn$ bits.
In the classical adversarial channel model, e.g., [4], it is usually assumed that Calvin has full knowledge of the entire codeword $x$, and based on this knowledge (together with the knowledge of the code shared by Alice and Bob) Calvin can maliciously plan what error to impose on $x$. We refer to such an adversary as an omniscient adversary. For binary channels, the optimal rate of communication in the presence of an omniscient adversary has been an open problem in classical coding theory for several decades. The best known lower bound is given by the Gilbert-Varshamov bound [10], [18], which implies that Alice can transmit at rate $1 - H(2p)$ to Bob. Conversely, the tightest upper bound was given by McEliece et al. [12], and has a positive gap from the lower bound for all $p \in (0, 1/4)$ (see Fig. 1).

Footnote 0: The work of B. K. Dey was supported by Bharti Centre for Communication in IIT Bombay, that of M. Langberg was supported in part by ISF grant 480/08, and that of S. Jaggi was partially supported by MS-CU-JL grants.

Fig. 1. Bounds on capacity $C$ of the adversarial channel as a function of $p$ (curves shown: $1 - 4p$, the McEliece bound, $1 - H(p)$, and the Gilbert-Varshamov bound). The bold line in purple is our upper bound of $\min\{1 - H(p), (1 - 4p)^+\}$.

In this work we initiate the analysis of coding schemes that allow communication against certain adversaries that are weaker than the omniscient adversary. We consider adversaries that behave in a causal or online manner. Namely, for each bit $x_i$, we assume that Calvin decides whether to change it or not (and if so, how to change it) based on the bits $x_j$ for $j \le i$ alone, i.e., the bits that he has already observed. In this case we refer to Calvin as a causal adversary.
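To make the causality restriction concrete, the following is a minimal Python sketch; it is not taken from the paper. The names `run_causal_channel` and `flip_ones_jammer` are hypothetical, the jammer is modeled as a deterministic function of the prefix observed so far, and the budget is taken to be $\lfloor pn \rfloor$ flips.

```python
from typing import Callable, List, Sequence

# A causal jammer sees only the prefix x_1..x_i and returns 1 to flip bit i, else 0.
Jammer = Callable[[Sequence[int]], int]


def run_causal_channel(x: Sequence[int], jammer: Jammer, p: float) -> List[int]:
    """Pass codeword x through a causal jammer limited to floor(p * n) flips."""
    n = len(x)
    budget = int(p * n)  # assumption: the budget pn is rounded down
    y = []
    for i in range(n):
        # The jammer is only ever shown x[0..i], never the future bits.
        flip = jammer(x[: i + 1]) if budget > 0 else 0
        budget -= flip
        y.append(x[i] ^ flip)
    return y


def flip_ones_jammer(prefix: Sequence[int]) -> int:
    """Toy strategy (illustrative only): flip every 1 as soon as it is observed."""
    return 1 if prefix[-1] == 1 else 0
```

An omniscient adversary would instead be a function of the whole of $x$; the only change in this sketch would be to pass `x` rather than `x[: i + 1]` to the jammer.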
Causal adversaries arise naturally in practical settings, where adversaries typically have no a priori knowledge of Alice's message $u$. In such cases they must simultaneously learn $u$ based on Alice's transmissions, and jam the corresponding codeword $x$ accordingly. This causality assumption is reasonable for many communication channels, both wired and wireless, where Calvin is not co-located with Alice. For example, consider the scenario in which the transmission of $x = x_1, \dots, x_n$ is done during $n$ channel uses over time, where at time $i$ the bit $x_i$ is transmitted over the channel. Calvin can only corrupt a bit when it is transmitted (and thus his error is based on his view so far). To decode the transmitted message, Bob waits until all the bits have arrived. As in the omniscient model, Calvin is restricted in the number of bits $pn$ he can corrupt. This might be because of limited processing power or limited transmit energy.

Recently, the problem of codes against causal adversaries was considered and solved by the authors [6] for large-$q$ channels, i.e., channels where Alice's codeword $x = x_1, \dots, x_n$ is considered to be a vector of length $n$ over a field of "large" size $q$. Each symbol $x_i$ may represent a large packet of bits in practice. Calvin is allowed to arbitrarily corrupt a $p$-fraction of the symbols, rather than bits. A tight characterization of the rate-region for various scenarios is given in [6], and computationally efficient codes that achieve these rate-regions are presented.

However, the techniques used in characterizing the rate-region of causal adversaries over large-$q$ channels do not work over binary channels. This is because each symbol in a large-$q$ channel can contain within it a "small" hash that can be used to verify the symbol. This is the crux of the technique used to achieve the lower bounds in [6].
We currently do not know how to extend this method to binary channels. Conversely, for upper bounds, the geometry of the space of length-$n$ codewords over large-$q$ alphabets is significantly different from that corresponding to binary alphabets. For instance, for large-$q$ channels the volume of an $n$-sphere of radius $\alpha n$ ($0 \le \alpha \le 1$) over $\mathbb{F}_q$ is $\sim q^{n\alpha}$. This leads to simpler bounds for large-$q$ channels.

In this work we initiate the study of binary causal-adversary channels, and present two upper bounds on their capacity: $1 - H(p)$ and $(1 - 4p)^+$. The upper bound of $1 - H(p)$ is very "natural". Namely, it is not hard to verify that if Calvin attacks Alice's transmission by simulating the well-studied Binary Symmetric Channel [4], he can force a communication rate of no more than $1 - H(p)$.

The upper bound of $(1 - 4p)^+$ presented in this work is non-trivial for both its implications and its proof techniques. The bound demonstrates that at least for some values of $p$, the achievable rate is bounded away from $1 - H(p)$. For $p \in (p_0, 0.5)$, $1 - 4p$ is strictly less than $1 - H(p)$ (here $p_0$ is the value of $p$ satisfying $H(p) = 4p$, and can be computed to be approximately $0.15642\ldots$). In fact, for $p \in (0.25, 0.5)$ our bound implies that no communication at positive rate is possible, which is much stronger than the result obtained by the upper bound of $1 - H(p)$ (see Fig. 1). Our proof techniques include a combination of tools from the fields of Extremal Combinatorics (e.g., Turán's theorem [17]) and classical Coding Theory (e.g., the Plotkin bound [14], [2]).

II. MODEL

For any integer $i$ let $[i]$ denote the set $\{1, \dots, i\}$. Let $R \ge 0$ be Alice's rate. An $(n, Rn)$-code $\mathcal{C}$ is defined by Alice's encoder and Bob's corresponding decoder, as below.
Alice: Alice's message $u$ is assumed to be a random variable $U$ with entropy $Rn$, over alphabet $\mathcal{U}$. We consider two types of encoding schemes for Alice.

For deterministic codes, Alice's message $U$ is assumed to be uniformly distributed over $\mathcal{U} = [2^{Rn}]$. Her deterministic encoder is a deterministic function $f_D(\cdot)$ that maps every $u$ in $[2^{Rn}]$ to a vector $x(u) = (x_1, \dots, x_n)$ in $\{0,1\}^n$. Alice's codebook $\mathcal{X}$ is the collection $\{x(u)\}$ of all possible transmitted codewords.

More generally, Alice and Bob may use probabilistic codes. For such codes, the random variable $U$ corresponding to Alice's message may have an arbitrary distribution $p_U$ (with entropy $Rn$) over an arbitrary alphabet $\mathcal{U}$. Alice's codebook $\mathcal{X}$ is an arbitrary collection $\{\mathcal{X}(u)\}$ of subsets of $\{0,1\}^n$. For each subset $\mathcal{X}(u) \subset \mathcal{X}$, there is a corresponding codeword random variable $X(u)$ with codeword distribution $p_{X(u)}$ over $\mathcal{X}(u)$. For any value $U = u$ of the message, Alice's encoder chooses a codeword from $\mathcal{X}(u)$ randomly according to the distribution $p_{X(u)}$. Alice's message distribution $p_U$, codebook $\mathcal{X}$, and all the codebook distributions $p_{X(u)}$ are known to both Bob and Calvin, but the values of the random variables $U$ and $X(\cdot)$ are unknown to them. If $\mathcal{X}(u) = \{x(u, r) : r \in \Lambda_u\}$, then the transmitted codeword $X(U)$ has the probability distribution given by $\Pr[X(U) = x(u, r)] = p_U(u)\, p_{X(u)}(x(u, r))$. Let $p$ be the overall distribution of codewords $x = x(u, r)$ of Alice. It holds that $p(x(u, r)) = p_U(u)\, p_{X(u)}(x)$ and $p(x) = \sum_{u \in \mathcal{U}} p_U(u)\, p_{X(u)}(x)$.

Calvin/Channel: Calvin possesses $n$ jamming functions $g_i(\cdot)$ and $n$ arbitrary jamming random variables $J_i$ that satisfy the following constraints.

Causality constraint: For each $i \in [n]$, the jamming function $g_i(\cdot)$ maps $x^i = (x_1, \dots, x_i)$ and $J^i = (J_1, \dots, J_i)$ to an element of $\{0, 1\}$.

Power constraint: The number of indices $i \in [n]$ for which the value of $g_i(\cdot)$ equals $1$ is at most $pn$. That is, for all $x^n, J^n$, $\sum_i g_i(x^i, J^i) \le pn$.

The output of the channel is the set of bits $y_i = x_i \oplus g_i(x^i, J^i)$ for $i = 1, \dots, n$.

Bob: Bob's decoder is a (potentially) probabilistic function $h(\cdot)$ of the received vector $y$. It maps the vectors $y = (y_1, \dots, y_n)$ in $\{0,1\}^n$ to the messages in $\mathcal{U}$.

Code parameters: Bob is said to make a decoding error if the message $u'$ he decodes differs from the message $u$ encoded by Alice. The probability of error for a given message $u$ is defined as the probability, over Alice, Calvin and Bob's random variables, that Bob makes a decoding error. The probability of error of the code $\mathcal{C}$ is defined as the average over all $u \in \mathcal{U}$ of the probability of error for message $u$.

We define two types of rates and corresponding capacities. The rate $R$ is said to be weakly achievable if for every $\varepsilon > 0$, $\delta > 0$ and every sufficiently large $n$ there exists an $(n, (R - \delta)n)$-code that allows communication with probability of error at most $\varepsilon$. The supremum over $n$ of the weakly achievable rates is called the weak capacity and is denoted by $C_w$. The rate $R$ is said to be strongly achievable (see footnote 1) if for every $\delta > 0$, $\exists\, \alpha > 0$ so that for sufficiently large $n$ there exists an $(n, (R - \delta)n)$-code that allows communication with probability of error at most $e^{-\alpha n}$. The supremum over $n$ of the strongly achievable rates is called the strong capacity and is denoted by $C_s$.

Remark: Since a rate that is strongly achievable is always weakly achievable but the converse is not true in general, $C_w \ge C_s$.

Footnote 1: This definition is motivated by the extensive literature on error exponents in information theory; for large classes of information-theoretic problems, e.g. [9], [5], the probability of error of the coding scheme is required to decay exponentially in block length.

III. RELATED WORK AND OUR RESULTS

To the best of our knowledge, communication in the presence of a causal adversary has not been explicitly addressed in the literature (other than our prior work for causal adversaries over large-$q$ channels). Nevertheless, we note that the model of causal channels, being a natural one, has been "on the table" for several decades, and the analysis of the online/causal channel model appears as an open question in the book of Csiszár and Körner [5] (in the section addressing Arbitrarily Varying Channels [1]). Various variants of causal adversaries have been addressed in the past, for instance [1], [11], [15], [16], [13]; however, the models considered therein differ significantly from ours.

At a high level, we show that for causal adversaries, for a large range of $p$ (for all $p > 0.25$), the maximum achievable rate equals that of the classical "omniscient" adversarial model (i.e., $0$). This may at first come as a surprise, as the online adversary is weaker than the omniscient one, and hence one may suspect that it allows a higher rate of communication.

We have two main results. Theorem 1 gives an upper bound on the weak capacity $C_w$ if Alice's encoder is deterministic. Theorem 2 gives an upper bound on the strong capacity $C_s$ in the more general case where Alice's encoder is probabilistic. Due to certain limitations of our proof techniques, we do not present any bounds on the weak capacity in the latter setting. The upper bound in both cases equals $\min\{1 - H(p), (1 - 4p)^+\}$.

Theorem 1 (Deterministic encoder): For deterministic codes, $C_s \le C_w \le \min\{1 - H(p), (1 - 4p)^+\}$.

Theorem 2 (Probabilistic encoder): For probabilistic codes, $C_s \le \min\{1 - H(p), (1 - 4p)^+\}$.
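The bound appearing in both theorems is easy to evaluate numerically. The Python sketch below is illustrative only: the function names are hypothetical, and the crossover point $p_0$ (stated earlier in the text as approximately $0.15642$) is located by plain bisection on $H(p) - 4p$, which is assumed to change sign exactly once on the chosen interval.

```python
import math


def binary_entropy(p: float) -> float:
    """Binary entropy H(p) in bits, with H(0) = H(1) = 0."""
    if p <= 0.0 or p >= 1.0:
        return 0.0
    return -p * math.log2(p) - (1 - p) * math.log2(1 - p)


def upper_bound(p: float) -> float:
    """Evaluate min{1 - H(p), (1 - 4p)^+}."""
    return min(1.0 - binary_entropy(p), max(1.0 - 4.0 * p, 0.0))


def crossover_p0(lo: float = 0.01, hi: float = 0.25, iters: int = 60) -> float:
    """Bisection for the solution of H(p) = 4p inside (lo, hi)."""
    for _ in range(iters):
        mid = (lo + hi) / 2
        if binary_entropy(mid) - 4 * mid > 0:
            lo = mid  # H(p) still above 4p: the root lies to the right
        else:
            hi = mid
    return (lo + hi) / 2
```

Below $p_0$ the term $1 - H(p)$ is the smaller of the two, above $p_0$ the term $(1 - 4p)^+$ takes over, and from $p = 0.25$ onward the bound is $0$.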
We note that under a very weak notion of capacity in which one only requires the success probability to be bounded away from zero (instead of approaching $1$), the capacity of the omniscient channel, and thus the binary causal-adversary channel, approaches $1 - H(p)$. This follows from the fact that for $n$ sufficiently large and $\ell \ge 4$ there exist $(n, Rn)$ codes which are $(\ell, pn)$ list decodable with $R = 1 - H(p)(1 + 1/\ell)$ [7]. Communicating using an $(\ell, pn)$ list decodable code allows Bob to decode a list of size $\ell$ of messages which includes the message transmitted by Alice. Choosing a message uniformly at random from his list, Bob decodes correctly with probability at least $1/\ell$.

A. Outline of proof techniques

The upper bound of $1 - H(p)$ follows directly by describing an attack for Calvin wherein he approximately simulates a BSC($p$) (Binary Symmetric Channel [4] with crossover probability $p$). More precisely, for each $i \in [n]$ and any sufficiently small $\varepsilon > 0$, Calvin flips $x_i$ with probability $p - \varepsilon$ until he runs out of his budget of $pn$ bit-flips. By the Chernoff bound [3], with very high probability he does not run out of his budget, and is therefore indistinguishable from a BSC($p - \varepsilon$). But it is well-known [4] that in this case the optimal rate of communication from Alice to Bob is $1 - H(p - \varepsilon)$. Taking the limit when $\varepsilon \to 0$ implies our bound.

The upper bound of $(1 - 4p)^+$ is more involved. For the case where Alice's encoder is deterministic, the proof of Theorem 1 has the following overall structure. Assume for sake of contradiction that Alice attempts to communicate at rate greater than $R = (1 - 4p)^+$. To prove our upper bound we design the following wait-and-push attack for Calvin. Calvin starts by waiting for Alice to transmit approximately $Rn$ bits.
As Alice is assumed to communicate at rate greater than $R$, the set of Alice's codewords $\mathcal{X}'$ consistent with the bits Calvin has seen so far is "large" with "high probability". Calvin constructs $\mathcal{X}'$ and chooses a codeword $x'$ uniformly at random from $\mathcal{X}'$. He then actively "pushes" $x$ in the direction of $x'$ by flipping, with probability $1/2$, each future $x_i$ that differs from $x'_i$. If Calvin succeeds in pushing $x$ to a word $y$ roughly midway between $x$ and $x'$, a careful analysis demonstrates that regardless of Bob's decoding strategy, Bob is unable to determine whether Alice transmitted $x$ or $x'$, causing a decoding error with probability at least $1/2$ in this case. So, to prove our bound, we must show that with constant probability (independent of the block length $n$) Calvin will indeed succeed in pushing $x$ to $y$. Namely, that Alice's codeword $x$ and the codeword $x'$ chosen at random by Calvin are of distance at most $2pn$. Roughly speaking, we prove the above by a detailed analysis of the distance structure of the set of codewords in any code, using tools from extremal combinatorics and coding theory.

The case where Alice's encoder may be randomized is more technically challenging, and is considered in Theorem 2. At a high level, the strategy of Calvin for a probabilistic encoder follows that outlined for the deterministic case. However, there are two main difficulties in its extended analysis. Firstly, the symmetry between $x$ and $x'$ no longer exists. Namely, the fact that Bob may not be able to distinguish which of the two was transmitted by Alice does not necessarily cause a significant decoding error, since the probability of $x'$ being transmitted by Alice may well be significantly smaller than the probability that $x$ was transmitted. Secondly, the fact that both $x$ and $x'$ may correspond to the same message $u$ places the entire scheme in jeopardy.
It then no longer matters whether Bob decodes to $x$ or $x'$: in both cases the decoded message will be that sent by Alice.

To overcome these difficulties, we describe a more intricate analysis of Calvin's attack. Roughly speaking, we prove that a "large" subset $\mathcal{X}''$ of $\mathcal{X}'$ behaves "well". Any $x'$ chosen uniformly at random from $\mathcal{X}'$ is, with "significant" probability, in $\mathcal{X}''$, and has three properties corresponding to those when Alice uses a deterministic encoder. That is, $x'$ is sufficiently close to $x$ as desired, it has approximately the same probability of transmission that $x$ does (thus preserving the needed symmetry), and it also corresponds to a message that differs from that corresponding to $x$. All in all, we show that the above three properties hold with probability $1/\mathrm{poly}(n)$, which suffices to bound the strong capacity of the channel at hand (but not the weak capacity).

In the case of a randomized encoder for Alice, we assume that the messages may have a nonuniform distribution, and also that any message is encoded into one of a set of possible codewords as per some probability distribution on that set. One may think of various other ways of encoding, for example the following, to confuse Calvin. But as we discuss in the next paragraph, such schemes are also covered in our setup.

Multiple codebooks: In this scheme, Alice maintains a set of codes $\mathcal{C}_1, \mathcal{C}_2, \dots, \mathcal{C}_L$. For transmitting a message $u$, she randomly selects the code $\mathcal{C}_i$ with probability $q_i$. If the set of messages is $\mathcal{U} = \{1, 2, \dots, M\}$ with a probability distribution given by $p_i \triangleq \Pr\{u = i\}$, and the code $\mathcal{C}_r$ contains the codewords $\{x(u, r) \mid u = 1, 2, \dots, M\}$, then in our setup, the corresponding codebook for the message $u$ will be $\mathcal{X}(u) = \{x(u, r) \mid r = 1, 2, \dots, L\}$. This codebook may have fewer than $L$ codewords due to common codewords in the original codes.
The induced probability distribution in this codebook of $u$ is given by $\Pr\{x \mid u\} = \sum_{r : x(u, r) = x} q_r$.

If Alice picks a code and uses it to encode several messages, even then she does not gain anything. First, if she uses the same code to encode too many messages (and Calvin knows the encoding scheme, as assumed), then both Bob and Calvin will know the code used after receiving or "reading" some codewords. On the other hand, if a randomly chosen code is used only to encode a block of few messages, this is equivalent to using a longer ("superblock") code in our setup. The only difference is that the probability of error analysed in our setup is the probability of error in decoding the "superblocks" rather than the smaller blocks/codewords.

The proofs of the upper bounds corresponding to $1 - H(p)$ have already been sketched in Section III-A. Hence we only provide proofs of the upper bounds corresponding to $(1 - 4p)^+$ in Theorems 1 and 2.

IV. PROOF OF THEOREM 1

Let $R = (1 - 4p)^+ + \varepsilon$ for some $\varepsilon > 0$. Let $\log(\cdot)$ denote the binary logarithm, here and throughout. By assumption for deterministic codes, Alice's message space $\mathcal{U}$ is of size $2^{Rn}$. Here we assume that $2^{Rn}$ is an integer. This implies that the set $\mathcal{X}$ of Alice's transmitted codewords is of size $2^{Rn}$ (see footnote 2).

Footnote 2: In fact, $\mathcal{X}$ may be smaller; however, we note that for codes of optimal rate, $|\mathcal{X}|$ is of size exactly $2^{Rn}$. If $|\mathcal{X}| < 2^{Rn}$, then for some transmitted codeword $x$ at least two messages $u$ and $u'$ must both be encoded to $x$. On receiving $x$, Bob's probability of error is maximal: it is at least $1/2$. Therefore changing the codebook so as to encode $u'$ as some $x' \notin \mathcal{X}$ cannot increase the probability of decoding error.

We now present Calvin's attack. We show that for any fixed $\varepsilon > 0$, regardless of Bob's decoding strategy, there is a decoding error with constant probability (namely, the error probability is independent of $n$). Calvin's attack is in two stages. First Calvin passively waits until Alice transmits $\ell = (R - \varepsilon/2)n$ bits over the channel. Let $x^\ell \in \{0,1\}^\ell$ be the value of the codeword observed so far. He then considers the set of codewords that are consistent with the observed $x^\ell$. Namely, Calvin constructs the set $\mathcal{X}|_{x^\ell} = \{x = x_1, \dots, x_n \in \mathcal{X} \mid x_1, \dots, x_\ell = x^\ell\}$. He then chooses an element $x' \in \mathcal{X}|_{x^\ell}$ uniformly at random. In the second stage, Calvin follows a random bit-flip strategy. That is, for each remaining bit $x'_i$ of $x'$ that differs from the corresponding bit $x_i$ of the transmitted $x$, he flips the transmitted bit with probability $1/2$, until he has either flipped $pn$ bits, or until $i = n$.

We analyze Calvin's attack by a series of claims. We first show that with high probability (w.h.p.) the set $\mathcal{X}|_{x^\ell}$ is large.

Claim 4.1: With probability at least $1 - 2^{-\varepsilon n/4}$, the set $\mathcal{X}|_{x^\ell}$ is of size at least $2^{\varepsilon n/4}$.

Proof: The number of messages $u$ for which $\mathcal{X}|_{x^\ell}(u)$ is of size less than $2^{\varepsilon n/4}$ is at most the number of distinct prefixes $x^\ell$ times $2^{\varepsilon n/4}$, which in turn is at most $2^{\ell + \varepsilon n/4} = 2^{(R - \varepsilon/4)n}$. Since the $2^{Rn}$ messages are equiprobable, such a message is transmitted with probability at most $2^{-\varepsilon n/4}$.

Now assume that the message $u$ is such that its corresponding set $\mathcal{X}|_{x^\ell}(u)$ is of size at least $2^{\varepsilon n/4}$. We now show that this implies that the transmitted codeword $x$ and the codeword $x'$ chosen by Calvin are distinct and of small Hamming distance apart with a positive probability (independent of $n$).

Claim 4.2: Conditioned on Claim 4.1, with probability at least $\frac{\varepsilon}{64p}$, $x \ne x'$ and $d_H(x, x') < 2pn - \varepsilon n/8$.

Proof: Consider the undirected graph $G = (V, E)$ in which the vertex set $V$ consists of the set $\mathcal{X}|_{x^\ell}$ and two nodes are connected by an edge if their Hamming distance is less than $d = 2pn - \varepsilon n/8$.
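The graph $G$ just defined can be made concrete on a toy codebook. The Python sketch below is illustrative only and plays no role in the proof; the helper names (`hamming`, `consistent_set`, `close_pairs_graph`, `edge_probability`) are assumptions introduced here.

```python
from itertools import combinations
from typing import List, Sequence, Tuple

Codeword = Tuple[int, ...]


def hamming(a: Sequence[int], b: Sequence[int]) -> int:
    """Hamming distance between two equal-length binary words."""
    return sum(ai != bi for ai, bi in zip(a, b))


def consistent_set(codebook: List[Codeword], prefix: Codeword) -> List[Codeword]:
    """Codewords that agree with the observed prefix: the vertex set V."""
    return [x for x in codebook if x[: len(prefix)] == prefix]


def close_pairs_graph(vertices: List[Codeword], d: float) -> List[Tuple[int, int]]:
    """Edges join codewords at Hamming distance strictly less than d."""
    return [
        (i, j)
        for i, j in combinations(range(len(vertices)), 2)
        if hamming(vertices[i], vertices[j]) < d
    ]


def edge_probability(vertices: List[Codeword], d: float) -> float:
    """Probability that two independent uniform vertices are distinct and adjacent.

    This equals 2|E| / |V|^2, which is at least the |E| / |V|^2 used as a
    lower bound in the proof.
    """
    edges = close_pairs_graph(vertices, d)
    return 2 * len(edges) / len(vertices) ** 2
```

In this picture, an independent set of $G$ is a set of consistent codewords that are pairwise far apart, which is what lets the proof treat it as an error-correcting code on the suffix.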
An independent set $\mathcal{I}$ in $G$ corresponds to a subset of codewords in $\{0,1\}^n$ that are all (pairwise) at distance at least $d$. Since the codewords in $\mathcal{X}|_{x^\ell}$ all have the same prefix $x^\ell$, one may consider only the suffix (of length $n - \ell = 4pn - \varepsilon n/2$) of the codewords in $\mathcal{X}|_{x^\ell}$. Here we assume $p \le 0.25$; minor modifications in the proof are needed for larger $p$. The set of vectors defined by the suffixes in an independent set $\mathcal{I}$ of $G$ now corresponds to a binary error-correcting code of length $4pn - \varepsilon n/2$, with $|\mathcal{I}|$ codewords and minimum distance $d$. By Plotkin's bound [2] there do not exist binary error-correcting codes with more than $\frac{2d}{2d - (4pn - \varepsilon n/2)} + 1$ codewords. Thus $\mathcal{I}$, any maximal independent set in $G$, must satisfy

$$|\mathcal{I}| \le \frac{2(2pn - \varepsilon n/8)}{2(2pn - \varepsilon n/8) - 4pn + \varepsilon n/2} + 1 = \frac{16p}{\varepsilon}. \tag{1}$$

By Turán's theorem [17], any undirected graph $G$ of size $|V|$ and average degree $\Delta$ has an independent set of size at least $|V|/(\Delta + 1)$. This, along with (1), implies that the average degree of our graph $G$ satisfies

$$\frac{|V|}{\Delta + 1} \le |\mathcal{I}| \le \frac{16p}{\varepsilon}.$$

This in turn implies that

$$\Delta \ge \frac{\varepsilon |V|}{16p} - 1 \ge \frac{\varepsilon |V|}{32p}.$$

The second inequality holds for large enough $n$, since (conditioned on Claim 4.1) $|V|$ is of size at least $2^{\varepsilon n/4}$.

To summarize the above discussion, we have shown that our graph $G$ has large average degree $\Delta \ge \frac{\varepsilon |V|}{32p}$. We now use this fact to analyze Calvin's attack. By the definition of deterministic codes, any codeword in $\mathcal{X}$ is transmitted with equal probability. Also, by definition both $x$ (the transmitted codeword) and $x'$ (the codeword chosen by Calvin) are in $V = \mathcal{X}|_{x^\ell}$. Hence both $x$ and $x'$ are uniform in $\mathcal{X}|_{x^\ell}$. This implies that with probability at least $|E|/|V|^2$ the nodes corresponding to codewords $x$ and $x'$ are distinct and connected by an edge in $G$. This in turn implies that with probability at least $|E|/|V|^2$, $x \ne x'$ and $d_H(x, x') < 2pn - \varepsilon n/8$, as required.
Now

$$\frac{|E|}{|V|^2} = \frac{\Delta |V|}{2 |V|^2} \ge \frac{\varepsilon}{64p}.$$

Conditioned on Claim 4.2, Calvin's codeword $x'$ is very close to Alice's transmitted codeword $x$. Specifically, $d_H(x, x') \in (0, 2pn - \varepsilon n/8)$. We now show that if Calvin follows the random bit-flip strategy, then from Bob's perspective (w.h.p.) $x$ and $x'$ were equally likely to have been transmitted by Alice. We first show that during Calvin's random bit-flip process, w.h.p., Calvin does not "run out" of his budget of $pn$ bit flips. In the remainder of this proof, $d$ is understood as the distance $d_H(x, x')$.

Claim 4.3: Conditioned on Claim 4.2, with probability at least $1 - 2^{-\Omega(\varepsilon^2 n)}$,

$$d_H(x, y) \in \left( \frac{d}{2} - \frac{\varepsilon n}{16},\ \frac{d}{2} + \frac{\varepsilon n}{16} \right). \tag{2}$$

Proof: The expected number of locations flipped by Calvin is $d/2 \le pn - \varepsilon n/16$. Assume that $d/2 = pn - \varepsilon n/16$ (for smaller values of $d$ the bound is only tighter). By Sanov's theorem [4, Theorem 12.4.1], the probability that the number of bits flipped by Calvin deviates from the expectation $d/2$ by more than $\varepsilon n/16$ is at most $e^{-\Omega(\varepsilon^2 n^2/d)} \le e^{-\Omega(\varepsilon^2 n)}$ for large enough $n$.

It should be noted that $d/2 + \varepsilon n/16 \le pn$, and so $d_H(x, y) \le d/2 + \varepsilon n/16$ implies that the number of bits flipped by Calvin does not exceed $pn$. Since Calvin possibly flips only the bits of $x$ which differ from the corresponding bits in $x'$, (2) also implies

$$d_H(x', y) \in \left( \frac{d}{2} - \frac{\varepsilon n}{16},\ \frac{d}{2} + \frac{\varepsilon n}{16} \right). \tag{3}$$

We conclude by proving that if the number of bits flipped by Calvin lies in the range $(d/2 - \varepsilon n/16,\ d/2 + \varepsilon n/16)$, then indeed Bob cannot distinguish between the cases in which $x$ or $x'$ was transmitted.

Claim 4.4: Conditioned on Claim 4.3, Bob makes a decoding error with probability at least $1/2$.

Proof: By Bayes' Theorem [8], if Bob receives $y$, the a posteriori probability that Alice transmitted $x$, denoted $p(x \mid y)$, equals $p(y \mid x)\, p(x) / p(y)$.
Here $p(x)$ is the probability (over her encoding strategy) that Alice transmits $x$, $p(y \mid x)$ is the probability (over Calvin's random bit-flipping strategy) that Bob receives $y$ given that Alice transmits $x$, and $p(y)$ is the resulting probability that Bob receives $y$. Similarly, $p(x' \mid y) = p(y \mid x')\, p(x') / p(y)$. Taking the ratio and noting that for deterministic codes $p(x) = p(x')$, we have

$$p(x \mid y) / p(x' \mid y) = p(y \mid x) / p(y \mid x'). \tag{4}$$

Since Calvin's random bit-flip strategy involves him flipping bits of $x$ (which are different from the corresponding bits of $x'$) with probability $1/2$, for all $y$ satisfying (2), the probabilities $p(y \mid x)$ and $p(y \mid x')$ are equal. This observation and (4) together imply $p(x \mid y) = p(x' \mid y)$. Thus, Bob cannot distinguish whether $x$ or $x'$ was transmitted. Namely, on the pair of events in which Alice transmits $x$ and Calvin chooses $x'$, and in which Alice transmits $x'$ and Calvin chooses $x$, no matter which decoding process Bob uses, he will have an average decoding error of at least $1/2$. This suffices to prove our assertion.

Thus a decoding error happens if the conditions of Claims 4.1, 4.2, 4.3 and 4.4 are all satisfied. This happens with probability at least

$$\left(1 - 2^{-\varepsilon n/4}\right) \cdot \frac{\varepsilon}{64p} \cdot \left(1 - 2^{-\Omega(\varepsilon^2 n)}\right) \cdot \frac{1}{2} \ \ge\ \frac{1}{2} \cdot \frac{\varepsilon}{64p} \cdot \frac{1}{2} \cdot \frac{1}{2} \ \ge\ \frac{\varepsilon}{512p}$$

for large enough $n$.

V. PROOF OF THEOREM 2

We start by proving the following technical lemma that we use in our proof. Let $q$ be an arbitrary probability distribution over an index set $I = \{1, \dots, k\}$. Let $A_1, \dots, A_k$ be arbitrary discrete random variables with probability distributions $q_1, \dots, q_k$ over alphabets $\mathcal{A}_1, \dots, \mathcal{A}_k$ respectively. Let $k_i = |\mathcal{A}_i|$. Let $A$ be a random variable that equals the random variable $A_i$ with probability $q(i)$. Then the following lemma, describing an elementary property of the entropy function $H(\cdot)$, is useful in the proof of Theorem 2.

Lemma 5.1: The entropies of $A$, $A_1, \dots, A_k$ and $q$ satisfy $H(A) \le \sum_{i=1}^{k} q(i) H(A_i) + H(q)$, with equality if and only if for each pair of distinct $i, i'$ for which both $q(i)$ and $q(i')$ are positive it holds that $\Pr_{q_i, q_{i'}}[A_i = A_{i'}] = 0$.

Proof: For any $a \in \mathcal{A}$, the probability $\Pr\{A = a\} = p(a)$ of occurrence of $a$ equals $\sum_{i : a \in \mathcal{A}_i} q(i)\, q_i(a)$. Hence

$$H(A) = -\sum_{a \in \bigcup_i \mathcal{A}_i} p(a) \log(p(a)) \ \le\ -\sum_{i=1}^{k} \sum_{j=1}^{k_i} q(i)\, q_i(j) \log(q(i)\, q_i(j)) \tag{5}$$

$$= -\sum_{i=1}^{k} \sum_{j=1}^{k_i} q(i) \big( q_i(j) \log(q_i(j)) \big) - \sum_{i=1}^{k} \sum_{j=1}^{k_i} q_i(j) \big( q(i) \log(q(i)) \big) = \sum_{i=1}^{k} q(i) H(A_i) + H(q).$$

Here (5) follows from Jensen's inequality, e.g. [4], with equality if and only if for each positive $\Pr\{A = a\}$, there is a unique $i$ such that $q(i)\, q_i(j) > 0$ (here $a_i(j) = a$).

We now turn to prove Theorem 2. Recall our notation: let $U$ be the random variable corresponding to Alice's message and $p_U$ its distribution (with entropy $Rn$). Throughout we assume the message set $\mathcal{U}$ (the support of $U$) is at most of size $2^n$. Let $\mathcal{X}$ be Alice's codebook. $\mathcal{X}$ is a collection $\{\mathcal{X}(u)\}$ of subsets of $\{0,1\}^n$. For each subset $\mathcal{X}(u) \subset \mathcal{X}$, there is a corresponding codeword random variable $X(u)$ with codeword distribution $p_{X(u)}$ over $\mathcal{X}(u)$. For any value $U = u$ of the message, Alice's encoder chooses a codeword from $\mathcal{X}(u)$ randomly according to the distribution $p_{X(u)}$. Alice's message distribution $p_U$, codebook $\mathcal{X}$, and all the codebook distributions $p_{X(u)}$ are known to both Bob and Calvin, but the values of the random variables $U$ and $X(\cdot)$ are unknown to them. If $\mathcal{X}(u) = \{x(u, r) : r \in \Lambda_u\}$, then the transmitted codeword $X(U)$ has the probability distribution given by $\Pr[X(U) = x(u, r)] = p_U(u)\, p_{X(u)}(x(u, r))$.
Let $p$ be the overall distribution of codewords $x = x(u, r)$ of Alice. It holds that $p(x(u, r)) = p_U(u)\, p_{X(u)}(x)$ and $p(x) = \sum_{u \in \mathcal{U}} p_U(u)\, p_{X(u)}(x)$. For any $\varepsilon > 0$, let $R = (1 - 4p)^+ + \varepsilon$.

We start by specifying Calvin's attack. Calvin uses a very similar attack to the one described in the proof of Theorem 1. That is, Calvin first passively waits until Alice transmits $\ell = (R - \varepsilon/2)n$ bits over the channel. Let $x^\ell \in \{0,1\}^\ell$ be the value of the codeword observed so far. He then considers the set of codewords $x(u, r)$ consistent with the observed $x^\ell$. Here and throughout this section, we denote codewords by their corresponding message $u$ and index $r$ in $\mathcal{X}(u)$. As it may be that $x(u, r)$ is exactly the same codeword as $x(u', r')$, the sets in the definitions to follow and in this section are in a sense multisets. Namely, Calvin constructs the set $\mathcal{X}|_{x^\ell} = \{x(u, r) = x_1, \dots, x_n \in \mathcal{X} \mid x_1, \dots, x_\ell = x^\ell\}$. Let $p(x^\ell) = p(\mathcal{X}|_{x^\ell})$ be the probability, under the probability distribution $p$, corresponding to the event that Calvin observes $x^\ell$ in the first $\ell$ transmissions. Let $p_{U|x^\ell}$ and $p_{X(u)|x^\ell}$ be the probability distributions $p_U$ and $p_{X(u)}$ respectively, conditioned on the same event. Calvin then chooses an element $x'(u', r') \in \mathcal{X}|_{x^\ell}$ with probability (see footnote 3) $p_{U|x^\ell}(u')\, p_{X(u')|x^\ell}(x'(u', r'))$. In the second stage he then follows exactly the same random bit-flip strategy as in the proof of Theorem 1.

Recall that in the proof of Theorem 1, our goal was to prove that with some constant probability, the distance between $x(u, r)$ and $x'(u', r')$ is approximately $2pn$. Loosely speaking, this allows the success of Calvin's attack (i.e., implies a decoding error).
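The two-stage attack specified above can be sketched in Python as follows. This is a simplified illustration under stated assumptions, not the authors' construction verbatim: the function name `wait_and_push` is hypothetical, the codebook is given as a map `prior` from codewords to their overall probability $p(x)$ (so the message labels $u$ and the multiset structure are ignored), and the budget is passed in as an integer.

```python
import random
from typing import Dict, Tuple

Codeword = Tuple[int, ...]


def wait_and_push(
    x: Codeword,
    prior: Dict[Codeword, float],
    ell: int,
    budget: int,
    rng: random.Random,
) -> Tuple[Codeword, Codeword]:
    """Return (y, x_prime): the channel output and Calvin's decoy codeword.

    A uniform `prior` corresponds to the deterministic-encoder attack of
    Theorem 1; a non-uniform one mimics the posterior-weighted choice above.
    """
    # Stage 1: wait for ell bits, then collect the codewords consistent with them.
    prefix = x[:ell]
    consistent = [c for c in prior if c[:ell] == prefix]
    weights = [prior[c] for c in consistent]
    # Sampling proportionally to p(c) within the consistent set is the same as
    # sampling from p conditioned on the observed prefix.
    x_prime = rng.choices(consistent, weights=weights, k=1)[0]

    # Stage 2: flip, with probability 1/2, each later bit where x and x_prime
    # differ, stopping once the budget is spent. Bit x[i] is read only at step i.
    y = list(x)
    for i in range(ell, len(x)):
        if budget == 0:
            break
        if x[i] != x_prime[i] and rng.random() < 0.5:
            y[i] ^= 1
            budget -= 1
    return tuple(y), x_prime
```

By construction the output `y` agrees with `x` on the first `ell` positions and on every position where `x` and `x_prime` agree, so it always lies "between" the two codewords.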
Following the same outline of proof, we now show that with probability $1/\mathrm{poly}(n)$ the codeword $x'(u',r')$ chosen by Calvin has the following three properties:
• Its corresponding message differs from that corresponding to $x(u,r)$ (i.e., $u \ne u'$).
• $x'(u',r')$ is close to $x(u,r)$, and thus Calvin will be able to "push" $x(u,r)$ to a word $y$ at approximately the same distance from $x(u,r)$ and $x'(u',r')$.
• Given $y$, Bob is unable to distinguish whether $x(u,r)$ or $x'(u',r')$ was transmitted.

$^{3}$ This is one significant difference from the attack in the proof of Theorem 1: there Calvin chooses each $x'$ uniformly at random from the corresponding consistent set.

To this end, we partition the set $\mathcal{X}|x^\ell$ into $n^2$ disjoint subsets $\mathcal{X}_{ij}$ for $i, j \in \{1, 2, \dots, n\}$. Let $p(\mathcal{X}_{ij})$ be the probability mass of $\mathcal{X}_{ij}$. Let $p_{U|ij}$ and $p_{X(u)|ij}$ be the probability distributions $p_U$ and $p_{X(u)}$, respectively, conditioned on the event that Alice transmitted some $x(u,r)$ in $\mathcal{X}_{ij}$. The partition $\mathcal{X}_{ij}$ is obtained in two steps: first we partition $\mathcal{X}|x^\ell$ into $n$ subsets $\mathcal{X}_i$, then we partition each $\mathcal{X}_i$ into $n$ sets $\mathcal{X}_{ij}$. We also use the probabilities and distributions $p(\mathcal{X}_i)$, $p_{U|i}$ and $p_{X(u)|i}$, defined accordingly. All in all, we prove the existence of a subset $\mathcal{X}_{ij}$ with the following properties:
• $H(p_{U|ij})$ is "large".
• $p(\mathcal{X}_{ij})$ is large with respect to $p(x^\ell)$.
• For any $x(u,r) \in \mathcal{X}_{ij}$ it holds that $p(x(u,r))$ has approximately the same value.
• $p_{U|ij}$ is approximately uniform on its support.

Roughly speaking, proving these properties of $\mathcal{X}_{ij}$ reduces us to the case of a deterministic encoder (addressed in Theorem 1) and allows us to complete our proof. We now present our proof for the existence of $\mathcal{X}_{ij}$ as specified above. We first show that with positive probability the set $\mathcal{X}|x^\ell$ has high entropy.
Claim 5.1: With probability at least $\varepsilon/4$, $H(p_{U|x^\ell}) \ge \varepsilon n/4$.

Proof: Let $q$ be the probability distribution over $\{0,1\}^\ell$ for which $q(x^\ell) = p(x^\ell)$ for all possible $x^\ell \in \{0,1\}^\ell$. Let $q_{x^\ell}$ be the probability distribution $p_{U|x^\ell}$. Now using Lemma 5.1 we obtain
\[ H(p_U) \le \sum_{x^\ell} q(x^\ell) H(p_{U|x^\ell}) + H(q). \tag{6} \]
By our definitions $H(p_U) = Rn$. Moreover, $H(q) \le \ell = (R - \varepsilon/2)n$ (since $q$ is defined over an alphabet of size $2^\ell$). Thus (6) becomes
\[ \sum_{x^\ell} q(x^\ell) H(p_{U|x^\ell}) \ge Rn - (R - \varepsilon/2)n = \varepsilon n/2. \]
As the average of $H(p_{U|x^\ell})$ is at least $\varepsilon n/2$, it follows that $H(p_{U|x^\ell}) \ge \varepsilon n/4$ with probability at least $\varepsilon/4$ (by a Markov-type inequality; here we use the fact that $H(p_{U|x^\ell}) \le n$). Indeed, writing $P$ for the probability that $H(p_{U|x^\ell}) \ge \varepsilon n/4$, the average is at most $nP + \varepsilon n/4$, so $\varepsilon n/2 \le nP + \varepsilon n/4$ and $P \ge \varepsilon/4$.

We now define the sets $\mathcal{X}_i$. For $i = 1, \dots, n-1$, let $\mathcal{X}_i$ be the set of codewords in $\mathcal{X}|x^\ell$ for which $p(x(u,r))/p(x^\ell)$ is in the range $(2^{-3i}, 2^{-3i+3}]$. The set $\mathcal{X}_n$ is defined to be the set of codewords in $\mathcal{X}|x^\ell$ for which $p(x(u,r))/p(x^\ell)$ is in the range $[0, 2^{-3n+3}]$. Let $p(\mathcal{X}_i)$ be the probability mass of $\mathcal{X}_i$, namely $p(\mathcal{X}_i) \simeq 2^{-3i} |\mathcal{X}_i|\, p(x^\ell)$. Let $q$ be the distribution over $\{1, 2, \dots, n\}$ taking the value $i$ with probability $p(\mathcal{X}_i)/p(x^\ell)$. Notice that $H(q) \le \log(n) = o(n)$ (as its support is of size $n$). Conditioning on Claim 5.1 and using Lemma 5.1, it can be verified that

Claim 5.2:
\[ \sum_{i} q(i) H(p_{U|i}) \ge H(p_{U|x^\ell}) - H(q) \ge \varepsilon n/8. \tag{7} \]

Consider sets $\mathcal{X}_i$ with (relative) mass $q(i) \ge 1/n^2$. It holds that
\[ \sum_{i \le n-1;\ q(i) \ge 1/n^2} q(i) H(p_{U|i}) \ge \varepsilon n/16. \]
The above follows from the fact that
\[ \sum_{i \le n-1;\ q(i) < 1/n^2} q(i) H(p_{U|i}) + q(n) H(p_{U|n}) \le \sum_{i \le n-1;\ q(i) < 1/n^2} \frac{n}{n^2} + 2^{-n+3} n \le 2 \]
(for sufficiently large $n$). Here we use the fact that $q(n) \le |\mathcal{X}_n|\, 2^{-3n+3}$. We conclude the existence of a set $\mathcal{X}_i$ such that $q(i) \ge 1/n^2$ and $H(p_{U|i}) \ge \varepsilon n/16$.
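The partition into the sets $\mathcal{X}_i$ is a bucketing of codewords by the scale of their relative probability $p(x(u,r))/p(x^\ell)$. The following Python sketch shows one way to compute the bucket index and the induced distribution $q$; the function names and the toy list of relative probabilities are hypothetical, and the sketch illustrates the definitions only, not the entropy argument itself.

```python
from collections import defaultdict


def bucket_index(rho, n):
    """Index i in {1, ..., n} of the range containing the relative
    probability rho = p(x(u, r)) / p(x^l).

    Buckets i = 1, ..., n-1 cover (2^(-3i), 2^(-3i+3)];
    bucket n covers [0, 2^(-3n+3)].
    """
    if not 0.0 <= rho <= 1.0:
        raise ValueError("rho must lie in [0, 1]")
    for i in range(1, n):
        # The first i with rho > 2^(-3i) also satisfies rho <= 2^(-3i+3),
        # because the test failed for i - 1 (or i = 1 and rho <= 1).
        if rho > 2.0 ** (-3 * i):
            return i
    return n


def bucket_masses(rel_probs, n):
    """q(i): total relative mass of the codewords that fall in bucket i."""
    q = defaultdict(float)
    for rho in rel_probs:
        q[bucket_index(rho, n)] += rho
    return dict(q)


def heavy_buckets(q, n):
    """Buckets i <= n-1 whose relative mass is at least 1/n^2."""
    return sorted(i for i, mass in q.items() if i < n and mass >= 1.0 / n**2)


# Hypothetical relative probabilities of four consistent codewords (sum to 1).
rel_probs = [0.5, 0.25, 0.125, 0.125]
q = bucket_masses(rel_probs, n=5)
heavy = heavy_buckets(q, n=5)
```

Within a bucket, any two relative probabilities differ by a factor of less than $2^3 = 8$, which is the sense in which codewords of $\mathcal{X}_i$ have approximately the same probability.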
We now further partition $\mathcal{X}_i$. For $j = 1, \dots, n-1$, let $\mathcal{X}_{ij}$ be the set of codewords $x(u,r)$ in $\mathcal{X}_i$ for which $p_{U|i}(u)$ is in the range $(2^{-3j}, 2^{-3j+3}]$. The set $\mathcal{X}_{in}$ is defined to be the set of codewords $x(u,r)$ in $\mathcal{X}_i$ for which $p_{U|i}(u)$ is in the range $[0, 2^{-3n+3}]$. Let $p(\mathcal{X}_{ij})$ be the probability mass of $\mathcal{X}_{ij}$, namely $p(\mathcal{X}_{ij}) \simeq 2^{-3i} |\mathcal{X}_{ij}|\, p(x^\ell)$. Let $q'$ be the distribution over $\{1, 2, \dots, n\}$ taking the value $j$ with probability $p(\mathcal{X}_{ij})/p(\mathcal{X}_i)$. Notice that $H(q') \le \log(n) = o(n)$ (as its support is of size $n$). As before, conditioning on Claim 5.2 and using Lemma 5.1, it can be verified that (for the index $i$ specified above)

Claim 5.3:
\[ \sum_{j} q'(j) H(p_{U|ij}) \ge H(p_{U|i}) - H(q') \ge \varepsilon n/32. \tag{8} \]

Again, consider sets $\mathcal{X}_{ij}$ with mass $q'(j) \ge 1/n^2$. It holds that
\[ \sum_{j \le n-1;\ q'(j) \ge 1/n^2} q'(j) H(p_{U|ij}) \ge \varepsilon n/64. \]
We conclude the existence of a set $\mathcal{X}_{ij}$ such that
• $H(p_{U|ij}) \ge \varepsilon n/64$.
• $p(\mathcal{X}_{ij}) \ge p(x^\ell)/n^4$.
• For any $x(u,r) \in \mathcal{X}_{ij}$ it holds that $p(x(u,r))$ is approximately $2^{-3i} p(x^\ell)$.
• For any $x(u,r) \in \mathcal{X}_{ij}$ it holds that $p_{U|ij}(u)$ takes approximately the same value.

The set $\mathcal{X}_{ij}$ is exactly what we are looking for. Roughly speaking, by Claim 5.1, with probability at least $\varepsilon/4$ Calvin views a prefix $x^\ell$ for which $H(p_{U|x^\ell}) \ge \varepsilon n/4$. Conditioning on this event, both Alice and Calvin choose codewords $x(u,r)$, $x'(u',r')$ in $\mathcal{X}_{ij}$ with probability at least $1/n^8$.

We now sketch the remainder of the proof, which closely follows that of Theorem 1. We partition $\mathcal{X}_{ij}$ into groups of messages $\mathcal{X}_{ij}(u)$, each consisting of all codewords in $\mathcal{X}_{ij}$ corresponding to $u$. Recall that each codeword $x(u,r) \in \mathcal{X}_{ij}$ has approximately the same probability $p(x(u,r))$, and that for each $x(u,r) \in \mathcal{X}_{ij}$ the value $p_{U|ij}(u)$ is approximately the same.
This implies that each group $\mathcal{X}_{ij}(u) \subseteq \mathcal{X}_{ij}$ has approximately the same size. Moreover, as $H(p_{U|ij}) \ge \varepsilon n/64$, there are at least $2^{\varepsilon n/64}$ non-empty subsets $\mathcal{X}_{ij}(u)$ in $\mathcal{X}_{ij}$. So, all in all, $\mathcal{X}_{ij}$ has a very symmetric structure: it includes many groups, each consisting of elements with the same transmission probability, and each of approximately the same size and mass (w.r.t. $p$). This reduces us to the case considered in Theorem 1, in which our subset $\mathcal{X}|x^\ell$ included many messages, each with the same probability; details follow.

Consider the graph $G = (V, E)$ in which the vertex set $V$ consists of the set $\mathcal{X}_{ij}$ and two nodes are connected by an edge if their Hamming distance is less than $d = 2pn - \varepsilon n/8$. Now, it can be verified (using analysis almost identical to that given in the proof of Theorem 1) that
1) With probability at least $1 - 2^{-\Omega(\varepsilon n)}$ the codewords $x(u,r)$ and $x'(u',r')$ satisfy $u \ne u'$. Here one needs to take into consideration the slight difference in the group sizes and the probabilities for each codeword.
2) With probability $\Omega(\varepsilon/p)$ the vertices in $G$ corresponding to $x(u,r)$ and $x'(u',r')$ are connected by an edge.
3) During Calvin's random bit-flip process, with high probability $1 - 2^{-\Omega(\varepsilon^2 n)}$, Calvin does not "run out" of his budget of $pn$ bit flips.
4) Conditioning on the above, Bob cannot distinguish between the case in which $x(u,r)$ was transmitted and the case in which $x'(u',r')$ was transmitted.
5) Finally, on the pair of events in which Alice transmits $x(u,r)$ and Calvin chooses $x'(u',r')$, and Alice transmits $x'(u',r')$ and Calvin chooses $x(u,r)$, no matter which decoding process Bob uses, he has an average decoding error that is bounded away from zero. Here again we take into account the slight differences between $p(x(u,r))$ and $p(x'(u',r'))$.
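The graph $G$ used in the steps above can be made concrete with a short Python sketch. It builds the edge set for a hypothetical toy list of codewords, indexing vertices by position so that repeated codewords remain distinct vertices, in keeping with the multiset convention. The function names, the codewords and the parameter values are assumptions for illustration; the sketch only constructs the graph and does not reproduce the probabilistic analysis.

```python
from itertools import combinations


def hamming(a, b):
    """Hamming distance between two equal-length binary strings."""
    if len(a) != len(b):
        raise ValueError("words must have the same length")
    return sum(c1 != c2 for c1, c2 in zip(a, b))


def distance_threshold(n, p, eps):
    """Edge threshold d = 2pn - eps*n/8 from the definition of G."""
    return 2 * p * n - eps * n / 8


def proximity_graph(codewords, d):
    """Edges (s, t), s < t, between vertices whose codewords are at
    Hamming distance strictly less than d. Vertices are list positions."""
    return [
        (s, t)
        for s, t in combinations(range(len(codewords)), 2)
        if hamming(codewords[s], codewords[t]) < d
    ]


# Hypothetical toy instance: n = 8, p = 0.25, eps = 0.8, so d is about 3.2.
codewords = ["00000000", "00000111", "11111111", "00011111"]
d = distance_threshold(n=8, p=0.25, eps=0.8)
edges = proximity_graph(codewords, d)
```

In this toy instance the pairs at distance 2 or 3 are joined by an edge, while the pairs at distance 5 or 8 are not. An edge between the vertices of $x(u,r)$ and $x'(u',r')$ is the event counted in item 2) of the list above.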
To summarize, Calvin causes a decoding error with probability $\Omega(\mathrm{poly}(\varepsilon)/\mathrm{poly}(n)) = \Omega(1/\mathrm{poly}(n))$, as desired. This concludes our proof.

VI. CONCLUSIONS

We analyze the capacity of the causal-adversarial channel and show (for both deterministic and probabilistic encoders) that the capacity is bounded from above by $\min\{1 - H(p), (1-4p)^+\}$. For a large range of $p$ (for all $p > 0.25$), the maximum achievable rate equals that of the stronger classical "omniscient" adversarial model (i.e., 0).

Several questions remain open. In this work we do not address achievability results (i.e., the construction of codes). It would be very interesting to obtain codes for the causal-adversary channel that achieve a rate greater than that known for the "omniscient" adversarial model (i.e., the Gilbert-Varshamov bound) for $p < 0.25$. As we do not believe that the upper bound of $(1-4p)^+$ presented in this work is actually tight, such codes, if they exist, may give a hint to the correct capacity.

As done in our work on large alphabets [6], one may also consider the more general channel model in which, for a delay parameter $d \in (0,1)$, the jammer's decision on the corruption of $x_i$ must depend solely on $x_j$ for $j \le i - dn$. This might correspond to the scenario in which the error transmission of the adversarial jammer is delayed due to certain computational tasks that the adversary needs to perform. The capacity of the causal channel with delay is an intriguing problem left open in this work.

REFERENCES

[1] D. Blackwell, L. Breiman, and A. J. Thomasian. The capacities of certain channel classes under random coding. The Annals of Mathematical Statistics, 31(3):558–567, 1960.
[2] A. E. Brouwer. Bounds on the size of linear codes. In V. S. Pless and W. C. Huffman, editors, Handbook of Coding Theory, volume 1, chapter 4, pages 295–461. Elsevier Science, New York, NY, USA, 1998.
[3] H. Chernoff. Measure of asymptotic efficiency for tests of a hypothesis based on the sum of observations. Annals of Mathematical Statistics, 23:493–507, 1952.
[4] T. M. Cover and J. A. Thomas. Elements of Information Theory, 2nd edition. Wiley-Interscience, New York, NY, USA, 2006.
[5] I. Csiszar and J. G. Korner. Information Theory: Coding Theorems for Discrete Memoryless Systems. Academic Press, Inc., Orlando, FL, USA, 1982.
[6] B. K. Dey, S. Jaggi, and M. Langberg. Codes against online adversaries. Manuscript. Available at http://arxiv.org/abs/0811.2850.
[7] P. Elias. Error-correcting codes for list decoding. IEEE Transactions on Information Theory, 37(1):5–12, 1991.
[8] W. Feller. An Introduction to Probability Theory and Its Applications, Volume II (2nd ed.). John Wiley & Sons, New York, 1972.
[9] R. G. Gallager. Information Theory and Reliable Communication. J. Wiley and Sons, New York, 1968.
[10] E. N. Gilbert. A comparison of signalling alphabets. Bell Systems Technical Journal, 31:504–522, 1952.
[11] S. Jaggi, M. Langberg, T. Ho, and M. Effros. Correction of adversarial errors in networks. In Proceedings of IEEE International Symposium on Information Theory (ISIT), pages 1455–1459, 2005.
[12] R. McEliece, E. Rodemich, H. Rumsey, and L. Welch. New upper bounds on the rate of a code via the Delsarte-MacWilliams inequalities. IEEE Trans. Inform. Theory, 23(2):157–166, March 1977.
[13] L. Nutman and M. Langberg. Adversarial models and resilient schemes for network coding. In Proceedings of IEEE International Symposium on Information Theory, pages 171–175, 2008.
[14] M. Plotkin. Binary codes with specified minimum distance. IRE Trans. Inform. Theory, 6:445–450, 1960.
[15] A. Sahai and S. Mitter. The necessity and sufficiency of anytime capacity for stabilization of a linear system over a noisy communication link, Part I: scalar systems. IEEE Transactions on Information Theory, 52(8):3369–3395, 2006.
[16] A. Sarwate. Robust and adaptive communication under uncertain interference. PhD thesis, Berkeley, 2008.
[17] P. Turán. On the theory of graphs. Colloq. Math., 3:19–30, 1954.
[18] R. R. Varshamov. Estimate of the number of signals in error correcting codes. Dokl. Acad. Nauk, 117:739–741, 1957.
