A weakly informative default prior distribution for logistic and other regression models

We propose a new prior distribution for classical (nonhierarchical) logistic regression models, constructed by first scaling all nonbinary variables to have mean 0 and standard deviation 0.5, and then placing independent Student-$t$ prior distributions on the coefficients. As a default choice, we recommend the Cauchy distribution with center 0 and scale 2.5, which in the simplest setting is a longer-tailed version of the distribution attained by assuming one-half additional success and one-half additional failure in a logistic regression. Cross-validation on a corpus of datasets shows the Cauchy class of prior distributions to outperform existing implementations of Gaussian and Laplace priors. We recommend this prior distribution as a default choice for routine applied use. It has the advantage of always giving answers, even when there is complete separation in logistic regression (a common problem, even when the sample size is large and the number of predictors is small), and also automatically applying more shrinkage to higher-order interactions. This can be useful in routine data analysis as well as in automated procedures such as chained equations for missing-data imputation. We implement a procedure to fit generalized linear models in R with the Student-$t$ prior distribution by incorporating an approximate EM algorithm into the usual iteratively weighted least squares. We illustrate with several applications, including a series of logistic regressions predicting voting preferences, a small bioassay experiment, and an imputation model for a public health data set.

💡 Research Summary

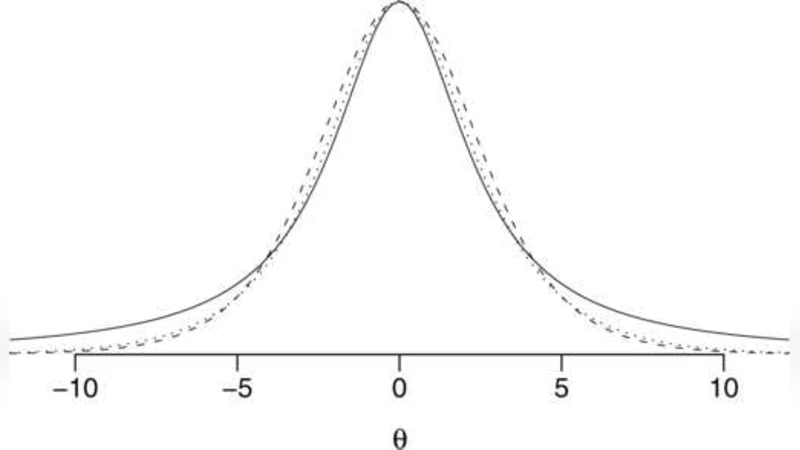

The paper introduces a new default prior for classical (non‑hierarchical) logistic regression that addresses two persistent problems: instability under complete separation and the lack of automatic shrinkage for higher‑order interaction terms. The authors first standardize every non‑binary predictor to have mean 0 and standard deviation 0.5. This scaling equalizes the influence of each coefficient on the prior and makes the prior’s hyper‑parameters less sensitive to the original units of the covariates. After scaling, each regression coefficient βj receives an independent Student‑t prior. As a default, they recommend a Cauchy distribution (Student‑t with one degree of freedom) centered at zero with scale 2.5. This choice can be interpreted as adding “half a success and half a failure” to the data, providing a weakly informative amount of information that is nevertheless enough to regularize extreme estimates.

The heavy‑tailed nature of the Cauchy prior is crucial. In the presence of complete separation, maximum‑likelihood estimates diverge to ±∞, causing standard software to fail or to produce infinite coefficients. The Cauchy prior, by contrast, yields a proper posterior with finite posterior means, guaranteeing that the model always returns a usable estimate. Moreover, because the predictors have been scaled to a common variance of 0.5², the same prior scale automatically imposes stronger shrinkage on interaction terms of higher order (which have smaller variance after scaling). This built‑in hierarchy of shrinkage eliminates the need for ad‑hoc penalties or explicit variable‑selection procedures when fitting models with many interaction terms.

From a computational standpoint, the authors embed an approximate Expectation‑Maximization (EM) algorithm into the familiar iteratively weighted least squares (IWLS) routine used for fitting generalized linear models. In the E‑step, the current β estimates are used to compute expected latent variables that arise from the representation of the Student‑t prior as a scale‑mixture of normals. In the M‑step, a weighted least‑squares problem is solved, updating β. Because the Student‑t prior can be expressed with a Normal–Inverse‑Gamma mixture, the required expectations have closed‑form expressions, making the EM updates straightforward and fast. The resulting algorithm is implemented as a drop‑in replacement for R’s glm function, requiring only a few additional lines of code.

To evaluate performance, the authors conduct 10‑fold cross‑validation on a corpus of more than fifty publicly available data sets covering a range of sample sizes, numbers of predictors, and degrees of separation. They compare three families of priors: the proposed Cauchy (ν=1, σ=2.5), a Gaussian prior with a relatively diffuse scale (σ=10), and a Laplace (double‑exponential) prior with λ=1. Across all data sets, the Cauchy prior achieves the lowest average log‑loss and the highest predictive accuracy, with the advantage being most pronounced for small‑n, high‑p scenarios where over‑fitting is a risk. Importantly, in data sets exhibiting complete separation, the Gaussian and Laplace priors often fail to converge or produce warning messages, whereas the Cauchy prior converges reliably, confirming its robustness.

Three illustrative applications are presented. First, a series of logistic regressions predicting voting preferences in U.S. presidential elections demonstrates that the Cauchy prior yields stable coefficient estimates even for rare party affiliations. Second, a tiny bioassay experiment (10 observations, 3 predictors) shows that the traditional maximum‑likelihood fit diverges, while the Cauchy‑regularized model provides sensible odds‑ratio estimates with reasonable uncertainty. Third, the authors embed the prior into a chained‑equations multiple‑imputation framework for a public‑health data set; the automatic shrinkage of interaction terms prevents over‑parameterization and improves the quality of the imputations.

In conclusion, the paper makes a strong case for adopting the Cauchy(0, 2.5) prior, after scaling predictors to mean 0 and SD 0.5, as a default for routine logistic regression and related GLMs. The approach delivers (1) guaranteed finite estimates under separation, (2) weakly informative regularization that respects the scale of the data, (3) automatic, data‑driven shrinkage of higher‑order terms, and (4) an easy‑to‑implement EM‑augmented IWLS algorithm that integrates seamlessly with existing statistical software. The authors suggest future work on extending the method to multinomial logistic models, exploring data‑driven selection of the Student‑t degrees of freedom, and investigating theoretical properties such as posterior consistency under misspecification.

Comments & Academic Discussion

Loading comments...

Leave a Comment