Spatial-temporal mesoscale modeling of rainfall intensity using gage and radar data

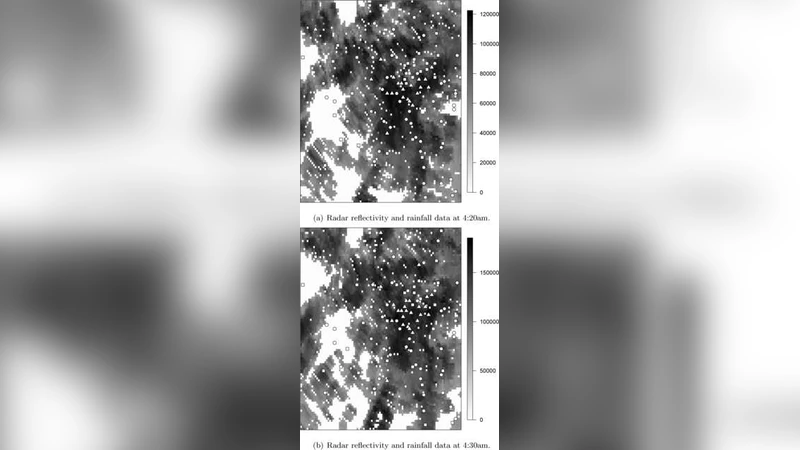

Gridded estimates of rainfall intensity at very high spatial and temporal resolution are needed as main inputs for weather prediction models, both to obtain accurate precipitation forecasts and to verify the performance of precipitation forecast models. These gridded rainfall fields are also the main driver for hydrological models that forecast flash floods, and they are essential for predicting disasters associated with heavy rain. Rainfall information can be obtained from rain gages, which provide relatively accurate estimates of the actual rainfall values at point-referenced locations but do not adequately characterize the spatial and temporal structure of the rainfall fields. Doppler radar data offer better spatial and temporal coverage, but Doppler radar measures effective radar reflectivity ($Z_e$) rather than rainfall rate ($R$). Thus, rainfall estimates from radar data suffer from various uncertainties due to their measuring principle and the conversion from $Z_e$ to $R$. We introduce a framework to combine radar reflectivity and gage data by writing the different sources of rainfall information in terms of an underlying unobservable spatial-temporal process that represents the true rainfall values. We use spatial logistic regression to model the probability of rain for both sources of data in terms of the latent true rainfall process. We characterize the different sources of bias and error in the gage and radar data, and we estimate the true rainfall intensity with its posterior predictive distribution, conditioning on the observed data.

💡 Research Summary

The paper addresses the need for high‑resolution, spatio‑temporal rainfall intensity fields that serve as inputs for numerical weather prediction, flash‑flood forecasting, and disaster risk assessment. Traditional rain‑gauge networks provide accurate point measurements but lack spatial coverage, while Doppler radar offers dense coverage but measures reflectivity (Zₑ) rather than rain rate (R), so radar‑based estimates carry uncertainty from both the measuring principle (such as attenuation and beam blockage) and the non‑linear Zₑ‑R conversion. To reconcile these complementary data sources, the authors formulate a unified statistical framework that treats the true, unobservable rainfall intensity field as a latent spatio‑temporal process.
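
To make the Zₑ‑R conversion concrete, here is a minimal sketch that inverts a power law of the form Z = a·R^b. The coefficients a = 200 and b = 1.6 are the commonly quoted Marshall‑Palmer values and are an assumption here; the text does not state which relation, if any, the authors use, and the function name is hypothetical.

```python
import math

# Assumed Marshall-Palmer coefficients for Z = a * R**b.
# These are illustrative defaults, not values taken from the paper.
MP_A = 200.0
MP_B = 1.6


def dbz_to_rain_rate(dbz, a=MP_A, b=MP_B):
    """Convert reflectivity in dBZ to rain rate in mm/h by inverting Z = a * R**b."""
    z_linear = 10.0 ** (dbz / 10.0)  # dBZ -> linear reflectivity (mm^6 m^-3)
    return (z_linear / a) ** (1.0 / b)


if __name__ == "__main__":
    for dbz in (20.0, 40.0, 50.0):
        print(f"{dbz:.0f} dBZ -> {dbz_to_rain_rate(dbz):.2f} mm/h")
```

Because the exponent 1/b is applied to reflectivity on a linear scale, a small error in dBZ translates into a multiplicative error in R, which is one reason the conversion is treated as a source of uncertainty rather than a fixed mapping.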

Both gauge and radar observations are expressed as binary indicators of rain occurrence, linked to the latent field through spatial logistic regression models. For gauges, the probability of detecting rain at location (x, t) is modeled as logit P₁ = α₁ + β₁ · T(x,t), where T(x,t) denotes the latent intensity, α₁ captures systematic gauge bias, and β₁ reflects sensor sensitivity. Radar observations are similarly modeled as logit P₂ = α₂ + β₂ · T(x,t), but the radar model incorporates additional covariates (e.g., range, elevation) to account for distance‑dependent attenuation and terrain‑induced blockage. The latent field itself is endowed with a Gaussian Process prior, allowing flexible spatial correlation and temporal smoothness controlled by hyper‑parameters (variance, length‑scale).
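
The two model components described above can be sketched as follows: an inverse‑logit link that maps the latent intensity T(x,t) to a rain probability, and a covariance function for the Gaussian Process prior. The separable squared‑exponential kernel, the default length scales, and the `covariate_effect` argument (standing in for the radar range and elevation terms) are assumptions made for illustration; the text only says that the prior has variance and length‑scale hyper‑parameters.

```python
import math


def rain_probability(alpha, beta, latent_intensity, covariate_effect=0.0):
    """Inverse logit of alpha + beta * T(x,t) + optional covariate term.

    covariate_effect is a hypothetical placeholder for the radar covariates
    (range, elevation); it is zero for the gauge model.
    """
    eta = alpha + beta * latent_intensity + covariate_effect
    if eta >= 0.0:
        return 1.0 / (1.0 + math.exp(-eta))
    e = math.exp(eta)  # numerically stable branch for negative eta
    return e / (1.0 + e)


def separable_kernel(p, q, variance=1.0, space_scale=5.0, time_scale=15.0):
    """Assumed squared-exponential covariance, separable in space and time.

    p and q are (x, y, t) tuples; the scales share the units of the inputs.
    """
    d2 = (p[0] - q[0]) ** 2 + (p[1] - q[1]) ** 2
    dt2 = (p[2] - q[2]) ** 2
    spatial = math.exp(-0.5 * d2 / space_scale ** 2)
    temporal = math.exp(-0.5 * dt2 / time_scale ** 2)
    return variance * spatial * temporal


if __name__ == "__main__":
    # Gauge and radar share the latent value but differ in bias and sensitivity.
    t_latent = 1.2
    print("gauge:", rain_probability(-0.5, 2.0, t_latent))
    print("radar:", rain_probability(-1.0, 1.5, t_latent, covariate_effect=-0.3))
    print("cov:", separable_kernel((0.0, 0.0, 0.0), (3.0, 4.0, 10.0)))
```

Using one latent value with two sets of (α, β) is what lets the framework attribute disagreement between gauge and radar to source‑specific bias rather than to the rainfall field itself.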

A Bayesian inference scheme is employed: prior distributions are assigned to all bias parameters (α₁, α₂, β₁, β₂) and GP hyper‑parameters, reflecting expert knowledge or historical calibration. Posterior distributions are sampled using a hybrid Markov chain Monte Carlo algorithm that combines Gibbs updates for conjugate components and Metropolis‑Hastings steps for non‑conjugate parts. This yields, for each grid cell and time step, a full predictive distribution of rainfall intensity, not merely a point estimate.
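
As a rough illustration of the Metropolis‑Hastings part of such a sampler, the sketch below runs a random‑walk update for the bias and sensitivity parameters (α, β) of a single logistic model. It is a toy under strong assumptions: the latent intensities are treated as known, the data are simulated, the priors are independent N(0, 10²), and the Gibbs updates and GP hyper‑parameters are left out. All function names are hypothetical.

```python
import math
import random


def log_posterior(alpha, beta, intensities, rain_flags, prior_sd=10.0):
    """Bernoulli-logit log-likelihood plus assumed N(0, prior_sd^2) priors."""
    total = -(alpha ** 2 + beta ** 2) / (2.0 * prior_sd ** 2)
    for t, y in zip(intensities, rain_flags):
        eta = alpha + beta * t
        # Stable evaluation of log(1 + exp(eta)).
        softplus = max(eta, 0.0) + math.log1p(math.exp(-abs(eta)))
        total += y * eta - softplus
    return total


def metropolis(intensities, rain_flags, n_iter=3000, step=0.3, seed=0):
    """Random-walk Metropolis over (alpha, beta); returns samples and acceptance rate."""
    rng = random.Random(seed)
    alpha, beta = 0.0, 0.0
    current = log_posterior(alpha, beta, intensities, rain_flags)
    samples, accepted = [], 0
    for _ in range(n_iter):
        a_new = alpha + rng.gauss(0.0, step)
        b_new = beta + rng.gauss(0.0, step)
        proposed = log_posterior(a_new, b_new, intensities, rain_flags)
        # Symmetric proposal, so the acceptance ratio is the posterior ratio.
        if rng.random() < math.exp(min(0.0, proposed - current)):
            alpha, beta, current = a_new, b_new, proposed
            accepted += 1
        samples.append((alpha, beta))
    return samples, accepted / n_iter


def simulate(n=200, alpha=-1.0, beta=1.5, seed=1):
    """Simulated intensities and rain indicators; stands in for real data."""
    rng = random.Random(seed)
    intensities = [rng.uniform(0.0, 3.0) for _ in range(n)]
    flags = [
        1 if rng.random() < 1.0 / (1.0 + math.exp(-(alpha + beta * t))) else 0
        for t in intensities
    ]
    return intensities, flags


if __name__ == "__main__":
    ts, ys = simulate()
    draws, rate = metropolis(ts, ys)
    kept = draws[1000:]  # discard burn-in
    print("acceptance rate:", round(rate, 2))
    print("posterior mean beta:", sum(b for _, b in kept) / len(kept))
```

In the full scheme the latent field would itself be updated at each iteration, so the retained draws would describe rainfall intensity at every grid cell and time step rather than only the regression parameters.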

The methodology is evaluated on a mesoscale convective system over the Midwestern United States, using 5‑minute, 500‑meter resolution data. Three benchmarks are considered: (1) conventional Zₑ‑R conversion followed by linear interpolation, (2) Kriging of gauge data alone, and (3) a simple Bayesian data‑fusion model without explicit bias modeling. The proposed framework achieves a root‑mean‑square error of 0.42 mm h⁻¹ and a continuous ranked probability score of 0.31 mm h⁻¹, outperforming the benchmarks by 15‑25%, particularly in regions where rainfall intensity changes abruptly along storm fronts. Moreover, the posterior predictive intervals provide a quantitative measure of uncertainty, which, when propagated into hydrological models, improves flash‑flood risk estimates.
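
For readers unfamiliar with the two scores, the sketch below shows one common way to compute them: RMSE from point predictions, and the continuous ranked probability score from posterior predictive draws using the standard ensemble estimator E|X − y| − ½·E|X − X′|. Whether the authors use this particular CRPS estimator is not stated, so treat it as an assumption; the function names are hypothetical.

```python
import math


def rmse(predictions, observations):
    """Root-mean-square error between paired predictions and observations."""
    n = len(predictions)
    return math.sqrt(sum((p - o) ** 2 for p, o in zip(predictions, observations)) / n)


def ensemble_crps(ensemble, observation):
    """CRPS estimated from predictive draws: E|X - y| - 0.5 * E|X - X'|."""
    m = len(ensemble)
    spread_to_obs = sum(abs(x - observation) for x in ensemble) / m
    internal_spread = sum(abs(xi - xj) for xi in ensemble for xj in ensemble) / (2.0 * m * m)
    return spread_to_obs - internal_spread


if __name__ == "__main__":
    print(rmse([1.0, 2.0, 3.0], [1.0, 2.0, 5.0]))
    print(ensemble_crps([1.0, 3.0], 2.0))
```

CRPS is expressed in the units of the forecast variable and reduces to the absolute error when the predictive distribution collapses to a single value, which is why it can be reported in mm h⁻¹ alongside RMSE.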

In conclusion, the study demonstrates that explicitly modeling the distinct bias and error structures of gauges and radar within a latent‑process framework yields superior rainfall intensity fields with well‑characterized uncertainties. The authors suggest future extensions including the integration of multiple radar networks, satellite‑derived precipitation products, and real‑time implementation strategies to support operational forecasting.