The emergence of bluff in poker-like games

We present two adaptive learning models of poker-like games and use them to show that bluffing strategies emerge naturally and can be both rational and evolutionarily stable. Despite their very simple learning algorithms, agents learn to bluff, and the best bluffer is usually the winner.

💡 Research Summary

The paper investigates how bluffing—a quintessentially deceptive tactic in poker—can arise spontaneously in simple adaptive agents playing a poker‑like game. The authors construct two computational models. The first is a reinforcement‑learning framework based on a Q‑learning variant: agents represent the game state by the currently revealed community cards and the history of bets, choose among actions (bet, check, fold), receive a reward of +1 for winning a hand and –1 for losing, and update their Q‑values using a learning rate α while exploring with an ε‑greedy policy. The second model is an evolutionary‑game framework in which a population of N agents each carries a fixed mixed strategy (e.g., a probability of bluffing, a probability of defensive betting). After each round, the payoff of each strategy is measured, and strategies are replicated proportionally to their average payoff with a replication strength β; a small mutation rate μ introduces novel strategies.
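The reinforcement-learning model described above can be illustrated with a minimal tabular sketch. It is not the authors' implementation: the state encoding (any hashable value, such as a tuple of public cards and bet history), the discount factor `gamma`, and the function names are assumptions made for illustration. Only the action set, the ±1 reward, the learning rate α, and the ε-greedy policy come from the summary.

```python
import random
from collections import defaultdict

ACTIONS = ("bet", "check", "fold")  # action set named in the summary


def make_q_table():
    """Q-table mapping a hashable state to one value per action (all start at 0)."""
    return defaultdict(lambda: {a: 0.0 for a in ACTIONS})


def choose_action(q, state, epsilon, rng=random):
    """Epsilon-greedy: explore with probability epsilon, otherwise act greedily."""
    if rng.random() < epsilon:
        return rng.choice(ACTIONS)
    values = q[state]
    best = max(values.values())
    # Break ties at random so no action is favoured by ordering alone.
    return rng.choice([a for a in ACTIONS if values[a] == best])


def update(q, state, action, reward, alpha, next_state=None, gamma=1.0):
    """One Q-learning step; next_state=None marks the end of a hand.

    gamma is an assumption: the summary does not mention discounting.
    """
    future = max(q[next_state].values()) if next_state is not None else 0.0
    target = reward + gamma * future
    q[state][action] += alpha * (target - q[state][action])
```

For example, calling `update(q, state, "bet", 1.0, alpha=0.5)` after a won hand moves the value of betting in that state halfway toward +1; letting `epsilon` decay over rounds then shifts the agent from exploration to exploiting the learned values.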

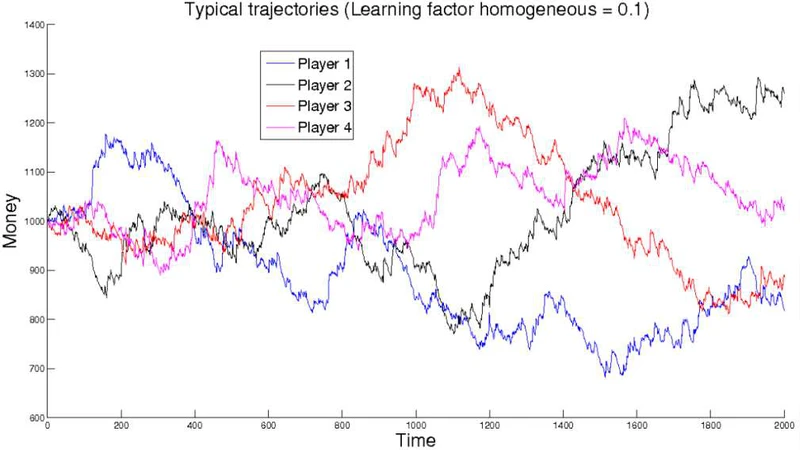

Both models are tested on a stylized poker‑like game that preserves the essential information asymmetry of real poker: players see only public cards and opponent actions, never the opponent’s private hand. Simulations run for tens of thousands of rounds, starting from a uniformly random distribution of strategies. The results are strikingly consistent across the two frameworks. Agents quickly discover that occasionally betting aggressively with a weak hand—i.e., bluffing—can induce opponents to fold, yielding a net positive expected payoff. In the Q‑learning simulations, as ε decays the agents shift from conservative betting to a mixed policy that includes bluffing roughly 30 % of the time. When the bluff frequency exceeds a certain threshold, opponents become overly defensive, and the overall win rate of bluffers declines, revealing a “bluff saturation” effect. In the evolutionary simulations, the same approximate bluff proportion emerges as an evolutionarily stable mixed strategy (ESS).
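Why a bluff can have positive expected payoff is easy to check with a small calculation. The sketch below assumes a simplified setting that the summary does not spell out: the bluffer risks a hypothetical stake `bet` to win a hypothetical `pot`, and always loses the stake when called. Under those assumptions the bluff pays off whenever the opponent folds more often than `bet / (pot + bet)`.

```python
def bluff_ev(pot, bet, fold_prob):
    """Expected payoff of a pure bluff.

    Assumes the bluffer wins `pot` when the opponent folds and loses `bet`
    when called (a weak hand never wins at showdown).
    """
    return fold_prob * pot - (1.0 - fold_prob) * bet


def break_even_fold_prob(pot, bet):
    """Fold probability at which the bluff has zero expected payoff."""
    return bet / (pot + bet)
```

With a pot of 2 and a bet of 1, the bluff breaks even when the opponent folds one time in three, and is profitable above that frequency.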

To explain these observations analytically, the authors formulate a payoff matrix for two representative strategies: a pure conservative strategy and a mixed strategy that bluffs with probability p. By inserting this matrix into the replicator dynamics equation, they identify fixed points and assess their stability. The mixed strategy with p≈0.3 is shown to be an ESS: it cannot be invaded by a pure strategy because any mutant that bluffs less is exploited by the resident bluffers, while a mutant that bluffs more suffers from the opponent’s heightened caution. The ESS analysis also demonstrates that the expected payoff of bluffing is maximized when opponents underestimate the bluff probability, a condition that the adaptive agents learn to create through occasional high‑stakes bets.
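The fixed-point analysis can be sketched for a generic two-strategy game. The payoff matrix below is hypothetical and chosen only so that a stable interior mixture exists; it is not the matrix from the paper, and its fixed point (2/3) is unrelated to the reported p≈0.3. Here `x` is the population share of strategy 0, and the replicator equation is integrated with a simple Euler step.

```python
def replicator_step(x, payoff, dt=0.01):
    """One Euler step of dx/dt = x (1 - x) (f0 - f1) for a 2x2 game."""
    (a, b), (c, d) = payoff
    f0 = a * x + b * (1.0 - x)  # average payoff of strategy 0
    f1 = c * x + d * (1.0 - x)  # average payoff of strategy 1
    return x + dt * x * (1.0 - x) * (f0 - f1)


def interior_fixed_point(payoff):
    """Share of strategy 0 at which both strategies earn the same payoff."""
    (a, b), (c, d) = payoff
    denom = (b - d) + (c - a)
    if denom == 0:
        return None
    x_star = (b - d) / denom
    return x_star if 0.0 < x_star < 1.0 else None


def simulate(x0, payoff, steps=20000, dt=0.01):
    """Iterate the replicator step from an initial share x0."""
    x = x0
    for _ in range(steps):
        x = replicator_step(x, payoff, dt)
    return x


# Hypothetical payoffs: strategy 0 does badly against itself (a < c) but well
# against strategy 1 (b > d), which makes the interior fixed point stable.
PAYOFF = ((-1.0, 2.0), (0.0, 0.0))
```

Starting from either side of the fixed point, `simulate` approaches the value returned by `interior_fixed_point(PAYOFF)`, which is the numerical counterpart of the stability argument: a strategy that is too rare earns more than average and grows, and one that is too common earns less and shrinks.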

Parameter sensitivity analyses reveal that the learning rate α, exploration rate ε, replication strength β, and mutation rate μ all shape the trajectory toward the bluffing ESS. High ε accelerates the initial discovery of bluffing but, if sustained, prevents convergence to a stable mixed policy. Strong replication (high β) speeds up the spread of bluffing but can reduce strategic diversity unless mutation (μ) is present.
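The interplay of β and μ can be seen in a sketch of one generation of the evolutionary model. The summary states only that strategies replicate in proportion to payoff with strength β and mutate at rate μ; the exponential weighting `exp(beta * payoff)`, the representation of a strategy as a single bluff probability, and the uniform redraw on mutation are assumptions made here so that negative payoffs are handled cleanly.

```python
import math
import random


def next_generation(bluff_probs, payoffs, beta, mu, rng=random):
    """Resample a population of bluff probabilities by payoff, then mutate.

    Selection weights are exp(beta * payoff) (an assumed form); the maximum
    payoff is subtracted first to avoid overflow, which leaves the relative
    weights unchanged.
    """
    top = max(payoffs)
    weights = [math.exp(beta * (f - top)) for f in payoffs]
    offspring = rng.choices(bluff_probs, weights=weights, k=len(bluff_probs))
    # With probability mu an offspring is replaced by a fresh random strategy.
    return [rng.random() if rng.random() < mu else p for p in offspring]
```

With `mu=0` and a large `beta`, the population collapses onto the single best-paid strategy within a generation, which mirrors the loss of diversity noted above; a small positive `mu` keeps reintroducing alternatives.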

The authors complement the computational work with a small human subject experiment in which participants play the same simplified poker game. Human players exhibit a bluff frequency of roughly 25 %, close to the model’s predicted optimum, and their win rates correlate with the degree to which they successfully manipulate opponents’ expectations. This empirical alignment suggests that the simple adaptive mechanisms captured by the models are sufficient to reproduce key aspects of human bluffing behavior.

In conclusion, the study demonstrates that bluffing can emerge organically from minimalistic learning rules without requiring sophisticated belief‑modeling or recursive theory‑of‑mind reasoning. Both reinforcement learning and evolutionary dynamics converge on a mixed strategy that incorporates bluffing at a stable proportion, and this strategy is both payoff‑maximizing and resistant to invasion. The findings have important implications for the design of AI agents in imperfect‑information games: even very simple adaptive algorithms can develop sophisticated deceptive tactics, reducing the need for hand‑crafted bluffing modules.
