Parallelizing the XSTAR Photoionization Code

We describe two means by which XSTAR, a code which computes physical conditions and emission spectra of photoionized gases, has been parallelized. The first is pvm_xstar, a wrapper which can be used in place of the serial xstar2xspec script to foster concurrent execution of the XSTAR command line application on independent sets of parameters. The second is PModel, a plugin for the Interactive Spectral Interpretation System (ISIS) which allows arbitrary components of a broad range of astrophysical models to be distributed across processors during fitting and confidence limits calculations, by scientists with little training in parallel programming. Plugging the XSTAR family of analytic models into PModel enables multiple ionization states (e.g., of a complex absorber/emitter) to be computed simultaneously, alleviating the often prohibitive expense of the traditional serial approach. Initial performance results indicate that these methods substantially enlarge the problem space to which XSTAR may be applied within practical timeframes.

💡 Research Summary

The paper presents two complementary approaches to parallelize the XSTAR photoionization code, dramatically reducing the time required for large‑scale spectral modeling. XSTAR, originally written in Fortran‑77, computes the physical state and emission spectrum of photoionized gases and is widely used in astrophysics through analytic models such as warmabs and hotabs. However, each model component can take 10–15 seconds to evaluate on a modern CPU, leading to fit loops that may run for days or weeks when many components and parameters are involved.

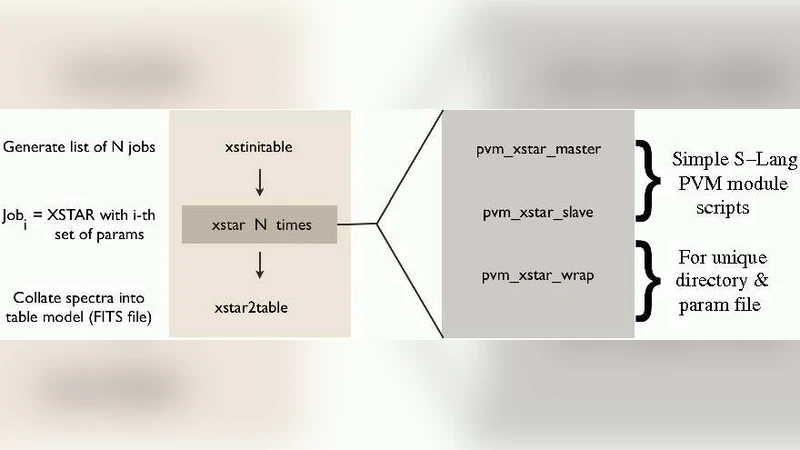

The first method, pvm_xstar, is a wrapper that replaces the traditional serial xstar2xspec script. It exploits the independence of individual parameter sets by launching a separate XSTAR instance for each set and distributing the instances across multiple processors with the Parallel Virtual Machine (PVM) library. The implementation consists of four scripts (two Bash wrappers and two S-Lang master/slave scripts) that manage job submission, run each job in its own directory to avoid FITS I/O conflicts, and collect the results. Benchmarks on a 52-core Beowulf cluster (2.4 GHz Opteron, 4 GB RAM per node) show that a workload of 4,200 XSTAR runs, which would require 7.5 days on a single 2.6 GHz Opteron, completes in 110 minutes. A speed-up of this size makes pvm_xstar well suited to batch-mode simulations, Monte Carlo parameter sweeps, and any workflow that requires many independent XSTAR executions.
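The pattern described above (independent parameter sets, one isolated working directory per job, results gathered at the end) can be sketched in Python. This is not the pvm_xstar implementation, which uses Bash and S-Lang on top of PVM; it is a minimal stand-in using a thread pool, in which `run_one`, `run_grid`, the output file name, and the parameter names are all hypothetical and no XSTAR executable is actually invoked.

```python
import tempfile
from concurrent.futures import ThreadPoolExecutor
from pathlib import Path


def run_one(job_id, params, root):
    """Stand-in for one XSTAR run (hypothetical helper).

    A real wrapper would launch the executable here, for example with
    subprocess.run(..., cwd=workdir), instead of writing a text file.
    """
    workdir = Path(root) / f"job_{job_id:04d}"
    workdir.mkdir(parents=True)
    # Every job writes the same file name, but inside its own directory,
    # so concurrent jobs cannot overwrite each other's output.
    out = workdir / "output.txt"
    out.write_text(",".join(f"{k}={v}" for k, v in sorted(params.items())))
    return job_id, out


def run_grid(param_sets, max_workers=4):
    """Run every parameter set concurrently; return results in input order."""
    root = tempfile.mkdtemp(prefix="xstar_grid_")
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        futures = [pool.submit(run_one, i, p, root)
                   for i, p in enumerate(param_sets)]
        results = dict(f.result() for f in futures)
    return [results[i].read_text() for i in range(len(param_sets))]


# Parameter names and values are assumptions chosen only for illustration.
grid = [{"column": c, "rlogxi": x}
        for c in (1e21, 1e22) for x in (1.0, 2.0, 3.0)]
outputs = run_grid(grid)
```

Threads are enough in this sketch because each worker would spend its time waiting on an external process; passing the directory explicitly, rather than calling `os.chdir`, keeps the isolation safe when several jobs share one Python process.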

The second method, PModel, is an ISIS plug-in that parallelizes the model evaluation step itself. The authors identify two intrinsic sources of parallelism: (1) component independence: when model components are mathematically independent (e.g., warmabs(1)+warmabs(2)+hotabs(1)), they can be evaluated concurrently on separate CPUs; (2) grid independence: when the computation for each energy bin does not depend on neighboring bins, the spectral grid can be split into sub-grids that are processed in parallel. PModel provides four user-level functions (pm_add, pm_mul, pm_func, and pm_subgrid(N)) that act as stubs in a model expression, marking which parts should be dispatched in parallel. Internally, the plug-in uses the S-Lang PVM module to launch worker processes, each handling one component or one sub-grid, and then combines the partial results with an additive, multiplicative, or user-defined reduction. ISIS itself requires no code changes: the parallelism is encapsulated entirely within PModel, so astronomers with minimal programming expertise can exploit multi-core or cluster resources.
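The two sources of parallelism can be illustrated with a small Python sketch. It does not reproduce PModel's S-Lang API: `parallel_combine` and `parallel_subgrid` are hypothetical names that only mimic the roles of the additive/multiplicative reductions and of the sub-grid split, and the toy Gaussian components stand in for expensive models such as warmabs.

```python
import math
from concurrent.futures import ThreadPoolExecutor
from functools import reduce
from operator import add, mul


def gaussian(center, width):
    """Return a toy model component: a function of an energy grid."""
    def component(grid):
        return [math.exp(-0.5 * ((e - center) / width) ** 2) for e in grid]
    return component


def parallel_combine(op, components, grid, pool):
    """Component independence: evaluate each component concurrently,
    then reduce the per-component spectra bin by bin with `op`."""
    spectra = list(pool.map(lambda c: c(grid), components))
    return [reduce(op, bins) for bins in zip(*spectra)]


def parallel_subgrid(n, component, grid, pool):
    """Grid independence: split the grid into about n contiguous pieces,
    evaluate each piece concurrently, and concatenate in order."""
    size = math.ceil(len(grid) / n)
    pieces = [grid[i:i + size] for i in range(0, len(grid), size)]
    parts = pool.map(component, pieces)
    return [value for part in parts for value in part]


grid = [0.5 + 0.1 * i for i in range(100)]
a, b = gaussian(2.0, 0.3), gaussian(6.4, 0.1)

with ThreadPoolExecutor(max_workers=4) as pool:
    summed = parallel_combine(add, [a, b], grid, pool)
    product = parallel_combine(mul, [a, b], grid, pool)
    split = parallel_subgrid(4, a, grid, pool)

# Serial reference values for comparison.
serial_a, serial_b = a(grid), b(grid)
```

The thread pool here only demonstrates the structure; pure-Python arithmetic in threads does not run on several cores at once, so a real speed-up needs separate worker processes, which is the role the PVM workers play in PModel.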

Performance tests on a realistic analysis scenario involving more than 20 model components and hundreds of free parameters show a reduction from over four weeks of serial computation to roughly 22 hours on the same 52-core cluster, a speed-up of roughly 30×. This enables the exploration of far more complex physical scenarios within practical time frames. Because PModel's design is generic, it can be applied to any computationally intensive model, not just XSTAR components, which broadens its applicability across X-ray spectroscopy and other domains that rely on ISIS.
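As a quick check on the figures quoted above, the speed-up and parallel efficiency can be worked out directly. The sketch below treats "over four weeks" as exactly four weeks, so the result is a lower bound; the function names are illustrative only.

```python
def speedup(serial_hours, parallel_hours):
    """Ratio of serial to parallel wall-clock time."""
    return serial_hours / parallel_hours


def efficiency(serial_hours, parallel_hours, cores):
    """Speed-up divided by the number of cores used."""
    return speedup(serial_hours, parallel_hours) / cores


serial_hours = 4 * 7 * 24   # four weeks (assumed lower bound) = 672 hours
parallel_hours = 22         # "roughly 22 hours"
cores = 52

s = speedup(serial_hours, parallel_hours)
e = efficiency(serial_hours, parallel_hours, cores)
print(f"speed-up of about {s:.1f}x at {e:.0%} parallel efficiency")
# prints: speed-up of about 30.5x at 59% parallel efficiency
```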

In conclusion, the authors deliver two open‑source tools—pvm_xstar for batch job distribution and PModel for interactive model evaluation—that together make high‑performance computing accessible to the astrophysical community. Both have already been deployed at multiple institutions on desktop multicore machines, workstation clusters, and larger parallel systems. The paper suggests future extensions such as GPU acceleration or MPI‑based implementations, indicating that the presented strategies form a solid foundation for scaling photoionization modeling to the demands of next‑generation X‑ray observatories.
