Min-Max decoding for non binary LDPC codes

Iterative decoding of non-binary LDPC codes is currently performed using either the Sum-Product or the Min-Sum algorithms or slightly different versions of them. In this paper, several low-complexity quasi-optimal iterative algorithms are proposed fo…

Authors: Valentin Savin

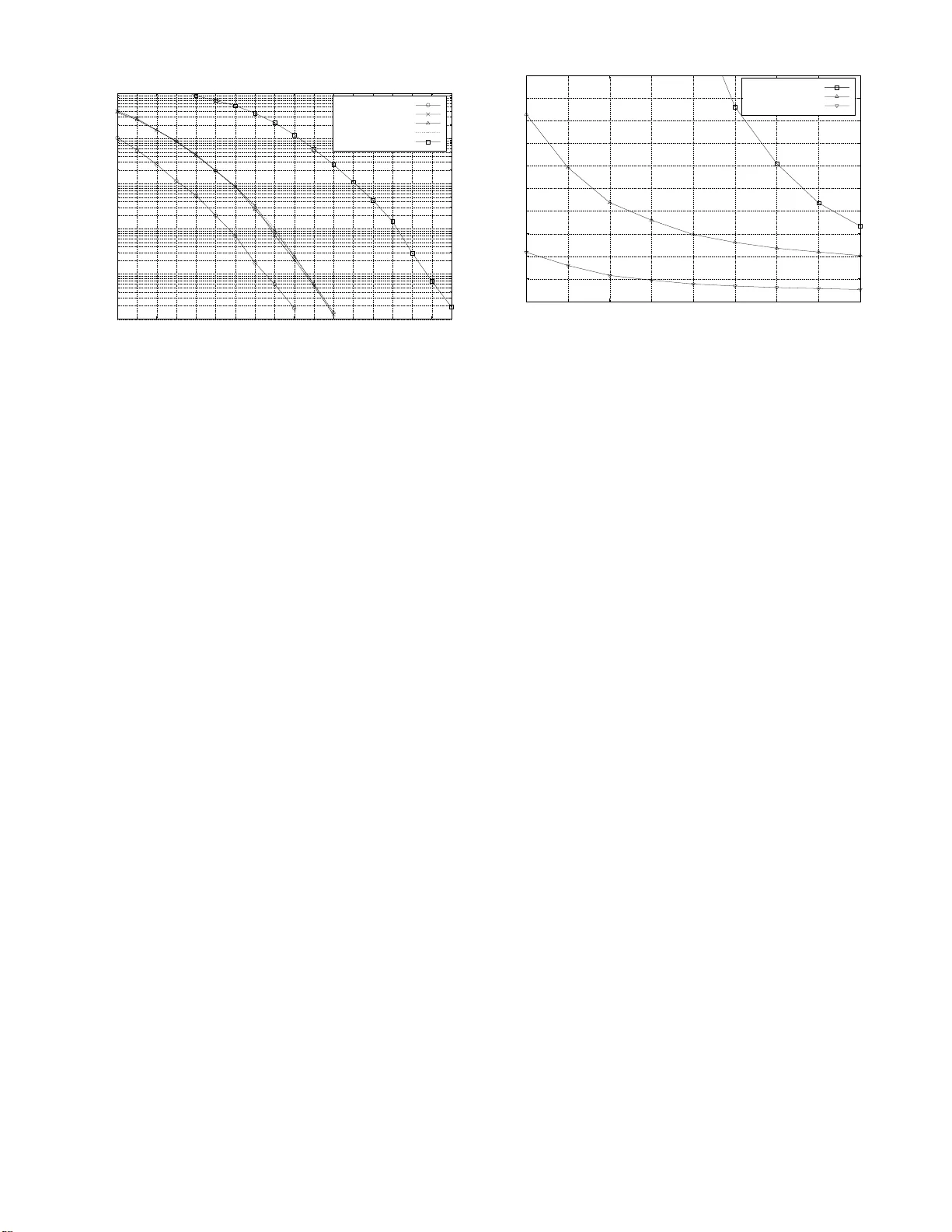

Valentin Savin, CEA-LETI, MINATEC, Grenoble, France, valentin.savin@cea.fr

Abstract: Iterative decoding of non-binary LDPC codes is currently performed using either the Sum-Product or the Min-Sum algorithms or slightly different versions of them. In this paper, several low-complexity quasi-optimal iterative algorithms are proposed for decoding non-binary codes. The Min-Max algorithm is one of them and it has the benefit of two possible LLR domain implementations: a standard implementation, whose complexity scales as the square of the Galois field's cardinality, and a reduced complexity implementation called selective implementation, which makes the Min-Max decoding very attractive for practical purposes.

Index Terms: LDPC codes, graph codes, iterative decoding.

I. INTRODUCTION

Although already proposed by Gallager in his PhD thesis [1], [2], the use of non-binary LDPC codes is still very limited today, mainly because of the decoding complexity of these codes. The optimal iterative decoding is performed by the Sum-Product algorithm [3] at the price of an increased complexity, computation instability, and dependence on thermal noise estimation errors. The Min-Sum algorithm [3] performs a sub-optimal iterative decoding, less complex than the Sum-Product decoding, and independent of thermal noise estimation errors. The sub-optimality of the Min-Sum decoding comes from the overestimation of extrinsic messages computed within the check-node processing.

The Sum-Product algorithm can be efficiently implemented in the probability domain using binary Fourier transforms [4] and its complexity is dominated by $O(q \log_2 q)$ sum and product operations for each check node processing, where $q$ is the cardinality of the Galois field of the non-binary LDPC code.
The Min-Sum decoding can be implemented either in the log-probability domain or in the log-likelihood ratio (LLR) domain and its complexity is dominated by $O(q^2)$ sum operations for each check node processing. In the LLR domain, a reduced selective implementation of the Min-Sum decoding, called Extended Min-Sum, was proposed in [5], [6]. Here "selective" means that the check-node processing uses the incoming messages concerning only a part of the Galois field elements. Non-binary LDPC codes were also investigated in [7], [8], [9].

In this paper we propose several new algorithms for decoding non-binary LDPC codes, one of which is called the Min-Max algorithm. They are all independent of thermal noise estimation errors and perform quasi-optimal decoding, meaning that they present a very small performance loss with respect to the optimal iterative decoding (Sum-Product). We also propose two implementations of the Min-Max algorithm, both in the LLR domain, so that the decoding is computationally stable: a "standard implementation" whose complexity scales as the square of the Galois field's cardinality and a reduced complexity implementation called "selective implementation". That makes the Min-Max decoding very attractive for practical purposes.

The paper is organized as follows. In the next section we briefly review several realizations of the Min-Sum algorithm for non-binary LDPC codes. It is intended to keep the paper self-contained but also to justify some of our choices regarding the new decoding algorithms introduced in Section III. The implementation of the Min-Max decoder is discussed in Section IV. Section V presents simulation results and Section VI concludes this paper.

Acknowledgment: Part of this work was performed in the frame of the ICT NEWCOM++ project, which is a partly EU funded Network of Excellence of the 7th European Framework Program.
The following notation will be used throughout the paper.

Notation related to the Galois field:
• $GF(q) = \{0, 1, \ldots, q-1\}$, the Galois field with $q$ elements, where $q$ is a power of a prime number. Its elements will be called symbols, in order to distinguish them from ordinary integers.
• $a, s, x$ will be used to denote $GF(q)$-symbols.
• $\mathbf{a}, \mathbf{s}, \mathbf{x}$ will be used to denote vectors of $GF(q)$-symbols. For instance, $\mathbf{a} = (a_1, \ldots, a_I) \in GF(q)^I$, etc.

Notation related to LDPC codes:
• $H \in M_{M,N}(GF(q))$, the $q$-ary check matrix of the code.
• $\mathcal{C}$, the set of codewords of the LDPC code.
• $\mathcal{C}_n(a)$, the set of codewords with the $n$th coordinate equal to $a$, for given $1 \le n \le N$ and $a \in GF(q)$.
• $\mathbf{x} = (x_1, x_2, \ldots, x_N)$, a $q$-ary codeword transmitted over the channel.

The Tanner graph associated with an LDPC code consists of $N$ variable nodes and $M$ check nodes representing the $N$ columns and the $M$ rows of the matrix $H$. A variable node and a check node are connected by an edge if the corresponding element of matrix $H$ is not zero. Each edge of the graph is labeled by the corresponding nonzero element of $H$.

Notation related to the Tanner graph:
• $\mathcal{H}$, the Tanner graph of the code.
• $n \in \{1, 2, \ldots, N\}$, a variable node of $\mathcal{H}$.
• $m \in \{1, 2, \ldots, M\}$, a check node of $\mathcal{H}$.
• $\mathcal{H}(n)$, the set of neighbor check nodes of the variable node $n$.
• $\mathcal{H}(m)$, the set of neighbor variable nodes of the check node $m$.
• $\mathcal{L}(m)$, the set of local configurations verifying the check node $m$, i.e. the set of sequences of $GF(q)$-symbols $\mathbf{a} = (a_n)_{n \in \mathcal{H}(m)}$ verifying the linear constraint:
$$\sum_{n \in \mathcal{H}(m)} h_{m,n}\, a_n = 0$$
• $\mathcal{L}(m \mid a_n = a)$, the set of local configurations $\mathbf{a}$ verifying $m$, such that $a_n = a$, for given $n \in \mathcal{H}(m)$ and $a \in GF(q)$.
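The sets $\mathcal{L}(m)$ and $\mathcal{L}(m \mid a_n = a)$ defined above can be enumerated directly for a small check node. The following is a minimal sketch, not part of the original paper: it assumes that $q$ is prime, so that $GF(q)$ arithmetic reduces to integer arithmetic modulo $q$, and the function names and edge labels are hypothetical.

```python
from itertools import product

def local_configurations(h, q):
    """Return L(m): all tuples (a_1, ..., a_d) with sum_i h[i] * a_i = 0 in GF(q).

    Assumption (not from the paper): q is prime, so GF(q) is the integers mod q.
    h holds the nonzero edge labels h_{m,n} of the check node's neighbours.
    """
    return [a for a in product(range(q), repeat=len(h))
            if sum(hi * ai for hi, ai in zip(h, a)) % q == 0]

def restricted_configurations(h, q, pos, a):
    """Return L(m | a_n = a), where `pos` is the position of node n in h."""
    return [c for c in local_configurations(h, q) if c[pos] == a]

h = [1, 2, 1]   # hypothetical edge labels of one check node of degree 3
q = 3
configs = local_configurations(h, q)
restricted = restricted_configurations(h, q, 0, 1)
```

Since all edge labels are nonzero, any $d-1$ symbols determine the last one, so a check node of degree $d$ has $q^{d-1}$ local configurations. This is why the brute-force enumeration is only practical for illustration.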
An iterative decoding algorithm consists of an initialization step followed by an iterative exchange of messages between variable and check nodes connected in the Tanner graph.

Notation related to an iterative decoding algorithm:
• $\gamma_n(a)$, the a priori information of the variable node $n$ concerning the symbol $a$.
• $\tilde{\gamma}_n(a)$, the a posteriori information of the variable node $n$ concerning the symbol $a$.
• $\alpha_{m,n}(a)$, the message from the variable node $n$ to the check node $m$ concerning the symbol $a$.
• $\beta_{m,n}(a)$, the message from the check node $m$ to the variable node $n$ concerning the symbol $a$.

II. REALIZATIONS OF THE MIN-SUM DECODING FOR NON BINARY LDPC CODES

A. Min-Sum decoding

The Min-Sum decoding is generally implemented in the log-probability domain and it performs the following operations:

Initialization
• A priori information: $\gamma_n(a) = -\ln\left(\Pr(x_n = a \mid \text{channel})\right)$
• Variable node messages: $\alpha_{m,n}(a) = \gamma_n(a)$

Iterations
• Check node processing:
$$\beta_{m,n}(a) = \min_{(a_{n'})_{n' \in \mathcal{H}(m)} \in \mathcal{L}(m \mid a_n = a)} \; \sum_{n' \in \mathcal{H}(m) \setminus \{n\}} \alpha_{m,n'}(a_{n'})$$
• Variable node processing:
$$\alpha_{m,n}(a) = \gamma_n(a) + \sum_{m' \in \mathcal{H}(n) \setminus \{m\}} \beta_{m',n}(a)$$
• A posteriori information:
$$\tilde{\gamma}_n(a) = \gamma_n(a) + \sum_{m \in \mathcal{H}(n)} \beta_{m,n}(a)$$

For practical purposes, messages $\alpha_{m,n}(a)$ and $\beta_{m,n}(a)$ should be normalized in order to avoid computational instability (otherwise they could "escape" to infinity). The check node processing, which dominates the decoding complexity, can be implemented using a forward-backward computation method.

Assuming that the Tanner graph is cycle free, the a posteriori information converges after finitely many iterations to [3]:
$$\tilde{\gamma}_n(a) = \min_{\mathbf{a} \in \mathcal{C}_n(a)} \left( \sum_{k=1}^{N} \gamma_k(a_k) \right)$$
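The Min-Sum check node processing rule above can be sketched by brute force, enumerating every local configuration of one check node. This is only an illustration under stated assumptions, not the forward-backward method mentioned in the text: $q$ is assumed prime (arithmetic modulo $q$), and the function name, message values and edge labels are hypothetical.

```python
from itertools import product

def min_sum_check_node(alpha, h, q, pos):
    """Brute-force Min-Sum check-node update for the neighbour at index `pos`.

    alpha[i][a] is the incoming message alpha_{m,n_i}(a) and h[i] the edge label.
    Assumption (not from the paper): q is prime, so GF(q) is the integers mod q.
    Returns the list beta with beta[a] = beta_{m,n_pos}(a).
    """
    d = len(h)
    beta = [float("inf")] * q
    for conf in product(range(q), repeat=d):
        if sum(hi * ai for hi, ai in zip(h, conf)) % q != 0:
            continue  # not a local configuration of this check node
        # Sum the incoming messages of all neighbours except the target one.
        cost = sum(alpha[i][conf[i]] for i in range(d) if i != pos)
        beta[conf[pos]] = min(beta[conf[pos]], cost)
    return beta

# Hypothetical messages for a degree-3 check node over GF(3).
alpha = [[0.0, 0.0, 0.0], [0.0, 1.0, 2.0], [0.5, 0.0, 3.0]]
beta = min_sum_check_node(alpha, [1, 1, 1], 3, 0)
```

The message of the target neighbour (`alpha[0]` here) is never read, which reflects the extrinsic nature of the check node output.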
Moreover, if $s_n = \operatorname{argmin}_{a \in GF(q)} \tilde{\gamma}_n(a)$ is the most likely symbol according to the a posteriori information computed above, then the sequence $\mathbf{s} = (s_1, s_2, \ldots, s_N)$ is a codeword (this is no longer true if the Tanner graph contains cycles) and it can be easily verified that
$$\mathbf{s} = \operatorname{argmax}_{\mathbf{a} \in \mathcal{C}} \Pr(\mathbf{x} = \mathbf{a} \mid \text{channel}).$$
Thus, under the cycle free assumption the Min-Sum decoding always outputs a codeword and the above equality looks rather like a maximum likelihood decoding, which is in contrast with the sub-optimality property. In [3], the author concludes that the Min-Sum decoding is optimal in terms of block error probability, but our explanation is quite different. What really happens is that the probabilities in the above equality do not take into account the dependence relations between the codeword's symbols (or equivalently between the graph's variable nodes). Taking into account only the channel observation and no prior information about dependence relations between the codeword's symbols, we have
$$\Pr(\mathbf{x} = \mathbf{a} \mid \text{channel}) = \prod_{n=1}^{N} \Pr(x_n = a_n \mid \text{channel}),$$
therefore even a sequence $\mathbf{x}$ which is not a codeword has a nonzero probability. In some sense, the Min-Sum decoding converges to a maximum likelihood decoding, distorted by dependence relations between the codeword's symbols.

B. Equivalent iterative decoders

The term equivalent (iterative) decoders will be employed several times throughout this paper. We begin this section by providing its rigorous definition. Consider the a posteriori information available at a variable node $n$ after the $l$th decoding iteration: it defines an order between the symbols of the Galois field, starting with the most likely symbol and ending with the least likely one. Note that the most likely symbol may correspond to the minimum or to the maximum of the a posteriori information, depending on the decoding algorithm.
We call this order the a posteriori order of variable node $n$ at iteration $l$. We say that two decoders are equivalent if, for each variable node $n$, they both induce the same a posteriori order at any iteration $l$. In particular, assuming that the hard decoding corresponds to the most likely symbol, both decoders output the same sequence of $GF(q)$-symbols. Two iterative decoders, which are equivalent to the Min-Sum decoder, are presented below.

1) Min-Sum$_0$ decoding: The Min-Sum$_0$ decoding performs the following operations.

Initialization
• A priori information (see footnote 1): $\gamma_n(a) = \ln\left(\Pr(x_n = 0 \mid \text{channel}) / \Pr(x_n = a \mid \text{channel})\right)$
• Variable node messages: $\alpha_{m,n}(a) = \gamma_n(a)$

Iterations
• Check node processing: same as for Min-Sum (II-A)
• Variable node processing:
$$\alpha'_{m,n}(a) = \gamma_n(a) + \sum_{m' \in \mathcal{H}(n) \setminus \{m\}} \beta_{m',n}(a)$$
$$\alpha_{m,n}(a) = \alpha'_{m,n}(a) - \alpha'_{m,n}(0)$$
• A posteriori information: same as for Min-Sum (II-A)

Footnote 1: We assume that all codewords are equally likely to be sent.

We note that the exchanged messages represent log-likelihood ratios with respect to a fixed symbol (here, the symbol $0 \in GF(q)$ is used, but obviously other symbols may be considered). The main advantage of this decoder with respect to the classical Min-Sum decoding is that it is computationally stable, so that there is no need to normalize the exchanged messages. This algorithm is also known as the Extended Min-Sum algorithm [5], [6].

2) Min-Sum$^\star$ decoding: The Min-Sum$^\star$ decoding performs the following operations.

Initialization
• A priori information: $\gamma_n(a) = \ln\left(\Pr(x_n = s_n \mid \text{channel}) / \Pr(x_n = a \mid \text{channel})\right)$, where $s_n$ is the most likely symbol for $x_n$.
• Variable node messages: $\alpha_{m,n}(a) = \gamma_n(a)$

Iterations
• Check node processing: same as for Min-Sum (II-A)
• Variable node processing:
$$\alpha'_{m,n}(a) = \gamma_n(a) + \sum_{m' \in \mathcal{H}(n) \setminus \{m\}} \beta_{m',n}(a)$$
$$\alpha'_{m,n} = \min_{a \in GF(q)} \alpha'_{m,n}(a)$$
$$\alpha_{m,n}(a) = \alpha'_{m,n}(a) - \alpha'_{m,n}$$
• A posteriori information: same as for Min-Sum (II-A)

Like the Min-Sum$_0$ decoding, the Min-Sum$^\star$ decoding also operates in the LLR domain and is computationally stable. The main difference is that the exchanged messages represent log-likelihood ratios with respect to the most likely symbol, which may vary from one variable node to another or, within a fixed variable node, from one iteration to the next.

Theorem 1: The Min-Sum, Min-Sum$_0$ and Min-Sum$^\star$ decoders are equivalent.

It follows that the Min-Sum$^\star$ decoding does not present any practical interest: the equivalent Min-Sum$_0$ decoding is already computationally stable and less complex (it does not require the minimum computation in the variable node processing step). However, the Min-Sum$^\star$ motivates the decoding algorithms that will be introduced in the following section. The fundamental observation is that messages concerning most likely symbols are always equal to zero, and messages concerning the other symbols are positive. Therefore, exchanged messages can be seen as metrics indicating how far a given symbol is from the most likely one. This will be developed in the next section.

III. MIN-NORM DECODING FOR NON BINARY LDPC CODES

According to the discussion in the above section, we interpret variable node messages as metrics indicating the distance between a given symbol and the most likely one. Consider a check node $m$ and a variable node $n \in \mathcal{H}(m)$. Using the received extrinsic information, i.e. metrics received from variable nodes $n' \in \mathcal{H}(m) \setminus \{n\}$, the check node $m$ has to evaluate:
• the most likely symbol for variable node $n$,
• how far the other symbols are from the most likely one.

To simplify notation, we set $\mathcal{H}(m) = \{n, n_1, \ldots, n_d\}$ and let $s_i$ be the most likely symbol corresponding to variable node $n_i$, $i = 1, \ldots, d$. Since the linear constraint corresponding to the check node $m$ has to be satisfied, the most likely symbol for the variable node $n$ is the unique symbol $s \in GF(q)$ such that:
$$(s, s_1, \ldots, s_d) \in \mathcal{L}(m)$$
On the other hand, each symbol $a \in GF(q)$ corresponds to a set of $d$-tuples $(a_1, \ldots, a_d)$ such that $(a, a_1, \ldots, a_d) \in \mathcal{L}(m)$:
$$\mathcal{L}_a(m) = \{(a_1, \ldots, a_d) \mid (a, a_1, \ldots, a_d) \in \mathcal{L}(m)\}$$
Thus, identifying $s \equiv (s_1, \ldots, s_d)$ and $a \equiv \mathcal{L}_a(m)$, the distance between the most likely symbol $s$ and the symbol $a$ can be evaluated as the distance from the sequence $(s_1, \ldots, s_d)$ to the set $\mathcal{L}_a(m)$. As usual, we consider that the distance from a point to a set is equal to the minimum distance between the given point and the points of the given set. In addition, we have to specify a rule that computes the distance between two sequences $(s_1, \ldots, s_d)$ and $(a_1, \ldots, a_d)$, taking into account the received "marginal distances" between the $s_i$'s and the $a_i$'s. This can be done by using the distance associated with one of the $p$-norms ($p \ge 1$), or the infinity (also called maximum) norm on an appropriate multidimensional vector space. Precisely:
$$\begin{aligned}
\beta_{m,n}(a) &= \operatorname{dist}\left((s_1, \ldots, s_d), \mathcal{L}_a(m)\right) \\
&= \min_{(a_1, \ldots, a_d) \in \mathcal{L}_a(m)} \operatorname{dist}\left((s_1, \ldots, s_d), (a_1, \ldots, a_d)\right) \\
&= \min_{(a_1, \ldots, a_d) \in \mathcal{L}_a(m)} \left\| \left(\operatorname{dist}(s_1, a_1), \ldots, \operatorname{dist}(s_d, a_d)\right) \right\|_p \\
&= \min_{(a_1, \ldots, a_d) \in \mathcal{L}_a(m)} \left\| \left(\alpha_1(a_1), \ldots, \alpha_d(a_d)\right) \right\|_p
\end{aligned}$$
Note that for $p = 1$ we obtain the Min-Sum$^\star$ decoding from the above section. Taking into account that
$$\|\cdot\|_1 \ge \|\cdot\|_2 \ge \cdots \ge \|\cdot\|_\infty,$$
it follows that using any of the $\|\cdot\|_p$ or $\|\cdot\|_\infty$ norms would reduce the value of check node messages, which is desirable, since these messages are actually overestimated by the Min-Sum$^\star$ algorithm. One could think that the infinity norm would excessively reduce check node message values, but this is not true. In fact, the distance between $\|\cdot\|_1$ and the infinity norm is less than twice the distance between $\|\cdot\|_1$ and the Euclidean ($p = 2$) norm. In practice, it turns out that the infinity norm induces a more accurate computation of check node messages than $\|\cdot\|_1$.

We focus now on the Euclidean and the infinity norms. The derived decoding algorithms are called Euclidean decoding and Min-Max decoding, respectively. They perform the same initialization step, variable node processing, and a posteriori information update as the Min-Sum$^\star$ decoding; therefore only the check node processing step will be described below.

A. Euclidean decoding

• Check node processing:
$$\beta_{m,n}(a) = \min_{(a_{n'})_{n' \in \mathcal{H}(m)} \in \mathcal{L}(m \mid a_n = a)} \sqrt{\sum_{n' \in \mathcal{H}(m) \setminus \{n\}} \alpha_{m,n'}^2(a_{n'})}$$

B. Min-Max decoding

• Check node processing:
$$\beta_{m,n}(a) = \min_{(a_{n'})_{n' \in \mathcal{H}(m)} \in \mathcal{L}(m \mid a_n = a)} \left( \max_{n' \in \mathcal{H}(m) \setminus \{n\}} \alpha_{m,n'}(a_{n'}) \right)$$

Theorem 2: Over $GF(2)$, any decoding algorithm using any one of the $p$-norms, with $p \ge 1$, or the infinity norm is equivalent to the Min-Sum decoding. In particular, the Min-Sum, Min-Max, and Euclidean decodings are equivalent.

IV. IMPLEMENTATION OF THE MIN-MAX DECODER

In this section we give details about the implementation of the check node processing within the Min-Max decoder. First, we give a standard implementation using a well-known forward-backward computation technique.
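As a reference point when testing faster implementations, the three check node rules of Section III (the 1-norm, the Euclidean norm and the infinity norm) can be written as one brute-force routine parameterized by $p$. This is a minimal sketch under stated assumptions rather than the paper's implementation: $q$ is assumed prime (arithmetic modulo $q$), and the function names and message values are hypothetical.

```python
from itertools import product
import math

def p_norm(values, p):
    """p-norm of a list of non-negative values; p = math.inf gives the maximum."""
    if p == math.inf:
        return max(values)
    return sum(v ** p for v in values) ** (1.0 / p)

def min_norm_check_node(alpha, h, q, pos, p):
    """Brute-force min-norm check-node update for the neighbour at index `pos`.

    p = 1 gives the Min-Sum* rule, p = 2 the Euclidean rule and
    p = math.inf the Min-Max rule.
    Assumption (not from the paper): q is prime, so GF(q) is the integers mod q.
    """
    d = len(h)
    beta = [math.inf] * q
    for conf in product(range(q), repeat=d):
        if sum(hi * ai for hi, ai in zip(h, conf)) % q != 0:
            continue  # the linear constraint of the check node is violated
        others = [alpha[i][conf[i]] for i in range(d) if i != pos]
        beta[conf[pos]] = min(beta[conf[pos]], p_norm(others, p))
    return beta

# Hypothetical Min-Sum*-style messages: non-negative, zero for the most likely symbol.
alpha = [[0, 0, 0], [0, 1, 5], [0, 1, 5]]
h = [1, 1, 1]
beta_sum = min_norm_check_node(alpha, h, 3, 0, 1)
beta_euc = min_norm_check_node(alpha, h, 3, 0, 2)
beta_max = min_norm_check_node(alpha, h, 3, 0, math.inf)
```

In this example the three rules differ only for the symbol $a = 1$, whose best configuration has two nonzero incoming metrics. The outputs are ordered as the norm inequality predicts: Min-Sum$^\star$ gives the largest value, Min-Max the smallest.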
Next, we show that the computations performed within the standard implementation do not need to use the information concerning all symbols of the Galois field and, consequently, we derive the so-called selective implementation of the Min-Max decoder.

A. Standard implementation of the Min-Max decoder

Let $m$ be a check node and $\mathcal{H}(m) = \{n_1, n_2, \ldots, n_d\}$ be the set of variable nodes connected to $m$ in the Tanner graph. We recursively define forward and backward metrics, $(F_i)_{i=1,\ldots,d-1}$ and $(B_i)_{i=2,\ldots,d}$ respectively, as follows:

Forward metrics
• $F_1(a) = \alpha_{m,n_1}(h_{m,n_1}^{-1} a)$
• $F_i(a) = \min_{\substack{a', a'' \in GF(q) \\ a' + h_{m,n_i} a'' = a}} \max\left(F_{i-1}(a'), \alpha_{m,n_i}(a'')\right)$

Backward metrics
• $B_d(a) = \alpha_{m,n_d}(h_{m,n_d}^{-1} a)$
• $B_i(a) = \min_{\substack{a', a'' \in GF(q) \\ a' + h_{m,n_i} a'' = a}} \max\left(B_{i+1}(a'), \alpha_{m,n_i}(a'')\right)$

Then check node messages can be computed as follows:
• $\beta_{m,n_1}(a) = B_2(a)$ and $\beta_{m,n_d}(a) = F_{d-1}(a)$
• $\beta_{m,n_i}(a) = \min_{\substack{a', a'' \in GF(q) \\ a' + a'' = -h_{m,n_i} a}} \max\left(F_{i-1}(a'), B_{i+1}(a'')\right)$

B. Selective implementation of the Min-Max decoder

In this section we focus on the building blocks of the standard implementation, which are min-max computations of the following type:
$$f(a) = \min_{\substack{a', a'' \in GF(q) \\ h'a' + h''a'' = ha}} \max\left(f'(a'), f''(a'')\right)$$
The computation of $f$ requires $O(q^2)$ comparisons. Our goal is to reduce this complexity by reducing the number of symbols $a'$ and $a''$ involved in the min-max computation. We start with the following proposition.

Proposition 3: Let $\Delta', \Delta'' \subset GF(q)$ be two subsets of the Galois field, such that $\operatorname{card}(\Delta') + \operatorname{card}(\Delta'') \ge q + 1$. Then for any $a \in GF(q)$, there exist $a' \in \Delta'$ and $a'' \in \Delta''$ such that:
$$ha = h'a' + h''a''$$

Corollary 4: Let $\Delta', \Delta'' \subset GF(q)$ be such that the set
$$\{f'(a') \mid a' \in \Delta'\} \cup \{f''(a'') \mid a'' \in \Delta''\}$$
contains the $q + 1$ lowest values of the set
$$\{f'(a') \mid a' \in GF(q)\} \cup \{f''(a'') \mid a'' \in GF(q)\}.$$
Then for any $a \in GF(q)$ the following equality holds:
$$f(a) = \min_{\substack{a' \in \Delta',\, a'' \in \Delta'' \\ h'a' + h''a'' = ha}} \max\left(f'(a'), f''(a'')\right)$$

Example 5: Assume that we have to compute the values of $f$ and the base Galois field is $GF(8)$. We proceed as follows:
• Determine the 9 smallest values among the 16 values of the set $\{f'(0), f'(1), \ldots, f'(7), f''(0), f''(1), \ldots, f''(7)\}$. Let us assume for instance that the 9 smallest values are $\{f'(1), f'(2), f'(3), f'(4), f'(6), f'(7), f''(0), f''(1), f''(5)\}$.
• Set $\Delta' = \{1, 2, 3, 4, 6, 7\}$ and $\Delta'' = \{0, 1, 5\}$, then compute the values of $f$ using only the symbols in $\Delta'$ and $\Delta''$. In this way the computation of the 8 values of $f$ takes only $6 \times 3 = 18$ comparisons instead of 64.

In general, let $q' = \operatorname{card}(\Delta')$ and $q'' = \operatorname{card}(\Delta'')$, where the subsets $\Delta'$ and $\Delta''$ are assumed to satisfy the conditions of the above corollary (then $q' + q'' \ge q + 1$). If $q' + q'' = q + 1$, then the complexity of the min-max computation can be reduced by a factor of roughly four or more, from $q^2$ to $q'q'' \le \frac{q}{2}\left(\frac{q}{2} + 1\right)$.

The main problem we encounter is that we should sort the $2q$ values of $f'$ and $f''$ in order to figure out which symbols participate in the sets $\Delta'$ and $\Delta''$. In order to avoid the sorting process, we generate the subsets:
$$\Delta'_k = \{a' \in GF(q) \mid \lfloor f'(a') \rfloor = k\}$$
$$\Delta''_k = \{a'' \in GF(q) \mid \lfloor f''(a'') \rfloor = k\}$$
where $\lfloor x \rfloor$ denotes the integer part of $x$.
We then define:
$$\Delta' = \Delta'_0 \cup \cdots \cup \Delta'_t \quad \text{and} \quad \Delta'' = \Delta''_0 \cup \cdots \cup \Delta''_t,$$
starting with $t = 0$ and increasing its value until we get $\operatorname{card}(\Delta') + \operatorname{card}(\Delta'') \ge q + 1$. The use of the sets $\Delta'$ and $\Delta''$ has a double benefit:
• it reduces the number of symbols required by (and hence the complexity of) the min-max computation,
• most of the maxima computations become unnecessary. Indeed, $\max(f'(a'), f''(a''))$ is calculated only if symbols $a'$ and $a''$ belong to sets of the same index (i.e. $a' \in \Delta'_{k'}$ and $a'' \in \Delta''_{k''}$ with $k' = k''$). Otherwise, the maximum corresponds to the symbol belonging to the set of larger index.

Fig. 1. Decoding performance of $GF(16)$-LDPC codes, AWGN, 16-QAM, coding rate 1/2, maximum number of decoding iterations = 200. (Plot: bit error rate versus $E_b/N_0$ in dB; code length 2016 bits, i.e. 504 $GF(16)$-symbols; curves: Sum-Product, Euclidean, Min-Max standard, Min-Max selective, Min-Sum.)

Finally, the proposed approach is carried out in practice as follows:
• The received a priori information is normalized such that its average value is equal to a predefined constant $AI$ (for "Average a priori Information"). This constant is chosen such that the integer part provides a sharp criterion to distinguish between the symbols of the Galois field, meaning that the sets $\Delta'_k$ and $\Delta''_k$ contain only a few elements.
• We use a predefined threshold $COT$ (for "Cut Off Threshold") representing the maximum value of variable node messages. Thus, incoming variable node messages greater than or equal to the predefined threshold will not participate in the min-max computations for check node processing. It follows that the rank $k$ of subsets $\Delta'_k$ and $\Delta''_k$ ranges from 0 to $COT - 1$.
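The bucket-based selection of $\Delta'$ and $\Delta''$ described above can be checked numerically against the full min-max computation. The sketch below is illustrative only and makes several assumptions that are not in the paper: $q$ is prime (arithmetic modulo $q$), the input values are non-negative, the $COT$ threshold and the shortcut that skips maxima across different buckets are left out, and all names and coefficients are hypothetical.

```python
import math
import random

def min_max(f1, f2, h1, h2, h, q, d1=None, d2=None):
    """f(a) = min of max(f1[a1], f2[a2]) over a1 in d1, a2 in d2
    with h1*a1 + h2*a2 = h*a in GF(q).

    Assumption (not from the paper): q is prime, so GF(q) is the integers mod q.
    When d1 or d2 is None, the whole field is used (standard computation).
    """
    d1 = range(q) if d1 is None else d1
    d2 = range(q) if d2 is None else d2
    h_inv = pow(h, -1, q)  # modular inverse of h, available since Python 3.8
    f = [math.inf] * q
    for a1 in d1:
        for a2 in d2:
            a = (h_inv * (h1 * a1 + h2 * a2)) % q
            f[a] = min(f[a], max(f1[a1], f2[a2]))
    return f

def select_symbols(f1, f2, q):
    """Union of the integer-part buckets 0..t, with t grown from 0 until
    the two subsets hold at least q + 1 symbols in total."""
    t = 0
    while True:
        d1 = [a for a in range(q) if math.floor(f1[a]) <= t]
        d2 = [a for a in range(q) if math.floor(f2[a]) <= t]
        if len(d1) + len(d2) >= q + 1:
            return d1, d2
        t += 1

random.seed(1)
q = 7
f1 = [random.uniform(0.0, 10.0) for _ in range(q)]
f2 = [random.uniform(0.0, 10.0) for _ in range(q)]
d1, d2 = select_symbols(f1, f2, q)
full = min_max(f1, f2, 2, 3, 5, q)               # all q * q symbol pairs
selective = min_max(f1, f2, 2, 3, 5, q, d1, d2)  # only len(d1) * len(d2) pairs
```

The two results coincide because every symbol left out has a value of at least $t + 1$, while Proposition 3 guarantees that each $a$ is reached by some pair inside the selected subsets, whose maximum is below $t + 1$.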
With this cut-off threshold, the subsets $\Delta'_k$ and $\Delta''_k$ may together contain fewer than $q + 1$ symbols, in which case the min-max complexity may be significantly reduced. In practice, this generally occurs during the last iterations, when decoding is quite advanced and doubts persist only about a reduced number of symbols. The constants $AI$ and $COT$ have to be determined by Monte Carlo simulation and they only depend on the cardinality of the Galois field.

V. SIMULATION RESULTS

We present Monte Carlo simulation results for coding rate 1/2 over the AWGN channel. Fig. 1 presents the performance of $GF(16)$-LDPC codes with the 16-QAM modulation (Galois field symbols are mapped to constellation symbols). We note that the Euclidean and the Min-Max (standard and selective implementations) algorithms achieve nearly the same decoding performance, and the gap to the Sum-Product decoding performance is only 0.2 dB. For the selective implementation we used the constants $AI = 12$ and $COT = 31$.

Fig. 2 presents the decoding complexity in number of operations per decoded bit (all decoding iterations included) of the Min-Sum, the standard and the selective Min-Max decoders over $GF(16)$. The Min-Sum and the standard Min-Max decoders perform nearly the same number of operations per iteration, but the second decoder has better performance and needs a smaller number of iterations to converge. By contrast, the standard and the selective Min-Max decoders have the same performance and they perform the same number of decoding iterations. The selective decoder simply takes a smaller number of operations to perform each decoding iteration, which explains its lower complexity (by a factor of 4 with respect to the standard implementation).

Fig. 2. Decoding complexity of $GF(16)$-LDPC codes, AWGN, 16-QAM, coding rate 1/2, maximum number of decoding iterations = 200. (Plot: average number of operations, in thousands, per decoded bit versus $E_b/N_0$ in dB; curves: Min-Sum, Min-Max standard, Min-Max selective.)

VI. CONCLUSIONS

We have shown that the extrinsic messages exchanged within the Min-Sum decoding of non-binary LDPC codes may be seen as metrics indicating the distance between a given symbol and the most likely one. By using appropriate metrics, we have derived several low-complexity quasi-optimal iterative algorithms for decoding non-binary codes. The Euclidean and the Min-Max algorithms are two of them. Furthermore, we gave a canonical selective implementation of the Min-Max decoding, which reduces the number of operations taken to perform each decoding iteration, without any performance degradation. The quasi-optimal performance together with the low complexity make the Min-Max decoding very attractive for practical purposes.

REFERENCES

[1] R. G. Gallager, Low Density Parity Check Codes, Ph.D. thesis, MIT, Cambridge, Mass., September 1960.
[2] R. G. Gallager, Low Density Parity Check Codes, M.I.T. Press, 1963, Monograph.
[3] N. Wiberg, Codes and decoding on general graphs, Ph.D. thesis, Linköping University, Sweden, 1996.
[4] L. Barnault and D. Declercq, "Fast decoding algorithm for LDPC over GF($2^q$)," in Information Theory Workshop, 2003. Proceedings. 2003 IEEE, 2003, pp. 70–73.
[5] D. Declercq and M. Fossorier, "Extended min-sum algorithm for decoding LDPC codes over GF($q$)," in Information Theory, 2005. ISIT 2005. Proceedings. International Symposium on, 2005, pp. 464–468.
[6] D. Declercq and M. Fossorier, "Decoding algorithms for nonbinary LDPC codes over GF($q$)," Communications, IEEE Transactions on, vol. 55, pp. 633–643, 2007.
[7] M. C. Davey and D. J. C. MacKay, "Low density parity check codes over GF($q$)," Information Theory Workshop, 1998, pp. 70–71, 1998.
[8] X. Y. Hu and E. Eleftheriou, "Cycle Tanner-graph codes over GF($2^b$)," Information Theory, 2003. Proceedings. IEEE International Symposium on.
[9] H. Wymeersch, H. Steendam, and M. Moeneclaey, "Log-domain decoding of LDPC codes over GF($q$)," Communications, 2004 IEEE International Conference on, vol. 2, 2004.

APPENDIX I
ALTERNATIVE REALIZATIONS OF THE MIN-SUM ALGORITHM

In this section we introduce two alternative realizations, called Min-Sum$_0$ and Min-Sum$^\star$, of the Min-Sum algorithm. We prove that they are equivalent to the Min-Sum algorithm (MSA) of Section II-A and that, over $GF(2)$, the Min-Sum$^\star$ is equivalent to the Min-Max algorithm. We assume that the Tanner graph $\mathcal{H}$ is cycle free throughout this section (see footnote 2).

Footnote 2: Nevertheless, this assumption can be removed by replacing $\mathcal{H}$ with computation trees and "codewords" with "pseudo-codewords".

Notation.
• $n \in \mathcal{N} = \{1, 2, \ldots, N\}$, a variable node of $\mathcal{H}$.
• $m \in \mathcal{M} = \{1, 2, \ldots, M\}$, a check node of $\mathcal{H}$.
• For each check node $m \in \mathcal{M}$ and each sequence $(a_n)_{n \in \mathcal{H}(m)}$ of $GF(q)$-symbols indexed by the set of variable nodes connected to $m$, we note:
$$m\left\langle (a_n)_{n \in \mathcal{H}(m)} \right\rangle = \sum_{n \in \mathcal{H}(m)} h_{m,n}\, a_n$$
The sequence $(a_n)_{n \in \mathcal{H}(m)}$ is said to satisfy the check node $m$ if $m\left\langle (a_n)_{n \in \mathcal{H}(m)} \right\rangle = 0$.
Thus:
$$m\left\langle (a_n)_{n \in \mathcal{H}(m)} \right\rangle = 0 \;\Leftrightarrow\; (a_n)_{n \in \mathcal{H}(m)} \in \mathcal{L}(m)$$

The Min-Sum$_0$ decoding performs the following computations:

Initialization
• A priori information:
$$\gamma_n(a) = \ln \frac{\Pr(x_n = 0 \mid \text{channel observation})}{\Pr(x_n = a \mid \text{channel observation})}$$
• Variable to check messages initialization:
$$\alpha_{m,n}(a) = \gamma_n(a)$$

Iterations
• Check to variable messages:
$$\beta_{m,n}(a) = \min_{(a_{n'})_{n' \in \mathcal{H}(m)} \in \mathcal{L}(m \mid a_n = a)} \; \sum_{n' \in \mathcal{H}(m) \setminus \{n\}} \alpha_{m,n'}(a_{n'})$$
• Variable to check messages:
$$\alpha'_{m,n}(a) = \gamma_n(a) + \sum_{m' \in \mathcal{H}(n) \setminus \{m\}} \beta_{m',n}(a)$$
$$\alpha_{m,n}(a) = \alpha'_{m,n}(a) - \alpha'_{m,n}(0)$$
• A posteriori information:
$$\tilde{\gamma}_n(a) = \gamma_n(a) + \sum_{m \in \mathcal{H}(n)} \beta_{m,n}(a)$$

Theorem 6: The Min-Sum$_0$ decoding converges after finitely many iterations to
$$\tilde{\gamma}_n(a) = \min_{\substack{\mathbf{a} = (a_1, \ldots, a_N) \in \mathcal{C} \\ a_n = a}} \sum_{k \in \mathcal{N}} \gamma_k(a_k) \;-\; \min_{\substack{\mathbf{a} = (a_1, \ldots, a_N) \in \mathcal{C} \\ \mathbf{a}|_{V_n^{(1)}} = 0}} \sum_{k \in \mathcal{N}} \gamma_k(a_k)$$
where $V_n^{(1)} := \bigcup_{m \in \mathcal{H}(n)} \mathcal{H}(m)$ is the set of variable nodes separated by at most one check node from $n$ (including $n$).

Proof: The proof is derived in the same manner as that of Theorem 3.1 in [3]. The critical point is that we have to control the effect of subtracting the $\alpha_{m,n}(0)$ message from all the other $\alpha_{m,n}(a)$ messages. Fix a variable node $n$ and let:
$$f(a) = \min_{\substack{\mathbf{a} = (a_1, \ldots, a_N) \in \mathcal{C} \\ a_n = a}} \sum_{k \in \mathcal{N}} \gamma_k(a_k)$$
$$f = \min_{\substack{\mathbf{a} = (a_1, \ldots, a_N) \in \mathcal{C} \\ \mathbf{a}|_{V_n^{(1)}} = 0}} \sum_{k \in \mathcal{N}} \gamma_k(a_k)$$
$$\tilde{f}(a) = f(a) - f$$
We have to prove that, after finitely many iterations, the equality $\tilde{\gamma}_n(a) = \tilde{f}(a)$ holds. Since the Tanner graph $\mathcal{H}$ is cycle free, it can be seen as a tree graph rooted at $n$. Let $\mathcal{H}(n) = \{m_1, m_2, \ldots, m_d\}$ and, for $j = 1, 2, \ldots, d$, let $\mathcal{H}_j$ be the sub-graph of $\mathcal{H}$ emanating from the check node $m_j$, as represented in Fig. 3.

Fig. 3. Graphical representation of sub-graphs $\mathcal{H}_j$. (Diagram: the root variable node $n$ is connected to the check nodes $m_1, m_2, \ldots, m_d$, from which the sub-graphs $\mathcal{H}_1, \mathcal{H}_2, \ldots, \mathcal{H}_d$ emanate.)

Let also $\mathcal{C}_j$ be the linear code corresponding to the sub-graph $\mathcal{H}_j \cup \{n\}$. Since $\gamma_k(0) = 0$ for any $k = 1, \ldots, N$, we have
$$f = \sum_{j=1}^{d} f_j, \quad \text{where} \quad f_j = \min_{\substack{\mathbf{a} \in \mathcal{C}_j \\ \mathbf{a}|_{\mathcal{H}(m_j)} = 0}} \sum_{k \in \mathcal{H}_j} \gamma_k(a_k).$$
Therefore,
$$\begin{aligned}
\tilde{f}(a) &= \min_{\substack{\mathbf{a} = (a_1, \ldots, a_N) \in \mathcal{C} \\ a_n = a}} \sum_{k \in \mathcal{N}} \gamma_k(a_k) - f \\
&= \gamma_n(a) + \sum_{j=1}^{d} \min_{\substack{\mathbf{a} \in \mathcal{C}_j \\ a_n = a}} \sum_{k \in \mathcal{H}_j} \gamma_k(a_k) - \sum_{j=1}^{d} f_j
\end{aligned}$$
Denoting
$$g_{m_j}(a) = \min_{\substack{\mathbf{a} \in \mathcal{C}_j \\ a_n = a}} \sum_{k \in \mathcal{H}_j} \gamma_k(a_k) - f_j$$
we get
$$\tilde{f}(a) = \gamma_n(a) + \sum_{j=1}^{d} g_{m_j}(a)$$
This formula has the same structure as the update rule of the a posteriori information $\tilde{\gamma}_n(a)$. It follows that the equality $\tilde{\gamma}_n(a) = \tilde{f}(a)$ holds provided that $\beta_{m_j,n}(a) = g_{m_j}(a)$. Due to the symmetry of the situation it suffices to carry out the case $j = 1$. For this, let $\mathcal{H}(m_1) = \{n, n_1, n_2, \ldots, n_d\}$ and, for $j = 1, 2, \ldots, d$, let $\mathcal{H}_{1,j}$ be the sub-graph of $\mathcal{H}_1$ emanating from the variable node $n_j$, as represented in Fig. 4.

Fig. 4. Graphical representation of sub-graphs $\mathcal{H}_{1,j}$. (Diagram: the root variable node $n$ is connected to the check nodes $m_1, \ldots, m_d$; within $\mathcal{H}_1$, the check node $m_1$ is connected to the variable nodes $n_1, n_2, \ldots, n_d$, from which the sub-graphs $\mathcal{H}_{1,1}, \mathcal{H}_{1,2}, \ldots, \mathcal{H}_{1,d}$ emanate.)

Let also $\mathcal{C}_{1,j}$ be the linear code corresponding to the sub-graph $\mathcal{H}_{1,j}$ and define
$$t_j(a_j) = \min_{\substack{\mathbf{a} \in \mathcal{C}_{1,j} \\ a_{n_j} = a_j}} \sum_{k \in \mathcal{H}_{1,j}} \gamma_k(a_k).$$
Then:
$$\min_{\substack{\mathbf{a} \in \mathcal{C}_1 \\ a_n = a}} \left( \sum_{k \in \mathcal{H}_1} \gamma_k(a_k) \right) = \min_{\substack{(a_1, \ldots, a_d) \in GF(q)^d \\ m_1\langle a, a_1, \ldots, a_d \rangle = 0}} \sum_{j=1}^{d} t_j(a_j)$$
and
$$f_1 = \min_{\substack{\mathbf{a} \in \mathcal{C}_1 \\ \mathbf{a}|_{\mathcal{H}(m_1)} = 0}} \sum_{k \in \mathcal{H}_1} \gamma_k(a_k) = \sum_{j=1}^{d} \min_{\substack{\mathbf{a} \in \mathcal{C}_{1,j} \\ a_{n_j} = 0}} \sum_{k \in \mathcal{H}_{1,j}} \gamma_k(a_k) = \sum_{j=1}^{d} t_j(0)$$
Therefore:
$$\begin{aligned}
g_{m_1}(a) &= \min_{\substack{(a_1, \ldots, a_d) \in GF(q)^d \\ m_1\langle a, a_1, \ldots, a_d \rangle = 0}} \sum_{j=1}^{d} t_j(a_j) - \sum_{j=1}^{d} t_j(0) \\
&= \min_{\substack{(a_1, \ldots, a_d) \in GF(q)^d \\ m_1\langle a, a_1, \ldots, a_d \rangle = 0}} \sum_{j=1}^{d} \left( t_j(a_j) - t_j(0) \right)
\end{aligned}$$
Defining
$$t = \min_{\substack{\mathbf{a} \in \mathcal{C}_{1,j} \\ \mathbf{a}|_{V_n^{(1)}} = 0}} \sum_{k \in \mathcal{H}_{1,j}} \gamma_k(a_k),$$
$$f'_{n_j}(a_j) = \min_{\substack{\mathbf{a} \in \mathcal{C}_{1,j} \\ a_{n_j} = a_j}} \sum_{k \in \mathcal{H}_{1,j}} \gamma_k(a_k) - \min_{\substack{\mathbf{a} \in \mathcal{C}_{1,j} \\ \mathbf{a}|_{V_n^{(1)}} = 0}} \sum_{k \in \mathcal{H}_{1,j}} \gamma_k(a_k) = t_j(a_j) - t$$
and
$$f_{n_j}(a_j) = f'_{n_j}(a_j) - f'_{n_j}(0) = t_j(a_j) - t_j(0)$$
we get
$$g_{m_1}(a) = \min_{\substack{(a_1, \ldots, a_d) \in GF(q)^d \\ m_1\langle a, a_1, \ldots, a_d \rangle = 0}} \sum_{j=1}^{d} f_{n_j}(a_j)$$
This formula has the same structure as the update rule of the check to variable messages $\beta_{m_1,n}(a)$. It follows that the equality $\beta_{m_1,n}(a) = g_{m_1}(a)$ holds, provided that $\alpha_{m_1,n_j}(a) = f_{n_j}(a)$. This derives from the fact that $f_{n_j}(a)$ verifies the same update rule as $\alpha_{m_1,n_j}(a)$ or, equivalently, $f'_{n_j}(a)$ verifies the same update rule as $\alpha'_{m_1,n_j}(a)$. The proof proceeds in the same manner as for $\tilde{f}(a)$ and is therefore omitted. This process is repeated recursively until the leaf variable nodes are reached. Finally, we remark that if $n_j$ were a leaf variable node, then $f_{n_j}(a_j) = \gamma_{n_j}(a_j) = \alpha_{m_1,n_j}(a_j)$, and so we are done.

The Min-Sum$^\star$ decoding performs the following computations:

Initialization
• A priori information:
$$\gamma_n(a) = \ln \frac{\Pr(x_n = s_n \mid \text{channel observation})}{\Pr(x_n = a \mid \text{channel observation})}$$
where $s_n$ is the most likely symbol for $x_n$.
• Variable to check messages initialization

$$\alpha_{m,n}(a) = \gamma_n(a)$$

Iterations

• Check to variable messages

$$\beta_{m,n}(a) = \min_{(a_{n'})_{n' \in \mathcal{H}(m)} \in \mathcal{L}(m \mid a_n = a)} \sum_{n' \in \mathcal{H}(m) \setminus \{n\}} \alpha_{m,n'}(a_{n'})$$

• Variable to check messages

$$\alpha'_{m,n}(a) = \gamma_n(a) + \sum_{m' \in \mathcal{H}(n) \setminus \{m\}} \beta_{m',n}(a)$$

$$\alpha'_{m,n} = \min_{a \in GF(q)} \alpha'_{m,n}(a)$$

$$\alpha_{m,n}(a) = \alpha'_{m,n}(a) - \alpha'_{m,n}$$

• A posteriori information

$$\tilde{\gamma}_n(a) = \gamma_n(a) + \sum_{m \in \mathcal{H}(n)} \beta_{m,n}(a)$$

Fix a variable node $n$, let $\mathcal{H}^{\star} = \mathcal{H} \setminus (\{n\} \cup \mathcal{H}(n))$ and let $\mathcal{C}^{\star}$ be the linear code associated with $\mathcal{H}^{\star}$ (when a node of $\mathcal{H}$ is removed we also remove all the edges that are incident to the node). With this notation we have the following theorem.

Theorem 7: The Min-Sum$^{\star}$ decoding converges after finitely many iterations to

$$\tilde{\gamma}_n(a) = \min_{\substack{\mathbf{a} = (a_k)_{k \in \mathcal{N}} \\ \mathbf{a} \in \mathcal{C} \,:\, a_n = a}} \sum_{k \in \mathcal{N}} \gamma_k(a_k) - \min_{\substack{\mathbf{a} = (a_k)_{k \in \mathcal{H}^{\star}} \\ \mathbf{a} \in \mathcal{C}^{\star}}} \sum_{k \in \mathcal{H}^{\star}} \gamma_k(a_k)$$

The proof can be derived in the same manner as that of the above theorem, and is therefore omitted. We also note that the convergence formula of the MSA decoder is (see Theorem 3.1 in [3]):

$$\tilde{\gamma}_n(a) = \min_{\substack{\mathbf{a} = (a_k)_{k \in \mathcal{N}} \\ \mathbf{a} \in \mathcal{C} \,:\, a_n = a}} \sum_{k \in \mathcal{N}} \gamma_k(a_k)$$

For each of the Min-Sum$_0$ and Min-Sum$^{\star}$ decoders, the convergence formula is obtained by subtracting from the right-hand side$^{3}$ of the above formula a term that is independent of $a$. Note also that similar formulas hold after any fixed number of iterations: to see this, it suffices to cut off the tree graph $\mathcal{H}$ at the appropriate depth. Therefore, we get the following:

$^{3}$ Note also that the a priori informations $\gamma_n(a)$ are not computed in the same way for the three decoders, but they only differ by a term independent of $a$. Thus, for the MSA decoder $\gamma_n(a) = -\ln(\Pr(x_n = a))$, for the Min-Sum$_0$ decoder $\gamma_n(a) = -\ln(\Pr(x_n = a)) + \ln(\Pr(x_n = 0))$, and for the Min-Sum$^{\star}$ decoder $\gamma_n(a) = -\ln(\Pr(x_n = a)) + \ln(\Pr(x_n = s_n))$, all probabilities being conditioned on the channel observation.

Corollary 8: The MSA, Min-Sum$_0$ and Min-Sum$^{\star}$ decoders are equivalent.

We now prove that the MSA and the MMA decoders are equivalent over $GF(2)$ (in a similar manner it can be proved that over $GF(2)$ the MSA decoder is equivalent to any other Min-$\|\cdot\|_p$ decoder).

Proof: Since the MSA and Min-Sum$^{\star}$ decoders are equivalent, it suffices to prove that the Min-Sum$^{\star}$ and MMA decoders are equivalent over $GF(2)$. In this case, the variable to check messages $\alpha_{m,n}(a)$ are non-negative and they concern only two symbols $a \in \{0, 1\}$. Moreover, for one of these symbols the corresponding variable to check message is zero (precisely, for the symbol realizing the minimum of $\{\alpha'_{m,n}(a),\ a = 0, 1\}$). Fix a check node $m$, a variable node $n$ and a symbol $a \in GF(2)$, and let $(\bar{a}_{n'})_{n' \in \mathcal{H}(m) \setminus \{n\}}$, such that $m\langle (\bar{a}_{n'})_{n'}, a \rangle = 0$, be the sequence realizing the minimum:

$$\min_{(a_{n'})_{n' \in \mathcal{H}(m)} \in \mathcal{L}(m \mid a_n = a)} \sum_{n' \in \mathcal{H}(m) \setminus \{n\}} \alpha_{m,n'}(a_{n'})$$

Then, for the Min-Sum$^{\star}$ decoder,

$$\beta_{m,n}(a) = \sum_{n' \in \mathcal{H}(m) \setminus \{n\}} \alpha_{m,n'}(\bar{a}_{n'})$$

Suppose that there are two symbols, say $\bar{a}_{n'_1}$ and $\bar{a}_{n'_2}$, of the sequence $(\bar{a}_{n'})_{n' \in \mathcal{H}(m) \setminus \{n\}}$, whose corresponding variable to check messages are not zero, i.e. $\alpha_{m,n'_i}(\bar{a}_{n'_i}) > 0$, $i = 1, 2$. Then, consider a new sequence $(\bar{\bar{a}}_{n'})_{n' \in \mathcal{H}(m) \setminus \{n\}}$, such that $\bar{\bar{a}}_{n'} = \bar{a}_{n'}$ if $n' \neq n'_i$ and $\bar{\bar{a}}_{n'_i} = \bar{a}_{n'_i} + 1 \bmod 2$. This sequence still satisfies the check $m$, i.e. $m\langle (\bar{\bar{a}}_{n'})_{n'}, a \rangle = 0$, and

$$\sum_{n' \in \mathcal{H}(m) \setminus \{n\}} \alpha_{m,n'}(\bar{\bar{a}}_{n'}) < \sum_{n' \in \mathcal{H}(m) \setminus \{n\}} \alpha_{m,n'}(\bar{a}_{n'})$$

as $\alpha_{m,n'_i}(\bar{\bar{a}}_{n'_i}) = 0 < \alpha_{m,n'_i}(\bar{a}_{n'_i})$, which contradicts the minimality of the sequence $(\bar{a}_{n'})_{n' \in \mathcal{H}(m) \setminus \{n\}}$.
It follows that there is at most one symbol $\bar{a}_{n'}$ whose corresponding variable to check message satisfies $\alpha_{m,n'}(\bar{a}_{n'}) \neq 0$. Consequently, the sequence $(\bar{a}_{n'})_{n' \in \mathcal{H}(m) \setminus \{n\}}$ also realizes the minimum

$$\min_{(a_{n'})_{n' \in \mathcal{H}(m)} \in \mathcal{L}(m \mid a_n = a)} \ \max_{n' \in \mathcal{H}(m) \setminus \{n\}} \alpha_{m,n'}(a_{n'})$$

so the update rules for check to variable messages compute the same messages for both decoders.

APPENDIX II
PROOF OF PROPOSITION 3

Proof: Let $a \in GF(q)$. Consider the functions $\varphi, \psi : GF(q) \to GF(q)$, defined by $\varphi(x) = ha + h'x$ and $\psi(x) = h''x$. Since $\varphi$ and $\psi$ are injective, we have:

$$\mathrm{card}(\varphi(\Delta')) + \mathrm{card}(\psi(\Delta'')) = \mathrm{card}(\Delta') + \mathrm{card}(\Delta'') \geq q + 1$$

It follows that $\varphi(\Delta') \cap \psi(\Delta'') \neq \emptyset$, so there are $a' \in \Delta'$ and $a'' \in \Delta''$ such that

$$\varphi(a') = \psi(a'') \Leftrightarrow ha + h'a' = h''a'' \Leftrightarrow a = h^{-1}(h'a' + h''a'')$$
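
The counting argument in this proof can be checked by brute force on a small field. The sketch below is only an illustration under stated assumptions: it takes $q$ prime so that $GF(q)$ arithmetic reduces to integers modulo $q$, and the names `find_witness`, `check_all` and `inverse_mod` are hypothetical helpers, not part of the paper. Because the field here has odd characteristic, the witness is verified against $ha + h'a' = h''a''$ and $a$ is recovered as $h^{-1}(h''a'' - h'a')$; in characteristic 2 subtraction coincides with addition, which gives the form written at the end of the proof.

```python
from itertools import combinations


def inverse_mod(x, q):
    """Multiplicative inverse of x modulo a prime q (Fermat's little theorem)."""
    return pow(x, q - 2, q)


def find_witness(a, h, h1, h2, delta1, delta2, q):
    """Return (a1, a2) with a1 in delta1, a2 in delta2 and
    h*a + h1*a1 == h2*a2 (mod q), or None if no such pair exists.
    Assumes q prime and h, h1, h2 non-zero modulo q."""
    # Images of delta1 under phi(x) = h*a + h1*x, mapped back to their preimage.
    phi = {(h * a + h1 * x) % q: x for x in delta1}
    # Images of delta2 under psi(x) = h2*x, mapped back to their preimage.
    psi = {(h2 * x) % q: x for x in delta2}
    common = phi.keys() & psi.keys()
    if not common:
        return None
    y = min(common)  # any common image would do; min makes the choice deterministic
    return phi[y], psi[y]


def check_all(q, h, h1, h2):
    """Exhaustively test every pair of subsets whose sizes sum to exactly q + 1
    (larger subsets contain such a pair, so they need not be tested)."""
    field = range(q)
    h_inv = inverse_mod(h, q)
    for size1 in range(1, q + 1):
        size2 = q + 1 - size1
        for delta1 in combinations(field, size1):
            for delta2 in combinations(field, size2):
                for a in field:
                    witness = find_witness(a, h, h1, h2, delta1, delta2, q)
                    if witness is None:
                        return False
                    a1, a2 = witness
                    # Recover a from the witness (odd characteristic: minus sign).
                    if a != h_inv * (h2 * a2 - h1 * a1) % q:
                        return False
    return True


if __name__ == "__main__":
    print(check_all(5, 2, 3, 4))
    # With card(delta1) + card(delta2) = q the intersection can be empty.
    print(find_witness(0, 1, 1, 1, (0,), (1, 2, 3, 4), 5))
```

The second call illustrates why the hypothesis needs $q + 1$ rather than $q$: with subset sizes summing to $q$, the two image sets can partition the field and share no element.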
