Self-Corrected Min-Sum decoding of LDPC codes


Authors: Valentin Savin

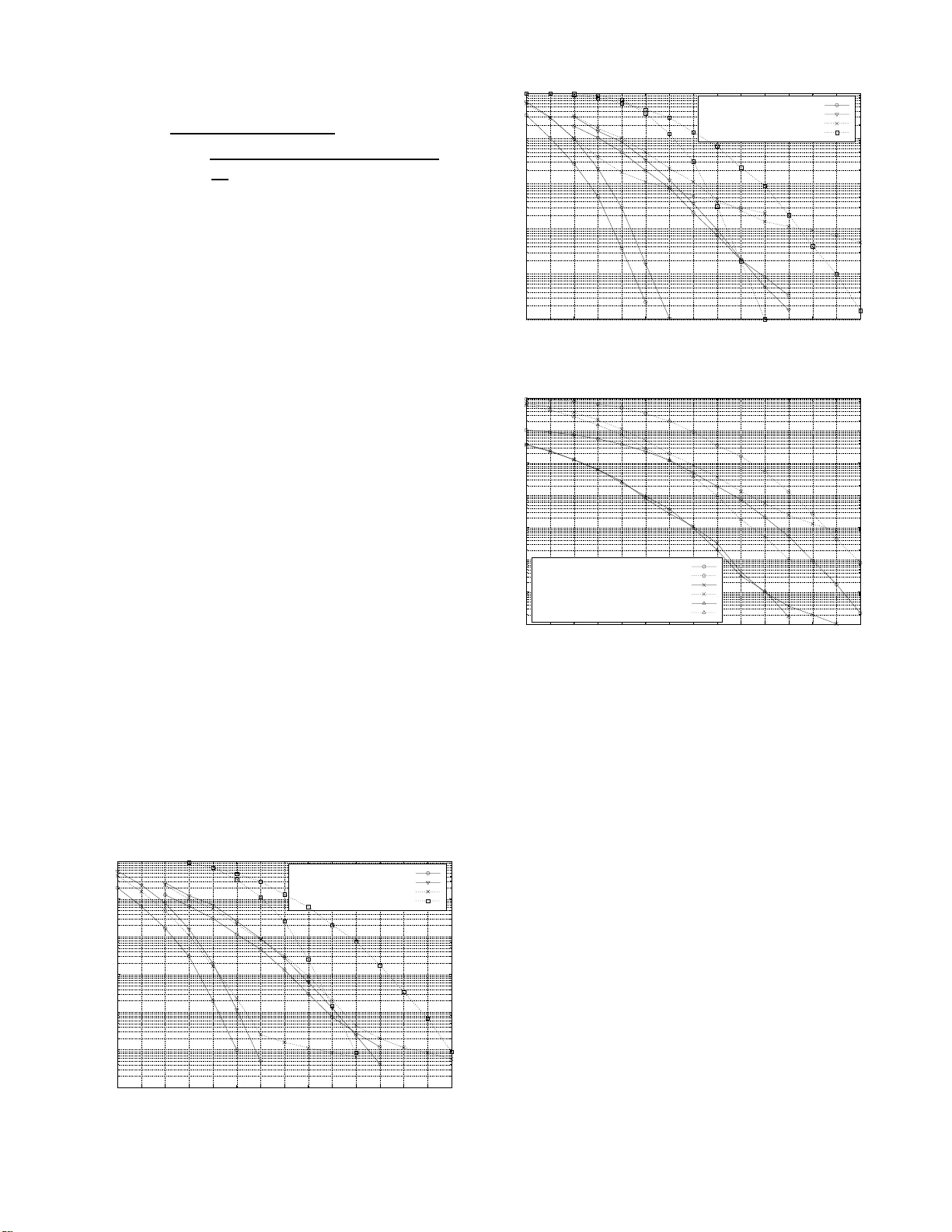

Valentin Savin, CEA-LETI, MINATEC, Grenoble, France, valentin.savin@cea.fr

Abstract: In this paper we propose a very simple but powerful self-correction method for the Min-Sum decoding of LDPC codes. Unlike other correction methods known in the literature, our method does not try to correct the check node processing approximation, but it modifies the variable node processing by erasing unreliable messages. This, in turn, positively affects check node messages, which become symmetric Gaussian distributed, and we show that this is sufficient to ensure quasi-optimal decoding performance. Monte-Carlo simulations show that the proposed Self-Corrected Min-Sum decoding performs very close to the Sum-Product decoding, while preserving the main features of the Min-Sum decoding, namely low complexity and independence from noise variance estimation errors.

Index Terms: LDPC codes, graph codes, Min-Sum decoding.

I. INTRODUCTION

Low density parity check (LDPC) codes can be iteratively decoded using message-passing type algorithms that may be classified as optimal, sub-optimal or quasi-optimal. The optimal iterative decoding is performed by the Sum-Product algorithm [6] at the price of an increased complexity, computation instability, and dependence on thermal noise estimation errors. The Min-Sum algorithm [6] performs a sub-optimal iterative decoding, less complex than the Sum-Product decoding, and independent of thermal noise estimation errors. The sub-optimality of the Min-Sum decoding comes from the overestimation of check-node messages, which leads to a performance loss with respect to the Sum-Product decoding.
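The overestimation mentioned above can already be seen on a single check node. The sketch below compares the exact Sum-Product check node rule, written in its usual tanh form, with the Min-Sum rule; the function names are illustrative and not taken from the paper. For the same incoming LLRs both rules return the same sign, but the Min-Sum magnitude is never smaller than the Sum-Product one.

```python
import math


def cn_sum_product(inputs):
    """Exact (Sum-Product) check node update: 2 * atanh(prod(tanh(a / 2)))."""
    p = 1.0
    for a in inputs:
        p *= math.tanh(a / 2.0)
    # Clamp so that atanh stays finite when every input is extremely reliable.
    p = max(-1.0 + 1e-15, min(1.0 - 1e-15, p))
    return 2.0 * math.atanh(p)


def cn_min_sum(inputs):
    """Min-Sum check node update: product of signs times the minimum magnitude."""
    sign = 1.0
    for a in inputs:
        if a < 0:
            sign = -sign
    return sign * min(abs(a) for a in inputs)


incoming = [1.0, 2.0, 3.0]  # LLRs from the other neighbors of the check node
print(round(cn_sum_product(incoming), 4))  # 0.6601
print(cn_min_sum(incoming))                # 1.0
```

Here Min-Sum reports a reliability of 1.0 where the exact rule gives about 0.66, which is the amplitude excess that normalization or offset corrections try to remove.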
However, as we will highlight in this paper, it is not the overestimation by itself that causes the Min-Sum sub-optimality, but rather the loss of the symmetric Gaussian distribution of check-node messages. Several correction methods have been proposed in the literature in order to recover the performance loss of the Min-Sum decoding with respect to the Sum-Product decoding. We call such algorithms quasi-optimal. Ideally, a quasi-optimal algorithm should perform very close to the Sum-Product decoding, while preserving the main features of the Min-Sum decoding, namely low complexity and independence from noise variance estimation errors. The most popular correction methods are the Normalized Min-Sum and the Offset Min-Sum algorithms [1]. These algorithms share the idea that the performance loss can be recovered by reducing the amplitudes of check-node messages, which is desirable since these messages are actually overestimated within the Min-Sum decoding.

Part of this work was performed in the frame of the ICT NEWCOM++ project, which is a partly EU-funded Network of Excellence of the 7th European Framework Program.

In [5] we showed that the performance loss of the Min-Sum decoding can be recovered by "cleaning" the computation trees associated with the decoding process. While this was the first example of a self-correction method for the Min-Sum decoding, its drawback is that it requires a different processing order of nodes at each iteration, which may be hard to implement in hardware. The starting point of this paper was to figure out how to benefit from the idea of "cleaning" computation trees without requiring any particular processing order of nodes. The proposed Self-Corrected Min-Sum decoding detects unreliable information simply by the sign fluctuation of variable node messages.
Precisely, any variable node message changing its sign between two consecutive iterations is erased, meaning that any such fluctuating message is set to zero. The Self-Corrected Min-Sum decoding will be analyzed from two different but complementary points of view, which can be summarized as follows:
• Erasing fluctuating messages may be seen as cleaning the computation trees associated with the decoding process of some unreliable information. We will show that the Self-Corrected Min-Sum decoding behaves as the Min-Sum decoding applied on a sub-tree of unerased messages.
• We also show that the check node messages exchanged within the Self-Corrected Min-Sum decoding have a symmetric Gaussian distribution, and that this is sufficient to ensure quasi-optimal decoding performance.

The paper is organized as follows. In the next section we introduce and explain the Self-Corrected Min-Sum algorithm. In Section III we study the computation trees associated with the decoding process, and describe the behavior of the Self-Corrected Min-Sum decoding on graphs with cycles. In Section IV we show that check node messages have a symmetric Gaussian distribution and we discuss the analysis of the Self-Corrected Min-Sum decoding by Gaussian approximation. Finally, Section V presents simulation results and Section VI concludes this paper.

The following notations concern bipartite graphs and message-passing algorithms running on these graphs, and will be used throughout the paper:
• $\mathcal{H}$, the Tanner graph of an LDPC code, comprising $N$ variable nodes and $M$ check nodes,
• $n \in \{1, 2, \ldots, N\}$, a variable node of $\mathcal{H}$,
• $m \in \{1, 2, \ldots, M\}$, a check node of $\mathcal{H}$,
• $\mathcal{H}(n)$, the set of neighbor check nodes of the variable node $n$,
• $\mathcal{H}(m)$, the set of neighbor variable nodes of the check node $m$,
• $\gamma_n$, the a priori information (LLR) of the variable node $n$,
• $\tilde{\gamma}_n$, the a posteriori information (LLR) of the variable node $n$,
• $\alpha_{m,n}$, the variable-to-check message from $n$ to $m$,
• $\beta_{m,n}$, the check-to-variable message from $m$ to $n$.

II. SELF-CORRECTED MIN-SUM DECODING

The Self-Corrected Min-Sum decoding performs the same initialization step, check node processing, and a posteriori information update as the classical Min-Sum decoding. Only the variable node processing is modified, as shown below.

Initialization
• A priori information: $\gamma_n = \ln\big(\Pr(x_n = 0 \mid \text{channel}) / \Pr(x_n = 1 \mid \text{channel})\big)$
• Variable-to-check messages initialization: $\alpha_{m,n} = \gamma_n$

Iterations
• Check node processing: $\beta_{m,n} = \Big(\prod_{n' \in \mathcal{H}(m)\setminus\{n\}} \operatorname{sgn}(\alpha_{m,n'})\Big) \cdot \min_{n' \in \mathcal{H}(m)\setminus\{n\}} |\alpha_{m,n'}|$
• A posteriori information: $\tilde{\gamma}_n = \gamma_n + \sum_{m \in \mathcal{H}(n)} \beta_{m,n}$
• Variable node processing:
    $\alpha^{\mathrm{tmp}}_{m,n} = \tilde{\gamma}_n - \beta_{m,n}$
    if $\operatorname{sgn}(\alpha^{\mathrm{tmp}}_{m,n}) = \operatorname{sgn}(\alpha_{m,n})$ then $\alpha_{m,n} = \alpha^{\mathrm{tmp}}_{m,n}$
    else $\alpha_{m,n} = 0$

The variable node processing is explained below:
• First, we compute the new extrinsic LLR for the current iteration, $\alpha^{\mathrm{tmp}}_{m,n} = \tilde{\gamma}_n - \beta_{m,n}$. However, unlike the classical Min-Sum decoding, this value is stored as an intermediary (temporary) value. We note that at this moment the message $\alpha_{m,n}$ still contains the value of the previous iteration.
• Next, we compare the signs of the temporary value $\alpha^{\mathrm{tmp}}_{m,n}$ (the extrinsic LLR computed at the current iteration) and of the message $\alpha_{m,n}$ that was sent by the variable node $n$ to the check node $m$ at the previous iteration. If the two signs are equal, we update the variable node message by $\alpha_{m,n} = \alpha^{\mathrm{tmp}}_{m,n}$ and send this value to the check node $m$.
• On the other hand, if the two signs are different, the variable node $n$ sends an erasure to the check node $m$, meaning that the variable node message $\alpha_{m,n}$ is set to zero.

It should be noted that we consider the zero message to have both negative and positive signs. In other words, whenever the old message $\alpha_{m,n} = 0$, we update the new variable node message by $\alpha_{m,n} = \alpha^{\mathrm{tmp}}_{m,n}$.

Fig. 1 presents the percentage of sign changes at each decoding iteration. As expected, this percentage tends to zero in case of successful decoding. The dotted curves, corresponding to successful decoding, indicate that the Self-Corrected Min-Sum decoder converges faster than the Min-Sum decoder. In case of unsuccessful decoding, it can be observed that the percentage of sign changes tends very quickly to a positive value. At the first iteration both the Min-Sum and the Self-Corrected Min-Sum decodings present the same percentage of sign changes, since in both cases variable-to-check messages are initialized from the same a priori information. Nonetheless, they behave in a completely different way during the first iterations: the percentage of sign changes increases in case of Min-Sum decoding, while it decreases within the Self-Corrected Min-Sum decoding. Thus, one consequence of the self-correction method is to control sign fluctuations when they occur, which helps the decoder enter a convergence mode.

Fig. 1. Percentage of sign changes per iteration (iterations 0 to 200) for the Min-Sum and Self-Corrected Min-Sum decoders, for successful and unsuccessful decoding: AWGN, coding rate 1/2, $E_b/N_0 = 1$ dB, maximum iteration number = 200.

III. COMPUTATION TREES

Computation trees proved to be very useful in understanding the behavior of message-passing decoders running on graphs with cycles [6].
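The update rules of Section II, whose behavior this section analyzes through computation trees, can be collected into a small decoder to experiment with. The sketch below is an illustration under our own assumptions, not the author's implementation: the parity-check matrix is given as a list of rows (row $m$ lists $\mathcal{H}(m)$), every check node has degree at least 2, and decoding stops as soon as the hard decisions on $\tilde{\gamma}_n$ satisfy all parity checks, a stopping rule the paper does not specify. The names `scms_decode`, `vn_update` and `cn_update` are hypothetical. Setting `self_correct=False` gives the classical Min-Sum decoder for comparison.

```python
def cn_update(inputs):
    """Min-Sum check node rule: product of signs times the minimum magnitude."""
    sign = 1.0
    for a in inputs:
        if a < 0:
            sign = -sign
    return sign * min(abs(a) for a in inputs)


def vn_update(old, tmp):
    """Self-corrected variable node rule.

    Keep the new extrinsic value tmp if its sign agrees with the previous
    message old, otherwise send an erasure (0). A zero message counts as
    having both signs, so it never triggers an erasure.
    """
    if old == 0.0 or tmp == 0.0 or (old > 0) == (tmp > 0):
        return tmp
    return 0.0


def scms_decode(rows, gamma, max_iter=50, self_correct=True):
    """Decode a priori LLRs gamma on the Tanner graph described by rows.

    Returns (hard_decisions, iterations_used).
    """
    # Initialization: alpha_{m,n} = gamma_n on every edge (m, n).
    alpha = {(m, n): gamma[n] for m, row in enumerate(rows) for n in row}
    bits = [1 if g < 0 else 0 for g in gamma]
    for it in range(1, max_iter + 1):
        # Check node processing (identical to Min-Sum).
        beta = {}
        for m, row in enumerate(rows):
            for n in row:
                beta[(m, n)] = cn_update([alpha[(m, k)] for k in row if k != n])
        # A posteriori information.
        post = list(gamma)
        for (m, n), b in beta.items():
            post[n] += b
        # Variable node processing: the only step that differs from Min-Sum.
        for (m, n), b in beta.items():
            tmp = post[n] - b
            alpha[(m, n)] = vn_update(alpha[(m, n)], tmp) if self_correct else tmp
        # Hard decisions and the assumed syndrome-based stopping rule.
        bits = [1 if p < 0 else 0 for p in post]
        if all(sum(bits[n] for n in row) % 2 == 0 for row in rows):
            return bits, it
    return bits, max_iter


# Hamming (7,4) parity checks, all-zero codeword, one unreliable bit.
rows = [[0, 1, 2, 4], [0, 1, 3, 5], [0, 2, 3, 6]]
gamma = [2.0, 2.0, -0.5, 2.0, 2.0, 2.0, 2.0]
print(scms_decode(rows, gamma))  # ([0, 0, 0, 0, 0, 0, 0], 1)
```

In this toy run the message from variable node 2 to the first check changes sign during the first variable node update and is therefore erased, although the hard decisions are already correct after one iteration.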
Consider some information iteratively computed at some variable node $n$. By examining the updates that have occurred, one may recursively trace back through time the computation of this information. This trace-back forms a tree graph rooted at $n$ and consisting of interconnected variable and check nodes in the same way as in the original graph, but the same variable or check nodes may appear at several places in the tree. We denote by $\Gamma^{(l)}_n$ and $A^{(l)}_{m,n}$ the computation trees of $\tilde{\gamma}_n$ and $\alpha_{m,n}$ at the $l$-th iteration. Both trees are rooted at $n$; the difference is that $\Gamma^{(l)}_n$ does contain a copy of $m$ as a child node of $n$, while $A^{(l)}_{m,n}$ does not. Moreover, we note that these computation trees do not depend on the decoding algorithm, but only on the Tanner graph and the iteration number.

Let $T$ be an arbitrary bipartite tree of depth $L$ (see footnote 1), whose root and leaf nodes are all variable nodes. If Dec is a message-passing decoder running on $T$, we denote by Dec($T$) the a posteriori information of the root node of $T$ at the $L$-th iteration.

Example 1: Let MS and SCMS denote the Min-Sum and Self-Corrected Min-Sum decoders, respectively. Then MS($A^{(l)}_{m,n}$) and SCMS($A^{(l)}_{m,n}$) are equal to the variable node messages $\alpha_{m,n}$ computed by the Min-Sum and Self-Corrected Min-Sum algorithms, respectively, at the $l$-th iteration.

Proposition 2: For any nodes $n, m$ and any iteration $l$, there exists a sub-tree $T^{(l)}_{m,n} \subset A^{(l)}_{m,n}$ containing $n$ such that:
$$\mathrm{SCMS}\big(A^{(l)}_{m,n}\big) = \mathrm{MS}\big(T^{(l)}_{m,n}\big)$$

Footnote 1: The depth of a variable node is defined recursively from root to leaf nodes: the root node is of depth zero and the depth of any other variable node is one plus the depth of its grandparent variable node. The depth of the tree is the maximum depth of its variable nodes.
The same holds for the a posteriori information tree $\Gamma_n$. Indeed, assume that $\mathcal{H}(n) = \{m, m_1, \ldots, m_i\}$, and let $A^{(l)}_{m,n}$ be the computation tree represented in Fig. 2. If none of the messages from the variable nodes $n_1, \ldots, n_j$ to the parent check node $m_1$ has been erased at iteration $l-1$, then the processing of $n_1, \ldots, n_j$ and $m_1$ corresponds to classical Min-Sum updates. On the contrary, assume that at iteration $l-1$ the message sent by the variable node $n_1$ to its parent check node $m_1$ has been set to zero. Then, at iteration $l$, the message sent by the check node $m_1$ to its parent variable node $n$ is also equal to zero, since the Min-Sum check node rule outputs the minimum of the incoming magnitudes. Therefore, we can omit this message when processing the variable node $n$, or equivalently, the sub-tree $H_1$ corresponding to the check node $m_1$ can be discarded. The proposition is proved by moving down the tree and applying the above arguments recursively.

Fig. 2. Computation tree (part of): the root variable node $n$ has child check nodes $m_1, m_2, \ldots, m_i$; the check node $m_1$, with sub-tree $H_1$, has child variable nodes $n_1, n_2, \ldots, n_j$. The message from $n_1$ to $m_1$ is erased ($\alpha = 0$), hence the message from $m_1$ to $n$ is also zero ($\beta = 0$).

From a more intuitive perspective, the above proposition states that the Self-Corrected Min-Sum decoding behaves as the Min-Sum decoding applied on the sub-tree of unerased messages.

IV. ANALYSIS OF THE SELF-CORRECTED MIN-SUM DECODING USING GAUSSIAN APPROXIMATION

Throughout this section we restrict ourselves to the AWGN channel with noise variance $\sigma^2$, and we consider the BPSK modulation mapping bit $0 \mapsto +1$ and bit $1 \mapsto -1$. Without loss of generality, we further assume that the all-zero codeword is sent over the channel.
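Under these assumptions the a priori information of Section II has the familiar closed form $\gamma_n = 2y_n/\sigma^2$, where $y_n$ is the received sample. The sketch below, built around a hypothetical helper `bpsk_awgn_llrs`, draws such LLRs for the all-zero codeword and checks numerically that they have mean $2/\sigma^2$, variance $4/\sigma^2$ (twice the mean, i.e. a symmetric Gaussian), and error probability $\Pr(\gamma_n \le 0) = Q(1/\sigma)$. The value $\sigma = 0.8$ is an arbitrary illustrative choice.

```python
import math
import random


def q_func(x):
    """Gaussian tail probability Q(x) = Pr(N(0, 1) > x)."""
    return 0.5 * math.erfc(x / math.sqrt(2.0))


def bpsk_awgn_llrs(num, sigma, rng):
    """A priori LLRs gamma_n = 2 y_n / sigma^2 for the all-zero codeword.

    Bit 0 is mapped to +1, so each received sample is y_n = 1 + noise.
    """
    return [2.0 * (1.0 + rng.gauss(0.0, sigma)) / sigma ** 2 for _ in range(num)]


sigma = 0.8
llrs = bpsk_awgn_llrs(100_000, sigma, random.Random(1))
mean = sum(llrs) / len(llrs)
var = sum((g - mean) ** 2 for g in llrs) / (len(llrs) - 1)
p_err = sum(1 for g in llrs if g <= 0) / len(llrs)
print(f"mean  {mean:.3f} vs 2/sigma^2  = {2 / sigma ** 2:.3f}")
print(f"var   {var:.3f} vs 2*mean     = {2 * mean:.3f}")
print(f"P_e^0 {p_err:.4f} vs Q(1/sigma) = {q_func(1 / sigma):.4f}")
```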
At the receiver side, a message-passing algorithm is used to decode the received signal. Let $P^l_e$ be the decoding error probability at iteration $l$. Then $P^l_e$ is simply the average probability that variable node messages are non-positive:
$$P^l_e = \Pr\big(\alpha^l_{m,n} \le 0\big)$$
where the superscript $l$ is used (and will be used from now on) to denote messages sent at the $l$-th iteration. We note that the probability $\Pr(\alpha^l_{m,n} = 0)$ is zero for the Sum-Product or the Min-Sum decoding, but this is no longer the case for the Self-Corrected Min-Sum decoding. The Gaussian approximation is an approach to track the error probability of a message-passing decoder, based on Gaussian densities. The following conditions are needed for the analysis by Gaussian approximation:

(GD) Gaussian distribution condition: messages received at every node at every iteration are independent and identically distributed (i.i.d.), with a symmetric Gaussian distribution of the form:
$$f(x) = \frac{1}{\sqrt{4\pi m}}\, e^{-\frac{(x-m)^2}{4m}}$$
where the parameter $m$ is the mean. We will denote by $m_0 = 2/\sigma^2$ the mean of the a priori information, and by $m^l_\alpha$ and $m^l_\beta$ the means of variable and check node messages at iteration $l$, respectively.
(CNP) Check node processing condition:
$$\operatorname{sgn}\big(\beta^{l+1}_{m,n}\big) = \prod_{n' \in \mathcal{H}(m)\setminus\{n\}} \operatorname{sgn}\big(\alpha^l_{m,n'}\big)$$

(VNP) Variable node processing condition:
$$\alpha^l_{m,n} = \gamma_n + \sum_{m' \in \mathcal{H}(n)\setminus\{m\}} \beta^l_{m',n}$$

The irregularity of the LDPC code is specified as usual, using the variable and check node degree distribution polynomials: $\lambda(x) = \sum_{i=2}^{d_v} \lambda_i x^{i-1}$, where $d_v$ is the maximum variable node degree and $\lambda_i$ is the fraction of edges connected to variable nodes of degree $i$; and $\rho(x) = \sum_{j=2}^{d_c} \rho_j x^{j-1}$, where $d_c$ is the maximum check node degree and $\rho_j$ is the fraction of edges connected to check nodes of degree $j$.

Theorem 3: Let $\varphi$ be the function defined by:
$$\varphi(x) = \sum_{i=2}^{d_v} \lambda_i\, Q\left(\sqrt{\frac{1}{\sigma^2} + (i-1)\left[Q^{-1}\left(\frac{1-\rho(1-2x)}{2}\right)\right]^2}\,\right)$$
where $Q(x) = \frac{1}{\sqrt{2\pi}} \int_x^{+\infty} e^{-t^2/2}\, dt$. Then $\varphi$ depends only on $\sigma$, $\lambda$, and $\rho$, and for any message-passing decoder satisfying the conditions (GD), (CNP), and (VNP), the following recurrence relation holds:
$$P^{l+1}_e = \varphi\big(P^l_e\big)$$

The main consequence of this theorem is that the exact check node processing is not really important. It is only important that check node messages have a symmetric Gaussian distribution and that their signs verify the (CNP) condition. The proof can be derived similarly as in [4], noting that only the conditions (GD), (CNP) and (VNP) are needed, not the Sum-Product decoder assumption by itself.

Conditions (CNP) and (VNP) are verified by both the Sum-Product and the Min-Sum decoders. The performance gap between these decoders is explained by the fact that the Min-Sum decoder fails to satisfy the Gaussian distribution (GD) condition for check node messages. This can be seen in Fig. 3(a) and (b), which represent the empirical probability densities of check node messages computed by the Sum-Product and the Min-Sum decoders.
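Theorem 3 turns the decoder analysis into a one-dimensional recursion that is easy to iterate numerically. The sketch below evaluates $\varphi$ as written above, starting from $P^0_e = \Pr(\gamma_n \le 0) = Q(1/\sigma)$. The degree distributions are passed as dictionaries mapping a degree to its edge fraction; the regular (3,6) ensemble and the $\sigma$ values in the example are our own illustrative choices, not codes or operating points from the paper, and the function names are hypothetical.

```python
import math
from statistics import NormalDist

_STD_NORMAL = NormalDist()  # standard normal N(0, 1)


def q_func(x):
    """Gaussian tail probability Q(x)."""
    return 0.5 * math.erfc(x / math.sqrt(2.0))


def q_inv(p):
    """Inverse of Q on the open interval (0, 1)."""
    return -_STD_NORMAL.inv_cdf(p)


def poly(coeffs, x):
    """Edge-perspective polynomial sum_k c_k x^(k-1), coeffs = {degree: fraction}."""
    return sum(c * x ** (k - 1) for k, c in coeffs.items())


def phi(x, sigma, lam, rho):
    """Right-hand side of Theorem 3: P_e^{l+1} = phi(P_e^l)."""
    r = (1.0 - poly(rho, 1.0 - 2.0 * x)) / 2.0  # probability a check message is negative
    if r <= 0.0:
        return 0.0  # check node messages are error-free
    s = q_inv(r) ** 2  # equals m_beta / 2 under the (GD) condition
    return sum(l_i * q_func(math.sqrt(1.0 / sigma ** 2 + (i - 1) * s))
               for i, l_i in lam.items())


def ga_error_probability(sigma, lam, rho, iters=200, tol=1e-12):
    """Iterate Theorem 3 from P_e^0 = Q(1 / sigma) and return the last value."""
    x = q_func(1.0 / sigma)
    for _ in range(iters):
        if x < tol:
            break
        x = phi(x, sigma, lam, rho)
    return x


lam, rho = {3: 1.0}, {6: 1.0}  # regular (3,6) ensemble, for illustration only
for sigma in (0.7, 1.0):
    print(sigma, ga_error_probability(sigma, lam, rho))
```

With these inputs the recursion drives $P_e$ to zero at $\sigma = 0.7$ and stalls at a positive fixed point at $\sigma = 1.0$; scanning $\sigma$ between such values is one way to locate the threshold predicted by the approximation.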
A straightforward consequence of the above theorem is that if the check node messages of the Min-Sum decoder had a Gaussian-like distribution, then the Min-Sum and the Sum-Product decoders would exhibit the same performance. In Fig. 3(c), it can be seen that the empirical probability density of check node messages exchanged by the Self-Corrected Min-Sum decoding is very close to the Gaussian density of the (GD) condition. For the empirical probability density we take into account only unerased (i.e. nonzero) check node messages.

Fig. 3. Empirical probability density vs. Gaussian density after 20 iterations ($E_b/N_0 = 1.5$ dB). (a) Sum-Product decoding, compared with the Gaussian distribution of empirical mean $m = 10.7755$. (b) Min-Sum decoding, compared with the Gaussian distribution of empirical mean $m = 5.3347$. (c) Self-Corrected Min-Sum decoding, compared with the Gaussian distribution of empirical mean $m = 9.0683$.

As discussed in the above section, the Self-Corrected Min-Sum decoding behaves as the Min-Sum decoding applied on unerased messages. It follows that the Self-Corrected Min-Sum decoding satisfies the three conditions (GD), (CNP) and (VNP), and therefore there should be no gap between its performance and the Sum-Product decoding performance. However, we should be careful about this assertion, because the recurrence relation of the error probability of the Self-Corrected Min-Sum decoding should be slightly different from that of the Sum-Product decoding.
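The comparison of Fig. 3 can be repeated on any set of logged check node messages. As a hedged sketch, the hypothetical helper below discards erased (zero) messages, as done for Fig. 3(c), and then tests two consequences of the (GD) condition under the all-zero codeword assumption: the variance of a symmetric Gaussian equals twice its mean, and the probability of a negative value equals $Q(\sqrt{m/2})$. Both returned ratios should be close to 1 when the condition holds. The synthetic inputs in the example only stand in for real decoder messages.

```python
import math
import random


def gaussian_symmetry_check(messages):
    """Return (mean, variance / (2 * mean), Pr(x < 0) / Q(sqrt(mean / 2))).

    Zero (erased) messages are discarded first. Assumes a positive mean,
    i.e. the all-zero codeword convention of Section IV.
    """
    kept = [x for x in messages if x != 0.0]
    n = len(kept)
    mean = sum(kept) / n
    var = sum((x - mean) ** 2 for x in kept) / (n - 1)
    p_neg = sum(1 for x in kept if x < 0) / n
    q = 0.5 * math.erfc(math.sqrt(mean / 2.0) / math.sqrt(2.0))
    return mean, var / (2.0 * mean), p_neg / q


rng = random.Random(7)
m = 4.0
# Symmetric Gaussian N(m, 2m) plus erasures: both ratios should be close to 1.
sym = [rng.gauss(m, math.sqrt(2 * m)) for _ in range(100_000)] + [0.0] * 20_000
# Gaussian N(m, m) violates the symmetry: the variance ratio is about 0.5.
asym = [rng.gauss(m, math.sqrt(m)) for _ in range(100_000)]
print(gaussian_symmetry_check(sym))
print(gaussian_symmetry_check(asym))
```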
In fact, if we see the Self-Corrected Min-Sum as the Min-Sum decoding applied on unerased messages, then the variable node degrees may vary from one iteration to another, and this depends on the erasure probability. For instance, in Fig. 2, if $\alpha = \alpha_{m_1,n_1}$ is erased at some iteration, then the sub-tree $H_1$ is discarded and the degree of the variable node $n$ decreases by one. So far we have given a rather intuitive explanation of the observed behavior. In order to write it formally, we need to split the error probability as $P^l_e = P^l + E^l$, where $P^l = \Pr(\alpha^l_{m,n} < 0)$ and $E^l = \Pr(\alpha^l_{m,n} = 0)$. The second probability is called the erasure probability at iteration $l$. We also denote $R^l_e = \Pr(\beta^l_{m,n} \le 0) = R^l + F^l$, where $R^l = \Pr(\beta^l_{m,n} < 0)$ and $F^l = \Pr(\beta^l_{m,n} = 0)$. The joint recurrence relation of $(P^l, E^l)$ is derived in several steps as follows.

1) A check node message $\beta^{l+1}_{m,n}$ is erased if and only if at least one incoming variable node message is erased, $\alpha^l_{m,n'} = 0$. Since this happens with probability $1 - (1 - E^l)^{j-1}$, where $j$ is the degree of the check node $m$, averaging over all possible check node degrees we get:
$$F^{l+1} = \sum_{j=2}^{d_c} \rho_j \left(1 - (1 - E^l)^{j-1}\right) = 1 - \rho(1 - E^l)$$

2) A check node message satisfies $\beta^{l+1}_{m,n} < 0$ if and only if none of the incoming variable node messages $\alpha^l_{m,n'}$ is erased and the number of incoming negative messages is odd. Using [2], Lemma 4.1, we get:
$$R^{l+1} = \frac{1}{2} \sum_{j=2}^{d_c} \rho_j (1 - E^l)^{j-1} \left(1 - \left(1 - \frac{2P^l}{1 - E^l}\right)^{j-1}\right) = \frac{\rho(1 - E^l) - \rho(1 - E^l - 2P^l)}{2}$$
On the other hand, at iteration $l+1$, check node messages have a symmetric Gaussian distribution with mean $m^{l+1}_\beta$. It follows that:
$$R^{l+1} = Q\left(\sqrt{\frac{m^{l+1}_\beta}{2}}\right), \qquad m^{l+1}_\beta = 2\left[Q^{-1}\big(R^{l+1}\big)\right]^2$$

3) At iteration $l+1$, the temporary extrinsic LLR $\alpha^{\mathrm{tmp}}_{m,n}$ is the sum of the a priori information $\gamma_n$ and the unerased incoming check node messages $\beta^{l+1}_{m',n} \neq 0$, $m' \in \mathcal{H}(n)\setminus\{m\}$.
If the variable node $n$ is of degree $i$, the expected number of unerased incoming check node messages is $(1 - F^{l+1})(i-1) = \rho(1 - E^l)(i-1)$. Then the expectation of $\alpha^{\mathrm{tmp}}_{m,n}$ is $m_0 + \rho(1 - E^l)(i-1)\, m^{l+1}_\beta$, and it follows that:
$$\Pr\big(\alpha^{\mathrm{tmp}}_{m,n} < 0\big) = Q\left(\sqrt{\frac{m_0 + \rho(1 - E^l)(i-1)\, m^{l+1}_\beta}{2}}\right)$$
For convenience, we denote the above probability by $Q^{l+1}_i$. Furthermore, since the variable node message satisfies $\alpha^{l+1}_{m,n} < 0$ if and only if $\alpha^l_{m,n} \le 0$ and $\alpha^{\mathrm{tmp}}_{m,n} < 0$, averaging over all possible variable node degrees we get:
$$P^{l+1} = P^l_e \sum_{i=2}^{d_v} \lambda_i Q^{l+1}_i$$

4) With the above notation, the variable node message $\alpha^{l+1}_{m,n}$ is erased if and only if $\alpha^l_{m,n} < 0$ and $\alpha^{\mathrm{tmp}}_{m,n} > 0$, or $\alpha^l_{m,n} > 0$ and $\alpha^{\mathrm{tmp}}_{m,n} < 0$. Consequently, we obtain:
$$E^{l+1} = P^l \Big(1 - \sum_i \lambda_i Q^{l+1}_i\Big) + \big(1 - P^l_e\big) \sum_i \lambda_i Q^{l+1}_i$$
Moreover, we note that the following equality holds:
$$P^{l+1}_e = P^l \Big(1 - \sum_i \lambda_i Q^{l+1}_i\Big) + \sum_i \lambda_i Q^{l+1}_i$$

For convenience, we introduce the following notation:
$$R(x, y) = \frac{\rho(1-y) - \rho(1-y-2x)}{2}, \qquad Q_i(x, y) = Q\left(\sqrt{\frac{1}{\sigma^2} + \rho(1-y)(i-1)\left[Q^{-1}\big(R(x,y)\big)\right]^2}\,\right)$$

Theorem 4: Let $\phi = (\phi_1, \phi_2)$, where:
$$\phi_1(x, y) = (x + y) \sum_{i=2}^{d_v} \lambda_i Q_i(x, y), \qquad \phi_2(x, y) = x + (1 - y - 2x) \sum_{i=2}^{d_v} \lambda_i Q_i(x, y)$$
Then the following recurrence relation holds:
$$\big(P^{l+1}, E^{l+1}\big) = \phi\big(P^l, E^l\big)$$

V. SIMULATION RESULTS

We present Monte-Carlo simulation results for coding rate 1/2 over the AWGN channel with QPSK modulation. Floating-point performance of optimized irregular LDPC codes under the Sum-Product, Min-Sum, Self-Corrected Min-Sum, and Normalized Min-Sum decoders is presented in Fig. 4 and Fig. 5 in terms of bit and frame error rates, respectively. We note that the performance gap between the Self-Corrected Min-Sum and the Sum-Product decoders is only 0.05 dB for short code lengths (2304 bits) and 0.1 dB for long code lengths (8064 bits).
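To relate the $E_b/N_0$ operating points of this section to the analysis of Section IV, the joint recursion of Theorem 4 can be iterated numerically. The sketch below rests on our own assumptions: unit-energy BPSK so that $\sigma^2 = 1/(2R\,E_b/N_0)$ for code rate $R$, initial values $P^0 = Q(1/\sigma)$ and $E^0 = 0$ (messages start from $\gamma_n$, which is almost surely nonzero), and degree distributions given as dictionaries {degree: edge fraction}. It implements $\phi$ as stated in Theorem 4, computing $R(x,y)$ through the factorization $a^k - b^k = (a-b)\sum_t a^{k-1-t} b^t$ to avoid cancellation when $x$ is tiny. All function names, and the regular (3,6) ensemble in the example, are hypothetical.

```python
import math
from statistics import NormalDist

_STD_NORMAL = NormalDist()  # standard normal N(0, 1)


def q_func(x):
    """Gaussian tail probability Q(x)."""
    return 0.5 * math.erfc(x / math.sqrt(2.0))


def q_inv(p):
    """Inverse of Q on the open interval (0, 1)."""
    return -_STD_NORMAL.inv_cdf(p)


def poly(coeffs, x):
    """Edge-perspective polynomial sum_k c_k x^(k-1), coeffs = {degree: fraction}."""
    return sum(c * x ** (k - 1) for k, c in coeffs.items())


def sigma_from_ebn0(ebn0_db, rate):
    """Noise std for unit-energy BPSK: sigma^2 = 1 / (2 * rate * Eb/N0)."""
    return math.sqrt(1.0 / (2.0 * rate * 10.0 ** (ebn0_db / 10.0)))


def r_xy(x, y, rho):
    """R(x, y) = [rho(1-y) - rho(1-y-2x)] / 2, factored to avoid cancellation."""
    a, b = 1.0 - y, 1.0 - y - 2.0 * x
    return x * sum(r_j * sum(a ** (j - 2 - t) * b ** t for t in range(j - 1))
                   for j, r_j in rho.items())


def q_i(i, x, y, sigma, rho):
    """Q_i(x, y): probability that the temporary extrinsic LLR is negative."""
    r = r_xy(x, y, rho)
    if r <= 0.0:
        return 0.0
    return q_func(math.sqrt(1.0 / sigma ** 2
                            + poly(rho, 1.0 - y) * (i - 1) * q_inv(r) ** 2))


def phi_scms(x, y, sigma, lam, rho):
    """One step of Theorem 4: (P^{l+1}, E^{l+1}) = phi(P^l, E^l)."""
    s = sum(l_i * q_i(i, x, y, sigma, rho) for i, l_i in lam.items())
    return (x + y) * s, x + (1.0 - y - 2.0 * x) * s


def scms_ga(sigma, lam, rho, iters=200, tol=1e-12):
    """Iterate Theorem 4 from P^0 = Q(1 / sigma), E^0 = 0 and return (P, E)."""
    p, e = q_func(1.0 / sigma), 0.0
    for _ in range(iters):
        if p + e < tol:
            break
        p, e = phi_scms(p, e, sigma, lam, rho)
    return p, e


sigma = sigma_from_ebn0(1.0, 0.5)
print(sigma, scms_ga(sigma, {3: 1.0}, {6: 1.0}))
```

This only evaluates the recursion as stated; it does not by itself validate the Gaussian approximation against the Monte-Carlo results.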
Moreover, for short code lengths the Self-Corrected Min-Sum decoder outperforms the Sum-Product decoder at high SNR values, corresponding to the error floor of the latter. Concerning the Normalized Min-Sum decoder, its bit error rate performance is very close to that of the Self-Corrected Min-Sum decoder at low SNR values; however, it presents an error floor at higher SNR values. Furthermore, its frame error rate behavior is very poor, which is quite surprising as this does not fit with its bit error rate behavior.

Fig. 6 presents fixed-point simulation results for the quasi-cyclic LDPC codes of length 2304 bits from the IEEE-802.16e standard [3]. The above conclusions also apply in this case.

Fig. 4. Irregular LDPC codes (lengths 2304 and 8064 bits), floating-point simulation, bit error rate vs. $E_b/N_0$ (dB), max iteration number = 200. Decoders: Sum-Product, Self-Corrected Min-Sum, Normalized Min-Sum (scaling by 0.8), Min-Sum.

Fig. 5. Irregular LDPC codes (lengths 2304 and 8064 bits), floating-point simulation, frame error rate vs. $E_b/N_0$ (dB), max iteration number = 200. Decoders: Sum-Product, Self-Corrected Min-Sum, Normalized Min-Sum (scaling by 0.8), Min-Sum.

Fig. 6. IEEE-802.16e LDPC codes, fixed-point simulation ($-8 \le \gamma, \alpha, \beta < 8$ and $-32 \le \tilde{\gamma} < 32$, with quantization step 0.25), bit and frame error rates vs. $E_b/N_0$ (dB), max iteration number = 30. Decoders: Min-Sum, Normalized Min-Sum (scaling by 0.75), Self-Corrected Min-Sum.

VI. CONCLUSIONS

A very simple but powerful self-correction method for the Min-Sum decoding of LDPC codes was presented in this paper. The proposed Self-Corrected Min-Sum algorithm performs quasi-optimal iterative decoding, while preserving the low Min-Sum complexity and the independence from noise variance estimation errors. This makes the Self-Corrected Min-Sum decoding very attractive for practical purposes.

REFERENCES
[1] J. Chen and M. P. Fossorier. Near optimum universal belief propagation based decoding of low-density parity check codes. IEEE Trans. on Comm., 50(3):406–414, 2002.
[2] R. G. Gallager. Low Density Parity Check Codes. M.I.T. Press, 1963. Monograph.
[3] IEEE-802.16e. Physical and medium access control layers for combined fixed and mobile operation in licensed bands, 2005. Amendment to Air Interface for Fixed Broadband Wireless Access Systems.
[4] F. Lehmann and G. Maggio. Analysis of the iterative decoding of LDPC and product codes using the Gaussian approximation. IEEE Transactions on Information Theory, 49:2993–3000, 2003.
[5] V. Savin. Iterative LDPC decoding using neighborhood reliabilities. In IEEE International Symposium on Information Theory, 2007.
[6] N. Wiberg. Codes and decoding on general graphs. PhD thesis, Linköping University, Sweden, 1996.
