Context-aware adaptation for group communication support applications with dynamic architecture

In this paper, we propose a refinement-based adaptation approach for the architecture of distributed group communication support applications. Unlike most previous work, our approach yields implementable, context-aware, and dynamically adaptable architectures. To model the context, we simultaneously manage four parameters that influence the QoS provided by the application: the available bandwidth, the communication priority of the exchanged data, the energy level, and the memory available for processing. These parameters make it possible to refine the choice among the various architectural configurations when passing from a given abstraction level to the lower level that implements it. Our approach allows the degree of importance associated with each parameter to be adapted dynamically. To implement adaptation, we switch between the various configurations of the same level, and we modify the state of the entities of a given configuration when necessary. We adopt the direct and mediated Producer-Consumer architectural styles, and we use graphs for architecture modelling. To validate our approach, we develop a simulation model.

💡 Research Summary

The paper presents a refinement‑based adaptation framework for the architecture of distributed group‑communication support applications. Unlike many prior works that focus on static policies or a single context variable, this approach simultaneously manages four quality‑of‑service (QoS) parameters: available bandwidth, communication priority of exchanged data, node energy level, and available memory for processing. Each parameter is associated with a weight that reflects its current importance; these weights can be adjusted at runtime, allowing the system to react to changing environmental conditions.

The methodology consists of two main phases. In the first phase, a high‑level abstract architecture is refined into concrete lower‑level configurations. This refinement is expressed as a set of graph transformation rules that map abstract components and connections onto concrete implementations. In the second phase, the system selects among the possible configurations at the same refinement level based on the current context values and their weights. If necessary, the internal state of selected components (e.g., buffer sizes, processing modes) is also adapted.
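The refinement phase can be illustrated with a small sketch. It assumes an abstract level that only names producers and consumers joined by one abstract channel, and two hypothetical rewriting rules, `refine_direct` and `refine_mediated`, that replace that channel with concrete links. The names, the dictionary layout, and the edge-set representation are illustrative assumptions, not the paper's notation.

```python
from itertools import product

# Hypothetical abstract architecture: producers and consumers joined by one
# abstract group channel (no concrete links yet).
ABSTRACT = {"producers": ["p1", "p2"], "consumers": ["c1", "c2", "c3"]}


def refine_direct(abstract):
    """Rule 1 (assumed): rewrite the abstract channel as point-to-point links."""
    return set(product(abstract["producers"], abstract["consumers"]))


def refine_mediated(abstract, broker="broker"):
    """Rule 2 (assumed): rewrite the abstract channel as links through a broker."""
    edges = {(p, broker) for p in abstract["producers"]}
    edges |= {(broker, c) for c in abstract["consumers"]}
    return edges


def refine(abstract):
    """Apply every rule and return the candidate configurations of the lower level."""
    return {
        "direct": refine_direct(abstract),
        "mediated": refine_mediated(abstract),
    }


candidates = refine(ABSTRACT)
for name, edges in sorted(candidates.items()):
    print(name, len(edges), "links")
```

Each rule yields one candidate directed graph for the same abstract architecture, so the second phase has a fixed set of same-level configurations to choose from.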

The architectural style employed is Producer‑Consumer, instantiated in two variants: a direct style where producers communicate with consumers over point‑to‑point links, and a mediated style that introduces a broker to handle routing, buffering, and filtering. The direct style offers minimal latency but is sensitive to network fluctuations; the mediated style provides resilience at the cost of additional processing overhead. Both styles are modeled as directed graphs, and the refinement process can switch between them when the context dictates.
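To make the behavioural difference between the two styles concrete, here is a minimal sketch. The classes `Consumer`, `DirectProducer`, and `Broker`, the message layout (a dict with `priority` and `body` keys), and the drop-oldest buffer policy are all assumptions made for illustration; the summary does not specify how the broker buffers or filters.

```python
from collections import deque


class Consumer:
    def __init__(self, name):
        self.name = name
        self.received = []

    def deliver(self, message):
        self.received.append(message)


class DirectProducer:
    """Direct style: the producer pushes each message straight to every consumer."""

    def __init__(self, consumers):
        self.consumers = consumers

    def publish(self, message):
        for consumer in self.consumers:
            consumer.deliver(message)


class Broker:
    """Mediated style: filter by priority, buffer, then route to every consumer."""

    def __init__(self, consumers, capacity=2, min_priority=0):
        self.consumers = consumers
        # Bounded buffer: when full, the oldest message is dropped (assumed policy).
        self.buffer = deque(maxlen=capacity)
        self.min_priority = min_priority

    def publish(self, message):
        if message["priority"] >= self.min_priority:
            self.buffer.append(message)

    def flush(self):
        while self.buffer:
            message = self.buffer.popleft()
            for consumer in self.consumers:
                consumer.deliver(message)


direct_consumer = Consumer("c1")
DirectProducer([direct_consumer]).publish({"priority": 0, "body": "status"})

mediated_consumer = Consumer("c2")
broker = Broker([mediated_consumer], capacity=2, min_priority=1)
for prio, body in [(0, "status"), (1, "chat"), (2, "alert"), (3, "alarm")]:
    broker.publish({"priority": prio, "body": body})
broker.flush()
```

In this sketch the direct path delivers immediately with no intermediate state, while the broker adds a step in which low-priority traffic is filtered and bursts are absorbed by the bounded buffer.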

A policy engine continuously monitors the four parameters. When a parameter crosses a predefined threshold (for example, battery level dropping below 20 %), the engine recalculates the weights, giving higher priority to energy preservation and lowering the importance of data priority. These weight adjustments directly influence the selection function that evaluates each candidate configuration, effectively steering the system toward architectures that best satisfy the current QoS trade‑offs.
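The weight recalculation and the selection function might look like the following sketch. It assumes a weighted-sum selection function, context values and per-configuration fitness scores normalised to [0, 1], and hypothetical `boost` and `damp` factors. The candidate names and all numbers are invented for illustration and do not come from the paper.

```python
PARAMS = ("bandwidth", "priority", "energy", "memory")

# Hypothetical fitness of each candidate configuration per parameter, in [0, 1].
CANDIDATES = {
    "config_a": {"bandwidth": 0.5, "priority": 0.9, "energy": 0.3, "memory": 0.8},
    "config_b": {"bandwidth": 0.7, "priority": 0.5, "energy": 0.8, "memory": 0.4},
}

BASE_WEIGHTS = {p: 0.25 for p in PARAMS}


def normalize(weights):
    """Rescale weights so they sum to 1."""
    total = sum(weights.values())
    return {k: v / total for k, v in weights.items()}


def recalc_weights(weights, context, energy_threshold=0.20, boost=2.0, damp=0.5):
    """Below the energy threshold, raise the energy weight and lower data priority."""
    adjusted = dict(weights)
    if context["energy"] < energy_threshold:
        adjusted["energy"] *= boost    # assumed boost factor
        adjusted["priority"] *= damp   # assumed damping factor
    return normalize(adjusted)


def score(fitness, weights):
    """Weighted sum of per-parameter fitness (assumed form of the selection function)."""
    return sum(weights[p] * fitness[p] for p in PARAMS)


def select(candidates, weights):
    """Return the name of the highest-scoring candidate configuration."""
    return max(candidates, key=lambda name: score(candidates[name], weights))


healthy = {"bandwidth": 0.8, "priority": 0.5, "energy": 0.90, "memory": 0.7}
low_battery = dict(healthy, energy=0.15)

choice_healthy = select(CANDIDATES, recalc_weights(BASE_WEIGHTS, healthy))
choice_low = select(CANDIDATES, recalc_weights(BASE_WEIGHTS, low_battery))
print(choice_healthy, choice_low)
```

With these made-up scores, the choice switches from `config_a` to `config_b` once the energy level falls below the threshold, which is the kind of same-level configuration switch the text describes.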

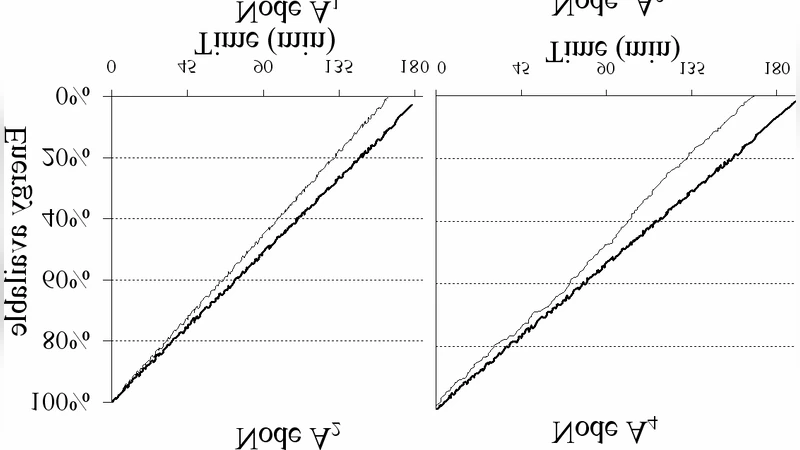

To validate the approach, the authors built a simulation model using OMNeT++. They designed several scenarios that stress different aspects of the context: sudden bandwidth reduction, rapid energy depletion, memory saturation, and combinations of these events. For each scenario, they measured average end‑to‑end latency, packet loss rate, energy consumption, and memory usage before and after adaptation. The results show that the adaptive system reduces latency by more than 30 % and packet loss by up to 40 % compared with a non‑adaptive baseline, while also cutting energy consumption by roughly 25 % and keeping memory usage within safe limits.

Key contributions of the paper are: (1) a multi‑parameter context model that captures the most relevant resources for mobile and IoT environments; (2) a refinement‑based, graph‑oriented architecture description that preserves structural consistency while allowing fine‑grained reconfiguration; (3) the integration of both direct and mediated Producer‑Consumer styles to cover a spectrum of network conditions; and (4) an extensive simulation‑based evaluation that demonstrates practical benefits in terms of QoS and resource efficiency.

The authors suggest future work in three directions. First, incorporating machine‑learning techniques into the policy engine could enable predictive weight adjustments and further reduce adaptation latency. Second, implementing a prototype on real hardware (e.g., smartphones, sensor nodes) would provide empirical validation beyond simulation. Third, extending the context model to include security and privacy constraints would allow the framework to address a broader set of requirements for mission‑critical group communication systems.
