Computationally Efficient Estimators for Dimension Reductions Using Stable Random Projections

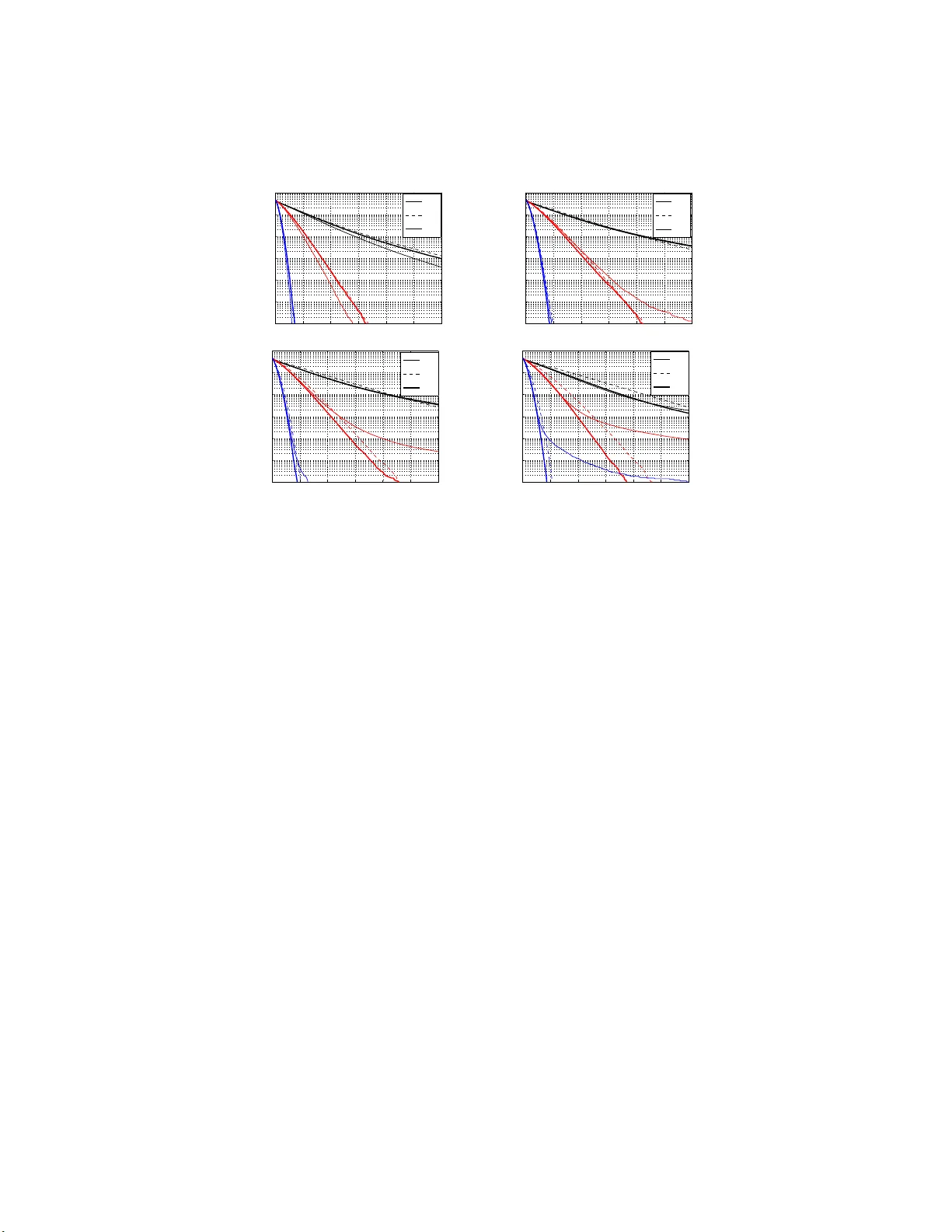

The method of stable random projections is a tool for efficiently computing $l_\alpha$ distances with low memory, where $0<\alpha \leq 2$ is a tuning parameter. The method boils down to a statistical estimation task and various estimators have b…

Authors: Ping Li
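To make the estimation task concrete, here is a minimal pure-Python sketch of the general setup, under stated assumptions. Each vector is projected onto $k$ coordinates with a matrix of i.i.d. standard symmetric $\alpha$-stable entries. Each projected coordinate of $u - v$ is then distributed as $d^{1/\alpha} Z$, where $Z$ is standard $\alpha$-stable and the $l_\alpha$ distance is taken here as $d = \sum_i |u_i - v_i|^\alpha$. The stable samples are drawn with the Chambers-Mallows-Stuck method. Purely for illustration, $d$ is recovered with the classic sample-median estimator; this is an assumption and not necessarily one of the estimators the paper studies. The function names (`stable_sample`, `sketch`, `median_abs_constant`, `estimate_l_alpha`) are hypothetical.

```python
import math
import random
from statistics import median
from typing import List, Sequence


def stable_sample(alpha: float, rng: random.Random) -> float:
    """One standard symmetric alpha-stable draw (characteristic function
    exp(-|t|^alpha)) via the Chambers-Mallows-Stuck method, 0 < alpha <= 2."""
    v = rng.uniform(-math.pi / 2, math.pi / 2)  # uniform angle
    w = rng.expovariate(1.0)                    # unit-rate exponential
    return (math.sin(alpha * v) / math.cos(v) ** (1.0 / alpha)) * (
        math.cos((1.0 - alpha) * v) / w
    ) ** ((1.0 - alpha) / alpha)


def sketch(vec: Sequence[float], alpha: float, k: int, seed: int = 0) -> List[float]:
    """Hypothetical helper: x_j = sum_i vec[i] * r_ij with r_ij i.i.d. S(alpha, 1).
    The same seed regenerates the same projection matrix for every vector,
    so only the k projected values need to be stored per vector."""
    rng = random.Random(seed)
    x = [0.0] * k
    for value in vec:
        for j in range(k):
            x[j] += value * stable_sample(alpha, rng)
    return x


def median_abs_constant(alpha: float, n: int = 20001, seed: int = 12345) -> float:
    """Monte Carlo estimate of median(|Z|) for Z ~ S(alpha, 1)."""
    rng = random.Random(seed)
    return median(abs(stable_sample(alpha, rng)) for _ in range(n))


def estimate_l_alpha(sk_u: Sequence[float], sk_v: Sequence[float], alpha: float) -> float:
    """Illustrative sample-median estimator (an assumption, not the paper's method)
    of d = sum_i |u_i - v_i|^alpha. By linearity, sk_u - sk_v is the sketch of
    u - v, whose entries are d^(1/alpha) * Z_j, so median|x_j| / median|Z|
    estimates d^(1/alpha)."""
    diffs = [abs(a - b) for a, b in zip(sk_u, sk_v)]
    return (median(diffs) / median_abs_constant(alpha)) ** alpha


if __name__ == "__main__":
    alpha, k = 1.0, 2000
    data_rng = random.Random(7)
    u = [data_rng.uniform(-1, 1) for _ in range(50)]
    v = [data_rng.uniform(-1, 1) for _ in range(50)]
    exact = sum(abs(a - b) ** alpha for a, b in zip(u, v))
    approx = estimate_l_alpha(sketch(u, alpha, k), sketch(v, alpha, k), alpha)
    print(f"exact l_alpha distance: {exact:.3f}, estimate: {approx:.3f}")
```

Because the projection is linear and a shared seed regenerates the same matrix, each vector can be sketched once and stored as $k$ numbers instead of its full dimension, which is where the memory savings come from. The normalizing constant $\mathrm{median}|Z|$ is estimated by Monte Carlo here only to keep the example dependency-free; for $\alpha = 1$ (Cauchy) it equals 1 exactly.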