On some entropy functionals derived from Renyi information divergence

We consider the maximum entropy problems associated with R\'enyi $Q$-entropy, subject to two kinds of constraints on expected values. The constraints considered are a constraint on the standard expectation, and a constraint on the generalized expecta…

Authors: Jean-François Bercher (LSS, IGM-LabInfo)

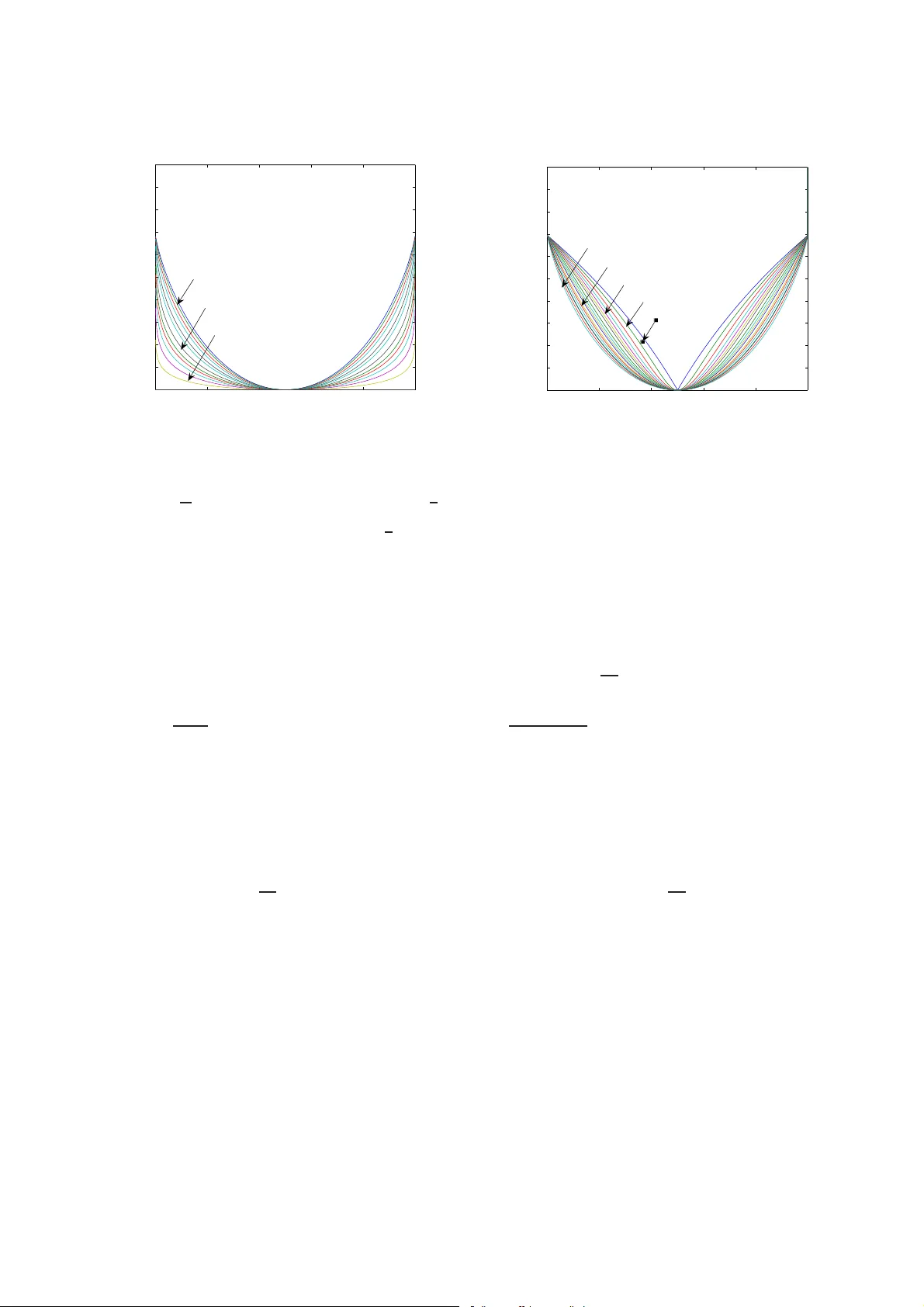

J.-F. Bercher¹
Laboratoire des Signaux et Systèmes, CNRS-Univ Paris Sud-Supelec, 91192 Gif-sur-Yvette cedex, France

Abstract
We consider the maximum entropy problems associated with Rényi $Q$-entropy, subject to two kinds of constraints on expected values. The constraints considered are a constraint on the standard expectation, and a constraint on the generalized expectation as encountered in nonextensive statistics. The optimum maximum entropy probability distributions, which can exhibit a power-law behaviour, are derived and characterized. The Rényi entropy of the optimum distributions can be viewed as a function of the constraint. This defines two families of entropy functionals in the space of possible expected values. General properties of these functionals, including nonnegativity, minimum and convexity, are documented. Their relationships as well as numerical aspects are also discussed. Finally, we work out some specific cases for the reference measure $Q(x)$ and recover, in a limit case, some well-known entropies.

Key words: Rényi entropy, Rényi divergences, maximum entropy principle, nonextensivity, Tsallis distributions

1. Introduction

Consider two univariate continuous probability distributions with densities $P$ and $Q$ with respect to the Lebesgue measure. The Rényi information divergence introduced in [32] has the form
$$D_\alpha(P\|Q) = -H^{(\alpha)}_Q(P) = \frac{1}{\alpha-1}\log\int_D P(x)^\alpha Q(x)^{1-\alpha}\,dx, \tag{1}$$
where $\alpha$ is a positive real and $D$ the domain of definition of the integral. In the discrete case, the integral is replaced by a sum over a subset $D$ of the integers. The opposite $H^{(\alpha)}_Q(P)$ of the Rényi information divergence can be viewed as a Rényi entropy relative to the reference measure $Q$, and can be called $Q$-entropy.
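To make definition (1) concrete, here is a minimal Python sketch of the discrete case, in which the integral becomes a sum over a common finite support. It assumes that $Q$ is strictly positive on that support. The function names `renyi_divergence` and `kullback` are illustrative and not taken from the paper. At $\alpha = 1$ the sketch returns the Kullback divergence, which is the limit discussed next.

```python
import math


def kullback(p, q):
    """Kullback divergence D(P||Q) for discrete distributions (q > 0 assumed)."""
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)


def renyi_divergence(p, q, alpha):
    """Discrete form of eq. (1): log(sum_x p^alpha q^(1-alpha)) / (alpha - 1)."""
    if alpha <= 0:
        raise ValueError("alpha must be a positive real")
    if alpha == 1:
        # alpha = 1 is the limiting case: the Kullback divergence
        return kullback(p, q)
    # terms with p(x) = 0 contribute nothing when alpha > 0
    s = sum(pi ** alpha * qi ** (1.0 - alpha) for pi, qi in zip(p, q) if pi > 0)
    return math.log(s) / (alpha - 1.0)


if __name__ == "__main__":
    p = [0.2, 0.5, 0.3]
    q = [1 / 3, 1 / 3, 1 / 3]
    for a in (0.5, 0.999, 1, 2):
        print(a, renyi_divergence(p, q, a))
```

On this example the printed values should be nondecreasing in $\alpha$, which is consistent with the known monotonicity of the Rényi divergence in its order. The value at $\alpha = 0.999$ should also lie close to the Kullback divergence.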
By L'Hospital's rule, the Kullback divergence is recovered in the limit $\alpha \to 1$. Applications and areas of interest of Rényi entropy are plentiful: communication and coding theory [10]; data mining, detection, segmentation and classification [29, 5]; hypothesis testing [23]; characterization of signals and sequences [38, 19]; signal processing [5, 3]; image matching and registration [29, 15]. A connection with the log-likelihood has been outlined in [33], where a measure of the intrinsic shape of a distribution is also defined, which can serve as a measure of tail heaviness [27]. Rényi entropies for large families of univariate and bivariate distributions are given in [25, 26]. Divergence measures based on entropy functions can be used in the process of inference [12] and in clustering or partitioning problems [22, 2, 7]. Rényi entropy also plays a central role in the theory of multifractals; see the review [18] and [4]. In statistical physics, following Tsallis' proposal [34, 35] of another entropy (which is simply related to Rényi entropy), there has been a strong interest in these alternative entropies, and a community has developed around "nonextensive thermostatistics". Indeed, the associated maximum entropy distributions exhibit a power-law behaviour, with a remarkable agreement with experimental data; see for instance [6, 35] and references therein. These optimum distributions, called Tsallis distributions, are similar to Generalized Pareto Distributions, which are also of high interest in other fields, namely reliability theory [1], climatology [24], radar imaging [21] and actuarial sciences [8].

Email address: jf.bercher@esiee.fr (J.-F. Bercher).
¹ On sabbatical leave from ESIEE-Paris, France.
Preprint submitted to Information Sciences, 1 November 2018.
Jaynes' maximum entropy principle [16, 17] suggests that the least biased probability distribution describing a partially known system is the distribution with maximum entropy compatible with all the available prior information. When prior information is available in the form of constraints on expected values, the maximum entropy method amounts to minimizing the Kullback information divergence $D(P\|Q)$ (or, equivalently, maximizing the Shannon $Q$-entropy) subject to normalization and to these observation constraints. In the case of a single constraint on the mean of the distribution, say $E_P[X] = m$, the minimum of the Kullback information over the set of all probability distributions with expectation $m$ is a function of $m$, denoted $F(m)$:
$$F(m) = \min_P D(P\|Q) \quad \text{s.t.} \quad m = E_P[X] \ \text{ and } \ \int_D P(x)\,dx = 1. \tag{2}$$
It is a 'contracted' version of the Shannon $Q$-entropy and is called a level-1 entropy functional, or rate function, in the theory of large deviations, e.g. [11]. The maximum entropy method is widely and successfully used in a large variety of problems and contexts. We focus here on the solutions and properties of maximum entropy problems analogous to (2) for the Rényi information divergence (1), and on the associated entropy functionals. The maximum Rényi-Tsallis entropy distribution, with its power-law behavior, is at the heart of nonextensive statistics, but has also been considered in [13, 14]. In nonextensive statistics, one still considers the usual classical mean constraint, but also a 'generalized' $\alpha$-expectation constraint. This 'generalized' $\alpha$-expectation is in fact the expectation with respect to the distribution
$$P^*(x) = \frac{P(x)^\alpha Q(x)^{1-\alpha}}{\int_D P(x)^\alpha Q(x)^{1-\alpha}\,dx}, \tag{3}$$
that is, a weighted geometric mean of $P$ and $Q$.
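The escort distribution (3) and the associated generalized mean are easy to compute for discrete distributions. The following sketch is limited to the discrete case and assumes $Q > 0$. The helper names are hypothetical. It shows that $\alpha = 1$ returns $P$ itself, so the generalized mean coincides with the classical one, while $\alpha = 0$ returns $Q$.

```python
def escort(p, q, alpha):
    """Escort distribution (3): P*(x) proportional to P(x)^alpha Q(x)^(1-alpha)."""
    w = [pi ** alpha * qi ** (1.0 - alpha) for pi, qi in zip(p, q)]
    s = sum(w)  # discrete counterpart of the normalizing integral in (3)
    return [wi / s for wi in w]


def expectation(dist, support):
    """Mean of a discrete distribution given as probabilities on a support."""
    return sum(d * x for d, x in zip(dist, support))


if __name__ == "__main__":
    support = [0, 1, 2]
    p = [0.2, 0.5, 0.3]
    q = [0.5, 0.3, 0.2]
    for a in (0.5, 1.0, 2.0):
        p_star = escort(p, q, a)
        # classical mean E_P[X] versus generalized mean E_{P*}[X]
        print(a, expectation(p, support), expectation(p_star, support))
```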
This distribution $P^*$ is nothing else but the 'escort' or zooming distribution of nonextensive statistics [35] and of multifractals. Of course, with $\alpha = 1$, the escort distribution $P^*$ reduces to $P$ and the generalized mean $E_{P^*}[X]$ reduces to the standard one. Therefore, the maximum entropy problems associated with the Rényi information divergence (1), subject to normalization and to a classical (C) or generalized (G) mean constraint, read
$$F^{(C\ \mathrm{resp.}\ G)}_\alpha(m) = \min_P D_\alpha(P\|Q) \quad \text{s.t.} \quad (C)\ m = E_P[X] \ \text{ or } \ (G)\ m = E_{P^*}[X], \quad \text{and} \quad \int_D P(x)\,dx = 1, \tag{4}$$
where $F^{(C)}_\alpha(m)$ and $F^{(G)}_\alpha(m)$ are the level-one entropy functionals associated with the Rényi $Q$-entropy for the classical and generalized constraints, respectively. Since Rényi entropy reduces to Shannon's for $\alpha = 1$, the functionals $F^{(\cdot)}_\alpha(m)$ reduce to $F(m)$ when $\alpha \to 1$.

Hence, in this paper, we consider the forms and properties of the maximum entropy solutions associated with the Rényi $Q$-entropy, subject to the two kinds of constraints explained above. The values of the maximum entropy problems at the optimum define classes of entropy functionals $F^{(\cdot)}_\alpha(m)$ associated with each choice of reference $Q$, and indexed by the parameter $\alpha$. The introduction of the reference measure $Q$, and therefore the definition of the functionals $F^{(\cdot)}_\alpha(m)$, is, to the best of our knowledge, new in this setting. In section 2, the exact forms of the probability distributions $P$ that realize the minimum of the Rényi information divergence on the right side of (4) are first derived. Then we give some properties of these distributions and of their partition functions. We show that the entropy functionals $F^{(\cdot)}_\alpha(m)$ are simply linked to these partition functions. General properties of the entropy functionals, including nonnegativity and convexity, are established.
We also indicate how the problems (4) can be tackled numerically for specific values of the constraints, even though the maximum entropy distributions are defined through implicit relationships. A divergence in the object space, which reduces to a Bregman divergence for $\alpha \to 1$, is defined. These results are illustrated in section 3, where we study four special cases of the reference $Q$ and characterize the associated entropy functionals. It is then shown that some well-known entropies are recovered.

2. The minimum of Rényi divergence

Let us define by
$$P_\nu(x) = \frac{[1+\gamma(x-\bar x)]^\nu}{Z_\nu(\gamma,\bar x)}\,Q(x) \tag{5}$$
a probability density function on a subset $D$ of $\mathbb{R}$, where $D$ ensures that the numerator of (5) is always nonnegative and its integral finite. The normalization $Z_\nu(\gamma,\bar x)$ is the partition function defined by
$$Z_\nu(\gamma,\bar x) = \int_D [1+\gamma(x-\bar x)]^\nu\,Q(x)\,dx. \tag{6}$$
The density $P_\nu$ depends on three parameters: the exponent $\nu$, which can be considered as a shape parameter, a scale parameter $\gamma$ and a location parameter $\bar x$. These parameters can also be linked; for instance, $\bar x$ might be a function of $\nu$ and $\gamma$. When unambiguous, we also denote by $E_\nu[X]$ the statistical mean with respect to $P_\nu(x)$. With these notations, we have the following result.

Theorem 1. (C) The distribution $P_C(x)$ in the family (5) with $\nu = \xi = \frac{1}{\alpha-1}$ and $\bar x = E_P[X] = E_\xi[X]$ has the minimum Rényi divergence to $Q$:
$$D_\alpha(P\|Q) \ge D_\alpha(P_C\|Q) \tag{7}$$
for all probability distributions $P(x)$ absolutely continuous with respect to $P_C(x)$ with a given (classical) expectation $\bar x$.
(G) The distribution $P_G(x)$ in the family (5) with $\nu = -\xi = \frac{1}{1-\alpha}$ and $\bar x = E_{P_G^*}[X] = E_{-(\xi+1)}[X]$ has the minimum Rényi divergence to $Q$:
$$D_\alpha(P\|Q) \ge D_\alpha(P_G\|Q) \tag{8}$$
for all probability distributions $P(x)$ absolutely continuous with respect to $P_G(x)$ with a given generalized expectation $\bar x$.
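A minimal sketch of the family (5) and its partition function (6) follows, for a reference measure with finite discrete support. For the discrete case we take $D$ to be the set of support points where $1+\gamma(x-\bar x) > 0$; this is our reading of the domain condition, and the helper names are hypothetical. Setting $\nu = \xi = 1/(\alpha-1)$ or $\nu = -\xi$ gives the candidate shapes of Theorem 1. The sketch does not enforce the self-consistency condition on $\bar x$.

```python
import math


def partition(nu, gamma, xbar, support, q):
    """Discrete version of (6): sum over D of [1 + gamma (x - xbar)]^nu Q(x).

    D is taken as the support points with a positive base (an assumption of
    this sketch); a zero base combined with nu < 0 makes Z infinite.
    """
    total = 0.0
    for x, qx in zip(support, q):
        base = 1.0 + gamma * (x - xbar)
        if base > 0:
            total += base ** nu * qx
        elif base == 0 and nu < 0:
            return math.inf
    return total


def p_nu(nu, gamma, xbar, support, q):
    """Distribution (5) on the support; points outside D get probability 0."""
    z = partition(nu, gamma, xbar, support, q)
    out = []
    for x, qx in zip(support, q):
        base = 1.0 + gamma * (x - xbar)
        out.append(base ** nu * qx / z if base > 0 else 0.0)
    return out


if __name__ == "__main__":
    support = [0, 1, 2, 3]
    q = [0.1, 0.4, 0.3, 0.2]
    alpha = 2.0
    xi = 1.0 / (alpha - 1.0)  # exponent of the classical-constraint family (C)
    print(p_nu(xi, 0.3, 1.0, support, q))
```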
Corollary 2. The solution to the minimization of the Rényi divergence in (4) is as given in Theorem 1, for the particular value $\gamma^*$ of $\gamma$ such that $\bar x = m$. It is important to emphasize that $\bar x$ is here a statistical mean, not the constraint $m$, and as such a function of $\gamma$.

Proof. See Appendix A.

Remark 3. When $\alpha$ tends to 1, $|\nu|$ tends to $+\infty$. Let us introduce $\tilde\gamma$ such that $\gamma = \tilde\gamma/\nu$. Then
$$P_\nu(x) = e^{\nu\log\left[1+\frac{\tilde\gamma}{\nu}(x-\bar x)\right] - \log Z_\nu(\tilde\gamma,\bar x)}\,Q(x), \tag{9}$$
and
$$\lim_{|\nu|\to+\infty} P_\nu(x) = e^{\tilde\gamma(x-\bar x) - \log Z_\nu(\tilde\gamma,\bar x)}\,Q(x), \tag{10}$$
that is, the standard exponential form, which is the well-known solution of the minimization of the Kullback-Leibler divergence subject to a constraint on an expected value [20, Theo 2.1, page 38]. In this case, the log-partition function becomes
$$\lim_{|\nu|\to+\infty} -\log Z_\nu(\tilde\gamma,\bar x) = \tilde\gamma\bar x - \log\int_D e^{\tilde\gamma x}\,Q(x)\,dx. \tag{11}$$

Properties of the entropy functionals $F^{(C)}_\alpha(m)$ and $F^{(G)}_\alpha(m)$ are of course linked to the properties of the optimum distribution (5) and of its partition function (6). In Property 4, we characterize partition functions of successive exponents, which enables us to derive the expression of the Rényi entropy associated with the optimum distribution. In Proposition 6, we give the expression of the derivative of the partition function with respect to $\gamma$. The optimum distribution (5) is 'self-referential': it depends on its own mean, which gives an implicit relation. The direct determination of its parameters is therefore difficult. It could rely on tabulation or on iterative techniques [36], which still assume that the solution is an attractive fixed point. We define in Proposition 9 two functionals whose maximization provides the $\gamma$ parameter of the optimum distributions associated with the classical and generalized mean constraints.
General properties of nonnegativity, minimum and convexity are then given in Proposition 11. We also show that the two entropy optimization problems are related and that the functionals $F^{(\cdot)}_\alpha(m)$ obey a special symmetry. Finally, we define a divergence in the space of possible means.

Property 4. Partition functions of successive exponents are linked by
$$Z_{\nu+1}(\gamma,\bar x) = E_{\nu+1-k}\left[(\gamma(x-\bar x)+1)^k\right] Z_{\nu+1-k}(\gamma,\bar x). \tag{12}$$
An interesting particular case is $k = 1$:
$$Z_{\nu+1}(\gamma,\bar x) = E_\nu[\gamma(x-\bar x)+1]\,Z_\nu(\gamma,\bar x). \tag{13}$$
This is easily checked by direct calculation. As a direct consequence, we may also observe that, for $\gamma \neq 0$, $Z_{\nu+1}(\gamma,\bar x) = Z_\nu(\gamma,\bar x)$ if and only if $\bar x = E_\nu[X]$. When $\bar x$ is a fixed parameter $m$, this is only true for a special value $\gamma^*$ such that $E_\nu[X] = m$.

Now, using (13) in Property 4, it is possible to give the expression of the Rényi divergence associated with the distribution (5), and in particular with the solutions $P_C$ and $P_G$ of problems (4):

Property 5. The Rényi information divergence associated with the optimum distributions (5) of Theorem 1 is
(C) $D_\alpha(P\|Q) = -\log Z_\xi(\gamma,\bar x) = -\log Z_{\xi+1}(\gamma,\bar x)$, and
(G) $D_\alpha(P\|Q) = -\log Z_{-\xi}(\gamma,\bar x) = -\log Z_{-(\xi+1)}(\gamma,\bar x)$.

Proof. The Rényi divergence associated with (5) writes
$$D_\alpha(P\|Q) = \frac{1}{\alpha-1}\log\int P(x)^\alpha Q(x)^{1-\alpha}\,dx = \frac{1}{\alpha-1}\log\int (1+\gamma(x-\bar x))^{\alpha\nu}\,Q(x)\,dx - \frac{\alpha}{\alpha-1}\log Z_\nu(\gamma,\bar x),$$
which simply reduces to
$$D_\alpha(P\|Q) = \frac{1}{\alpha-1}\log Z_{\alpha\nu}(\gamma,\bar x) - \frac{\alpha}{\alpha-1}\log Z_\nu(\gamma,\bar x).$$
(C) On the one hand, if $\nu = \xi = \frac{1}{\alpha-1}$, then $\alpha\nu = \frac{\alpha}{\alpha-1} = \xi+1$, and
$$D_\alpha(P\|Q) = \frac{1}{\alpha-1}\log Z_{\xi+1}(\gamma,\bar x) - \frac{\alpha}{\alpha-1}\log Z_\xi(\gamma,\bar x).$$
Therefore, when $\bar x = E_\xi[X]$, (13) gives $Z_{\xi+1}(\gamma,\bar x) = Z_\xi(\gamma,\bar x)$, and it simply remains $D_\alpha(P\|Q) = -\log Z_\xi(\gamma,\bar x) = -\log Z_{\xi+1}(\gamma,\bar x)$.
(G) On the other hand, if $\nu = -\xi = \frac{1}{1-\alpha}$, then $\alpha\nu = \frac{\alpha}{1-\alpha} = -\xi-1$, and
$$D_\alpha(P\|Q) = \frac{1}{\alpha-1}\log Z_{-(\xi+1)}(\gamma,\bar x) - \frac{\alpha}{\alpha-1}\log Z_{-\xi}(\gamma,\bar x).$$
When $\bar x = E_{-(\xi+1)}[X]$, we have $Z_{-\xi}(\gamma,\bar x) = Z_{-(\xi+1)}(\gamma,\bar x)$ according to (13), and it remains $D_\alpha(P\|Q) = -\log Z_{-\xi}(\gamma,\bar x) = -\log Z_{-(\xi+1)}(\gamma,\bar x)$.

Since the Rényi information divergence of the distributions (5) is simply the opposite of the log-partition function, it will be useful to examine the behaviour of the partition function with respect to the parameter $\gamma$. The following proposition gives the expression of the derivative of the partition function.

Proposition 6. For the partition function (6) with domain of definition $D$, the derivative with respect to $\gamma$ of the partition function with characteristic exponent $\nu$ is given by
$$\frac{d}{d\gamma}Z_\nu(\gamma,\bar x) = \nu\left(E_{\nu-1}[x-\bar x] - \gamma\frac{d\bar x}{d\gamma}\right) Z_{\nu-1}(\gamma,\bar x) \tag{14}$$
if (a) the domain $D$ does not depend on $\gamma$, or (b) on subsets of $\gamma$ such that the domain increment $\delta D$ associated with the variation $\delta\gamma$ remains empty, or (c) for $\nu > 0$ in the continuous case or $\nu > 1$ in the discrete case.

Proof. See Appendix B.

Using this proposition on the derivative of the partition function, and Property 4 on the link between partition functions of successive exponents, we readily have:

Property 7. If $\bar x = E_{\nu-1}[X]$, then, under the same conditions as in Proposition 6,
$$\frac{d}{d\gamma}\log Z_\nu(\gamma,\bar x) = -\gamma\nu\frac{d\bar x}{d\gamma} \tag{15}$$
and
$$\frac{d}{d\bar x}\log Z_\nu(\gamma,\bar x) = -\gamma\nu. \tag{16}$$
This is immediately checked using (13) and (14) with $\bar x = E_{\nu-1}[X]$. It is now interesting to consider the special case where $\bar x$ is a fixed value, say $m$. Then it is immediate to check that the extrema of the function $\log Z_\nu(\gamma,m)$ occur for $\gamma^*$ such that $m = E_{\nu-1}[X]$:

Property 8. If $\bar x$ is a fixed value $m$, then
$$\left.\frac{d}{d\gamma}\log Z_\nu(\gamma,m)\right|_{\gamma=\gamma^*} = 0 \tag{17}$$
if and only if $\gamma^*$ is such that $m = E_{\nu-1}[X]$.
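Property 4, Proposition 6 and Property 8 can be checked numerically on a small discrete reference measure. For Proposition 6, $\bar x$ is held fixed, so that $d\bar x/d\gamma = 0$. The sketch assumes an illustrative four-point $Q$ and parameter values for which every support point stays in $D$, so condition (a) of Proposition 6 holds. It locates $\gamma^*$ by plain bisection on $E_{\nu-1}[X] - m$, which is our choice of root finder.

```python
import math

SUPPORT = [0.0, 1.0, 2.0, 3.0]
Q = [0.1, 0.4, 0.3, 0.2]  # illustrative discrete reference measure


def z(nu, gamma, xbar):
    """Partition function (6); assumes every support point is in D (positive base)."""
    return sum((1 + gamma * (x - xbar)) ** nu * qx for x, qx in zip(SUPPORT, Q))


def mean(nu, gamma, xbar):
    """E_nu[X] under the distribution (5)."""
    return sum(x * (1 + gamma * (x - xbar)) ** nu * qx
               for x, qx in zip(SUPPORT, Q)) / z(nu, gamma, xbar)


def dz_dgamma_fd(nu, gamma, xbar, h=1e-6):
    """Central finite difference of Z_nu with respect to gamma, xbar held fixed."""
    return (z(nu, gamma + h, xbar) - z(nu, gamma - h, xbar)) / (2 * h)


def gamma_star(nu, m, lo, hi, iters=200):
    """Bisection for gamma with E_{nu-1}[X] = m (assumes a sign change on [lo, hi])."""
    f = lambda g: mean(nu - 1, g, m) - m
    flo = f(lo)
    for _ in range(iters):
        mid = 0.5 * (lo + hi)
        fmid = f(mid)
        if (fmid > 0) == (flo > 0):
            lo, flo = mid, fmid
        else:
            hi = mid
    return 0.5 * (lo + hi)


if __name__ == "__main__":
    nu, gamma, xbar = 2.5, 0.2, 1.2
    # Eq. (13): Z_{nu+1} = E_nu[gamma (x - xbar) + 1] Z_nu
    print(z(nu + 1, gamma, xbar),
          (1 + gamma * (mean(nu, gamma, xbar) - xbar)) * z(nu, gamma, xbar))
    # Eq. (14) with dxbar/dgamma = 0
    print(dz_dgamma_fd(nu, gamma, xbar),
          nu * (mean(nu - 1, gamma, xbar) - xbar) * z(nu - 1, gamma, xbar))
    # Property 8: log Z_nu(., m) is stationary where E_{nu-1}[X] = m
    m, h = 1.2, 1e-6
    g = gamma_star(nu, m, -0.5, 0.0)
    print(g, (math.log(z(nu, g + h, m)) - math.log(z(nu, g - h, m))) / (2 * h))
```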
Property 8 is important because it provides an easy way to find the value of the parameter $\gamma$ of the optimum distributions (5) that solve the maximum entropy problems (4).

Proposition 9. The values $\gamma^*$ of the parameter $\gamma$ of the optimum distributions that solve the maximum entropy problems (4) are the minimum of the maximizers of
$$D_C(\gamma) = -\log Z_{\xi+1}(\gamma,m), \tag{18}$$
$$D_G(\gamma) = -\log Z_{-\xi}(\gamma,m), \tag{19}$$
where the two partition functions involved are convex, possibly on several well-defined intervals. Then the entropy functionals $F^{(\cdot)}_\alpha$ are simply given by
$$F^{(C\ \mathrm{resp.}\ G)}_\alpha(m) = D_{C\ \mathrm{resp.}\ G}(\gamma^*). \tag{20}$$

Proof. Indeed, Theorem 1 and its corollary indicate that the solution for the classical constraint (C) is obtained for $\bar x = m = E_\xi[X]$, and for $\bar x = m = E_{-\xi-1}[X]$ for the generalized constraint (G). Then, by Property 8, it suffices to look for the extrema of $D_C(\gamma) = -\log Z_{\xi+1}(\gamma,m)$ in the first case, or of $D_G(\gamma) = -\log Z_{-\xi}(\gamma,m)$ in the second case. Under conditions of derivation similar to those of Proposition 6, the second derivative of the partition function with respect to $\gamma$ writes
$$\frac{d^2 Z_\nu(\gamma,m)}{d\gamma^2} = \nu(\nu-1)\int_D (x-m)^2\,[1+\gamma(x-m)]^{\nu-2}\,Q(x)\,dx \tag{21}$$
$$= \nu(\nu-1)\,E_{\nu-2}\left[(X-m)^2\right] Z_{\nu-2}(\gamma,m). \tag{22}$$
For $\nu = \xi+1$ and $\nu = -\xi$, the factor $\nu(\nu-1)$ reduces to $\frac{\alpha}{(\alpha-1)^2}$. Since $\alpha$ is positive, the second derivative is always positive, and the partition functions $Z_{\xi+1}(\gamma,m)$ and $Z_{-\xi}(\gamma,m)$ are convex on their domain of definition. On these domains, the functionals (18) and (19) are then unimodal and their extrema are maxima. In the discrete case and for $\nu < 0$, $Z_\nu(\gamma,m)$ has singularities at all $\gamma = \frac{1}{m-k}$, where $k$ is an integer in the support of the distribution.
Therefore, $Z_\nu(\gamma,m)$ is only defined on segments $\left(\frac{1}{m-k}, \frac{1}{m-k-1}\right)$ for $m \notin (k, k+1)$, and $\left(\frac{1}{m-k-1}, \frac{1}{m-k}\right)$ for $m \in (k, k+1)$. In such a case, $-\log Z_\nu(\gamma,m)$ may present several maxima. The situation $\nu < 0$ occurs for the classical constraint when $\alpha \in (0,1)$ (since the index $\xi+1 = \alpha/(\alpha-1)$ is then negative), and for the generalized constraint when $\alpha > 1$. An example of the functional $D_C(\gamma)$ with $\alpha = 0.5$ in the case of a Poisson distribution is reported in Fig. 6. In the discrete case with $\nu > 0$, or in the continuous case, there is a single maximum. Finally, Property 5 shows that the Rényi information divergence of the optimum distributions is precisely the opposite of the log-partition function. The values of the functionals (18) and (19) at their optima $\gamma^*$, where $\bar x = m$, are therefore precisely the values of the entropy functionals $F^{(C)}_\alpha(m)$ and $F^{(G)}_\alpha(m)$.

Remark 10. When $\alpha$ tends to 1, the parameter $\tilde\gamma^*$ is thus the maximizer of (11), and we obtain
$$\lim_{\alpha\to1}F^{(\cdot)}_\alpha(\bar x) = \sup_{\tilde\gamma}\left[\tilde\gamma\bar x - \log\int_D e^{\tilde\gamma x}\,Q(x)\,dx\right], \tag{23}$$
that is, the Cramér transform of $Q(x)$.

With the help of these different results, it is now possible to characterize the entropy functionals more precisely.

Proposition 11. The entropy functionals $F^{(C)}_\alpha(m)$ and $F^{(G)}_\alpha(m)$ are nonnegative, with a unique minimum at $m_Q$, the mean of $Q$, and $F^{(\cdot)}_\alpha(m_Q) = 0$. Furthermore, $F^{(C)}_\alpha(m)$ is strictly convex for $\alpha \in [0,1]$.

Proof. The Rényi information divergence $D_\alpha(P\|Q)$ is always nonnegative, and equal to zero for $P = Q$. Since the functionals $F^{(\cdot)}_\alpha(\bar x)$ are defined as the minimum of $D_\alpha(P\|Q)$, they are always nonnegative. If $P = Q$, we also have $P^* = Q$ and $m = E_P[X] = E_{P^*}[X] = m_Q$. Therefore $F^{(\cdot)}_\alpha(m_Q) = 0$ and $m_Q$ is a global minimum. From (16), we have $\frac{d}{d\bar x}\log Z_{\nu+1}(\gamma,\bar x) = -\gamma(\nu+1)$. Then the functionals $F^{(\cdot)}_\alpha(\bar x)$ can only be minimum if $\gamma = 0$, and the corresponding optimum probability distribution is simply $P = Q$, with $D_\alpha(Q\|Q) = 0$. Therefore $F^{(\cdot)}_\alpha(\bar x)$ has a unique minimum at $\bar x = m_Q$, the mean of $Q$, and $F^{(\cdot)}_\alpha(m_Q) = 0$.

Finally, we examine the convexity of $F^{(C)}_\alpha(m)$ for $\alpha \in [0,1]$. Let $P_1$ and $P_2$ be the distributions that achieve the minimization of $D_\alpha(P\|Q)$ subject to the constraints $x_1 = E_P[X]$ and $x_2 = E_P[X]$, respectively. Then $F^{(C)}_\alpha(x_1) = D_\alpha(P_1\|Q)$ and $F^{(C)}_\alpha(x_2) = D_\alpha(P_2\|Q)$. In the same way, denote $F^{(C)}_\alpha(\mu x_1 + (1-\mu)x_2) = D_\alpha(\hat P\|Q)$, where $\hat P$ is the optimum distribution with mean $\mu x_1 + (1-\mu)x_2$. The distributions $\hat P(u)$ and $\mu P_1(u) + (1-\mu)P_2(u)$ have the same mean $\mu x_1 + (1-\mu)x_2$, so that the minimality of $\hat P$ and the convexity of $D_\alpha(P\|Q)$ in $P$, which holds for $\alpha \in [0,1]$, give
$$D_\alpha(\hat P\|Q) \le D_\alpha(\mu P_1 + (1-\mu)P_2\|Q) \le \mu D_\alpha(P_1\|Q) + (1-\mu)D_\alpha(P_2\|Q),$$
that is, $F^{(C)}_\alpha(\mu x_1 + (1-\mu)x_2) \le \mu F^{(C)}_\alpha(x_1) + (1-\mu)F^{(C)}_\alpha(x_2)$, and $F^{(C)}_\alpha(x)$ is a convex function.

Up to now, the two optimization problems have been considered in parallel. But there is a special symmetry that relates the solutions of the minimization of the Rényi divergence subject to classical and to generalized mean constraints. It yields a simple relationship between the entropy functionals $F^{(C)}_\alpha(x)$ and $F^{(G)}_\alpha(x)$. Let us consider our original Rényi divergence minimization problem, on the one hand with index $\alpha_1$ and subject to a classical mean constraint $m$, and on the other hand with index $\alpha_2$ and subject to a generalized mean constraint $m$. The associated functionals, by Proposition 9, are $D_C(\gamma) = -\log Z_{\xi_1+1}(\gamma,m)$ and $D_G(\gamma) = -\log Z_{-\xi_2}(\gamma,m)$.
Thus, these functions are pointwise equal if $\xi_1 + 1 = -\xi_2$, that is, if the indexes $\alpha_1$ and $\alpha_2$ satisfy $\alpha_1 = 1/\alpha_2$. In this case, the optimum parameters $\gamma$ are of course equal, and the two optimization problems have the same optimum value. Because of the pointwise equality of the functions $D_C(\gamma)$ and $D_G(\gamma)$, the associated divergences are clearly equal at the optimum, that is $D_{\alpha_1}(P_C\|Q) = D_{\alpha_2}(P_G\|Q)$. Besides, this is easily checked in the general case: for the escort distribution $P^*(x)$ in (3), we always have the equality $D_{1/\alpha}(P^*\|Q) = D_\alpha(P\|Q)$. Hence, the minimization of the $\alpha$ Rényi divergence subject to the generalized mean constraint is exactly equivalent to the minimization of the $1/\alpha$ Rényi divergence subject to the classical mean constraint:
$$\inf_{P} D_\alpha(P\|Q) \ \text{ s.t. } E_{P^*}[X] = m \quad = \quad \inf_{P^*} D_{1/\alpha}(P^*\|Q) \ \text{ s.t. } E_{P^*}[X] = m, \tag{24}$$
so that generalized and classical mean constraints can always be swapped, provided the index $\alpha$ is changed into $1/\alpha$, as was argued in [31, 28]. Equality (24) thus enables us to complete the characterization of the entropy functionals $F^{(C)}_\alpha(m)$ and $F^{(G)}_\alpha(m)$:

Property 12. The entropy functionals $F^{(C)}_\alpha(m)$ and $F^{(G)}_\alpha(m)$ admit the symmetry $F^{(G)}_\alpha(x) = F^{(C)}_{1/\alpha}(x)$. Besides, $F^{(C)}_\alpha(m)$ is strictly convex for $\alpha \in [0,1]$ and $F^{(G)}_\alpha(m)$ is strictly convex for $\alpha \in [1,+\infty)$.

Interestingly, it is also possible to define a divergence in the object space, that is, a kind of generalized distance between two "objects". Such divergences may be used, for instance, in clustering [30]. The objects are here considered as generalized means of distributions with minimum divergence to a reference measure $Q(x)$.
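Proposition 9 and the $\alpha \leftrightarrow 1/\alpha$ symmetry of Property 12 can be illustrated numerically. The sketch below uses the Bernoulli reference $Q(x) = \beta\delta(x) + (1-\beta)\delta(x-1)$ of section 3.3, whose partition function is (35). It maximizes $D_C(\gamma)$ or $D_G(\gamma)$ over the interval where both bases $1-\gamma m$ and $1+\gamma(1-m)$ stay positive. The optimizer is a golden-section search, which is our choice since the proof of Proposition 9 only guarantees unimodality. The helper names are hypothetical.

```python
import math


def z_bernoulli(nu, gamma, m, beta):
    """Partition function (35) for Q = beta*delta(x) + (1-beta)*delta(x-1)."""
    return beta * (1 - gamma * m) ** nu + (1 - beta) * (1 + gamma * (1 - m)) ** nu


def golden_max(f, lo, hi, tol=1e-10):
    """Golden-section search for the maximizer of a unimodal f on [lo, hi]."""
    r = (math.sqrt(5) - 1) / 2
    a, b = lo, hi
    c, d = b - r * (b - a), a + r * (b - a)
    fc, fd = f(c), f(d)
    while b - a > tol:
        if fc > fd:  # maximizer lies in [a, d]
            b, d, fd = d, c, fc
            c = b - r * (b - a)
            fc = f(c)
        else:  # maximizer lies in [c, b]
            a, c, fc = c, d, fd
            d = a + r * (b - a)
            fd = f(d)
    return 0.5 * (a + b)


def entropy_functional(alpha, m, beta, kind):
    """Return (F_alpha(m), gamma*) for kind 'C' or 'G' by maximizing (18) or (19)."""
    xi = 1.0 / (alpha - 1.0)
    nu = xi + 1.0 if kind == "C" else -xi
    eps = 1e-9
    # keep both bases 1 - gamma*m and 1 + gamma*(1 - m) strictly positive
    lo, hi = -1.0 / (1.0 - m) + eps, 1.0 / m - eps
    objective = lambda g: -math.log(z_bernoulli(nu, g, m, beta))
    g_star = golden_max(objective, lo, hi)
    return objective(g_star), g_star


if __name__ == "__main__":
    beta, m = 0.5, 0.3
    for alpha in (0.5, 2.0, 3.0):
        f_g, _ = entropy_functional(alpha, m, beta, "G")
        f_c, _ = entropy_functional(1.0 / alpha, m, beta, "C")
        print(alpha, f_g, f_c)  # Property 12: the two columns should agree
```

The two printed columns should agree, as Property 12 predicts. They can also be compared with the closed forms (38) and (39) derived in section 3.3.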
Proposition 13. If $P_1$ and $P_2$ are two distributions in (5) with exponent $\nu = -\xi$ (generalized constraint), with $P_2 \ll P_1$, and with respective parameters $\gamma_1, \gamma_2$ and means $m_1, m_2$, then
$$F^{(G)}_\alpha(m_2,m_1) = D_\alpha(P_2\|P_1) = F^{(G)}_\alpha(m_2) - F^{(G)}_\alpha(m_1) + \frac{1}{\alpha-1}\log\left(1 - (\alpha-1)\frac{dF^{(G)}_\alpha}{dm}(m_1)\,(m_2-m_1)\right), \tag{25}$$
and $F^{(G)}_\alpha(m_2,m_1) \ge 0$, with equality if and only if $m_2 = m_1$.

Proof. The result is obtained by simple computations. First, we have
$$D_\alpha(P_2\|P_1) = \frac{1}{\alpha-1}\log\int\frac{[1+\gamma_2(x-m_2)]^{\frac{\alpha}{1-\alpha}}}{Z_{-\xi}(\gamma_2,m_2)^\alpha}\,\frac{1+\gamma_1(x-m_1)}{Z_{-\xi}(\gamma_1,m_1)^{1-\alpha}}\,Q(x)\,dx,$$
which can be rewritten as
$$D_\alpha(P_2\|P_1) = \frac{1}{1-\alpha}\Big(\alpha\log Z_{-\xi}(\gamma_2,m_2) + (1-\alpha)\log Z_{-\xi}(\gamma_1,m_1) - \log Z_{-\xi-1}(\gamma_2,m_2)\Big) \tag{26}$$
$$\qquad + \frac{1}{\alpha-1}\log\left(1 + \gamma_1\int(x-m_1)\,\frac{[1+\gamma_2(x-m_2)]^{-\xi-1}}{Z_{-\xi-1}(\gamma_2,m_2)}\,Q(x)\,dx\right). \tag{27}$$
In the first line, we have $Z_{-(\xi+1)}(\gamma_2,m_2) = Z_{-\xi}(\gamma_2,m_2)$ by Property 4, eq. (13), and we recognize from Proposition 9 that $F^{(G)}_\alpha(m) = -\log Z_{-\xi}(\gamma,m)$. In the second line, the integral reduces to $(m_2-m_1)$ since $m_2$ is the generalized mean of the distribution $P_2$. Finally, $\gamma_1$ can be expressed through the derivative of the log-partition function, as stated by (16) in Property 7: $\gamma_1 = -(\alpha-1)\frac{dF^{(G)}_\alpha}{dm}(m_1)$. By definition, $F^{(G)}_\alpha(m_2,m_1)$ is the Rényi information divergence $D_\alpha(P_2\|P_1)$, which is always greater than or equal to zero, with equality if and only if $P_2 = P_1$, which implies $m_2 = m_1$.

For $\alpha \to 1$, $F^{(G)}_\alpha(m_2,m_1)$ reduces to a standard Bregman divergence. Indeed, using $\log(1-x) \simeq -x$, we simply have
$$\lim_{\alpha\to1}F^{(G)}_\alpha(m_2,m_1) = F(m_2) - F(m_1) - \frac{dF}{dm}(m_1)\,(m_2-m_1),$$
where $F = \lim_{\alpha\to1}F^{(G)}_\alpha$ is the functional (2).

3. Examples of entropy functionals

We now examine four special cases for the reference measure $Q(x)$. A uniform and an exponential distribution model systems with continuous states; a Bernoulli (two-level) and a Poisson distribution may model systems with discrete states. The minima of the Rényi divergence, that is, the entropies $F^{(C\text{ or }G)}_\alpha(x)$, are attained for the values $\gamma^*$ that maximize the functionals $D_C(\gamma)$ and $D_G(\gamma)$ of Proposition 9. This involves the computation of $Z_\nu(\gamma,m)$ for all reference measures $Q$ considered, and the resolution of $\frac{d}{d\gamma}Z_{\nu+1}(\gamma,m) = 0$. The case $\alpha = 1$ is obtained in the limit $|\nu| \to +\infty$, since $|\xi| \to +\infty$ when $\alpha$ tends to 1. Results of numerical evaluations for varying $\alpha$ are provided.

3.1. Uniform reference

Let us first consider the case of the uniform reference $Q(x)$ on $[0,1]$. The partition function is given by $Z_\nu(\gamma,m) = \int_D[\gamma(x-m)+1]^\nu\,dx$, where the domain $D$ is defined by $D = D_Q \cap D_\gamma$, with $D_Q = \{x : x \in [0,1]\}$ and $D_\gamma = \{x : \gamma(x-m)+1 \ge 0\}$.

Fig. 1. Entropy functional $F^{(C)}_\alpha(x)$ for a uniform reference measure and $\alpha \in (0,1)$ (curves for $\alpha$ = 0.98, 0.45, 0.3, 0.1; $x \in [0,1]$).
Fig. 2. Entropy functional $F^{(G)}_\alpha(x)$ for a uniform reference measure and $\alpha \in (0,1)$ (curves for $\alpha$ = 0.01, 0.6, 0.98; $x \in [0,1]$).

Computation of the partition function in the different domains, together with the fact that $m \in [0,1]$, leads to
$$Z_\nu(\gamma,m) = \frac{1}{\gamma(1+\nu)}\left[(\gamma-\gamma m+1)^{\nu+1}\,U\!\left(\gamma-\tfrac{1}{m-1}\right) - (1-\gamma m)^{\nu+1}\,U\!\left(\tfrac{1}{m}-\gamma\right)\right]$$
for all $\gamma$ if $\nu \ge 0$, for $\gamma \in \left(\frac{1}{m-1}, \frac{1}{m}\right)$ if $\nu < 0$, and $Z_\nu(\gamma,m) = +\infty$ otherwise, where $U$ denotes the Heaviside distribution: $U(t) = 0$ for $t < 0$ and $U(t) = 1$ for $t > 0$.
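The closed form above can be cross-checked against direct quadrature of $Z_\nu(\gamma,m) = \int_D[\gamma(x-m)+1]^\nu dx$ on $D = D_Q \cap D_\gamma$. The sketch below uses a composite Simpson rule, which is our choice and not the paper's. It writes the Heaviside factors as explicit conditions, so no non-integer power of a negative number is ever evaluated. It assumes $0 < m < 1$ and $\nu \neq -1$. The quadrature is only meant for cases where the integrand stays bounded on $D$.

```python
import math


def z_uniform_closed(nu, gamma, m):
    """Closed form of Z_nu(gamma, m) for the uniform reference on [0, 1], 0 < m < 1."""
    if gamma == 0:
        return 1.0  # total mass of Q on [0, 1]
    if nu < 0 and not (1 / (m - 1) < gamma < 1 / m):
        return math.inf
    # Heaviside factors U(gamma - 1/(m-1)) and U(1/m - gamma) written as conditions
    upper = (gamma - gamma * m + 1) ** (nu + 1) if gamma > 1 / (m - 1) else 0.0
    lower = (1 - gamma * m) ** (nu + 1) if gamma < 1 / m else 0.0
    return (upper - lower) / (gamma * (1 + nu))


def z_uniform_quad(nu, gamma, m, n=2000):
    """Composite Simpson rule on D = [0, 1] intersected with {gamma (x - m) + 1 >= 0}."""
    a, b = 0.0, 1.0
    if gamma > 0:
        a = max(a, m - 1 / gamma)
    elif gamma < 0:
        b = min(b, m - 1 / gamma)
    f = lambda x: max(1 + gamma * (x - m), 0.0) ** nu  # bounded-integrand cases only
    h = (b - a) / n
    s = f(a) + f(b) + sum((4 if i % 2 else 2) * f(a + i * h) for i in range(1, n))
    return s * h / 3


if __name__ == "__main__":
    for nu, gamma in [(2.0, 5.0), (3.0, -4.0), (-1.5, 1.0)]:
        print(nu, gamma, z_uniform_closed(nu, gamma, 0.3), z_uniform_quad(nu, gamma, 0.3))
```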
The first derivative of the partition function is given by
$$\frac{d}{d\gamma}Z_\nu(\gamma,m) = -\frac{\nu\gamma(m-1)+1}{\gamma^2(\nu+1)}\,(\gamma(1-m)+1)^\nu\,U\!\left(\gamma-\tfrac{1}{m-1}\right) + \frac{\nu\gamma m+1}{\gamma^2(\nu+1)}\,(1-\gamma m)^\nu\,U\!\left(\tfrac{1}{m}-\gamma\right). \tag{28}$$
We next have to look for the expression of the entropy functionals $F^{(\cdot)}_\alpha(x)$. Unfortunately, no analytical solution can be exhibited here, but the two functionals can still be evaluated numerically. For the classical mean constraint (C), we can check that $F^{(C)}_\alpha(x)$ is a family of convex functions on $(0,1)$, minimum at the mean of the reference measure $Q$, as indicated in Proposition 11. In the same way, we can check that, for the generalized mean constraint (G), $F^{(G)}_\alpha(x)$ is a family of nonnegative functions on $(0,1)$, also minimum at the mean of the reference measure $Q$. The entropies $F^{(C)}_\alpha(x)$ and $F^{(G)}_\alpha(x)$ were evaluated numerically and are given in Figs. 1 and 2 for $\alpha \in (0,1)$. Of course, the $\alpha \leftrightarrow 1/\alpha$ duality given in Property 12 enables us to extend these two functionals to $\alpha > 1$. It is apparent that the minimization of $F^{(\cdot)}_\alpha(x)$ under some constraint would automatically lead to a solution in $(0,1)$. Moreover, the parameter $\alpha$ may serve to tune the curvature of the functional and the degree of penalization of the bounds.

3.2. Exponential reference

The exponential probability density function is $Q(x) = \beta e^{-\beta x}$, for $x \ge 0$ and $\beta > 0$. The partition function is given by
$$Z_\nu(\gamma,m) = \beta\int_D[\gamma(x-m)+1]^\nu e^{-\beta x}\,dx, \tag{29}$$
where $D = \left\{x : x \ge \max\left(0, m-\tfrac{1}{\gamma}\right) \text{ if } \gamma > 0, \text{ or } x \in \left[0, m-\tfrac{1}{\gamma}\right] \text{ if } \gamma < 0\right\}$ ensures that the integrand $[\gamma(x-m)+1]$ is nonnegative and the integral finite.
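The partition function (29) can also be evaluated by direct quadrature on the domain $D$ just defined. This gives a convenient check on closed forms such as (30), and a fallback when they are awkward to use. In the sketch below, the composite Simpson rule and the truncation of the infinite range at $a + 60/\beta$ are our own assumptions, and `z_exponential_quad` is a hypothetical name.

```python
import math


def z_exponential_quad(nu, gamma, m, beta, n=20000, tail=60.0):
    """Eq. (29) by composite Simpson quadrature over the domain D of the text."""
    if gamma > 0:
        a = max(0.0, m - 1.0 / gamma)
        b = a + tail / beta  # truncation of [a, +inf): an assumption of this sketch
    elif gamma < 0:
        a, b = 0.0, m - 1.0 / gamma
    else:
        return 1.0  # gamma = 0: Z is the total mass of Q
    f = lambda x: beta * max(1.0 + gamma * (x - m), 0.0) ** nu * math.exp(-beta * x)
    h = (b - a) / n
    s = f(a) + f(b) + sum((4 if i % 2 else 2) * f(a + i * h) for i in range(1, n))
    return s * h / 3


if __name__ == "__main__":
    # gamma > 1/m, so that D = [m - 1/gamma, +inf)
    print(z_exponential_quad(2, 4.0, 0.5, 1.0))
```

For these parameters, the first case of (30) gives $32e^{-1/4}$, so the printed value should be close to that number.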
The evaluation of $Z_\nu(\gamma,m)$ on the different domains gives
$$Z_\nu(\gamma,m) = \begin{cases} e^{-\beta\frac{\gamma m-1}{\gamma}}\left(\frac{\gamma}{\beta}\right)^\nu\Gamma(\nu+1) & \text{if } \gamma > \frac{1}{m} > 0,\ \nu \ge 0,\\[4pt] e^{-\beta\frac{\gamma m-1}{\gamma}}\left(\frac{\gamma}{\beta}\right)^\nu\Gamma\!\left(\nu+1, \beta\frac{1-\gamma m}{\gamma}\right) & \text{if } \frac{1}{m} > \gamma > 0,\\[4pt] e^{-\beta\frac{\gamma m-1}{\gamma}}\left(\frac{\beta}{\gamma}\right)^{-\nu}\left[\Gamma\!\left(\nu+1, \beta\frac{1-\gamma m}{\gamma}\right) - \Gamma(\nu+1)\right] & \text{if } \gamma < 0 < \frac{1}{m},\ \nu \ge 0, \end{cases} \tag{30}$$
and $Z_\nu(\gamma,m) = +\infty$ for $\gamma < 0$ or $\gamma > \frac{1}{m}$ if $\nu < 0$.

Let us now examine the behavior of the entropies $F^{(\cdot)}_\alpha(x)$ when $\alpha \to 1$. This amounts to studying $Z_\nu(\gamma,m)$ and the maximum of $-\log Z_\nu(\gamma,m)$ when $|\nu| \to +\infty$. The simplest derivation is as follows. As in Remark 3, let $\gamma = \tilde\gamma/\nu$, so that $(1+\gamma(x-m))^\nu \sim \exp(\tilde\gamma(x-m))$. In this case, one easily obtains
$$\log Z_\nu(\tilde\gamma,m) \simeq \log\beta - \tilde\gamma m - \log(\beta-\tilde\gamma), \tag{31}$$
whose derivative is equal to zero for
$$\tilde\gamma^* = \beta - \frac{1}{m}. \tag{32}$$
We shall also note that if $\nu < 0$, the sign of $\gamma = \tilde\gamma/\nu$ is the sign of $(1-\beta m)$. Since $Z_\nu(\gamma,m)$ is only defined for $\gamma > 0$ when $\nu < 0$, we only have a solution for $m < 1/\beta$. Indeed, for $\gamma > 0$ and $\nu < 0$, the factor $(1+\gamma(x-m))^\nu$ is decreasing, and consequently the mean of the optimum distribution (5) cannot be greater than the mean of the reference distribution, $m_Q = E_Q[X] = 1/\beta$. With the optimum value $\tilde\gamma^*$, the log-partition function becomes
$$\log Z_\nu(\gamma^*,m) \simeq -(\beta m-1) + \log(\beta m) \quad (\forall m \text{ if } \nu\to+\infty;\ m < 1/\beta \text{ if } \nu\to-\infty). \tag{33}$$
Finally, we obtain
$$F^{(C)}_{\alpha\to1}(x) = -\log Z_{\xi+1}(\gamma^*,x) = (\beta x-1) - \log(\beta x), \tag{34}$$
for $x < 1/\beta$ when $\alpha$ tends to 1 from below, and for all $x$ when $\alpha$ tends to 1 from above. By the duality Property 12, this expression is also the limit form of the functional $F^{(G)}_\alpha(x)$. As expected, the functional $(\beta x-1) - \log(\beta x)$ is strictly convex, positive, and zero for $x = 1/\beta$, the mean of the exponential distribution. It has been employed in speech processing and is called the Itakura-Saito entropy functional.
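The limit (34) can be reproduced numerically from Remark 10. For the exponential reference, $\log\int_0^\infty e^{\tilde\gamma x}\beta e^{-\beta x}dx = \log\frac{\beta}{\beta-\tilde\gamma}$ when $\tilde\gamma < \beta$, so the Cramér transform (23) is a one-dimensional concave maximization. The sketch below performs it by golden-section search over the bracket $[\beta - 10^4, \beta)$, which is an arbitrary choice of ours but sufficient for moderate $x$. It should recover the Itakura-Saito value $(\beta x - 1) - \log(\beta x)$, with $\tilde\gamma^* = \beta - 1/x$ as in (32).

```python
import math


def golden_max(f, lo, hi, tol=1e-10):
    """Golden-section search for the maximizer of a unimodal f on [lo, hi]."""
    r = (math.sqrt(5) - 1) / 2
    a, b = lo, hi
    c, d = b - r * (b - a), a + r * (b - a)
    fc, fd = f(c), f(d)
    while b - a > tol:
        if fc > fd:  # maximizer lies in [a, d]
            b, d, fd = d, c, fc
            c = b - r * (b - a)
            fc = f(c)
        else:  # maximizer lies in [c, b]
            a, c, fc = c, d, fd
            d = a + r * (b - a)
            fd = f(d)
    return 0.5 * (a + b)


def cramer_exponential(x, beta):
    """Cramér transform (23) of Q(u) = beta exp(-beta u), evaluated numerically.

    For gt < beta, the log of the integral of exp(gt u) Q(u) over u >= 0
    equals log(beta / (beta - gt)).
    """
    objective = lambda gt: gt * x - math.log(beta / (beta - gt))
    # bracket [beta - 1e4, beta): adequate for x well above 1e-4 (an assumption)
    gt_star = golden_max(objective, beta - 1e4, beta - 1e-12)
    return objective(gt_star), gt_star


if __name__ == "__main__":
    beta = 1.0
    for x in (0.25, 0.5, 1.0, 2.0):
        value, gt = cramer_exponential(x, beta)
        itakura_saito = (beta * x - 1) - math.log(beta * x)
        print(x, value, itakura_saito, gt)
```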
For β = 1 , it reduces to the so-called B ur g entr o py that is well-known in spectr um analysis. The entro py fun ctionals c an be ev aluated numeric ally . For in stance, F ( G ) α ( x ) is gi ven on Fig. 3 for α > 0 . It is a f amily of nonnegative functio ns, equal to zero for x = m Q = 1 / β , and co n vex for α ∈ [1 , + ∞ ) . 3.3. Bernoulli r efer en ce Let us no w consider the case of the Berno ulli measure Q ( x ) = β δ ( x ) + (1 − β ) δ ( x − 1) . Of course, the (g eneralized) mean of optimum distributions is somewhere in the in terval [0 , 1] . When γ is o utside o f the interval ( 1 m − 1 , 1 m ) , the probab ility distribution reduces to a pu re state — δ ( x ) o r δ ( x − 1) , and its (generalized) mean is 0 or 1 . Incor poration of the bounds into the domain dep ends on the sign of ν : for ν < 0 , Z ν ( γ , m ) di verges to + ∞ on the boun ds whereas it remains finite for ν > 0 . The expression of the partitio n function follows directly from the definition: Z ν ( γ , m ) = β (1 − γ m ) ν + (1 − β )(1 + γ (1 − m )) ν . (35) In contrast to the previous case, it is possible her e to o btain an explicit expression of the entropy function als for any α . Indeed , if p den otes the v alue of the optimum distribution at x = 1 , then the genera lized expectatio n is m = P 1 x =0 xP ( x ) α Q ( x ) 1 − α P 1 x =0 P ( x ) α Q ( x ) 1 − α = (1 − β ) 1 − α p α β 1 − α (1 − p ) α + (1 − β ) 1 − α p α (36) 9 0 0.5 1 1.5 2 2.5 3 0 1 2 3 4 5 6 x α =0.1 α =0.5 α =1 α =3 α =10 Fig. 3. Entropy funct ional F ( G ) α ( x ) for an exponentia l reference measure with β = 1 and α > 0 . By Property 12 it is also F ( C ) 1 /α ( x ) . and it is therefo re po ssible to e xpress p as a function of m : p = β 1 − α x 1 α ( β 1 − α x ) 1 α + ((1 − β ) 1 − α (1 − x )) 1 α . 
Now, since the Rényi information divergence is
$$D_\alpha(P\|Q) = \frac{1}{\alpha-1}\log\left[\beta^{1-\alpha}(1-p)^\alpha + (1-\beta)^{1-\alpha}p^\alpha\right], \tag{38}$$
it suffices to replace $p$ by the expression (37), which leads to
$$F^{(G)}_\alpha(m) = \frac{\alpha}{1-\alpha}\log\left[\beta^{1-\frac{1}{\alpha}}(1-m)^{\frac{1}{\alpha}} + (1-\beta)^{1-\frac{1}{\alpha}} m^{\frac{1}{\alpha}}\right]. \tag{39}$$
The case of the classical mean is even simpler: we have $m = p$, and $F^{(C)}_\alpha(m)$ is given by the divergence (38) with $p$ replaced by $m$. It is also interesting to note, and easy to check, that the $\alpha \leftrightarrow 1/\alpha$ duality of Property 12 links these two expressions: substituting $1/\alpha$ for $\alpha$ in (38) with $p = m$ gives exactly (39). The limit case $\alpha \to 1$ is easily derived using L'Hôpital's rule:
$$F^{(\cdot)}_{\alpha\to 1}(x) = x\ln\frac{x}{1-\beta} + (1-x)\ln\frac{1-x}{\beta}. \tag{40}$$
This expression is the celebrated Fermi-Dirac entropy, which is strictly convex, nonnegative, and equal to zero for $x = E_Q[X] = 1-\beta$, the mean $m_Q$ of the reference measure. Plots of the entropy functionals are given in Figs. 4 and 5 for $\alpha \in (0, 1)$ and $\beta = 1/2$. In both cases, we obtain a family of nonnegative functions, equal to zero at the mean of the reference measure. It can also be checked that $F^{(C)}_\alpha(x)$ is convex for $\alpha \in (0, 1]$.

Fig. 4. Entropy functional $F^{(C)}_\alpha(x)$ for a Bernoulli reference measure and $\alpha \in (0, 1)$ (curves for $\alpha$ = 0.98, 0.38 and 0.1).

Fig. 5. Entropy functional $F^{(G)}_\alpha(x)$ for a Bernoulli reference measure and $\alpha \in (0, 1)$ (curves for $\alpha$ = 0.98, 0.68, 0.3, 0.1 and 0.01).

3.4. Poisson reference

As a final example, let us consider the case of a Poisson measure $Q(x) = \frac{\mu^x}{x!}e^{-\mu}$, for $x \geq 0$. The domain is $D = D_Q \cap D_\gamma$, where $D_Q = \mathbb{N}$ (the nonnegative integers) and $D_\gamma = \{x : \gamma(x-m) + 1 \geq 0\}$. The partition function is given by
$$Z_\nu(\gamma, m) = \sum_{D} \left[\gamma(x-m) + 1\right]^\nu \frac{\mu^x}{x!} e^{-\mu}. \tag{41}$$
Three cases appear, according to the value of $\gamma$:
(a) if $\frac{1}{m} \geq \gamma \geq 0$, then $D$ reduces to $D_1 = \{x : x \in [0, +\infty)\}$;
(b) for $\gamma \geq \frac{1}{m}$, the domain is $D_2 = \left\{x : x \in \left[\left\lceil m - \frac{1}{\gamma}\right\rceil, +\infty\right)\right\}$;
(c) when $\gamma < 0$, $D = D_3 = \left\{x \in \left[0, \left\lfloor m - \frac{1}{\gamma}\right\rfloor\right]\right\}$.
In these expressions, $\lfloor x \rfloor$ denotes the floor function, which returns the largest integer less than or equal to $x$, and $\lceil x \rceil$ is the ceiling function, which returns the smallest integer not less than $x$.

Closed-form formulas cannot be derived in the general case, but only for an integer exponent $\nu$. When $\nu$ is not an integer, we have to resort to the series (41), possibly truncated for numerical computations. To save space, we only sketch the derivation in $D_1$:
$$Z_\nu(\gamma, m) = (1-\gamma m)^\nu e^{-\mu} \sum_{x=0}^{+\infty} (\theta x + 1)^\nu \frac{\mu^x}{x!} \tag{42}$$
with $\theta = \frac{\gamma}{1-\gamma m}$. In the series above, the ratio of successive terms, $\frac{(1+\theta x+\theta)^\nu}{(x+1)(1+\theta x)^\nu}\mu$, is a ratio of two completely factored polynomials. This indicates that, when $\nu$ is an integer, the series can be written as a generalized hypergeometric function. We then obtain $Z_\nu(\gamma, m) = (1-\gamma m)^\nu e^{-\mu}\, {}_{|\nu|}F_{|\nu|}(a, \dots, a;\, b, \dots, b;\, \mu)$, with $a = (1+\theta)/\theta$ and $b = 1/\theta$ for $\nu > 0$, or with $a = 1/\theta$ and $b = (1+\theta)/\theta$ for $\nu < 0$. The derivative with respect to $\gamma$ is
$$\frac{d}{d\gamma} Z_{\nu+1}(\gamma, m) = (1-\gamma m)^\nu e^{-\mu} (\nu+1) \sum_{x=0}^{+\infty} (x-m)(1+\theta x)^\nu \frac{\mu^x}{x!}, \tag{43}$$
which can also be expressed using hypergeometric functions. Formulas for the domains $D_2$ and $D_3$ also involve hypergeometric functions. With these formulas, or by direct evaluation of (41), the functionals $D_C(\gamma)$ and $D_G(\gamma)$ can be evaluated and maximized on their domains of definition in order to find the optimum value $\gamma^*$. Given the signs of $\nu$ and $\gamma$, and the supports $D_1$, $D_2$ and $D_3$, it is already possible to deduce that the solution $\gamma^*$ necessarily lies in a specific interval. Hence, for $\nu > 0$ (respectively $\nu < 0$), solutions associated with a constraint $m > \mu$ correspond to case (a) (resp. case (c)), and solutions for $m < \mu$ correspond to case (c) (resp. case (a)). The argument relies on the fact that if $P_i$ and $P_j$ are two optimum distributions with supports $D_i$ and $D_j$, with the same (generalized) mean but different parameters, then by Theorem 1 $D_\alpha(P_j\|Q) \geq D_\alpha(P_i\|Q)$ if $P_j$ is dominated by $P_i$.

In the case $m > \mu$, $\nu < 0$, the solution with minimum divergence is a distribution $P_3$ in case (c), and furthermore $D_\alpha(P_3\|Q) \to 0$. This can be seen as follows. Let $x \in D_3$ and $k = \lfloor m - 1/\gamma \rfloor$, so that $x < k+1$. Now let $\gamma = \frac{1}{m-k} + \epsilon$ with $\epsilon \in \left(0, \frac{1}{m-k-1} - \frac{1}{m-k}\right)$. Then, for small $\epsilon$, the mean of the distribution is given by
$$E_\nu[X] = \frac{1}{Z_\nu(\gamma, m)} \sum_{x=0}^{k-1} x \left(\frac{k-x}{k-m}\right)^\nu Q(x) + \frac{k\,[k-m]^\nu}{Z_\nu(\gamma, m)}\, \epsilon^\nu Q(k), \tag{44}$$
and any value higher than $\mu = E_Q[X]$ can be obtained by tuning $\epsilon$, for many values of $k$. When $k$ increases, $\gamma = \frac{1}{m-k}$ tends to 0 from below and $P_3$ tends to $Q$, which results in $D_\alpha(P_3\|Q) \to 0$.

The $\nu < 0$ case has the specific feature that $Z_\nu(\gamma, m)$ exhibits singularities at $\gamma = \frac{1}{m-k}$ for all $k \geq 0$. Then $Z_\nu(\gamma, m)$, with $\nu = -(\xi+1)$ or $\nu = \xi$, is convex only on the intervals $\left[\frac{1}{m-k}, \frac{1}{m-k-1}\right]$ or $\left[\frac{1}{m-k-1}, \frac{1}{m-k}\right]$ (for $k+1 > m > k$), with $Z_\nu(\gamma, m) = +\infty$ at the bounds of each interval. Consequently, $-\log Z_\nu(\gamma, m)$ may present several maxima. This is illustrated in Fig. 6, where the functional $D_C(\gamma)$ with $\alpha = 0.5$ presents several extrema. The solution with minimum Rényi divergence corresponds to the minimum of these maxima.

The limit case $\alpha \to 1$ is obtained with $|\nu| = |\xi| \to +\infty$. According to the discussion above, the optimum $\gamma$ corresponds to case (a) for $\{m > \mu, \nu > 0\}$ and $\{m < \mu, \nu < 0\}$, and to case (c) for $\{m < \mu, \nu > 0\}$. For case (a), the support is $D_1$, and the derivative of the partition function $Z_\nu(\gamma, m)$ is given by (43).
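The partition function (41) is easy to evaluate by direct, truncated summation, which also gives a check of the hypergeometric form in $D_1$, of the singular behaviour for $\nu < 0$, and of the large-$|\nu|$ value (48) derived next. The Python sketch below is a minimal illustration under our own assumptions: the truncation point `x_max`, the $\gamma$ grid and the function names are arbitrary choices. In our evaluation, $-\log Z_{-2}(\gamma, 1.15)$ with $\mu = 3$ (that is, $\nu = \xi = -2$ for $\alpha = 0.5$) has exactly two local maxima on $(0, 3)$, close to the values $\gamma = 0.35$ and $\gamma = 1.24$ quoted for Fig. 6; we do not claim that this function is exactly the paper's $D_C(\gamma)$.

```python
import math


def poisson_z(nu, gamma, m, mu, x_max=400):
    """Truncated series (41): sum over D of [gamma (x - m) + 1]^nu Q(x), Q = Poisson(mu).

    D keeps the integers 0..x_max with gamma (x - m) + 1 > 0. The strict inequality
    drops a boundary point whose term is 0 (nu > 0) or infinite (nu < 0).
    """
    total = 0.0
    for x in range(x_max + 1):
        base = 1.0 + gamma * (x - m)
        if base > 0.0:
            log_q = x * math.log(mu) - mu - math.lgamma(x + 1)
            total += math.exp(nu * math.log(base) + log_q)
    return total


def z_hypergeometric_d1(nu, gamma, m, mu, k_max=400):
    """Integer nu, 0 < gamma < 1/m: (1 - gamma m)^nu e^{-mu} pFp(a, ..., a; b, ..., b; mu), p = |nu|."""
    theta = gamma / (1.0 - gamma * m)
    if nu > 0:
        a, b = (1.0 + theta) / theta, 1.0 / theta
    else:
        a, b = 1.0 / theta, (1.0 + theta) / theta
    term, series = 1.0, 1.0
    for k in range(k_max):
        term *= ((a + k) / (b + k)) ** abs(nu) * mu / (k + 1)  # ratio of consecutive pFp terms
        series += term
    return (1.0 - gamma * m) ** nu * math.exp(-mu) * series


def local_maxima(f, grid):
    """Interior grid points where f exceeds both neighbours."""
    v = [f(g) for g in grid]
    return [grid[i] for i in range(1, len(grid) - 1) if v[i - 1] < v[i] > v[i + 1]]


def limit_value(nu, m, mu):
    """-log Z_{nu+1}(gamma*, m) with gamma* from (47); compare with (48) for large |nu|."""
    log_ratio = math.log(m / mu)
    gamma_star = log_ratio / (nu + m * log_ratio)
    return -math.log(poisson_z(nu + 1, gamma_star, m, mu))


print(poisson_z(3, 0.2, 1.5, 3.0), z_hypergeometric_d1(3, 0.2, 1.5, 3.0))
grid = [i / 1000 for i in range(1, 3000)]
print(local_maxima(lambda g: -math.log(poisson_z(-2, g, 1.15, 3.0, x_max=60)), grid))
print(limit_value(10_000, 5.0, 3.0), 5.0 * math.log(5.0 / 3.0) + 3.0 - 5.0)
```

Because $Z_{-2}$ is a sum of convex terms on each interval between consecutive singularities (where the domain does not change), each such interval holds a single local maximum of $-\log Z_{-2}$, which is why the grid search returns one point per interval.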
In the derivative (43), the sum can be rewritten as
$$\sum_{x=0}^{+\infty} (x-m)(1+\theta x)^\nu \frac{\mu^x}{x!} = \sum_{x=0}^{+\infty} \left(\mu(1+\theta x+\theta)^\nu - m(1+\theta x)^\nu\right)\frac{\mu^x}{x!}, \tag{45}$$
so that $Z_{\nu+1}(\gamma, m)$ is minimum when the right-hand side of (45) is equal to zero. We have to solve this equation in $\theta$. Suppose that $\theta$ is small and that $\theta x \ll 1$ for the significant values of the probability distribution. In this case, we use the approximation $(1+\theta x)^\nu = e^{\nu\log(1+\theta x)} \approx e^{\nu\theta x}$, which leads to
$$\sum_{x=0}^{+\infty}\left(\mu e^{\nu\theta(x+1)} - m e^{\nu\theta x}\right)\frac{\mu^x}{x!} = e^{\mu e^{\nu\theta}}\left(\mu e^{\nu\theta} - m\right) = 0. \tag{46}$$
The solution is given by $\theta^* = \frac{1}{\nu}\log\frac{m}{\mu}$, which in turn provides
$$\gamma^* = \frac{\ln\frac{m}{\mu}}{\nu + m\ln\frac{m}{\mu}}. \tag{47}$$
In case (a), $\gamma$ is positive, and this holds for $\gamma^*$ if $\{m > \mu, \nu > 0\}$ or $\{m < \mu, \nu < 0\}$. For the log-partition function, when $|\nu| \to +\infty$, this leads to
$$-\log Z_{\nu+1}(\gamma^*, m) \approx m\log\frac{m}{\mu} + (\mu - m). \tag{48}$$
In domain $D_3$, the derivative of the partition function $Z_\nu(\gamma, m)$ is equal to zero if $\sum_{x=0}^{k} (x-m)(1+\theta x)^\nu \frac{\mu^x}{x!} = 0$, with $k = \lfloor m - 1/\gamma \rfloor$ and $\gamma < 0$. If $\gamma$ is small enough, $k \to +\infty$ and we obtain, for $\nu > 0$, the same equation and solution as in $D_1$. The solution $\gamma^*$ in (47) is now negative, which imposes $m < \mu$ for $\nu > 0$. Finally, we have shown above that if $m > \mu$ with $\nu < 0$, then $D_\alpha(P_3\|Q) \to 0$. Hence, the entropy functionals converge to
$$F^{(\cdot)}_{\alpha\to 1}(x) = x\ln\frac{x}{\mu} + (\mu - x), \tag{49}$$
with the restriction that $F^{(\cdot)}_\alpha(x) = 0$ for $x > \mu$ in case (C) with $\alpha < 1$ or in case (G) with $\alpha > 1$. This functional is simply the cross-entropy between $x$ and $\mu$, or Kullback-Leibler (Shannon) entropy functional with respect to $\mu$ [9]. It measures a 'distance' between a possible mean (observable) and a reference mean $\mu$, and it has been used as a regularization functional in several applied problems, such as astronomy, tomography, NMR and spectrometry.

Fig. 6. Example of functional $D_C(\gamma)$ for the Poisson reference with classical mean constraint, with $\mu = 3$, $\alpha = 0.5$ ($\xi = -2$) and $m = 1.15$, plotted for $\gamma \in [-1, 3]$. It presents singularities at $1/(m-k)$, $\forall k$, and maxima at $\gamma = 0.35$ and $\gamma = 1.24$.

Fig. 7. Entropy functional $F^{(G)}_\alpha(x)$ for a Poisson reference measure with $\mu = 3$ and $\alpha \geq 0$ (curves for $\alpha$ = 0.01, 0.7, 0.98, 1, 2 and 10). For $\alpha \to 1$, $F^{(\cdot)}_\alpha(x)$ converges to $x\ln\frac{x}{\mu} + (\mu - x)$.

As in the previous cases, the entropy functionals $F^{(C)}_\alpha(x)$ and $F^{(G)}_\alpha(x)$ can be evaluated numerically. For instance, $F^{(G)}_\alpha(x)$ is shown in Fig. 7 for $\mu = 3$. It presents a unique minimum at $x = \mu$, and we note that it is not convex for small values of $\alpha$.

4. Conclusion and future work

By weakening one of the postulates that lead to the definition of Shannon entropy, Rényi [32] introduced a one-parameter family of entropies and divergences. Shannon entropy and Kullback-Leibler divergence are recovered in the limiting case $\alpha \to 1$. In this work, we considered the maximum entropy problems associated with Rényi $Q$-entropies. We characterized the solutions for a standard mean constraint and for the generalized mean constraint of nonextensive statistics. We defined and discussed the entropy functionals as functions of the constraints. These entropies were characterized, and various properties and relationships were highlighted. We also discussed numerical aspects. Finally, we illustrated this setting through some specific examples and recovered some well-known entropy functionals.

Future work will consider the extension of this setting to the multivariate case. An issue that should be examined is the fact that the direct multivariate extension of (5) is not separable in the case of a separable reference $Q(x)$, which means that some dependences are implicitly introduced in the maximum entropy solution.
We also intend to investigate a possible underlying geometrical structure of the maximum entropy distributions (5). This structure should extend the geometrical structure of exponential families and involve the Bregman-like divergence introduced in (25). Finally, maximum entropy methods have been successfully employed for solving inverse problems. We intend to consider the potential of Rényi entropies and divergences in this field. A simple contribution would be to examine the interest of a Rényi entropy functional, e.g. (39), as a potential in a Markov field for image deconvolution or restoration.

Appendix A. Proof of Theorem 1

Let us begin with the classical constraint (C). In this first case, we follow the approach of [37]. Consider the functional Bregman divergence
$$B_h(f, g) = \int d(f, g)\, h(x)\, dx = \int -\left[f(x)^\alpha - g(x)^\alpha - \alpha\left(f(x) - g(x)\right) g(x)^{\alpha-1}\right] h(x)\, dx,$$
where $h(x)$ is a nonnegative weighting function, associated with the (pointwise) Bregman divergence $d(f, g)$ built upon the strictly convex function $-x^\alpha$ for $\alpha \in (0, 1)$. Then, with $h(x) = Q(x)^{1-\alpha}$,
$$B_{Q^{1-\alpha}}(P, P_C) = -\int_S \left[P(x)^\alpha - P_C(x)^\alpha - \alpha\left(P(x) P_C(x)^{\alpha-1} - P_C(x)^\alpha\right)\right] Q(x)^{1-\alpha}\, dx \tag{A.1}$$
$$B_{Q^{1-\alpha}}(P, P_C) = -\int_S P(x)^\alpha Q(x)^{1-\alpha}\, dx + \int_S P_C(x)^\alpha Q(x)^{1-\alpha}\, dx, \tag{A.2}$$
where $S$ denotes the support of $P_C(x)$. The second line follows from the fact that when $P$ and $P_C$ have the same mean $\bar x = E_{P_C}[X] = E_P[X]$, then, using the expression (5) with $\nu = \xi = \frac{1}{\alpha-1}$, it is possible to check that
$$\int_S P(x) P_C(x)^{\alpha-1} Q(x)^{1-\alpha}\, dx = \int_S P_C(x)^\alpha Q(x)^{1-\alpha}\, dx = Z_\xi(\gamma, \bar x)^{1-\alpha},$$
provided the whole support of $P(x)$ is included in $S$, which is the case by the absolute continuity of $P(x)$ with respect to $P_C(x)$.
Since the Bregman divergence $B_{Q^{1-\alpha}}(P, P_C)$ is always nonnegative and equal to zero if and only if $P = P_C$, the equality (A.2) implies that, for $\alpha \in (0, 1)$,
$$D_\alpha(P\|Q) \geq D_\alpha(P_C\|Q), \tag{A.3}$$
which means that $P_C$ is the distribution with minimum Rényi (Tsallis) divergence to $Q$ in the set of all distributions $P \ll P_C$ with a given mean $\bar x$, for $\alpha \in (0, 1)$. The case $\alpha > 1$ can be derived similarly, starting from the Bregman divergence associated with the strictly convex function $x^\alpha$.

As far as the generalized mean constraint (G) is concerned, let us now consider the Rényi information divergence $D_\alpha(P\|P_G)$ from $P$ to $P_G$, with $P_G$ given by (5) with $\nu = -\xi = \frac{1}{1-\alpha}$:
$$(\alpha-1) D_\alpha(P\|P_G) = \log\int_S P(x)^\alpha P_G(x)^{1-\alpha}\, dx, \tag{A.4}$$
with $S$ the support of $P_G(x)$. This can be rearranged as
$$(\alpha-1) D_\alpha(P\|P_G) = \log\int_S \frac{P(x)^\alpha Q(x)^{1-\alpha}}{\int_S P(x)^\alpha Q(x)^{1-\alpha}\, dx}\left[\gamma(x - \bar x) + 1\right] dx \tag{A.5}$$
$$\qquad + \log\int_S P(x)^\alpha Q(x)^{1-\alpha}\, dx - (1-\alpha)\log Z_{\frac{1}{1-\alpha}}(\gamma, \bar x). \tag{A.6}$$
The generalized mean with respect to $P$ appears in the first term, which vanishes if $P$ and $P_G$ have the same generalized mean $\bar x$ and $P_G \gg P$. In such a case, we obtain
$$D_\alpha(P\|P_G) = \frac{1}{\alpha-1}\log\int P(x)^\alpha Q(x)^{1-\alpha}\, dx + \log Z_{\frac{1}{1-\alpha}}(\gamma, \bar x) \tag{A.7}$$
$$= D_\alpha(P\|Q) - D_\alpha(P_G\|Q), \tag{A.8}$$
where we used the fact that $D_\alpha(P_G\|Q) = -\log Z_{\frac{1}{1-\alpha}}(\gamma, \bar x)$, as stated in Proposition 5. Since the Rényi information divergence is always greater than or equal to zero, we have
$$D_\alpha(P\|Q) \geq D_\alpha(P_G\|Q) \tag{A.9}$$
and conclude that $P_G$ is the distribution with minimum Rényi (Tsallis) divergence to $Q$ in the set of all distributions $P \ll P_G$ with a given generalized $\alpha$-mean $\bar x$.
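Theorem 1 and the identities used in its proof can be checked on a small discrete example, with sums in place of integrals. The Python sketch below uses a hypothetical reference distribution $Q$ on $\{0, \dots, 4\}$ and $\alpha = 0.5$ (so $\xi = -2$). For (C), $\gamma$ is found by bisection so that $P_C$ has mean $\bar x = 1.8$; for (G), with $\nu = 1/(1-\alpha) = 2$, the generalized-mean condition is linear in $\gamma$ and is solved directly. Competing distributions come from small perturbations that preserve the relevant mean. All of these choices are ours and serve only as an illustration. For the normalized form of (5), the common value of the two sums in the step leading to (A.2) evaluates to $Z_\xi(\gamma, \bar x)^{1-\alpha}$.

```python
import math

X = [0, 1, 2, 3, 4]
Q = [0.10, 0.20, 0.30, 0.25, 0.15]  # hypothetical reference distribution on {0, ..., 4}
ALPHA = 0.5


def renyi(p, q, alpha=ALPHA):
    """D_alpha(p || q) = log(sum p^alpha q^(1 - alpha)) / (alpha - 1)."""
    return math.log(sum(pi ** alpha * qi ** (1 - alpha) for pi, qi in zip(p, q))) / (alpha - 1)


def tilt(nu, gamma, xbar):
    """Form (5): (1 + gamma (x - xbar))^nu Q(x) / Z, returned together with Z = Z_nu(gamma, xbar)."""
    w = [(1 + gamma * (x - xbar)) ** nu * q for x, q in zip(X, Q)]
    z = sum(w)
    return [wi / z for wi in w], z


def mean(p):
    return sum(x * pi for x, pi in zip(X, p))


def generalized_mean(p, alpha=ALPHA):
    w = [pi ** alpha * qi ** (1 - alpha) for pi, qi in zip(p, Q)]
    return sum(x * wi for x, wi in zip(X, w)) / sum(w)


# Each direction v has sum(v) = 0 and sum(x v) = 0, so it preserves normalization
# and the (standard or escort-weighted) mean.
DIRECTIONS = [[1, -2, 1, 0, 0], [0, 1, -2, 1, 0], [0, 0, 1, -2, 1]]

# Constraint (C): nu = xi = 1/(alpha - 1); choose gamma so that E_{P_C}[X] = m.
xi, m = 1 / (ALPHA - 1), 1.8
lo, hi = 0.0, 1 / m - 1e-9   # keeps 1 + gamma (x - m) > 0 on {0, ..., 4}
for _ in range(200):         # bisection: E[X] - m is > 0 at gamma = 0 and < 0 near 1/m
    g = 0.5 * (lo + hi)
    lo, hi = (g, hi) if mean(tilt(xi, g, m)[0]) > m else (lo, g)
p_c, z_c = tilt(xi, 0.5 * (lo + hi), m)

for v in DIRECTIONS:
    p = [a + 0.01 * b for a, b in zip(p_c, v)]  # same mean, still positive
    lhs = sum(a * c ** (ALPHA - 1) * q ** (1 - ALPHA) for a, c, q in zip(p, p_c, Q))
    rhs = sum(c ** ALPHA * q ** (1 - ALPHA) for c, q in zip(p_c, Q))
    assert abs(lhs - rhs) < 1e-12 and abs(rhs - z_c ** (1 - ALPHA)) < 1e-12  # step to (A.2)
    assert renyi(p, Q) >= renyi(p_c, Q)                                        # (A.3)

# Constraint (G): nu = -xi = 1/(1 - alpha) = 2; the generalized-mean condition
# sum (x - xbar)(1 + gamma (x - xbar)) Q = 0 is then linear in gamma.
nu_g, xbar = 1 / (1 - ALPHA), 2.5
gamma_g = (xbar - mean(Q)) / sum((x - xbar) ** 2 * q for x, q in zip(X, Q))
p_g, z_g = tilt(nu_g, gamma_g, xbar)
assert abs(generalized_mean(p_g) - xbar) < 1e-12
assert abs(renyi(p_g, Q) + math.log(z_g)) < 1e-12   # D_alpha(P_G || Q) = -log Z

w_g = [a ** ALPHA * q ** (1 - ALPHA) for a, q in zip(p_g, Q)]
for v in DIRECTIONS:
    w = [a + 0.01 * b for a, b in zip(w_g, v)]      # escort weights, same generalized mean
    p = [(a / q ** (1 - ALPHA)) ** (1 / ALPHA) for a, q in zip(w, Q)]
    s = sum(p)
    p = [a / s for a in p]
    assert abs(renyi(p, Q) - (renyi(p, p_g) + renyi(p_g, Q))) < 1e-10      # (A.8)
    assert renyi(p, Q) >= renyi(p_g, Q)                                     # (A.9)
print("Appendix A identities verified")
```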
Finally, it is easy to check, given the expression of $P_G$ and the fact that $\alpha\xi = \xi + 1$, that the generalized mean of $P_G$ is also the standard mean of the distribution with exponent $\nu = -(\xi+1)$, that is $E^{(\alpha)}_{P_G}[X] = E_{P^*_G}[X] = E_{-(\xi+1)}[X]$. Note that the equality (A.8), $D_\alpha(P\|Q) = D_\alpha(P\|P_G) + D_\alpha(P_G\|Q)$, is a Pythagorean equality, which means that $P_G$ is the orthogonal projection of $Q$ onto the set of probability distributions with fixed generalized mean $\bar x$.

Appendix B. Proof of Proposition 6

The exact behaviour depends on the reference distribution $Q(x)$ and on the sign of the exponent $\nu$. Because the domain of definition $D$ might depend on $\gamma$, the derivative of the partition function reads
$$\frac{dZ_\nu(\gamma, \bar x_\gamma)}{d\gamma} = \lim_{\delta\gamma\to 0}\frac{1}{\delta\gamma}\left(Z_\nu(\gamma+\delta\gamma, \bar x_{\gamma+\delta\gamma}) - Z_\nu(\gamma, \bar x_\gamma)\right),$$
where $\bar x_\gamma$ and $\bar x_{\gamma+\delta\gamma}$ now denote the parameter $\bar x$ for distributions with parameters $\gamma$ and $\gamma+\delta\gamma$. Let us begin with the continuous case. If $\delta D$ denotes the domain increment associated with the variation $\delta\gamma$, we obtain
$$\frac{dZ_\nu(\gamma, \bar x_\gamma)}{d\gamma} = \int_D \frac{d}{d\gamma}\left(1+\gamma(x-\bar x_\gamma)\right)^\nu Q(x)\, dx \tag{B.1}$$
$$\qquad + \lim_{\delta\gamma\to 0}\frac{1}{\delta\gamma}\int_{\delta D}\left(1+(\gamma+\delta\gamma)(x-\bar x_{\gamma+\delta\gamma})\right)^\nu Q(x)\, dx. \tag{B.2}$$
Of course, when $D$ does not depend on $\gamma$, we only have the first term, and (14) follows easily. Otherwise, to ensure the positivity of the integrand, the domain $D$ is bounded above by $\bar x_\gamma - 1/\gamma$ for $\gamma < 0$ and below by the same value for $\gamma > 0$. Then the second integral, say $G$, can be expressed as
$$G = \mathrm{sign}(\gamma)\int_{\bar x_{\gamma+\delta\gamma} - \frac{1}{\gamma+\delta\gamma}}^{\bar x_\gamma - \frac{1}{\gamma}} \left(1+(\gamma+\delta\gamma)(x-\bar x_{\gamma+\delta\gamma})\right)^\nu Q(x)\, dx \tag{B.3}$$
$$= \frac{\mathrm{sign}(\gamma)}{\gamma+\delta\gamma}\int_0^a y^\nu\, Q\left(\frac{y-1}{\gamma+\delta\gamma} + \bar x_{\gamma+\delta\gamma}\right) dy, \tag{B.4}$$
with $a = (\gamma+\delta\gamma)(\bar x_{\gamma+\delta\gamma} - \bar x_\gamma) - \frac{\delta\gamma}{\gamma}$, which tends to zero with $\delta\gamma$ if $\bar x_\gamma$ is continuous. At first order, we then obtain
$$G = \mathrm{sign}(\gamma)\,\frac{Q\left(\bar x_{\gamma+\delta\gamma} - \frac{1}{\gamma+\delta\gamma}\right)}{\gamma+\delta\gamma}\int_0^a y^\nu\, dy \propto \frac{a^{1+\nu}}{1+\nu} \quad \text{for } \nu > -1.$$
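The continuous-case argument can be illustrated with the exponential reference treated above, for which the first line of (30) gives $Z_\nu$ in closed form when $\gamma > 1/\bar x > 0$. In that regime the lower bound $\bar x - 1/\gamma$ of $D$ moves with $\gamma$, yet for $\nu > 0$ the boundary term (B.2) should vanish, so a finite-difference derivative of $Z_\nu$ should coincide with the interior term (B.1) alone. The Python sketch below holds $\bar x$ fixed (so that $d\bar x_\gamma/d\gamma = 0$), takes $\beta = 1$, and uses $\nu = 2$ and $\nu = 3.5$; these simplifications, the quadrature settings and the helper names are our own assumptions.

```python
import math


def simpson(f, a, b, n=20000):
    """Composite Simpson rule on [a, b]; n is rounded up to an even number."""
    n += n % 2
    h = (b - a) / n
    total = f(a) + f(b)
    for i in range(1, n):
        total += (4 if i % 2 else 2) * f(a + i * h)
    return total * h / 3


def z_closed(nu, gamma, xbar, beta=1.0):
    """First line of (30), exponential reference, gamma > 1/xbar > 0, nu >= 0."""
    return math.exp(-beta * (gamma * xbar - 1.0) / gamma) * (gamma / beta) ** nu * math.gamma(nu + 1.0)


def interior_term(nu, gamma, xbar, beta=1.0, x_max=200.0):
    """(B.1) with xbar fixed: integral over D of nu (x - xbar)(1 + gamma (x - xbar))^(nu - 1) Q(x) dx."""
    lower = xbar - 1.0 / gamma  # bound of D that moves with gamma

    def integrand(x):
        base = 1.0 + gamma * (x - xbar)
        if base <= 0.0:
            return 0.0  # at the moving bound; the integrand vanishes there for nu > 1
        return nu * (x - xbar) * base ** (nu - 1.0) * beta * math.exp(-beta * x)

    return simpson(integrand, lower, x_max)


def total_derivative(nu, gamma, xbar, h=1e-4):
    """Central difference of Z_nu(gamma, xbar); includes any moving-boundary contribution."""
    return (z_closed(nu, gamma + h, xbar) - z_closed(nu, gamma - h, xbar)) / (2.0 * h)


for nu in (2.0, 3.5):
    print(nu, total_derivative(nu, 1.0, 2.0), interior_term(nu, 1.0, 2.0))
```

For $\nu = 2$, $\gamma = 1$ and $\bar x = 2$, both quantities equal $2/e$, which can also be obtained by hand from $Z_2(\gamma, 2) = 2e^{-2}\gamma^2 e^{1/\gamma}$.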
Then it is readily checked that $\lim_{\delta\gamma\to 0}\frac{1}{\delta\gamma}G = 0$ for $\nu > 0$, so that (B.2) is always zero for $\nu > 0$ and (14) holds.

In the discrete case, the partition function is
$$Z_\nu(\gamma, \bar x_\gamma) = \sum_{x\in D}\left(1+\gamma(x-\bar x_\gamma)\right)^\nu Q(x).$$
There exist isolated singular values of $\gamma$ such that $1+\gamma(x-\bar x_\gamma) = 0$ for an integer $x$. For such values, the corresponding term in the partition function diverges when $\nu < 0$. Contrary to the continuous case, where the domain of $\gamma$ is connected, the set of values of $\gamma$ for which the partition function is finite is interrupted by these isolated values: the domain of admissible $\gamma$ is a union of segments. As in the continuous case, the derivative of the partition function is the sum of two terms, the second one involving a domain increment:
$$\frac{dZ_\nu(\gamma, \bar x_\gamma)}{d\gamma} = \sum_D \frac{d}{d\gamma}\left(1+\gamma(x-\bar x_\gamma)\right)^\nu Q(x) \tag{B.5}$$
$$\qquad + \lim_{\delta\gamma\to 0}\frac{1}{\delta\gamma}\sum_{\delta D}\left(1+(\gamma+\delta\gamma)(x-\bar x_{\gamma+\delta\gamma})\right)^\nu Q(x). \tag{B.6}$$
If $D$ does not depend on $\gamma$, there is no domain increment and the derivative is given by (B.5). When the bounds of $D$ depend on $\gamma$, the domain increment is given by the integers in the interval $\left[\left\lceil \bar x_{\gamma+\delta\gamma} - \frac{1}{\gamma+\delta\gamma}\right\rceil, \left\lceil \bar x_\gamma - \frac{1}{\gamma}\right\rceil\right]$ ($\gamma > 0$) or $\left[\left\lfloor \bar x_\gamma - \frac{1}{\gamma}\right\rfloor, \left\lfloor \bar x_{\gamma+\delta\gamma} - \frac{1}{\gamma+\delta\gamma}\right\rfloor\right]$ ($\gamma < 0$), where $\lfloor x\rfloor$ is the floor function, which returns the largest integer less than or equal to $x$, and $\lceil x\rceil$ is the ceiling function, which returns the smallest integer not less than $x$. If $\gamma$ belongs to an interval on which the domain increment remains empty, then the derivative is simply (B.5). An extension occurs for an infinitesimal variation $\delta\gamma$ if $\bar x_\gamma - 1/\gamma$ is precisely an integer, say $k$. Then the second sum reduces to
$$G = \left(1+(\gamma+\delta\gamma)(k - \bar x_{\gamma+\delta\gamma})\right)^\nu Q(k) \tag{B.7}$$
$$= \left(-\frac{\delta\gamma}{\gamma} - (\gamma+\delta\gamma)(\bar x_{\gamma+\delta\gamma} - \bar x_\gamma)\right)^\nu Q(k), \tag{B.8}$$
and finally
$$\lim_{\delta\gamma\to 0}\frac{1}{\delta\gamma}G = \lim_{\delta\gamma\to 0}\delta\gamma^{\nu-1}\left(-\frac{(\gamma+\delta\gamma)(\bar x_{\gamma+\delta\gamma} - \bar x_\gamma)}{\delta\gamma} - \frac{1}{\gamma}\right)^{\nu} Q(k) = 0 \quad \text{for } \nu > 1, \tag{B.9}$$
since all terms in the parentheses remain finite when $\delta\gamma \to 0$. In such a case the derivative reduces to (B.5) and (14) holds.

References

[1] M. Asadi, I. Bayramoglu, The mean residual life function of a k-out-of-n structure at the system level, IEEE Transactions on Reliability 55 (2006) 314–318.
[2] A. Banerjee, S. Merugu, I. S. Dhillon, J. Ghosh, Clustering with Bregman divergences, J. Mach. Learn. Res. 6 (2005) 1705–1749.
[3] R. Baraniuk, P. Flandrin, A. Janssen, O. Michel, Measuring time-frequency information content using the Rényi entropies, IEEE Transactions on Information Theory 47 (2001) 1391–1409.
[4] A. G. Bashkirov, On maximum entropy principle, superstatistics, power-law distribution and Rényi parameter, Physica A 340 (2004) 153–162.
[5] M. Basseville, Distance measures for signal processing and pattern recognition, Signal Processing 18 (1989) 349–369.
[6] C. Beck, Generalized statistical mechanics of cosmic rays, Physica A 331 (2004) 173–181.
[7] D. Bhandari, N. R. Pal, Some new information measures for fuzzy sets, Information Sciences 67 (1993) 209–228.
[8] A. C. Cebrian, M. Denuit, P. Lambert, Generalized Pareto fit to the Society of Actuaries' large claims database, North American Actuarial Journal 7 (2003) 18–36.
[9] I. Csiszár, Why least squares and maximum entropy? An axiomatic approach to inference for linear inverse problems, Annals of Statistics 19 (1991) 2032–2066.
[10] I. Csiszár, Generalized cutoff rates and Rényi's information measures, IEEE Transactions on Information Theory 41 (1995) 26–34.
[11] R. S. Ellis, Entropy, Large Deviations, and Statistical Mechanics, vol. 271 of Grundlehren der mathematischen Wissenschaften, Springer-Verlag, 1985.
[12] M. D. Esteban, Divergence statistics based on entropy functions and stratified sampling, Information Sciences 87 (1995) 185–203.
[13] A. Golan, J. M. Perloff, Comparison of maximum entropy and higher-order entropy estimators, Journal of Econometrics 107 (2002) 195–211.
[14] M. Grendar, M. Grendar, Maximum entropy method with non-linear moment constraints: challenges, AIP, 2004.
[15] Y. He, A. Hamza, H. Krim, A generalized divergence measure for robust image registration, IEEE Transactions on Signal Processing 51 (2003) 1211–1220.
[16] E. T. Jaynes, Information theory and statistical mechanics, Phys. Rev. 108 (1957) 171.
[17] E. T. Jaynes, On the rationale of maximum entropy methods, Proc. IEEE 70 (1982) 939–952.
[18] P. Jizba, T. Arimitsu, The world according to Rényi: thermodynamics of multifractal systems, Annals of Physics 312 (2004) 17–59.
[19] A. Krishnamachari, V. moy Mandal, Karmeshu, Study of DNA binding sites using the Rényi parametric entropy measure, Journal of Theoretical Biology 227 (2004) 429–436.
[20] S. Kullback, Information Theory and Statistics, Wiley, New York, 1959.
[21] B. LaCour, Statistical characterization of active sonar reverberation using extreme value theory, IEEE Journal of Oceanic Engineering 29 (2004) 310–316.
[22] M. M. Mayoral, Rényi's entropy as an index of diversity in simple-stage cluster sampling, Information Sciences 105 (1998) 101–114.
[23] I. Molina, D. Morales, Rényi statistics for testing hypotheses in mixed linear regression models, Journal of Statistical Planning and Inference 137 (2007) 87–102.
[24] M. A. J. V. Montfort, J. V. Witter, Generalized Pareto distribution applied to rainfall depths, Hydrological Sciences Journal 31 (1986) 151–162.
[25] S. Nadarajah, K. Zografos, Formulas for Rényi information and related measures for univariate distributions, Information Sciences 155 (2003) 119–138.
[26] S. Nadarajah, K. Zografos, Expressions for Rényi and Shannon entropies for bivariate distributions, Information Sciences 170 (2005) 173–189.
[27] A. K. Nanda, S. S. Maiti, Rényi information measure for a used item, Information Sciences 177 (2007) 4161–4175.
[28] J. Naudts, Dual description of nonextensive ensembles, Chaos, Solitons, and Fractals 13 (2002) 445–450.
[29] H. Neemuchwala, A. Hero, P. Carson, Image matching using alpha-entropy measures and entropic graphs, Signal Processing 85 (2005) 277–296.
[30] R. Nock, F. Nielsen, On weighting clustering, IEEE Trans. Pattern Anal. Mach. Intell. 28 (2006) 1223–1235.
[31] G. A. Raggio, On equivalence of thermostatistical formalisms, http://arxiv.org/abs/cond-mat/9909161 (1999).
[32] A. Rényi, On measures of entropy and information, Univ. California Press, Berkeley, Calif., 1961.
[33] K.-S. Song, Rényi information, loglikelihood and an intrinsic distribution measure, Journal of Statistical Planning and Inference 93 (2001) 51–69.
[34] C. Tsallis, Possible generalization of Boltzmann-Gibbs statistics, Journal of Statistical Physics 52 (1988) 479–487.
[35] C. Tsallis, Entropic nonextensivity: a possible measure of complexity, Chaos, Solitons & Fractals 13 (2002) 371–391.
[36] C. Tsallis, R. S. Mendes, A. R. Plastino, The role of constraints within generalized nonextensive statistics, Physica A 261 (1998) 534–554.
[37] C. Vignat, A. Hero, J. A. Costa, About closedness by convolution of the Tsallis maximizers, Physica A 340 (2004) 147–152.
[38] S. Vinga, J. S. Almeida, Rényi continuous entropy of DNA sequences, Journal of Theoretical Biology 231 (2004) 377–388.
