Analysis of boosting algorithms using the smooth margin function

We introduce a useful tool for analyzing boosting algorithms called the “smooth margin function,” a differentiable approximation of the usual margin for boosting algorithms. We present two boosting algorithms based on this smooth margin, “coordinate ascent boosting” and “approximate coordinate ascent boosting,” which are similar to Freund and Schapire’s AdaBoost algorithm and Breiman’s arc-gv algorithm. We give convergence rates to the maximum margin solution for both of our algorithms and for arc-gv. We then study AdaBoost’s convergence properties using the smooth margin function. We precisely bound the margin attained by AdaBoost when the edges of the weak classifiers fall within a specified range. This shows that a previous bound proved by Rätsch and Warmuth is exactly tight. Furthermore, we use the smooth margin to capture explicit properties of AdaBoost in cases where cyclic behavior occurs.

💡 Research Summary

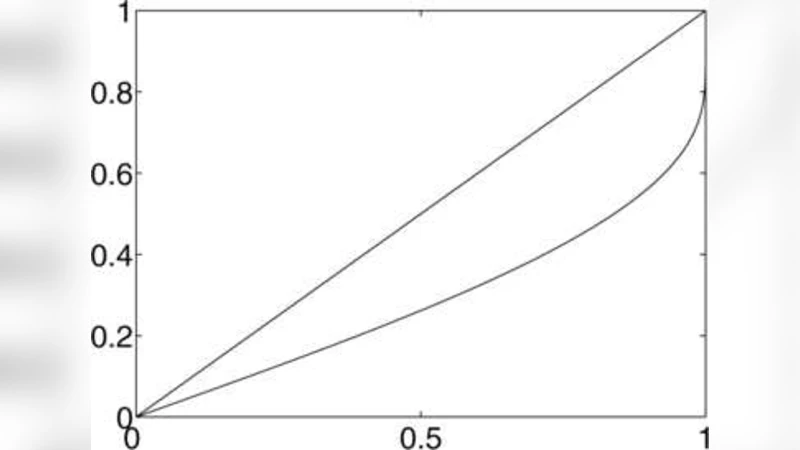

This paper introduces a novel analytical tool for boosting algorithms called the “smooth margin function,” a differentiable approximation of the traditional margin used in boosting theory. The analysis also makes use of the function $\Upsilon(r) = \frac{-\ln(1-r^{2})}{\ln\frac{1+r}{1-r}}$, defined for $0 < r < 1$, which is monotonically increasing, tends to 0 as $r \to 0$, and tends to 1 as $r \to 1$. By replacing the non‑differentiable margin with a smooth surrogate, the authors cast boosting as a continuous optimization problem and derive precise convergence results.
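As a rough numerical illustration, the function $\Upsilon(r)$ above can be evaluated directly. The sketch below reads the formula as the quotient $-\ln(1-r^{2}) / \ln\frac{1+r}{1-r}$, which is an assumption about the intended notation; the name `upsilon` and the sample points are chosen here for illustration and do not come from the paper.

```python
import math


def upsilon(r: float) -> float:
    """Evaluate -ln(1 - r^2) / ln((1 + r) / (1 - r)) for 0 < r < 1.

    Assumption: the formula is a quotient of the two logarithms.
    """
    if not 0.0 < r < 1.0:
        raise ValueError("r must lie strictly between 0 and 1")
    return -math.log(1.0 - r * r) / math.log((1.0 + r) / (1.0 - r))


# Illustrative sample points (hypothetical, not taken from the paper).
sample_points = [0.1, 0.3, 0.5, 0.7, 0.9]
values = [upsilon(r) for r in sample_points]

for r, v in zip(sample_points, values):
    print(f"r = {r:.1f}  Upsilon(r) = {v:.4f}")
```

Under this reading the values increase with $r$ and stay strictly between 0 and 1 on the sampled range.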

Two new boosting procedures are built directly on the smooth margin: Coordinate Ascent Boosting (CAB) and Approximate Coordinate Ascent Boosting (ACAB). Both algorithms follow a coordinate‑ascent paradigm: at each iteration the weak learner that yields the greatest increase in the smooth margin is selected, and the corresponding coefficient is updated. CAB performs an exact line search along the chosen coordinate, guaranteeing maximal increase of the smooth margin; ACAB uses a computationally cheaper approximate update while still preserving monotonicity of the smooth margin. Unlike AdaBoost, whose objective function is not explicitly tied to the margin, CAB and ACAB optimize a single, well‑behaved objective, which enables rigorous analysis.
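The coordinate-ascent idea can be sketched on a toy problem. The summary does not state the smooth margin formula, so the code assumes the form $G(\lambda) = -\ln\big(\sum_i e^{-(M\lambda)_i}\big) / \sum_j \lambda_j$, where $M_{ij} = y_i h_j(x_i)$; that form, the AdaBoost warm-up used while $G \le 0$, the choice of the largest-edge weak classifier, and the grid search standing in for an exact line search are all assumptions of this sketch rather than the authors' procedure. The approximate update used by ACAB is not shown. All function names and the matrix `M` are hypothetical.

```python
import math


def margins(M, lam):
    """Return (M lam)_i for each training example i."""
    return [sum(m * l for m, l in zip(row, lam)) for row in M]


def smooth_margin(M, lam):
    """Assumed form: G(lam) = -ln(sum_i exp(-(M lam)_i)) / sum_j lam_j."""
    loss = sum(math.exp(-s) for s in margins(M, lam))
    return -math.log(loss) / sum(lam)


def best_edge(M, lam):
    """Weight examples as AdaBoost does; return (index, edge) of the best weak classifier."""
    w = [math.exp(-s) for s in margins(M, lam)]
    z = sum(w)
    edges = [sum(wi * row[j] for wi, row in zip(w, M)) / z for j in range(len(lam))]
    j = max(range(len(lam)), key=edges.__getitem__)
    return j, edges[j]


def step(M, lam, grid=400, alpha_max=2.0):
    """One iteration: AdaBoost step while G <= 0, otherwise a grid line search on G."""
    j, r = best_edge(M, lam)
    new = list(lam)
    if sum(lam) == 0 or smooth_margin(M, lam) <= 0:
        new[j] += 0.5 * math.log((1 + r) / (1 - r))  # standard AdaBoost step size
        return new
    # Grid search over the step size; alpha = 0 is kept if nothing improves G.
    best_alpha, best_g = 0.0, smooth_margin(M, lam)
    for k in range(1, grid + 1):
        alpha = alpha_max * k / grid
        trial = list(lam)
        trial[j] += alpha
        g = smooth_margin(M, trial)
        if g > best_g:
            best_alpha, best_g = alpha, g
    new[j] += best_alpha
    return new


# Toy problem: three examples, three weak classifiers, each wrong on one example.
M = [[-1, 1, 1], [1, -1, 1], [1, 1, -1]]
lam = [0.0, 0.0, 0.0]
history = []
for _ in range(100):
    lam = step(M, lam)
    history.append(smooth_margin(M, lam))

print("final smooth margin:", history[-1])
```

For this matrix the best achievable margin is 1/3, and under the assumed definition the smooth margin never exceeds the ordinary margin, so the recorded values stay at or below 1/3. Once $G$ becomes positive, each grid-search step leaves it unchanged or larger by construction.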

The authors prove that both CAB and ACAB converge to the maximum possible margin within $O\big(\frac{\log m}{\epsilon^{2}}\big)$ iterations, where $m$ is the number of training examples and $\epsilon$ is the desired margin error. This rate matches the best known bounds for gradient‑based methods but is derived here from recursive equalities specific to the smooth margin. The paper also revisits Breiman’s arc‑gv algorithm, previously known only to converge asymptotically to the maximal margin, and shows that arc‑gv enjoys the same $O\big(\frac{\log m}{\epsilon^{2}}\big)$ convergence rate when analyzed through the smooth margin lens.
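To get a feel for how this bound scales, the quantity inside the $O(\cdot)$ can be tabulated for a few settings. The sketch below assumes a natural logarithm and ignores the hidden constant, so the numbers are relative scales rather than iteration counts; the function name and the sample values of $m$ and $\epsilon$ are hypothetical.

```python
import math


def iteration_scale(m: int, eps: float) -> float:
    """Return log(m) / eps**2, the quantity inside the O(.) bound (constant omitted)."""
    return math.log(m) / eps ** 2


# Illustrative settings only; none of these values come from the paper.
for m, eps in [(1_000, 0.1), (1_000, 0.05), (1_000_000, 0.1)]:
    print(f"m = {m:>9,}  eps = {eps:<5}  log(m)/eps^2 = {iteration_scale(m, eps):.0f}")
```

Halving $\epsilon$ quadruples the scale, while squaring $m$ only doubles it, so the target accuracy dominates the cost far more than the training set size.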

A central contribution concerns the “bounded‑edges” regime, where the weak learner’s edge $\gamma_t$ stays within a fixed interval (
