Sparse estimation of large covariance matrices via a nested Lasso penalty

The paper proposes a new covariance estimator for large covariance matrices when the variables have a natural ordering. Using the Cholesky decomposition of the inverse, we impose a banded structure on the Cholesky factor, and select the bandwidth adaptively for each row of the Cholesky factor, using a novel penalty we call nested Lasso. This structure has more flexibility than regular banding, but, unlike regular Lasso applied to the entries of the Cholesky factor, results in a sparse estimator for the inverse of the covariance matrix. An iterative algorithm for solving the optimization problem is developed. The estimator is compared to a number of other covariance estimators and is shown to do best, both in simulations and on a real data example. Simulations show that the margin by which the estimator outperforms its competitors tends to increase with dimension.

💡 Research Summary

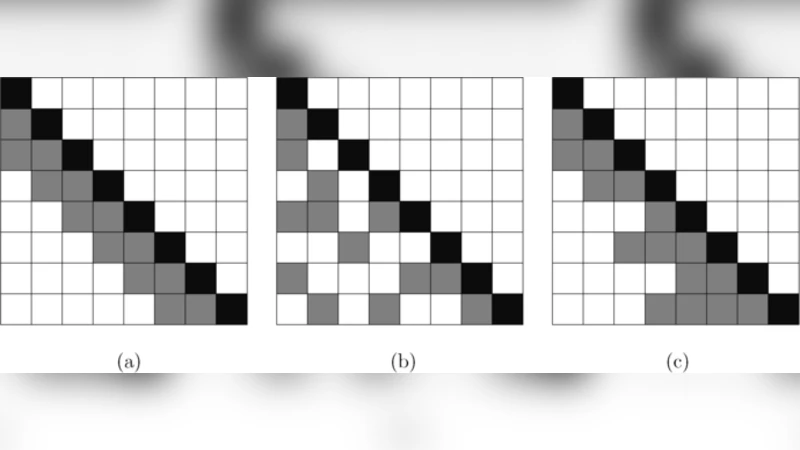

The paper addresses the problem of estimating large covariance matrices (or their inverses) in high‑dimensional settings where the number of variables p may be comparable to or exceed the sample size n. When the variables possess a natural ordering, such as time points in longitudinal data or spatial locations, one can exploit this structure to impose sparsity on the inverse covariance (precision) matrix. Existing approaches include simple banding of the covariance matrix or of the Cholesky factor of its inverse, and L1‑penalized (lasso) methods applied directly to the entries of the Cholesky factor. Simple banding forces the same bandwidth on every row, which limits flexibility. Lasso on the Cholesky factor can set coefficients to zero at arbitrary positions, but it does not guarantee a sparse precision matrix: zeros scattered within a row of the factor, unlike a banded pattern, generally do not carry over to zeros in the precision matrix.
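The contrast between banded zeros and scattered zeros can be checked numerically. The following is a minimal sketch, assuming the modified Cholesky form Σ⁻¹ = Tᵀ D⁻¹ T with a unit lower‑triangular T and, for simplicity, D equal to the identity. The helper names, the dimension p = 6, and the coefficient value −0.4 are illustrative choices, not taken from the paper.

```python
import numpy as np

def precision_from_factor(T, d):
    """Form T^T D^-1 T from a unit lower-triangular T and variances d."""
    return T.T @ np.diag(1.0 / d) @ T

def fill_in(T, omega, tol=1e-12):
    """Count below-diagonal positions where T is zero but omega is not."""
    lower = np.tril(np.ones(T.shape), k=-1) > 0
    return int(np.sum(lower & (np.abs(T) <= tol) & (np.abs(omega) > tol)))

p = 6
d = np.ones(p)  # assumption: unit residual variances, for simplicity

# Banded factor: every row keeps its two nearest predecessors.
T_band = np.eye(p)
for j in range(p):
    for k in range(max(0, j - 2), j):
        T_band[j, k] = -0.4

# Factor with a hole in each row: predecessors at lags 1 and 3, lag 2 is zero.
T_gap = np.eye(p)
for j in range(p):
    for lag in (1, 3):
        if j - lag >= 0:
            T_gap[j, j - lag] = -0.4

omega_band = precision_from_factor(T_band, d)
omega_gap = precision_from_factor(T_gap, d)

print("fill-in, banded factor:", fill_in(T_band, omega_band))
print("fill-in, factor with holes:", fill_in(T_gap, omega_gap))
```

In this toy setting the banded factor yields a precision matrix with the same band, while the lag‑2 zeros of the second factor are filled in by cross terms between the lag‑1 and lag‑3 coefficients.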

The authors propose a novel “nested lasso” penalty applied to the regression coefficients that make up the lower‑triangular Cholesky factor T in Σ⁻¹ = Tᵀ D⁻¹ T, where D is diagonal. For each row j, with φ_j denoting that row's coefficients, the penalty is defined as

J₀(φ_j) = λ
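The factorization Σ⁻¹ = Tᵀ D⁻¹ T that the penalty acts on can be illustrated with a short numerical sketch. It assumes the standard modified Cholesky construction, in which row j of T holds the negated coefficients from regressing variable j on its predecessors and D holds the residual variances. The function name `modified_cholesky` and the AR(1)-type test covariance are hypothetical choices for illustration, not the paper's code or data, and the sketch performs no penalized estimation.

```python
import numpy as np

def modified_cholesky(sigma):
    """Return (T, d) such that inv(sigma) = T^T diag(1/d) T.

    T is unit lower-triangular; row j holds minus the coefficients of the
    population regression of variable j on variables 0..j-1, and d[j] is
    the corresponding residual variance.
    """
    p = sigma.shape[0]
    T = np.eye(p)
    d = np.empty(p)
    d[0] = sigma[0, 0]
    for j in range(1, p):
        # Regression coefficients of variable j on its predecessors.
        phi = np.linalg.solve(sigma[:j, :j], sigma[:j, j])
        T[j, :j] = -phi
        d[j] = sigma[j, j] - sigma[:j, j] @ phi
    return T, d

# Illustrative ordered covariance: sigma[i, k] = rho ** |i - k|.
p, rho = 5, 0.6
idx = np.arange(p)
sigma = rho ** np.abs(idx[:, None] - idx[None, :])

T, d = modified_cholesky(sigma)
omega = T.T @ np.diag(1.0 / d) @ T

print(np.round(T, 3))
print("matches inverse:", np.allclose(omega, np.linalg.inv(sigma)))
```

For this particular covariance each variable depends only on its immediate predecessor, so T has a single nonzero sub‑diagonal, which is the kind of row‑wise banded structure the adaptive bandwidth selection is meant to recover.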
