Figuring out Actors in Text Streams: Using Collocations to establish Incremental Mind-maps

Authors: T. Rothenberger, S. Oez, E. Tahirovic

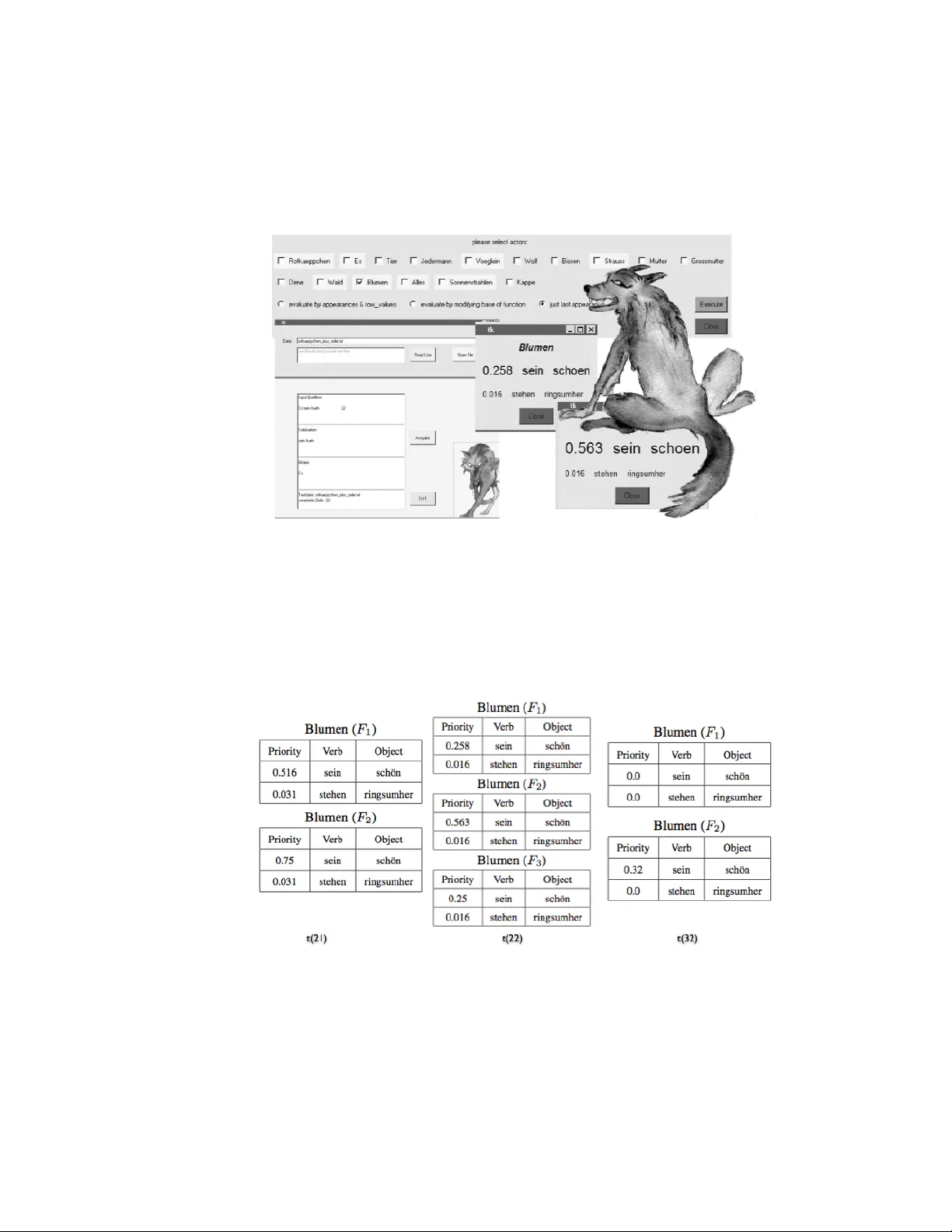

T. Rothenberger, S. Öz, E. Tahirovic
Johann Wolfgang Goethe-University Frankfurt am Main, Dept. of Computer Science
Robert-Mayer-Str. 11-15, 60486 Frankfurt am Main, Germany
Email: {tahirov, oez, rothenb}@cs.uni-frankfurt.de

C. Schommer
University of Luxembourg, Dept. of Computer Science and Communication
6, Rue Coudenhove-Kalergi, L-1359 Luxembourg
Email: christoph.schommer@uni.lu

July 30, 2018

Abstract

The recognition, involvement, and description of the main actors influence the story line of the whole text. This is all the more important because a text per se is a flow of words and expressions that is lost once it has been read. In this respect, understanding a text, and moreover understanding how exactly an actor behaves, is a major concern. As human beings try to store a given input in short-term memory while associating diverse aspects and actors with incidents, the following approach presents a virtual architecture in which collocations are taken as the associative completion of the actors' actions. Once collocations are discovered, they are managed in separate memory blocks broken down by actor. As for human beings, these memory blocks correspond to associative mind-maps. We then present several priority functions that represent the current temporal situation inside a mind-map, enabling the user to reconstruct recent events from the discovered temporal results.

1 Motivation

The main idea is to differentiate sentences on the basis of the actors that appear in them. We follow a one-actor-per-sentence rule to avoid ambiguity, so that each sentence becomes part of the knowledge base of exactly one actor. Every actor who appears in the text receives his own mind-map.
We reconstruct the story line of the text from these mind-maps and find out more about single actors by examining the interaction among them. Because of the incremental nature of the approach, we examine one sentence at a time, in order, while continuously updating the mind-maps. To understand the motivation and the subject itself, it is helpful to examine the underlying concepts more closely. Some of them are subjects of research in computational linguistics, while others are more closely related to computer science. We use the term incremental to refer to the ability to adjust gradually to changes that happen over a period of time, so that the present state reflects these changes.

The central concept of this work is the collocation, one of the most frequently discussed concepts in natural language processing and computational linguistics. The difficulty of reconciling all aspects of collocations in a single definition has occupied many natural language experts and computational linguists for a long time. In [2], "the term collocation ... is used for word combinations that are lexically determined and constitute particular syntactic dependencies such as verb-object, verb-subject, adjective-noun relations, etc."

The mind-map concept presented in this paper resembles the canonical concept of the associative mind-map, in the sense that the mind-map address of a particular collocation is derived from the structure of the collocation itself. Questions similar to ours have already been raised in computational linguistics, particularly with regard to information and discourse structure. Most of the work in this field aims to represent the semantic, temporal, and psychological attributes of a discourse in a machine-interpretable way.
This differs from our task in that the features of our discourse analysis are presented to a human user, who will hopefully be able to get a good picture of the discourse under consideration. In [1], a topic-tree model is introduced that forms a discourse structure suitable for further machine interpretation. There, the focus of a sentence is denoted as a topic, which corresponds to our notion of an actor or character that has to be identified for each sentence. In [4], an associative mind-map is established that is based on artificial cells which communicate with each other and merge when they represent the same content. Connections to other artificial cells are formed according to the Hebbian learning principle, with forgetting taken into account when a connection is stimulated less.

2 Architecture

Given a text stream, we identify the main actors in order to understand how they interact, and we concentrate on current events that have taken place in the recent past. The notion of recent past is defined by a function of the position of the collocation in the text and of the number of times the collocation has appeared up to the present moment; the priority functions are elaborated in detail below. The interaction between the main actors can often reveal the coherence of the story line. This brings us to the incremental nature of our work, which permits insight into the story line at a user-defined point in time. Each sentence first passes through a pre-processing step, performed sentence by sentence, which brings it into a form that supports the identification of the corresponding actor/character.
2.1 Initializing the Mind-Map

After the actor has been successfully identified, the information in the sentence becomes part of the mind-map set up for this particular actor; in this way, we retain the story line of the text broken down by actor. The user may interactively decide on the direction of action in the text and is presented with the most newsworthy entries of the mind-map according to the priority function mentioned above, either for all actors or for a few chosen ones. This spares the user from reading the whole text in search of a particular spot or in an effort to understand what the text is about. The system is validated on fairy tales, as these texts are simple with respect to the linearity of the story line and the structure of the sentences. In particular, tests and validation are carried out on the text of Little Red Riding Hood by the Brothers Grimm.

Before collocations are managed in the mind-map of a particular actor, each text stream item is pre-processed. This does not violate the streaming requirement, as the collocation is represented in the mind-map immediately afterwards. For this, we perform several pre-processing actions such as stemming and parsing, which do not take much liberty in rearranging the input stream. Actors can be identified more efficiently if the text stream may be modified directly; therefore, phenomena such as main and subordinate clauses, direct/reported speech, and active/passive voice are processed. We stem each word and identify each compound sentence, breaking it down into a simpler representation. Accepting some loss of information, we dissolve compound sentences by deleting conjunctions and rely on corrective actions by the human supervisor, in the sense of human-computer interaction.
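The conjunction-deletion step can be illustrated with a small Python sketch. It is not the authors' implementation: the set of German conjunctions and the name `split_compound` are hypothetical, and stemming, parsing, and the supervisor's corrections are left out.

```python
import re

# Hypothetical set of German coordinating conjunctions used as split points;
# the paper does not list which conjunctions are deleted.
CONJUNCTIONS = ("und", "oder", "aber", "denn")

# Optional whitespace and comma, then a conjunction as a whole word surrounded by whitespace.
_SPLIT = re.compile(r"\s*,?\s+\b(?:" + "|".join(CONJUNCTIONS) + r")\b\s+")


def split_compound(sentence: str) -> list[str]:
    """Dissolve a compound sentence into clauses by deleting its conjunctions.

    Information is lost on purpose: a clause that shares the subject of the
    previous one is returned without it; restoring the subject is left to the
    human supervisor, as described in the text.
    """
    body = sentence.strip().rstrip(".!")
    clauses = [part.strip() for part in _SPLIT.split(body) if part.strip()]
    return [clause + "." for clause in clauses]


print(split_compound("Der Wolf legte sich wieder ins Bett und fing an zu schnarchen."))
# ['Der Wolf legte sich wieder ins Bett.', 'fing an zu schnarchen.']
```

For the example sentence used later in this section, the second clause loses its subject. That gap is exactly where the human supervisor has to step in, turning it into "Der Wolf fing an zu schnarchen."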
However, for direct or reported speech, it is advisable to embed the statements that appear between quotation marks into the story. This can be accomplished by erasing the quotation marks and omitting the attribution to the speaker. Interrogative sentences as such carry no information relevant to our task, because it is hard to determine what they contribute to the information structure of the text; we therefore leave them out as part of the pre-processing step. To obtain a unique form of the sentences and to transform indirect sentences, we again use a stemmer, a dedicated grammar, and the human supervisor, who takes corrective actions and establishes the correct references between pronouns and the corresponding actors. Additionally, we record the position of each sentence/collocation in the text with a simple counter that is incremented sentence by sentence.

The following example shows what the pre-processing step looks like. Given an original text such as

→ Der Wolf legte sich wieder ins Bett und fing an zu schnarchen. Der Jäger ging vorbei. Er dachte, die alte Frau schnarcht so laut, da muss ich einmal nachsehen. Er trat ein. Im Bett lag der Wolf, den er so lange gesucht hatte.

(The wolf lay down in the bed again and began to snore. The huntsman passed by. He thought: the old woman is snoring so loudly, I must go and have a look. He went in. In the bed lay the wolf, whom he had been seeking for so long.)

we dissolve the compound sentences incrementally. Word items are brought to their basic form, categories are assigned, and the correct references between pronouns and actors are established:

t1: Der Wolf legte sich wieder ins Bett.
    ⇒ Wolf(N) - legen(V) - Bett(N)
t2: Der Wolf fing an zu schnarchen.
    ⇒ Wolf(N) - anfangen(V) - schnarchen(N)
t3: Der Jäger ging vorbei.
    ⇒ Jäger(N) - gehen(V) - vorbei(N)
t4: Er dachte.
    ⇒ Frau(N) - sein(V) - alt(ADJ)
t5: Die alte Frau schnarcht so laut.
    ⇒ Frau(N) - schnarchen(V) - laut(ADJ)
t6: Ich muss einmal nachsehen.
    ⇒ Jäger(N) - nachsehen(V) - Haus(N)
t7: Er trat ein.
    ⇒ Jäger(N) - eintreten(V) - Haus(N)
t8: Im Bett lag der Wolf.
    ⇒ Bett(N) - liegen(V) - Wolf(N)
t9: Er hatte den Wolf so lange gesucht.
    ⇒ Jäger(N) - suchen(V) - Wolf(N)

2.2 Assign Collocations to an Actor

To assign each sentence to one particular actor, the canonical sentence/collocation structure is made up of several parts:
• A sentence starts with a subject representing the actor (to whom the sentence is assigned).
• It is followed by a verb that indicates the relation between the actor and the third part of the collocation.
• The third part is a generalized notion of an object in the sentence, which is involved with the actor in some way. It is normally a noun or an adjective, depending on how the sentence/collocation in question is generated in the pre-processing.
• At the end of each collocation, a position counter supports representing the collocations in the correct order.

A collocation that ends with an adjective is created from an adjective + noun word combination in the original text. The adjective describes one particular feature of the noun; this yields a new collocation in which the noun becomes the actor and the adjective the object. We give the word sein ("to be") a linking role in the collocation and represent the result as a fact. The new collocation inherits the collocation number of the sentence it was part of and thus preserves the time flow of the text. In this way, we achieve the unique sentence structure we need while keeping the original semantic value of the text:

alte Frau ⇒ Frau - sein - alt
Blumen standen ringsherum ⇒ Blume - stehen - ringsherum

We keep a dynamic list of every actor who appears in the text. Each actor has one main mind-map block and one priority list for each priority function.
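The canonical structure and the adjective rule above fit naturally into a small record type. The Python sketch below uses our own hypothetical names (`Collocation`, `adjective_fact`). It assumes the adjective and the noun have already been reduced to their basic forms by the stemmer.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Collocation:
    """Canonical 'actor - verb - object' collocation plus its position counter."""
    actor: str     # subject the sentence is assigned to
    verb: str      # relation between the actor and the object
    obj: str       # generalized object, normally a noun or an adjective
    position: int  # sentence counter that preserves the time flow


def adjective_fact(adjective: str, noun: str, position: int) -> Collocation:
    """Turn an 'adjective + noun' combination into 'noun - sein - adjective'.

    The noun becomes the actor, 'sein' takes the linking role, and the new
    fact inherits the position of the sentence it was part of.
    Inputs are assumed to be in basic form already (e.g. 'alt', not 'alte').
    """
    return Collocation(actor=noun, verb="sein", obj=adjective, position=position)


# 'alte Frau' found in a sentence numbered 5 yields Frau - sein - alt at position 5.
fact = adjective_fact("alt", "Frau", position=5)
print(fact)  # Collocation(actor='Frau', verb='sein', obj='alt', position=5)
```

Making the record immutable (`frozen=True`) means that a collocation and its inherited position cannot drift apart after pre-processing.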
Priority lists store the collocations sorted by the respective priority function, enabling output in accordance with that function. In general, these functions depend on the present moment and on the repetition behavior of a particular collocation. Our strategy is as follows:
• We identify the actor in the currently processed collocation.
• We check whether this is the actor's first appearance in the text by consulting the dynamic list of actors. If so, a new mind-map block is allocated for this actor. The verb (second part) of the collocation is then used as a key to store the collocation in the actor's main mind-map block. If this verb has already appeared in relation with this actor, a list for it already exists in the mind-map; such a list is kept for each verb. It then remains to check whether the object (third part) can be found in this list. If so, we have detected a reoccurrence of the current collocation.
• Finally, we re-compute the priority functions and sort the priority lists according to the new values. In this way, we preserve the correct order of the lists, which we later use for the output of chosen actors.

2.3 Priority Functions

Priority lists are managed for each actor. The results, showing recent/current collocations broken down by actor, are presented directly from these priority lists. Each priority list has its own priority function by which the collocations are sorted. Depending on the user's decision (the user may intervene from time to time), the items of chosen actors are exported from the priority lists. However, not all collocations can be presented as a result. Since we try to model the current events in the story line, we prefer to take only the most recent collocations.
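The per-actor memory and update strategy described above can be sketched in Python as nested dictionaries, mapping actor to verb to object to the list of sentence positions. The class and method names are hypothetical. The layout is an assumption modeled on the memory dump for Wolf shown in Section 4. For brevity, the sketch recomputes a priority list on demand instead of keeping one sorted list per priority function.

```python
from collections import defaultdict
from typing import Callable

# A priority function maps (current sentence c, positions of one collocation) to a value.
Priority = Callable[[int, list[int]], float]


class MindMaps:
    """Hypothetical per-actor memory: actor -> verb -> object -> sentence positions."""

    def __init__(self) -> None:
        self.blocks: dict[str, defaultdict] = {}  # keys form the dynamic list of actors

    def add(self, actor: str, verb: str, obj: str, position: int) -> bool:
        """Store one collocation and report whether it is a reoccurrence."""
        if actor not in self.blocks:
            # First appearance of this actor: allocate a new mind-map block.
            self.blocks[actor] = defaultdict(dict)
        objects = self.blocks[actor][verb]  # the verb (second part) is the key
        repeated = obj in objects           # is the object (third part) already listed?
        objects.setdefault(obj, []).append(position)
        return repeated

    def priority_list(self, actor: str, f: Priority, c: int) -> list[tuple[str, str, float]]:
        """Return (verb, object, value) triples sorted by descending priority."""
        entries = [
            (verb, obj, f(c, positions))
            for verb, objects in self.blocks[actor].items()
            for obj, positions in objects.items()
        ]
        return sorted(entries, key=lambda e: e[2], reverse=True)


def last_seen(c: int, positions: list[int]) -> float:
    """Illustrative recency-only priority: 0.5 ** (c - last position)."""
    return 0.5 ** (c - positions[-1])


memory = MindMaps()
memory.add("Wolf", "gehen", "Wald", 10)
print(memory.add("Wolf", "gehen", "Wald", 15))  # True: reoccurrence detected
memory.add("Wolf", "gehen", "Großmutter", 18)
print(memory.priority_list("Wolf", last_seen, 20))
# [('gehen', 'Großmutter', 0.25), ('gehen', 'Wald', 0.03125)]
```

The positions [10, 15] for Wald and [18] for Großmutter mirror the memory dump for Wolf in Section 4. The printed values come from the illustrative recency function, not from the paper.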
To give the user insight into each actor throughout the entire story line, we present at least five entries. Additionally, we use a threshold $\Delta$ to allow the output of the most recent collocations according to the priority function: all entries of the actor's priority list whose values lie above $\Delta$ are displayed. The results for a particular actor are presented in a separate window, and the font size in which they are written depends on the value computed by the priority function.

With priority functions, we model a concept of forgetting in the program. The values computed by a priority function induce an order among the collocations. For further elaboration, it is important to define the notion of recent or current events. Repetition of a particular collocation may increase its importance for the story line. Besides the chronological features of the collocations, we consider the number of repetitions a meaningful feature for determining the priority of a collocation: collocations that occur several times in the text should receive a higher priority than those that occur only once. Of course, many other features could be reasonable for calculating the priority of a collocation, such as the appearance of a main character in it. We incorporate the impact of repetitions into the calculation of two of our three priority functions. All priority functions should share some important properties in order to model the story line primarily according to its chronological aspects. Such a function must be defined on a subset $D$ of the set of natural numbers $\mathbb{N}$.
It must be continuous and strictly monotonically decreasing:

$$\forall x, y \in D:\quad x < y \;\Rightarrow\; f(x) > f(y)$$

We provide several priority functions from which the user can choose. Let $\vec{x}_k \in \mathbb{N}^d$ be a dynamic vector of all sentence numbers in which collocation $k$ appears, where $d$ is at most the total number of sentences in the text. The first function is

$$F_1(c, \vec{x}_k) = \sum_{i=1}^{d} 0.5^{\,c - x_{k,i}}$$

where $c$ is the number of the sentence currently examined. This function incorporates the repetition characteristic of a collocation into the calculation of the priority. The most recent occurrences of the collocation carry more weight in the sum than those that lie far in the past. Because we chose a geometric progression, earlier occurrences are forgotten very quickly, while an occurrence in the very recent past has a relatively large influence on the whole sum. The coefficient of the geometric progression remains 0.5 throughout the process.

The second priority function considers only the number of repetitions, i.e. the dimension $d$ of the vector $\vec{x}_k$ of the first function. In this case, we increase the coefficient of the geometric progression with each repetition. The priority is calculated from the current coefficient $b_a$, the number $c$ of the currently examined sentence, and the number $l_k$ of the most recent sentence in which collocation $k$ appeared, where

$$b_a = \sum_{i=1}^{d} 0.5^{\,i}$$

Since the coefficient increases with each repetition, the priority of repeated collocations is raised. The coefficient itself is the sum of a geometric progression with initial term 0.5. This function rates repetitions higher than the first one, since with each repeated occurrence the coefficient changes permanently and the chronological order of the repetitions no longer plays a role.
The second function is then

$$F_2(b_a, c, l_k) = b_a^{\,c - l_k}$$

The third function is the simplest of the three: repetitions are considered irrelevant, and the priority depends only on the current sentence number $c$ and the number $l_k$ of the last sentence in which collocation $k$ was observed:

$$F_3(c, l_k) = 0.5^{\,c - l_k}$$

2.4 Example

These functions differ in how strongly repetition affects the priority of a collocation. Let $c = 20$, and let the collocation Wolf - sein - böse appear in three sentences:

→ Wolf - sein - böse (fifth sentence)
→ Wolf - sein - böse (fifteenth sentence)
→ Wolf - sein - böse (seventeenth sentence)

Then

$$F_1 = 0.5^{20-5} + 0.5^{20-15} + 0.5^{20-17} \approx 0.15628$$
$$F_2 = (0.5^1 + 0.5^2 + 0.5^3)^{20-17} \approx 0.66992$$
$$F_3 = 0.5^{20-17} = 0.125$$

This example already shows how differently repetitions affect the respective function values.

3 Implementation

Figure 1 shows the workbench of the software system, which has already been presented in [3]. It consists of several panels: at the top, the user may select among the discovered actors, which may be human beings, references to human beings such as Jedermann ("everyman"), or even animals. The user can then choose among the different priority functions and obtains the mind-map with its associative relationships, each including a relevance value. Figure 1 shows the situation for the actor Blumen (flowers) after the text stream has been read. The highest association is with sein schön ("be beautiful"). The priority values depend on the chosen priority function.

4 Test and Validation

As an example, we examine the German fairy tale Rotkäppchen (Little Red Riding Hood) by evaluating the priority functions for each actor; $c$ denotes the current sentence number. In Figure 2, we first compare the unequal influence of repetition on two priority functions of the same actor.
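The three priority functions of Section 2.3 translate directly into code. The Python sketch below uses function names of our own choosing. It takes $\vec{x}_k$ as a list of sentence numbers in ascending order, so that $l_k$ is its last element, and it reproduces the worked example of Section 2.4.

```python
def f1(c: int, xs: list[int]) -> float:
    """F1: sum of 0.5 ** (c - x) over every sentence x in which the collocation appeared."""
    return sum(0.5 ** (c - x) for x in xs)


def coefficient(d: int) -> float:
    """b_a for d occurrences: 0.5 + 0.25 + ... + 0.5 ** d, which equals 1 - 0.5 ** d."""
    return sum(0.5 ** i for i in range(1, d + 1))


def f2(b_a: float, c: int, l_k: int) -> float:
    """F2: b_a ** (c - l_k); repetitions only enter through the coefficient b_a."""
    return b_a ** (c - l_k)


def f3(c: int, l_k: int) -> float:
    """F3: 0.5 ** (c - l_k); repetitions are ignored, only the last occurrence counts."""
    return 0.5 ** (c - l_k)


# Section 2.4: 'Wolf - sein - böse' in sentences 5, 15 and 17, evaluated at c = 20.
xs, c = [5, 15, 17], 20
print(round(f1(c, xs), 5))                             # 0.15628
print(round(f2(coefficient(len(xs)), c, xs[-1]), 5))   # 0.66992
print(f3(c, xs[-1]))                                   # 0.125
```

Because $b_a = 1 - 0.5^d$ stays below 1 for every $d$, $F_2$ still decays over time, only more slowly the more often a collocation has been repeated. The same helpers confirm the ordering reported below for Blumen - sein - schön: `f3(22, 20)`, `f1(21, [15, 20])` and `f2(coefficient(2), 21, 20)` evaluate to 0.25, 0.515625 and 0.75.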
Figure 1: The situation for the actor Blumen after the text stream has been read. Depending on the priority function, the values differ, but the highest association remains stable ([3]).

Figure 2: The actor Blumen and its associated collocations after the twenty-first sentence has been read (left). The collocation Blumen - schön attains the highest value under priority function $F_2 = 0.563$, whereas priority functions $F_1 = 0.258$ and $F_3 = 0.25$ yield lower values. The collocations for Blumen after the thirty-second sentence has been read (right). The discrepancy between schön and ringsumher is largest for priority function $F_2$.

Figure 3: The history of Großmutter (grandmother) within the text at time points 10, 35, and 36. The word item wohnen has disappeared (value 0.0) because it has been forgotten, whereas the association with schwach still exists weakly. The association Großmutter - rufen has no object, as rufen does not need one.

The collocation Blumen - sein - schön appears in both the fifteenth and the twentieth sentence, with

$$F_1(21, (15, 20)) < F_2(0.75, 21, 20)$$

We may observe that the second function is more strongly influenced by repetitions. The priority values for Blumen - stehen - ringsumher are the same, since this collocation has occurred only once so far. Figure 2 also compares all three functions for the same actor. The third function evidently calculates priority without regard to repetition, since

$$F_3(22, 20) < F_1(21, (15, 20)) < F_2(0.75, 21, 20)$$

After the thirty-second sentence has been read, the list of collocations has changed (see Figure 2). Under priority function $F_1$, all associated weights are 0.0, whereas priority function $F_2$ gives a value of 0.32 for Blumen - sein - schön. A similar situation occurs for Großmutter (see Figure 3): $F_1$ forgets collocations that occurred more than once faster than $F_2$ does.
Moreover, we can recognize the geometric progression of the priorities calculated by $F_1$ for the actor Rotkäppchen. None of these collocations occurred more than once. The last of them (or the first by priority) occurred in sentence 32, with

$$F_1(32, (32)) = 0.5^{32-32} = 0.5^0 = 1$$

After the text stream has been read, the main memory for Wolf is

• gehen: [[Wald, Großmutter] [Wald, 0.6, [10, 15]] [Großmutter, 0.5, [18]]]

with the priority list

→ sein - böse - 0.5
→ sein - hungrig - 0.4
→ sein - listig - 0.3
→ suchen - Nahrung - 0.2
→ ansprechen - Rotkäppchen - 0.1

5 Conclusions

In this paper, we have presented an architecture that reveals the action/story line of a text by recovering the collocations of its actors. This is done incrementally, since a text can be seen as a text stream that is lost once it has been read. Bringing all sentences into a unique form allows the actor of each sentence to be identified; the number of actors per sentence is limited to one. The collocations correspond to the actions an actor is involved in and are managed in a mind-map allocated for each actor. A set of priority functions sorts the collocations in each actor's mind-map by their recency. A user may then decide which part of the story line to check and, above all, when. The output presents the most recent collocations in which the actors are involved; from these, the user may infer how the actors interact with each other.

6 Acknowledgement

This work has been performed at the research laboratory ILIAS - Intelligent and Adaptive Systems within the project TRIAS, which is funded by the University of Luxembourg.

References

[1] G. Heyer, M. Luter, U. Quasthoff, T. Wittig, C. Wolff: Learning Relations using Collocations. In: A. Maedche, S. Staab, C. Nedellec, E. Hovy (eds.): Proceedings of the IJCAI Workshop on Ontology Learning, Seattle, WA, August 2001, pp. 19-24.
[2] B. Krenn: CDB - A Database of Lexical Collocations. Austrian Research for Artificial Intelligence.
[3] T. Rothenberger, S. Öz, E. Tahirovic: INASCO - Bestimmung eines inkrementellen Assoziativspeichers für Kollokationen. Internal Report, Johann Wolfgang Goethe-Universität Frankfurt am Main, 2006.
[4] C. Schommer: Incremental Discovery of Association Rules with Dynamic Neural Nodes. Proceedings of the Workshop on Symbolic Networks, ECAI 2004, Valencia, Spain, 2004.
[5] B. Schroeder, M. Hilker, R. Weires: Dynamic Association Networks in Information Management. Proceedings of the 21st International Conference on Computer, Electrical, and Systems Science, and Engineering (CESSE 2007), Vienna, Austria, 2007.
