Deconvolution of confocal microscopy images using proximal iteration and sparse representations

We propose a deconvolution algorithm for images blurred and degraded by Poisson noise. The algorithm uses a fast proximal forward-backward splitting iteration. This iteration minimizes an energy which combines a \textit{non-linear} data fidelity term, adapted to Poisson noise, and a non-smooth sparsity-promoting regularization (e.g., the $\ell_1$-norm) over the image representation coefficients in some dictionary of transforms (e.g., wavelets, curvelets). Our results on simulated microscopy images of neurons and cells are compared with some state-of-the-art algorithms. They show that our approach is very competitive and that, as expected, the importance of the non-linearity due to Poisson noise is more salient at low and medium intensities. Finally, an experiment on real fluorescent confocal microscopy data is reported.

💡 Research Summary

The paper addresses the problem of deconvolving fluorescence confocal microscopy images that suffer from both optical blur and Poisson (shot) noise. While classic Richardson‑Lucy (RL) methods handle Poisson statistics, they tend to amplify noise after a few iterations, and most existing regularized variants assume Gaussian noise or ignore the non‑linear nature of the variance‑stabilizing transform (VST).

The authors first model the observation as y_i ∼ Poisson((h⊛x)_i) and apply the Anscombe VST, z_i = 2√(y_i + 3/8). Although the VST stabilizes the variance, the transformed data obey the non‑linear relation z ≈ 2√(h⊛x) + ε with ε ∼ N(0,1), so a standard linear least‑squares fidelity no longer applies. Consequently, they formulate a data‑fidelity term F that explicitly incorporates this non‑linearity. The unknown image x is represented in a dictionary Φ, possibly over‑complete (e.g., orthogonal wavelets, tight curvelet frames), as x = Φα, where α is the vector of coefficients.
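
The variance‑stabilizing effect of the Anscombe transform can be checked numerically. The snippet below is a minimal sketch, not taken from the paper: the function name `anscombe`, the sample size, and the tested mean intensities are illustrative assumptions.

```python
import numpy as np

def anscombe(y):
    """Anscombe variance-stabilizing transform: z = 2 * sqrt(y + 3/8)."""
    return 2.0 * np.sqrt(np.asarray(y, dtype=float) + 3.0 / 8.0)

# Draw Poisson counts at a few mean intensities (illustrative values) and
# compare the variance before and after the transform.
rng = np.random.default_rng(0)
for mean in (5.0, 30.0, 100.0):
    y = rng.poisson(mean, size=200_000)
    z = anscombe(y)
    print(f"mean={mean:6.1f}  var(y)={y.var():8.2f}  var(z)={z.var():.3f}")
```

The variance of the raw counts grows with the mean, while the variance of the transformed data stays close to 1, which is what allows the noise ε to be treated as approximately standard Gaussian.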

The overall objective is

J(α) = F(HΦα) + λ∑ψ(α_i) + ι_C(Φα),

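where H denotes circular convolution with the point spread function (PSF) h, ψ is a sparsity‑promoting penalty (typically the ℓ₁ penalty ψ(α_i) = |α_i|), λ > 0 sets the regularization strength, and ι_C is the indicator function of the set C of non‑negative images, which enforces positivity of the reconstructed intensities.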
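
For concreteness, one data‑fidelity term consistent with the stabilized model is written out below, together with its gradient. The exact form, including the 3/8 offset inside the square root, is an assumption here, since this summary does not spell F out; f' is applied element‑wise.

```latex
F(\eta) = \sum_i f(\eta_i), \qquad
f(\eta_i) =
\begin{cases}
\tfrac{1}{2}\bigl(z_i - 2\sqrt{\eta_i + 3/8}\bigr)^2 & \text{if } \eta_i \ge 0,\\[2pt]
+\infty & \text{otherwise,}
\end{cases}
\qquad \eta = H\Phi\alpha,

f'(\eta_i) = 2 - \frac{z_i}{\sqrt{\eta_i + 3/8}}, \qquad
\nabla_\alpha \bigl[F(H\Phi\alpha)\bigr] = \Phi^{\mathsf T} H^{\mathsf T} f'(H\Phi\alpha).
```

Under this assumed form, the offset keeps the argument of the square root bounded away from zero, so the gradient stays finite for all η ≥ 0.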

Because F is smooth with a Lipschitz‑continuous gradient and the regularization term is convex but non‑smooth, the problem fits the forward‑backward splitting framework. The iterative scheme is

α^{t+1} = prox_{μ_t f₂}(α^t – μ_t ∇f₁(α^t)),

with f₁ = F(HΦ·) and f₂ = λ∑ψ + ι_C(Φ·). The proximal operator of f₂ reduces to soft‑thresholding (for ℓ₁) followed by projection onto the positive orthant, possibly combined with a frame‑specific operator when Φ is a tight frame. Convergence is guaranteed for step sizes μ_t satisfying 0 < inf μ_t ≤ sup μ_t < (3/2)^{3/2}/(2‖H‖²‖z‖_∞).
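
The iteration can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions, not the authors' implementation: the dictionary is taken to be the identity (Φ = I, so α = x), the fidelity is assumed to be F(η) = ½‖z − 2√(η + 3/8)‖², and all function names, the test image, the Gaussian PSF, and λ = 0.1 are hypothetical choices. With Φ = I, the proximal operator of the ℓ₁ penalty plus the positivity constraint is exactly max(a − threshold, 0).

```python
import numpy as np

def conv(x, h_fft):
    """Circular convolution H x computed with the FFT."""
    return np.real(np.fft.ifft2(np.fft.fft2(x) * h_fft))

def conv_adj(r, h_fft):
    """Adjoint H^T r (circular correlation with the PSF)."""
    return np.real(np.fft.ifft2(np.fft.fft2(r) * np.conj(h_fft)))

def grad_f1(alpha, z, h_fft):
    """Gradient of the assumed fidelity 0.5 * ||z - 2 sqrt(H alpha + 3/8)||^2."""
    eta = np.maximum(conv(alpha, h_fft), 0.0)  # guard against FFT round-off
    return conv_adj(2.0 - z / np.sqrt(eta + 0.375), h_fft)

def prox_f2(a, thresh):
    """Prox of thresh * ||.||_1 plus the positivity indicator, for Phi = I:
    soft-thresholding followed by projection reduces to max(a - thresh, 0)."""
    return np.maximum(a - thresh, 0.0)

def forward_backward(z, h_fft, lam, n_iter=200):
    # Conservative step: 0.9 * 2/L, where L = ||H||^2 ||z||_inf / (2 (3/8)^{3/2})
    # bounds the Lipschitz constant of the gradient of the assumed fidelity.
    norm_h2 = np.max(np.abs(h_fft)) ** 2
    mu = 0.9 * 4.0 * 0.375 ** 1.5 / (norm_h2 * np.max(z))
    alpha = np.maximum((z / 2.0) ** 2 - 0.375, 0.0)  # crude inverse-VST start
    for _ in range(n_iter):
        alpha = prox_f2(alpha - mu * grad_f1(alpha, z, h_fft), mu * lam)
    return alpha

# Small synthetic example (all values are illustrative).
rng = np.random.default_rng(0)
n = 32
x_true = np.zeros((n, n))
x_true[8:12, 8:12] = 50.0
x_true[20, 20] = 100.0
# Gaussian PSF centred at the origin, normalised to sum to 1.
g = np.exp(-0.5 * (np.fft.fftfreq(n) * n) ** 2 / 1.5 ** 2)
psf = np.outer(g, g)
psf /= psf.sum()
h_fft = np.fft.fft2(psf)
y = rng.poisson(np.maximum(conv(x_true, h_fft), 0.0))  # blurred Poisson counts
z = 2.0 * np.sqrt(y + 0.375)                           # Anscombe transform
x_hat = forward_backward(z, h_fft, lam=0.1)
print("min of estimate:", x_hat.min(), " max of estimate:", x_hat.max())
```

For a general dictionary, the gradient step would also involve Φ and its transpose, and the proximal step would no longer have this simple closed form, so the sketch should be read only as an illustration of the forward (gradient) and backward (proximal) structure.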

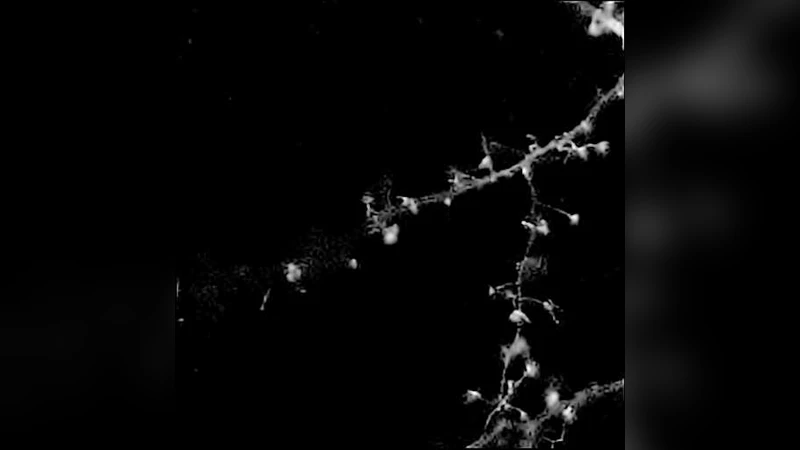

Experimental evaluation includes simulated neuron and endothelial‑cell phantoms (maximum intensities 30, 100, 255) and a real confocal image of GFP‑labeled neurons. The proposed method is compared against four baselines: RL‑TV (total variation regularization), RL‑MRS (multi‑resolution wavelet regularization), NaiveGauss (Gaussian‑noise assumption), and AnsGauss (Anscombe VST followed by Gaussian‑based deconvolution). All algorithms run for 200 iterations.

Results show that at low and medium intensities the proposed approach achieves the lowest ℓ₁‑error and comparable or better mean‑square error than RL‑MRS, while preserving fine structures such as dendritic spines. RL‑TV produces strong visual results at high intensity but suffers from staircase artifacts. NaiveGauss and AnsGauss perform poorly at low intensities because they ignore the Poisson non‑linearity. In terms of computational cost, the MATLAB implementation of the new algorithm requires about 2.7 s on a 2 GHz Core 2 Duo, considerably faster than the C++ RL‑MRS implementation (≈15 s) and comparable to the simple Gaussian baselines.

The paper’s contributions are threefold: (1) a principled data‑fidelity term that respects the Poisson statistics after VST, (2) a fast proximal forward‑backward algorithm that jointly enforces sparsity and positivity, and (3) empirical evidence that combining multiple transforms (wavelets, curvelets) can further improve reconstruction quality. Limitations include the need for manual tuning of λ and dependence on the choice of dictionary; the authors suggest future work on automatic λ selection and learned dictionaries to better adapt to specific biological structures.
