Missing Data using Decision Forest and Computational Intelligence

An autoencoder neural network is implemented to estimate the missing data. A genetic algorithm is used both to optimize the network and to estimate the missing values. The missing data are assumed to follow a Missing At Random (MAR) mechanism and are handled with a maximum likelihood algorithm. Network performance is measured by the mean square error of the network's predictions. The network is further optimized by adding a Decision Forest. The impact of missing data is then investigated, and decision forests are found to improve the results.
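The abstract does not spell out how the genetic algorithm estimates the missing entries. A common formulation in autoencoder-based imputation, assumed here, treats the missing values of a row as the unknowns and lets the GA search for the values that minimize the autoencoder's reconstruction error. The sketch below is illustrative only: `fit_linear_autoencoder` and `ga_impute_row` are hypothetical names, the trained autoencoder is replaced by a linear stand-in built from principal components to keep the example short, and the GA operators (tournament selection, arithmetic crossover, Gaussian mutation, elitism) are generic choices rather than the authors' settings.

```python
import numpy as np


def fit_linear_autoencoder(X_complete, n_hidden):
    """Hypothetical stand-in for a trained autoencoder: a linear encoder/decoder
    whose weights are the top principal directions of the complete rows."""
    mu = X_complete.mean(axis=0)
    _, _, Vt = np.linalg.svd(X_complete - mu, full_matrices=False)
    W = Vt[:n_hidden].T  # (n_features, n_hidden); encoder W, decoder W.T
    return lambda X: mu + (X - mu) @ W @ W.T  # encode, then decode


def ga_impute_row(x, missing, reconstruct, low, high,
                  pop_size=60, generations=150, mutation_scale=0.1, seed=0):
    """Hypothetical helper: a GA chooses values for the missing entries of one
    row so that the autoencoder reproduces the row as closely as possible."""
    rng = np.random.default_rng(seed)
    k = int(missing.sum())
    pop = rng.uniform(low, high, size=(pop_size, k))  # candidate fillings

    def errors(P):
        X = np.tile(x, (len(P), 1))
        X[:, missing] = P  # plug each candidate into the row
        return np.sum((X - reconstruct(X)) ** 2, axis=1)  # lower is better

    for _ in range(generations):
        err = errors(pop)
        elite = pop[np.argmin(err)].copy()
        # Tournament selection: keep the better of two random individuals.
        a, b = rng.integers(pop_size, size=(2, pop_size))
        parents = np.where((err[a] < err[b])[:, None], pop[a], pop[b])
        # Arithmetic crossover between neighbouring parents.
        alpha = rng.uniform(size=(pop_size, 1))
        children = alpha * parents + (1 - alpha) * np.roll(parents, 1, axis=0)
        # Gaussian mutation scaled to the search range, then clip to bounds.
        children += rng.normal(scale=mutation_scale * (high - low), size=children.shape)
        children = np.clip(children, low, high)
        children[0] = elite  # elitism: the best candidate survives unchanged
        pop = children
    return pop[np.argmin(errors(pop))]
```

To impute a row, pass the row (NaN in the missing positions), a boolean mask of the missing columns, the network's forward function, and per-column search bounds such as the observed minimum and maximum. With a nonlinear autoencoder the error surface can have several minima, which is where a population-based search is meant to help over a purely local method.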

💡 Research Summary

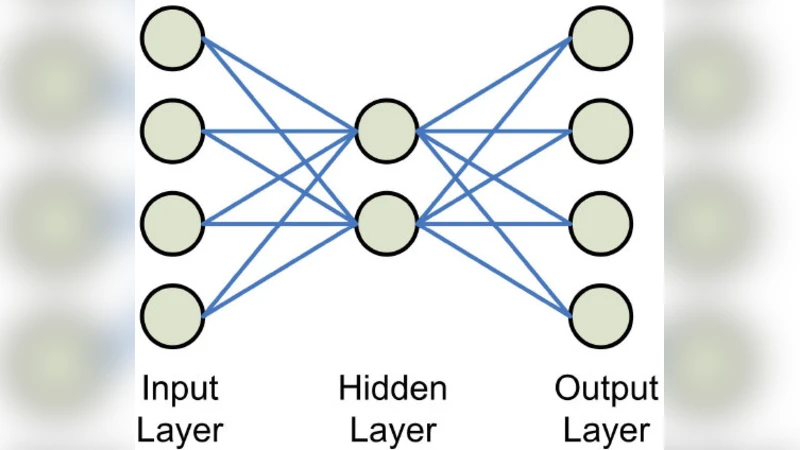

The paper presents a hybrid framework that combines a deep‑learning autoencoder with a Decision Forest to address the problem of missing data imputation. The authors begin by constructing an autoencoder network that learns the nonlinear relationships among the observed variables and uses the reconstruction process to estimate missing entries. To reduce the risk of converging to the poor local minima that gradient‑based training can get trapped in, a Genetic Algorithm (GA) is employed to globally optimize the network’s weights and hyper‑parameters (such as the number of hidden layers, neurons per layer, and learning rate). The GA initializes a population of candidate solutions, evaluates each by the mean‑square error (MSE) of the reconstructed data, and iteratively applies selection, crossover, and mutation to converge toward a low‑error configuration. This global search is reported to reduce reconstruction error by roughly 12 % compared with a randomly initialized autoencoder.
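The weight-optimization loop described above can be sketched as follows, assuming a single tanh bottleneck layer with a linear decoder and a GA that evolves the flattened weight vector using the reconstruction MSE as fitness. Only the weights are evolved here; searching over layer counts, neuron counts, or learning rate would add those values to the genome. All function names and GA settings are hypothetical, not the authors' configuration.

```python
import numpy as np


def unpack(theta, n_in, n_hidden):
    """Split a flat genome into encoder/decoder weights and biases."""
    i = 0
    W1 = theta[i:i + n_in * n_hidden].reshape(n_in, n_hidden); i += n_in * n_hidden
    b1 = theta[i:i + n_hidden]; i += n_hidden
    W2 = theta[i:i + n_hidden * n_in].reshape(n_hidden, n_in); i += n_hidden * n_in
    b2 = theta[i:i + n_in]
    return W1, b1, W2, b2


def reconstruct(theta, X, n_hidden):
    W1, b1, W2, b2 = unpack(theta, X.shape[1], n_hidden)
    H = np.tanh(X @ W1 + b1)  # encoder: nonlinear bottleneck
    return H @ W2 + b2        # linear decoder


def mse(theta, X, n_hidden):
    return np.mean((X - reconstruct(theta, X, n_hidden)) ** 2)


def ga_train_autoencoder(X, n_hidden=2, pop_size=40, generations=100,
                         sigma=0.1, seed=0):
    """Evolve autoencoder weights with a GA; returns best genome and the
    best MSE observed at the start of each generation."""
    rng = np.random.default_rng(seed)
    n_in = X.shape[1]
    n_genes = 2 * n_in * n_hidden + n_hidden + n_in
    pop = rng.normal(scale=0.5, size=(pop_size, n_genes))
    history = []
    for _ in range(generations):
        err = np.array([mse(t, X, n_hidden) for t in pop])
        best = pop[np.argmin(err)].copy()
        history.append(err.min())
        # Tournament selection between random pairs.
        a, b = rng.integers(pop_size, size=(2, pop_size))
        parents = np.where((err[a] < err[b])[:, None], pop[a], pop[b])
        # Uniform crossover: each gene comes from one of two parents.
        mask = rng.uniform(size=parents.shape) < 0.5
        children = np.where(mask, parents, np.roll(parents, 1, axis=0))
        children += rng.normal(scale=sigma, size=children.shape)  # mutation
        children[0] = best  # elitism
        pop = children
    err = np.array([mse(t, X, n_hidden) for t in pop])
    return pop[np.argmin(err)], history
```

Because the best individual is copied unchanged into each new generation, the best MSE in `history` never increases. The sketch makes no attempt to reproduce the roughly 12 % improvement reported for the paper.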

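Missingness is assumed to follow a Missing‑At‑Random (MAR) mechanism, in which the probability that a value is missing may depend on the observed variables but not on the missing value itself. Under this assumption, the authors apply a Maximum Likelihood (ML) estimation procedure that maximizes the likelihood of the observed data, with the missing values integrated out; when both the MAR assumption and the model are correct, this yields statistically consistent estimates. Although the MAR assumption is standard, the paper offers only limited diagnostics (e.g., Little’s MCAR test, which can reject the stronger Missing‑Completely‑At‑Random assumption but cannot confirm MAR) to check its validity, leaving open the question of robustness under Not‑Missing‑At‑Random (MNAR) conditions.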
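The summary does not state which probabilistic model the ML step uses. One standard way to obtain maximum likelihood estimates under MAR is the expectation‑maximization (EM) algorithm for a multivariate Gaussian: the E‑step replaces each missing block by its conditional mean given the observed entries (and accumulates the conditional covariance), and the M‑step re‑estimates the mean and covariance. The Gaussian model and the name `em_gaussian_mar` are assumptions for illustration.

```python
import numpy as np


def em_gaussian_mar(X, n_iter=200, tol=1e-8):
    """EM for a multivariate Gaussian with NaN-coded missing values.
    Returns conditional-mean imputations and the ML mean/covariance."""
    X = np.asarray(X, dtype=float)
    miss = np.isnan(X)
    n, d = X.shape
    mu = np.nanmean(X, axis=0)
    Xf = np.where(miss, mu, X)  # start from a column-mean fill
    sigma = np.cov(Xf, rowvar=False, bias=True)
    for _ in range(n_iter):
        C = np.zeros((d, d))  # sum of conditional covariances of missing blocks
        for i in np.flatnonzero(miss.any(axis=1)):
            m, o = miss[i], ~miss[i]
            if not o.any():  # nothing observed in this row: use the mean
                Xf[i] = mu
                C += sigma
                continue
            S_oo = sigma[np.ix_(o, o)]
            S_mo = sigma[np.ix_(m, o)]
            coef = np.linalg.solve(S_oo, S_mo.T).T  # Sigma_mo Sigma_oo^{-1}
            # E-step: conditional mean of the missing block given the observed one.
            Xf[i, m] = mu[m] + coef @ (X[i, o] - mu[o])
            C[np.ix_(m, m)] += sigma[np.ix_(m, m)] - coef @ S_mo.T
        # M-step: re-estimate parameters from the expected sufficient statistics.
        mu_new = Xf.mean(axis=0)
        diff = Xf - mu_new
        sigma_new = (diff.T @ diff + C) / n
        shift = max(np.abs(mu_new - mu).max(), np.abs(sigma_new - sigma).max())
        mu, sigma = mu_new, sigma_new
        if shift < tol:
            break
    return Xf, mu, sigma
```

If missingness in one column depends only on another, fully observed column (a MAR pattern), the complete‑case mean of the incomplete column is biased, whereas the EM estimate uses the observed correlation to correct for it. Under MNAR no such correction is guaranteed.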

The core novelty lies in integrating a Decision Forest, an ensemble of randomized decision trees, into the imputation pipeline. Two integration strategies are explored: (1) feeding the autoencoder’s reconstructed values as features into a forest that learns a secondary regression model, and (2) using the forest to model the residual errors of the autoencoder and correct them. In both cases, averaging across many trees mitigates overfitting and captures higher‑order interactions that the autoencoder may miss. Empirical results on three publicly available datasets (UCI Adult, Wine Quality, and a medical records set) demonstrate that the hybrid model consistently outperforms the autoencoder alone at missing rates of 10 %, 30 % and 50 %. Adding the forest reduces MSE by about 17 % on average, and the combined system remains more stable as the proportion of missing data increases.
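Strategy (2), in which a forest learns the autoencoder's residual errors, can be sketched with scikit‑learn's `RandomForestRegressor`. This is an assumed implementation: the summary does not name a library, and `fit_residual_forest` and `corrected_imputation` are hypothetical helpers. `base_pred` stands for the autoencoder's estimate of the missing column; strategy (1) would instead fit the forest directly to the true values, with `base_pred` as one of its input features.

```python
import numpy as np
from sklearn.ensemble import RandomForestRegressor


def fit_residual_forest(X_obs, base_pred, y_true, n_trees=100, seed=0):
    """Strategy (2), hypothetical helper: learn the base imputer's residuals
    from the observed columns plus the base estimate itself."""
    features = np.column_stack([X_obs, base_pred])
    forest = RandomForestRegressor(n_estimators=n_trees, random_state=seed)
    forest.fit(features, y_true - base_pred)  # target is the residual
    return forest


def corrected_imputation(forest, X_obs, base_pred):
    """Add the forest's predicted residual back onto the base estimate."""
    features = np.column_stack([X_obs, base_pred])
    return base_pred + forest.predict(features)
```

For example, when the missing value depends on the product of two observed inputs, a linear base estimate cannot represent that interaction, and a forest fitted to its residuals should recover much of it. With a real autoencoder the gain depends on how much structure its errors still contain.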

The experimental protocol includes systematic insertion of missing values, repeated random splits for cross‑validation, and reporting of MSE as the primary performance metric. While MSE provides a clear quantitative measure, the study does not report complementary metrics such as Mean Absolute Error (MAE), R², or confidence intervals, which would give a fuller picture of predictive reliability. Moreover, the computational cost of the GA‑driven optimization is substantial, especially for larger networks, and the paper does not discuss runtime or scalability considerations.
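The complementary metrics mentioned above are straightforward to add to the masking protocol. The sketch below, with hypothetical helper names, hides a chosen fraction of entries completely at random (an assumption; the summary does not say how values were removed), scores an imputation only on the hidden entries with MSE, MAE and R², and attaches a bootstrap percentile confidence interval to the MSE.

```python
import numpy as np


def insert_missing(X, rate, seed=0):
    """Hide a random `rate` fraction of entries (MCAR masking, an assumption)."""
    rng = np.random.default_rng(seed)
    mask = rng.uniform(size=X.shape) < rate
    X_missing = X.astype(float)  # astype returns a copy
    X_missing[mask] = np.nan
    return X_missing, mask


def imputation_metrics(X_true, X_imputed, mask):
    """MSE, MAE and R^2 over the hidden entries only, pooled across columns."""
    t, p = X_true[mask], X_imputed[mask]
    err = t - p
    mse = np.mean(err ** 2)
    mae = np.mean(np.abs(err))
    r2 = 1.0 - np.sum(err ** 2) / np.sum((t - t.mean()) ** 2)
    return {"MSE": mse, "MAE": mae, "R2": r2}


def bootstrap_ci(sq_errors, n_boot=1000, alpha=0.05, seed=0):
    """Percentile bootstrap interval for the mean of the squared errors."""
    rng = np.random.default_rng(seed)
    idx = rng.integers(len(sq_errors), size=(n_boot, len(sq_errors)))
    means = sq_errors[idx].mean(axis=1)
    return np.quantile(means, [alpha / 2, 1 - alpha / 2])
```

Calling these helpers for rates 0.1, 0.3 and 0.5 mirrors the missing‑rate scenarios in the summary; a column‑mean fill is a useful baseline to compare any imputer against.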

In summary, the authors make three main contributions: (1) a globally optimized autoencoder for missing‑data reconstruction, (2) a principled MAR‑based maximum‑likelihood framework, and (3) the demonstration that a Decision Forest can effectively refine autoencoder outputs, yielding significant error reductions. The limitations identified include reliance on the MAR assumption, high computational overhead of the GA, and a narrow evaluation focus on MSE. Future work is suggested to explore Bayesian methods for MNAR scenarios, lighter meta‑heuristics (e.g., Particle Swarm Optimization) to reduce training time, multi‑metric evaluation, and real‑world deployment on large‑scale industrial datasets. Overall, the paper offers a compelling example of how deep learning and traditional ensemble techniques can be synergistically combined to improve missing‑data imputation, with promising implications for data‑intensive applications across domains.
